90 results about "Vanishing gradient problem" patented technology

In machine learning, the vanishing gradient problem is a difficulty found in training artificial neural networks with gradient-based learning methods and backpropagation. In such methods, each of the neural network's weights receives, in each training iteration, an update proportional to the partial derivative of the error function with respect to that weight. The problem is that in some cases the gradient becomes vanishingly small, effectively preventing the weight from changing its value. In the worst case, this may completely stop the neural network from further training. As one example of the cause, traditional activation functions such as the hyperbolic tangent function have derivatives in the range (0, 1], and backpropagation computes gradients by the chain rule. Computing the gradients of the "front" layers in an n-layer network therefore multiplies n of these factors, most of them smaller than 1, so the gradient (error signal) decreases exponentially with n and the front layers train very slowly.
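The exponential decay described above can be illustrated with a minimal Python sketch. It assumes a single backpropagation path in which every weight equals 1 and every layer sees the same hypothetical pre-activation value, so only the tanh derivatives contribute. The function names are illustrative and do not come from any of the listed patents.

```python
import math


def tanh_grad(x: float) -> float:
    """Derivative of tanh at x: 1 - tanh(x)^2, a value in (0, 1]."""
    return 1.0 - math.tanh(x) ** 2


def backprop_factor(pre_activations) -> float:
    """Product of the activation derivatives along one path.

    Weights are assumed to be 1, so this is the factor by which the
    error signal is scaled on its way back to the first layer.
    """
    factor = 1.0
    for x in pre_activations:
        factor *= tanh_grad(x)
    return factor


# Hypothetical pre-activation value of 1.5 at every layer.
shallow = backprop_factor([1.5] * 5)    # 5-layer path
deep = backprop_factor([1.5] * 50)      # 50-layer path

print(f"5 layers:  {shallow:.3e}")
print(f"50 layers: {deep:.3e}")
```

Under these assumptions the 50-layer factor is many orders of magnitude smaller than the 5-layer factor, which is why the earliest layers barely receive any update.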

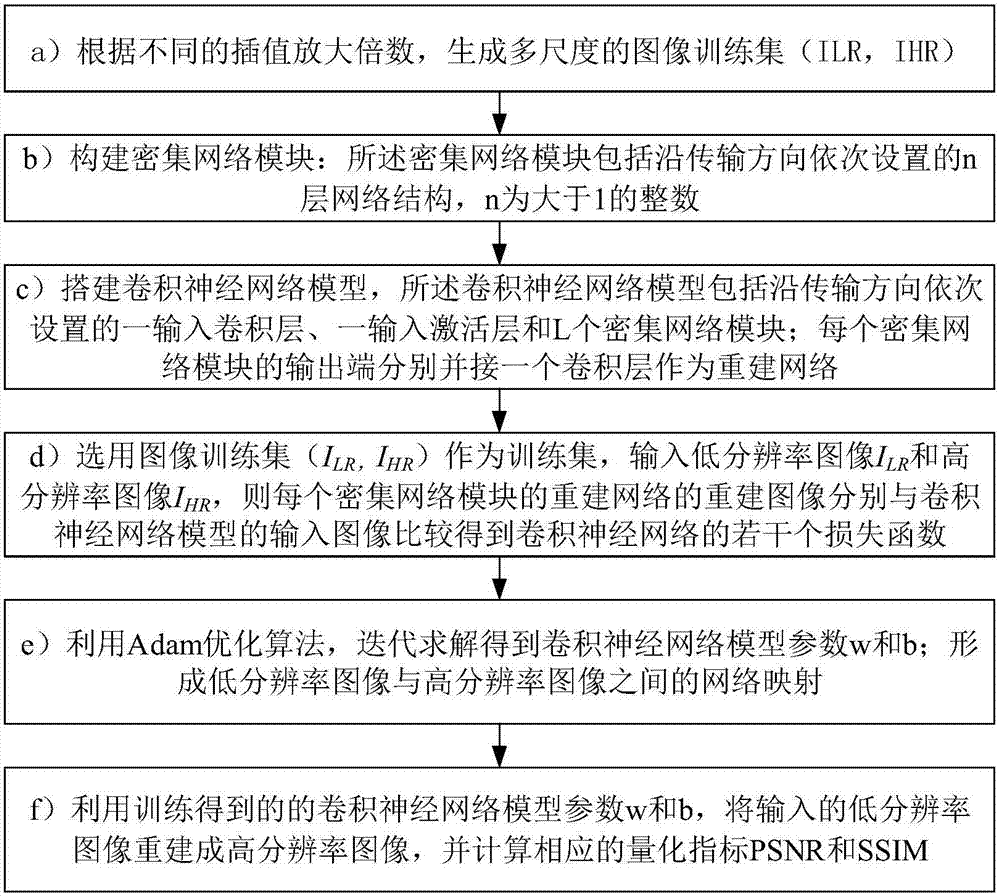

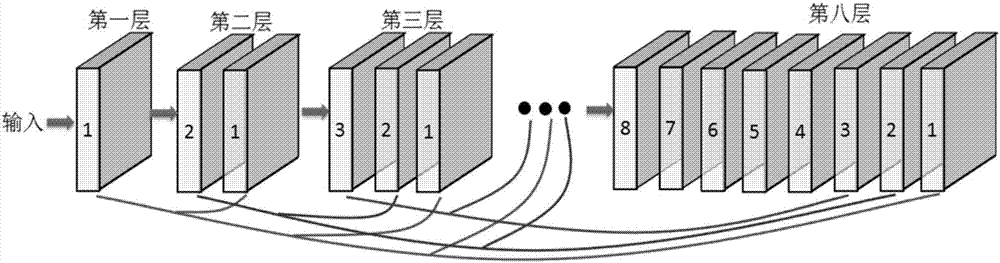

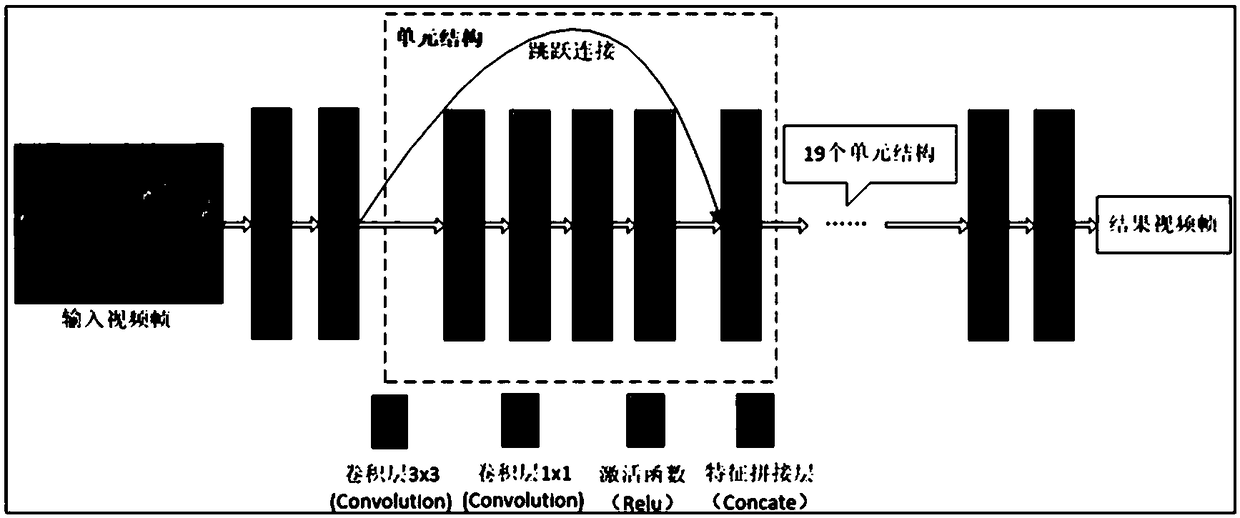

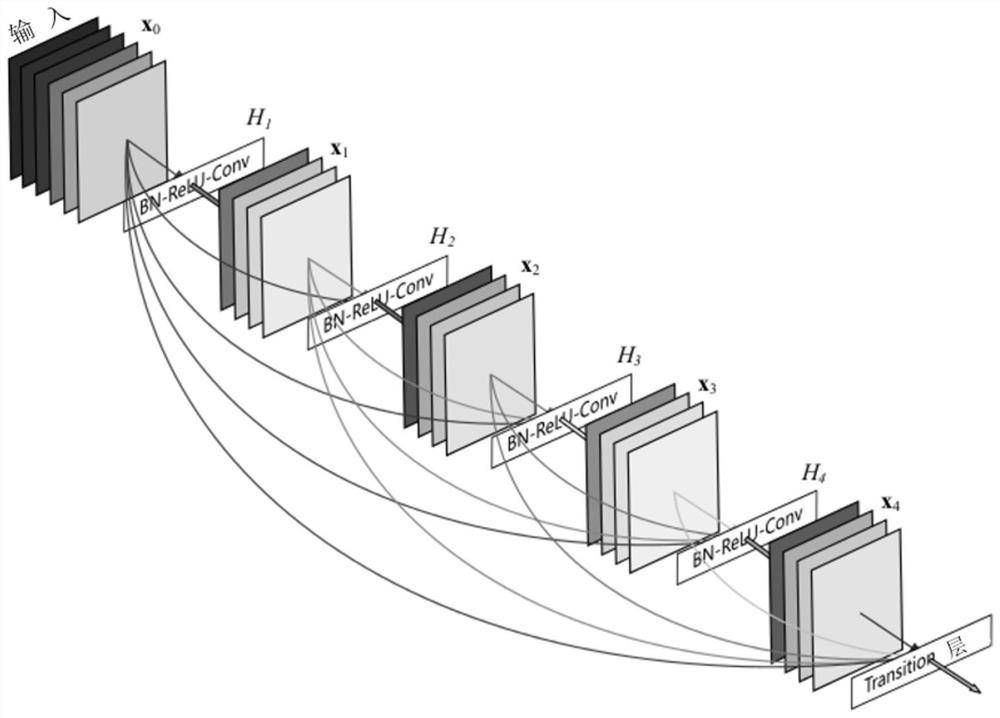

Image super-resolution method based on dense connection network

Active | CN106991646A | Avoid vanishing gradients | Solve training puzzles | Geometric image transformation | Model parameters | Network model

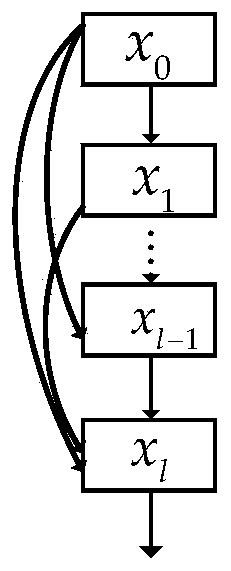

The invention discloses an image super-resolution method based on a dense connection network. By increasing the depth of a convolutional neural network and introducing a large number of skip connections into the deep network, the method effectively solves the problem of gradients vanishing during backpropagation in the deep network, optimizes the flow of information through the network, and improves the super-resolution reconstruction capability of the convolutional neural network. At the same time, the method effectively combines low-level features with high-level abstract features, and can reduce the model parameters and compress the deep network model, which improves the efficiency of super-resolution reconstruction. In addition, by introducing deep supervision, the method can reconstruct the super-resolution image at different network depths, which not only optimizes training of the deep network but also allows a suitable network depth to be selected at test time, according to the computing capability of the test terminal, to reconstruct a high-definition image. Finally, the method is trained on an image set covering a plurality of magnification factors, so the resulting model can perform super-resolution at multiple scales without training a separate model for each magnification factor.

Owner:福建帝视信息科技有限公司

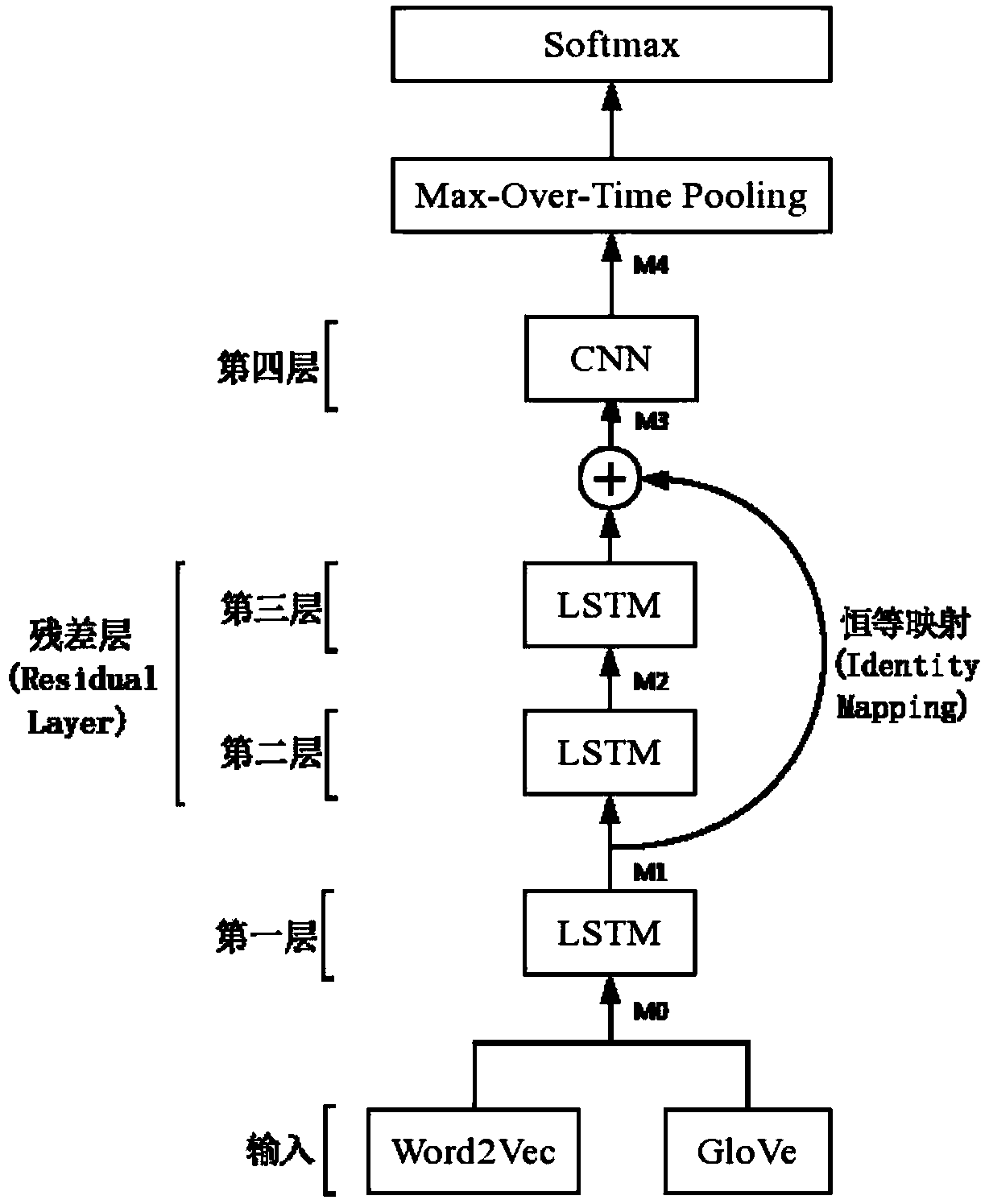

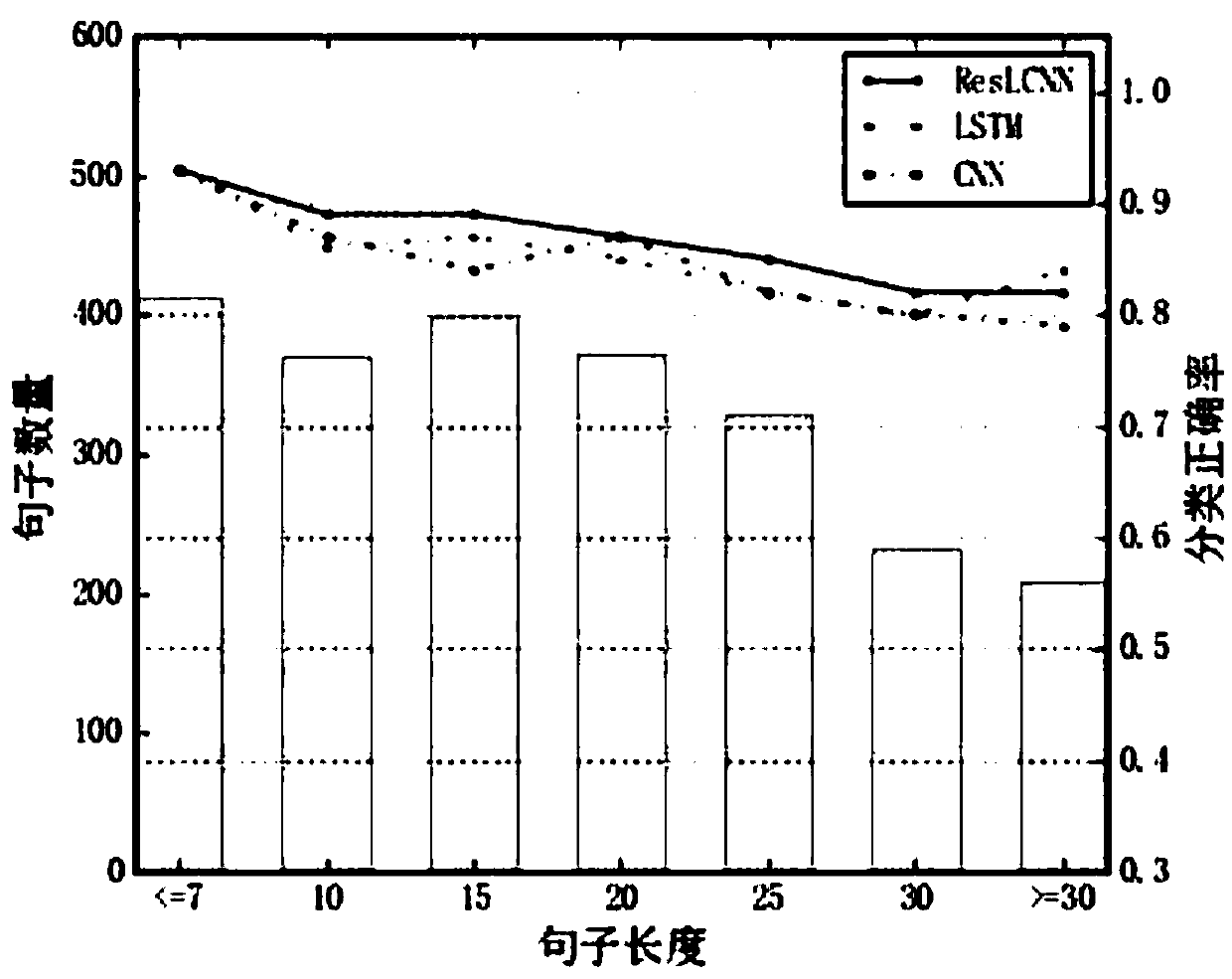

ResLCNN model-based short text classification method

Inactive | CN107562784A | Improve classification effect | Neural architectures | Special data processing applications | Text mining | Text categorization

The invention discloses a ResLCNN model-based short text classification method. It relates to the technical fields of text mining and deep learning, and in particular to a deep learning model for short text classification. The method combines the characteristics of a long short-term memory (LSTM) network and a convolutional neural network (CNN) to build a ResLCNN deep text classification model for short text classification. The model comprises three LSTM layers and one CNN layer. Drawing on residual model theory, an identity mapping is added between the first LSTM layer and the CNN layer to construct a residual layer, which alleviates the vanishing gradient problem in deep models. The model effectively combines the LSTM network's strength in capturing long-distance dependencies in text sequence data with the CNN's strength in extracting local features of sentences through convolution, so that the short text classification effect is improved.

Owner:TONGJI UNIV
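Why an identity mapping helps can be sketched on a scalar toy chain. This is not the ResLCNN model; it assumes each "layer" is the hypothetical function f(x) = tanh(w·x) with one shared weight, and compares the input-to-output gradient of a plain chain with that of a chain whose layers compute x + f(x).

```python
import math


def layer_grad(x: float, w: float) -> float:
    """Derivative of f(x) = tanh(w * x) with respect to x."""
    return w * (1.0 - math.tanh(w * x) ** 2)


def chain_grad(x: float, w: float, depth: int, residual: bool) -> float:
    """Gradient of the chain output with respect to its input.

    Plain chain:    x <- f(x)        local derivative f'(x)
    Residual chain: x <- x + f(x)    local derivative 1 + f'(x)
    """
    grad = 1.0
    for _ in range(depth):
        local = layer_grad(x, w)
        if residual:
            local += 1.0                 # contribution of the identity path
            x = x + math.tanh(w * x)
        else:
            x = math.tanh(w * x)
        grad *= local
    return grad


# Hypothetical settings: input 1.0, weight 0.5, 20 layers.
plain = chain_grad(1.0, 0.5, 20, residual=False)
with_skip = chain_grad(1.0, 0.5, 20, residual=True)

print(f"plain chain:    {plain:.3e}")
print(f"residual chain: {with_skip:.3e}")
```

In the plain chain every factor is at most 0.5, so the product collapses. In the residual chain every factor is at least 1, so the gradient reaching the first layer cannot shrink in this toy setting.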

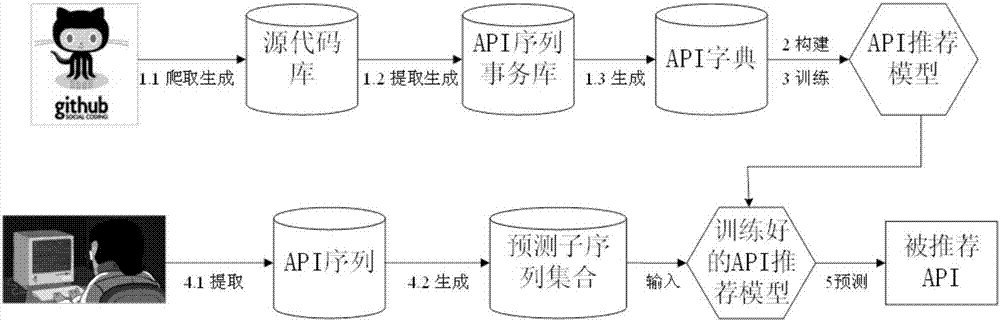

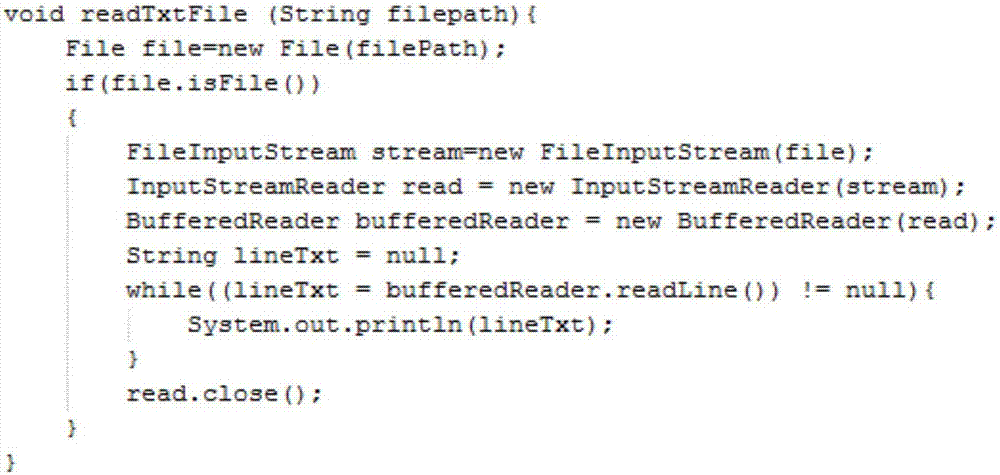

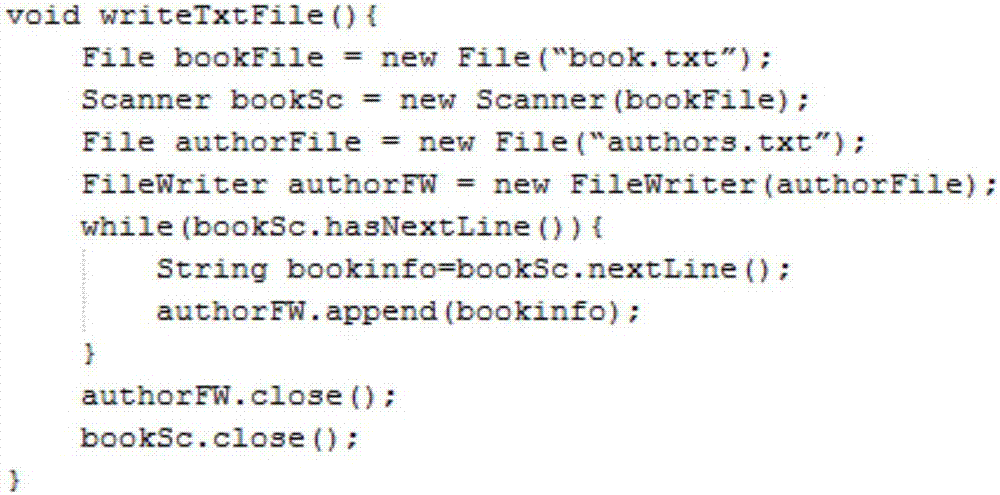

Code recommendation method based on long short-term memory (LSTM) network

Inactive | CN107506414A | Improve accuracy | Improve recommendation efficiency | Special data processing applications | Recommendation model | Source code

The invention relates to a code recommendation method based on a long short-term memory (LSTM) network. To address the low recommendation accuracy and low recommendation efficiency that are common in existing code recommendation technologies, the method first extracts API sequences from source code, uses an LSTM network to build a code recommendation model that learns the relationships between API calls, and then carries out code recommendation. Dropout is used to prevent the model from overfitting. In addition, a ReLU function is used in place of a traditional saturating function, which solves the vanishing gradient problem, accelerates model convergence, improves model performance, and makes full use of the advantages of the neural network. The technical scheme is simple and fast, and improves both the accuracy and the efficiency of code recommendation.

Owner:WUHAN UNIV

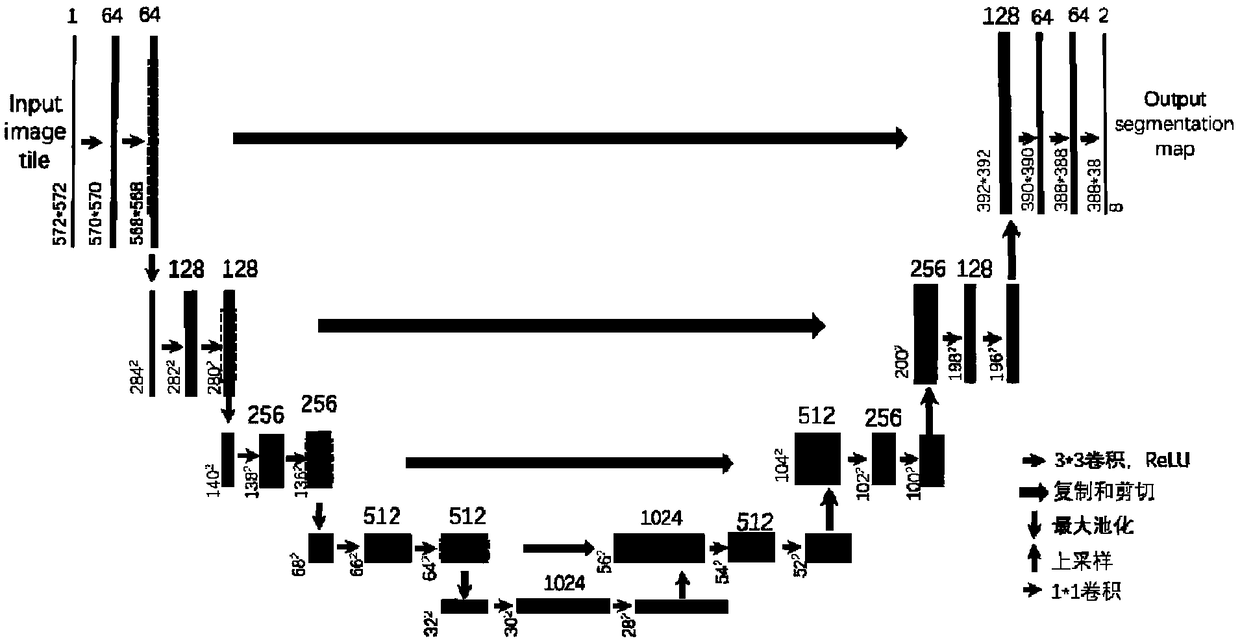

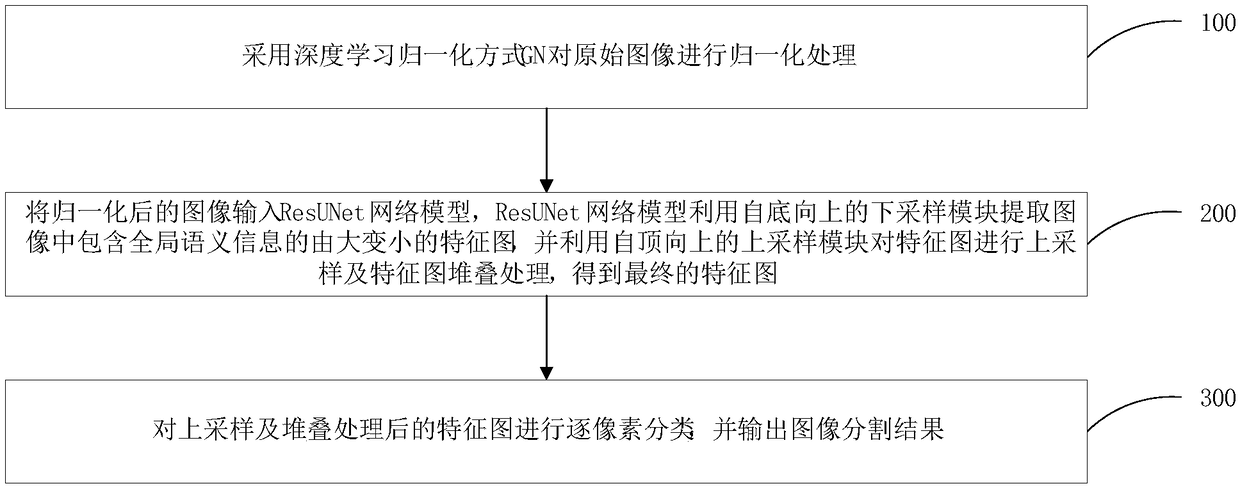

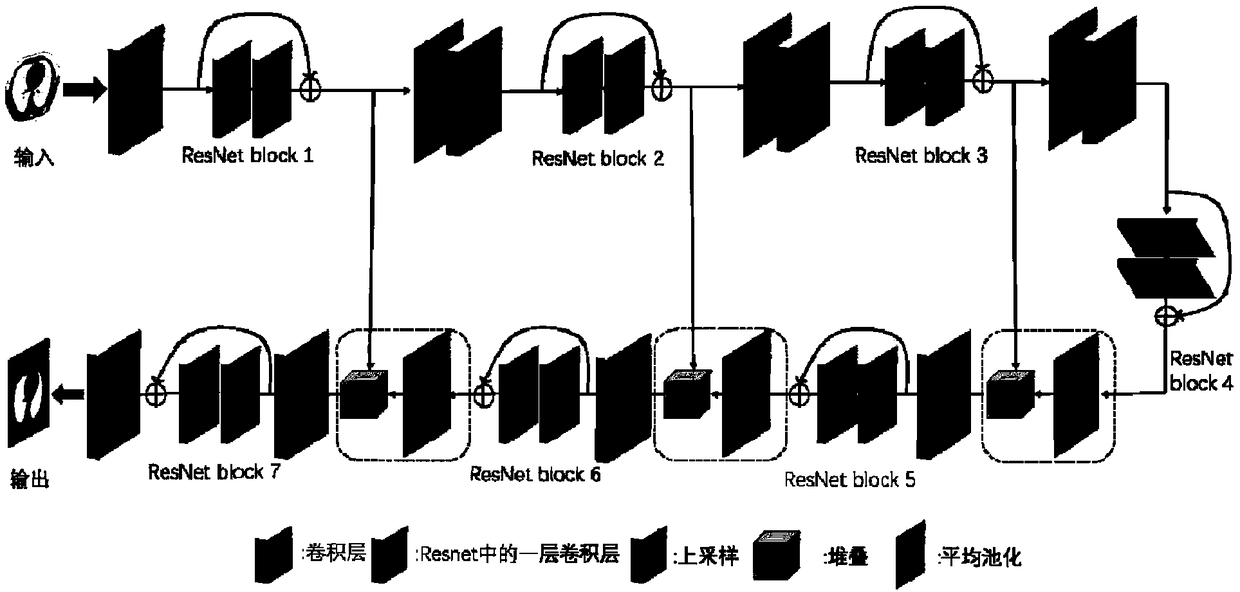

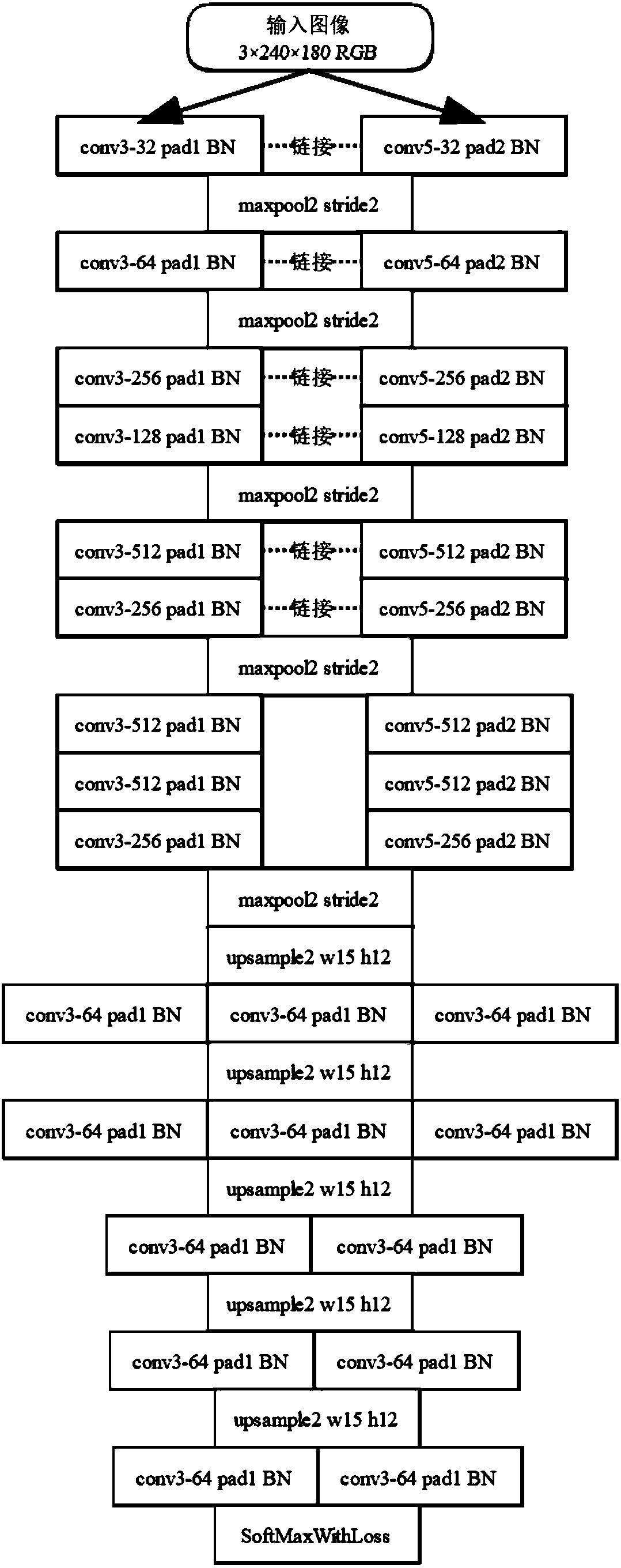

Image segmentation method, system and electronic device based on deep learning

Inactive | CN109118491A | Easy to train | Avoid vanishing gradients | Image enhancement | Image analysis | Image segmentation | Network model

The invention belongs to the technical field of image segmentation, and in particular relates to an image segmentation method, system and electronic device based on deep learning. The method comprises the following steps: step A: normalizing the original image; step B: inputting the normalized image into a ResUNet network model, which extracts a feature map containing global semantic information from the input image, and upsampling and stacking the feature map to obtain a final feature map; step C: classifying the upsampled and stacked feature map pixel by pixel, and outputting the image segmentation result. The present application solves the common vanishing gradient problem in convolutional neural networks, and also makes the network easier to train and able to converge to a better segmentation result.

Owner:SHENZHEN INST OF ADVANCED TECH

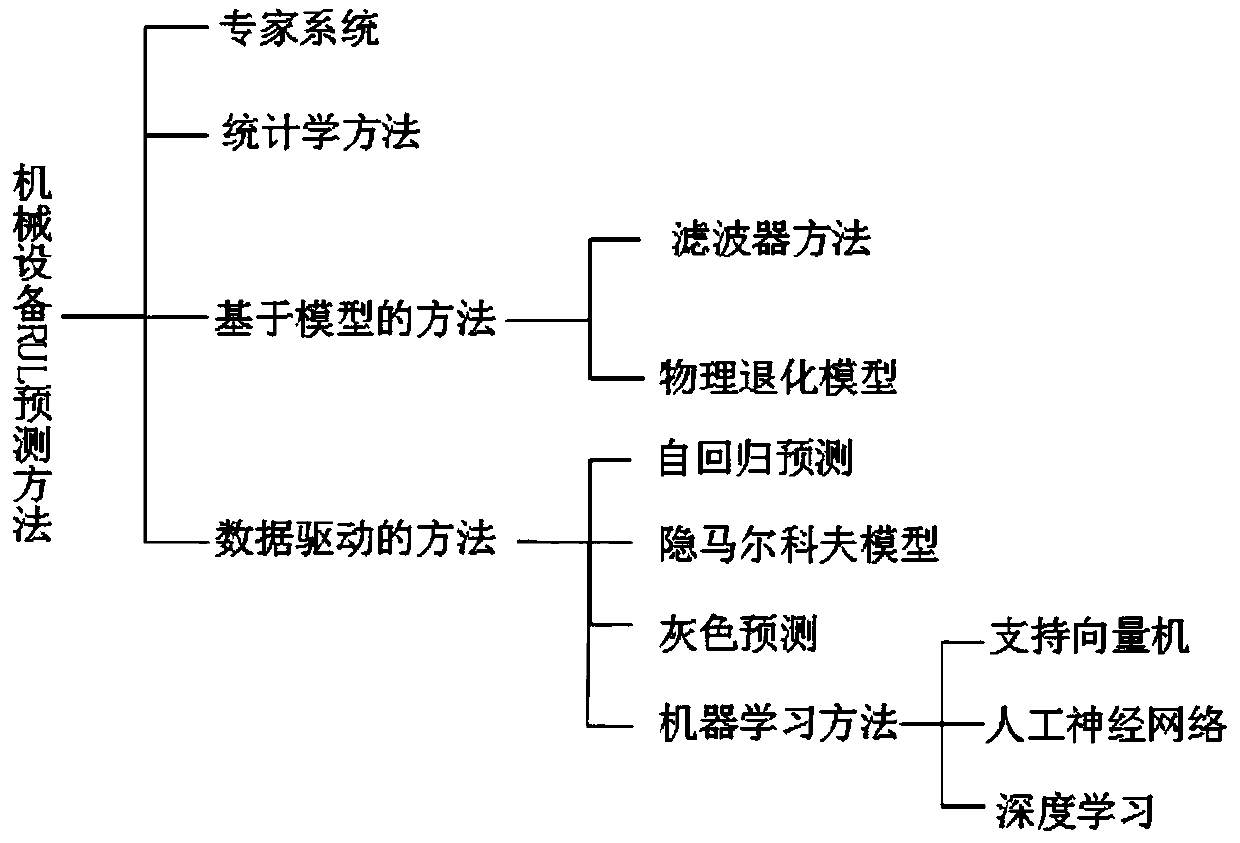

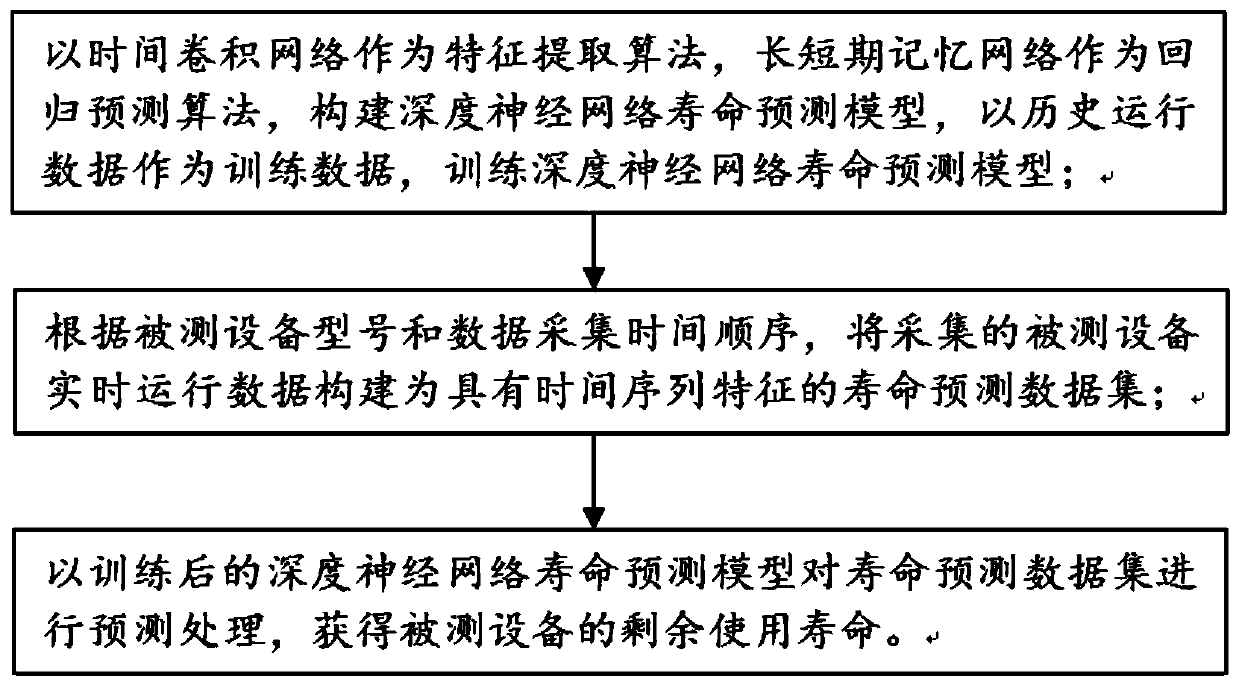

Mechanical equipment residual service life prediction method and system

Pending | CN111274737A | Improve mobility | Improve forecast accuracy | Design optimisation/simulation | Neural architectures | Prediction algorithms | Data set

The invention discloses a mechanical equipment residual service life prediction method and system. The method comprises the following steps: constructing a deep neural network life prediction model that uses a temporal convolutional network as the feature extraction algorithm and a long short-term memory network as the regression prediction algorithm, and training the model; constructing the real-time operation data acquired from the tested equipment into a life prediction data set with time-series characteristics, according to the model of the tested equipment and the data acquisition time sequence; and applying the deep neural network life prediction model to the life prediction data set to obtain the residual service life of the tested equipment. The condition monitoring signals output by sensors that monitor mechanical equipment have time-series characteristics. By combining a temporal convolutional network with a long short-term memory network to build a deep neural network life prediction model for remaining useful life (RUL) prediction of mechanical equipment, the method solves the overfitting and vanishing gradient problems of common deep neural network models and improves prediction accuracy.

Owner:SHANDONG UNIV

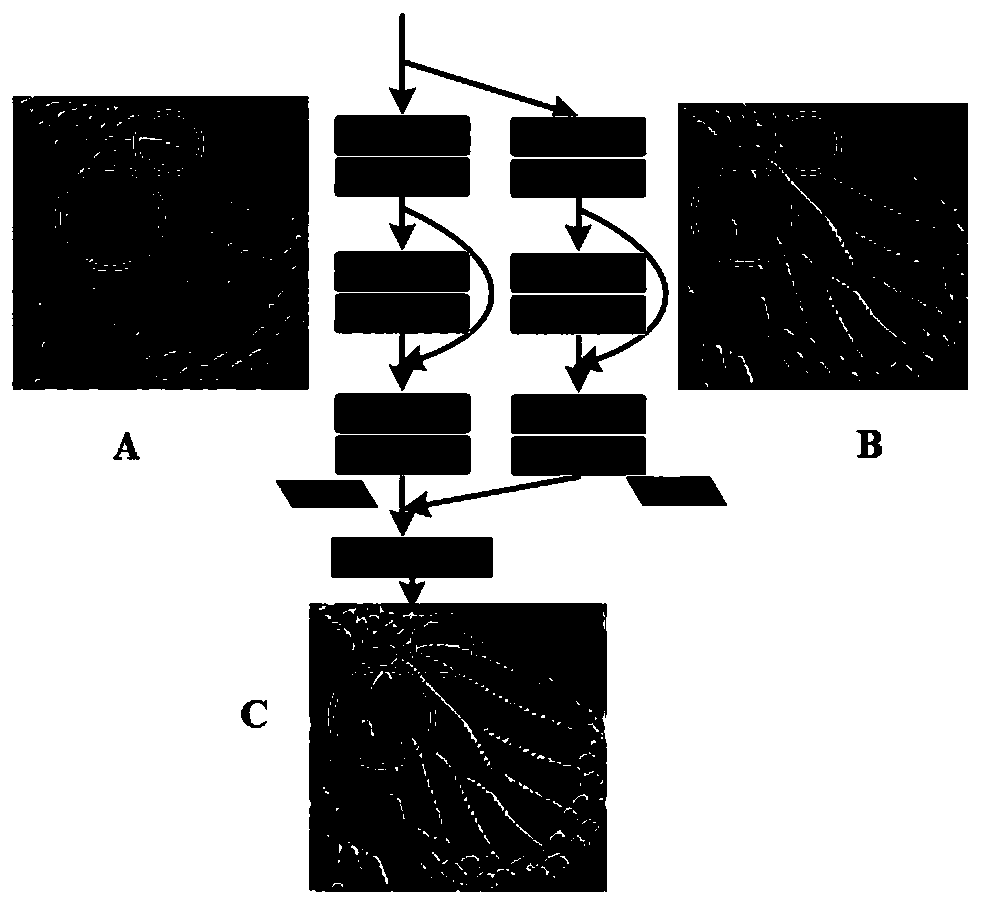

Infant brain magnetic resonance image partitioning method based on fully convolutional network

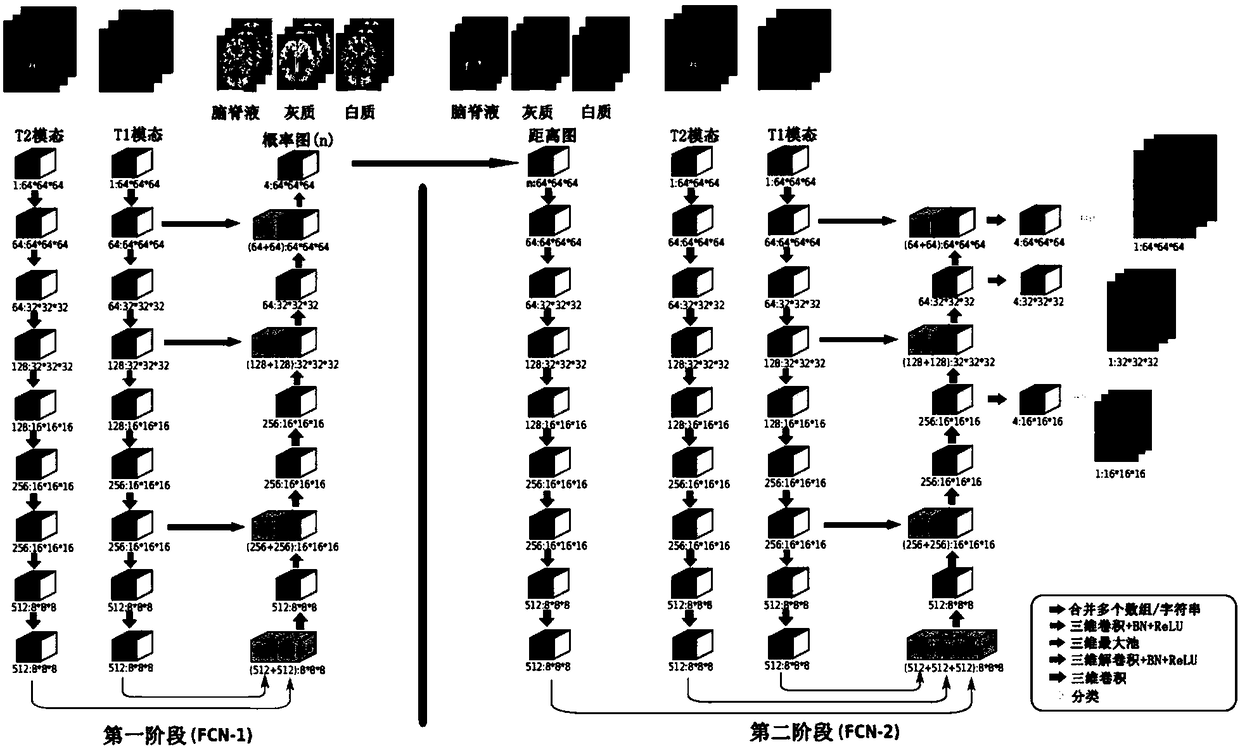

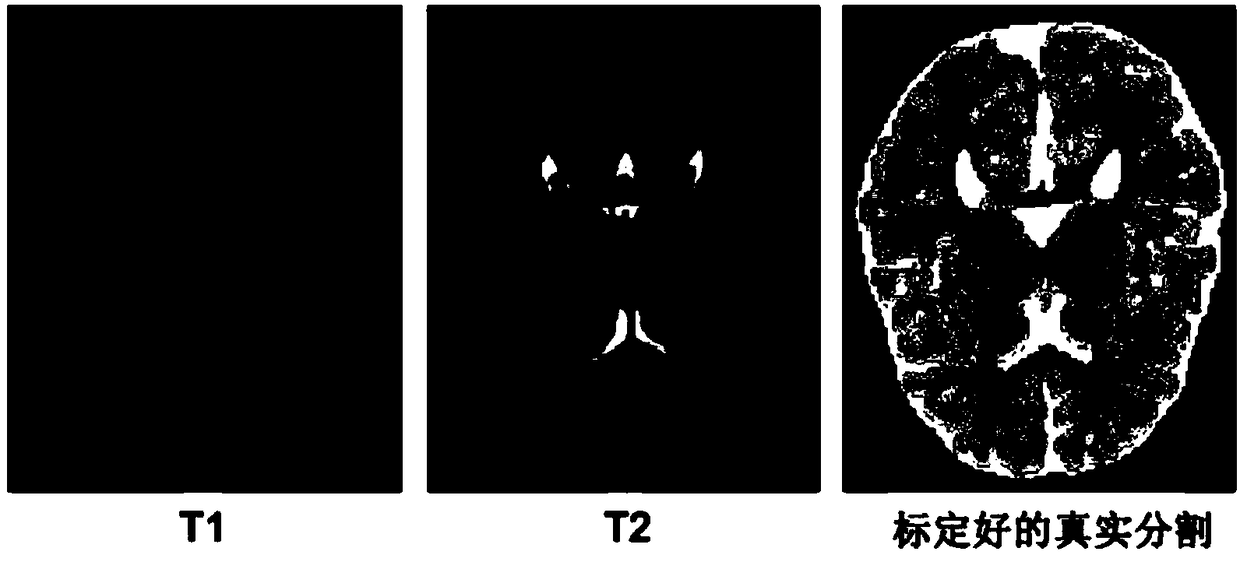

The invention provides an infant brain magnetic resonance image partitioning method based on a fully convolutional network. The main content of the method comprises a multi-stream three-dimensional fully convolutional network (FCN) with skip connections, partial transfer learning, training, and testing and evaluation. The method proceeds as follows: first, a probability map of each brain tissue is learned from a multi-modal magnetic resonance image; second, initial partitions of the different brain tissues are obtained from the probability maps and used to calculate a distance map for each brain tissue; third, spatial context information is modeled from the distance maps; and last, final partitioning is performed using the spatial context information and the multi-modal magnetic resonance image. The training process mainly comprises training data augmentation, training patch preparation and iterative training, and various metrics are used for evaluation after testing. With this partitioning method, the white matter, grey matter and cerebrospinal fluid regions are successfully separated, the potential vanishing gradient problem is alleviated through multi-level deep supervision, training efficiency is improved, and partitioning performance is greatly enhanced.

Owner:SHENZHEN WEITESHI TECH

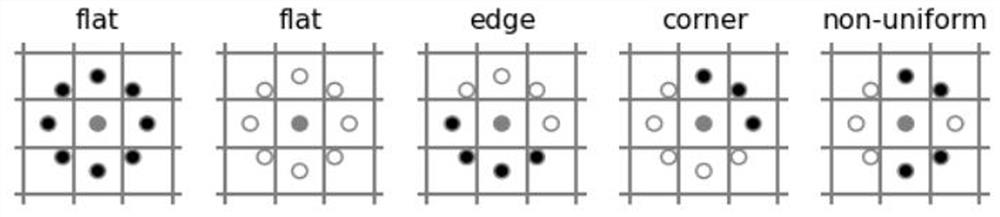

An image classification method based on a multi-scale dense convolutional neural network and a spectral attention mechanism

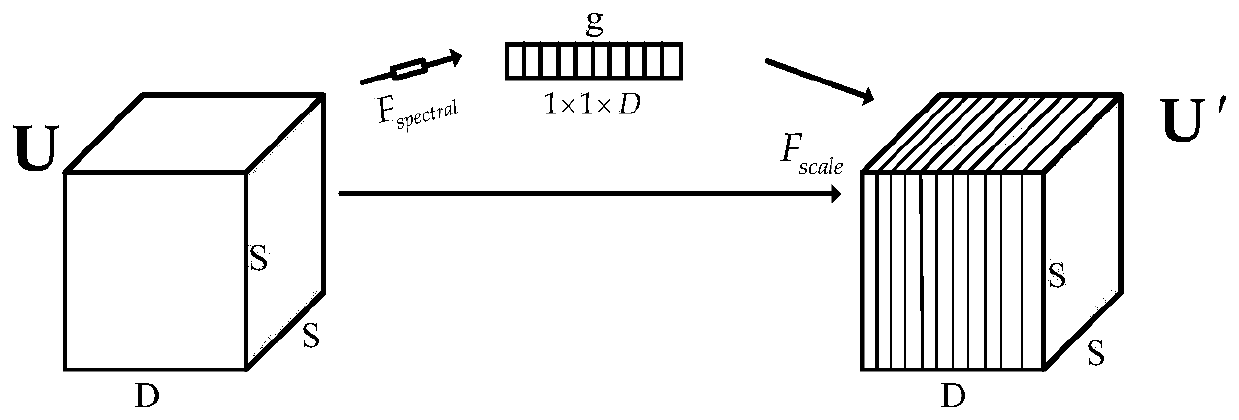

Active | CN109784347A | Reduce in quantity | Reduce demand | Character and pattern recognition | Neural architectures | Multiplexing | Small sample

The invention relates to an image classification method based on a multi-scale dense convolutional neural network and a spectral attention mechanism. The multi-scale dense convolutional neural network is constructed using a dense connection mechanism, which effectively alleviates the vanishing gradient problem, strengthens feature propagation, encourages feature reuse, greatly reduces the number of parameters, and reduces the demand for training samples during network training. In addition, the network is combined with a spectral attention mechanism, which makes more effective use of features along the spectral dimension. The method achieves autonomous extraction of deep features and high-precision classification of hyperspectral images with small sample sizes. Compared with existing deep-learning-based hyperspectral image classification methods, it requires fewer samples and achieves higher precision.

Owner:NORTHWESTERN POLYTECHNICAL UNIV
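The dense connection mechanism that several of these abstracts rely on can be sketched in a few lines of Python. This is a schematic of the connectivity pattern only, not the network of any listed patent: features are plain lists of floats, and `make_layer` is a hypothetical stand-in for a convolutional layer that emits a fixed number of new features.

```python
def dense_block(x, layers):
    """Run a dense block.

    Each layer receives the concatenation of the block input and the
    outputs of all earlier layers, and the block returns the
    concatenation of everything, so every layer has a short path to
    the block output (and to the loss gradient).
    """
    features = [list(x)]
    for layer in layers:
        concatenated = [v for feat in features for v in feat]
        features.append(layer(concatenated))
    return [v for feat in features for v in feat]


def make_layer(growth):
    """Hypothetical stand-in layer: emits `growth` copies of the input mean."""
    def layer(inputs):
        mean = sum(inputs) / len(inputs)
        return [mean] * growth
    return layer


# Block input with 2 features, 3 layers that each add 2 features.
out = dense_block([1.0, 3.0], [make_layer(2) for _ in range(3)])
print(len(out), out)
```

The output width is the input width plus the number of layers times the per-layer growth, and the original input survives unchanged at the front of the output, which is the feature reuse the abstracts refer to.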

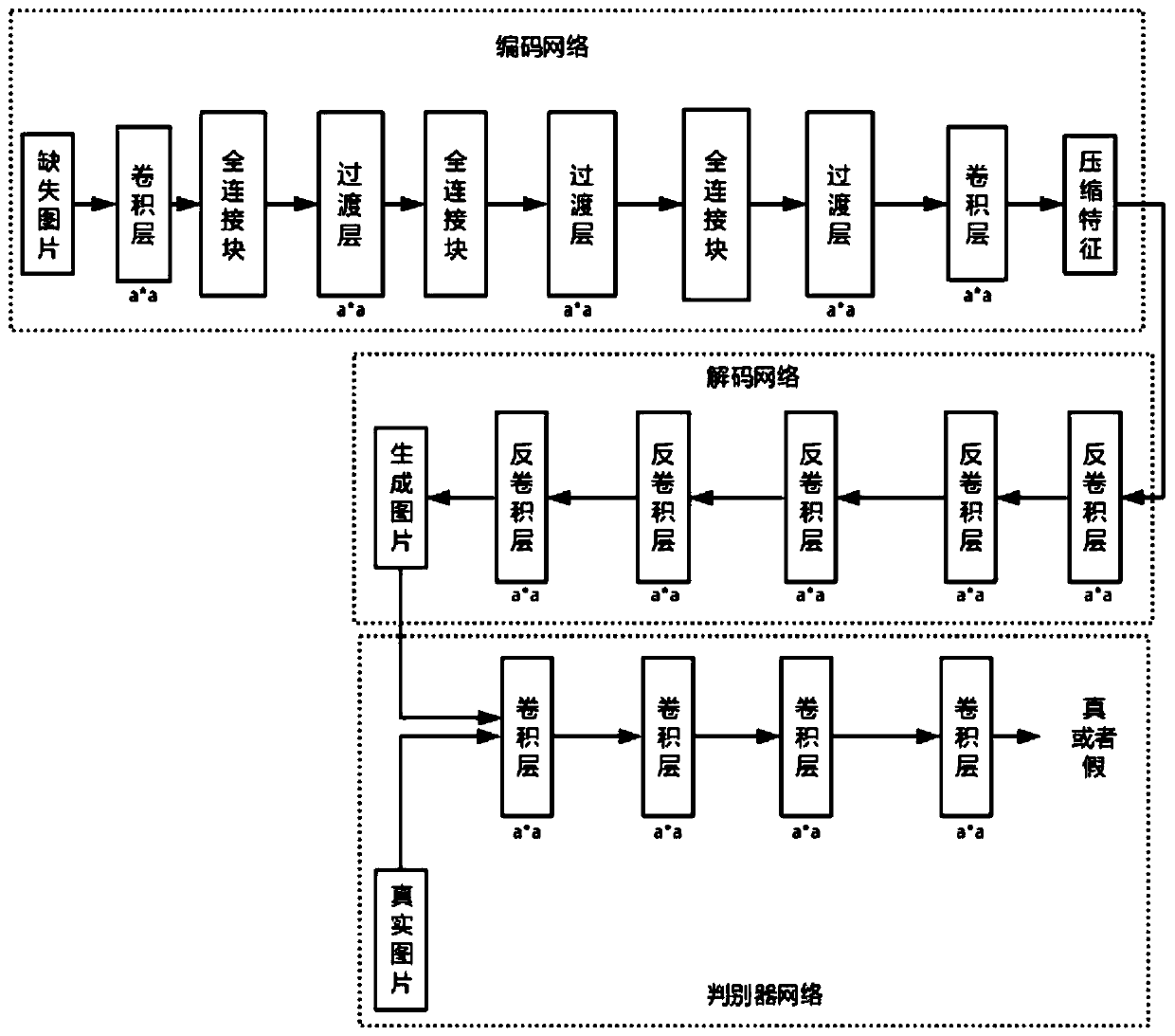

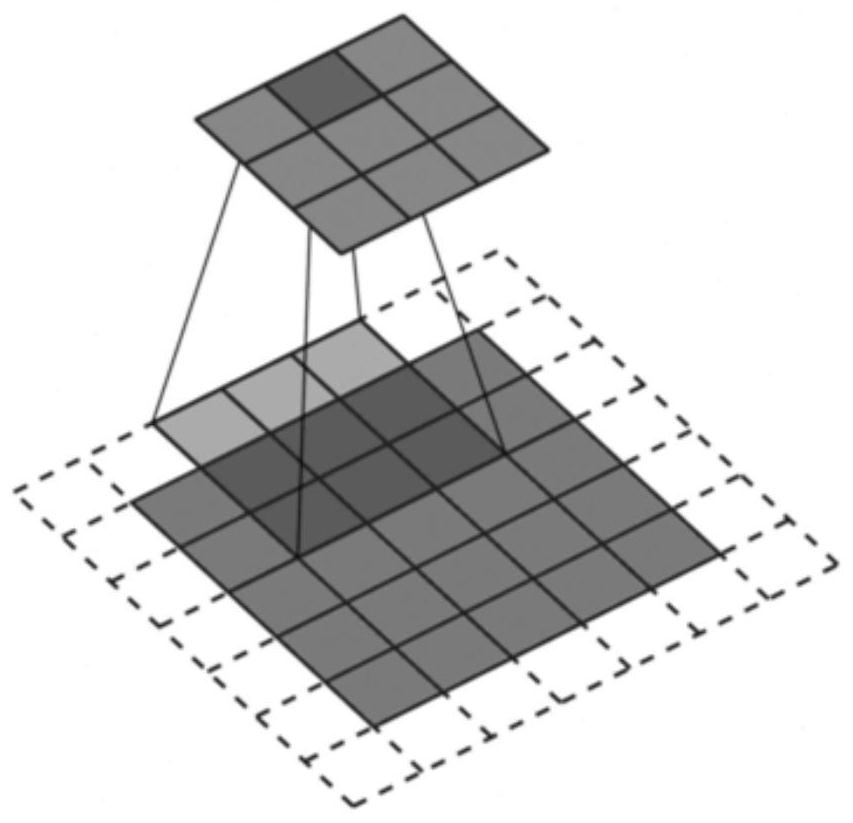

Image restoration method based on a new encoder structure

Active | CN109801230A | Accurate extraction | High speed | Image enhancement | Feature learning | Network architecture

The invention discloses an image restoration method based on a new encoder structure. A convolutional neural network composed of an encoder and a decoder is trained to regress the missing pixel values of an image with missing pixels. The encoder captures the image context to obtain a compact feature representation, and the decoder generates the missing image content from that representation. AlexNet can improve operation speed, network scale and performance, while DenseNet can alleviate the vanishing gradient problem, enhance feature utilization and reduce the number of parameters. The method combines the advantages of the two by adding a DenseNet framework. Compared with the AlexNet architecture used by the original encoder-decoder, the method can extract more compact and realistic features. In addition, the WGAN-GP adversarial loss replaces the traditional GAN adversarial loss, which improves the speed and precision of feature learning and enhances the restoration effect.

Owner:HOHAI UNIV

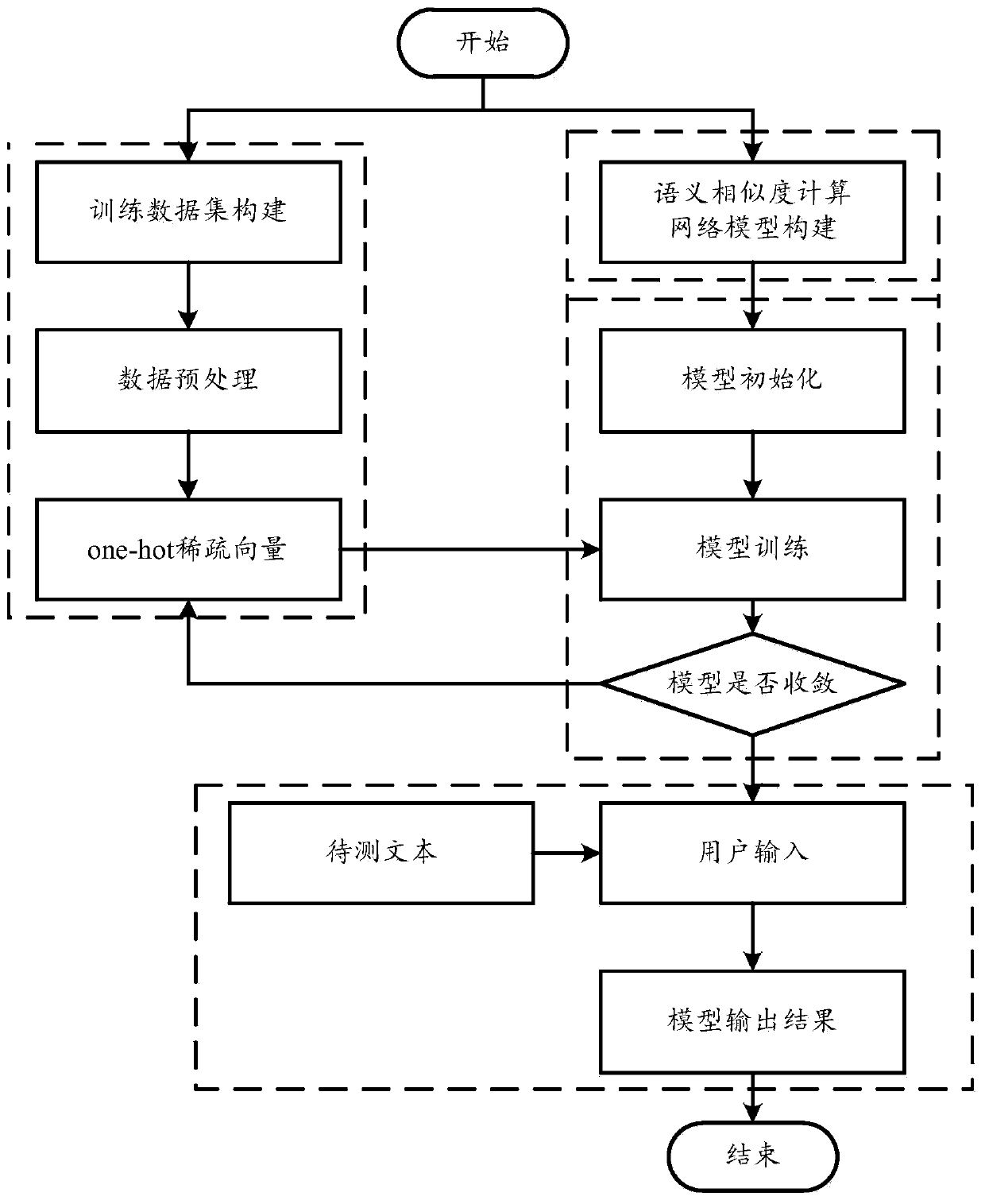

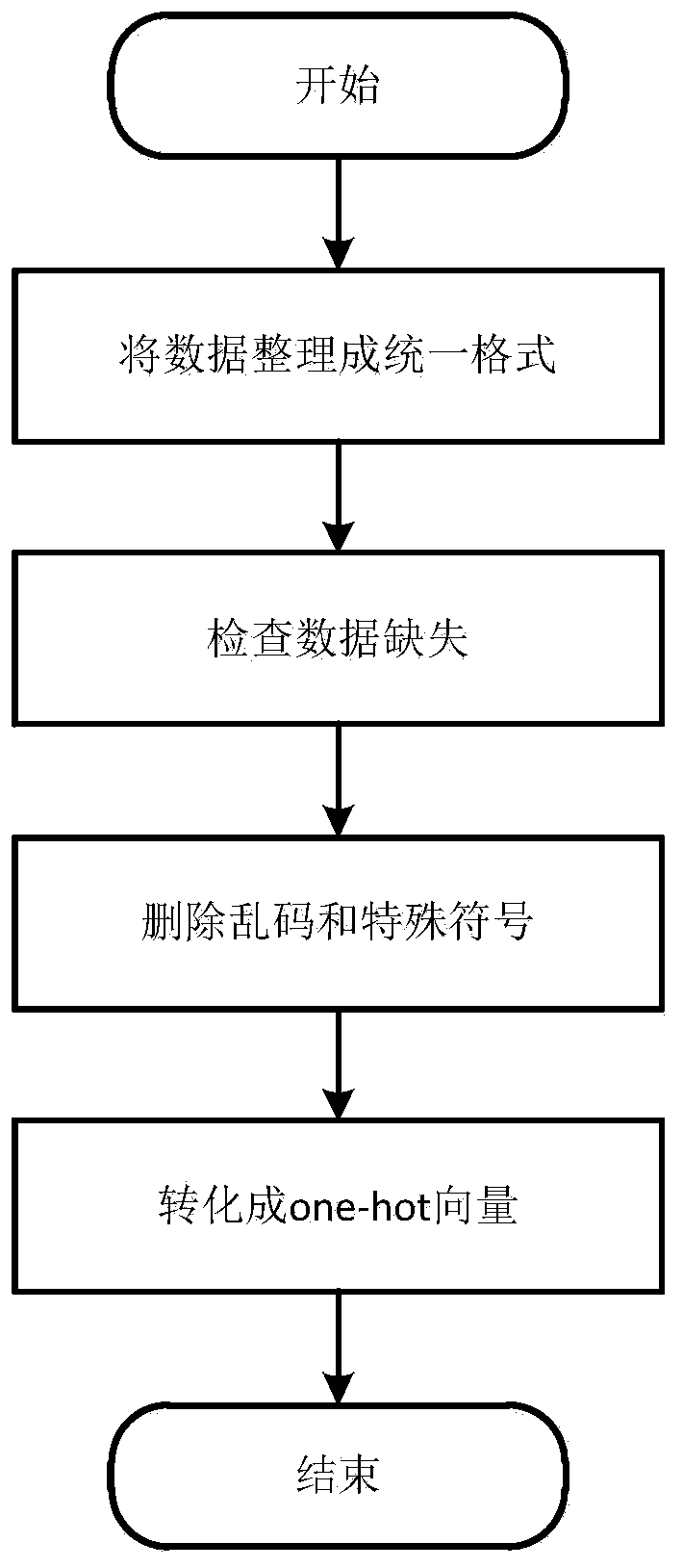

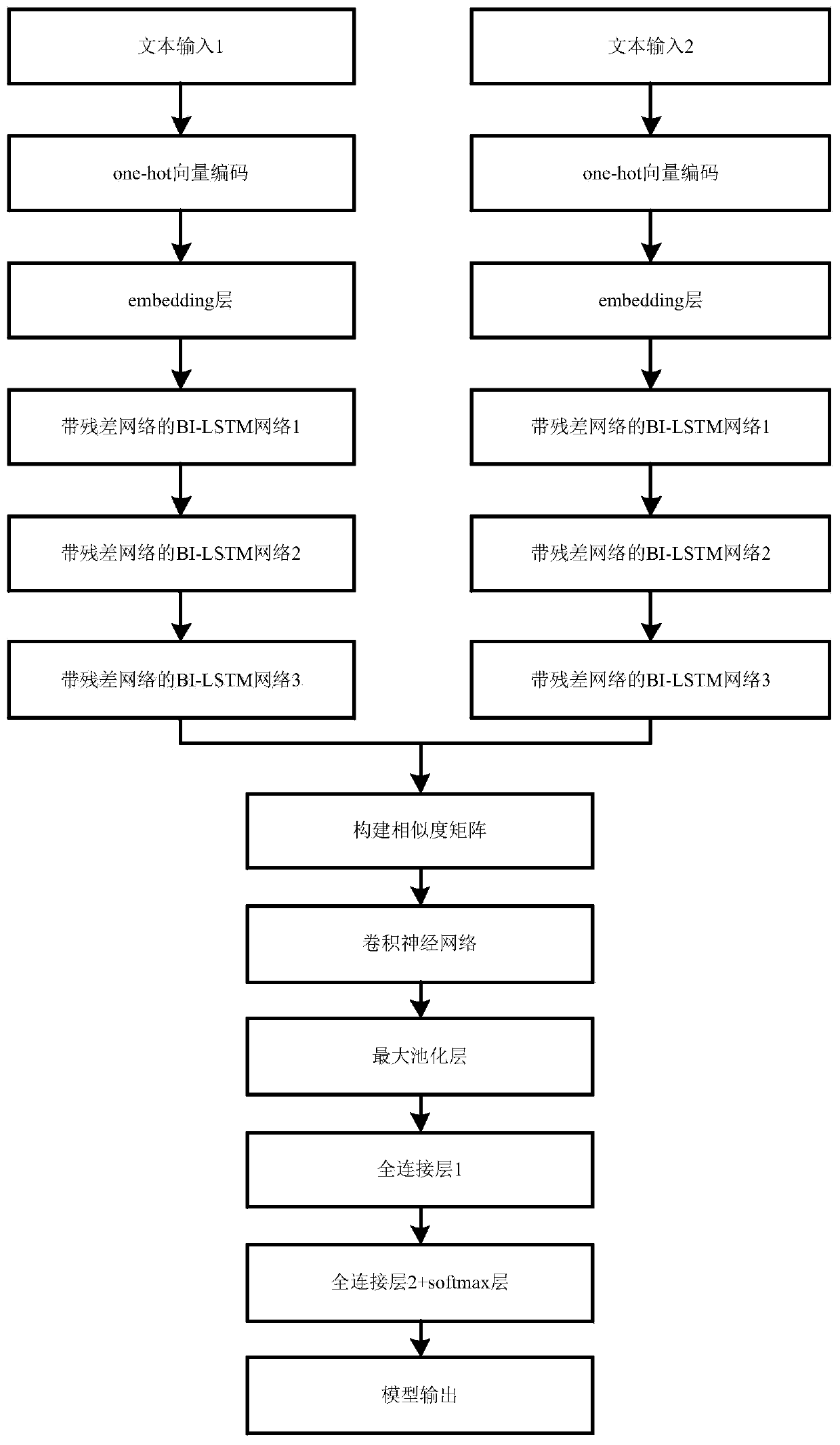

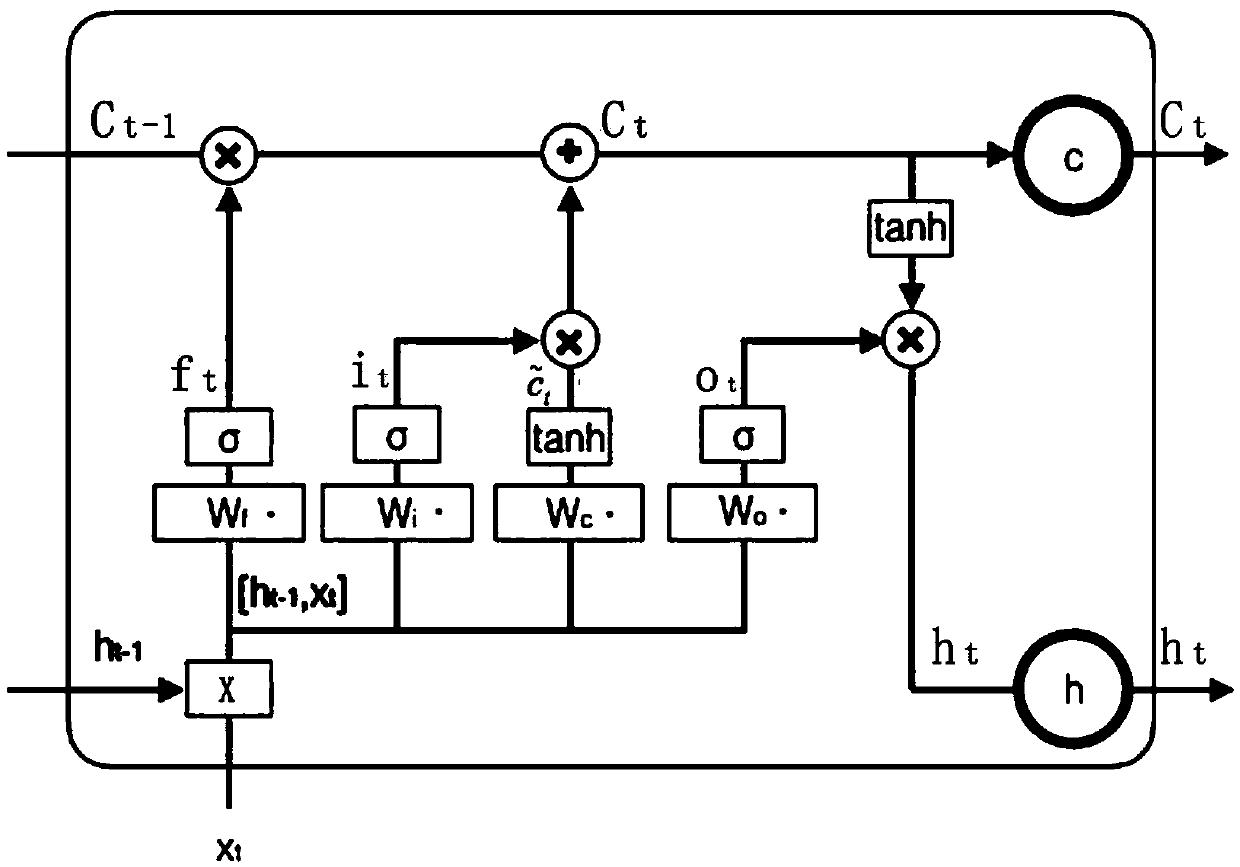

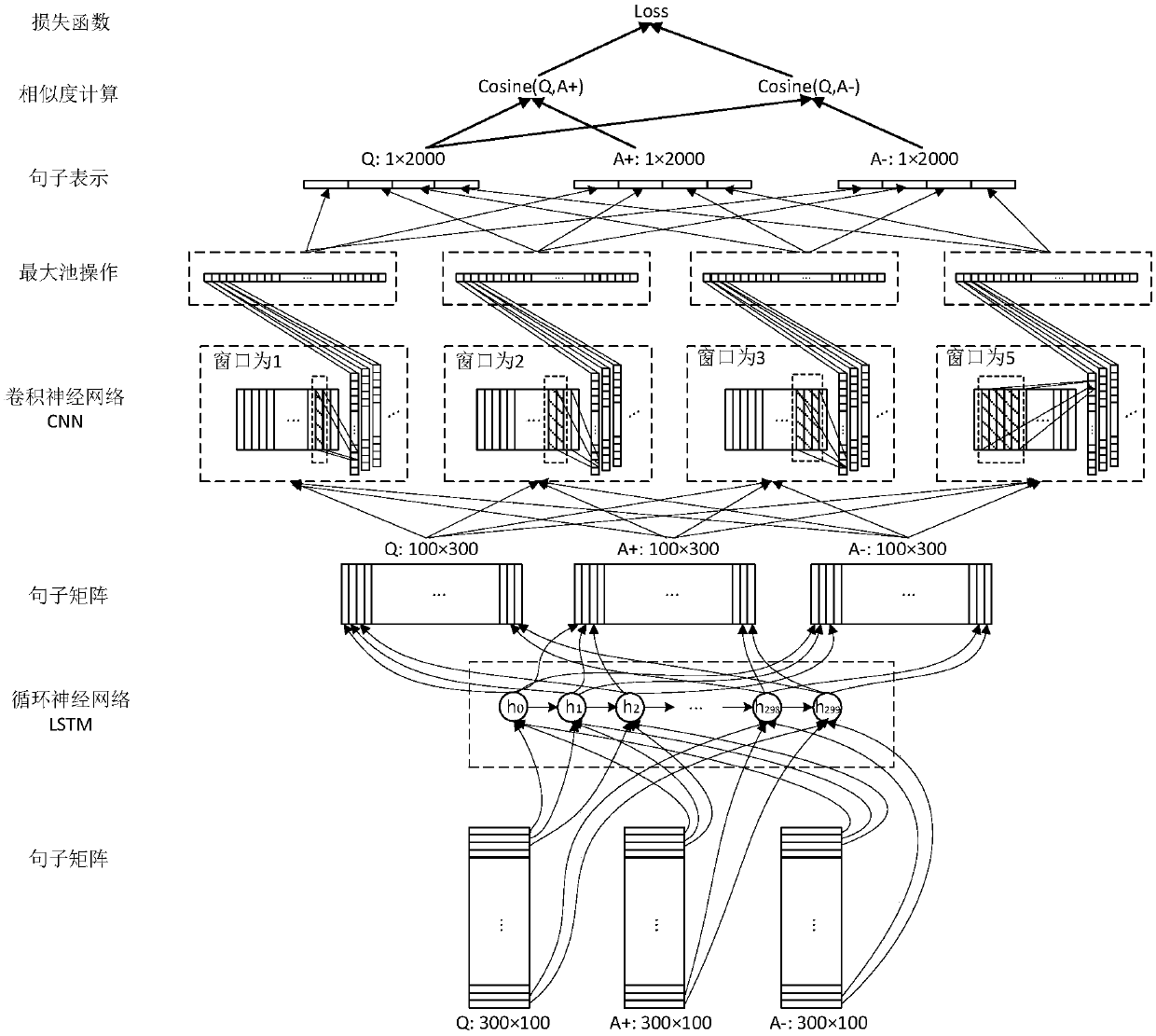

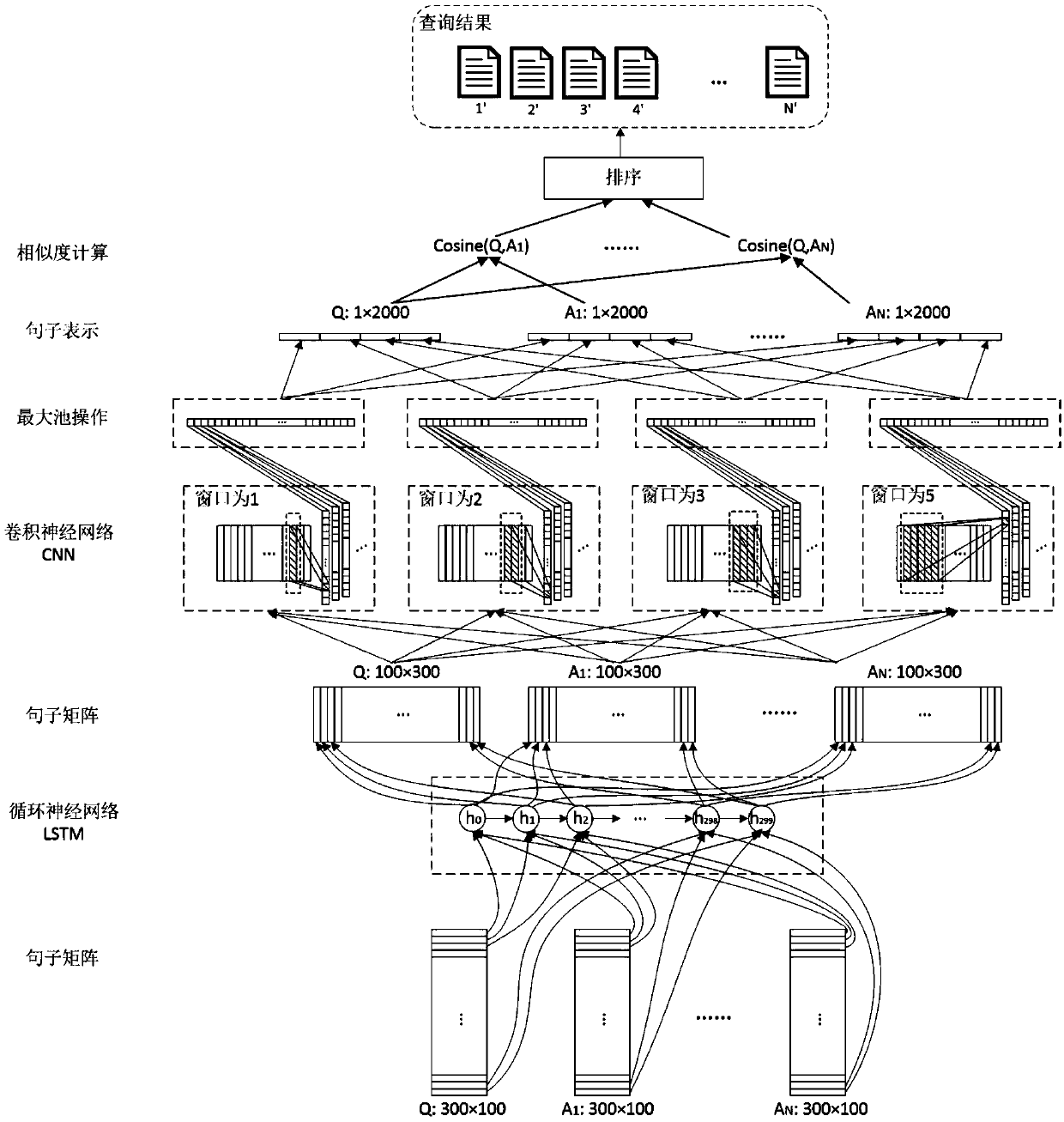

Semantic similarity calculation method based on deep learning

Active | CN110348014A | Avoid vanishing gradients | Improve feature extraction | Semantic analysis | Character and pattern recognition | Data set | Network model

The invention discloses a semantic similarity calculation method based on deep learning, and relates to the field of semantic similarity calculation. The method comprises the following steps: step 1, constructing a training data set and preprocessing the training data to obtain one-hot sparse vectors; step 2, constructing a semantic similarity calculation network model comprising N layers of BI-LSTM networks, a residual network, a similarity matrix, a convolutional neural network (CNN), a pooling layer and a fully connected layer; step 3, inputting the one-hot sparse vectors into the network model and training its parameters on the training data set to complete supervised training; and step 4, inputting a text to be tested into the trained network model, judging whether it is a similar text, and outputting the result. The semantic similarity calculation network model comprises a multi-layer BI-LSTM network, a residual network, a CNN, a pooling layer and a fully connected layer. Because the model uses both a BI-LSTM network and a CNN, and adds a residual network to the BI-LSTM network, the vanishing gradient problem caused by the multi-layer network is solved and the feature extraction capability of the model is enhanced.

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

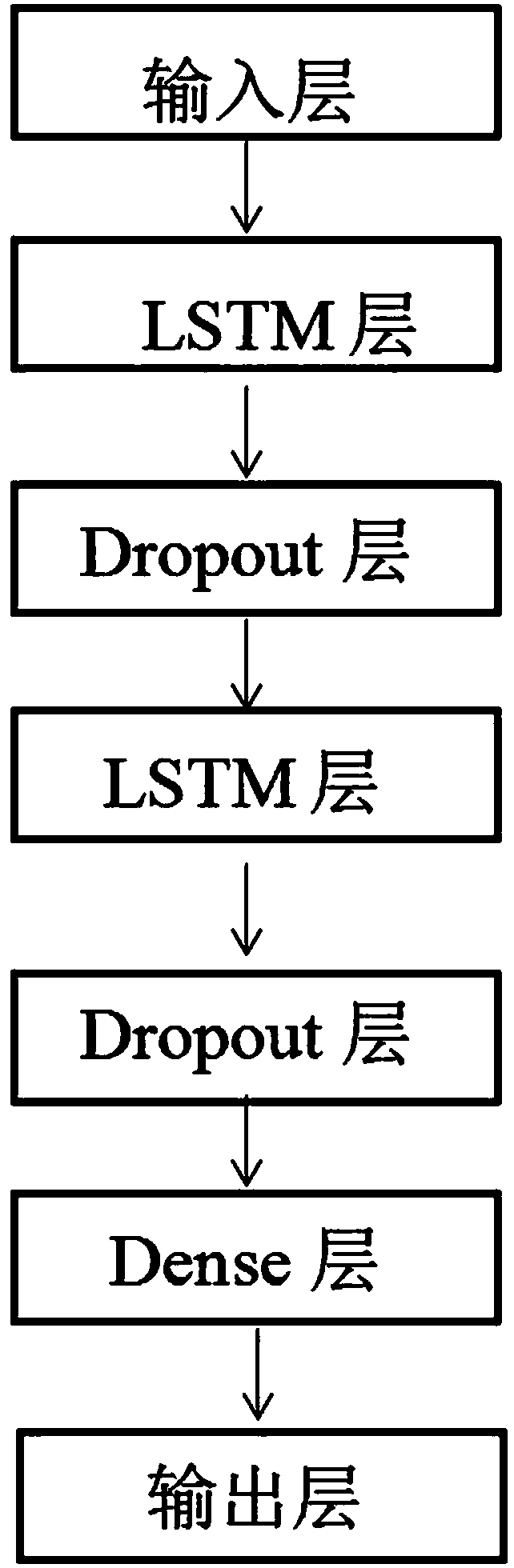

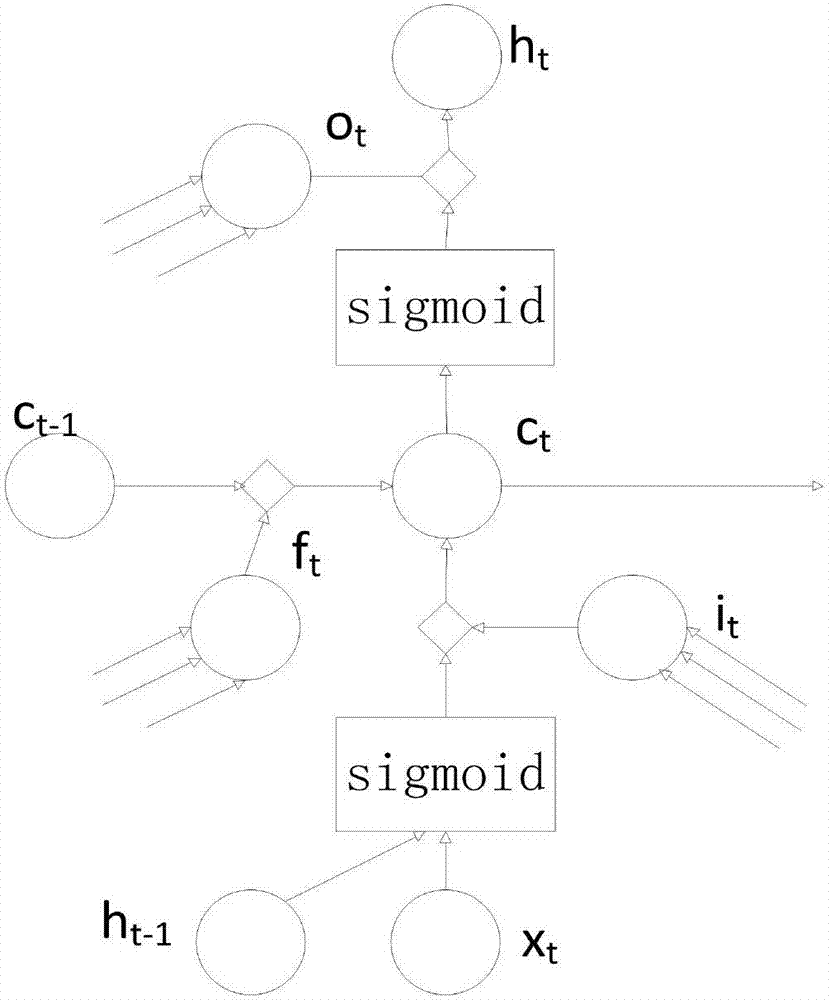

Multi-dimensional time series forecasting method of total grain yield based on LSTM neural network

Inactive | CN109002917A | Avoid vanishing gradients | Good forecast | Forecasting | Neural learning methods | Data information | Business forecasting

The invention discloses a multi-dimensional time series forecasting method for total grain yield based on an LSTM neural network. The output data of many kinds of agricultural products are used as the input variables of the LSTM neural network, and a multi-dimensional time series forecasting model based on the LSTM neural network is established. Because the output data of many kinds of agricultural products are considered and stacked LSTM layers are used to process the sequence data, the vanishing gradient problem is avoided, and the method offers good forecasting performance, high precision and good applicability.

Owner:SHANDONG EVAYINFO TECH CO LTD

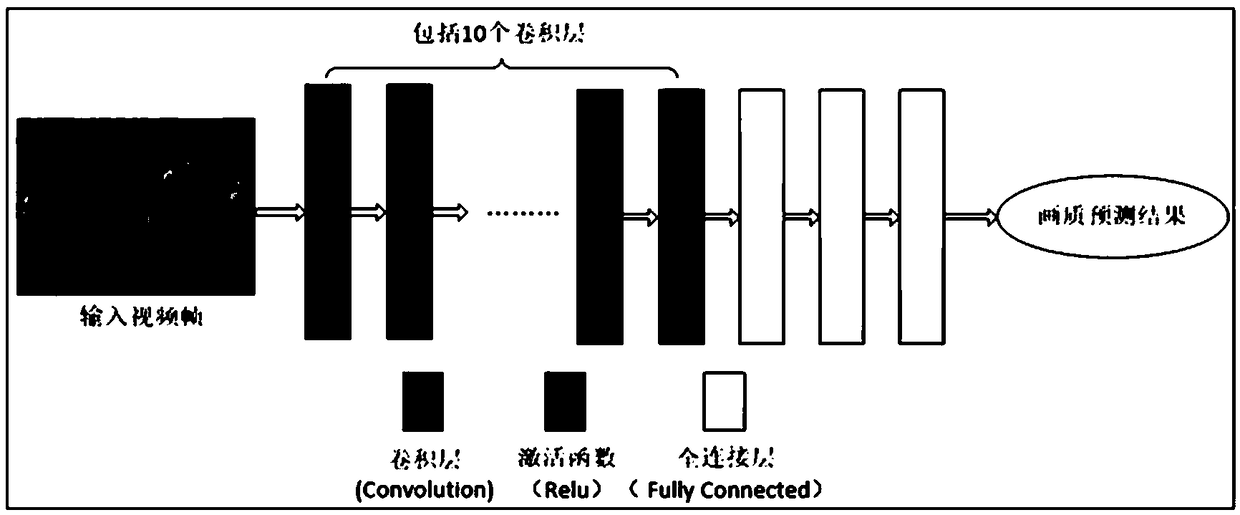

Video compression artifact adaptive removing method based on deep learning

Active | CN109257600A | Easy to deepen the structure | Deepen the structure | Digital video signal modification | Multiplexing | Imaging quality

The invention discloses an adaptive video compression artifact removal method based on deep learning. It adopts a deep densely connected convolutional network to automatically extract the compression features of a video frame, which effectively avoids the shortcomings of manually designed filters in traditional methods. The invention acts on the post-processing stage of the video, and does not affect the processing flow or the real-time performance of existing video coding and decoding algorithms. A new image quality prediction model is proposed to automatically select among compression artifacts of different intensities, which gives the method strong adaptive ability. Using a deep densely connected convolutional network to remove video compression artifacts effectively alleviates the vanishing gradient problem, allows a deeper network structure, and enhances the network's nonlinear expressive ability. At the same time, the network makes full use of intermediate-layer features, which not only enhances feature propagation and reuse but also greatly reduces the number of network parameters.

Owner:福建帝视信息科技有限公司

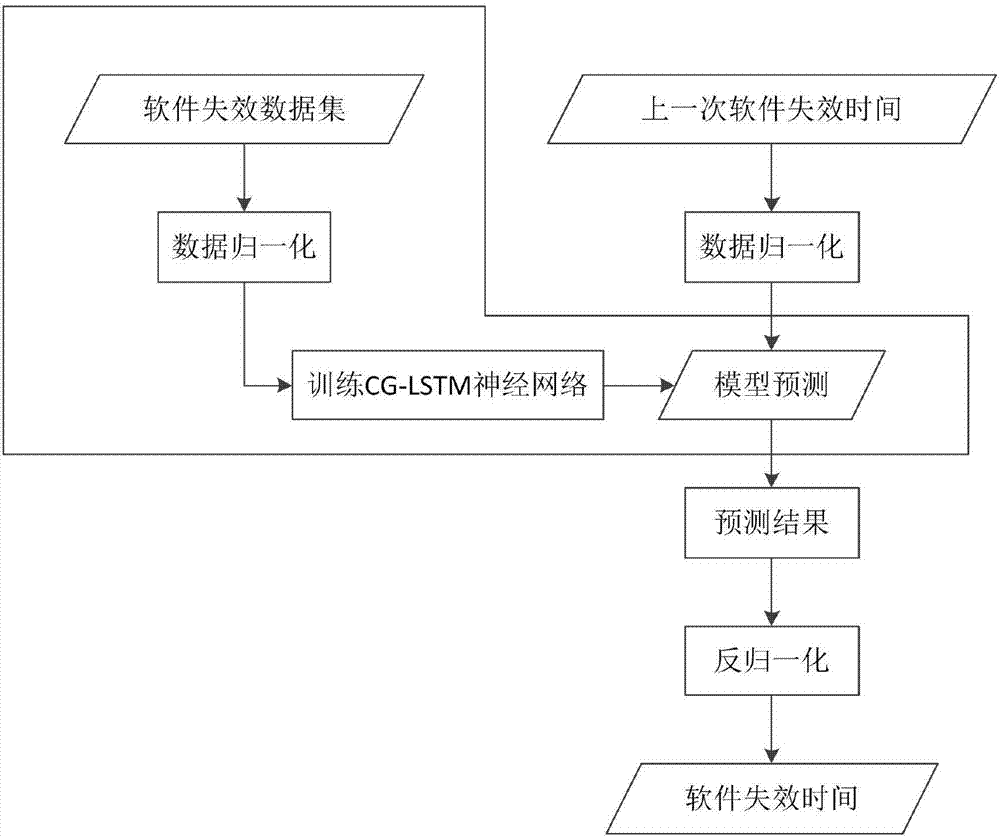

Software reliability prediction model based on deep CG-LSTM neural network

Active | CN107544904A | Improve accuracy | Improve forecast accuracy | Software testing/debugging | Data set | Nerve network

The invention discloses a software reliability prediction model based on a deep CG-LSTM neural network, and belongs to the technical field of computer software. The model comprises a model training part and a model prediction part. The model training part normalizes a software failure data set, and trains the software reliability prediction model based on the deep CG-LSTM neural network on the normalized software failure data set to obtain a prediction model. The model prediction part acquires the current software failure data, normalizes it, and inputs it into the obtained prediction model to predict future software failures and obtain a prediction result. The model overcomes the vanishing gradient problem and poor generalization ability of software reliability prediction models based on traditional neural networks, and offers higher prediction accuracy and wider applicability.

Owner:HARBIN ENG UNIV

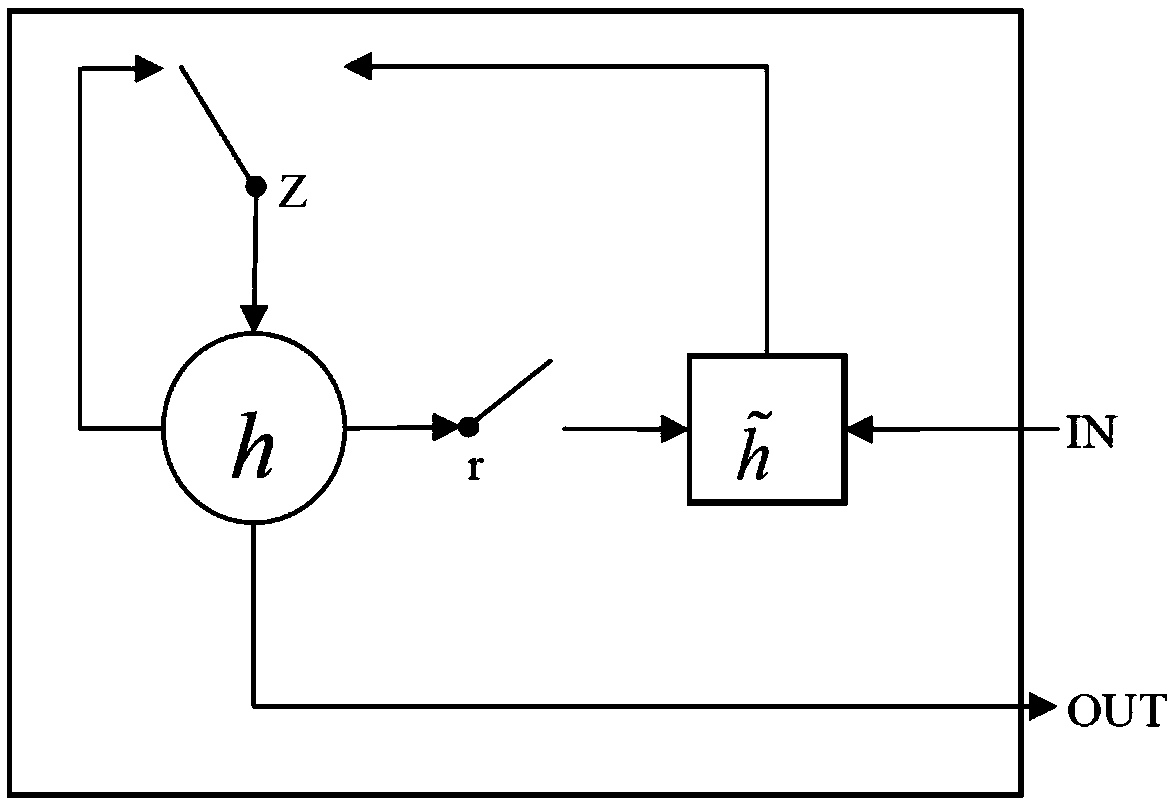

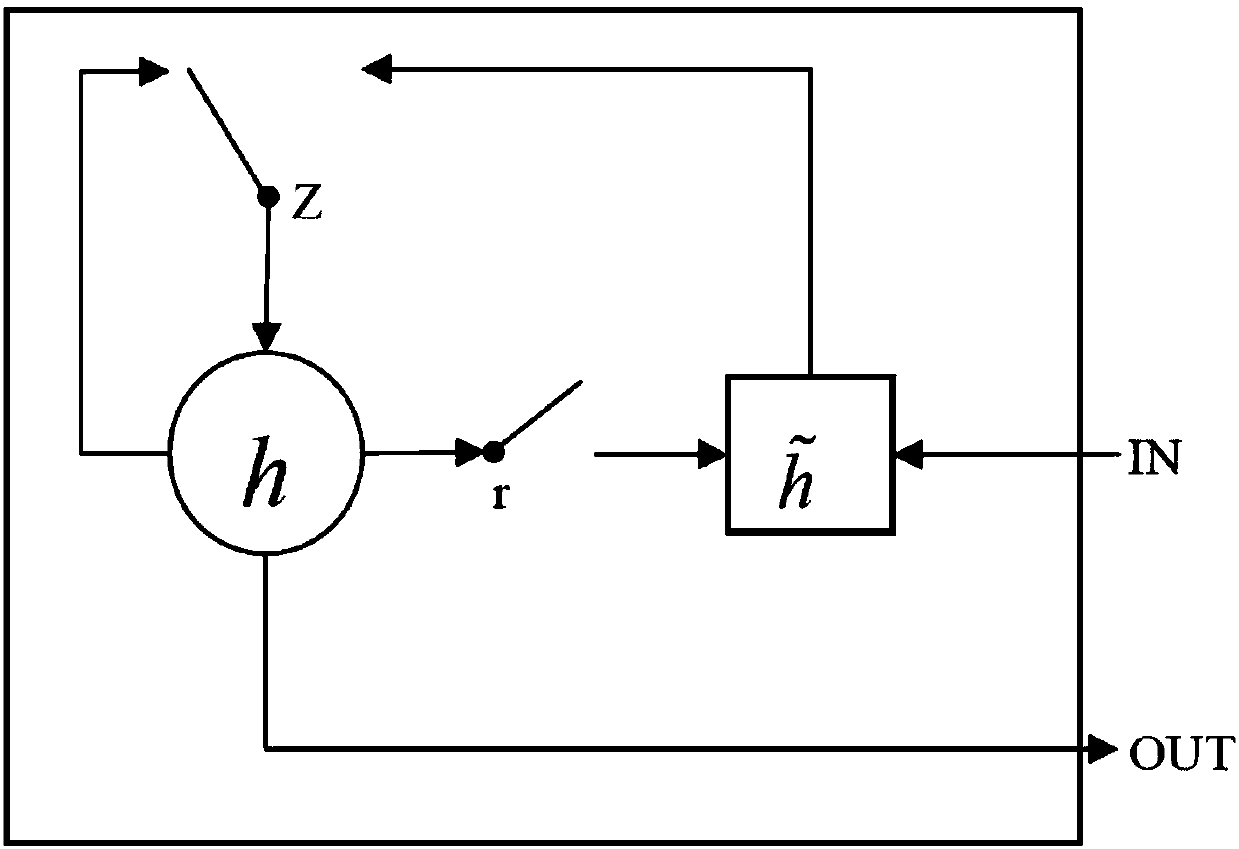

GRU based recurrent neural network multi-label learning method

Pending | CN108319980A | Effective memory | Improve accuracy | Character and pattern recognition | Neural learning methods | Algorithm | Multi-label classification

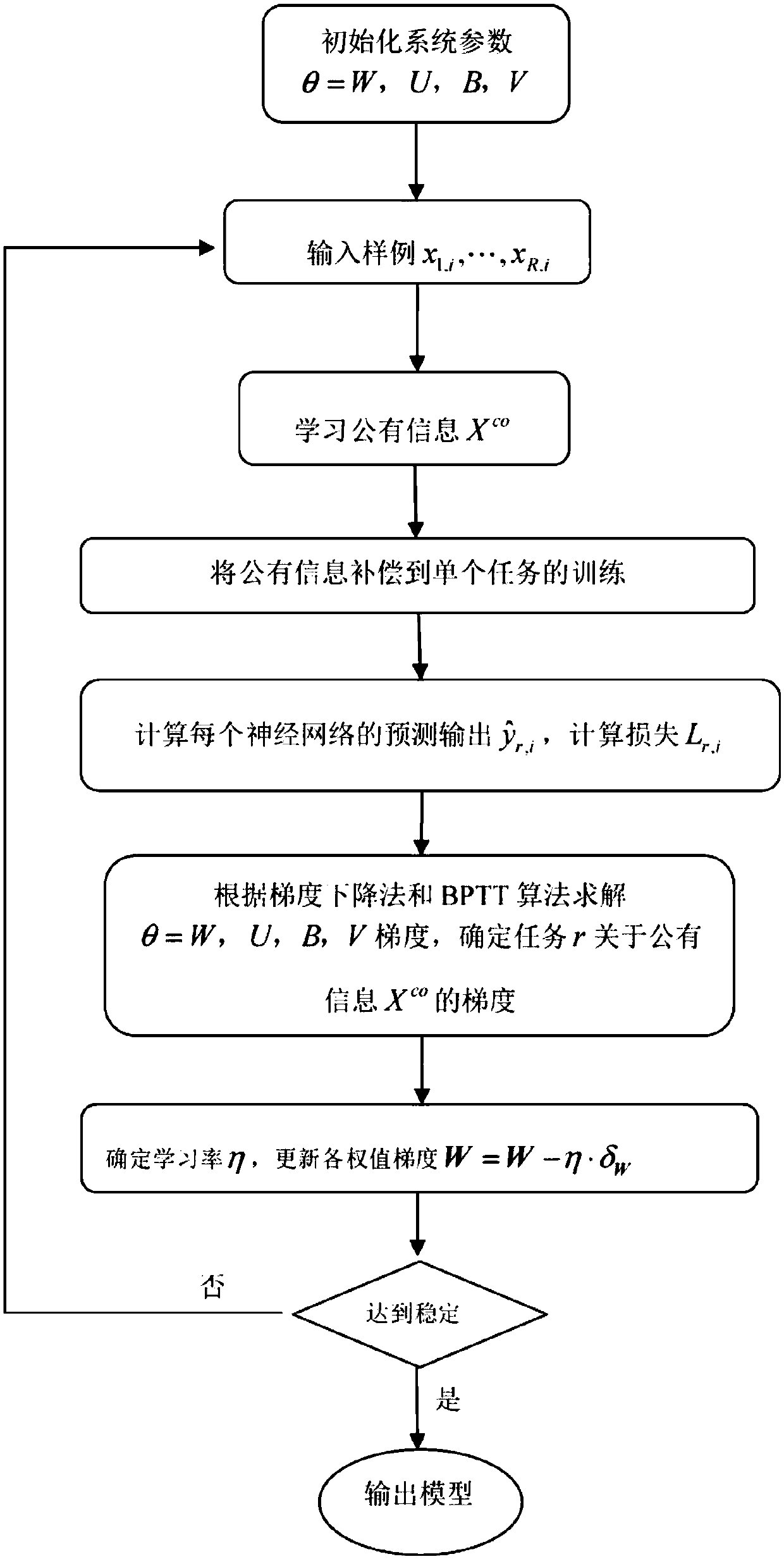

The invention provides a GRU-based recurrent neural network multi-label learning method, which comprises the following steps: S1, initializing the system parameters $\theta = (W, U, B)$; S2, inputting samples $\{x_i, y_i\}_{i=1}^{N}$ and calculating the hidden state $h_T$ output by the RNN (recurrent neural network) at each moment, where the sample $x_i \in \mathbb{R}^{M \times 1}$, $y_i$ is the multi-label vector of the sample $x_i$, and $y_i \in \mathbb{R}^{C \times 1}$; S3, calculating the context vector $h_T$ and the output $z_i$ of the output layer; S4, calculating the predicted output $\hat{y}_i$ and the loss $L_i$, and determining the objective function $J$; S5, solving the gradient of the system parameters $\theta = (W, U, B)$ using gradient descent and the BPTT (backpropagation through time) algorithm; S6, determining a learning rate $\eta$ and updating each weight as $W = W - \eta\,\delta W$; S7, judging whether the neural network has stabilized, and if so executing step S8, otherwise returning to step S2 and iteratively updating the model parameters; and S8, outputting the optimized model. The invention makes full use of the RNN to obtain an effective feature representation of the sample, which improves the accuracy of multi-label classification. In addition, the vanishing gradient problem is unlikely to occur during backpropagation.

Owner:HRG INT INST FOR RES & INNOVATION
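As a rough illustration of the building blocks named in the abstract above, the following Python sketch shows one scalar GRU step and the weight update from step S6. It is not the patented method: the parameter names (`Wz`, `Uz`, `bz`, and so on) are hypothetical, all quantities are scalars rather than matrices, and the state update uses one common GRU convention, `h = (1 - z) * h_prev + z * h_cand` (some formulations swap the roles of `z` and `1 - z`).

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def gru_step(x: float, h_prev: float, p: dict) -> float:
    """One scalar GRU step; p maps hypothetical parameter names to floats."""
    z = sigmoid(p["Wz"] * x + p["Uz"] * h_prev + p["bz"])   # update gate
    r = sigmoid(p["Wr"] * x + p["Ur"] * h_prev + p["br"])   # reset gate
    h_cand = math.tanh(p["Wh"] * x + p["Uh"] * (r * h_prev) + p["bh"])
    # When z is close to 0 the previous state is copied almost unchanged,
    # so the derivative of h with respect to h_prev stays close to 1.
    return (1.0 - z) * h_prev + z * h_cand


def sgd_update(params: dict, grads: dict, eta: float) -> dict:
    """Step S6 of the abstract: W <- W - eta * dW for every parameter."""
    return {name: value - eta * grads[name] for name, value in params.items()}


names = ["Wz", "Uz", "bz", "Wr", "Ur", "br", "Wh", "Uh", "bh"]
params = dict.fromkeys(names, 0.0)

h_half = gru_step(1.0, 0.8, params)        # all-zero parameters: z = 0.5
closed = dict(params, bz=-20.0)            # strongly negative update-gate bias
h_kept = gru_step(1.0, 0.8, closed)        # z is nearly 0, state is carried over

print(h_half, h_kept)
```

The near-identity path through the update gate is the usual explanation for why gated units are less prone to vanishing gradients than a plain recurrent unit.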

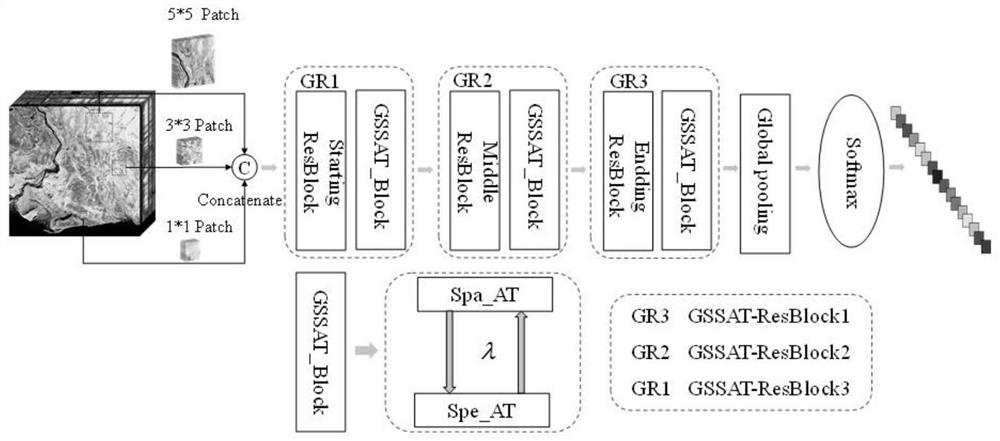

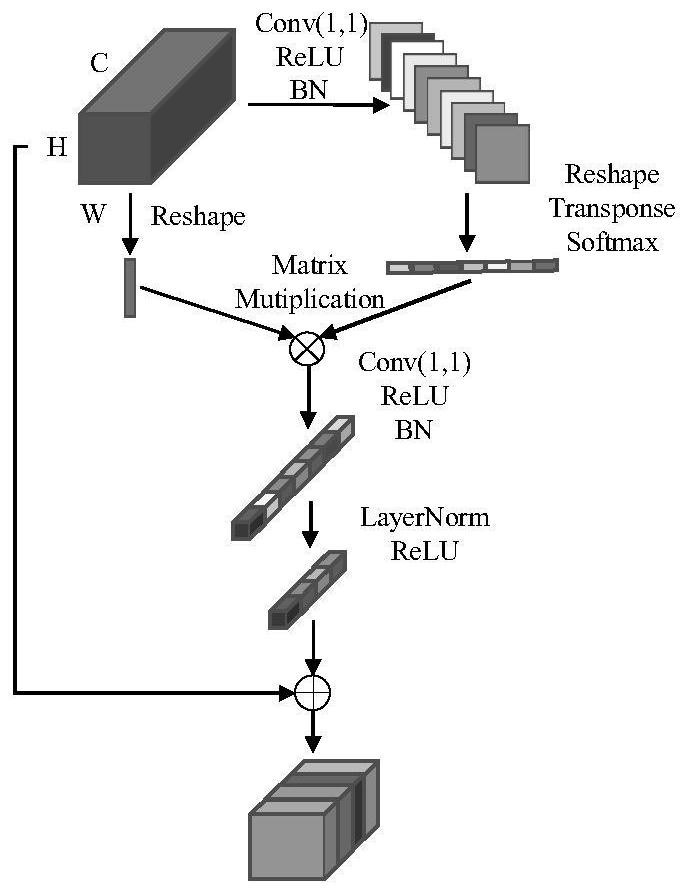

Hyperspectral image classification method based on global attention residual network

Active | CN112836773A | Fast convergence | Rich spatial-spectral features | Climate change adaptation | Character and pattern recognition | Network Convergence | Hyperspectral image classification

The invention discloses a hyperspectral image classification method based on a global attention residual network. The method comprises: constructing an overall network comprising a multi-scale feature extraction network, a global attention module and an improved residual network module; performing multi-scale feature extraction to extract hierarchical features of the hyperspectral image; constructing, in the global attention module, the spatial and spectral dependencies among global pixels by combining a spatial attention module and a spectral attention module; fusing the improved residual network module and the global attention module to form a novel global attention residual network; and sending the output through global pooling into a classifier for final classification and outputting the result. By introducing multi-scale receptive fields and the global attention module, the method obtains rich spatial-spectral features, and the improved residual network alleviates the vanishing gradient problem and accelerates network convergence, which improves classification precision and ensures a good and stable classification effect.

Owner:HOHAI UNIV

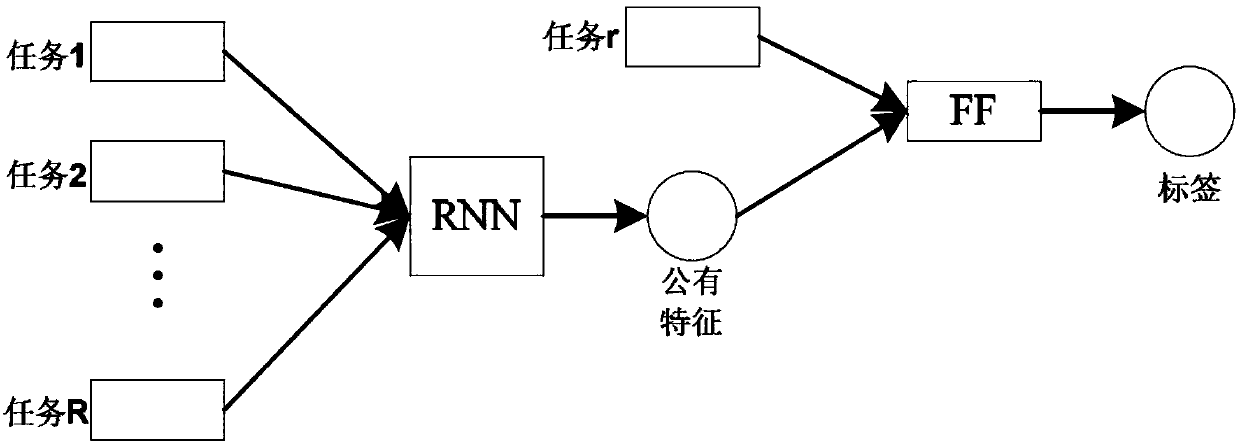

RNN-based multi-task learning method

Pending | CN108197701A | Achieve sharing | Avoid vanishing gradients | Neural architectures | Neural learning methods | Model parameters | Multi-task learning

The invention provides an RNN-based multi-task learning method. The method includes the following steps: S1, initializing the system parameters $\theta = (W, U, B, V)$; S2, inputting samples $x_{1,i}, \ldots, x_{R,i}$, learning the public information $X_{co}$, and feeding the public information into the training of the individual tasks; S3, calculating the predicted label vector output (as given in the description) of each neural network and calculating the loss $L_r$ of task $\langle r, i \rangle$; S4, solving the gradient of $\theta = (W, U, B, V)$ using gradient descent and the BPTT algorithm, and determining the gradient of task $r$ with respect to the public information $X_{co}$; S5, determining a learning rate $\eta$ and updating each weight as $W = W - \eta\,\delta W$; S6, judging whether the neural network has stabilized, and if so performing step S7, otherwise returning to step S2 and iteratively updating the model parameters; and S7, outputting the optimized model. The invention makes effective use of the RNN to learn features shared among multiple tasks and feeds those shared features into the learning of the individual tasks, so that information sharing is achieved. In addition, introducing a GRU structure into the RNN effectively solves the vanishing gradient problem.

Owner:HRG INT INST FOR RES & INNOVATION

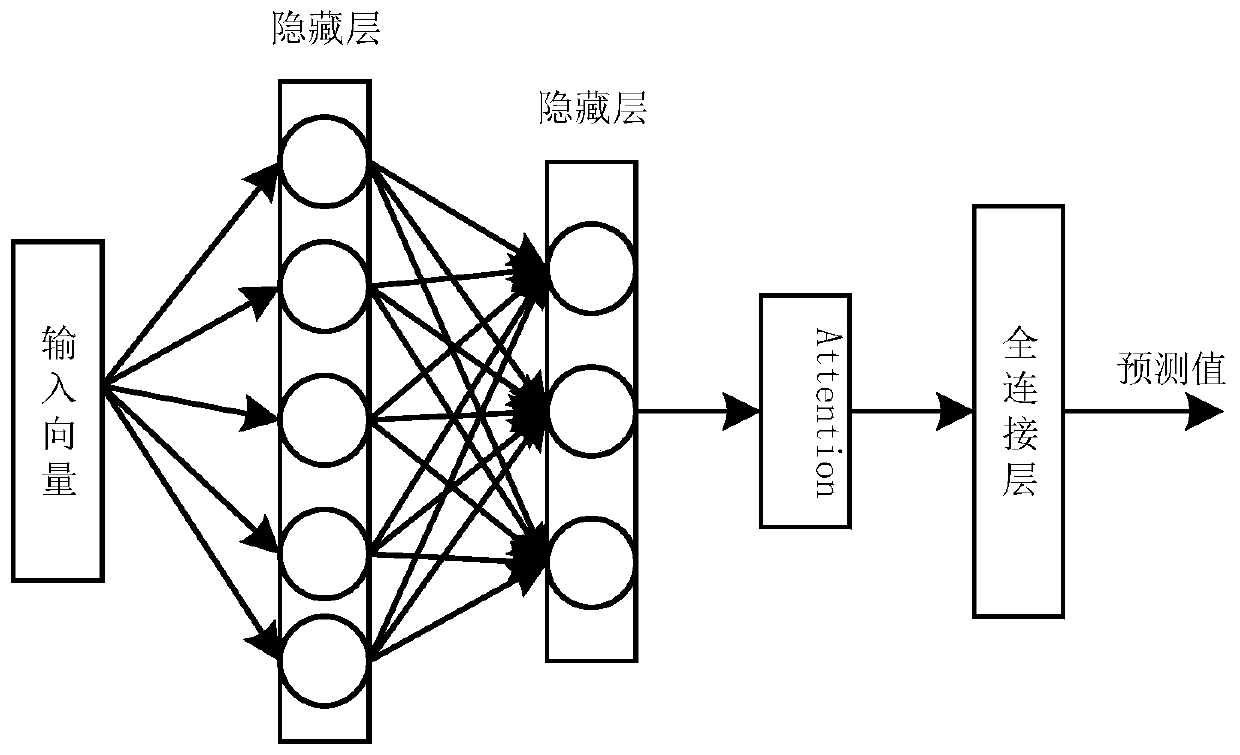

Short-term residence load prediction method based on Attention-GRU

Active | CN110619420A | Solve gradient explosion | Solve vanishing gradient | Forecasting | Character and pattern recognition | Prediction algorithms | Density based

The invention discloses a short-term residential load prediction method based on Attention-GRU. The method comprises the following steps: in the first step, data preprocessing, in which load prediction with a deep learning model begins by preparing data in a suitable format and evaluating the consistency of daily power distribution using density-based spatial clustering of applications with noise (DBSCAN); in the second step, constructing a training set and a test set. The method combines two artificial intelligence algorithms from natural language processing to construct a short-term residential load prediction model. The model uses the GRU algorithm, which not only overcomes the shortcomings of the recurrent neural networks used in traditional intelligent prediction algorithms but also solves the exploding and vanishing gradient problems of RNNs. The attention layer assigns the feature weights learned by the model to the input vector at the next time step, highlighting the influence of key features on the predicted load.

Owner:GUANGDONG UNIV OF TECH

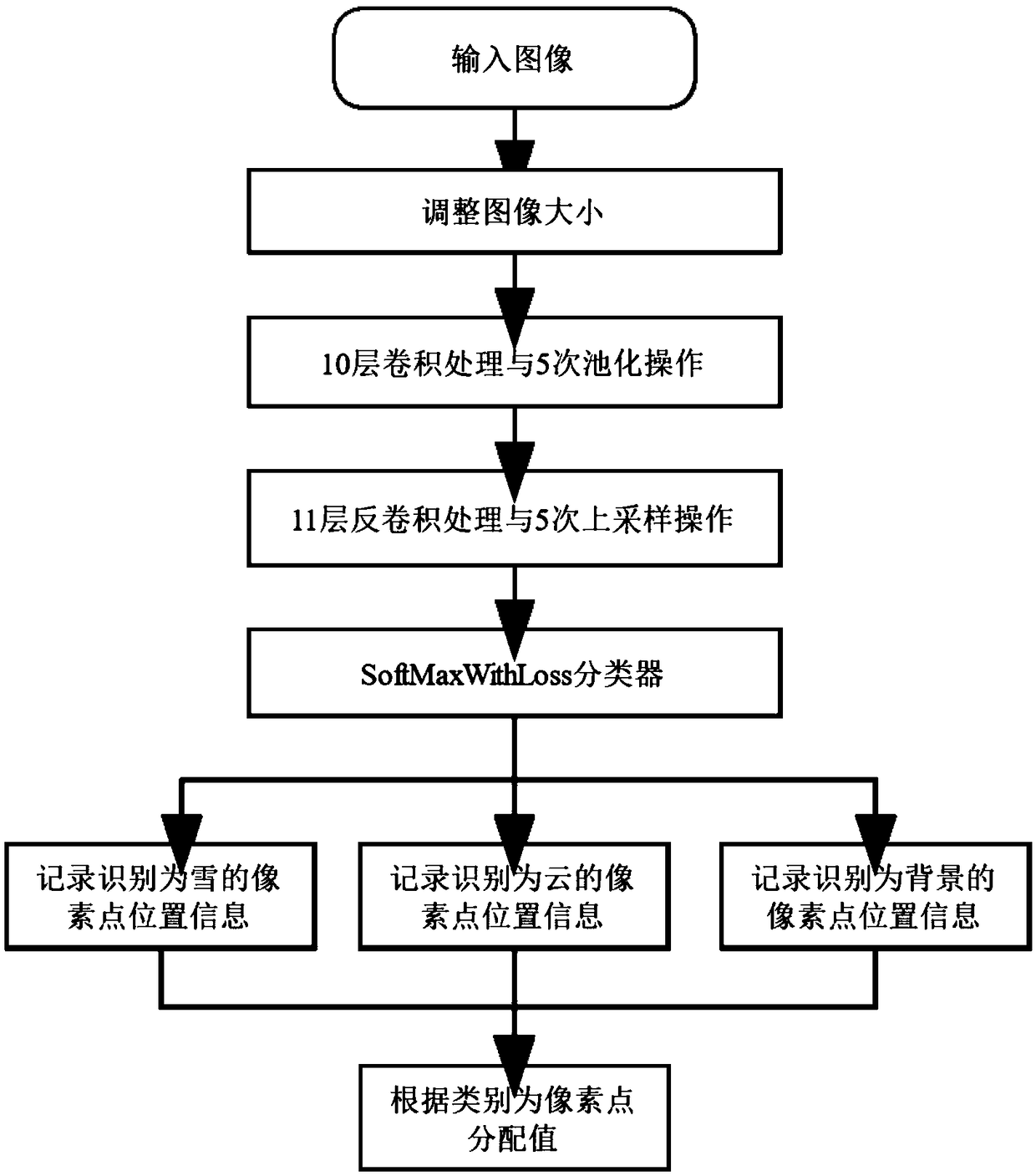

A remote sensing image cloud identification method based on deep learning

Inactive | CN109255294A | Realize automatic identification | Simple structure | Scene recognition | Neural architectures | Feature extraction | Network structure

The invention discloses a remote sensing image cloud identification method based on deep learning. The method comprises the following steps: automatically acquiring remote sensing cloud images; making the remote sensing images into training sets, expanding the existing training sets, and labeling the training sets; constructing a deep convolutional neural network, based on the SegNet network structure, that has multi-scale convolution kernels and high symmetry and finally restores the feature map through deconvolution layers; adopting staged training to avoid overfitting, underfitting and vanishing gradients during network training; and, after training, extracting the features of the remote sensing image using the obtained weight file and carrying out cloud detection at the pixel level. The deep convolutional neural network for remote sensing image cloud identification improves retrieval accuracy by using multi-scale convolution and high symmetry.

Owner:CHINA UNIV OF GEOSCIENCES (BEIJING)

Video semantic segmentation method based on ConvLSTM convolutional neural network

Active | CN111860386A | Improve accuracy | Avoid vanishing gradients | Character and pattern recognition | Neural architectures | Data set | Network structure

The invention relates to a video semantic segmentation method based on a ConvLSTM convolutional neural network. The method comprises the following steps: A, constructing and training a video semantic segmentation network: (1) acquiring a data set; (2) constructing the video semantic segmentation network; (3) training the video semantic segmentation network; (4) testing the segmentation accuracy of the video semantic segmentation network; B, performing video semantic segmentation with the trained video semantic segmentation network. The ConvLSTM module accounts for the correlation between adjacent video frames, which improves the accuracy of video semantic segmentation. In addition, a densely connected atrous spatial pyramid pooling module with densely connected blocks is adopted, so features and gradients are transmitted more effectively, the vanishing gradient problem in deep network training is solved, multi-scale context information can be aggregated systematically, and the receptive field is enlarged.

Owner:SHANDONG UNIV

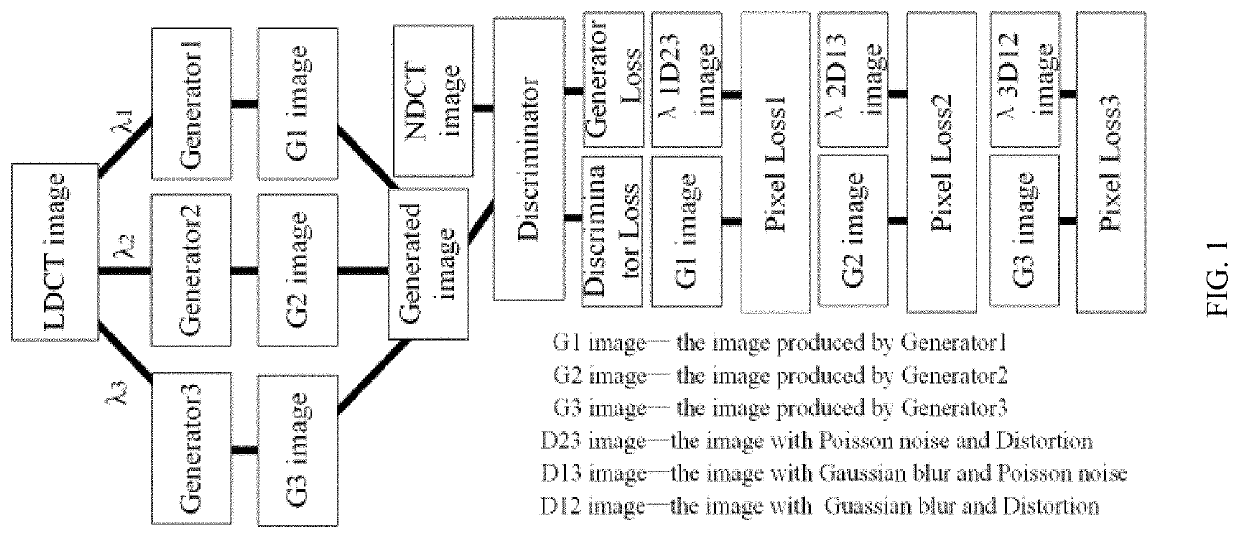

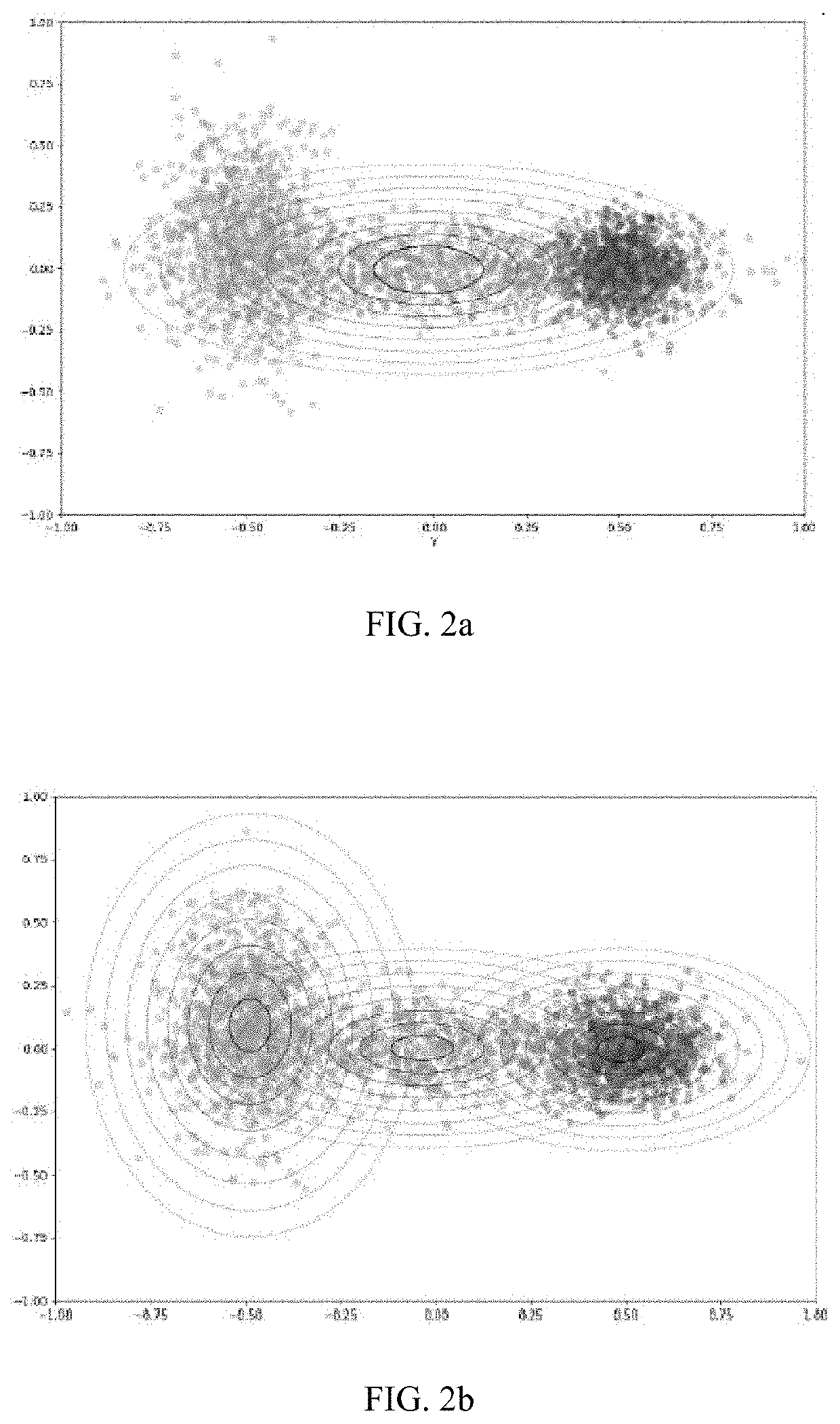

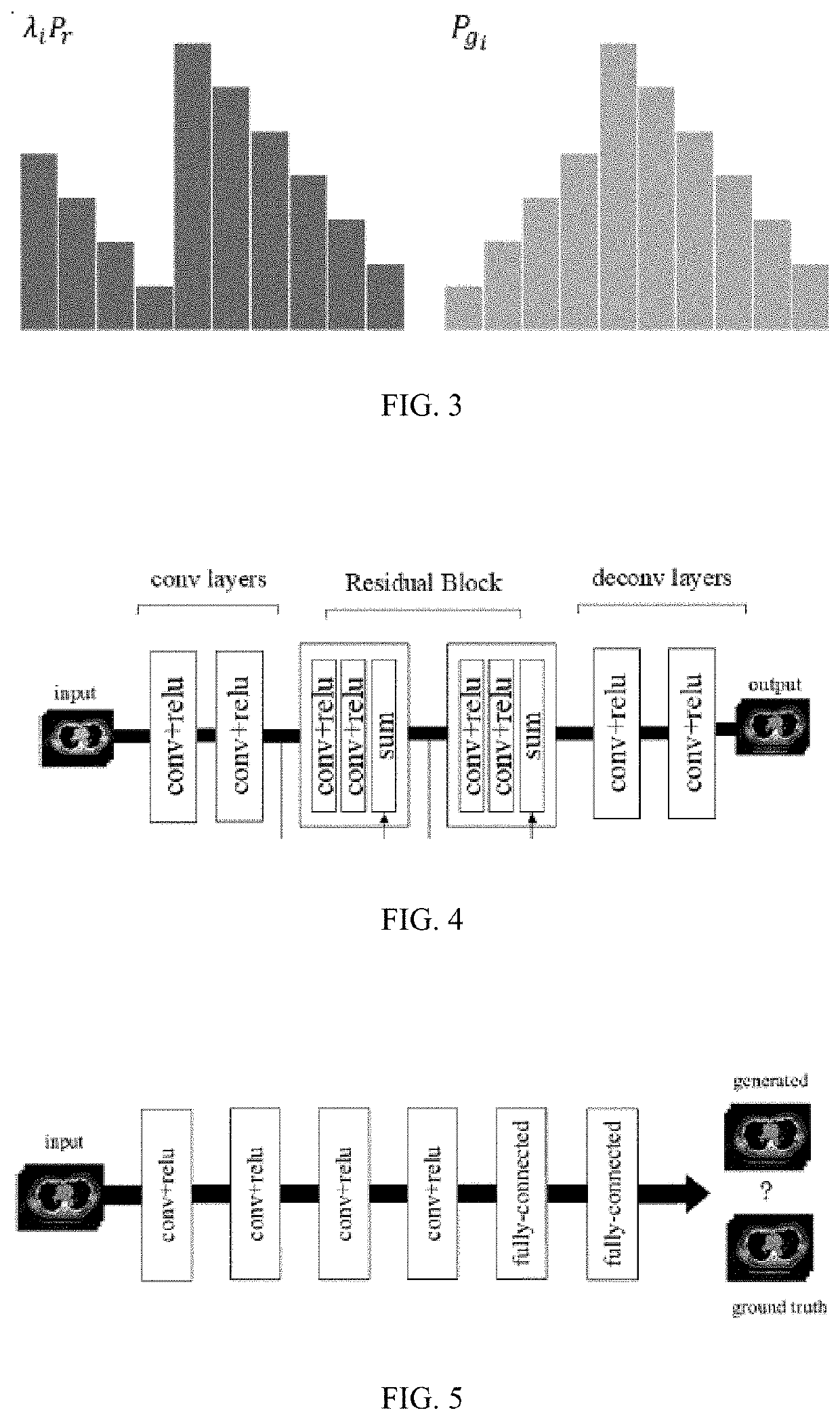

Learning Method of Generative Adversarial Network with Multiple Generators for Image Denoising

Active | US20220092742A1 | Accelerate network training | Improve robustness | Image enhancement | Image analysis | Image denoising | Algorithm

The present invention relates to a learning method of a generative adversarial network (GAN) with multiple generators for image denoising, and provides a generative adversarial network with three generators. The generators are used for removing Poisson noise, Gaussian blur noise and distortion noise respectively to improve the quality of low-dose CT (LDCT) images, and they adopt the residual network structure. The mapped shortcut connections used in the residual network avoid the vanishing gradient problem in a deep neural network and accelerate network training. Training a GAN has always been difficult because of the unreasonable measure between the generated distribution and the real distribution. The present invention stabilizes training and enhances the robustness of the trained models by limiting the spectral norm of the weight matrix.

Owner:UNIV OF SHANGHAI FOR SCI & TECH +1
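Limiting the spectral norm of a weight matrix, as mentioned in the abstract above, is commonly done by estimating the largest singular value with power iteration and dividing the matrix by it. The following pure-Python sketch shows that generic technique under stated assumptions: matrices are lists of rows, the matrix is non-zero, and the all-ones starting vector is not orthogonal to the dominant singular direction. It is not the procedure claimed in the patent, and the function names are illustrative.

```python
import math


def matvec(m, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def transpose(m):
    return [list(col) for col in zip(*m)]


def norm(v):
    return math.sqrt(sum(a * a for a in v))


def spectral_norm(w, iterations=50):
    """Estimate the largest singular value of w by power iteration on w^T w."""
    wt = transpose(w)
    v = [1.0] * len(w[0])
    for _ in range(iterations):
        v = matvec(wt, matvec(w, v))
        length = norm(v)
        v = [a / length for a in v]
    return norm(matvec(w, v))


def spectral_normalize(w, iterations=50):
    """Rescale w so that its largest singular value is approximately 1."""
    sigma = spectral_norm(w, iterations)
    return [[a / sigma for a in row] for row in w]


w = [[3.0, 0.0], [0.0, 1.0]]          # singular values 3 and 1
sigma = spectral_norm(w)
w_sn = spectral_normalize(w)

print(sigma)
print(w_sn)
```

Deep learning libraries typically run only one or a few power-iteration steps per training update and reuse the vector between updates; the fixed 50 iterations here are chosen for clarity rather than efficiency.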

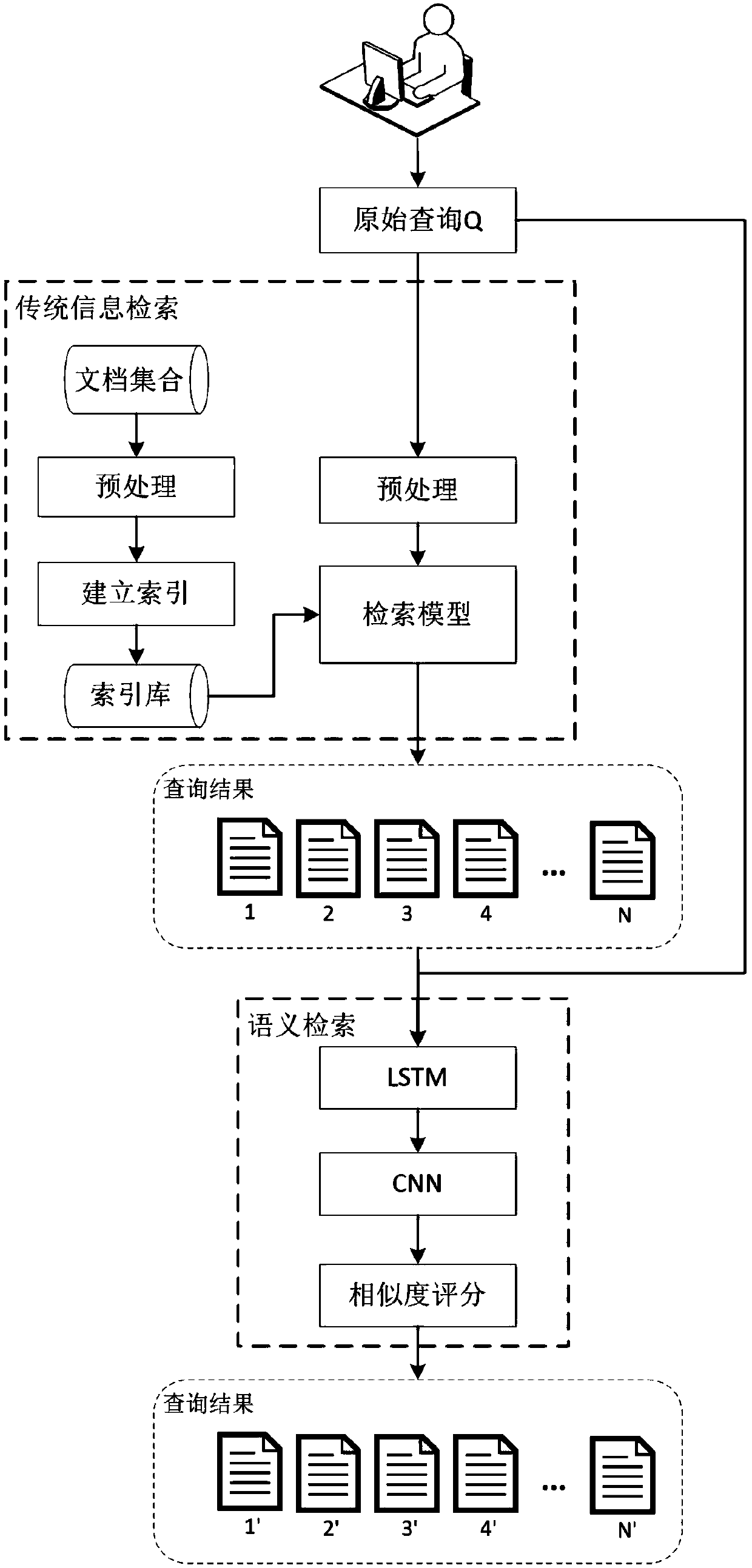

Document retrieval method for searching topic type query in TED speech

Active | CN109635083A | Improve performance | Increase constraints | Digital data information retrieval | Special data processing applications | User input | Network model

The invention relates to the technical field of information retrieval, and provides a semantic document retrieval method for searching topic-type queries in TED speech. The method comprises: training a neural network model on existing queries and documents to learn the model parameters; when a user inputs a query, using a query likelihood retrieval model to obtain a preliminary retrieval result; and inputting the preliminary retrieval result into the neural network model with fixed parameters for re-ranking to determine the final retrieval result. The method solves the problem that traditional retrieval methods perform poorly on topic-type queries because they lack a semantic connection between queries and documents: it models the topic-type query and the speech document separately with a neural network and obtains the semantic-level correlation between the query and the document. In the neural network part, a recurrent neural network and a convolutional neural network are connected in series, and the currently popular LSTM module is adopted to address the vanishing gradient problem.

Owner:UNIV OF SCI & TECH BEIJING

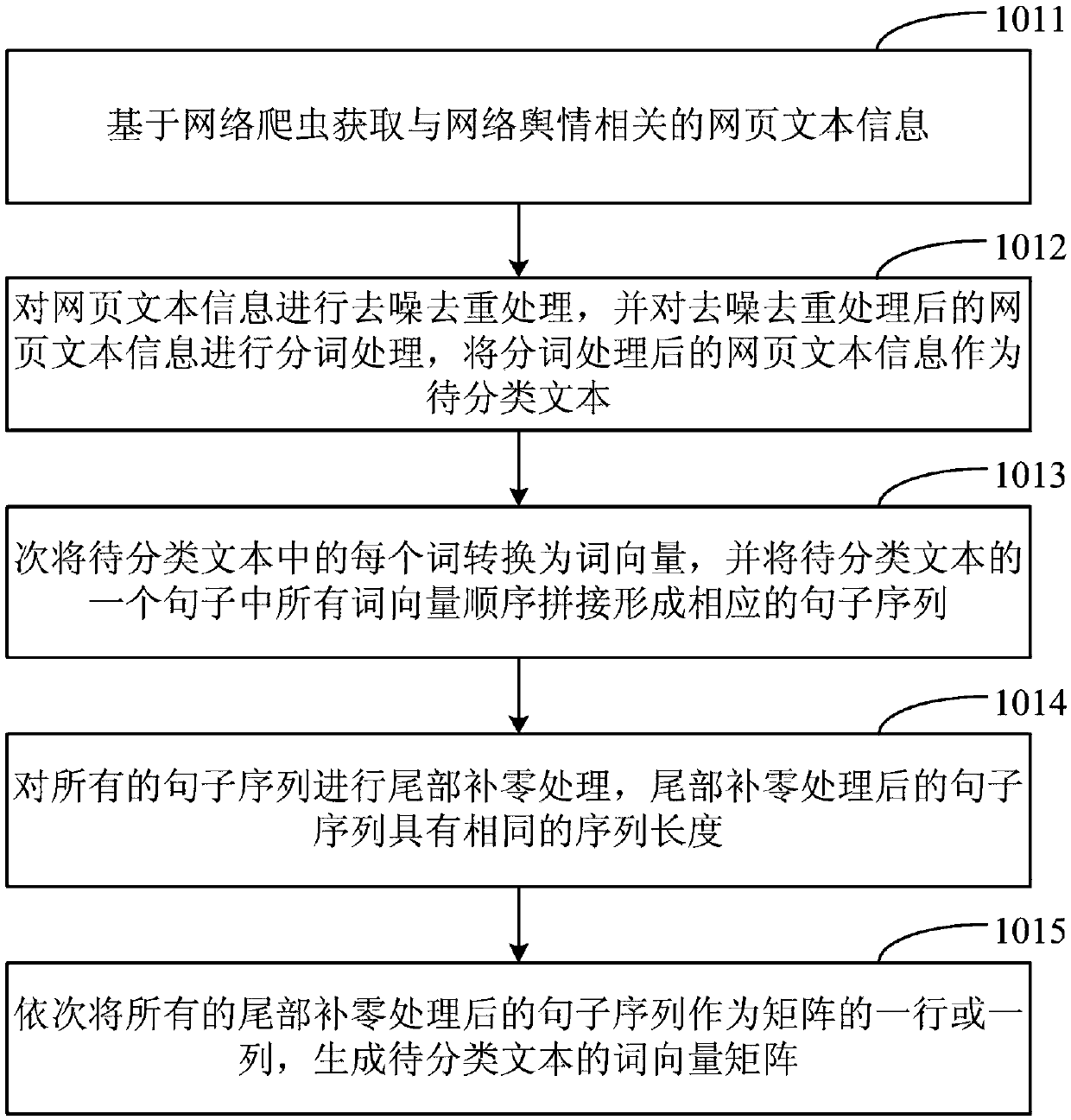

Text classification method, device, medium and apparatus based on convolution neural network

Active | CN109543029A | Easy to train | Add depth | Neural architectures | Energy efficient computing | Text categorization | Classification methods

The invention provides a text classification method, device, medium and apparatus based on a convolutional neural network. The method comprises the following steps: obtaining a word vector matrix of a text to be classified that relates to online public opinion; constructing an initial feature matrix from the word vector matrix, using it as the input of the trained text classification model, inputting it to the first region block in sequence, and determining the output of that region block, where the input of each hidden layer in a region block comes from the outputs of all other hidden layers in the region block; taking the output of the current region block as the input of the next region block until the outputs of all region blocks are determined; and transmitting the outputs of all region blocks to the fully connected layer, where the classification result is determined from them. The network structure adopted in the method makes the transmission of features and gradients through the network more effective, avoids the vanishing gradient problem caused by layer-by-layer transmission of loss function information, and allows the network depth to be increased while still avoiding that problem.

Owner:PING AN TECH (SHENZHEN) CO LTD

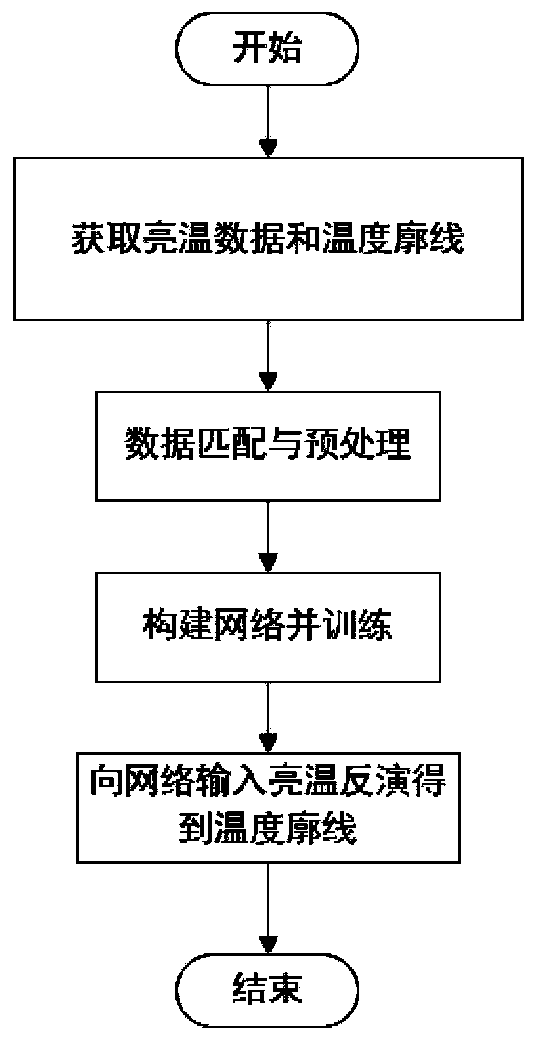

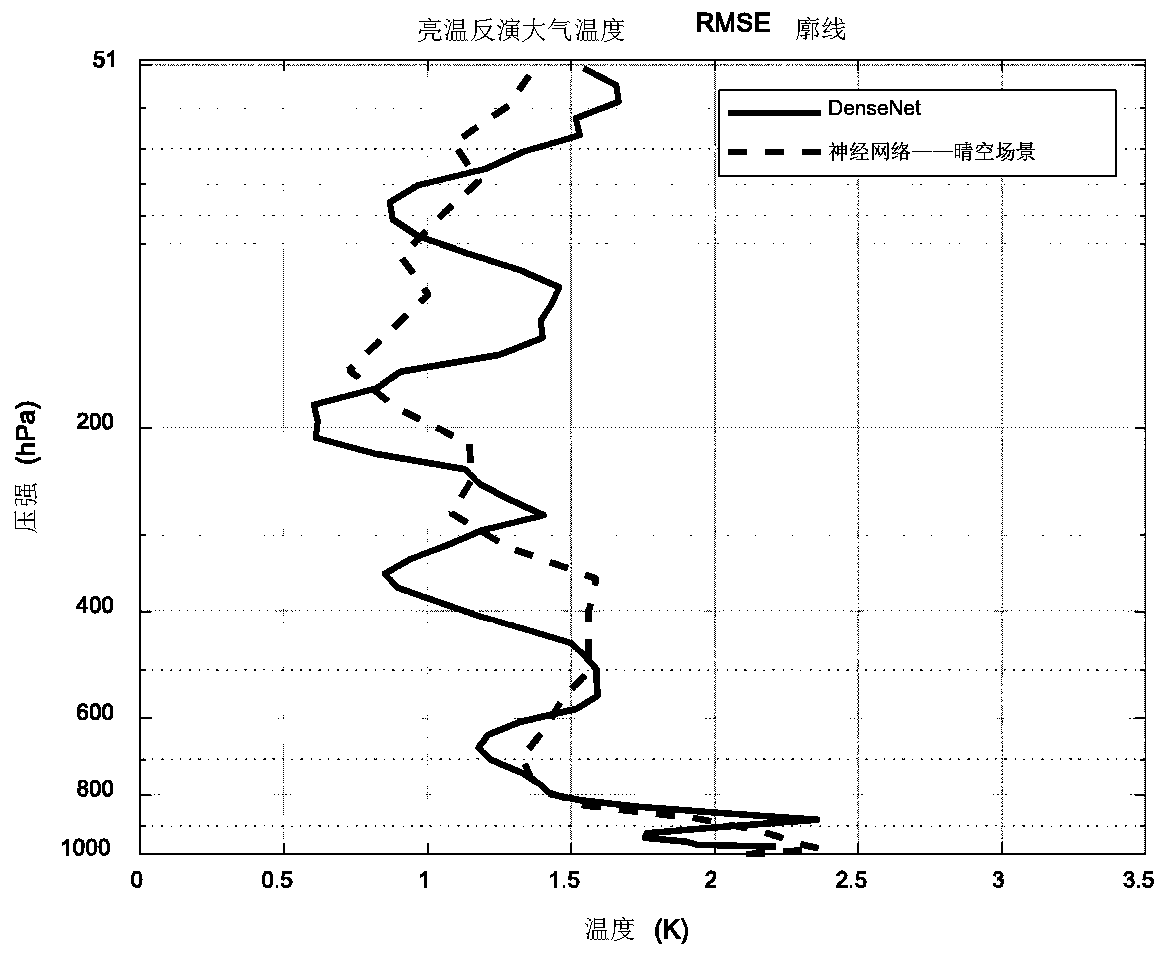

Three-dimensional atmospheric temperature profile inversion method and system based on DenseNet convolutional neural network

Active | CN110826693A | Improve generalization ability | Reduce inversion error | Character and pattern recognition | Thermometer applications | Data set | Algorithm

The invention discloses a three-dimensional atmospheric temperature profile inversion method and system based on a DenseNet convolutional neural network, and belongs to the field of atmospheric microwave remote sensing. The method comprises the following steps: constructing a training data set from two-dimensional atmospheric observation brightness temperature images and three-dimensional atmospheric temperature profiles of an oxygen absorption frequency band; training the DenseNet convolutional neural network on the training data set until it converges to obtain a trained network; and inputting a brightness temperature image to be inverted into the trained network and outputting the three-dimensional atmospheric temperature profile obtained by inversion. The training data set takes two-dimensional brightness temperature images as its units; each image covers a certain area of the earth and the whole data set spans a long time interval, so generalization ability is greatly improved and the inversion error is reduced. The DenseNet convolutional neural network has a large number of layers, and its dense connection structure avoids the problem of gradient disappearance during training. The network is suitable for complex inversion: data from clear-sky, cloudy and rainy scenes can be inverted together directly, which reduces time consumption.

Owner:HUAZHONG UNIV OF SCI & TECH

Image super-resolution reconstruction method of supervised convolutional neural network based on multi-scale feature extraction fusion

Pending | CN111402138A | Improve adaptability | Realize multi-channel propagation | Geometric image transformation | Character and pattern recognition | Feature extraction | Image resolution

The invention discloses an image super-resolution reconstruction method of a supervised convolutional neural network based on multi-scale feature extraction fusion. The method comprises the following steps: image preprocessing, image feature extraction and image reconstruction. The image feature extraction step uses a plurality of MSB modules; within each MSB module, features are extracted from the image by convolution layers with convolution kernels of different sizes, features are learned repeatedly through dense connections, and a supervision layer error function is designed into the model to assist in correcting its reconstruction errors. Because the extracted feature maps are processed at different scales, the adaptability of the model is enhanced; multi-channel propagation of information is achieved, the convergence speed is increased, and the gradient disappearance phenomenon is relieved; and the added auxiliary supervision error function strengthens back propagation of the gradient and provides extra regularization, so the problem of gradient disappearance in traditional algorithms is effectively addressed and the precision of the algorithm is improved.

Owner:TIANJIN CHENGJIAN UNIV

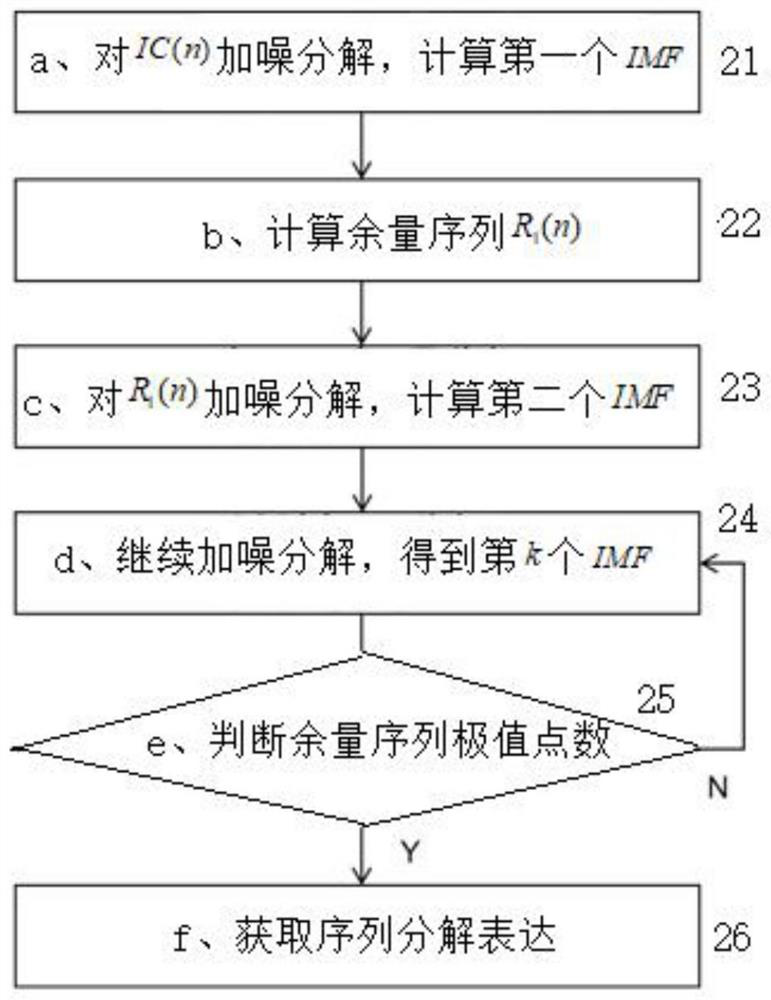

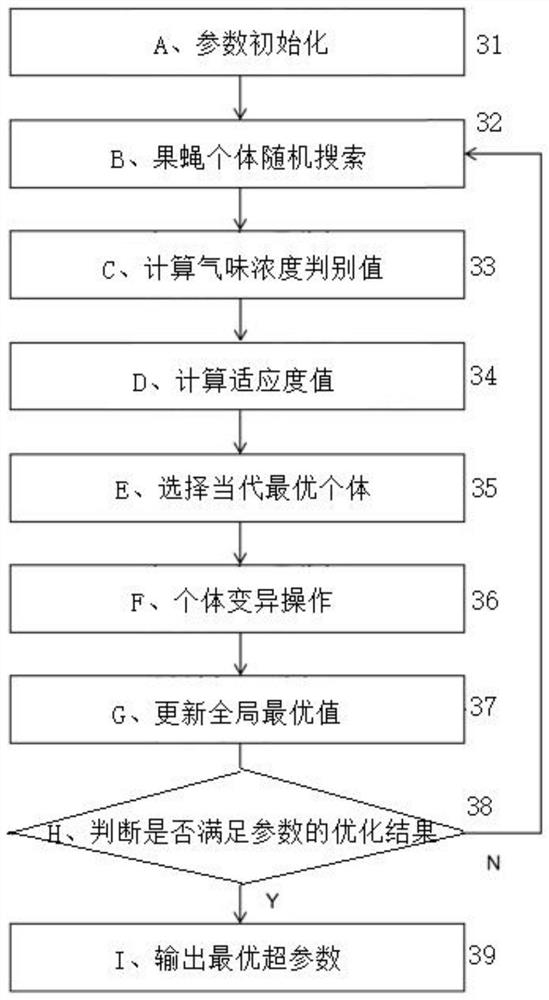

Power transmission line icing thickness prediction method based on CEEMDAN-QFOA-LSTM

Active | CN112116162A | Avoid estimation processing | Clear classification | Quantum computers | Forecasting | Data acquisition | Engineering

The invention discloses a power transmission line icing thickness prediction method based on CEEMDAN-QFOA-LSTM, and relates to the field combining power transmission line state evaluation and deep learning. The method comprises the following steps: (1) carrying out data acquisition and preprocessing; (2) carrying out CEEMDAN decomposition on an icing thickness historical data sequence (12); (3) optimizing the hyper-parameters of the LSTM with a quantum fruit fly optimization algorithm (QFOA); (4) carrying out LSTM model training (14); and (5) predicting the icing thickness of a power transmission line and analyzing the result (15). The CEEMDAN decomposition algorithm converts a sequence that is difficult to predict directly into a plurality of predictable component sequences, so the neural network can grasp the law of the sequence more accurately from the multi-dimensional feature information obtained through decomposition. The QFOA optimization algorithm is used to obtain the hyper-parameters, which avoids a complex manual parameter adjustment process and trains the network model more effectively. The LSTM neural network used does not have the gradient disappearance problem of a general network, so optimal convergence of the model is ensured and both short-term and long-term time sequence prediction are effectively handled.

Owner:CENT CHINA BRANCH OF STATE GRID CORP OF CHINA +1
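
Why an LSTM tends to suffer less from gradient disappearance can be seen in a scalar sketch of the standard LSTM update. The cell state is updated additively, c_t = f_t * c_{t-1} + i_t * g_t, so the direct gradient path from c_t back to c_0 is a product of forget-gate values, which can stay close to 1. The parameter values below are illustrative assumptions and have nothing to do with the hyper-parameters found by QFOA in the patent.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, params):
    """One scalar LSTM step; params maps gate name -> (w_x, w_h, bias)."""
    def pre_activation(name):
        w_x, w_h, bias = params[name]
        return w_x * x + w_h * h_prev + bias

    i = sigmoid(pre_activation("input"))        # input gate
    f = sigmoid(pre_activation("forget"))       # forget gate
    o = sigmoid(pre_activation("output"))       # output gate
    g = math.tanh(pre_activation("candidate"))  # candidate cell value
    c = f * c_prev + i * g                      # additive cell-state update
    h = o * math.tanh(c)
    return h, c, f

def cell_state_gradient(xs, params):
    """Product of forget gates: the direct-path gradient d c_T / d c_0."""
    h, c, grad = 0.0, 0.0, 1.0
    for x in xs:
        h, c, f = lstm_step(x, h, c, params)
        grad *= f
    return grad

# Illustrative parameters (assumed): a large forget-gate bias keeps f near 1.
params = {
    "input": (0.1, 0.1, 0.0),
    "forget": (0.1, 0.1, 5.0),
    "output": (0.1, 0.1, 0.0),
    "candidate": (0.1, 0.1, 0.0),
}
print(cell_state_gradient([1.0] * 100, params))
print(0.5 ** 100)  # for comparison: 100 chained factors of 0.5
```

This only tracks the direct cell-state path; the full gradient also flows through the gates, and an LSTM with forget gates far below 1 can still lose long-range signal.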

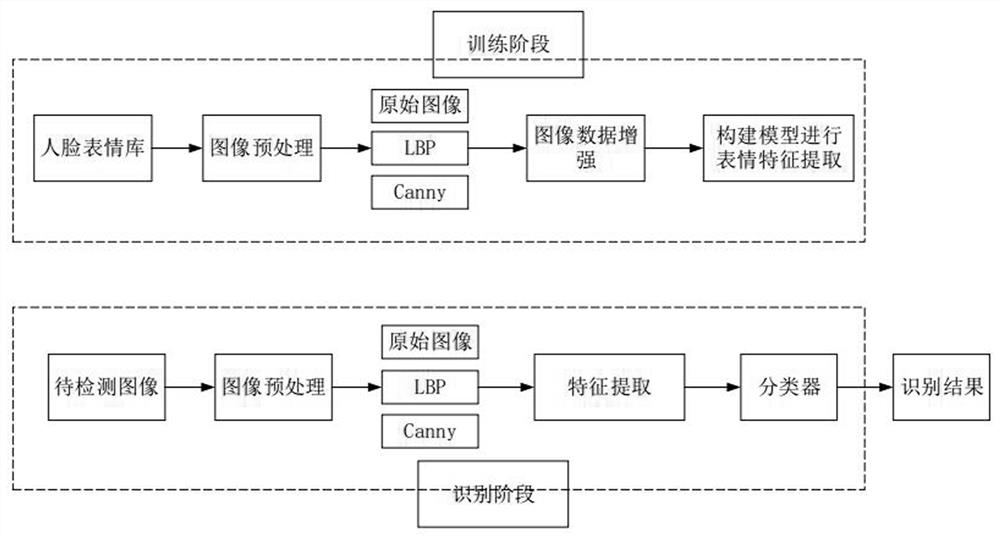

Facial expression recognition method based on multi-channel fusion and lightweight neural network

Inactive | CN113989890A | Reduce in quantity | Reduce the amount of calculation | Character and pattern recognition | Neural architectures | Network model | Machine learning

The invention provides a facial expression recognition method based on multi-channel fusion and a lightweight neural network, and aims to solve the problems that, in traditional facial expression recognition learning methods, the feature extraction process is complex and deeper, high-level semantic features cannot be obtained from the original image. In this method, a three-channel feature image obtained by multi-channel fusion is used as the input of the constructed lightweight neural network; the design idea of depthwise separable convolution is adopted to reduce the number of parameters and the amount of calculation; and a residual connection mechanism is adopted in constructing the network model to address network performance degradation and gradient disappearance, so that good performance can be ensured while a deeper network is trained. Experiments show that the proposed model can effectively extract facial expression features and classify expressions, and has good accuracy and robustness.

Owner:HENAN UNIV OF SCI & TECH
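
The parameter saving from a separable convolution can be checked with simple arithmetic. The sketch below assumes the abstract means the standard depthwise separable convolution (a k×k depthwise convolution followed by a 1×1 pointwise convolution) and ignores bias terms; the layer sizes are made-up examples, not values from the patent.

```python
def standard_conv_params(kernel, c_in, c_out):
    """Weights in an ordinary kernel x kernel convolution (biases ignored)."""
    return kernel * kernel * c_in * c_out

def depthwise_separable_params(kernel, c_in, c_out):
    """Depthwise (one kernel x kernel filter per input channel) plus 1x1 pointwise."""
    depthwise = kernel * kernel * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise

kernel, c_in, c_out = 3, 32, 64  # example sizes (assumed)
standard = standard_conv_params(kernel, c_in, c_out)
separable = depthwise_separable_params(kernel, c_in, c_out)
print(standard, separable, separable / standard)
# The ratio equals 1/c_out + 1/kernel**2, here about 0.127.
```

For these sizes the separable layer needs 2,336 weights instead of 18,432, roughly one eighth as many.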

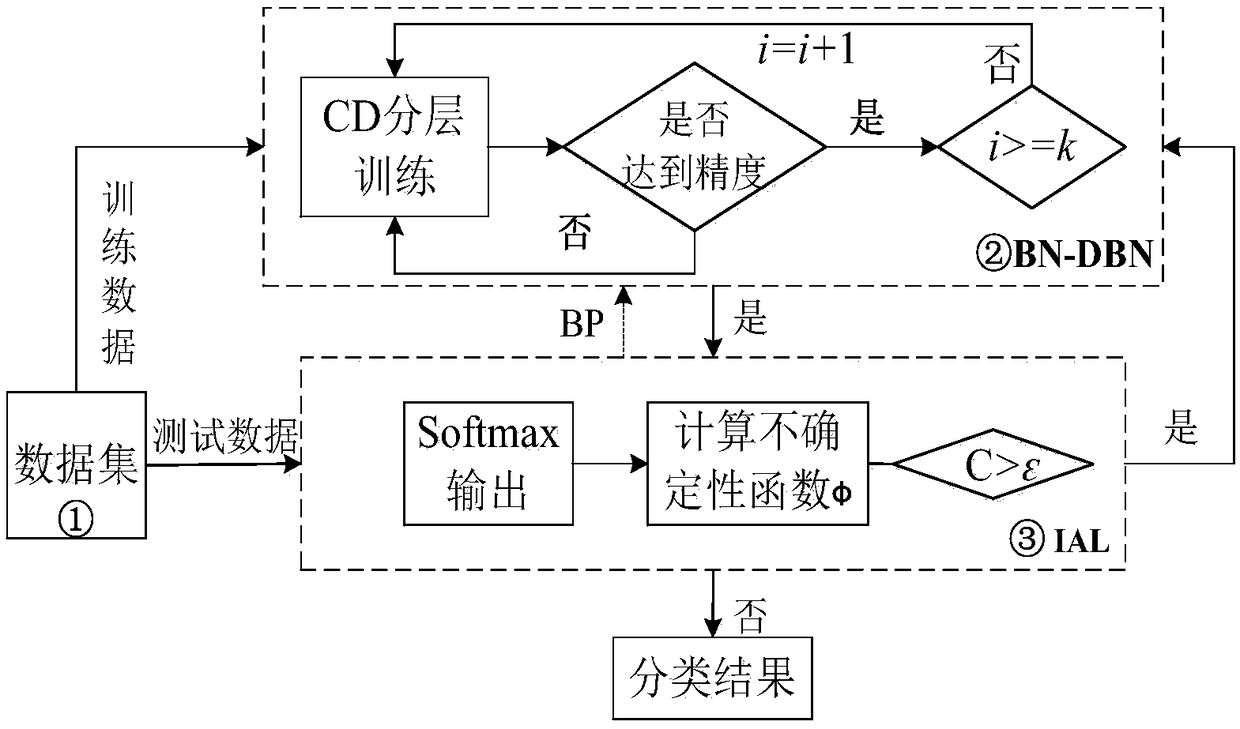

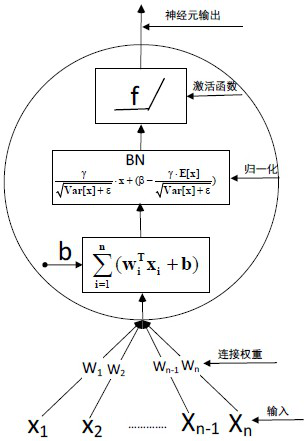

Network security situation element acquisition mechanism based on BN-DBN

Inactive | CN109492751A | Solve redundancy | Solve balance problems | Machine learning | Neural architectures | Deep belief network | Small sample

In order to accelerate the convergence speed of a Deep Belief Network (DBN) and improve the acquisition precision of situation elements under small-sample conditions, the invention provides a network security situation element acquisition mechanism based on BN-DBN. On the one hand, BN is added to the deep neural network to address the gradient disappearance problem; on the other hand, an improved active learning (IAL) algorithm is proposed at the output layer of the deep neural network. The algorithm is used to fine-tune the deep belief network in the reverse direction, and it balances the sample classes by actively selecting training samples in each iteration. Theoretical analysis and simulation results on example data show that the mechanism can solve the problems of the deep neural network converging too slowly or its gradient disappearing, and that it classifies samples accurately. The acquisition precision, convergence rate and algorithm complexity of the method are superior to those of the other situation element acquisition mechanisms listed in the text.

Owner:CHONGQING UNIV OF POSTS & TELECOMM
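
Assuming BN here stands for batch normalization, its effect on gradients can be shown with one feature and a sigmoid activation. Large pre-activations sit in the flat part of the sigmoid, where the derivative is nearly zero; normalizing them across the mini-batch moves them back toward zero, where the derivative is largest. The sketch uses training-mode batch statistics only; `gamma`, `beta` and `eps` carry their conventional meanings and default values chosen for illustration.

```python
import math

def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale and shift."""
    n = len(batch)
    mean = sum(batch) / n
    variance = sum((v - mean) ** 2 for v in batch) / n
    return [gamma * (v - mean) / math.sqrt(variance + eps) + beta for v in batch]

def sigmoid_grad(z):
    """Derivative of the logistic sigmoid at z."""
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)

raw = [8.0, 9.0, 10.0, 11.0]   # saturated pre-activations (made-up values)
normalized = batch_norm(raw)

print([sigmoid_grad(z) for z in raw])         # all close to zero
print([sigmoid_grad(z) for z in normalized])  # much larger local gradients
```

The same idea applies per feature (or per channel) in a real network, with running statistics substituted for batch statistics at inference time.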

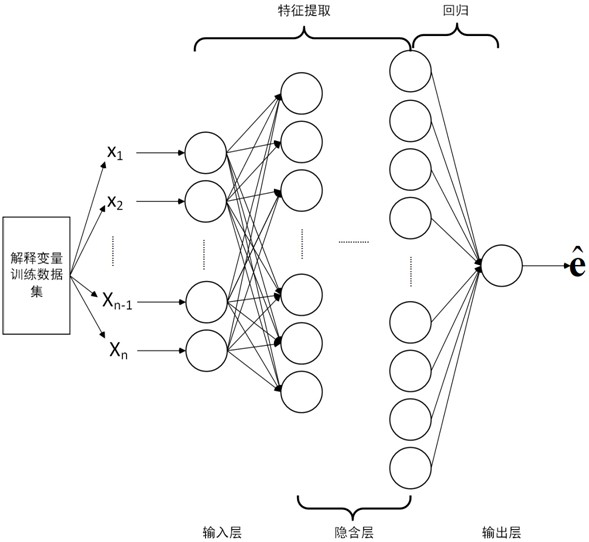

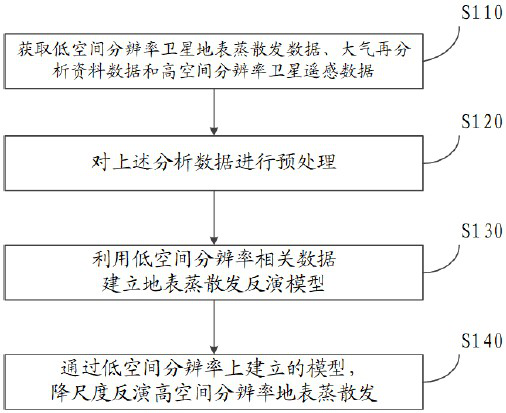

Ground surface evapotranspiration data downscaling method based on multi-source data and deep learning

Active | CN113486000A | Improve inversion accuracy | Fast convergence | Digital data information retrieval | Measurement devices | Computational science | Analysis data

The invention provides an evapotranspiration data downscaling method based on multi-source data and deep learning. The method comprises the steps of obtaining low-spatial-resolution satellite surface evapotranspiration data, low-spatial-resolution atmosphere reanalysis data and high-spatial-resolution satellite remote sensing data; carrying out data preprocessing; based on a built deep learning regression network, establishing a surface evapotranspiration inversion model; and performing downscaling inversion on high-spatial-resolution surface evapotranspiration through the surface evapotranspiration inversion model established on the low-spatial-resolution surface evapotranspiration. According to the method, the earth surface evapotranspiration inversion precision is improved by comprehensively considering earth surface evapotranspiration related influence factors. The nonlinear complex relation between remote sensing earth surface parameters and atmospheric data and earth surface evapotranspiration is deeply analyzed based on deep learning. The relation between the remote sensing earth surface parameters and atmospheric data and earth surface evapotranspiration is learned by adopting BN and a dynamic learning rate. The BN processing avoids the gradient disappearance problem, the training speed is greatly increased, and the dynamic learning rate enables the network to converge to the optimal solution better.

Owner:CHINESE ACAD OF SURVEYING & MAPPING

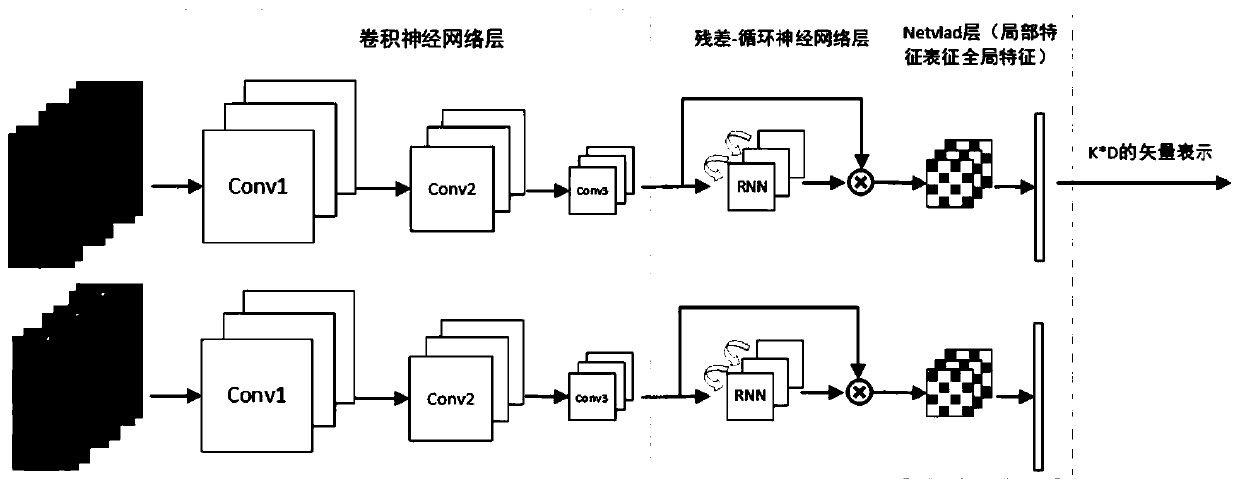

Video pedestrian re-identification method and system based on self-learning local feature representation

Active | CN111401267A | Avoid vanishing gradients | Easy to integrate | Character and pattern recognition | Neural architectures | Pattern recognition | Network structure

The invention discloses a video pedestrian re-identification method and system based on self-learning local feature representation. The method comprises the steps of: acquiring video information containing continuously changing images of the pedestrians to be identified in two set time periods; processing the two obtained segments of video information separately with a twin (Siamese) network structure to obtain aligned vectors representing the spatial and temporal features of the pedestrians; and judging whether the pedestrians in the two segments of continuous image information are the same person by comparing the obtained vectors, thereby realizing pedestrian re-identification. The residual-recurrent neural network provided by the invention can extract the correlation between sequences; because it is structured as a residual network, it addresses the gradient disappearance problem of the recurrent neural network and enhances the fusion of spatial and temporal features.

Owner:SHANDONG UNIV

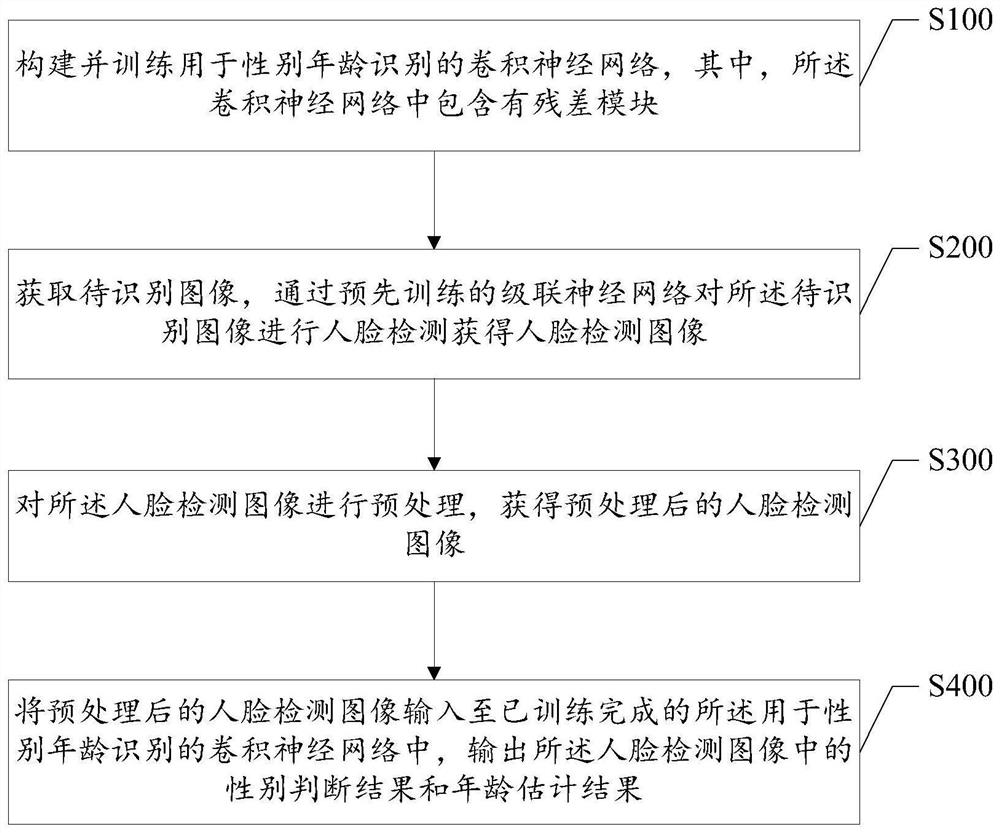

Gender and age recognition method and device and storage medium

Pending | CN112257503A | Avoid vanishing gradients | Overcome limitations | Character and pattern recognition | Neural architectures | Face detection | Computer vision

The invention discloses a gender and age recognition method and device and a storage medium. The method comprises the steps of: constructing and training a convolutional neural network for gender and age recognition, wherein the convolutional neural network comprises a residual module; obtaining a to-be-recognized image, and performing face detection on it through a pre-trained cascaded neural network to obtain a face detection image; preprocessing the face detection image to obtain a preprocessed face detection image; and inputting the preprocessed face detection image into the trained convolutional neural network for gender and age recognition, and outputting a gender judgment result and an age estimation result for the face detection image. According to the embodiment of the invention, a convolutional neural network with a residual module is adopted to carry out gender and age recognition on the target face, the problem of gradient disappearance caused by network deepening is addressed, the limitation of traditional algorithms is overcome, and gender and age recognition becomes faster, more accurate and more reliable.

Owner:SHENZHEN WEIBU INFORMATION
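
The effect of a residual module on gradient flow can be illustrated with a scalar toy network; this is a sketch of the general mechanism, not of the patented architecture. Each plain layer computes `tanh(weight * h)`, so the chain rule multiplies the gradient by a factor of at most `weight` per layer. A residual layer computes `h + tanh(weight * h)`, which adds 1 to every factor through the identity path. The weight, input and depth below are arbitrary illustrative values.

```python
import math

def chain_gradient(x, weight, depth, residual):
    """d(output)/d(input) of a depth-layer scalar network via the chain rule."""
    h, grad = x, 1.0
    for _ in range(depth):
        activation = math.tanh(weight * h)
        # Derivative of tanh(weight * h) with respect to h.
        local = weight * (1.0 - activation * activation)
        if residual:
            # Identity shortcut: layer output is h + tanh(weight * h).
            h, grad = h + activation, grad * (1.0 + local)
        else:
            h, grad = activation, grad * local
    return grad

plain = chain_gradient(1.0, 0.5, 50, residual=False)
with_skip = chain_gradient(1.0, 0.5, 50, residual=True)
print(plain)      # shrinks roughly like 0.5 ** 50
print(with_skip)  # stays at or above 1 because every factor is >= 1
```

With a positive weight, every residual factor is at least 1, so the gradient reaching the first layer cannot vanish in this toy setting; in real networks the shortcut provides the same kind of direct path, although it does not by itself bound the gradient from above.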

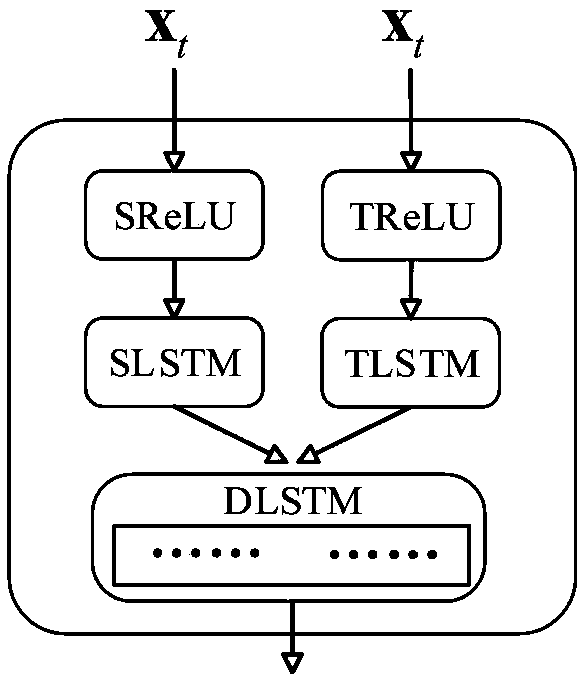

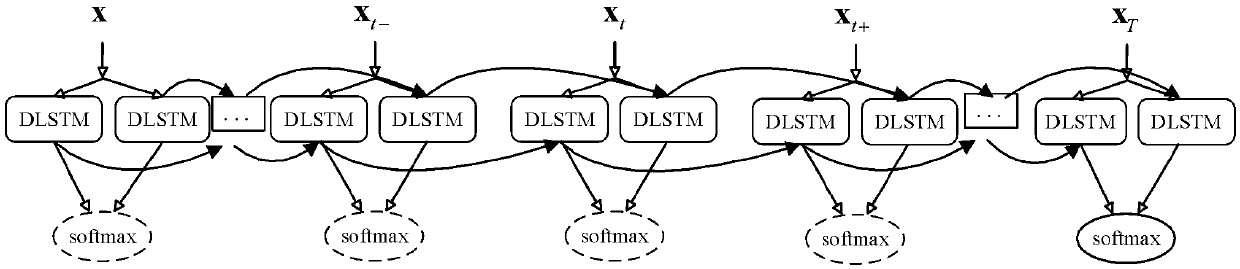

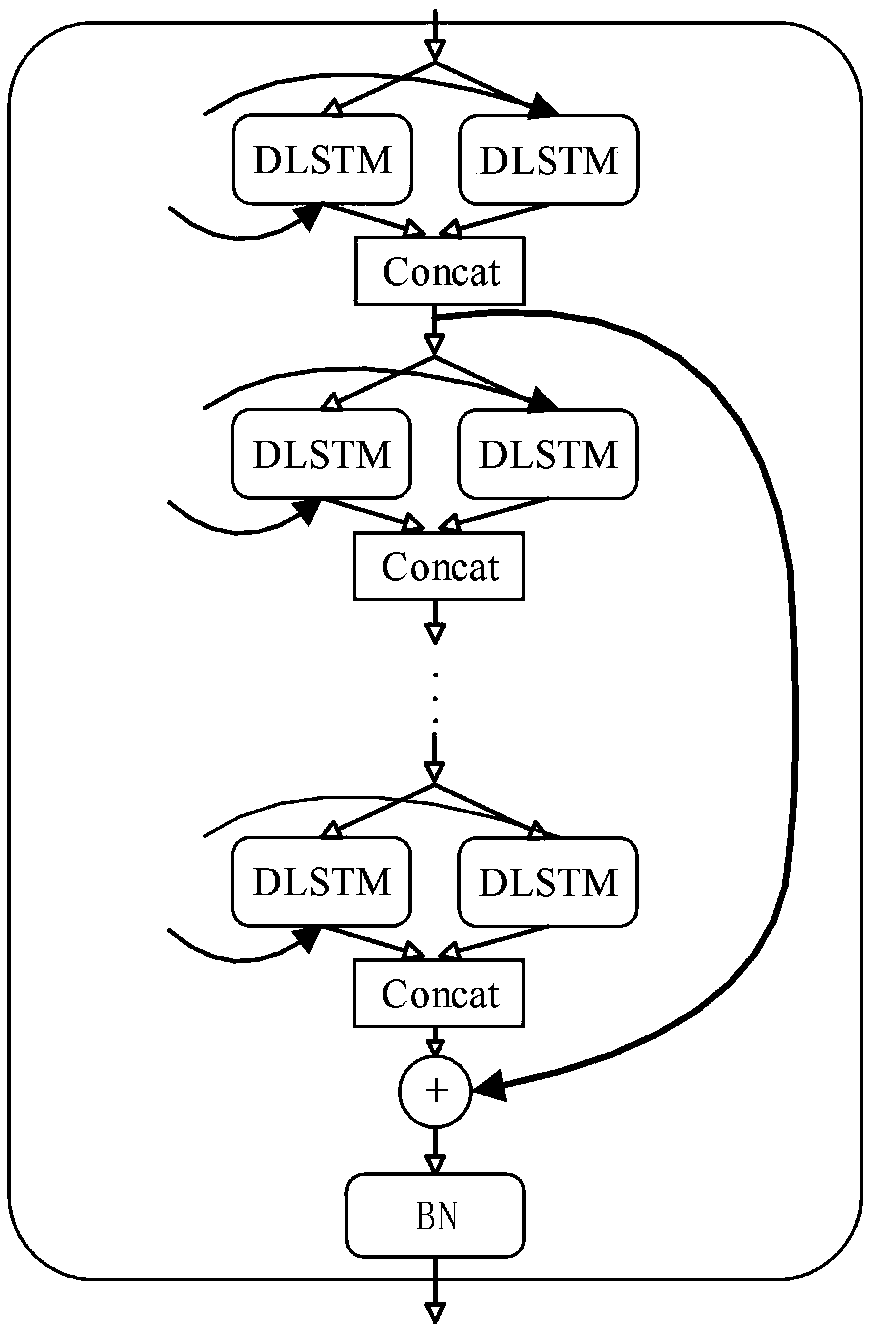

Video event recognition method based on deep residual long-short term memory network

Inactive | CN108764009A | Solve the problem of LSTM gradient disappearance | Easy to identify | Character and pattern recognition | Neural architectures | Data connection | Short-term memory

The invention discloses a video event recognition method based on a deep residual long-short term memory network, which comprises: (1) the design of a spatial-temporal feature data connection layer, in which spatial-temporal feature data is parsed synchronously through long-short term memory (LSTM) units to form a spatial-temporal feature data connection unit, DLSTM (double-LSTM), highlighting the consistency of spatial and temporal information; (2) the design of a DU-DLSTM (dual unidirectional DLSTM) structure, which expands the width of the network and increases the feature selection range; (3) the design of an RDU-DLSTM (residual dual unidirectional DLSTM) module, which addresses the gradient disappearance problem of deeper networks; and (4) the design of a 2C-softmax objective function, which reduces the distance within classes while expanding the distance between classes. The advantages of the method are that the problem of gradient disappearance is addressed by constructing the deep residual network framework, and that video event recognition accuracy is improved by the consistent fusion of temporal network and spatial network features.

Owner:SUZHOU UNIV +2
