779 results about "Cache miss" patented technology

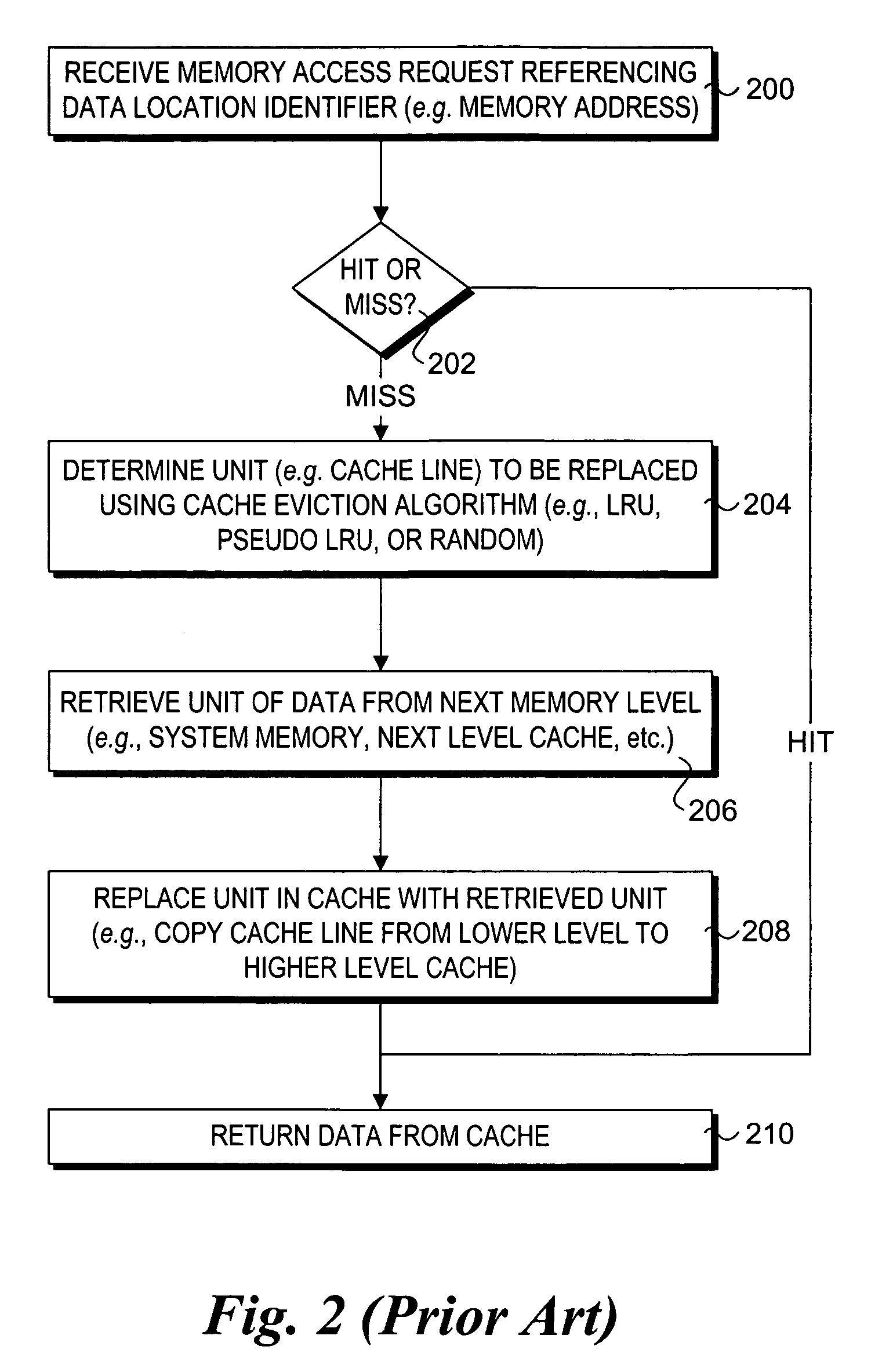

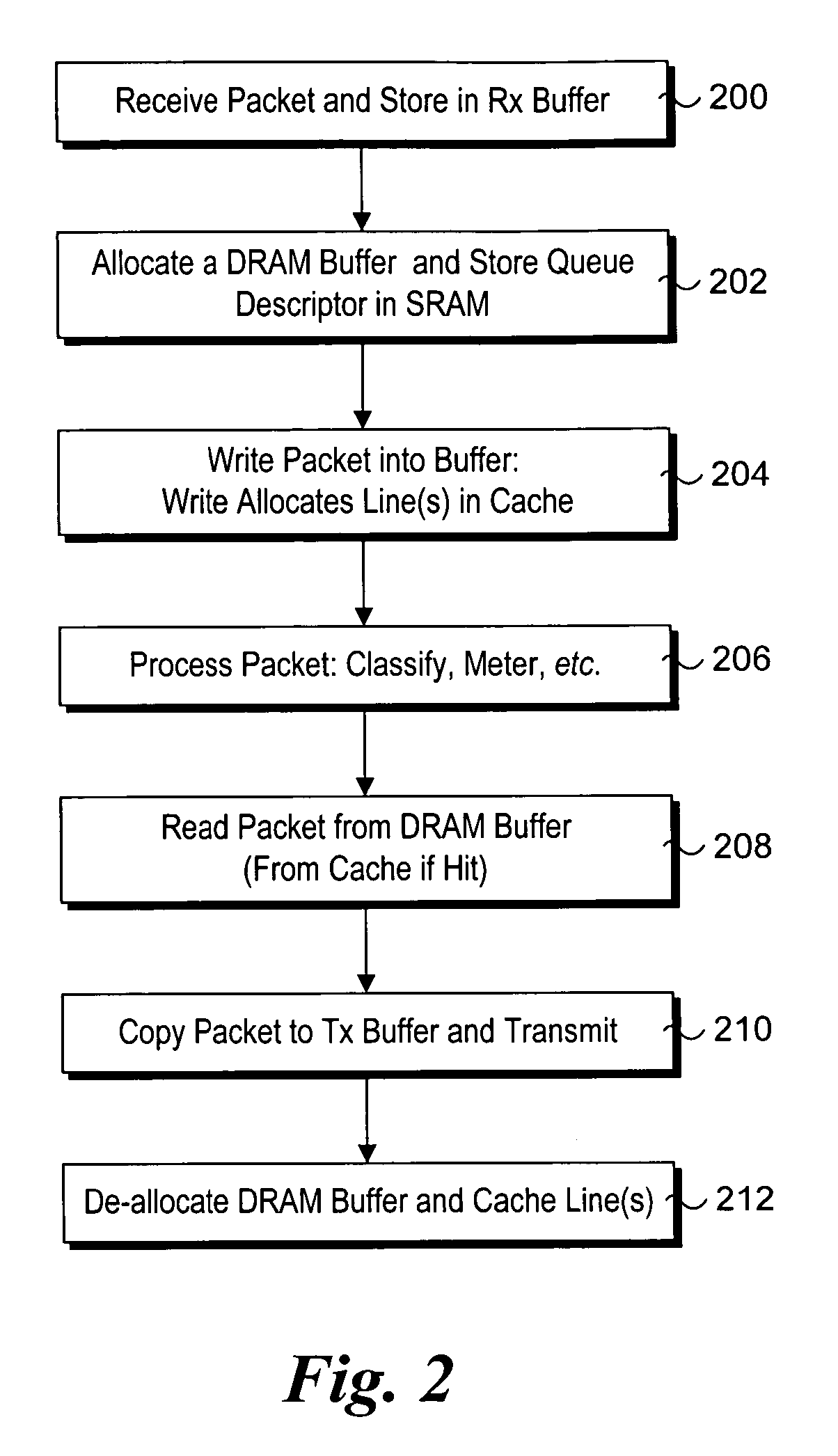

A cache miss occurs during cache memory access. For each new request, the processor searches the primary cache for the requested data. If the data is not found there, the access is counted as a cache miss.

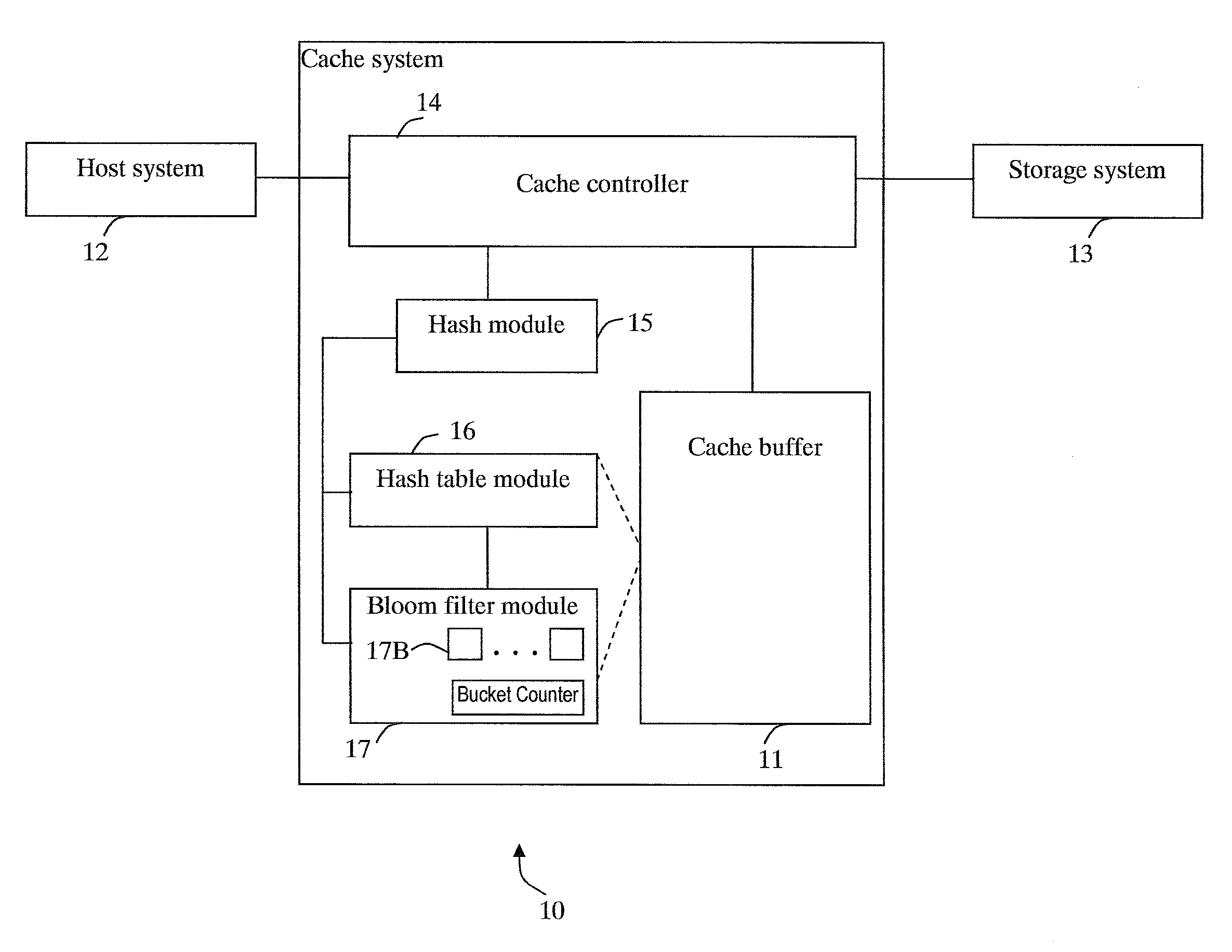

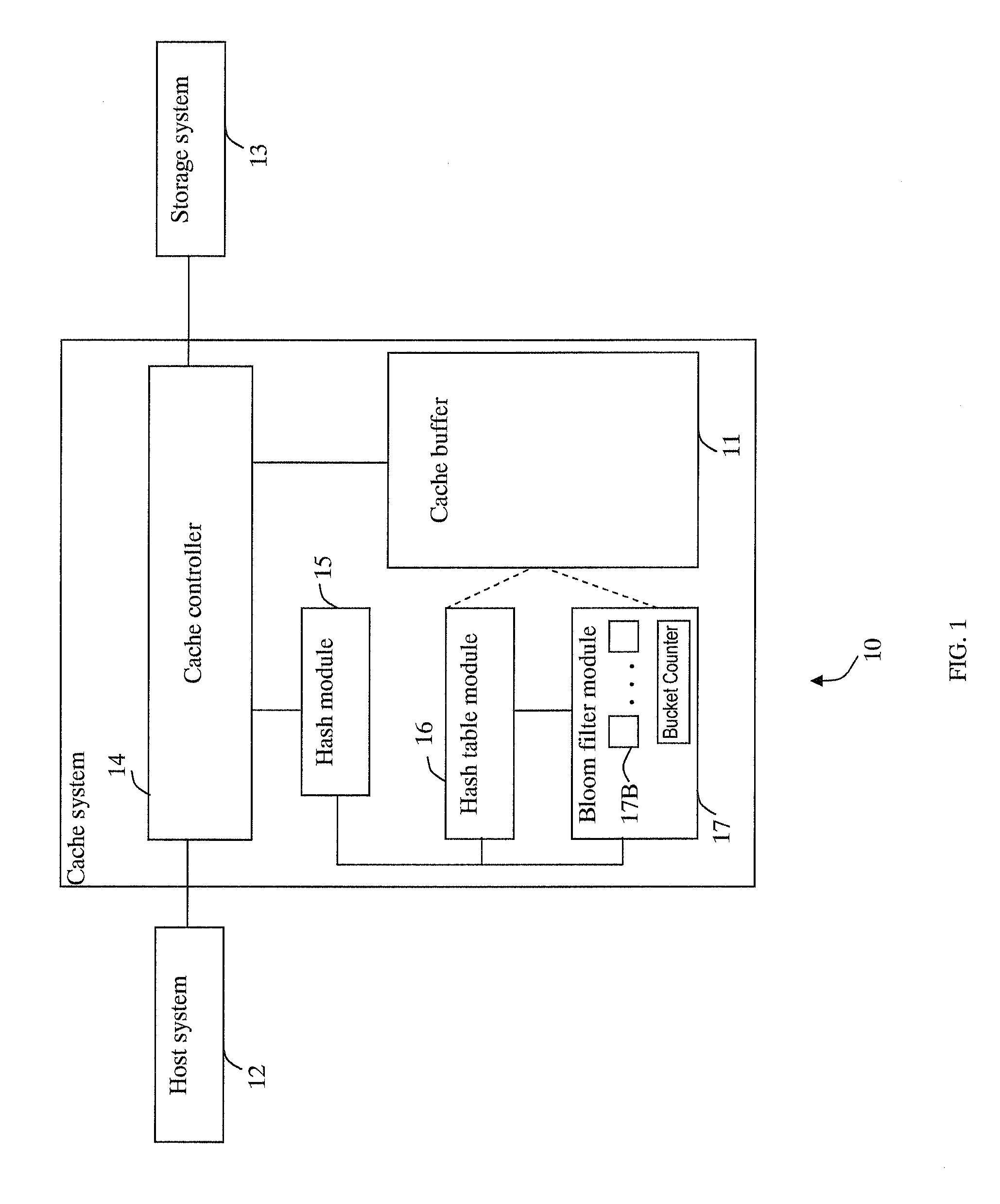

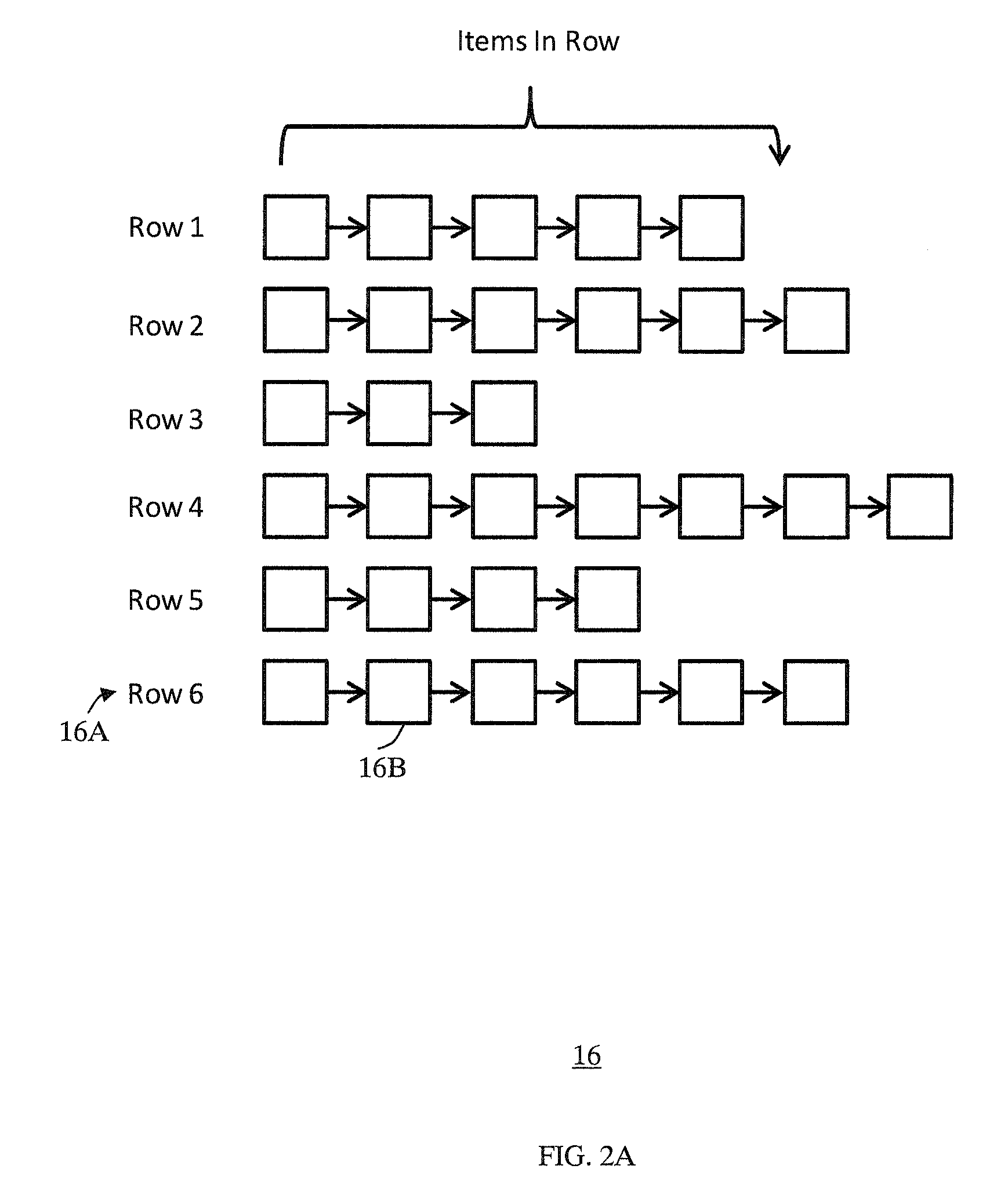

Cache system optimized for cache miss detection

Active | US20130326154A1 | Memory architecture accessing/allocation | Memory addressing/allocation/relocation | Logical block addressing | Bloom filter

According to an embodiment of the invention, cache management comprises maintaining a cache comprising a hash table including rows of data items in the cache, wherein each row in the hash table is associated with a hash value representing a logical block address (LBA) of each data item in that row. Searching for a target data item in the cache includes calculating a hash value representing an LBA of the target data item, and using the hash value to index into a counting Bloom filter that indicates that the target data item is either not in the cache, indicating a cache miss, or that the target data item may be in the cache. If a cache miss is not indicated, the hash value is used to select a row in the hash table, and a cache miss is indicated if the target data item is not found in the selected row.

Owner:SAMSUNG ELECTRONICS CO LTD
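
The lookup path in the abstract above can be illustrated with a minimal sketch. It is not the patented implementation: the class name, the table sizes, the use of several SHA-256-derived hash positions (the abstract mentions a single hash value), and the `evict` method are all assumptions made for illustration.

```python
import hashlib


class BloomFrontedCache:
    """Hash-table cache with a counting Bloom filter in front (illustrative only)."""

    def __init__(self, num_rows=64, num_counters=256, num_hashes=3):
        self.rows = [dict() for _ in range(num_rows)]  # hash-table rows: LBA -> data
        self.counters = [0] * num_counters             # counting Bloom filter
        self.num_hashes = num_hashes

    def _hash(self, lba, seed):
        digest = hashlib.sha256(f"{seed}:{lba}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    def _slots(self, lba):
        # Counter positions for this LBA (assumption: several hashes, not one).
        return [self._hash(lba, i) % len(self.counters) for i in range(self.num_hashes)]

    def _row(self, lba):
        return self.rows[self._hash(lba, "row") % len(self.rows)]

    def insert(self, lba, data):
        row = self._row(lba)
        if lba not in row:
            for slot in self._slots(lba):
                self.counters[slot] += 1
        row[lba] = data

    def evict(self, lba):
        row = self._row(lba)
        if lba in row:
            del row[lba]
            for slot in self._slots(lba):
                self.counters[slot] -= 1

    def lookup(self, lba):
        # A zero counter proves the item is absent: a miss without touching the table.
        if any(self.counters[slot] == 0 for slot in self._slots(lba)):
            return None
        # Otherwise the item may be present; the selected row gives the final answer.
        return self._row(lba).get(lba)
```

Using counters rather than single bits is what allows `evict` to undo an insertion without clearing positions that other cached items still rely on.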

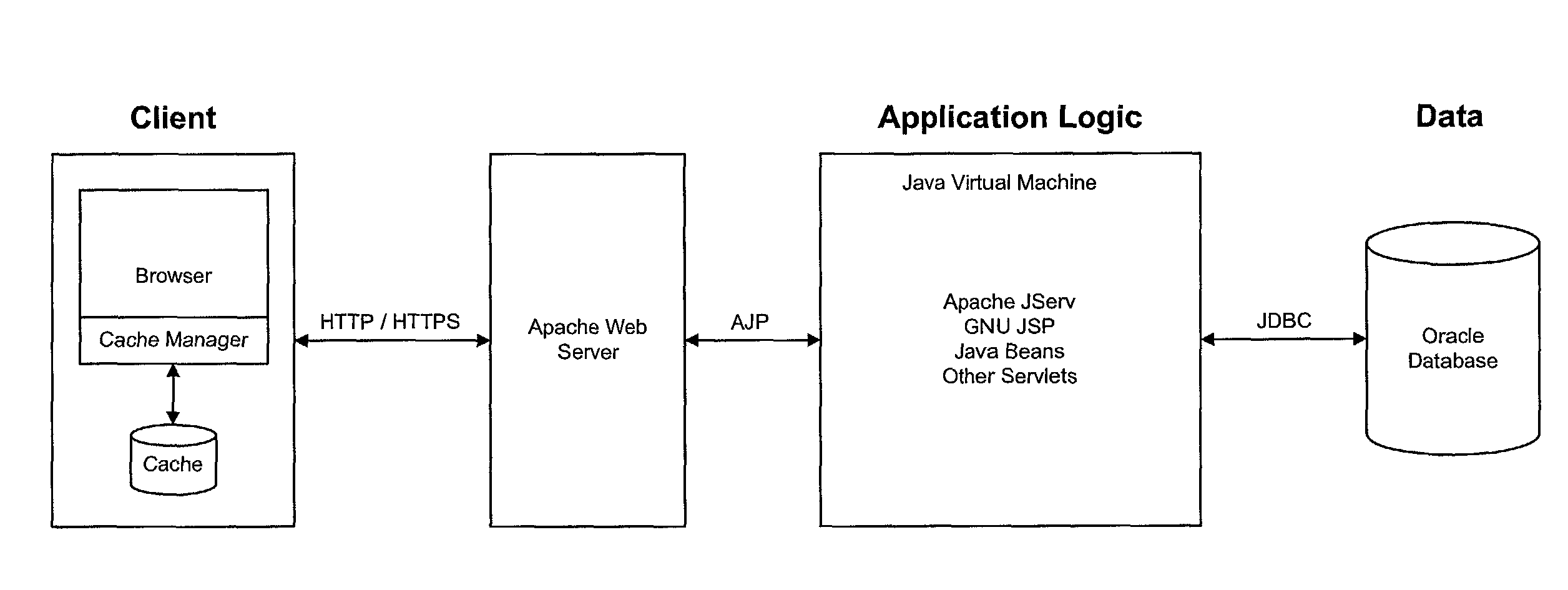

Client-side caching of pages with changing content

Active | US7970816B2 | Digital data information retrieval | Multiple digital computer combinations | Web browser | Web application

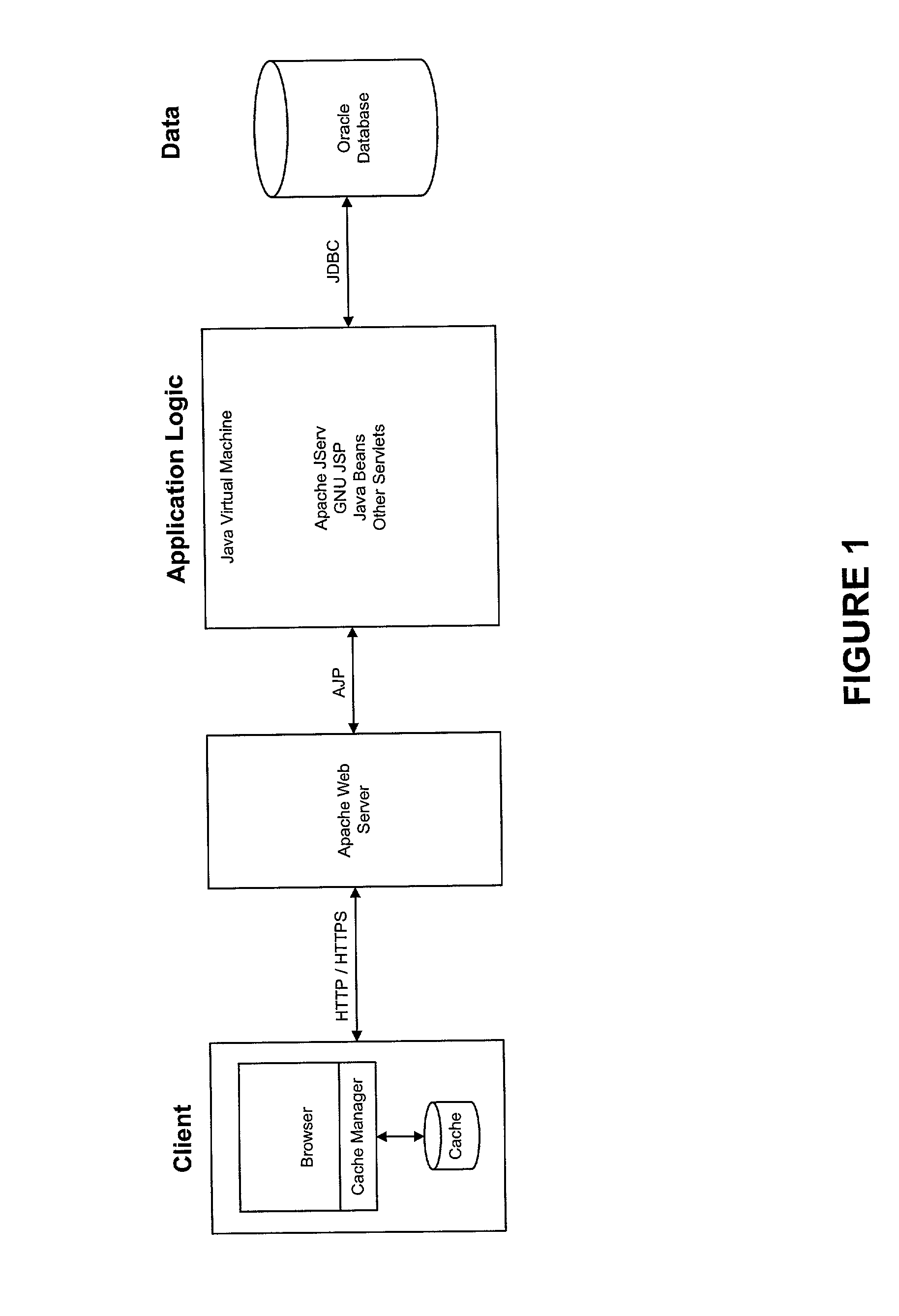

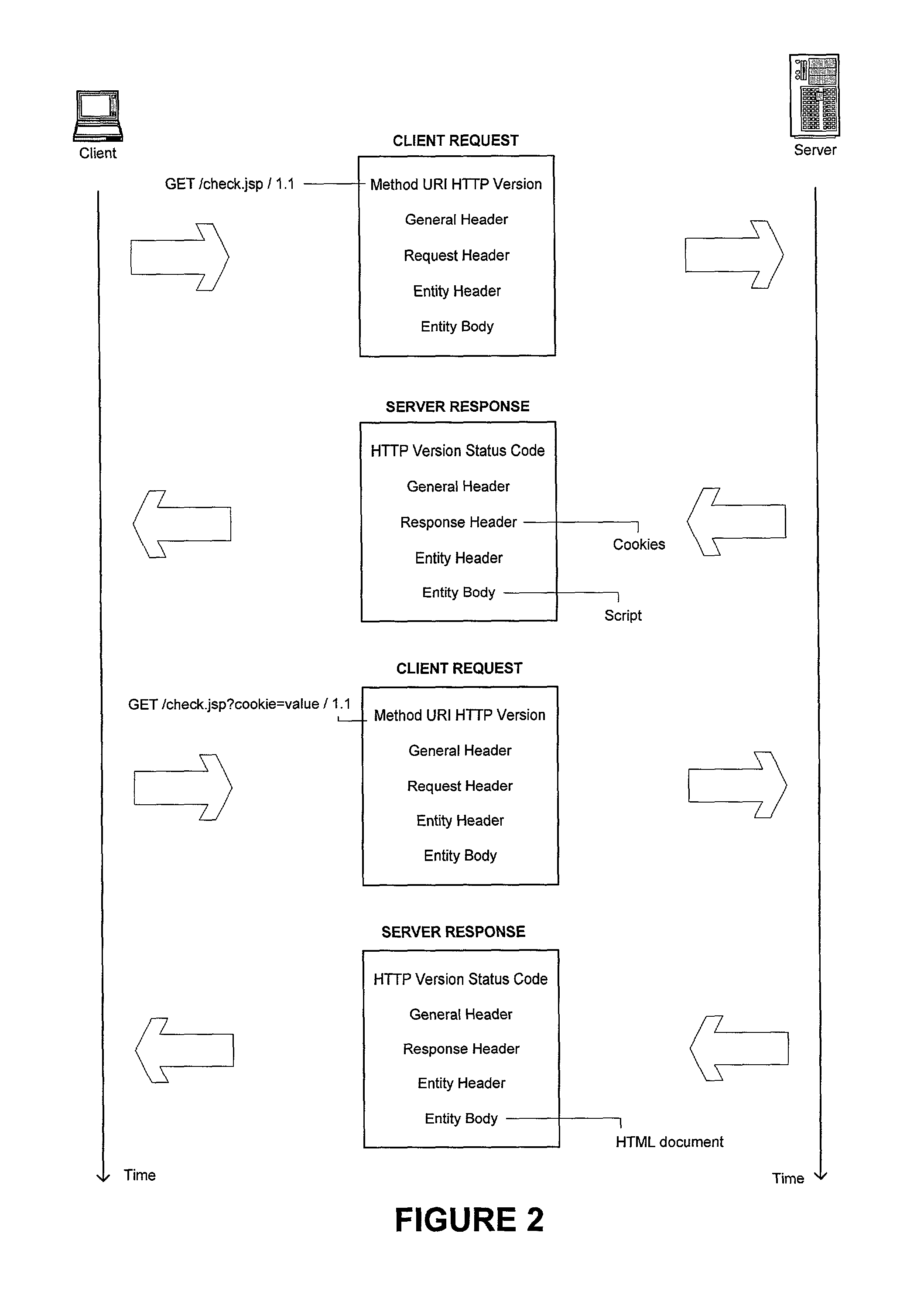

The present invention relates to Internet-based and web applications and the need to reduce page latency and bandwidth usage. The invention can achieve these goals by making use of the cache built into standard web browsers. In one embodiment, the invention provides that a web application user will use their browser to request a page from the application web server, which responds with a small page that includes a script. The script appends a previously established cookie value to the URL originally requested and the browser then re-requests the URL with the appended cookie value. (The server computes the cookie value based on the last modified time of the data used to generate the page.) If the most recent version of the page is in the browser cache, the browser gets a cache hit, which means the page is retrieved from browser cache rather than from the server, rapidly displaying the page to the user. If, on the other hand, there is only an older version of the page in the browser cache, there is a cache miss (because the rewritten URL will not match any URL in the cache) and the browser will send the request to the server to retrieve the most recent version of the page.

Owner:NETSUITE
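
The URL-rewriting idea in this abstract can be simulated in a few lines. This is a rough sketch under stated assumptions: the query parameter name `v`, the `BrowserCache` class, and the `render` callback that stands in for the server are all hypothetical and do not come from the patent.

```python
def versioned_url(url, last_modified):
    """Append a version value (derived from the last-modified time) to the URL."""
    separator = "&" if "?" in url else "?"
    return f"{url}{separator}v={last_modified}"


class BrowserCache:
    """Toy stand-in for a browser cache keyed by the full rewritten URL."""

    def __init__(self):
        self.store = {}
        self.server_requests = 0

    def fetch(self, url, last_modified, render):
        key = versioned_url(url, last_modified)
        if key in self.store:
            return self.store[key]      # cache hit: same version already cached
        self.server_requests += 1       # cache miss: the rewritten URL is new
        page = render()
        self.store[key] = page
        return page
```

Because the version is part of the cache key, a change in the underlying data produces a URL the cache has never seen, so a stale copy is never served and no explicit invalidation is needed.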

Method for programmer-controlled cache line eviction policy

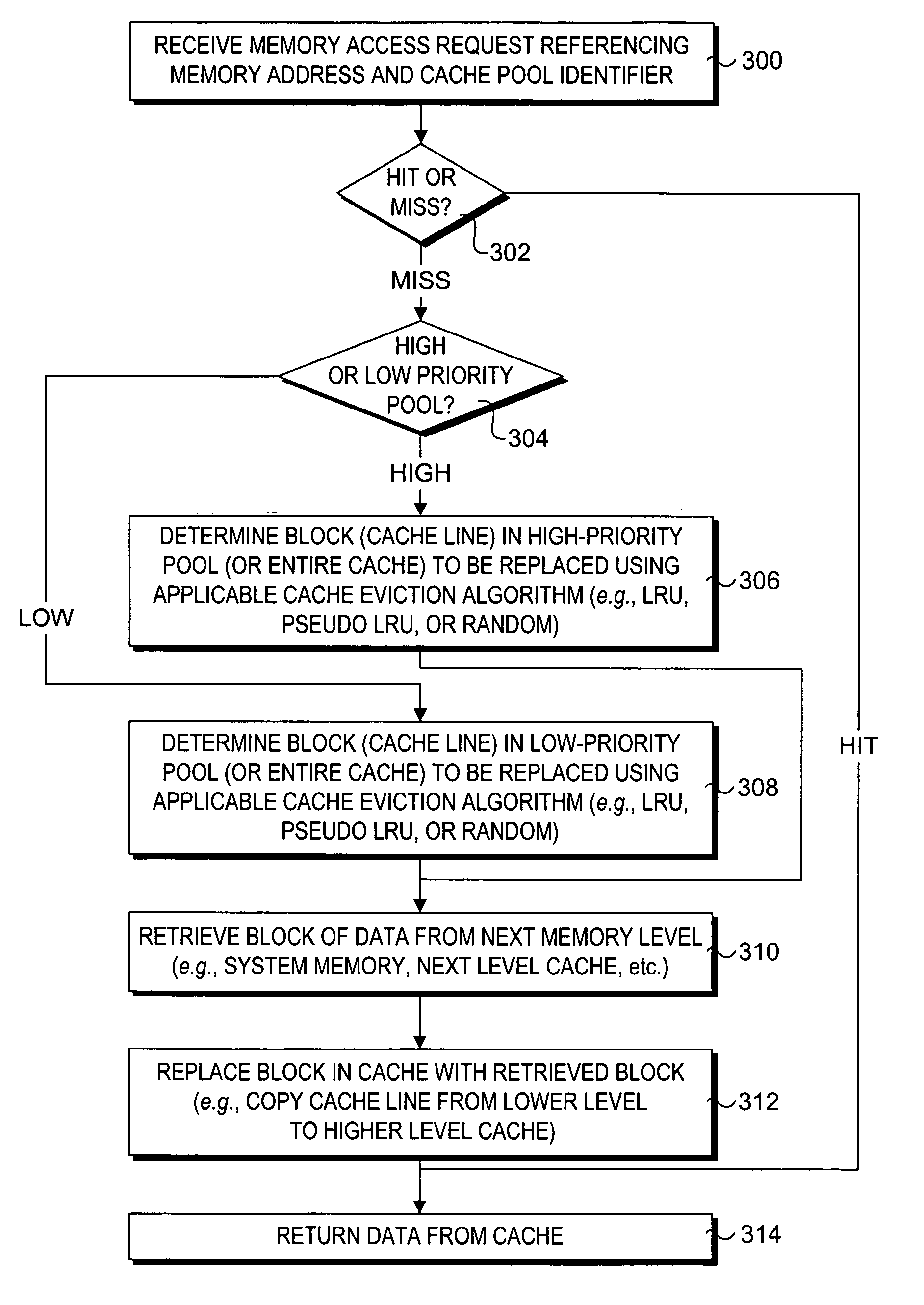

A method and apparatus to enable programmatic control of cache line eviction policies. A mechanism is provided that enables programmers to mark portions of code with different cache priority levels based on anticipated or measured access patterns for those code portions. Corresponding cues to assist in effecting the cache eviction policies associated with given priority levels are embedded in machine code generated from source- and/or assembly-level code. Cache architectures are provided that partition cache space into multiple pools, each pool being assigned a different priority. In response to execution of a memory access instruction, an appropriate cache pool is selected and searched based on information contained in the instruction's cue. On a cache miss, a cache line is selected from that pool to be evicted using a cache eviction policy associated with the pool. Implementations of the mechanism are described for both n-way set associative caches and fully-associative caches.

Owner:INTEL CORP

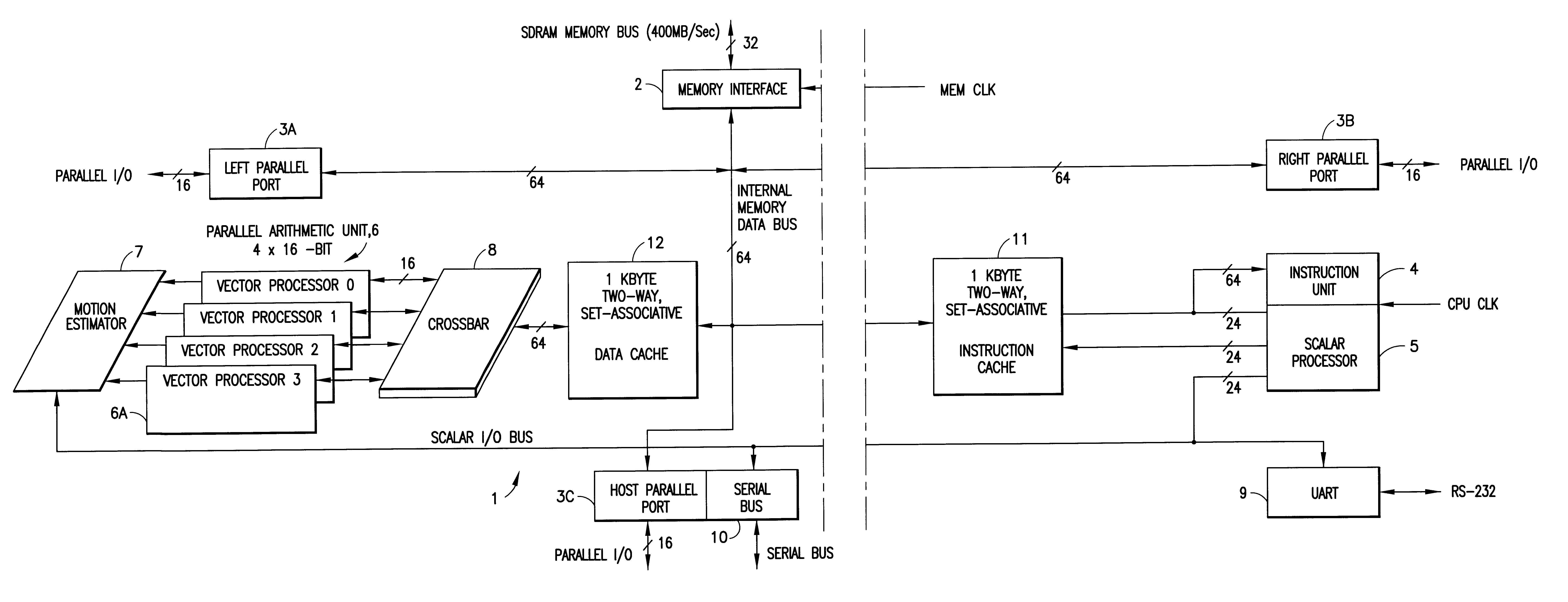

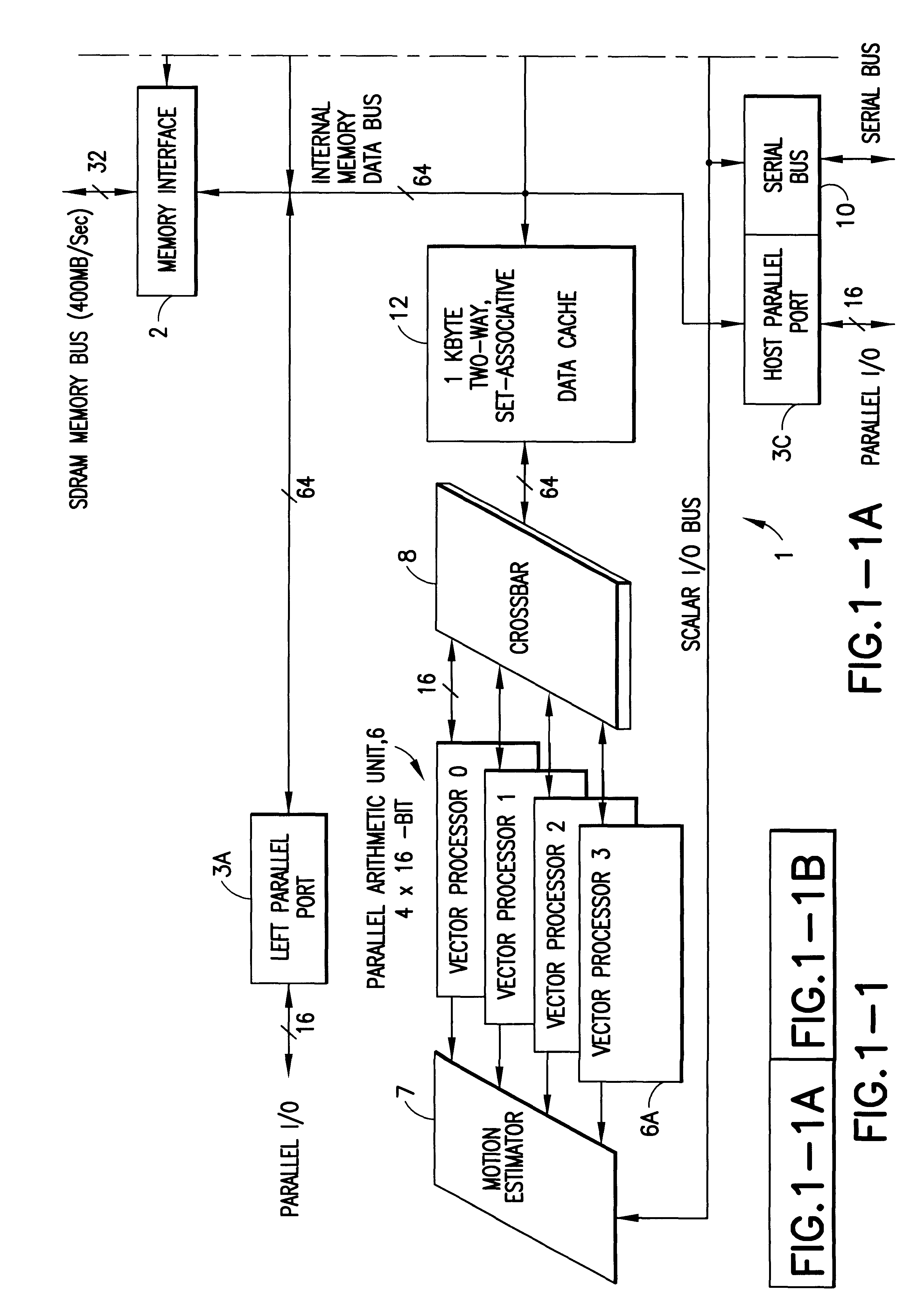

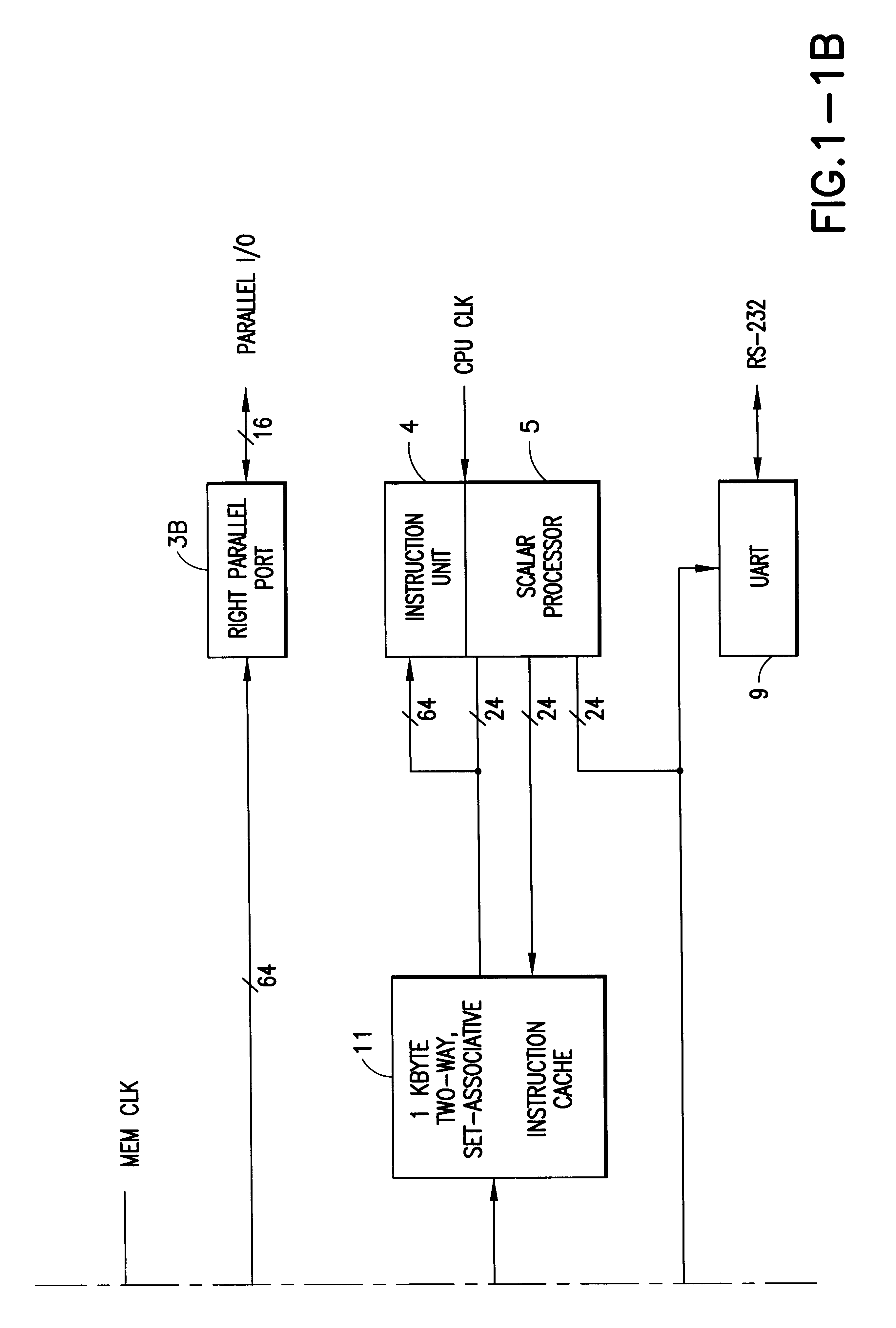

Digital signal processor containing scalar processor and a plurality of vector processors operating from a single instruction

Inactive | US6317819B1 | Register arrangements | Memory addressing/allocation/relocation | Crossbar switch | Digital data

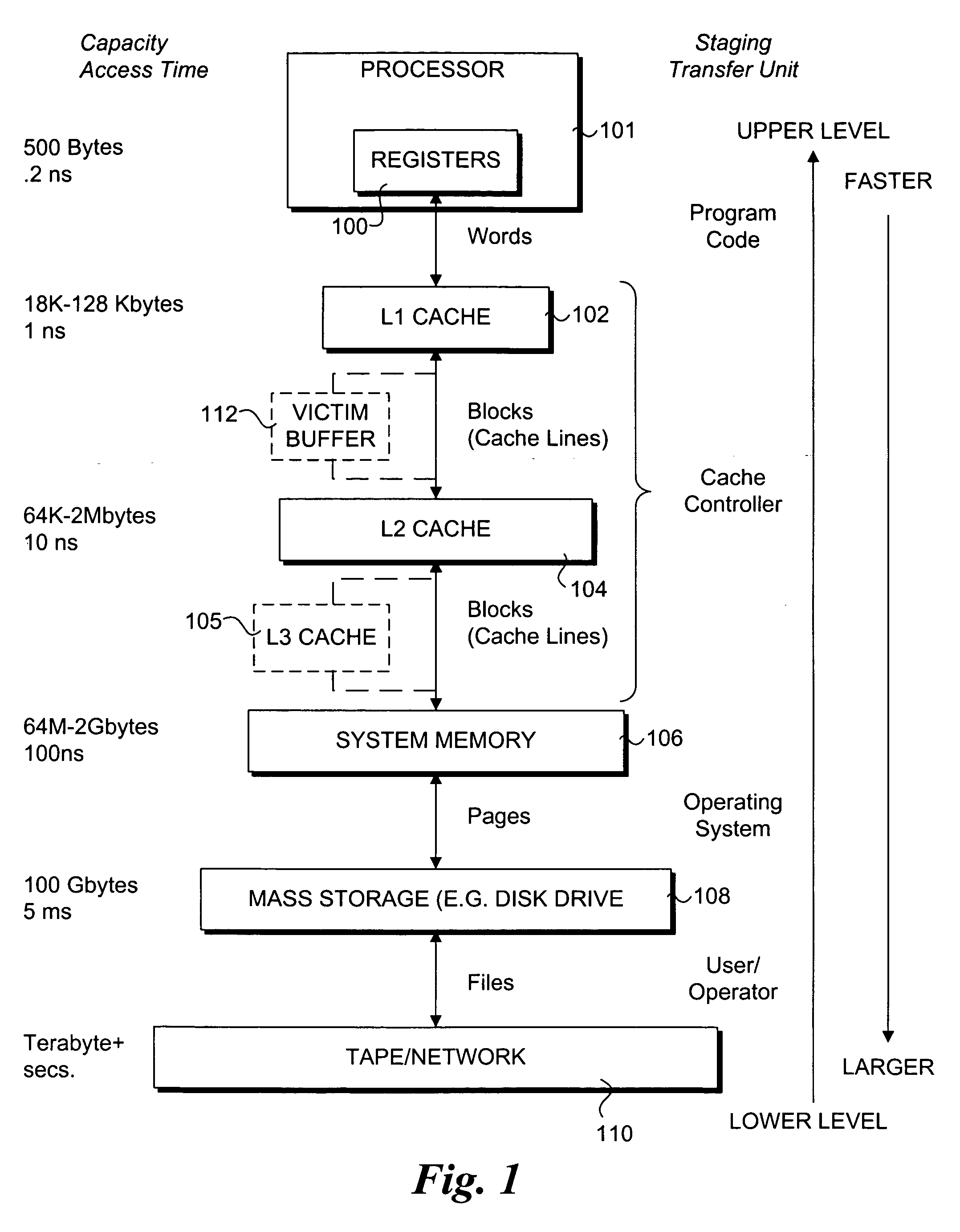

A digital data processor integrated circuit (1) includes a plurality of functionally identical first processor elements (6A) and a second processor element (5). The first processor elements are bidirectionally coupled to a first cache (12) via a crossbar switch matrix (8). The second processor element is coupled to a second cache (11). Each of the first cache and the second cache contains a two-way, set-associative cache memory that uses a least-recently-used (LRU) replacement algorithm and that operates with a use-as-fill mode to minimize the number of wait states the processor elements need to experience before continuing execution after a cache miss. An operation of each of the first processor elements and an operation of the second processor element are locked together during an execution of a single instruction read from the second cache. The instruction specifies, in a first portion that is coupled in common to each of the plurality of first processor elements, the operation of each of the plurality of first processor elements in parallel. A second portion of the instruction specifies the operation of the second processor element. Also included is a motion estimator (7) and an internal data bus coupling together a first parallel port (3A), a second parallel port (3B), a third parallel port (3C), an external memory interface (2), and a data input/output of the first cache and the second cache.

Owner:CUFER ASSET LTD LLC

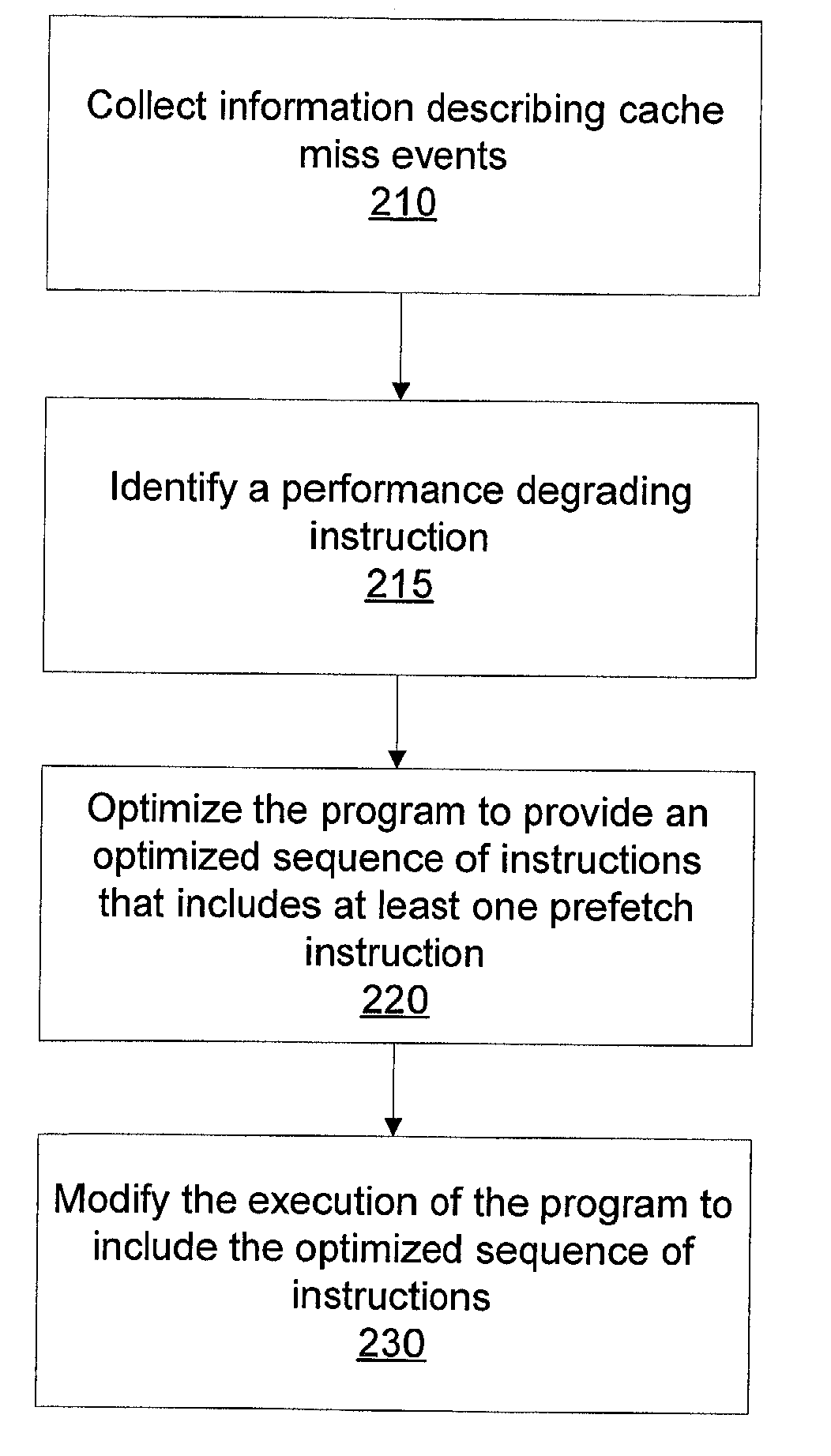

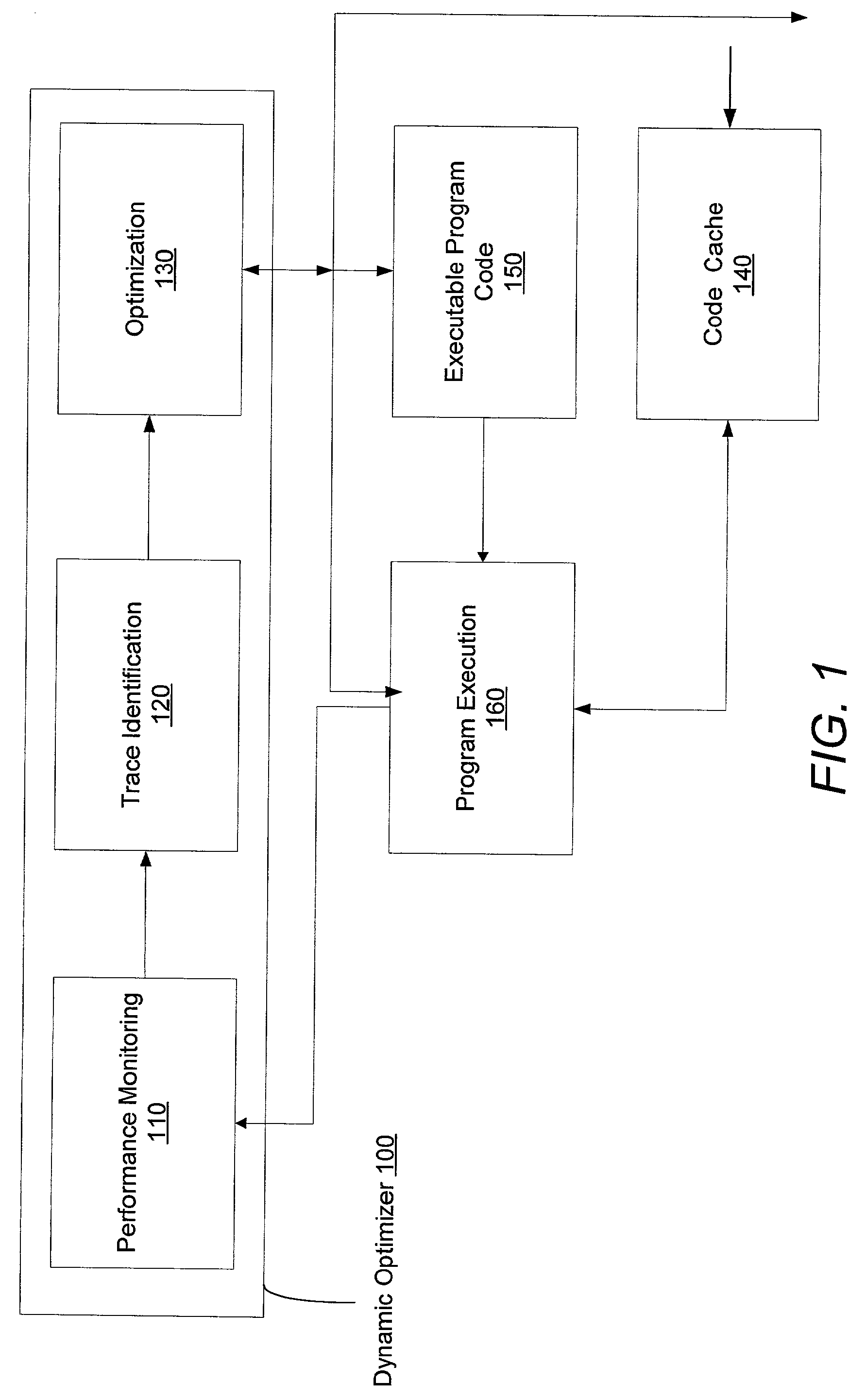

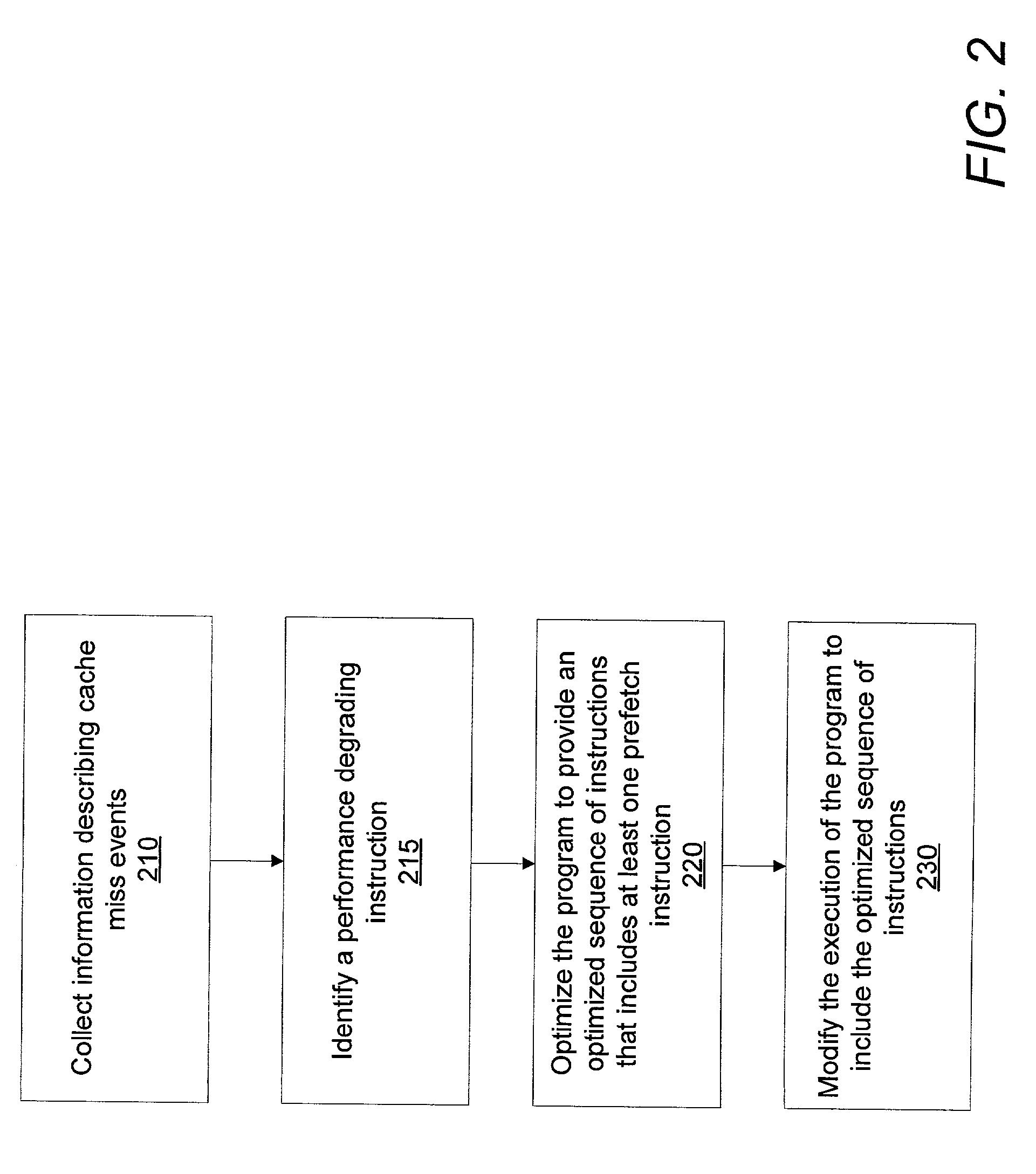

Method of efficient dynamic data cache prefetch insertion

Inactive | US20030145314A1 | Reducing subsequent cache misses | Memory architecture accessing/allocation | Software engineering | Cache miss | Data cache

A system and method for dynamically inserting a data cache prefetch instruction into a program executable to optimize the program being executed. The method, and system thereof, monitors the execution of the program, samples the cache miss events, identifies the time-consuming execution paths, and optimizes the program during runtime by inserting a prefetch instruction into new optimized code to hide cache miss latency.

Owner:SUN MICROSYSTEMS INC

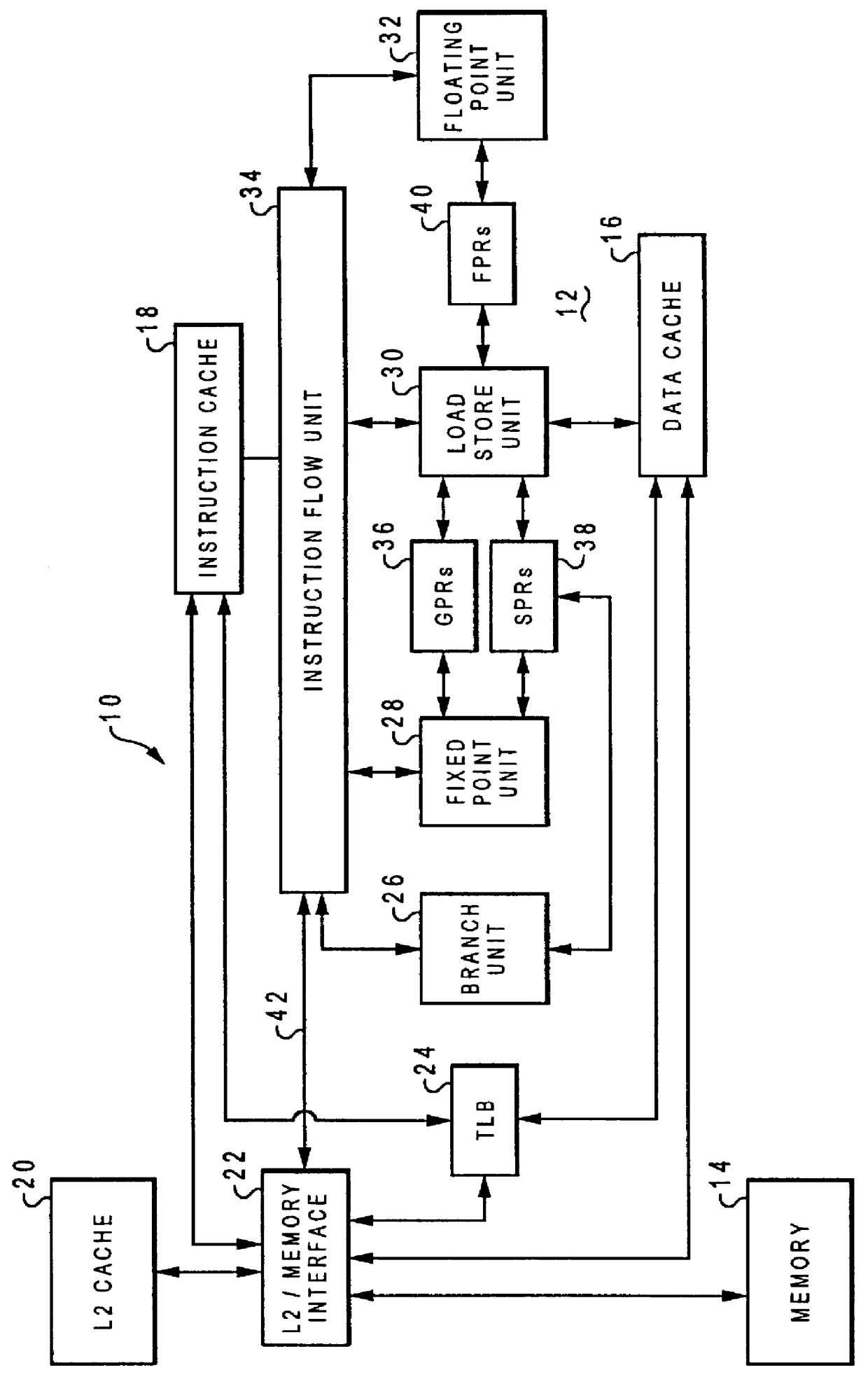

Detecting long latency pipeline stalls for thread switching

Inactive | US6016542A | General purpose stored program computer | Concurrent instruction execution | Long latency | Level structure

An apparatus is provided that operates in conjunction with a processor having registers and associated caches and a memory. A load management module monitors loads that return data to the registers, including bus requests generated in response to loads that miss in one or more of the caches. A cache miss register includes entries, each of which is associated with one of the registers. A mapping module maps a bus request to a register and sets a bit in a cache miss register entry associated with the register when the bus request is directed to a higher level structure in the memory system.

Owner:INTEL CORP

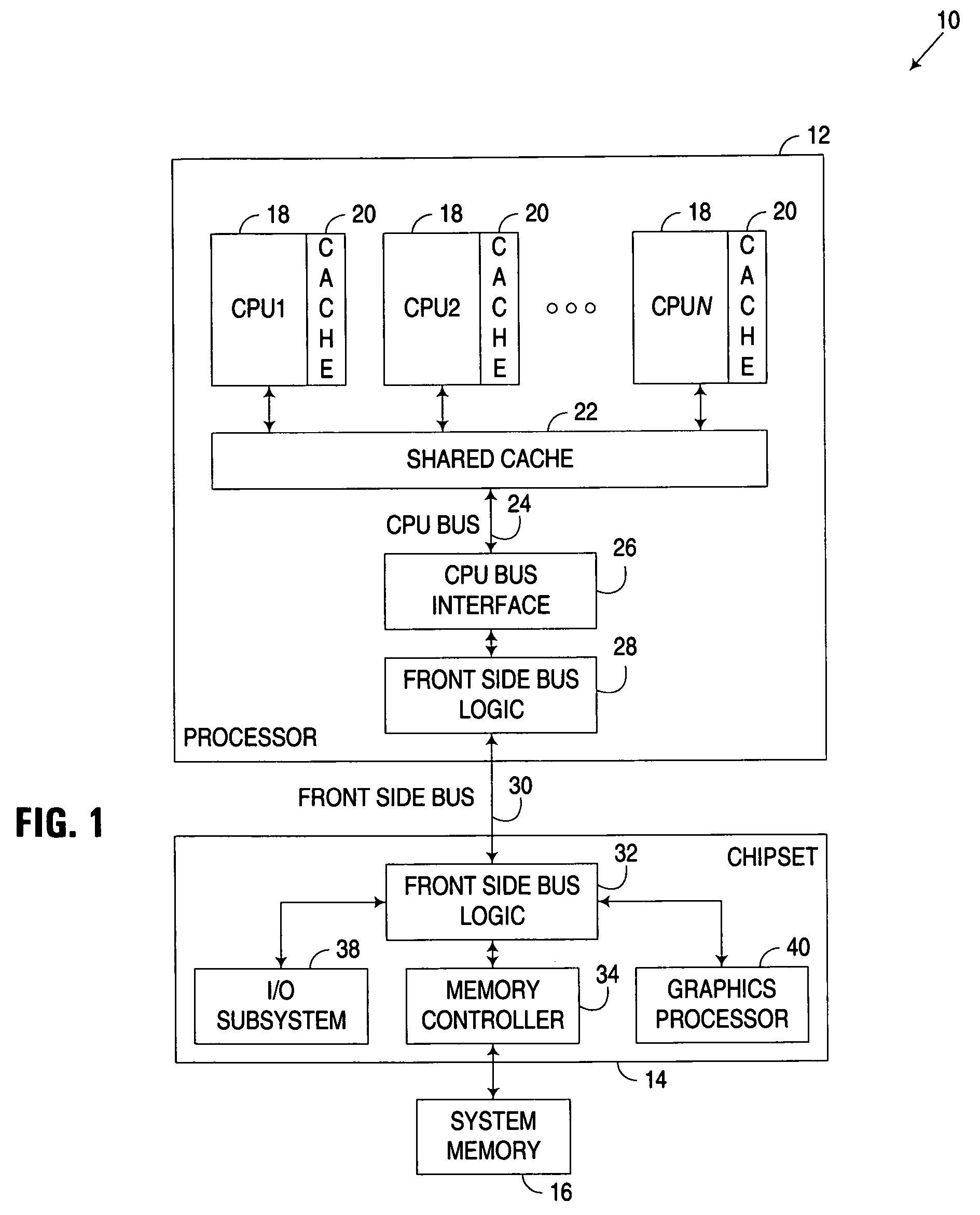

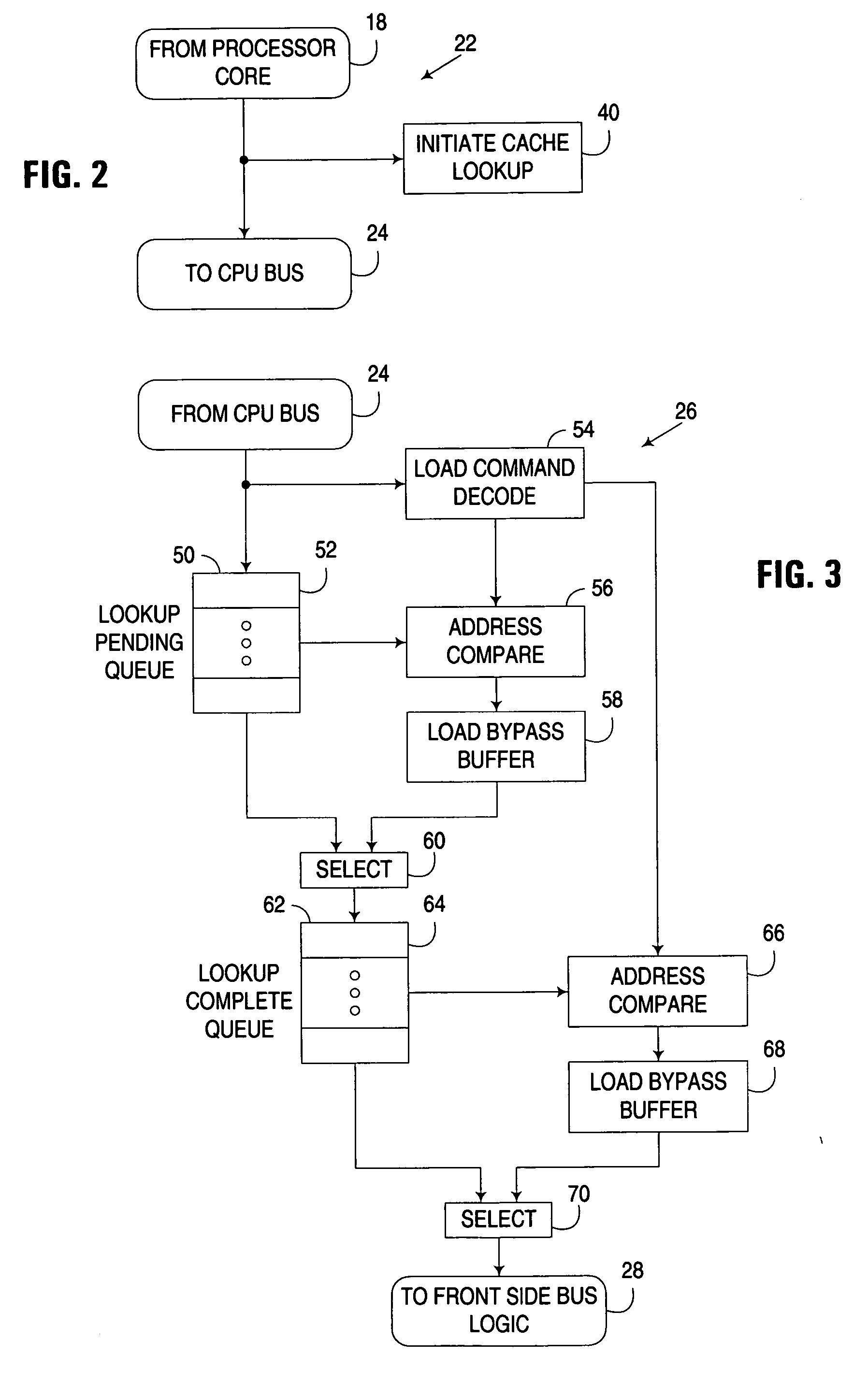

Fast path memory read request processing in a multi-level memory architecture

Active | US20070113019A1 | Lower latency | Improve system performance | Digital computer details | Memory systems | Method selection | Cache miss

A circuit arrangement and method selectively reorder speculatively issued memory read requests being communicated to a lower memory level in a multi-level memory architecture. In particular, a memory read request that has been speculatively issued to a lower memory level prior to completion of a cache lookup operation initiated in a cache memory in a higher memory level may be reordered ahead of at least one previously received and pending request awaiting communication to the lower memory level. By doing so, the latency associated with the memory read request is reduced when the request results in a cache miss in the higher level memory, and as a result, system performance is improved.

Owner:IBM CORP

Cache architecture to enable accurate cache sensitivity

Inactive | US6243788B1 | Resource allocation | Memory addressing/allocation/relocation | Operational system | Parallel computing

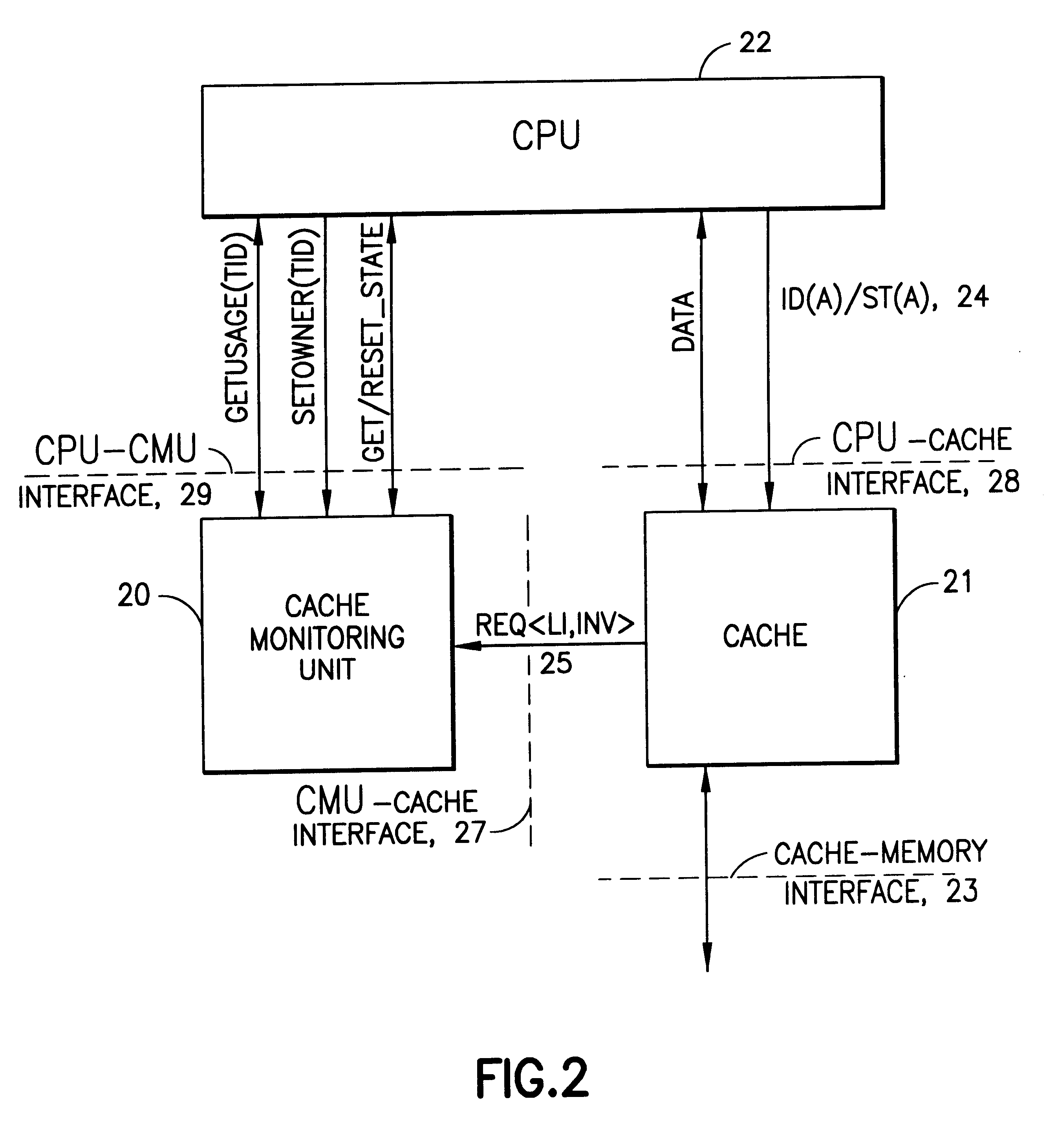

A technique of monitoring the cache footprint of relevant threads on a given processor and its associated cache, thus enabling operating systems to perform better cache sensitive scheduling. A function of the footprint of a thread in a cache can be used as an indication of the affinity of that thread to that cache's processor. For instance, the larger the number of cachelines already existing in a cache, the smaller the number of cache misses the thread will experience when scheduled on that processor, and hence the greater the affinity of the thread to that processor. Besides a thread's priority and other system defined parameters, scheduling algorithms can take cache affinity into account when assigning execution of threads to particular processors. This invention describes an apparatus that accurately measures the cache footprint of a thread on a given processor and its associated cache by keeping a state and ownership count of cachelines based on ownership registration and a cache usage as determined by a cache monitoring unit.

Owner:IBM CORP

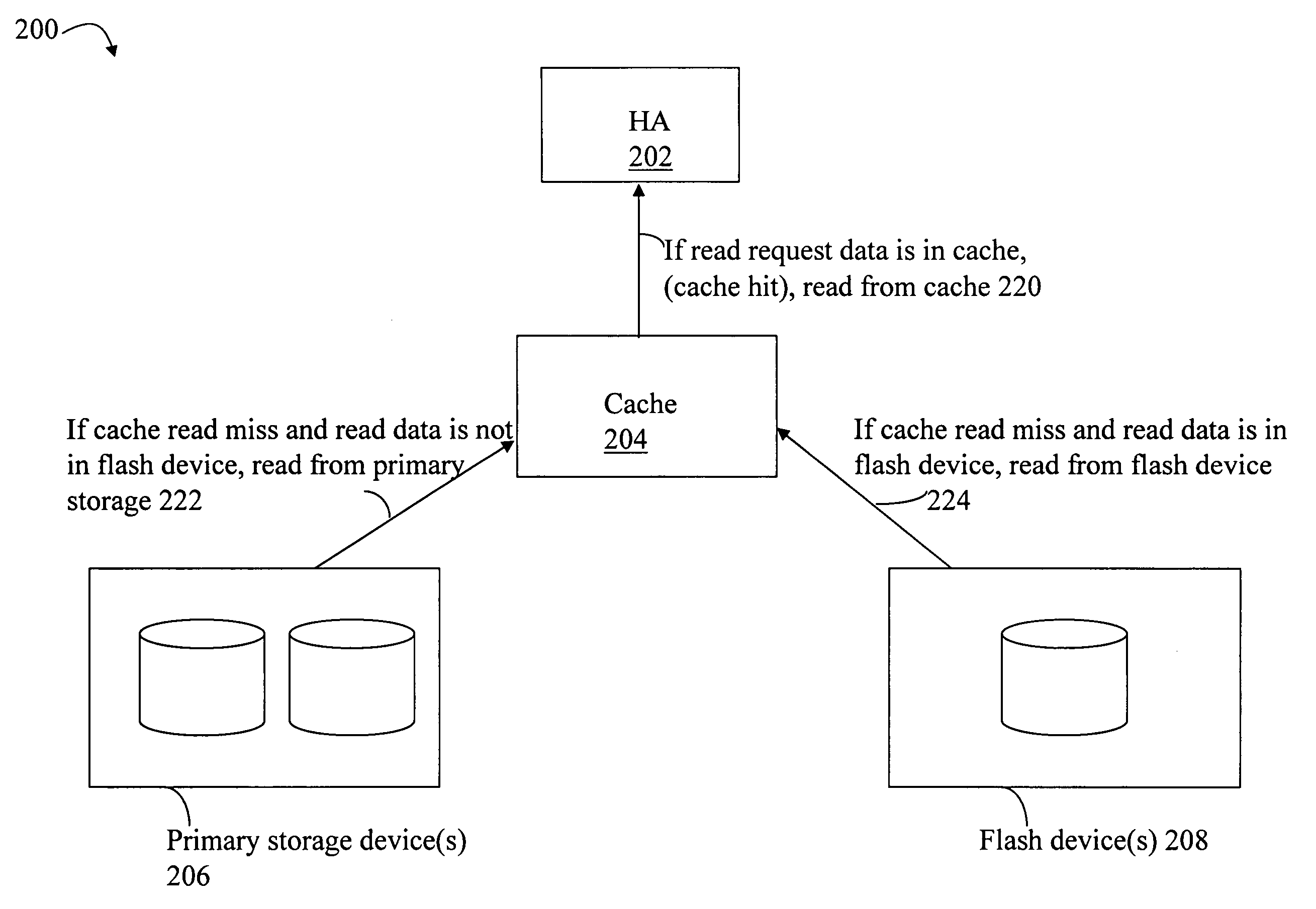

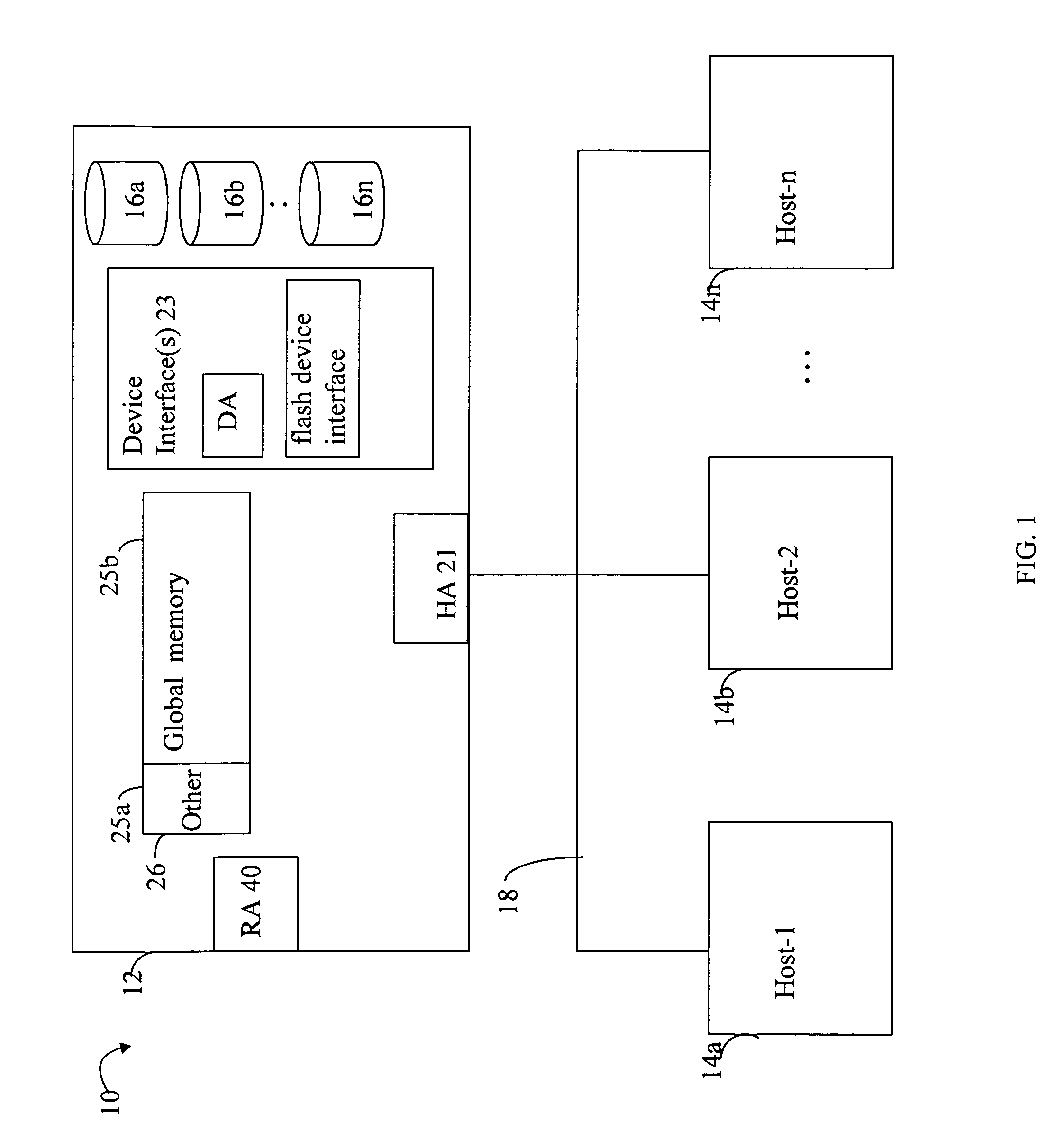

Techniques for processing I/O requests

Active | US8046551B1 | Quality improvement | Memory architecture accessing/allocation | Memory systems | Operating system | Copying

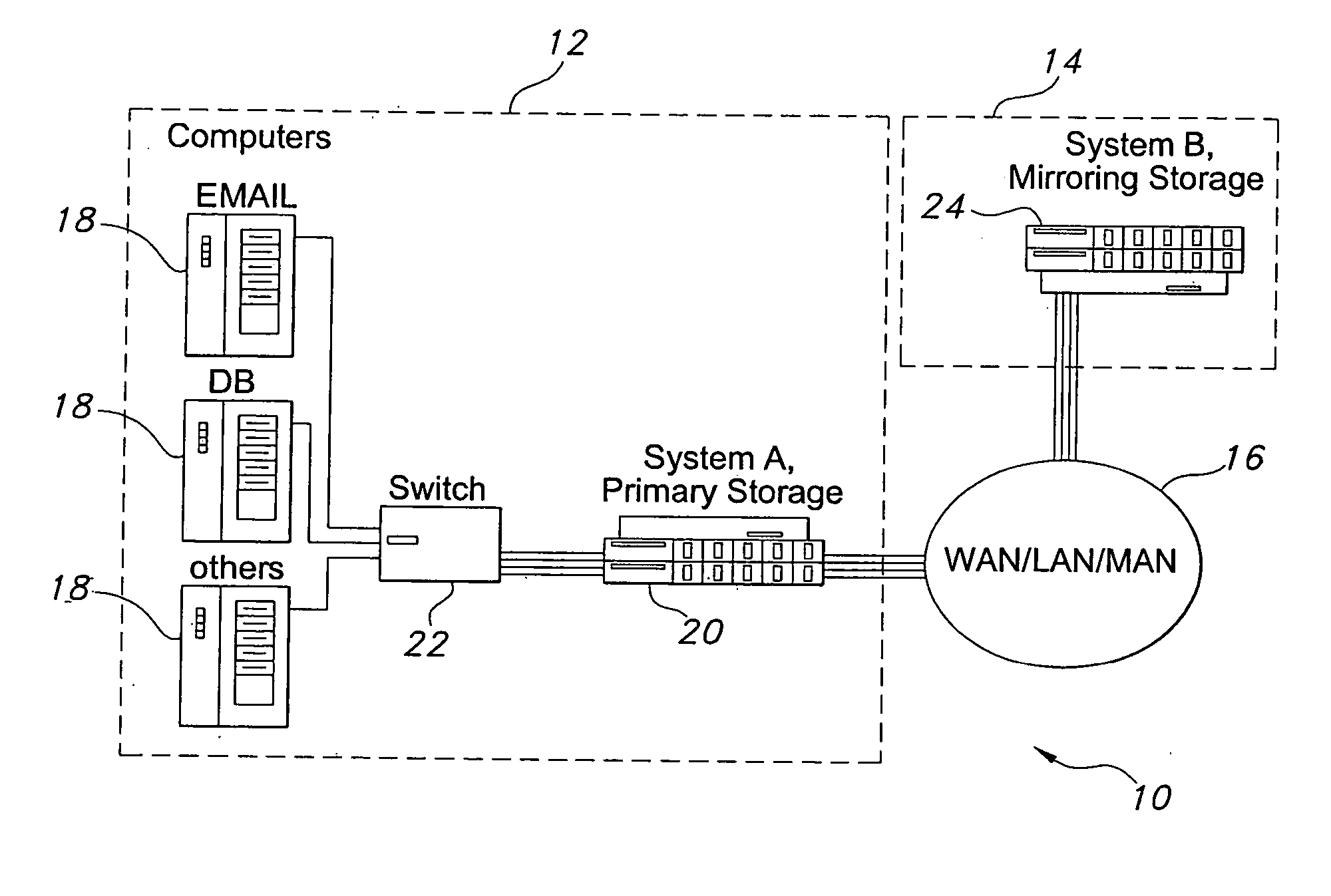

Described are techniques executed in a data storage system in connection with processing an I/O request. The I/O request is received. It is determined whether the I/O request is a write request. If the I/O request is a write request, write request processing is performed. The write request processing includes: copying write request data of the write request to cache; destaging the write request data from the cache to a primary storage device; and copying, in accordance with a heuristic, the write request data from the primary storage device to an asynchronous mirror device including an asynchronous copy of data from the primary storage device, wherein the asynchronous mirror device is write disabled with respect to received write requests requesting to write data thereto, the asynchronous mirror device used for servicing data requested in connection with read requests upon the occurrence of a cache miss.

Owner:EMC IP HLDG CO LLC

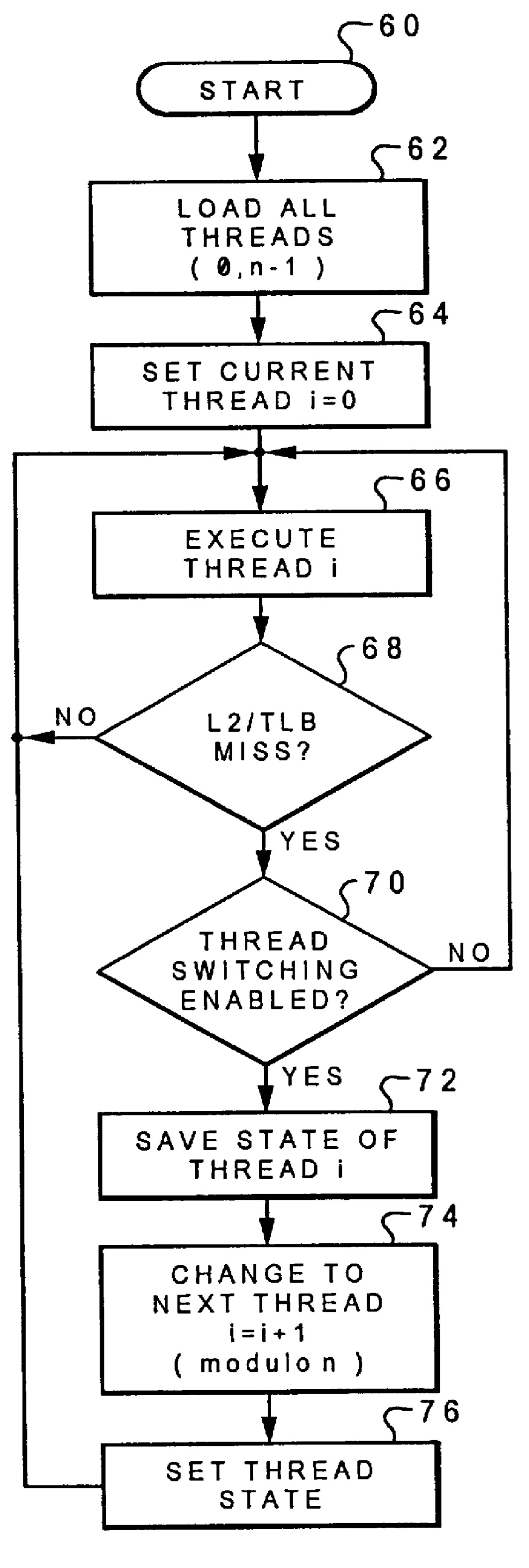

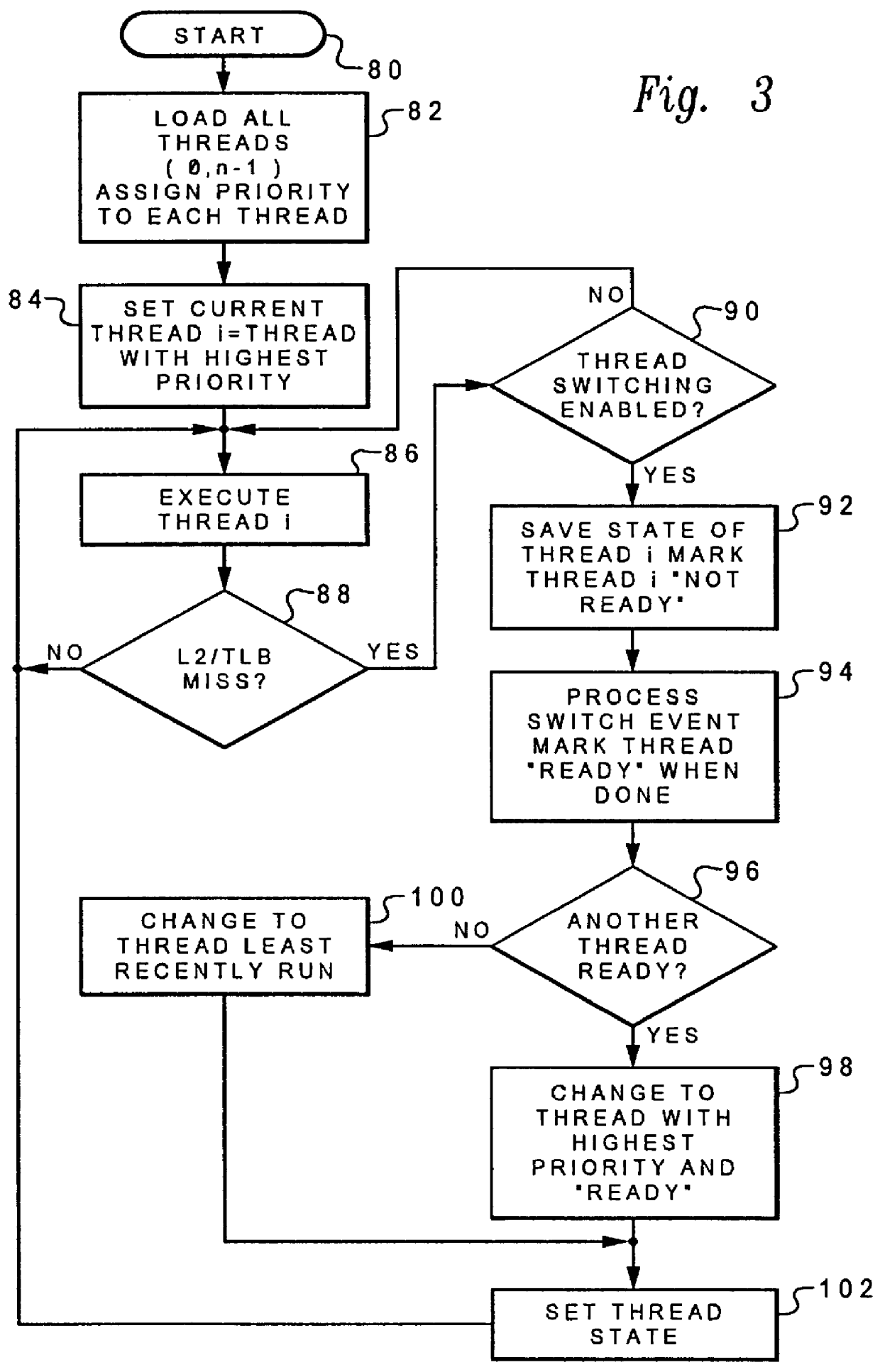

Method and system for multi-thread switching only when a cache miss occurs at a second or higher level

Inactive | US6049867A | Improved high performance multithread data processor | Reduce delays | Program initiation/switching | Memory addressing/allocation/relocation | Data processing system | Parallel computing

A method and system for enhanced performance multithread operation in a data processing system which includes a processor, a main memory store and at least two levels of cache memory. At least one instruction within an initial thread is executed. Thereafter, the state of the processor at a selected point within the first thread is stored, execution of the first thread is terminated and a second thread is selected for execution only in response to a level two or higher cache miss, thereby minimizing processor delays due to memory latency. The validity state of each thread is preferably maintained in order to minimize the likelihood of returning to a prior thread for execution before the cache miss has been corrected. A least recently executed thread is preferably selected for execution in the event of a nonvalidity indication in association with all remaining threads, in anticipation of a change to the valid status of that thread prior to all other threads. A thread switch bit may also be utilized to selectively inhibit thread switching where execution of a particular thread is deemed necessary.

Owner:IBM CORP

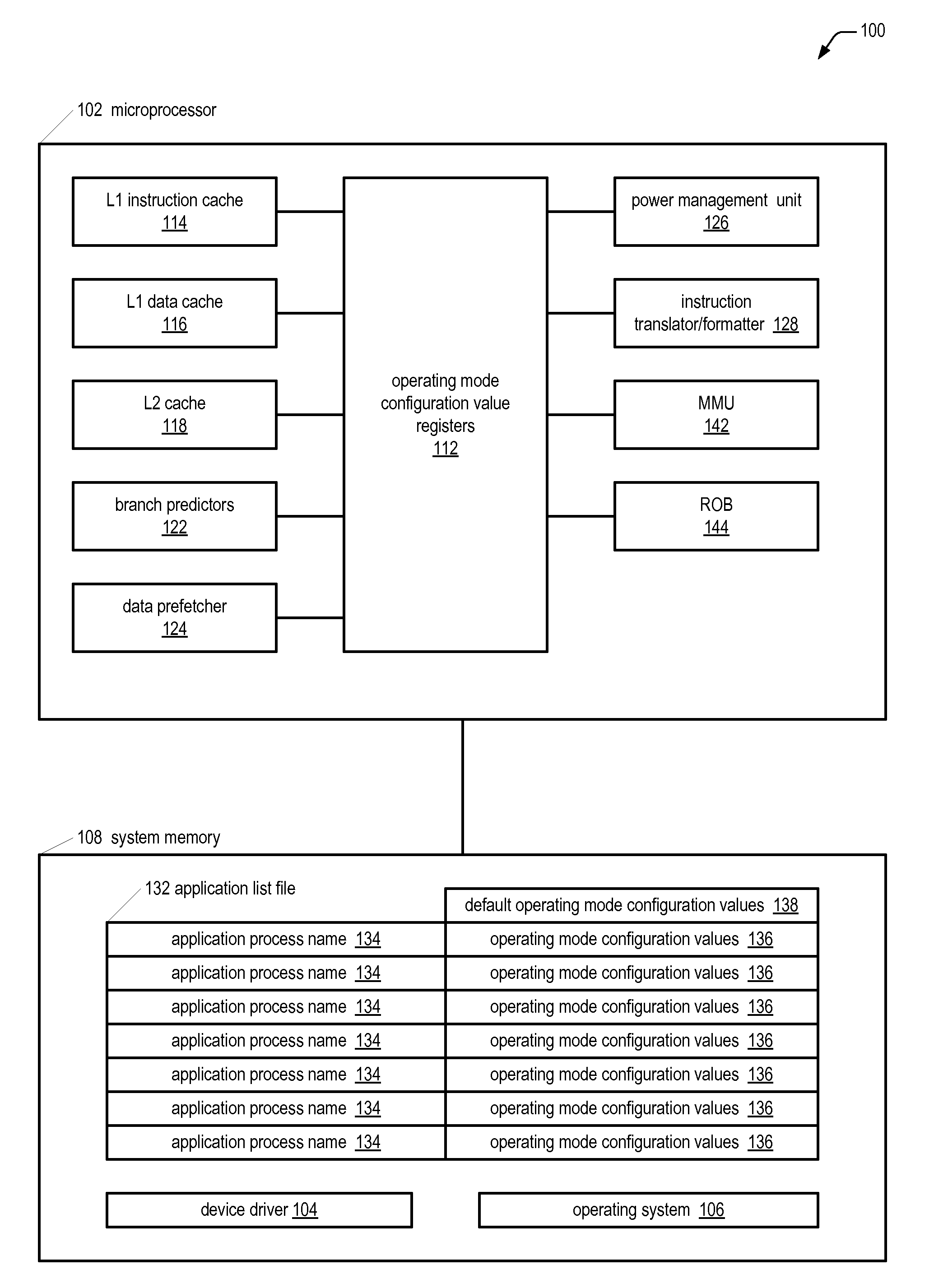

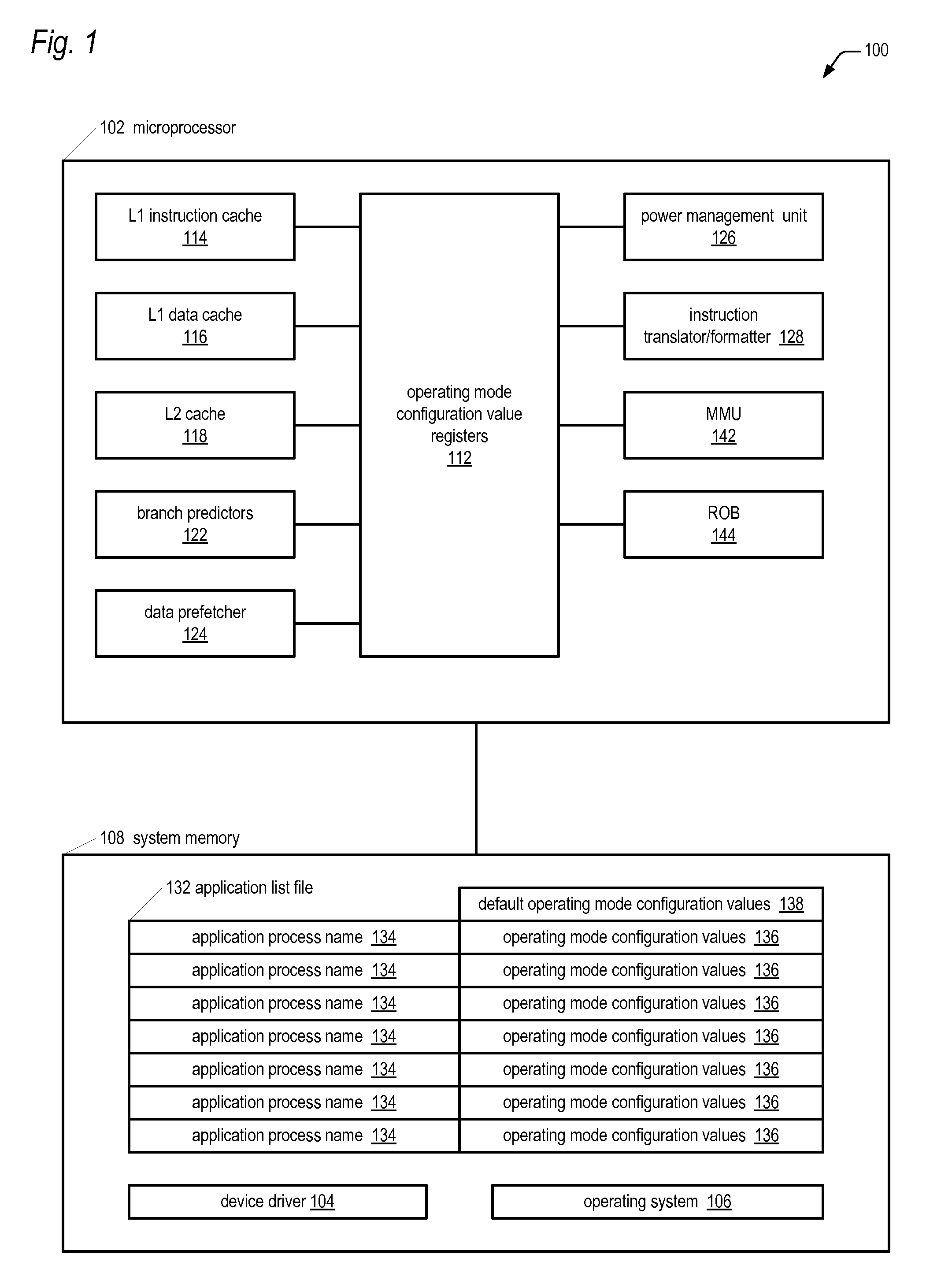

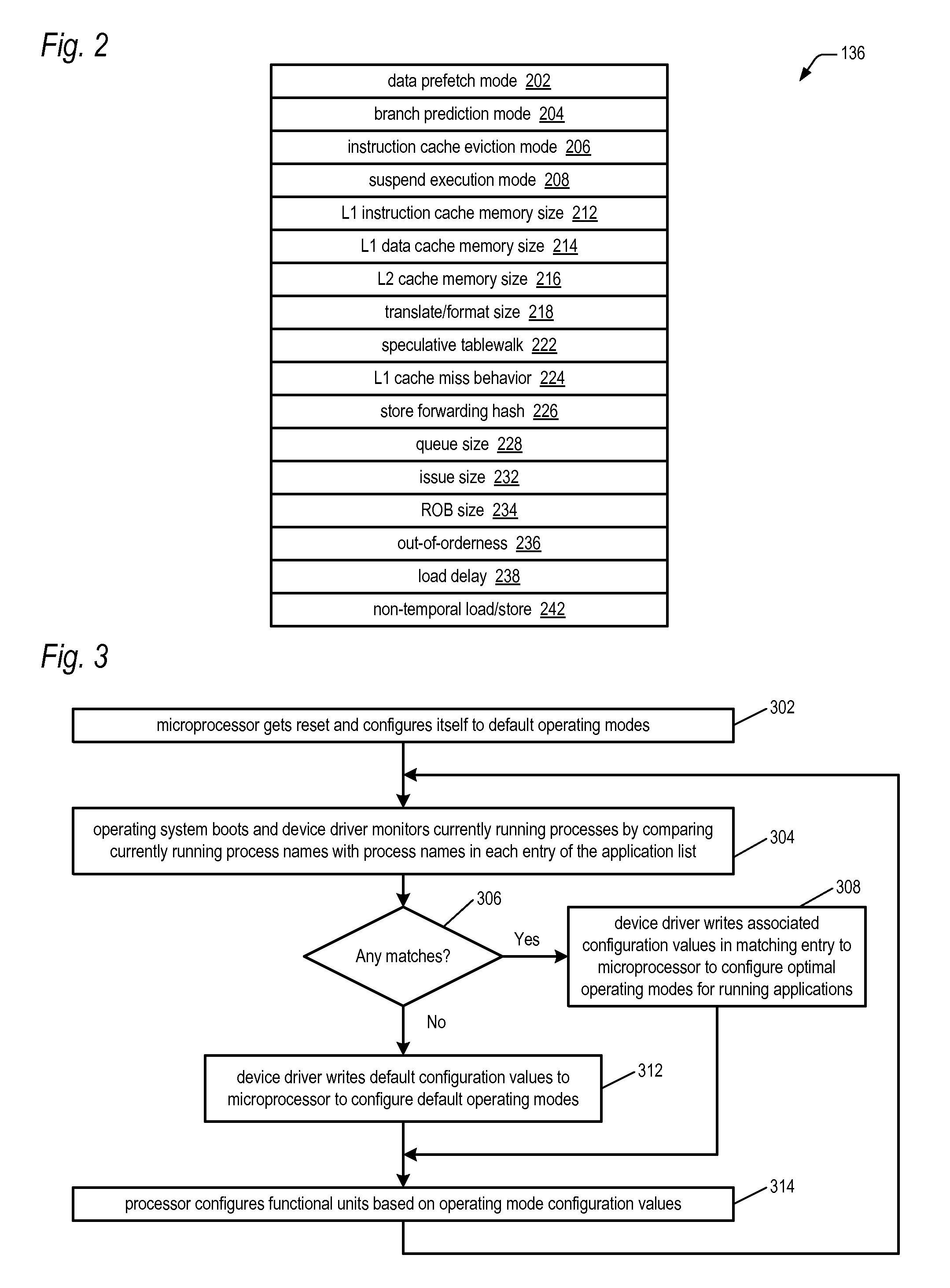

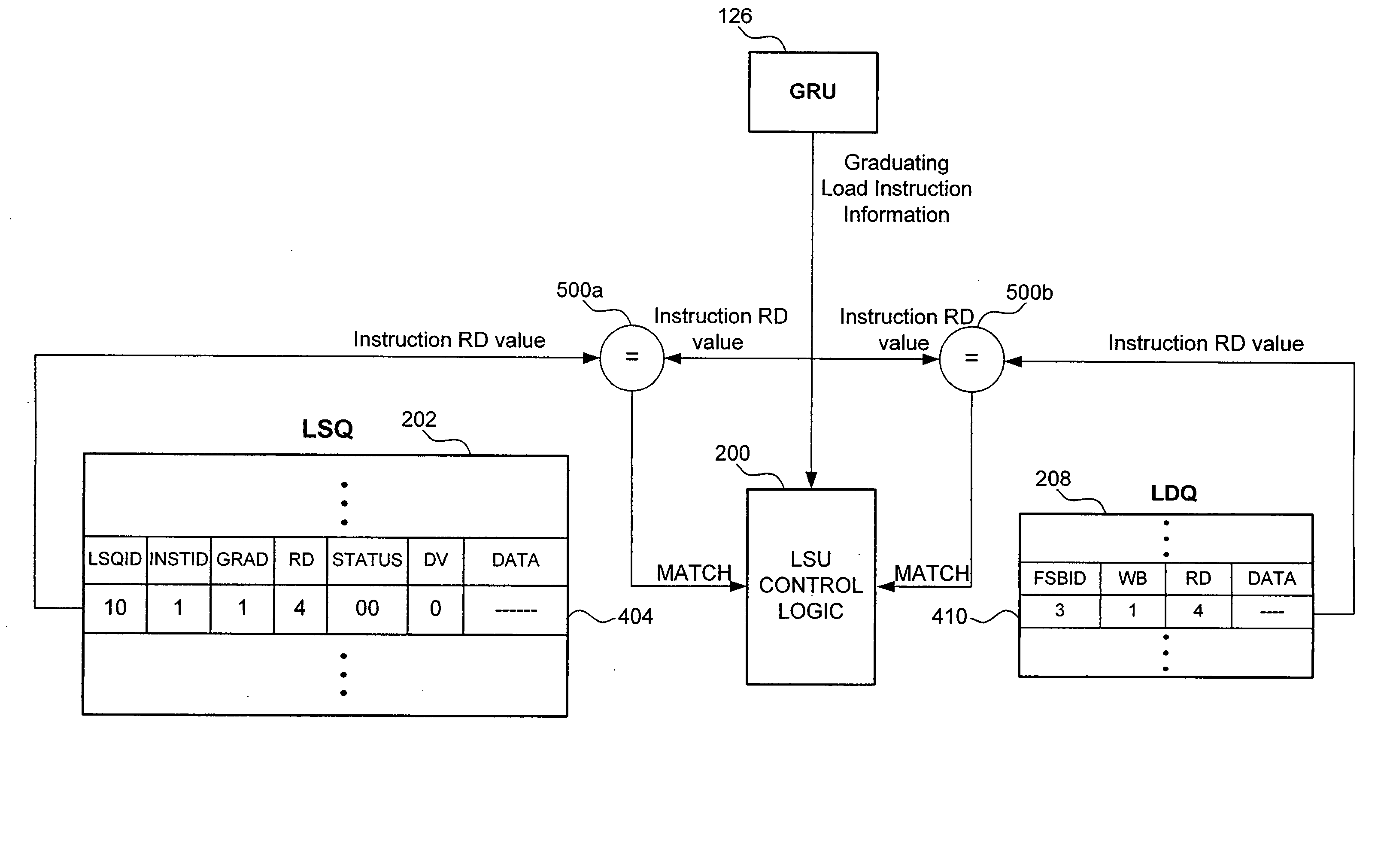

Microprocessor with multiple operating modes dynamically configurable by a device driver based on currently running applications

Active | US20100011198A1 | Improve performance | Reduce power consumption | Runtime instruction translation | Memory addressing/allocation/relocation | Branch target address cache | Application software

A computing system includes a microprocessor that receives values for configuring operating modes thereof. A device driver monitors which software applications currently running on the microprocessor are in a predetermined list and responsively dynamically writes the values to the microprocessor to configure its operating modes. Examples of the operating modes the device driver may configure relate to the following: data prefetching; branch prediction; instruction cache eviction; instruction execution suspension; sizes of cache memories, reorder buffer, store/load/fill queues; hashing algorithms related to data forwarding and branch target address cache indexing; number of instruction translation, formatting, and issuing per clock cycle; load delay mechanism; speculative page tablewalks; instruction merging; out-of-order execution extent; caching of non-temporal hinted data; and serial or parallel access of an L2 cache and processor bus in response to an instruction cache miss.

Owner:VIA TECH INC

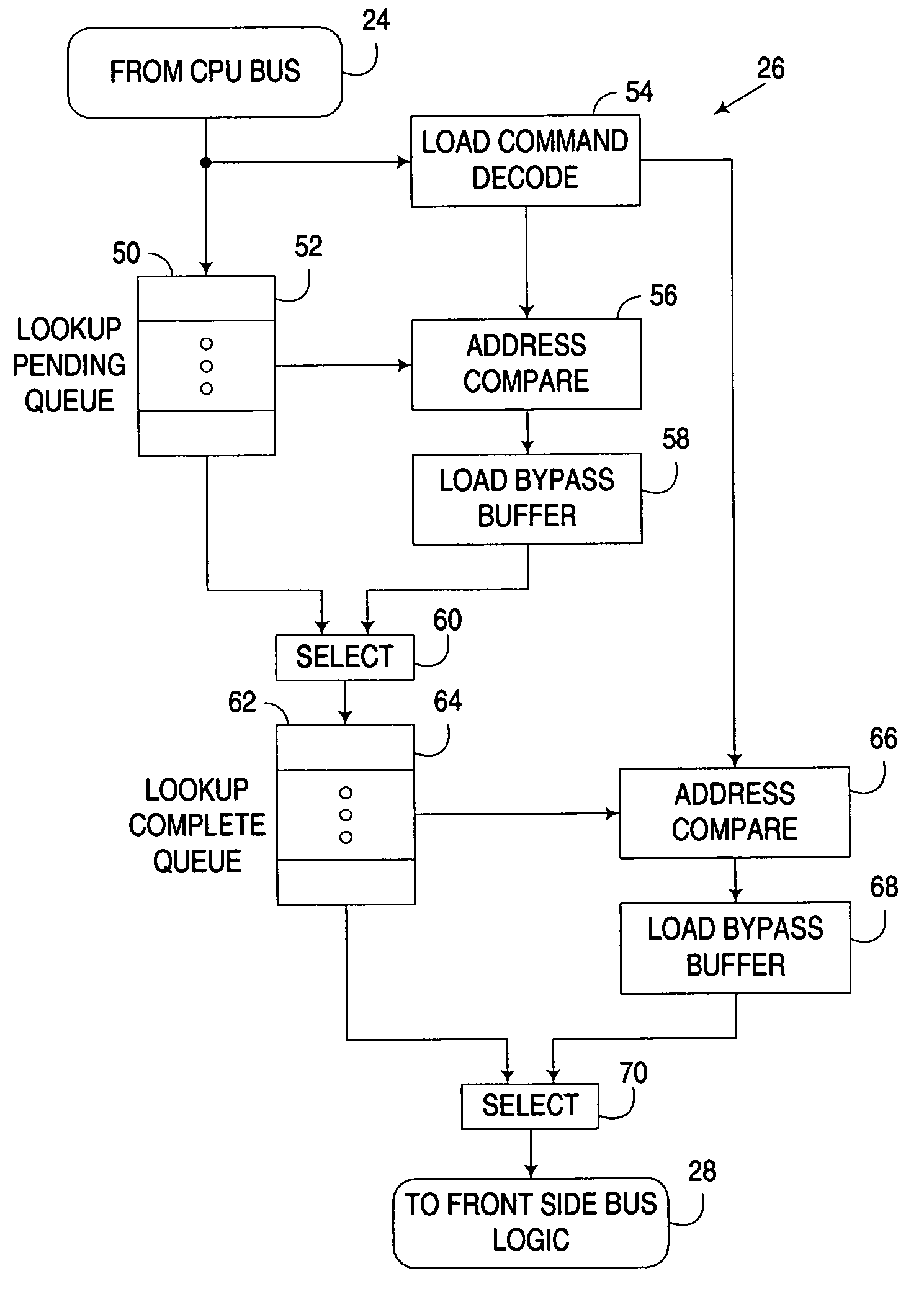

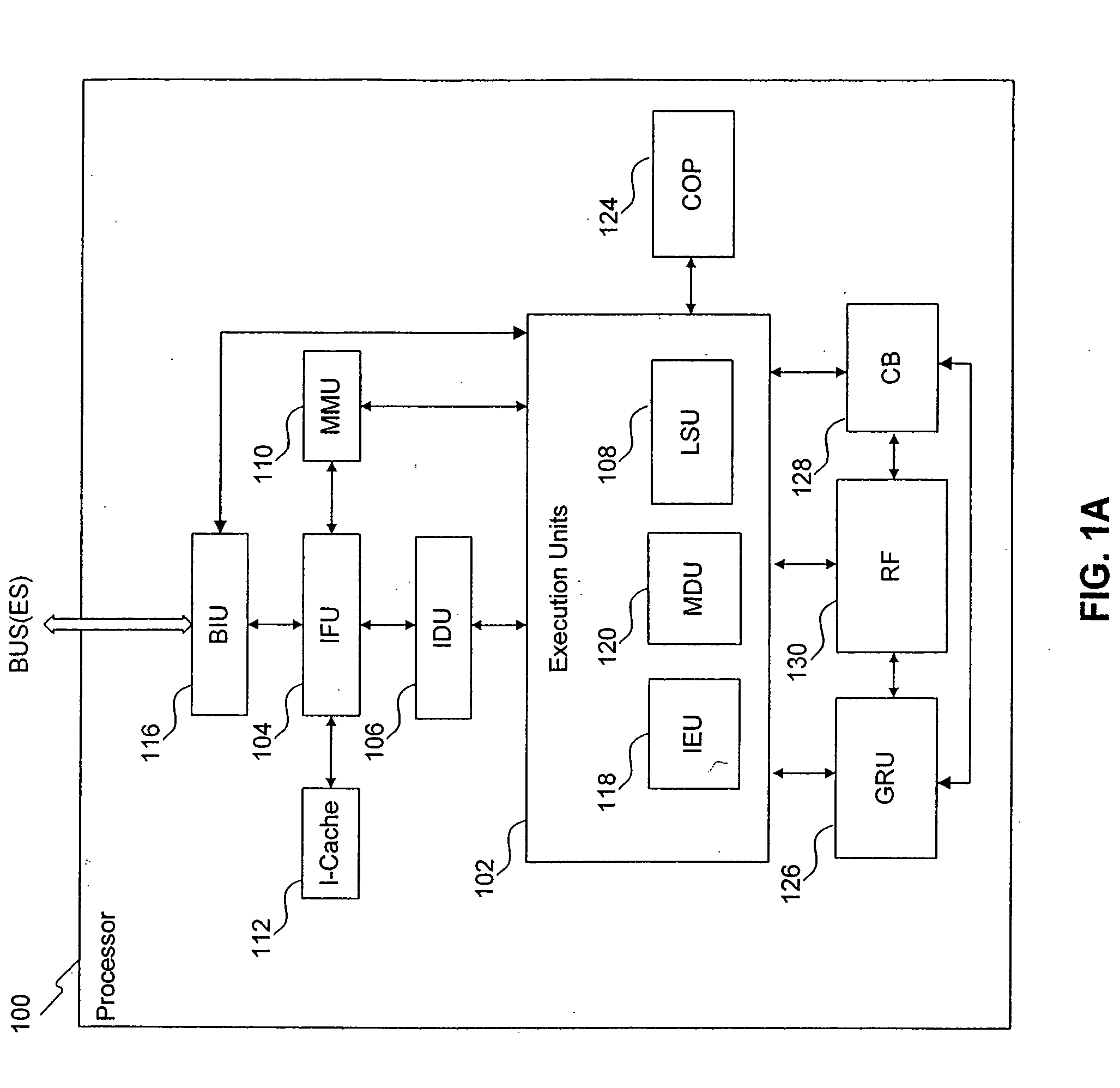

Load/store unit for a processor, and applications thereof

Active | US20080082794A1 | Digital computer details | Specific program execution arrangements | Data storing | Data store

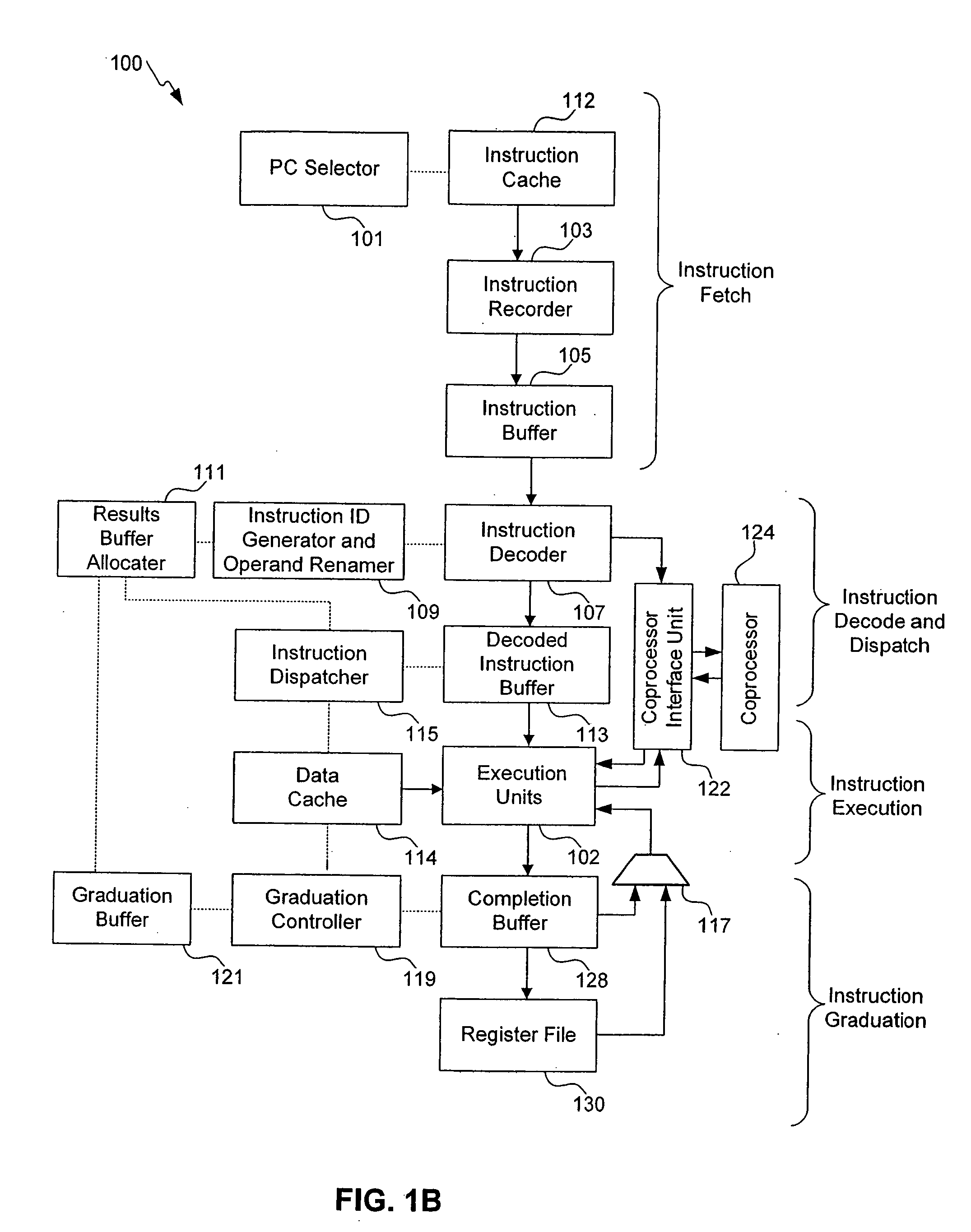

A load/store unit for a processor, and applications thereof. In an embodiment, the load/store unit includes a load/store queue configured to store information and data associated with a particular class of instructions. Data stored in the load/store queue can be bypassed to dependent instructions. When an instruction belonging to the particular class of instructions graduates and the instruction is associated with a cache miss, control logic causes a pointer to be stored in a load/store graduation buffer that points to an entry in the load/store queue associated with the instruction. The load/store graduation buffer ensures that graduated instructions access a shared resource of the load/store unit in program order.

Owner:ARM FINANCE OVERSEAS LTD

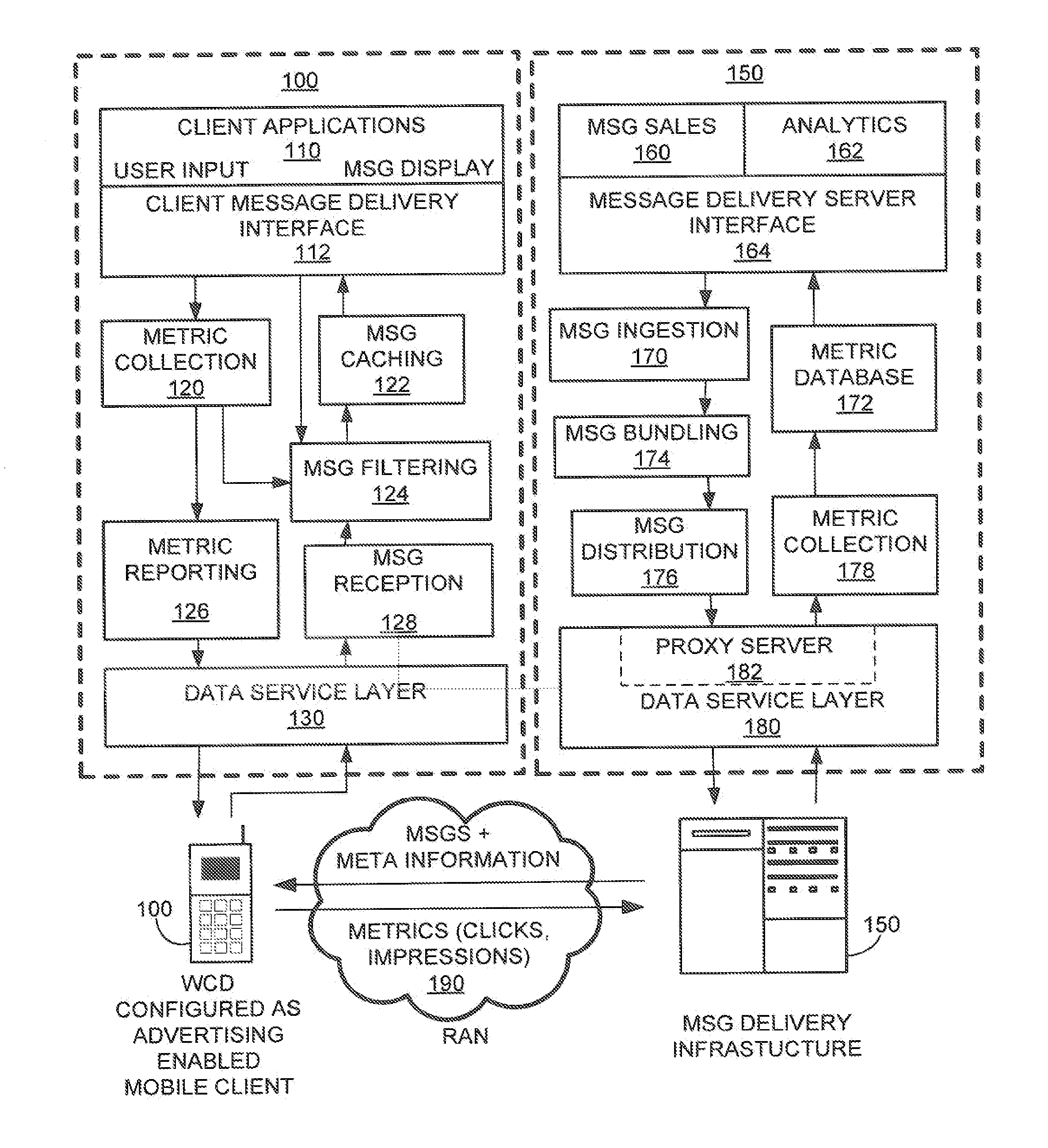

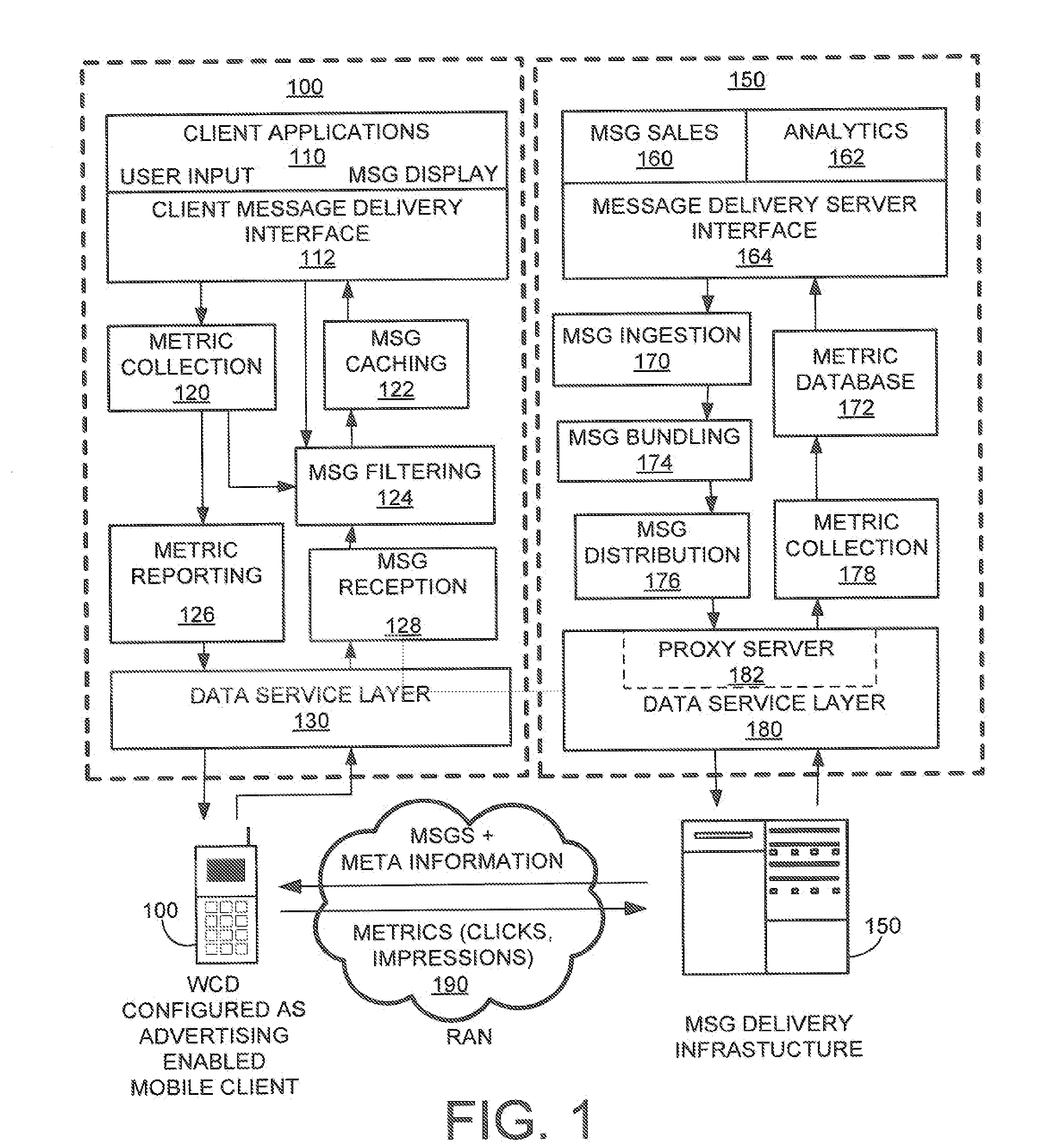

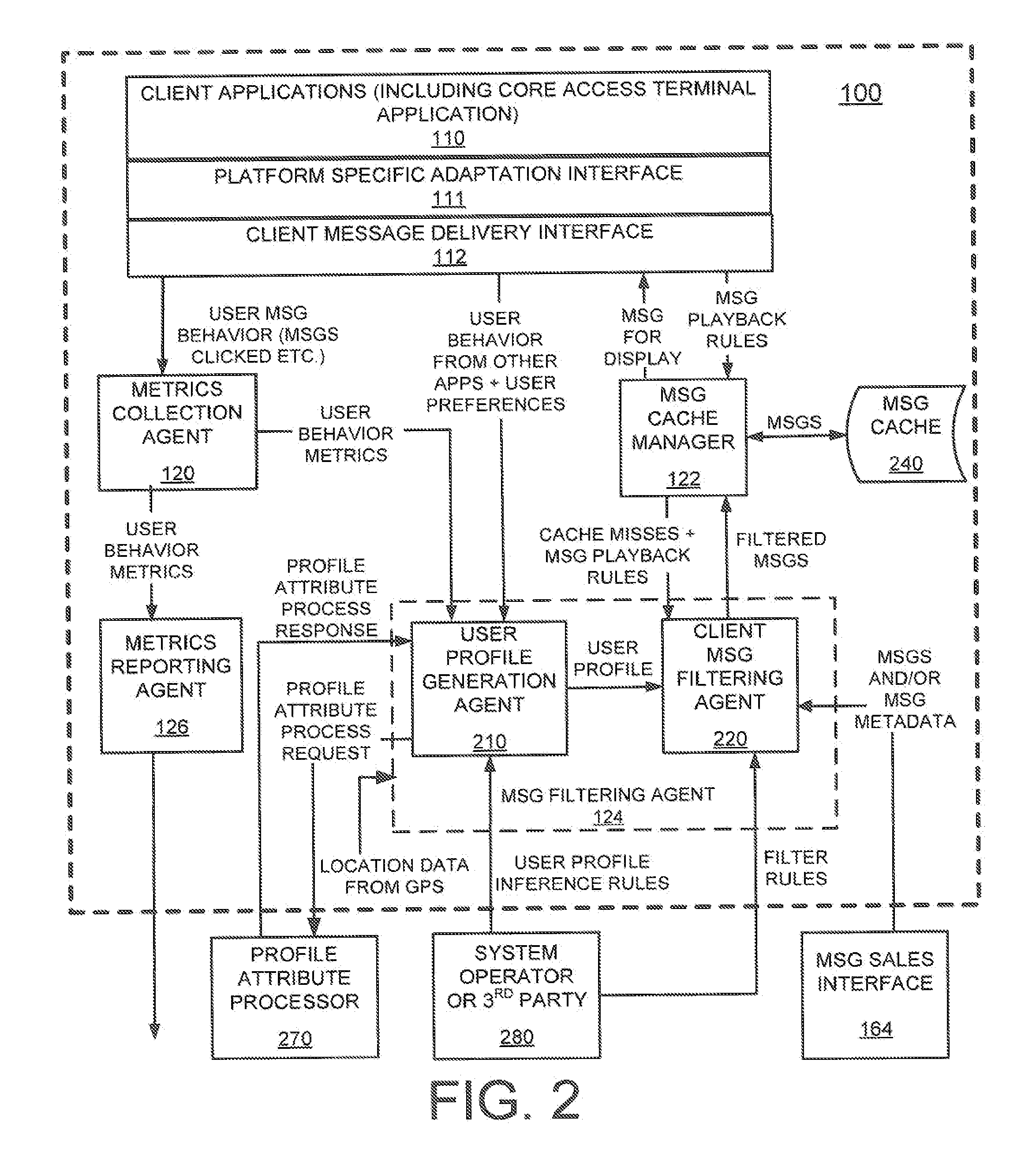

Method and system for using a cache miss state match indicator to determine user suitability of targeted content messages in a mobile environment

Methods and systems for determining a suitability for a mobile client to display information are disclosed. A particular exemplary method includes maintaining on the mobile client a list of first attributes relating to message cache misses of a cache memory located on the mobile client, receiving on the mobile client a set of target attributes associated with a target message, performing on the mobile client one or more matching operations between the first attributes and the target attributes to produce a matching result, and storing the target message in a cache in the mobile client dependent upon the matching result.

Owner:QUALCOMM INC

Methods and apparatuses for compiler-creating helper threads for multi-threading

Methods and apparatuses for compiler-created helper threads for multi-threading are described herein. In one embodiment, an exemplary process includes identifying a region of a main thread that likely has one or more delinquent loads, the one or more delinquent loads representing loads which likely suffer cache misses during an execution of the main thread, analyzing the region for one or more helper threads with respect to the main thread, and generating code for the one or more helper threads, the one or more helper threads being speculatively executed in parallel with the main thread to perform one or more tasks for the region of the main thread. Other methods and apparatuses are also described.

Owner:INTEL CORP

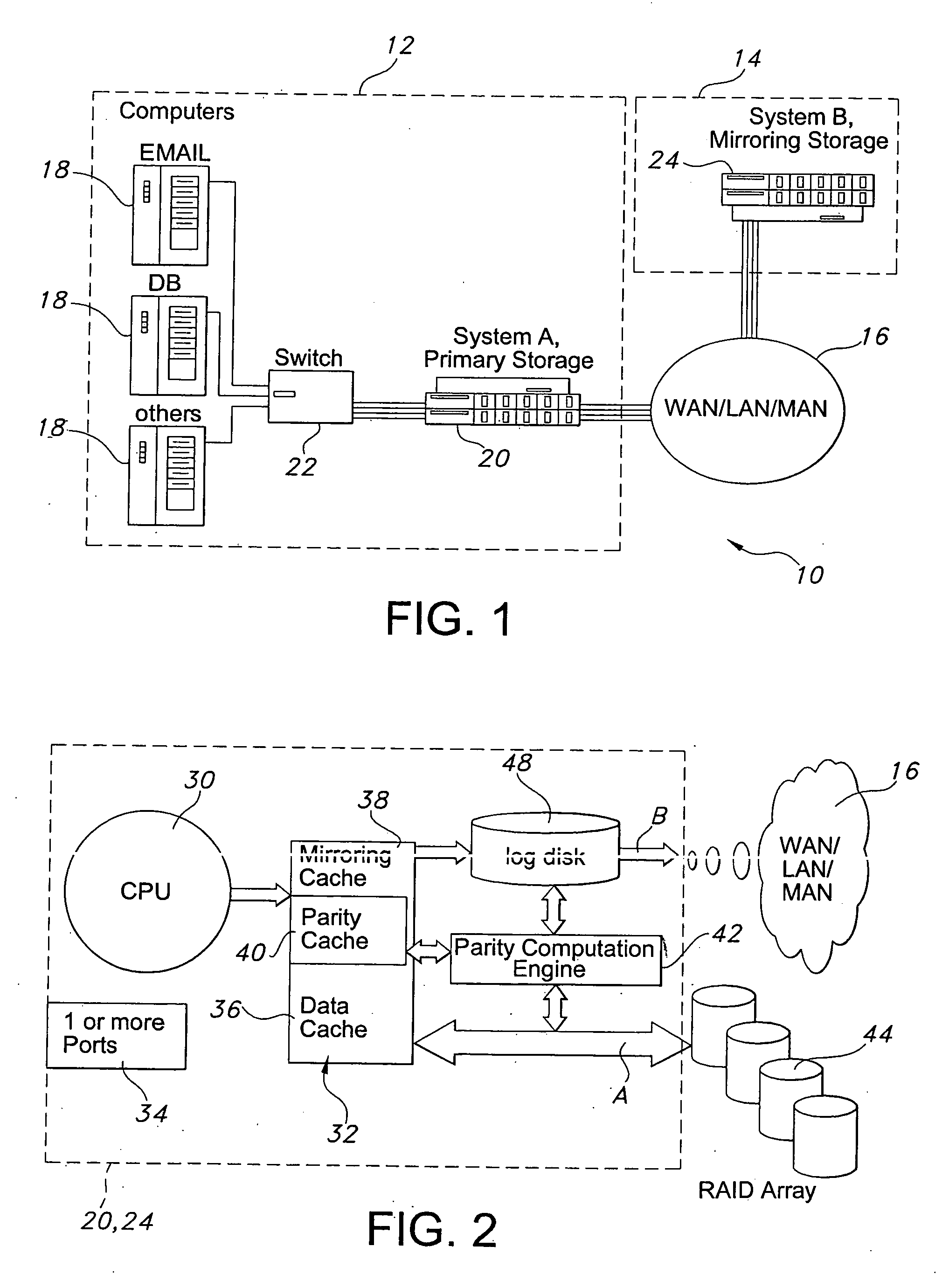

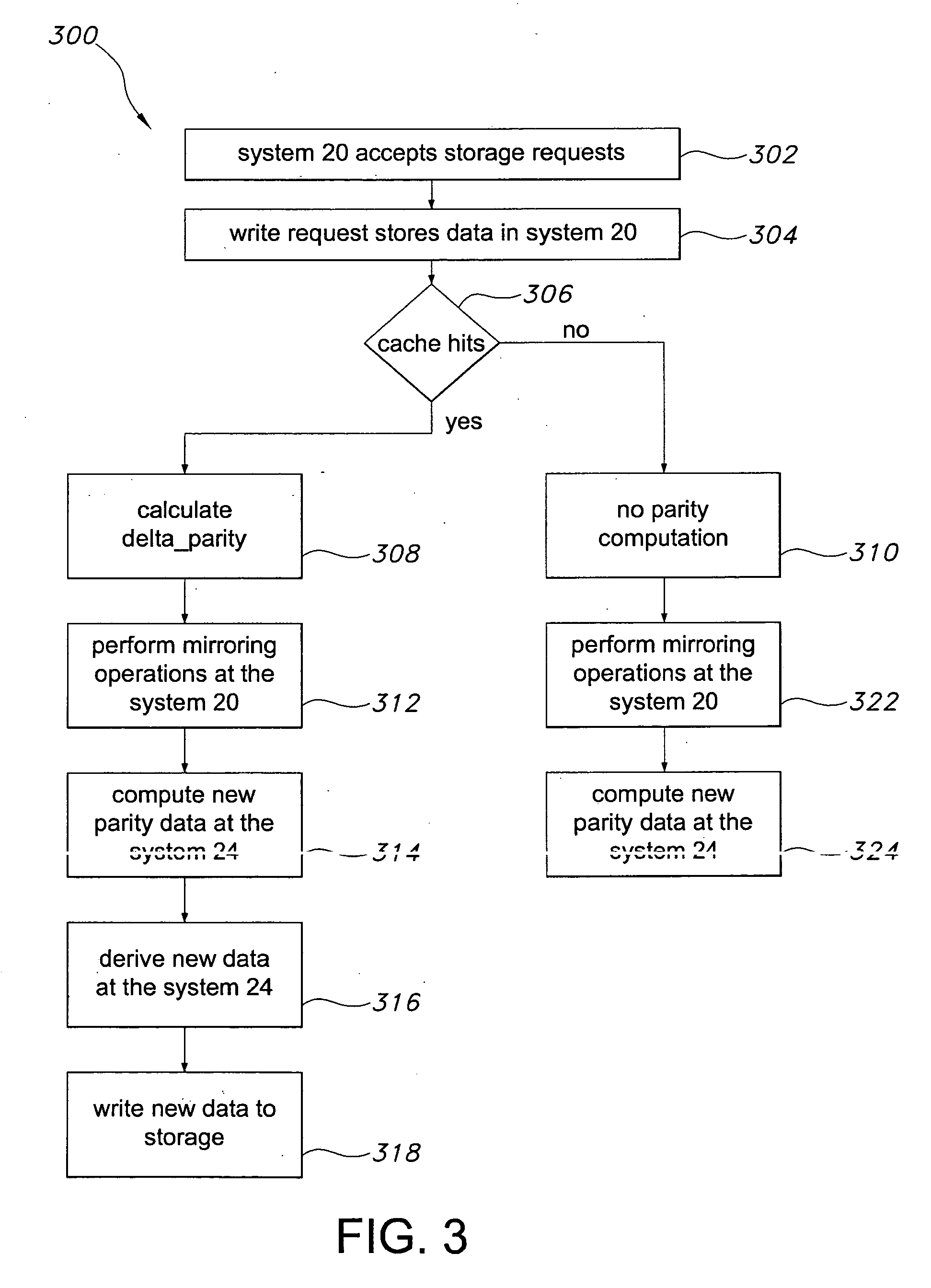

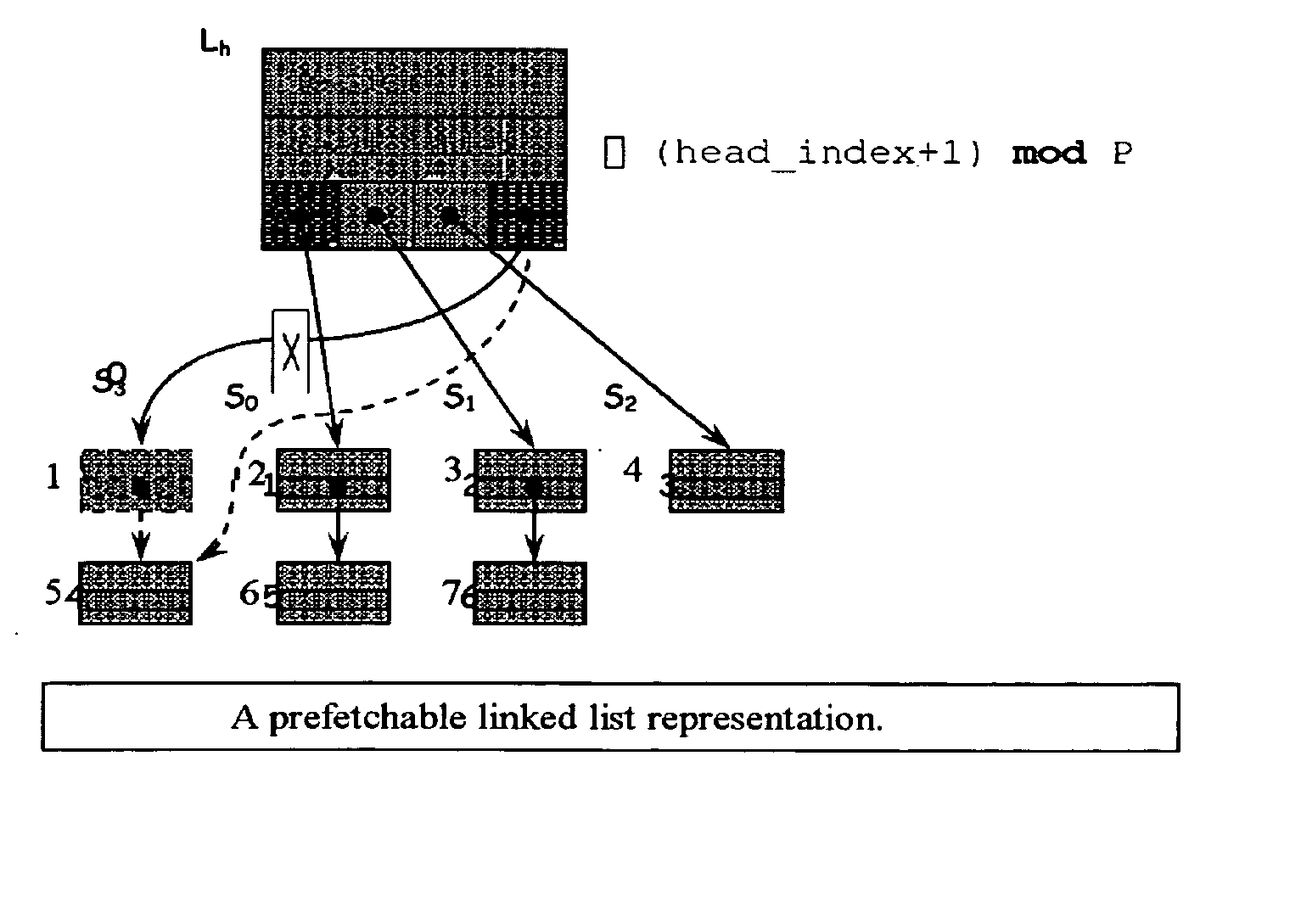

Data replication method over a limited bandwidth network by mirroring parities

Inactive | US20060036904A1 | Easy to use | Reduce the amount required | Error detection/correction | RAID | Network connection

A storage architecture provides efficient remote mirroring of data in RAID storage or the like to a remote storage through a network connection. The storage architecture mirrors only a delta_parity. A parity cache keeps the delta_parity of each data block until the block is mirrored to the remote site. Whenever network bandwidth is available, the parity cache performs a cache operation to mirror the delta_parity to the remote site. If a cache miss occurs, i.e. the delta_parity is not found in the parity cache, computation of the data parity creates the delta_parity. For RAID architectures, reading old data and old parity is a necessary step of computing new parity for every write operation. Thus, no additional operation is needed to compute the delta_parity for mirroring. At the remote site, the delta_parity is used to generate the new parity and the new data using the old data and parity and, in turn, WAN traffic is substantially reduced.

Owner:GEMINI STORAGE
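
A small worked example may help show why mirroring only the delta_parity is sufficient. It assumes conventional XOR parity as used in RAID-4/5, which the abstract does not state explicitly; under that assumption the delta_parity is the XOR of the old and new data blocks. The function names are hypothetical.

```python
def xor_bytes(a, b):
    """Bytewise XOR of two equal-length blocks."""
    if len(a) != len(b):
        raise ValueError("blocks must have the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def delta_parity(old_data, new_data):
    # Under XOR parity, the change in parity equals the change in data.
    return xor_bytes(old_data, new_data)


def apply_delta(block, delta):
    # The same operation updates a parity block or reconstructs the new data block.
    return xor_bytes(block, delta)
```

On the local array, `apply_delta(old_parity, delta)` yields the new parity. At the remote site, `apply_delta(old_data, delta)` yields the new data, so only the delta needs to be sent over the network.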

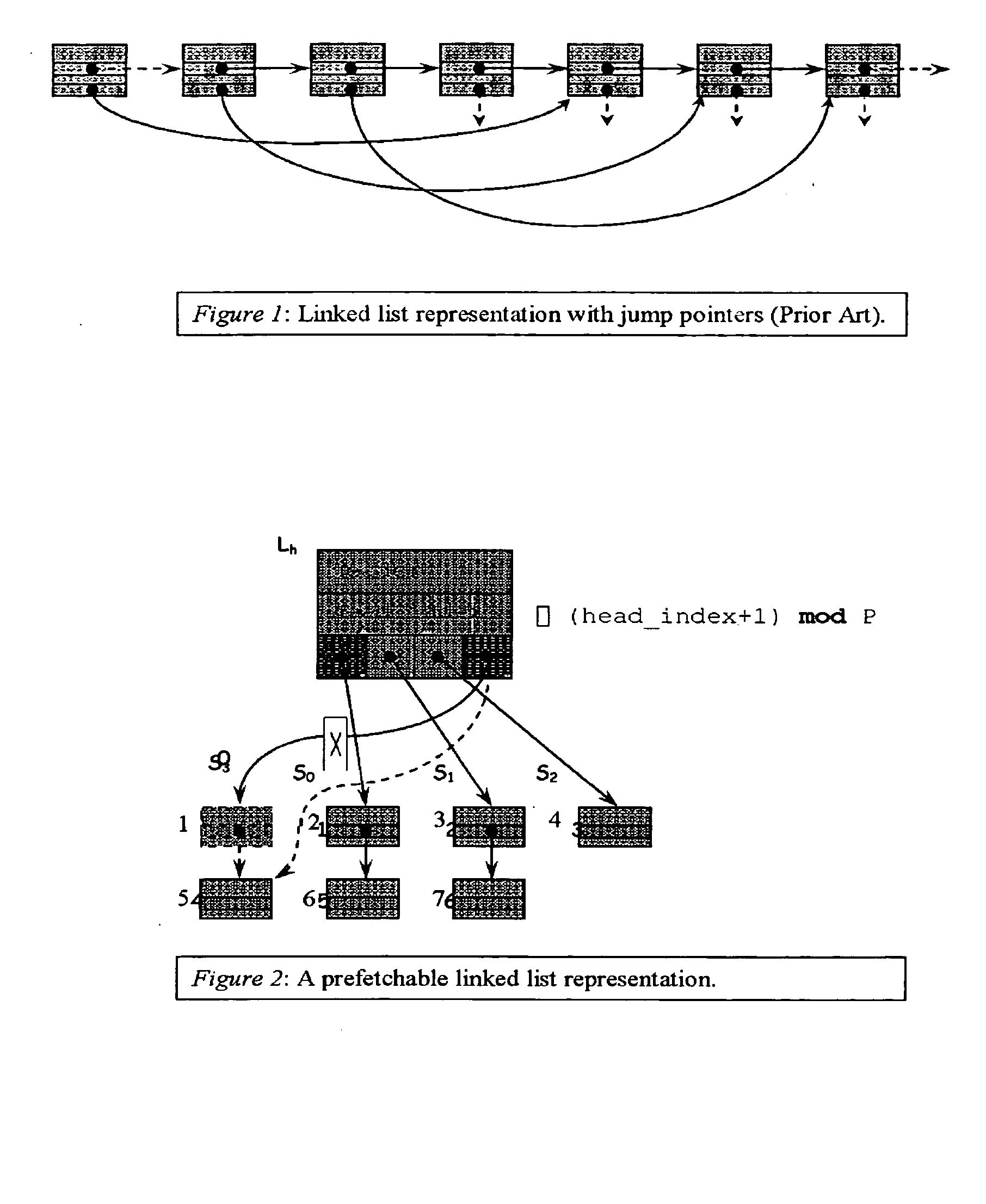

Method for prefetching recursive data structure traversals

Inactive | US20050102294A1 | Improve cache hit ratio | Potential throughput of the computer system | Digital data information retrieval | Data processing applications | Operational system | Term memory

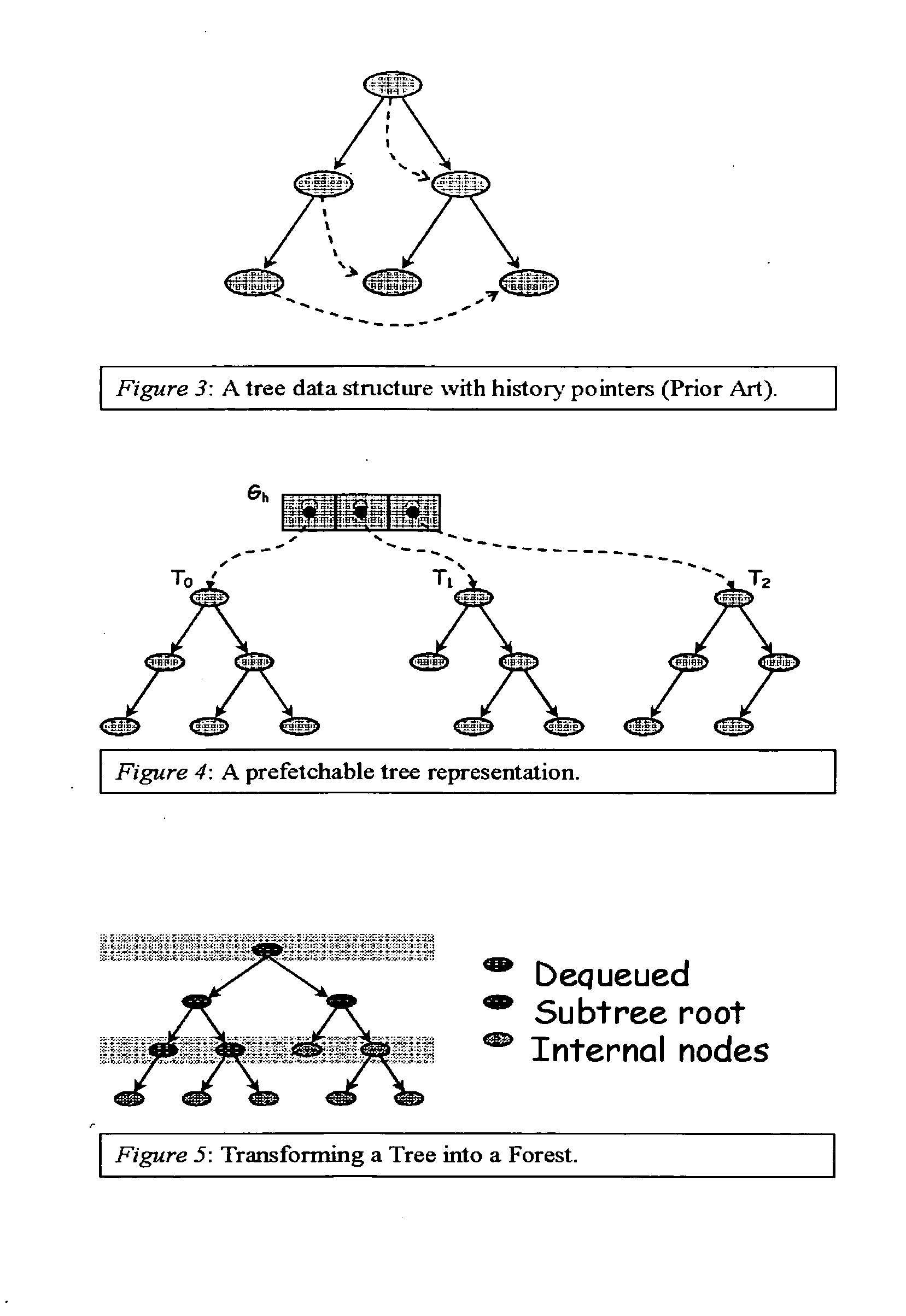

Computer systems are typically designed with multiple levels of memory hierarchy. Prefetching has been employed to overcome the latency of fetching data or instructions from or to memory. Prefetching works well for data structures with regular memory access patterns, but less so for data structures such as trees, hash tables, and other structures in which the datum that will be used is not known a priori. In modern transaction processing systems, database servers, operating systems, and other commercial and engineering applications, information is frequently organized in trees, graphs, and linked lists. Lack of spatial locality results in a high probability that a miss will be incurred at each cache in the memory hierarchy. Each cache miss causes the processor to stall while the referenced value is fetched from lower levels of the memory hierarchy. Because this is likely to be the case for a significant fraction of the nodes traversed in the data structure, processor utilization suffers. The inability to compute the next address to be referenced makes prefetching difficult in such applications. The invention allows compilers and/or programmers to restructure data structures and traversals so that pointers are dereferenced in a pipelined manner, thereby making it possible to schedule prefetch operations in a consistent fashion. The present invention significantly increases the cache hit rates of many important data structure traversals, and thereby the potential throughput of the computer system and application in which it is employed. For data structure traversals in which the traversal path may be predetermined, a transformation is performed on the data structure that permits references to nodes that will be traversed in the future to be computed sufficiently far in advance to prefetch the data into cache.

Owner:DIGITAL CACHE LLC

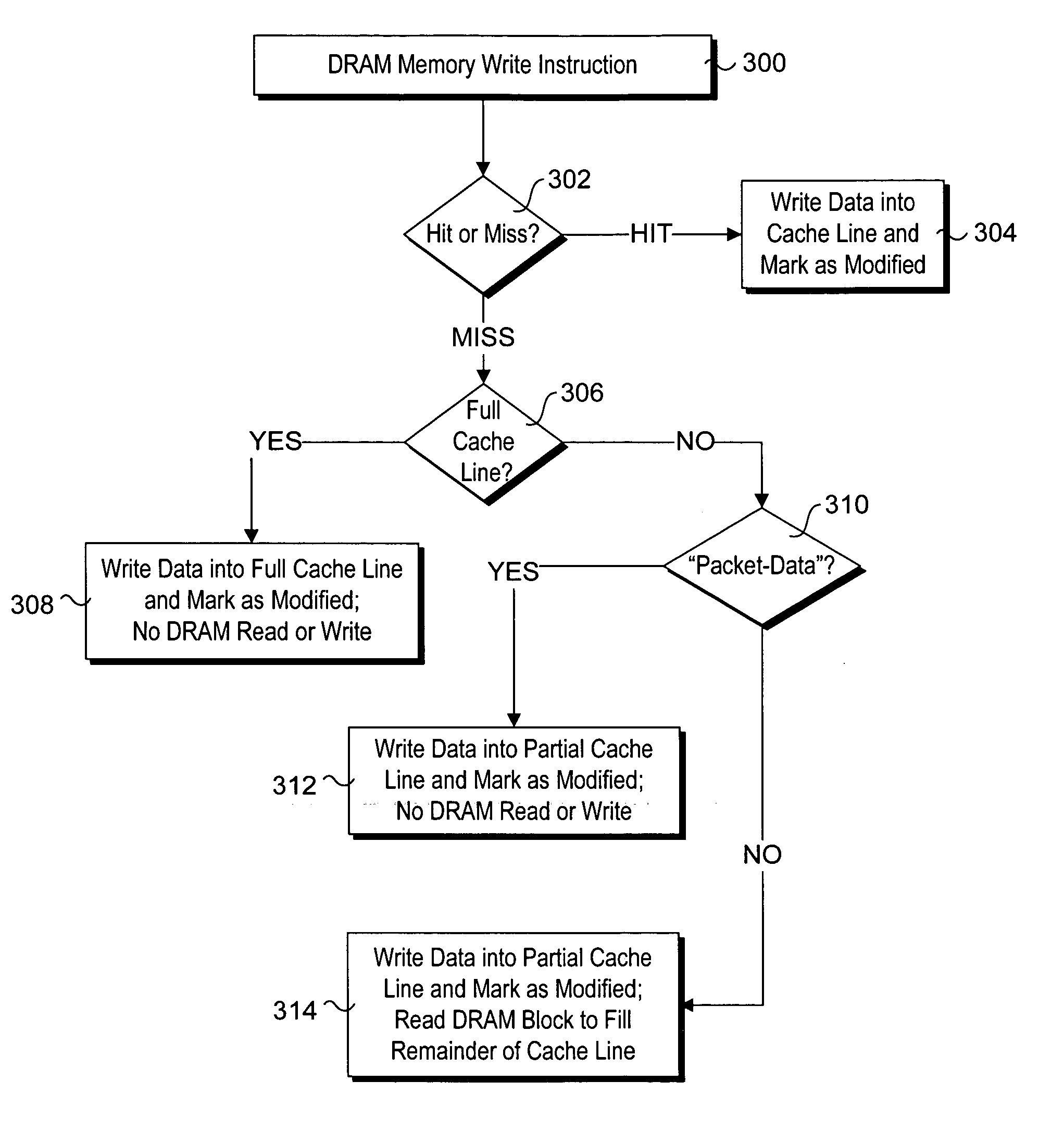

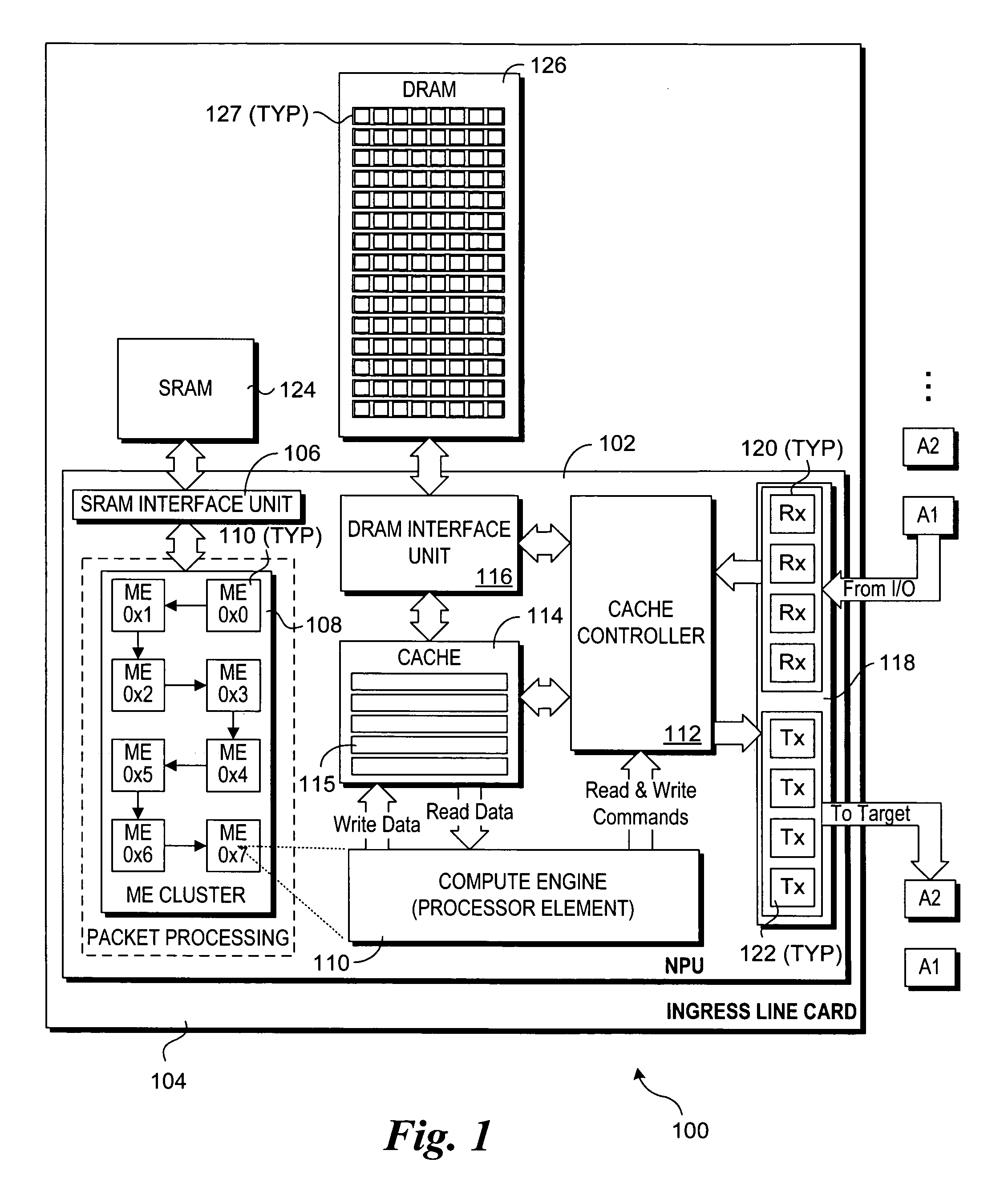

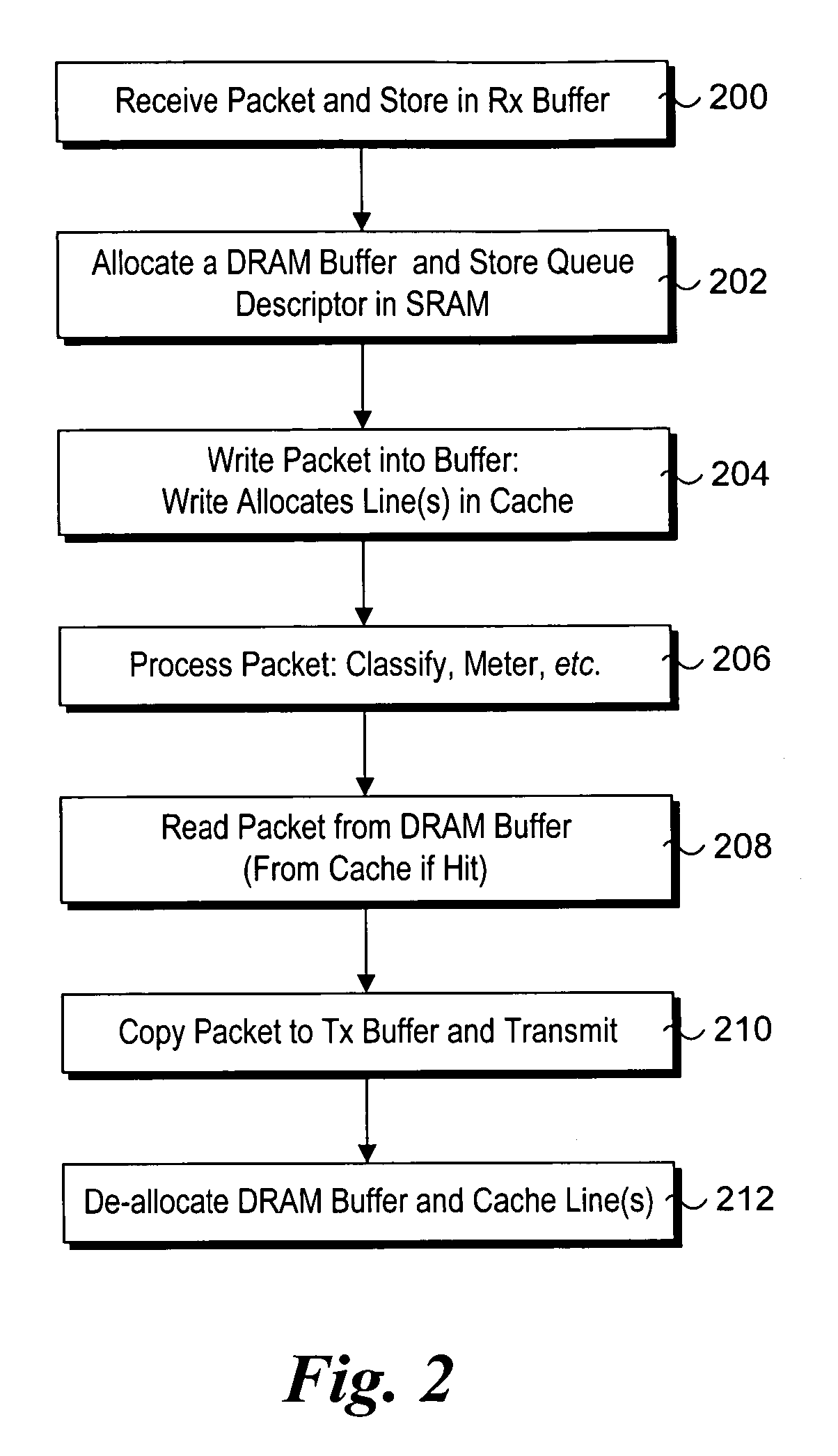

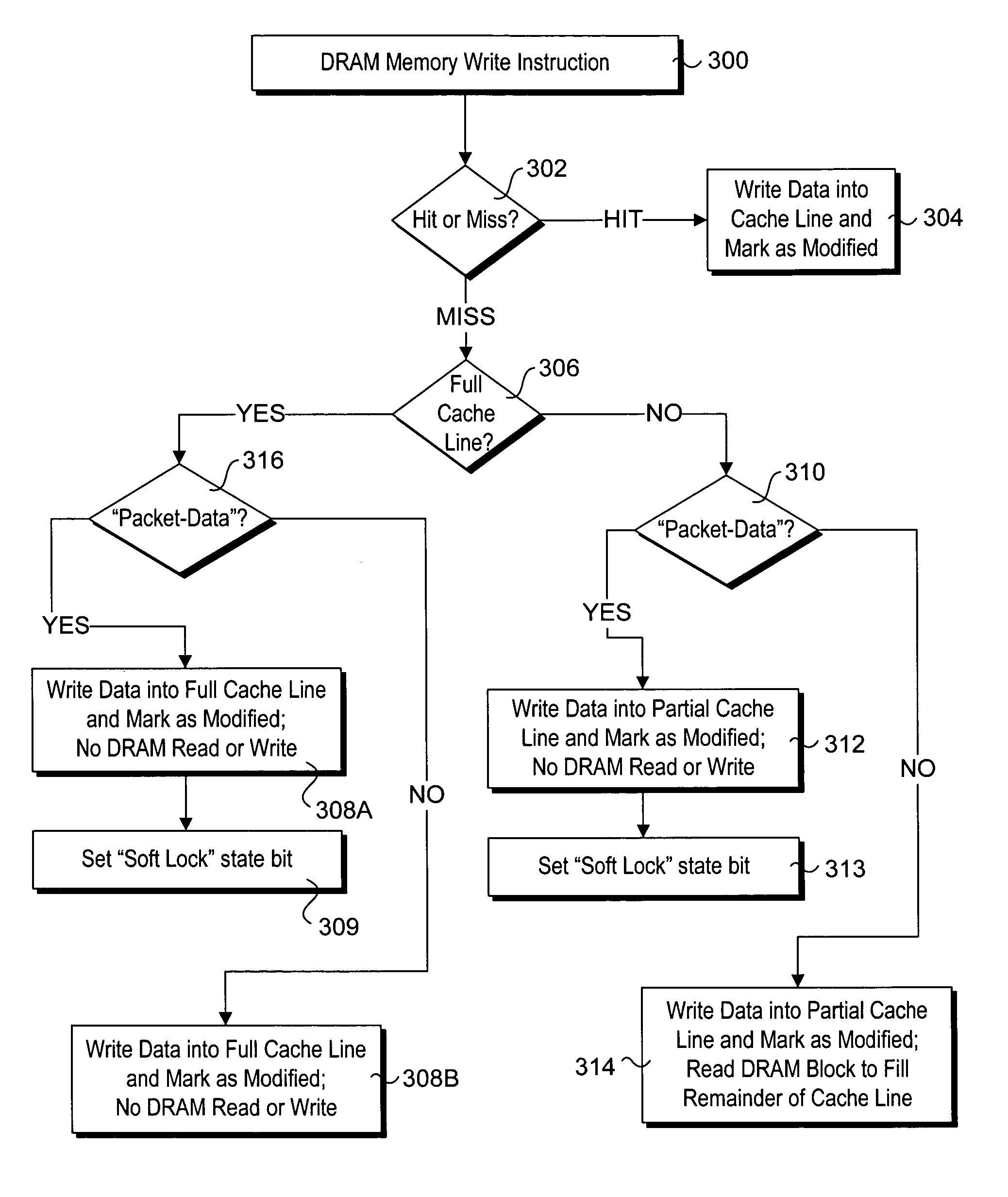

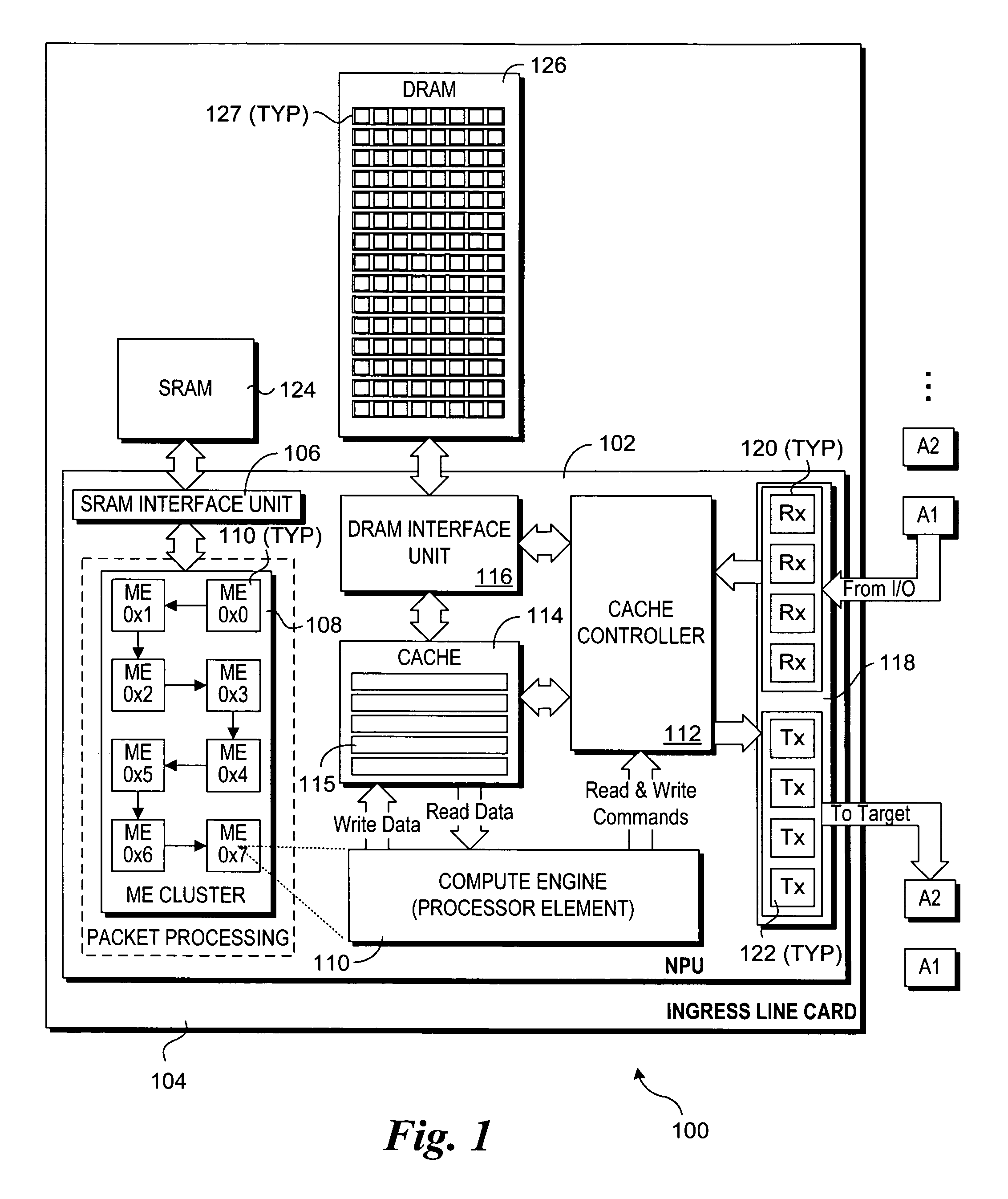

Instruction-assisted cache management for efficient use of cache and memory

Instruction-assisted cache management for efficient use of cache and memory. Hints (e.g., modifiers) are added to read and write memory access instructions to identify that the memory access is for temporal data. In view of such hints, alternative cache policy and allocation policies are implemented that minimize cache and memory access. Under one policy, a write cache miss may result in a write of data to a partial cache line without a memory read/write cycle to fill the remainder of the line. Under another policy, a read cache miss may result in a read from memory without allocating or writing the read data to a cache line. A cache line soft-lock mechanism is also disclosed, wherein cache lines may be selectably soft locked to indicate preference for keeping those cache lines over non-locked lines.

Owner:TAHOE RES LTD

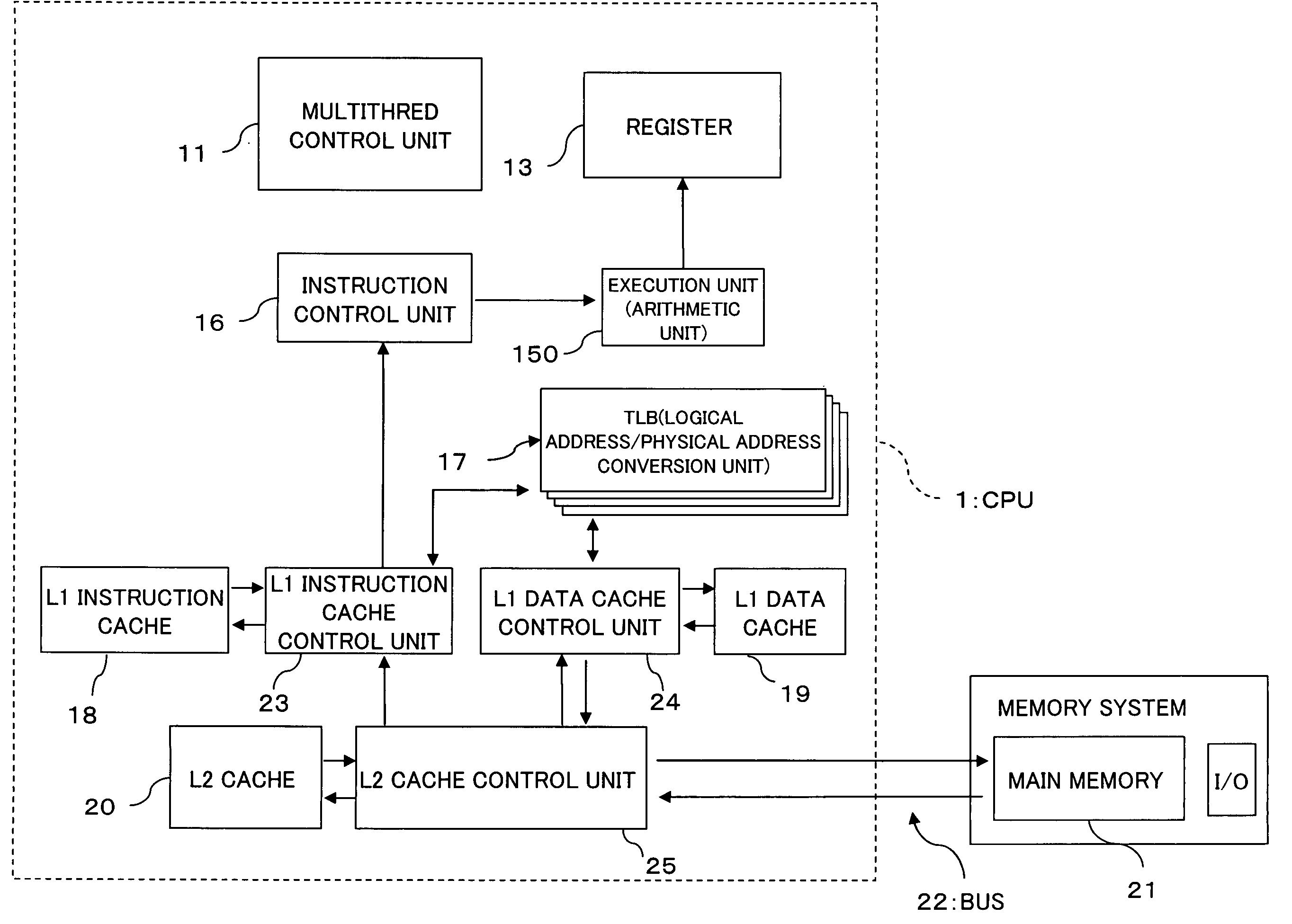

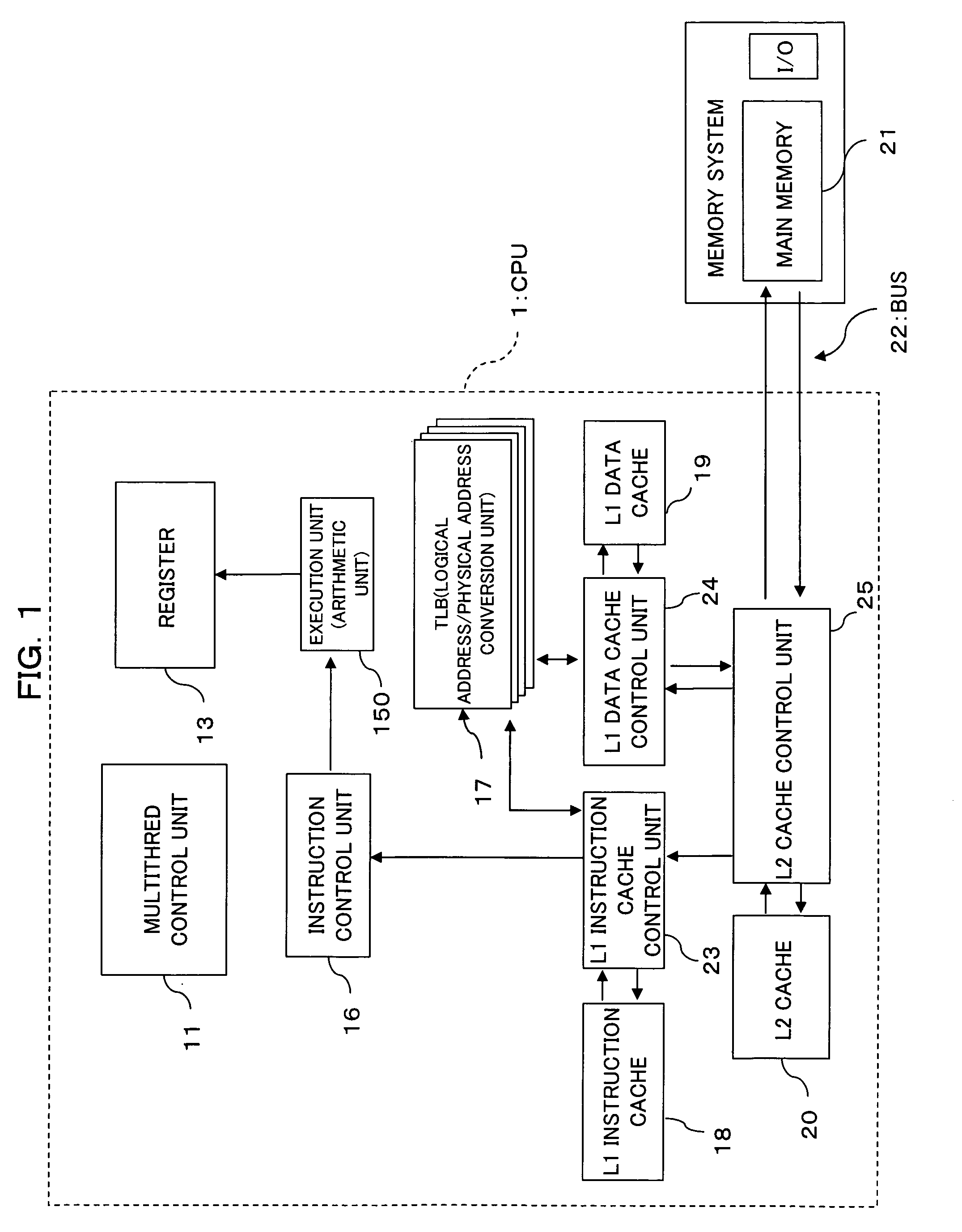

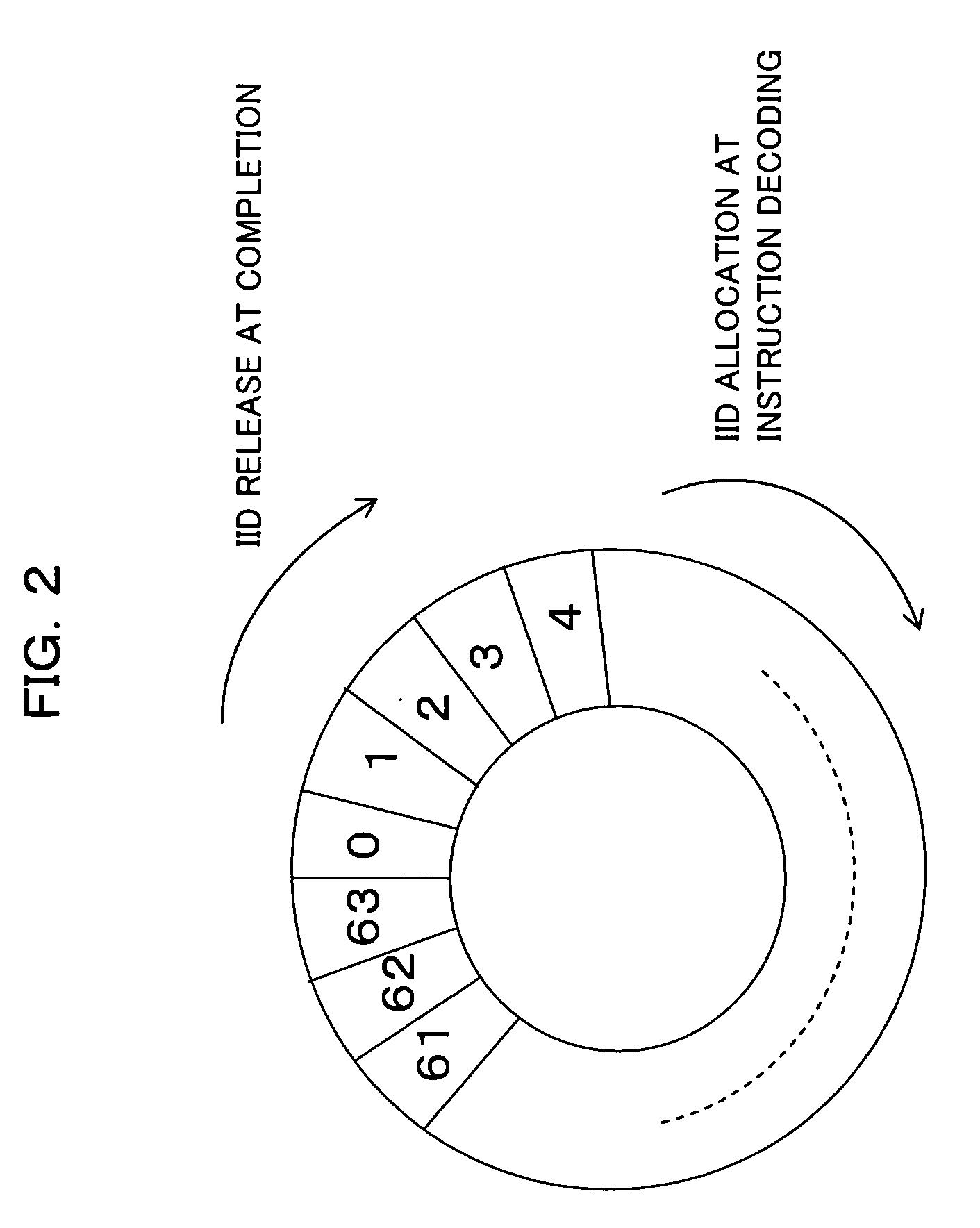

Multithread processor and thread switching control method

Active | US20060026594A1 | Reduce processing time | Improve efficiency | Program initiation/switching | Memory addressing/allocation/relocation | Control unit | Process rate

The present invention relates to a multithread processor. In the multithread processor, when a request related to an instruction suffers a cache miss in the cache at the lowest place in a hierarchy of caches, a cache control unit notifies a multithread control unit of the instruction identifier and the thread identifier related to that instruction. When a cache miss occurs on the instruction to be completed next, the multithread control unit switches between threads on the basis of the instruction identifier and thread identifier notified by the cache control unit. This enables effective thread switching, thus enhancing the processing speed.

Owner:FUJITSU LTD

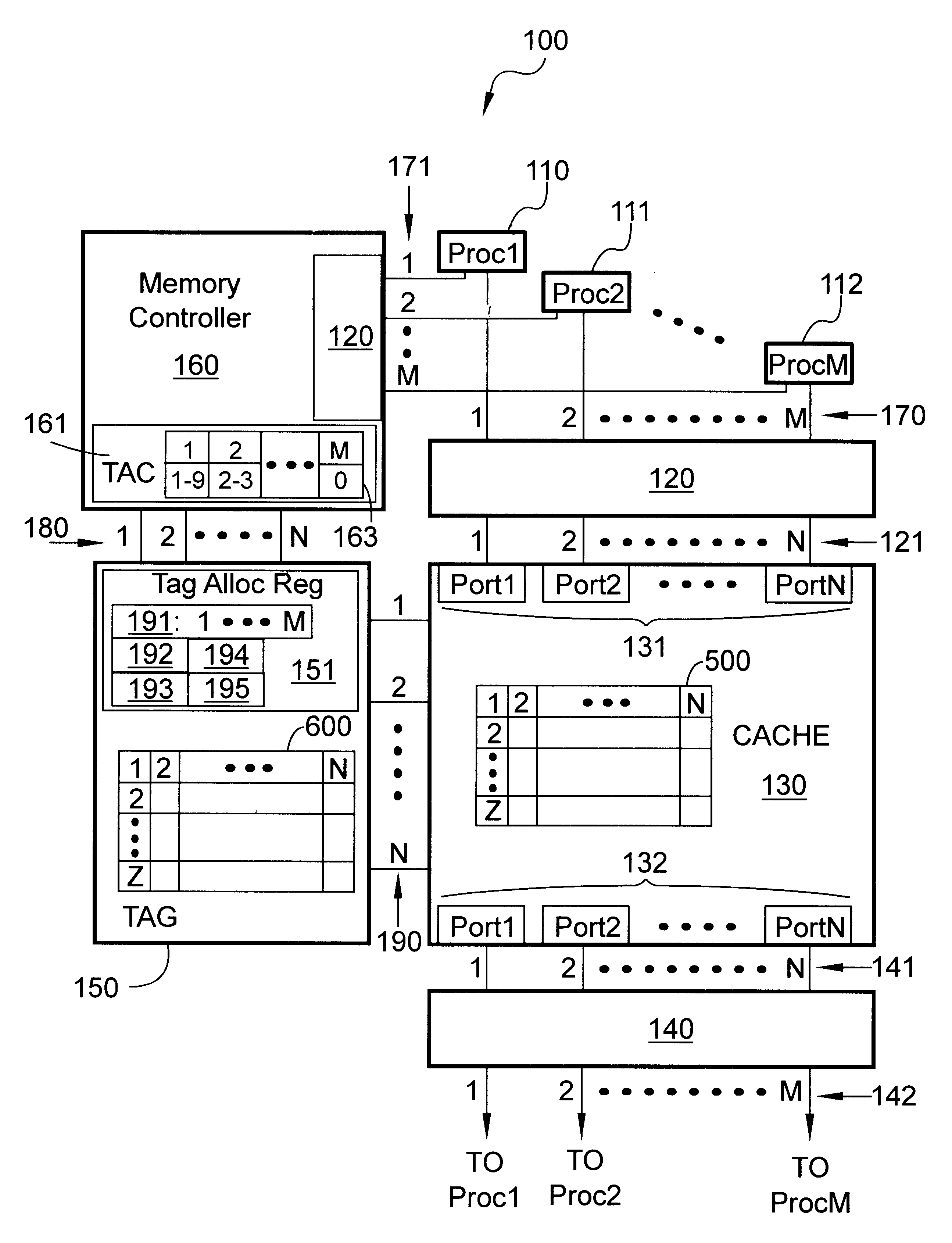

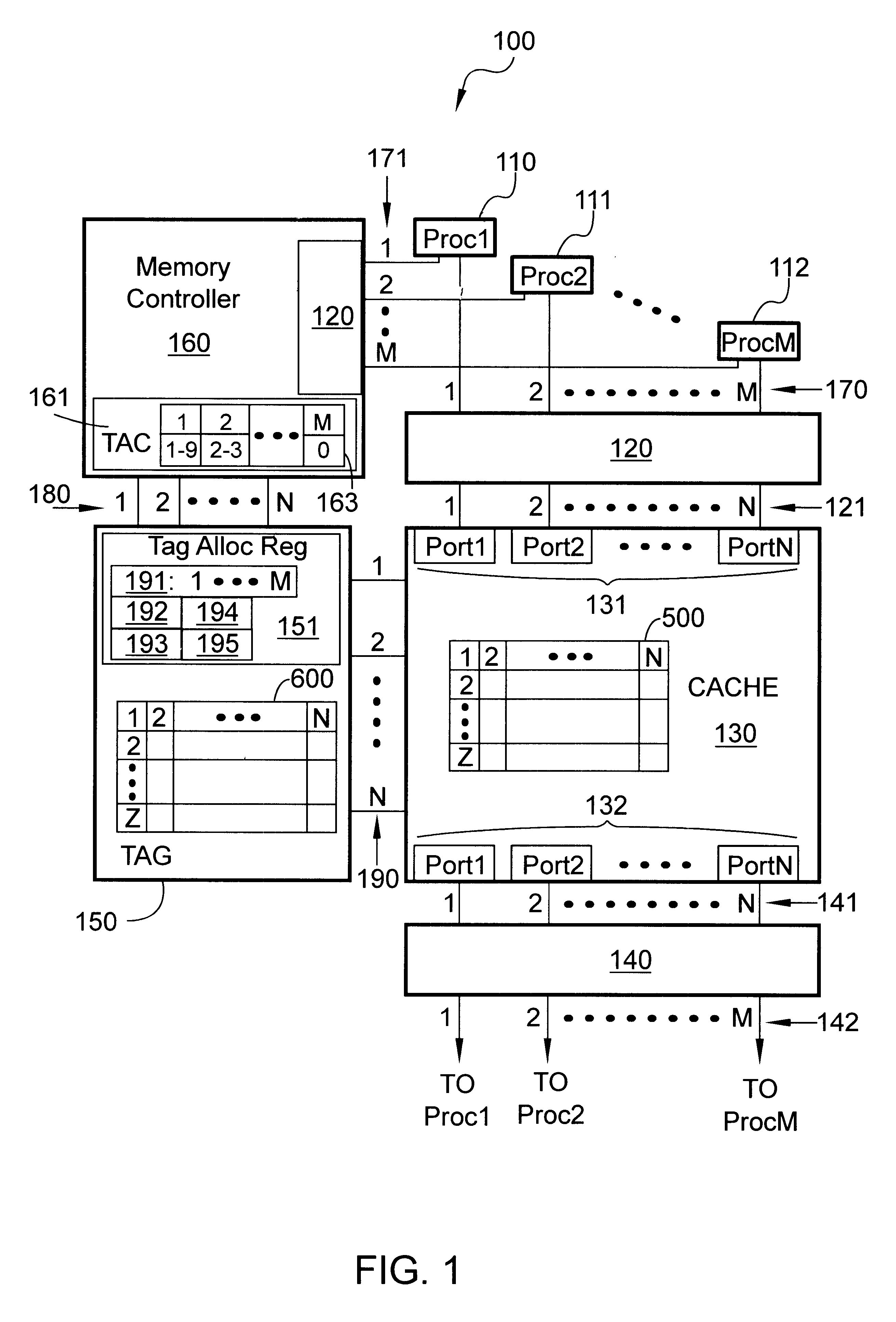

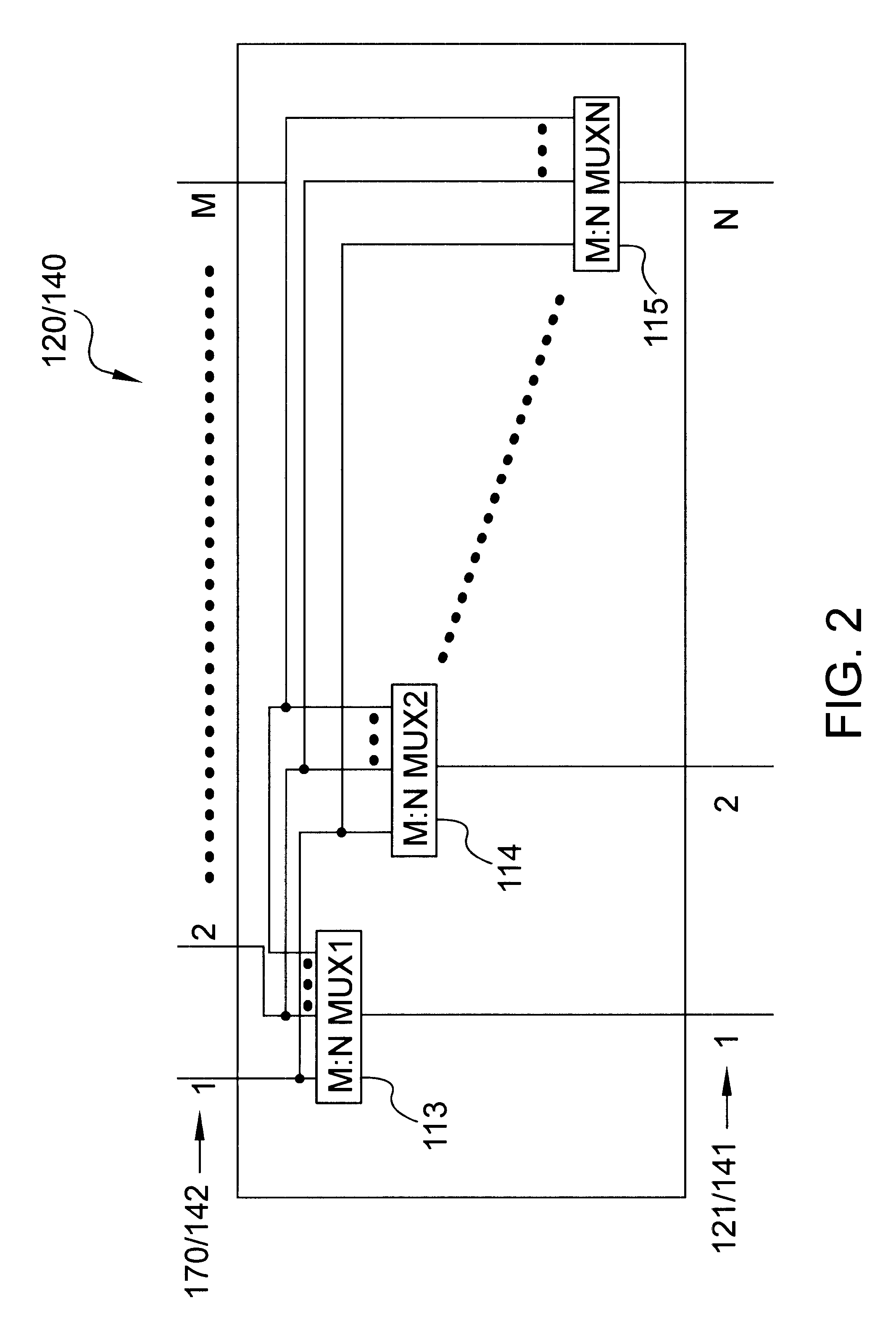

Performance based system and method for dynamic allocation of a unified multiport cache

Inactive | US6604174B1 | Memory architecture accessing/allocation | Memory addressing/allocation/relocation | Cache access | Parallel computing

The present invention provides a performance based system and method for dynamic allocation of a unified multiport cache. A multiport cache system is disclosed that allows multiple single-cycle look ups through a multiport tag and multiple single-cycle cache accesses from a multiport cache. Therefore, multiple processes, which could be processors, tasks, or threads can access the cache during any cycle. Moreover, the ways of the cache can be allocated to the different processes and then dynamically reallocated based on performance. Most preferably, a relational cache miss percentage is used to reallocate the ways, but other metrics may also be used.

Owner:GOOGLE LLC
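
One possible reading of performance-based way reallocation is sketched below. The abstract does not define the "relational cache miss percentage"; this sketch assumes it means each process's share of the total misses. It also assumes, purely for illustration, that one way moves per adjustment and that every process keeps at least one way.

```python
def reallocate_ways(ways, misses):
    """Move one cache way from the process with the smallest miss share
    to the process with the largest miss share (illustrative policy only).

    ways:   dict mapping process name -> number of ways currently allocated
    misses: dict mapping process name -> misses counted in the last interval
    """
    total = sum(misses[p] for p in ways)
    if total == 0:
        return dict(ways)                      # nothing to rebalance
    share = {p: misses[p] / total for p in ways}
    taker = max(share, key=share.get)
    # A donor must keep at least one way (assumption).
    donors = [p for p in ways if p != taker and ways[p] > 1]
    if not donors:
        return dict(ways)
    donor = min(donors, key=share.get)
    updated = dict(ways)
    updated[donor] -= 1
    updated[taker] += 1
    return updated
```

Calling this once per sampling interval shifts capacity gradually toward whichever process is missing most, which is one simple way to realize reallocation "based on performance".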

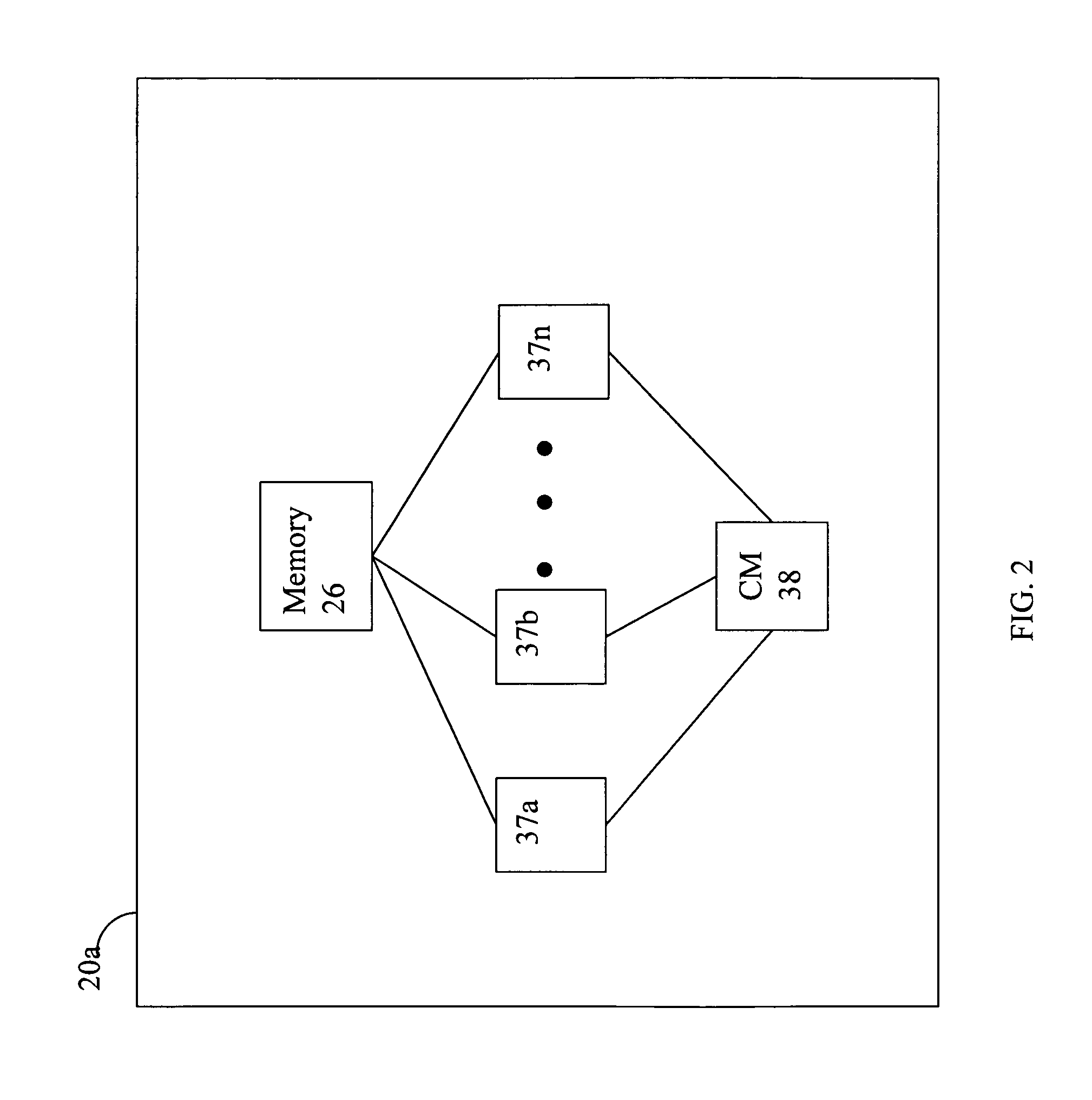

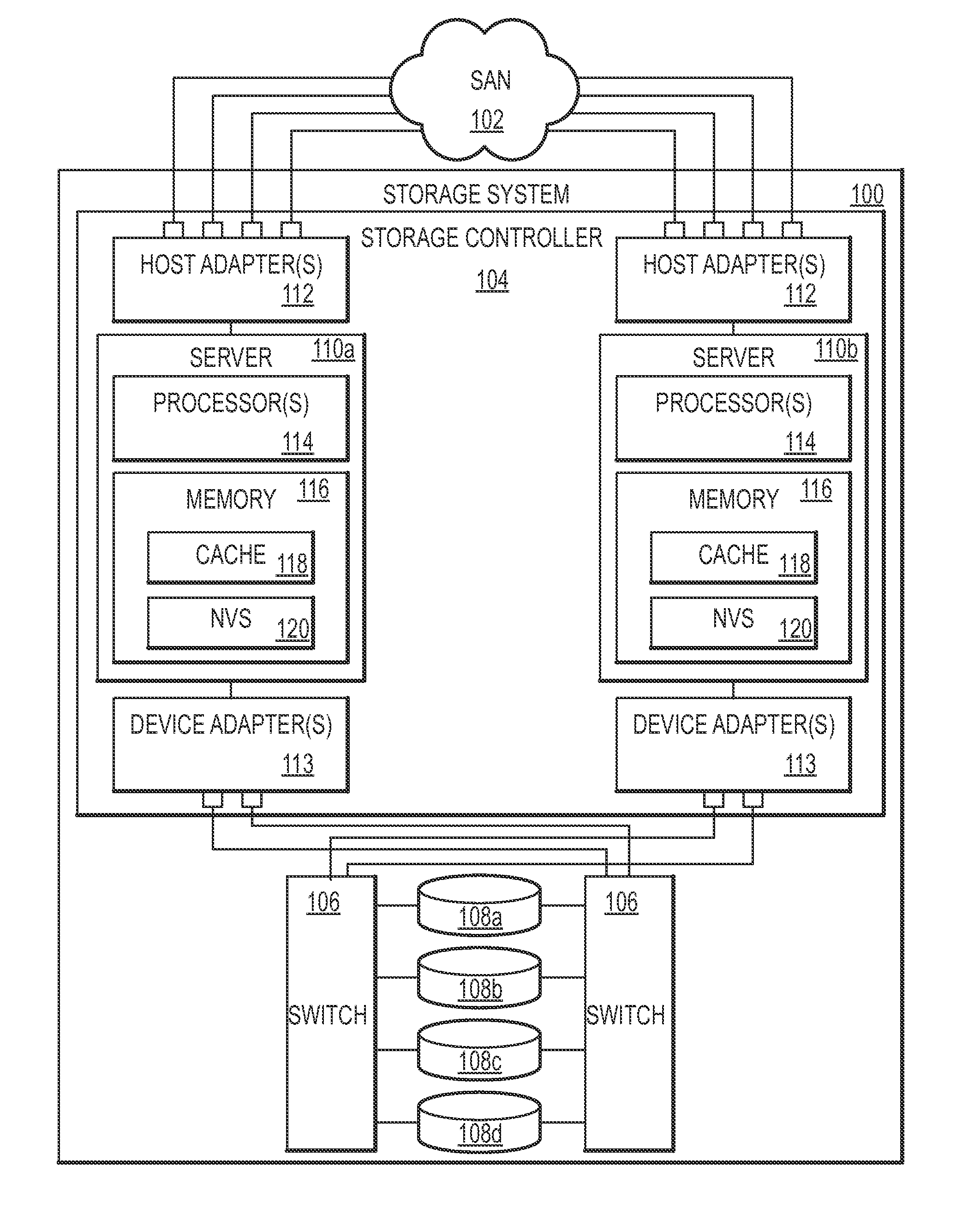

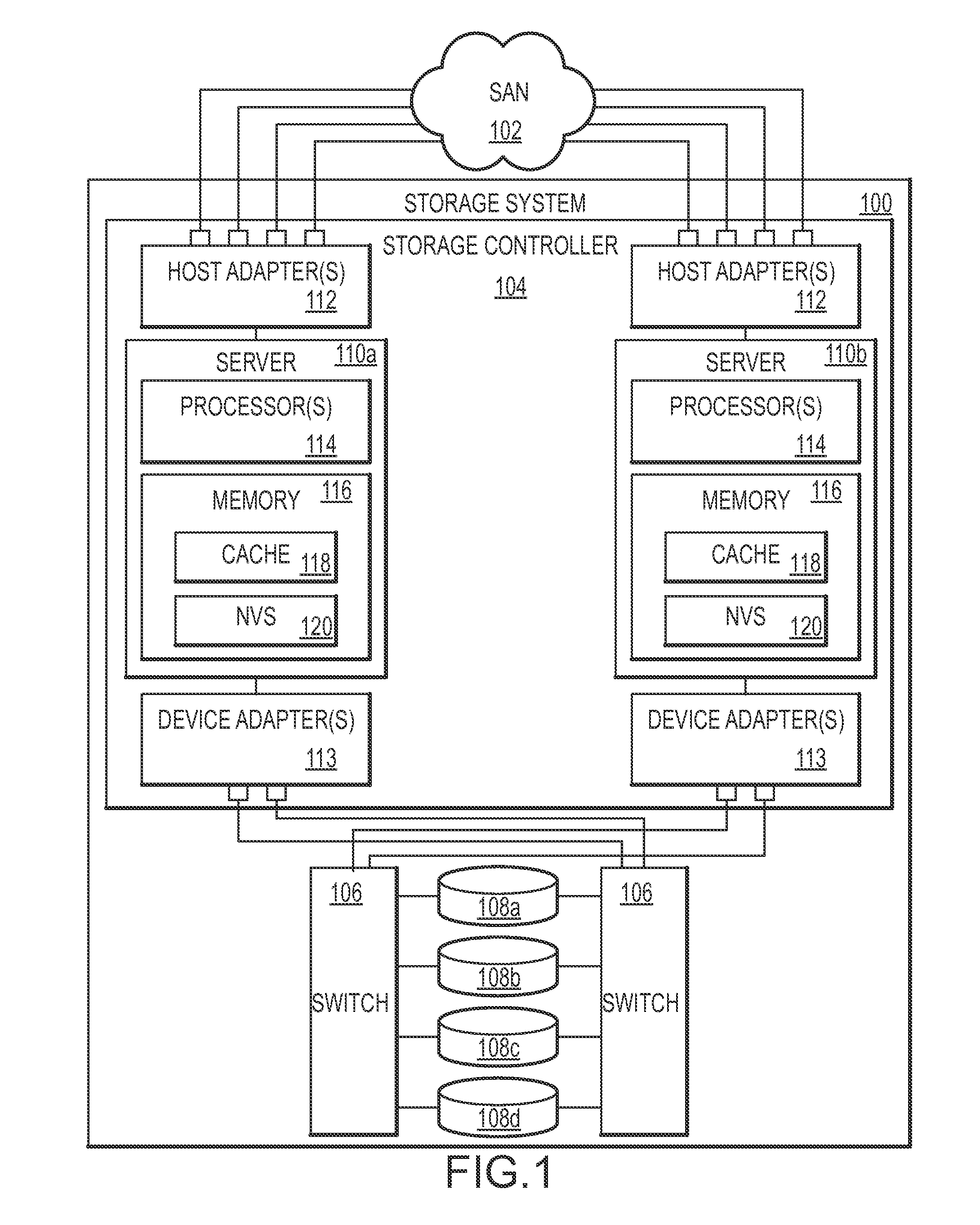

Dynamic management of destage tasks in a storage controller

Active | US20110191534A1 | Desired overall occupancy of the NVS | Extension of time | Memory architecture accessing/allocation | Memory addressing/allocation/relocation | Occupancy rate | Dynamic management

Method, system, and computer program product embodiments for facilitating data transfer from a write cache and NVS via a device adapter to a pool of storage devices by a processor or processors are provided. The processor(s) adaptively varies the destage rate based on the current occupancy of the NVS for a particular storage device and stage activity related to that storage device. The stage activity includes one or more of the storage device stage activity, device adapter stage activity, device adapter utilized bandwidth and the read/write speed of the storage device. These factors are generally associated with read response time in the event of a cache miss and not ordinarily associated with dynamic management of the destage rate. This combination maintains the desired overall occupancy of the NVS while improving response time performance.

Owner:IBM CORP

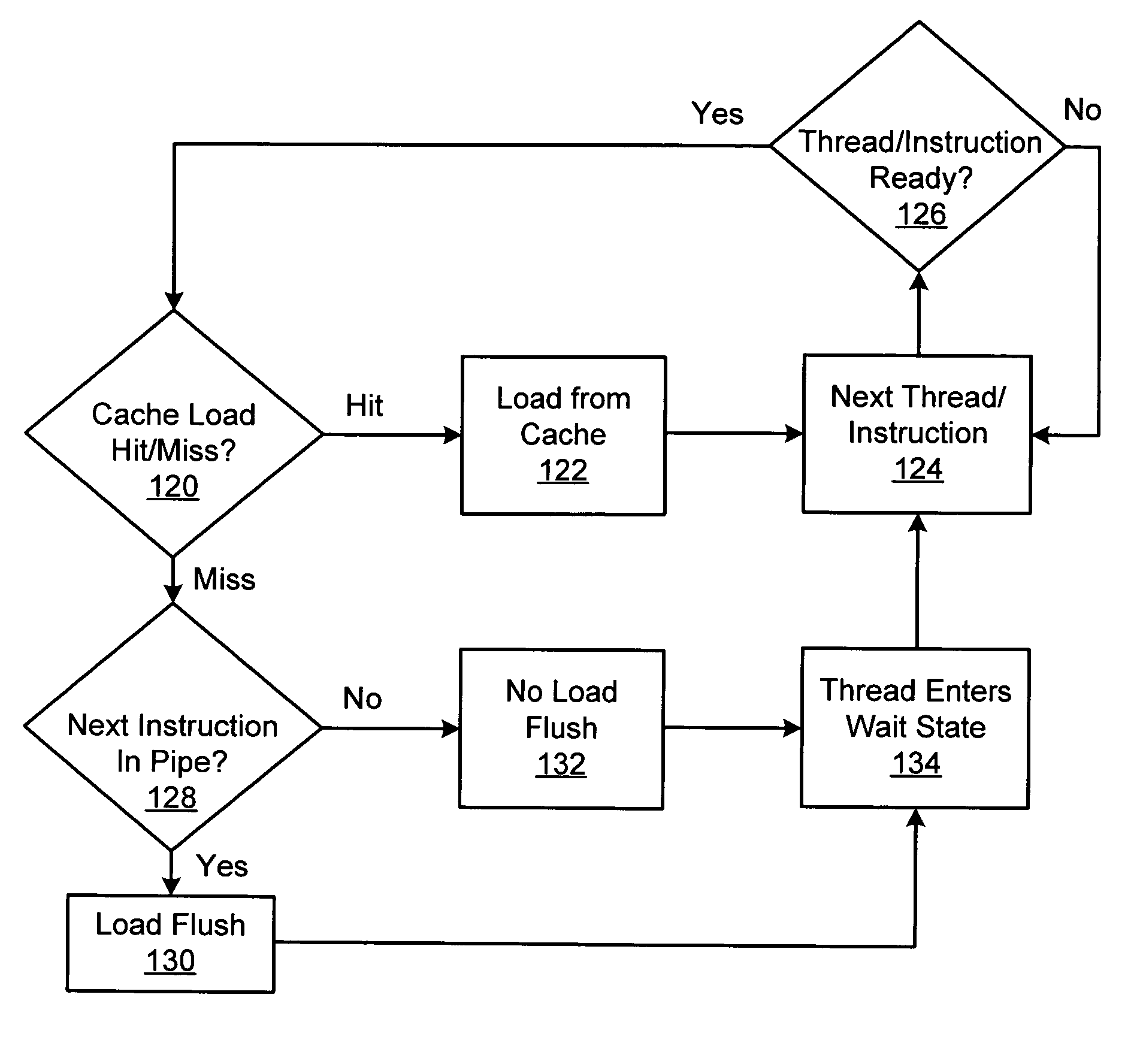

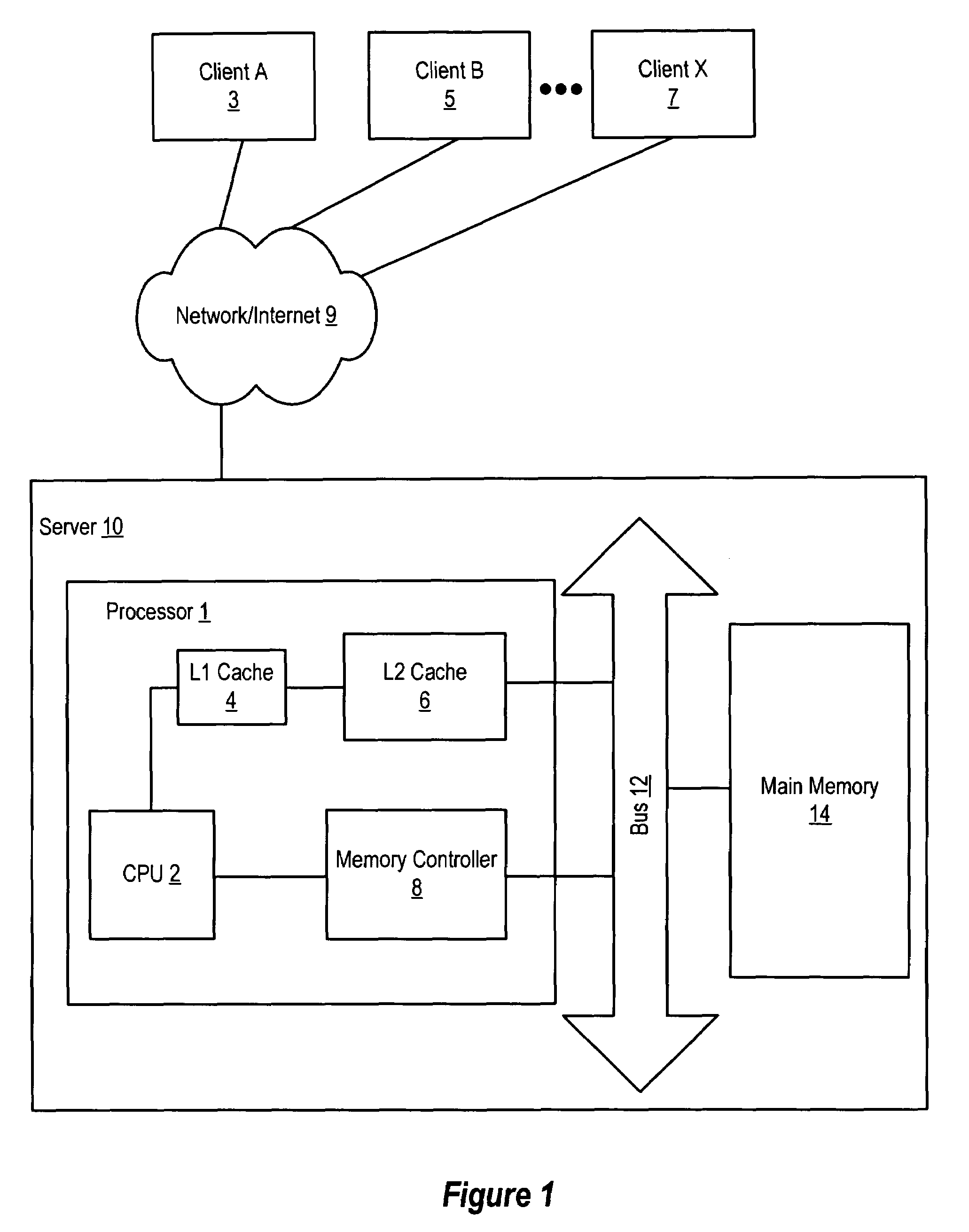

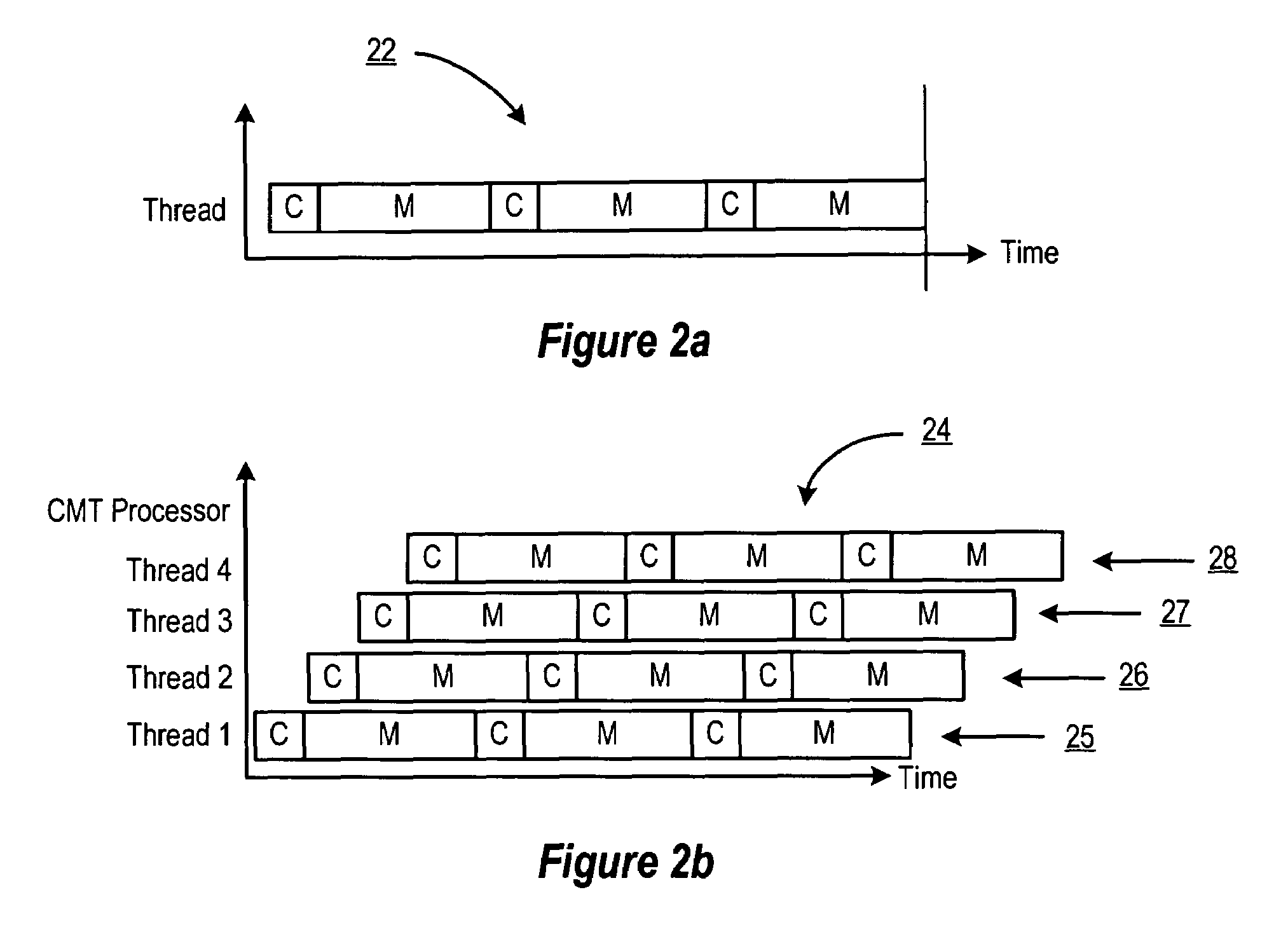

Handling cache misses by selectively flushing the pipeline

Active | US7509484B1 | Eliminate operation | High bandwidth | Digital computer details | Specific program execution arrangements | Load instruction | Parallel computing

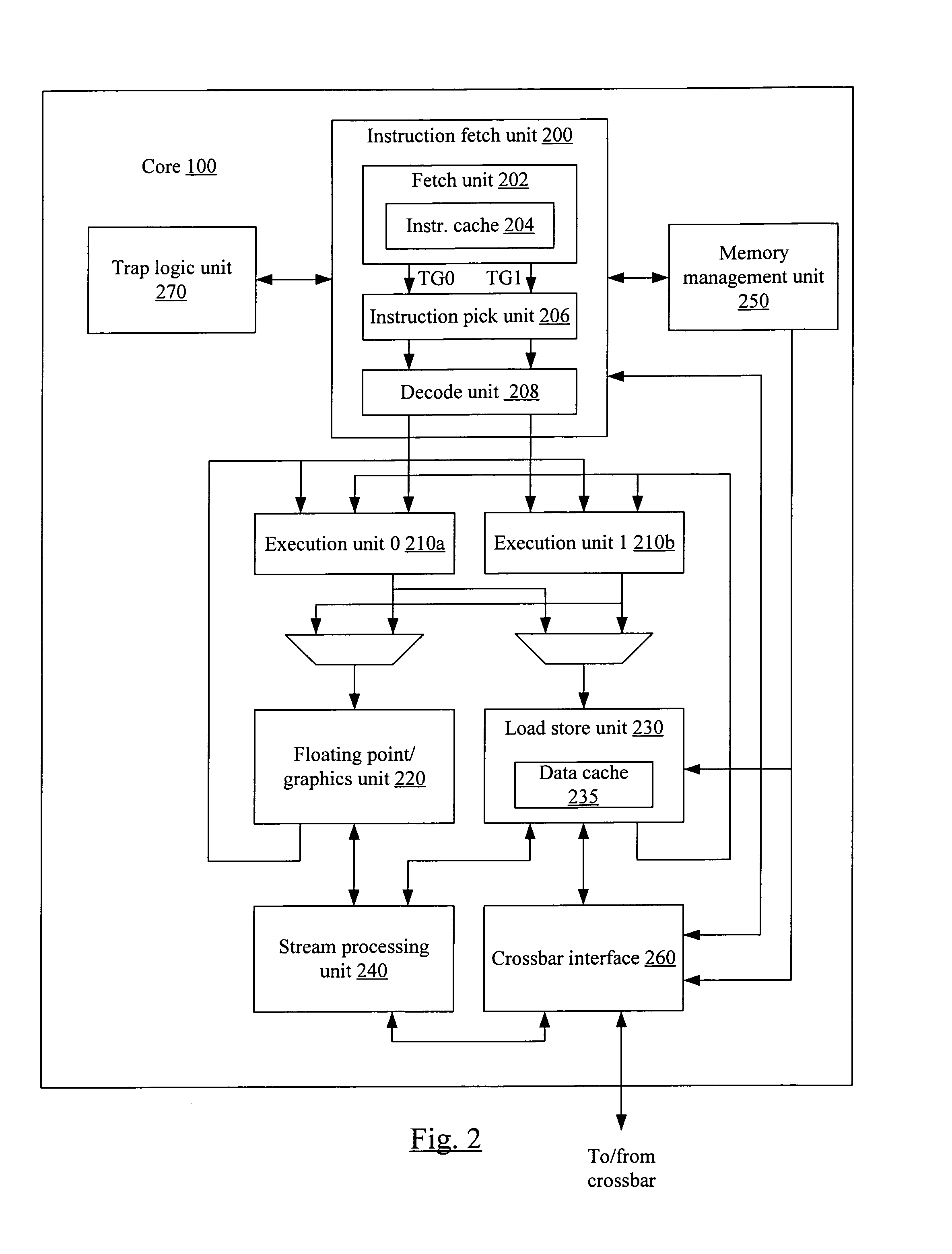

An apparatus and method for efficiently managing data cache load misses is described in connection with a multithreaded, pipelined multiprocessor chip. A CMT processor keeps track of load misses for each thread by issuing a load miss signal each time a load instruction to the data cache misses. A detection logic functionality in the IFU responds to the load miss signal to determine if a valid instruction from the thread is at one of the pipeline stages. If no instructions from the thread are detected in the pipeline, then no flush is required and the thread is placed in a wait state until the requested data is returned from higher order memory. If any instruction from the thread is detected in the pipeline, the thread is flushed and the instruction is re-fetched.

Owner:ORACLE INT CORP

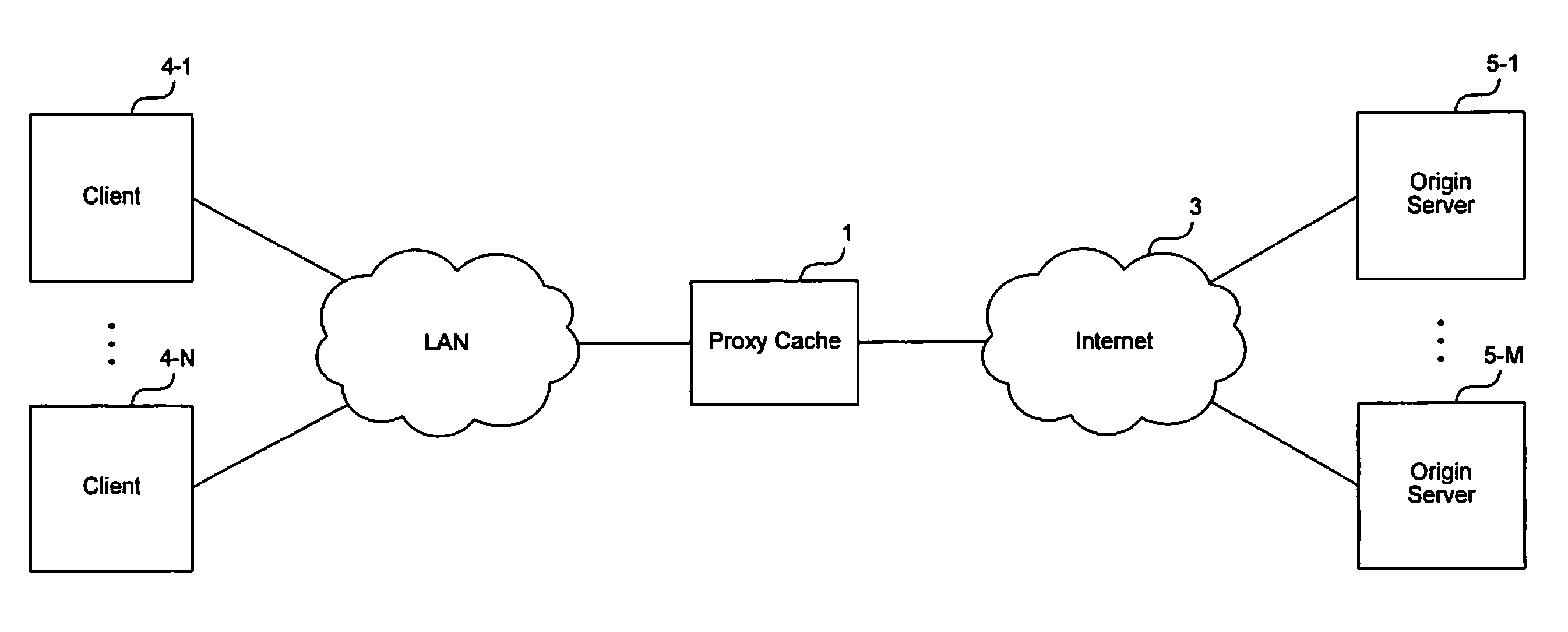

Method and apparatus for forwarding requests in a cache hierarchy based on user-defined forwarding rules

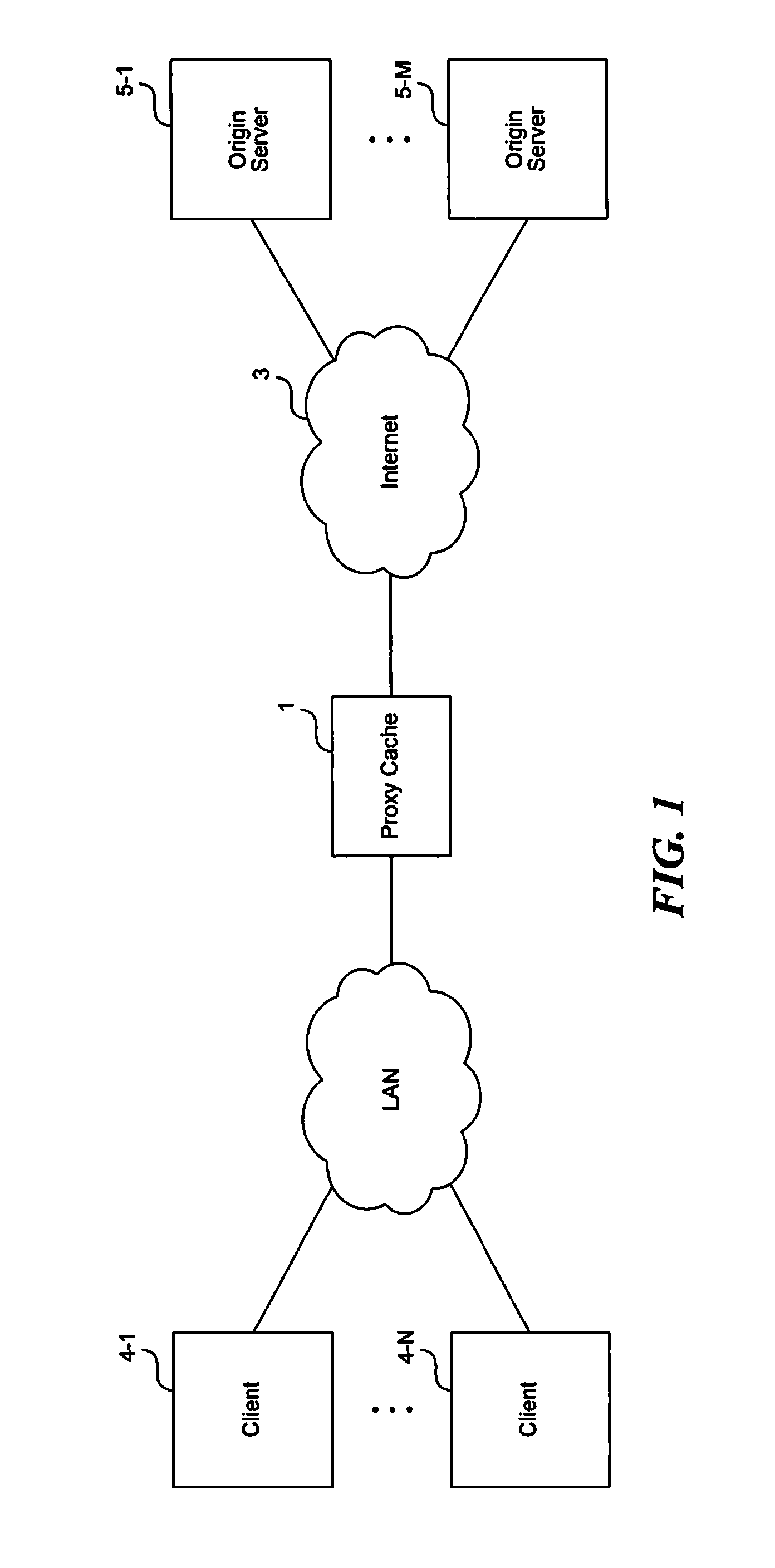

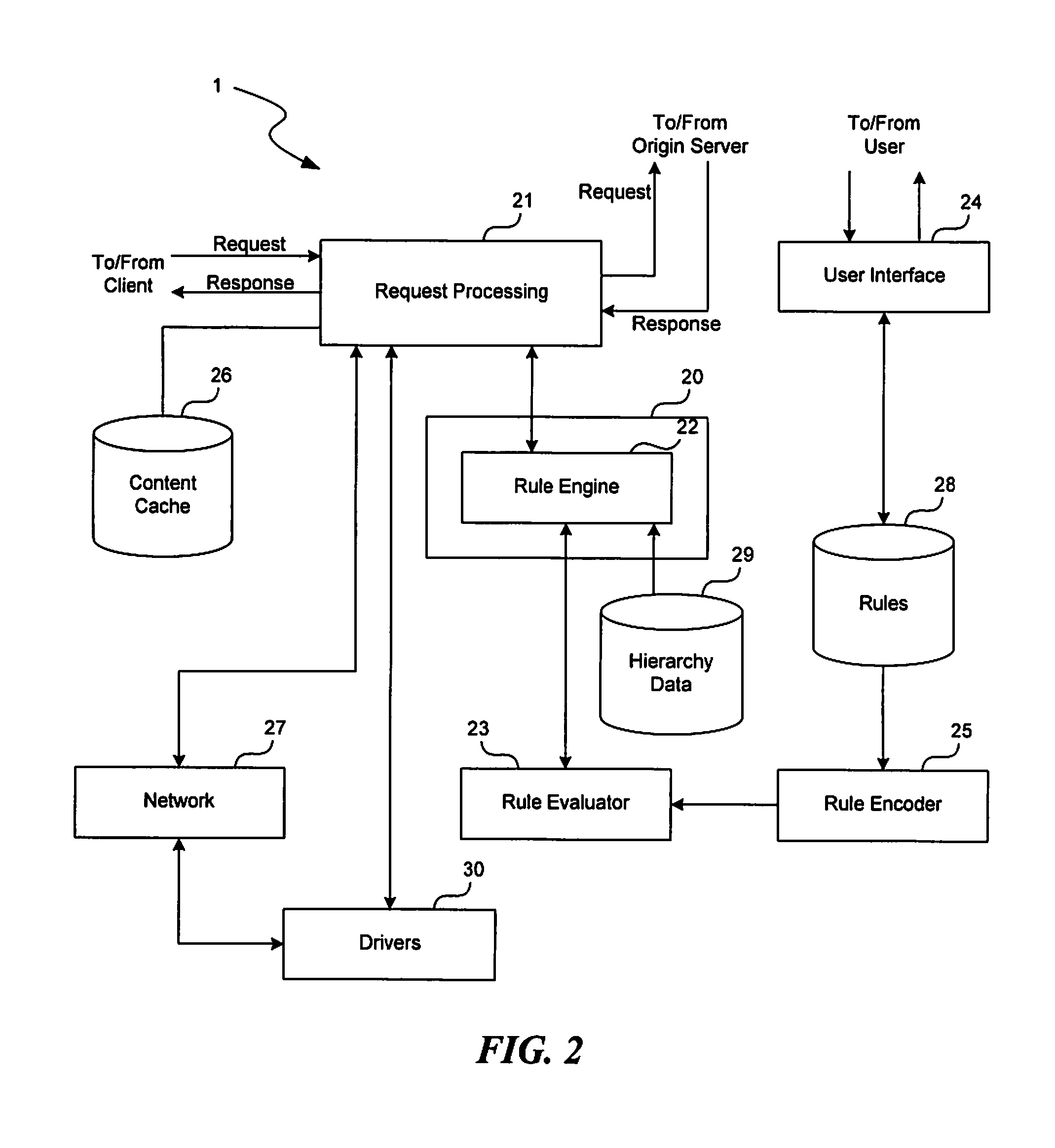

A method and apparatus for forwarding requests in a cache hierarchy based on user-defined forwarding rules are described. A proxy cache on a network provides a user interface that enables a user to define a set of forwarding rules for controlling the forwarding of content requests within a cache hierarchy. When the proxy cache receives a content request from a client and the request produces a cache miss, the proxy cache examines the rules sequentially to determine whether any of the user-defined rules applies to the request. If a rule is found to apply, the proxy cache identifies one or more forwarding destinations from the rule and determines the availability of the destinations. The proxy cache then forwards the request to an available destination according to the applicable rule.

Owner:NETWORK APPLIANCE INC
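
The sequential rule evaluation in this abstract might look roughly like the sketch below. The rule format (a shell-style host pattern paired with an ordered list of destinations), the `is_available` callback, and the choice to return `None` when no destination of the matching rule is reachable are assumptions; the abstract does not specify them.

```python
from fnmatch import fnmatchcase


def pick_destination(request_host, rules, is_available):
    """Return the forwarding destination for a request that missed in the proxy cache.

    rules:        ordered list of (host_pattern, [destinations]) pairs
    is_available: callable reporting whether a destination can currently be reached
    """
    for pattern, destinations in rules:         # rules are examined in order
        if fnmatchcase(request_host, pattern):  # first applicable rule wins
            for destination in destinations:
                if is_available(destination):
                    return destination
            return None                         # rule applied, nothing reachable
    return None                                 # no rule applied
```

For instance, with rules `[("*.example.com", ["parent-a", "parent-b"]), ("*", ["origin"])]`, a miss for `img.example.com` is forwarded to `parent-b` whenever `parent-a` is reported as unavailable.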

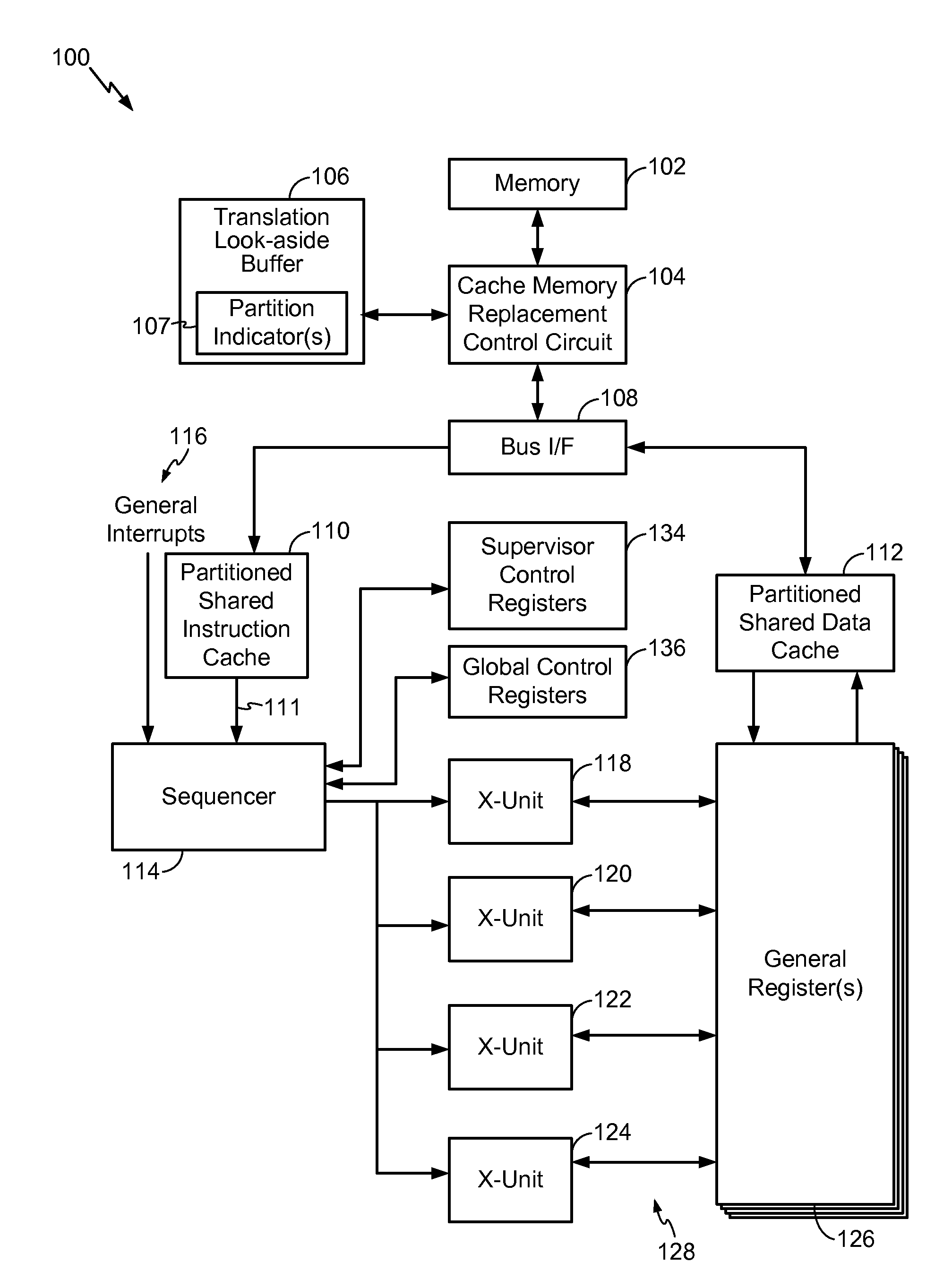

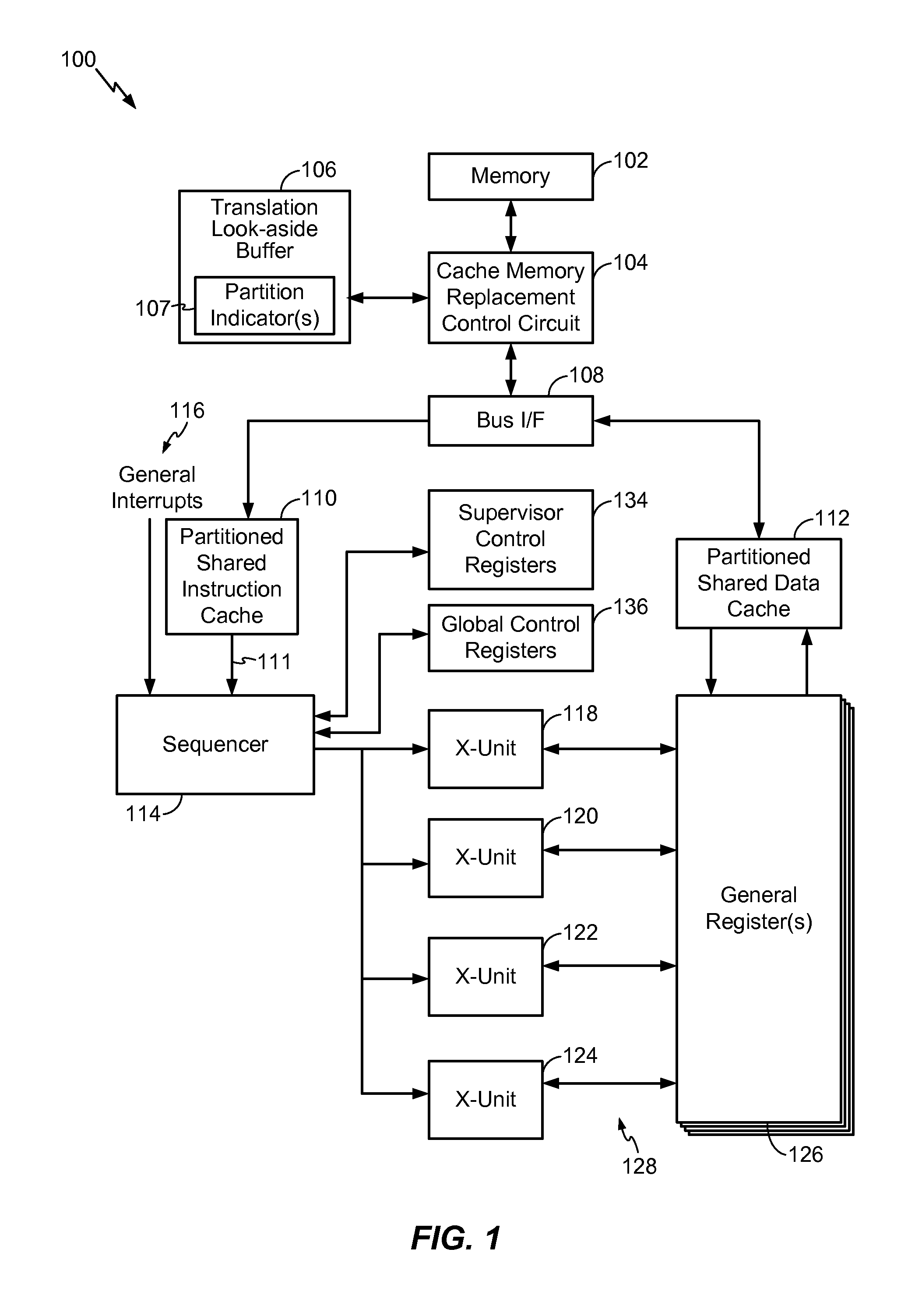

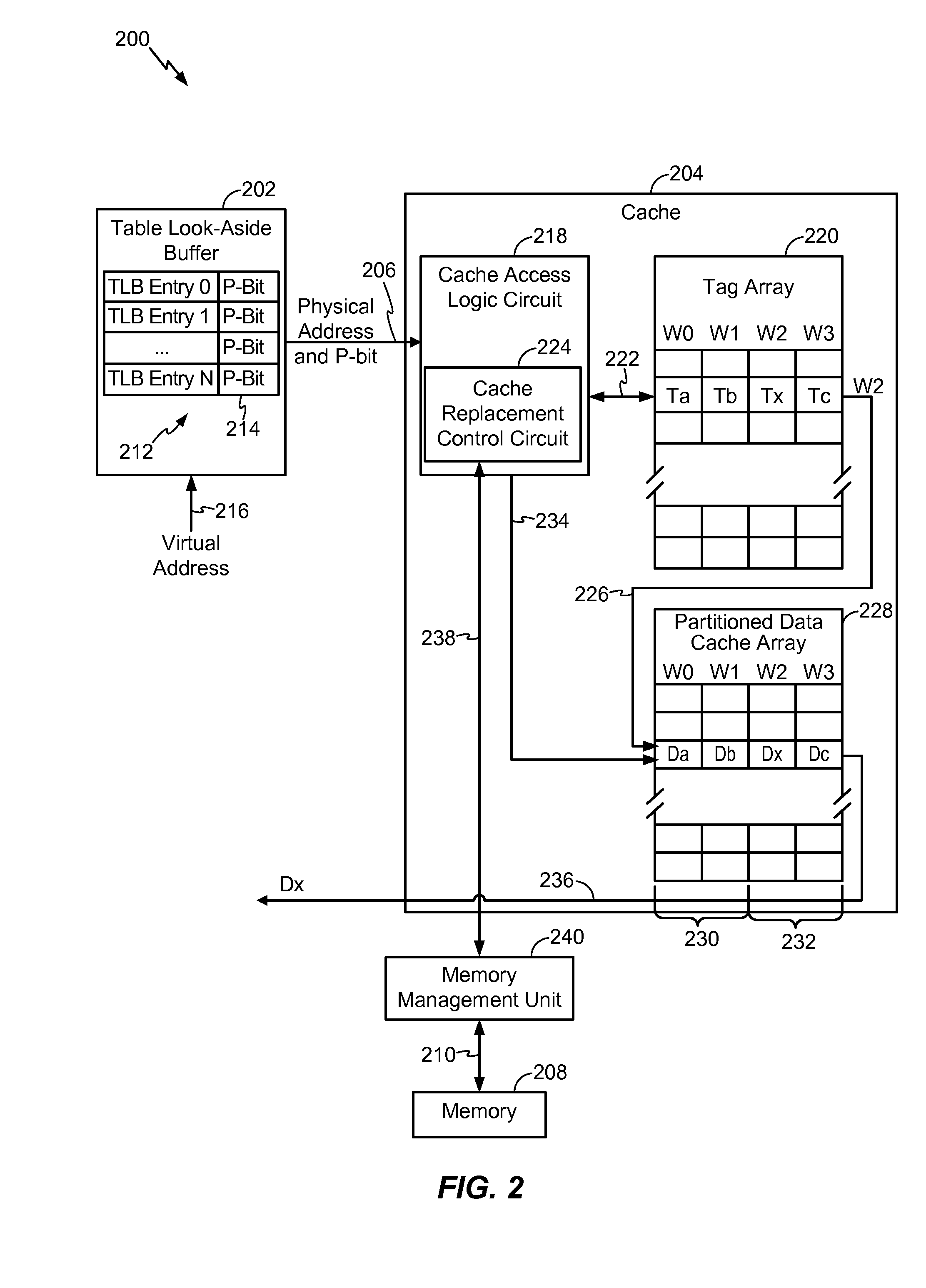

Partitioned Replacement For Cache Memory

Inactive | US20100318742A1 | Guaranteed service quality | Replacement is limited | Digital data processing details | Memory addressing/allocation/relocation | Operating system | Control logic

In a particular embodiment, a circuit device includes a translation look-aside buffer (TLB) configured to receive a virtual address and to translate the virtual address to a physical address of a cache having at least two partitions. The circuit device also includes a control logic circuit adapted to identify a partition replacement policy associated with the identified one of the at least two partitions based on a partition indicator. The control logic circuit controls replacement of data within the cache according to the identified partition replacement policy in response to a cache miss event.

Owner:QUALCOMM INC

Multiple variable cache replacement policy

A method for selecting a candidate to mark as overwritable in the event of a cache miss while attempting to avoid a write back operation. The method includes associating a set of data with the cache access request, each datum of the set is associated with a way, then choosing an invalid way among the set. Where no invalid ways exist among the set, the next step is determining a way that is not most recently used among the set. Next, the method determines whether a shared resource is crowded. When the shared resource is not crowded, the not most recently used way is chosen as the candidate. Where the shared resource is crowded, the next step is to determine whether the not most recently used way differs from an associated source in the memory and where the not most recently used way is the same as an associated source in the memory, the not most recently used way is chosen as the candidate. Where the not most recently used way differs from an associated source in the memory, the candidate is chosen as the way among the set that does not differ from an associated source in the memory. Where all ways among the set differ from respective sources in the memory, the not most recently used way is chosen as the candidate and the not most recently used way is stored in the shared resource.

Owner:SUN MICROSYSTEMS INC
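
The decision sequence in this abstract can be traced with a short sketch. The `Way` fields, the function name, and the returned write-back flag are hypothetical. The sketch also assumes that a way which "differs from an associated source in the memory" is a dirty line, and that a dirty victim needs a write back even when the shared resource is not crowded.

```python
from dataclasses import dataclass


@dataclass
class Way:
    valid: bool
    dirty: bool  # True when the line differs from its source in memory
    mru: bool    # True for the most recently used way of the set


def choose_victim(ways, resource_crowded):
    """Return (way_index, needs_write_back) for a cache miss in this set."""
    # 1. Prefer an invalid way: nothing is lost by overwriting it.
    for i, way in enumerate(ways):
        if not way.valid:
            return i, False
    # 2. Otherwise find a way that is not the most recently used (fallback: way 0).
    nmru = next((i for i, way in enumerate(ways) if not way.mru), 0)
    # 3. If the shared resource has room, take the NMRU way as it is.
    if not resource_crowded:
        return nmru, ways[nmru].dirty
    # 4. Resource is crowded: a clean NMRU way avoids the write back.
    if not ways[nmru].dirty:
        return nmru, False
    # 5. NMRU is dirty: look for any clean way in the set instead.
    for i, way in enumerate(ways):
        if not way.dirty:
            return i, False
    # 6. Every way is dirty: fall back to the NMRU way and accept the write back.
    return nmru, True
```

The ordering reflects the stated goal: a write back is only accepted when no invalid or clean candidate exists, or when the shared resource has room to absorb it.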

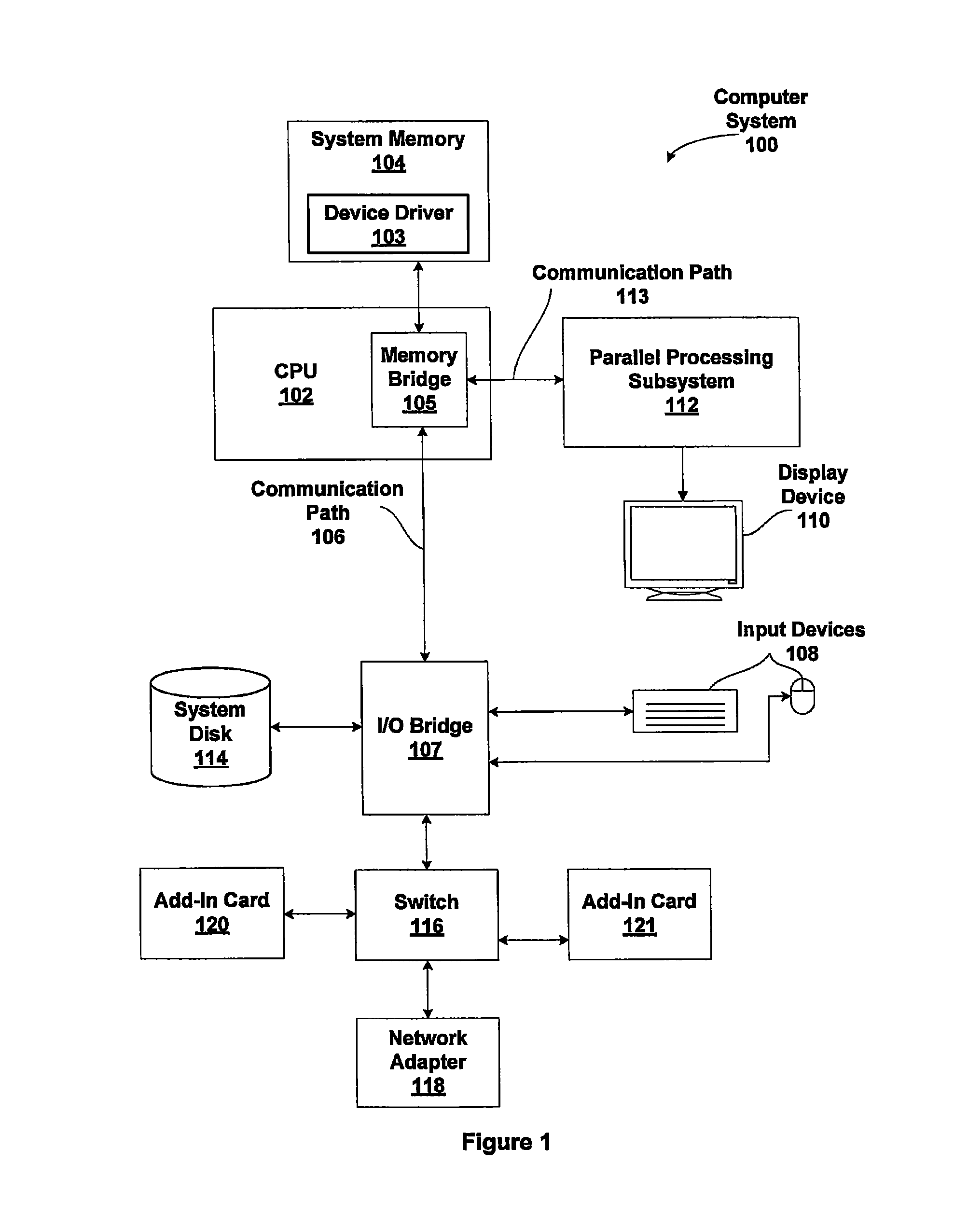

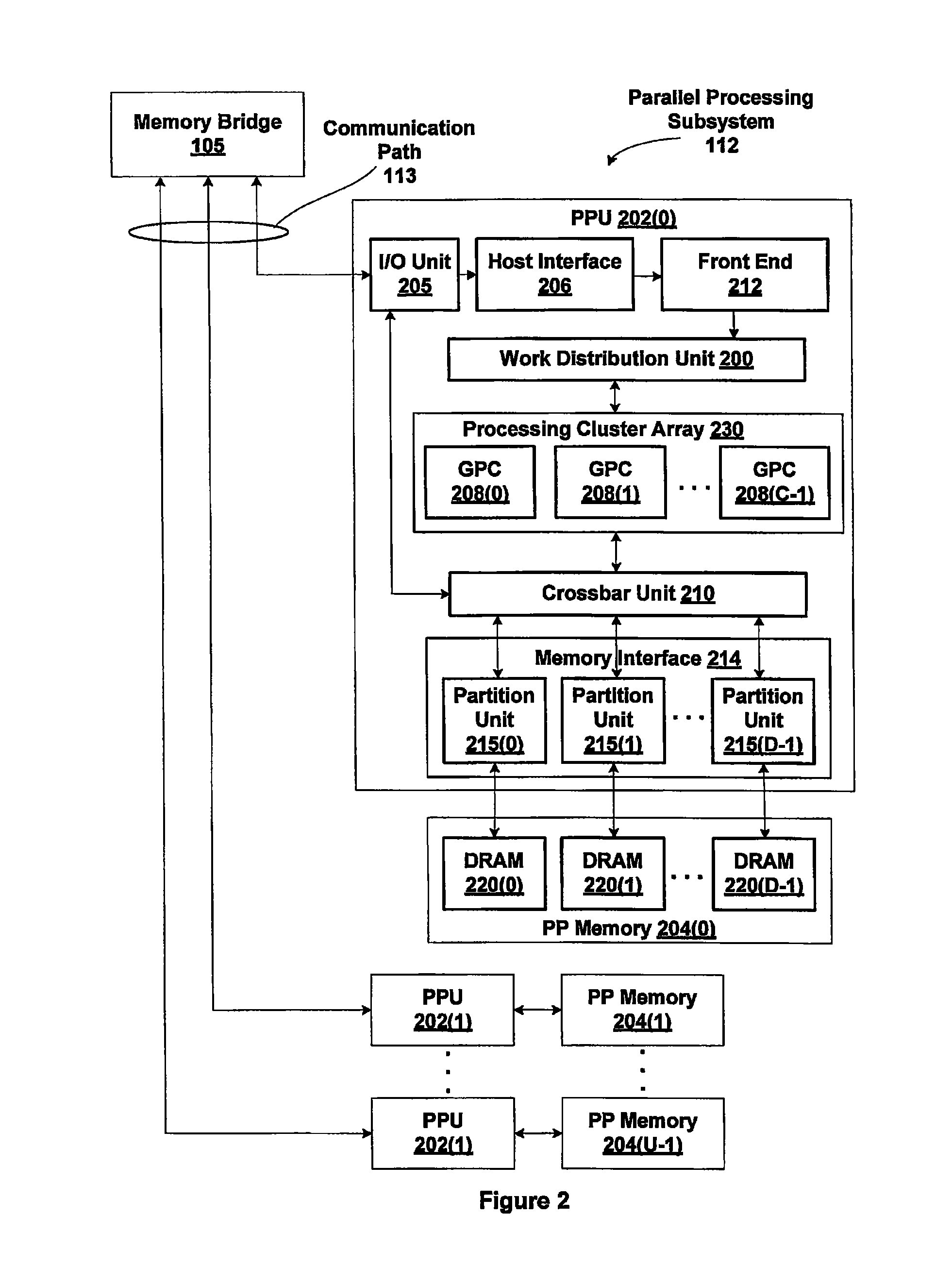

Efficient line and page organization for compression status bit caching

Active | US20110087840A1 | Efficient access | Memory architecture accessing/allocation | Memory addressing/allocation/relocation | Page table | Virtual address space

One embodiment of the present invention sets forth a technique for performing a memory access request to compressed data within a virtually mapped memory system comprising an arbitrary number of partitions. A virtual address is mapped to a linear physical address, specified by a page table entry (PTE). The PTE is configured to store compression attributes, which are used to locate compression status for a corresponding physical memory page within a compression status bit cache. The compression status bit cache operates in conjunction with a compression status bit backing store. If compression status is available from the compression status bit cache, then the memory access request proceeds using the compression status. If the compression status bit cache misses, then the miss triggers a fill operation from the backing store. After the fill completes, memory access proceeds using the newly filled compression status information.

Owner:NVIDIA CORP

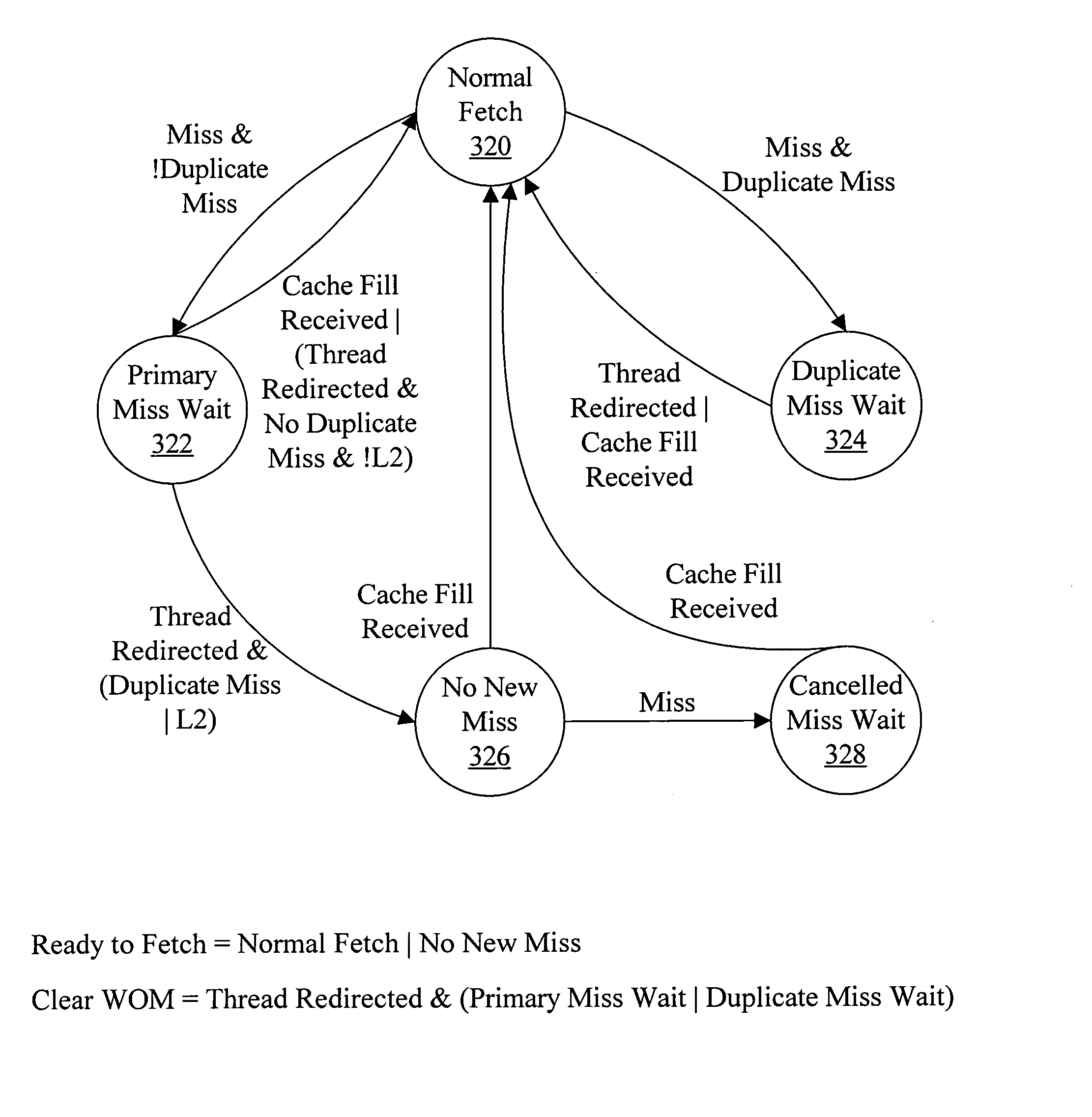

Handling duplicate cache misses in a multithreaded/multi-core processor

In one embodiment, a processor comprises a cache and a cache miss unit coupled to the cache. The cache miss unit is configured to initiate a cache fill of a cache line for the cache responsive to a first cache miss in the cache, wherein the first cache miss corresponds to a first thread of a plurality of threads in execution by the processor. Furthermore, the cache miss unit is configured to record an additional cache miss corresponding to a second thread of the plurality of threads, wherein the additional cache miss occurs in the cache prior to the cache fill completing for the cache line. The cache miss unit is configured to inhibit initiating an additional cache fill responsive to the additional cache miss.

Owner:ORACLE INT CORP
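
The behaviour of the cache miss unit described above resembles merging misses on a line that is already being filled. A minimal sketch follows; the class and method names are hypothetical and the hardware is reduced to a dictionary of pending line addresses.

```python
class CacheMissUnit:
    """Tracks outstanding cache fills and merges duplicate misses (illustrative)."""

    def __init__(self):
        self.pending = {}      # line address -> thread ids waiting on the fill
        self.fills_issued = 0

    def on_miss(self, line_addr, thread_id):
        """Record a miss; return True only if a new cache fill was initiated."""
        if line_addr in self.pending:
            # A fill for this line is already in flight: record the waiter only.
            self.pending[line_addr].append(thread_id)
            return False
        self.pending[line_addr] = [thread_id]
        self.fills_issued += 1
        return True

    def on_fill_complete(self, line_addr):
        """Return the threads to wake once the line has arrived."""
        return self.pending.pop(line_addr, [])
```

Two threads missing on the same line therefore cost one fill, and both are released when that fill completes.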

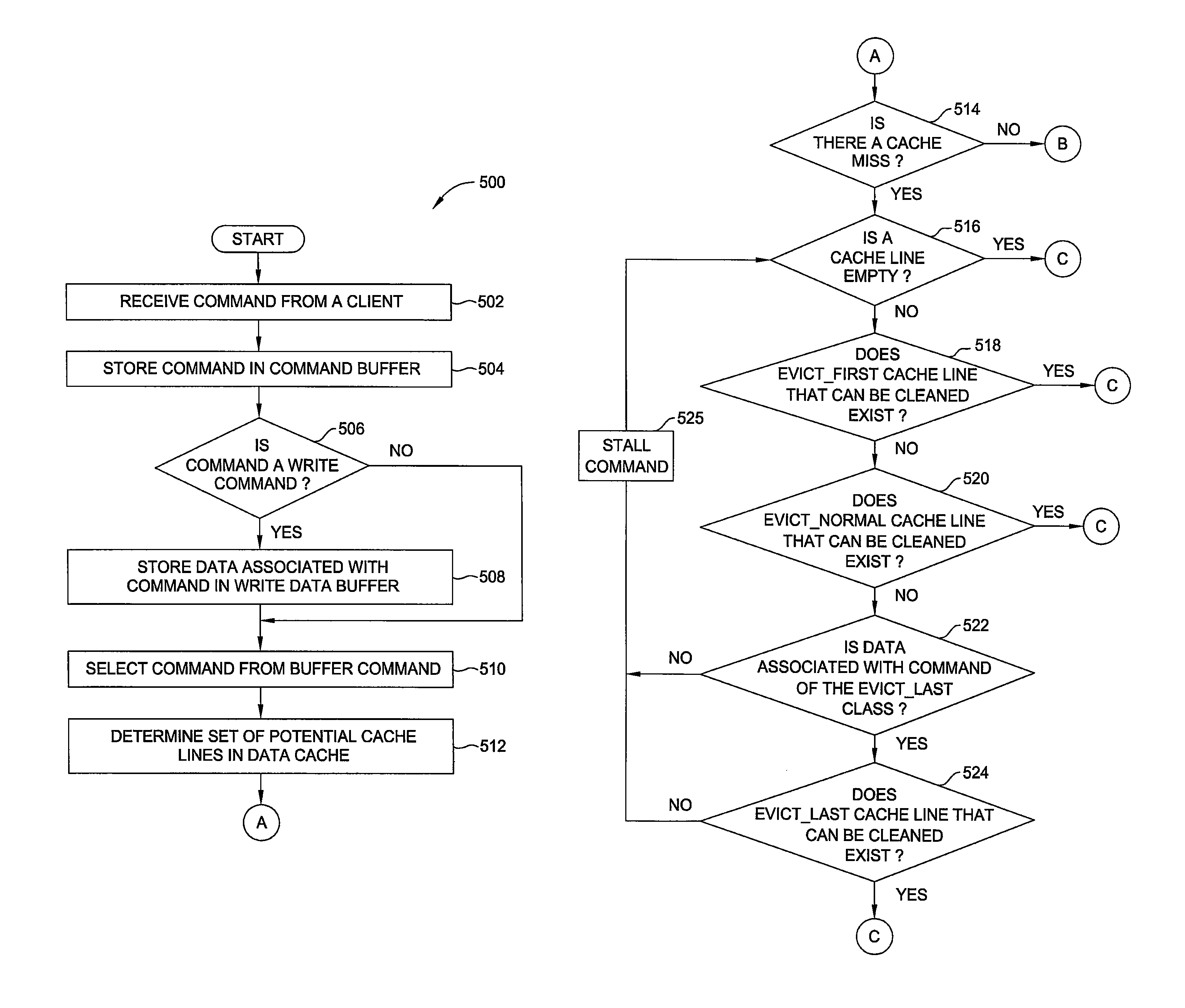

Multi-class data cache policies

Active | US8868838B1 | Reduce in quantity | Program control using stored programs | Memory addressing/allocation/relocation | Parallel computing | Data storing

One embodiment of the invention sets forth a mechanism for evicting data from a data cache based on the data class of that data. The data stored in the cache lines in the data cache is categorized based on data classes that reflect the reuse potential of that data. The data classes are stored in a tag store, where each tag within the tag store corresponds to a single cache line within the data cache. When reserving a cache line for the data associated with a command, a tag look-up unit examines the data classes in the tag store to determine which data to evict. Data that has a low reuse potential is evicted at a higher priority than data that has a high reuse potential. Advantageously, evicting data that belongs to a data class that has a lower reuse potential reduces the number of cache misses within the system.

Owner:NVIDIA CORP
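
Class-based eviction as described in this abstract can be sketched as a scan of the tag store for the line with the lowest reuse potential. The three class names and their ordering are hypothetical placeholders, not taken from the abstract, and ties are broken by lowest line index as a simplification.

```python
from enum import IntEnum


class DataClass(IntEnum):
    # Hypothetical classes, ordered from lowest to highest reuse potential.
    EVICT_FIRST = 0
    EVICT_NORMAL = 1
    EVICT_LAST = 2


def choose_line_to_evict(tag_store):
    """Return the index of the cache line to evict.

    tag_store: list of DataClass values, one per cache line.
    """
    if not tag_store:
        raise ValueError("tag store is empty")
    # Lines with the lowest reuse potential are evicted first.
    return min(range(len(tag_store)), key=lambda i: tag_store[i])
```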

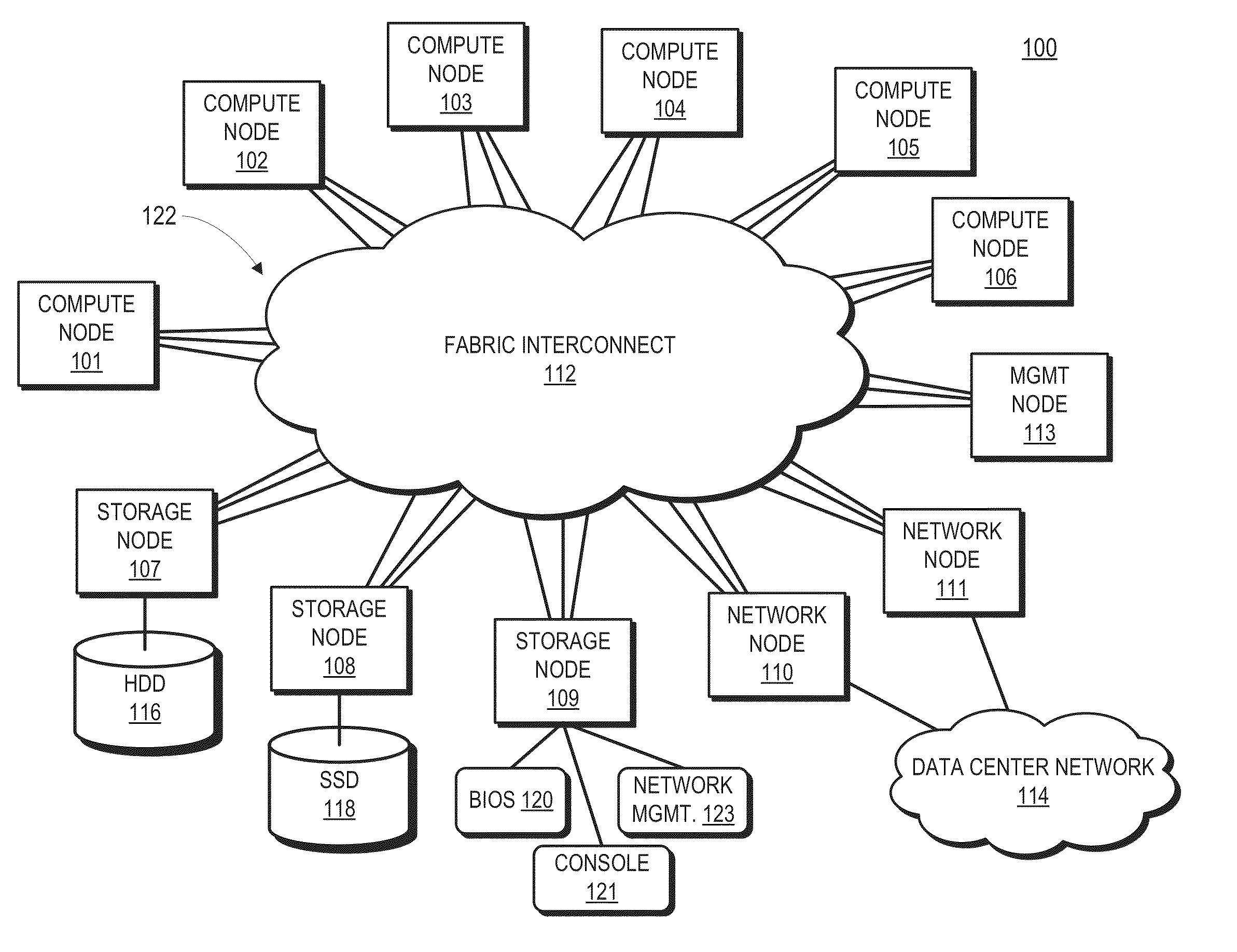

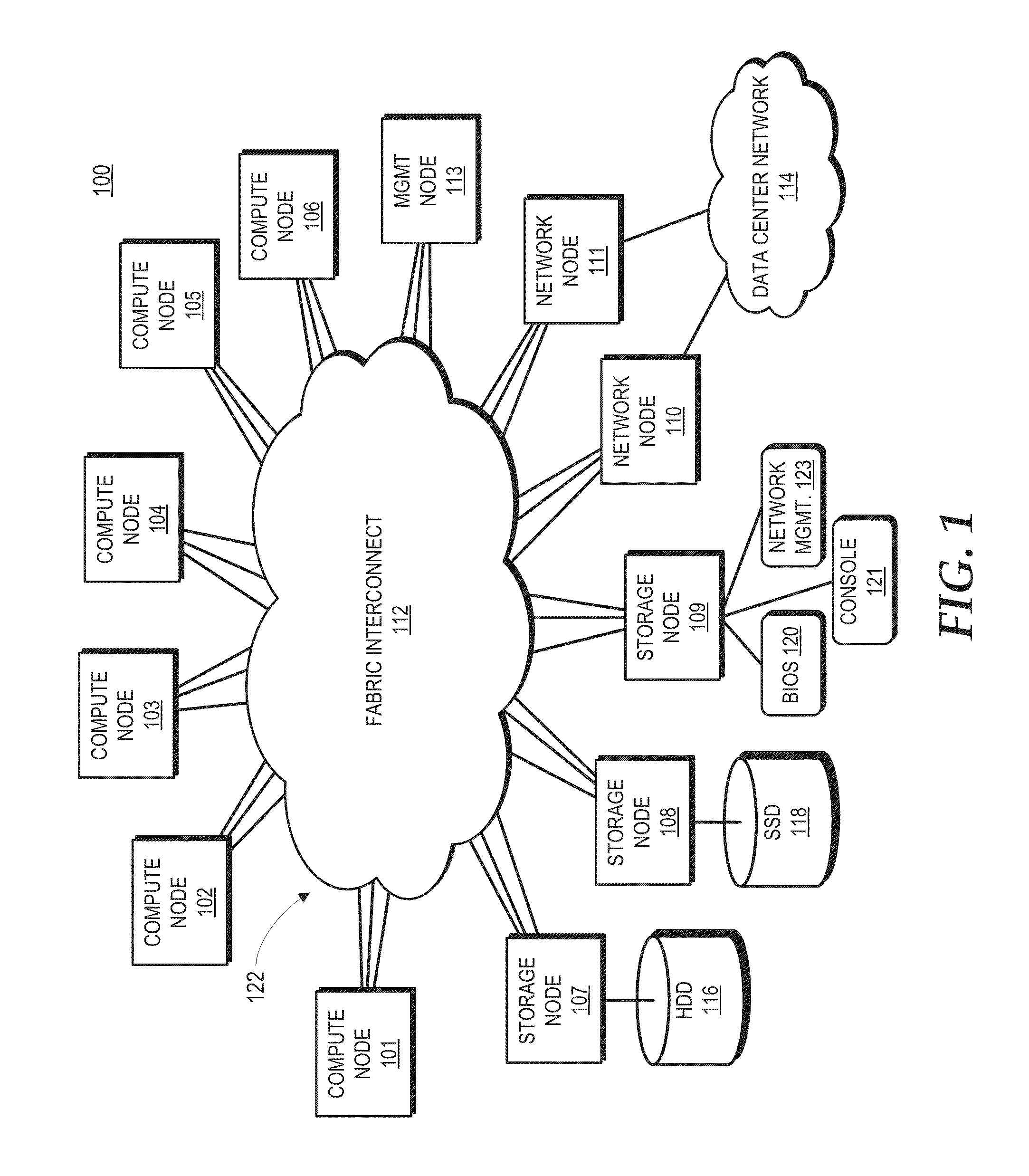

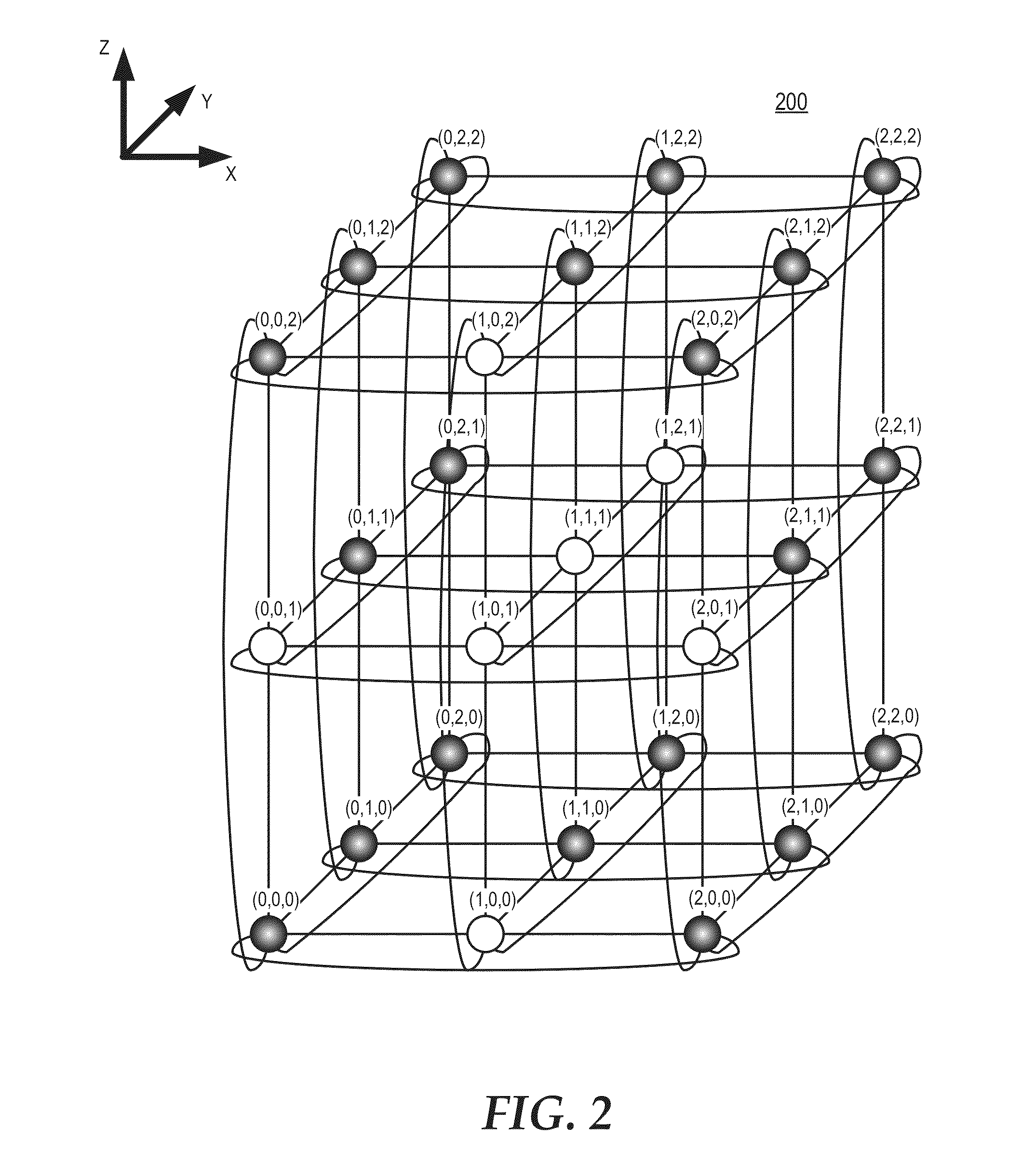

Distributed packet switching in a source routed cluster server

A cluster compute server includes nodes coupled in a network topology via a fabric that source routes packets based on location identifiers assigned to the nodes, the location identifiers representing the locations in the network topology. Host interfaces at the nodes may be associated with link layer addresses that do not reflect the location identifier associated with the nodes. The nodes therefore implement locally cached link layer address translations that map link layer addresses to corresponding location identifiers in the network topology. In response to originating a packet directed to one of these host interfaces, the node accesses the local translation cache to obtain a link layer address translation for a destination link layer address of the packet. When a node experiences a cache miss, the node queries a management node to obtain the specified link layer address translation from a master translation table maintained by the management node.

Owner:ADVANCED MICRO DEVICES INC
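
The translation lookup with a management-node fallback can be sketched as follows. The class name, the dictionary standing in for the master translation table, and the example addresses are assumptions for illustration; a real system would query the management node over the fabric.

```python
class ComputeNode:
    """Node with a local cache of link-layer-address -> location-identifier translations."""

    def __init__(self, master_table):
        self.master_table = master_table  # stands in for the management node's table
        self.local_cache = {}
        self.management_queries = 0

    def resolve(self, link_layer_addr):
        location = self.local_cache.get(link_layer_addr)
        if location is not None:
            return location                             # local translation cache hit
        self.management_queries += 1                    # miss: ask the management node
        location = self.master_table[link_layer_addr]   # raises KeyError if unknown
        self.local_cache[link_layer_addr] = location
        return location
```

Only the first packet to a given destination pays for the management query; later packets are source routed using the cached location identifier.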

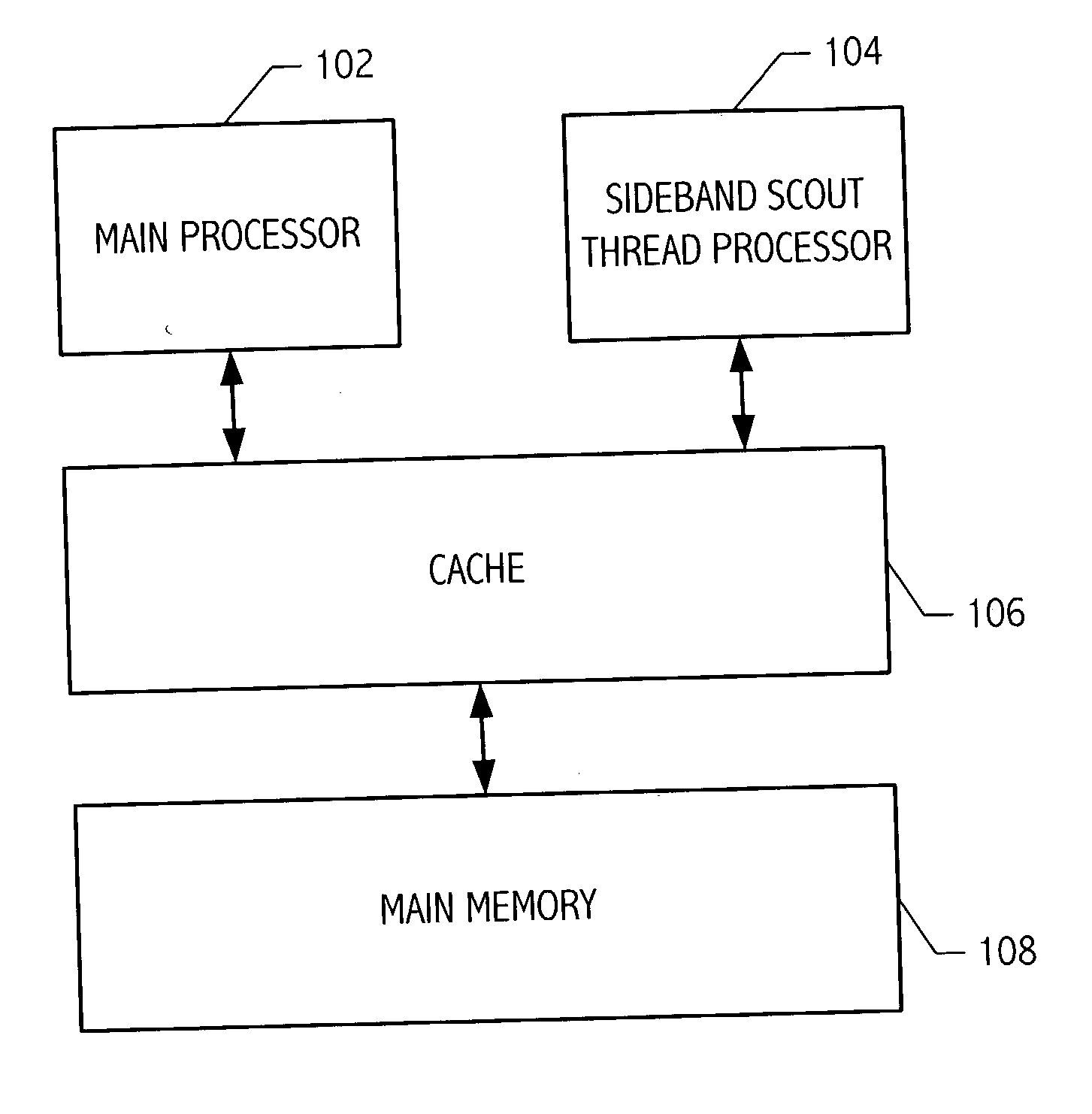

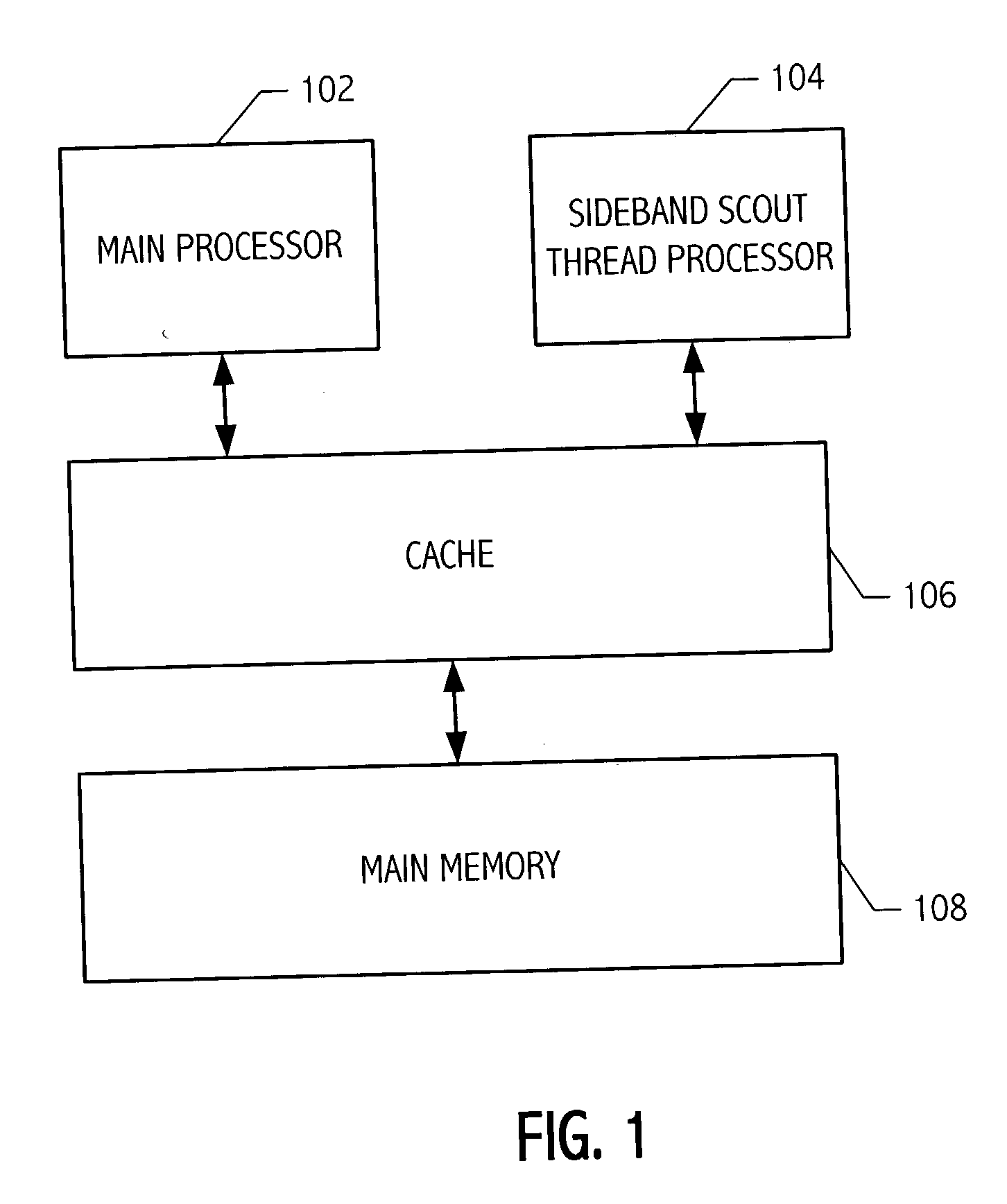

Sideband scout thread processor

Active | US20040148491A1 | Memory architecture accessing/allocation | Software engineering | Parallel computing | Sideband

A sideband scout thread processing technique is provided. The sideband scout thread processing technique utilizes sideband information to identify a subset of processor instructions for execution by a scout thread processor. The sideband information identifies instructions that need to be executed to "warm-up" a cache memory that is shared with a main processor executing the whole set of processor instructions. Thus, the main processor has fewer cache misses and reduced latencies. In one embodiment, a system includes a first processor for executing a sequence of processor instructions, a second processor for executing a subset of the sequence of processor instructions, and a cache shared between the first processor and the second processor. The second processor includes sideband circuitry configured to identify the subset of the sequence of processor instructions to execute according to sideband information associated with the sequence of processor instructions.

Owner:ORACLE INT CORP

© 2025 PatSnap. All rights reserved.