322 results about "Land cover" patented technology

Land cover is the physical material at the surface of the earth. Land covers include grass, asphalt, trees, bare ground, water, etc. Earth cover is the expression used by ecologist Frederick Edward Clements; its closest modern equivalent is vegetation. The expression continues to be used by the United States Bureau of Land Management.

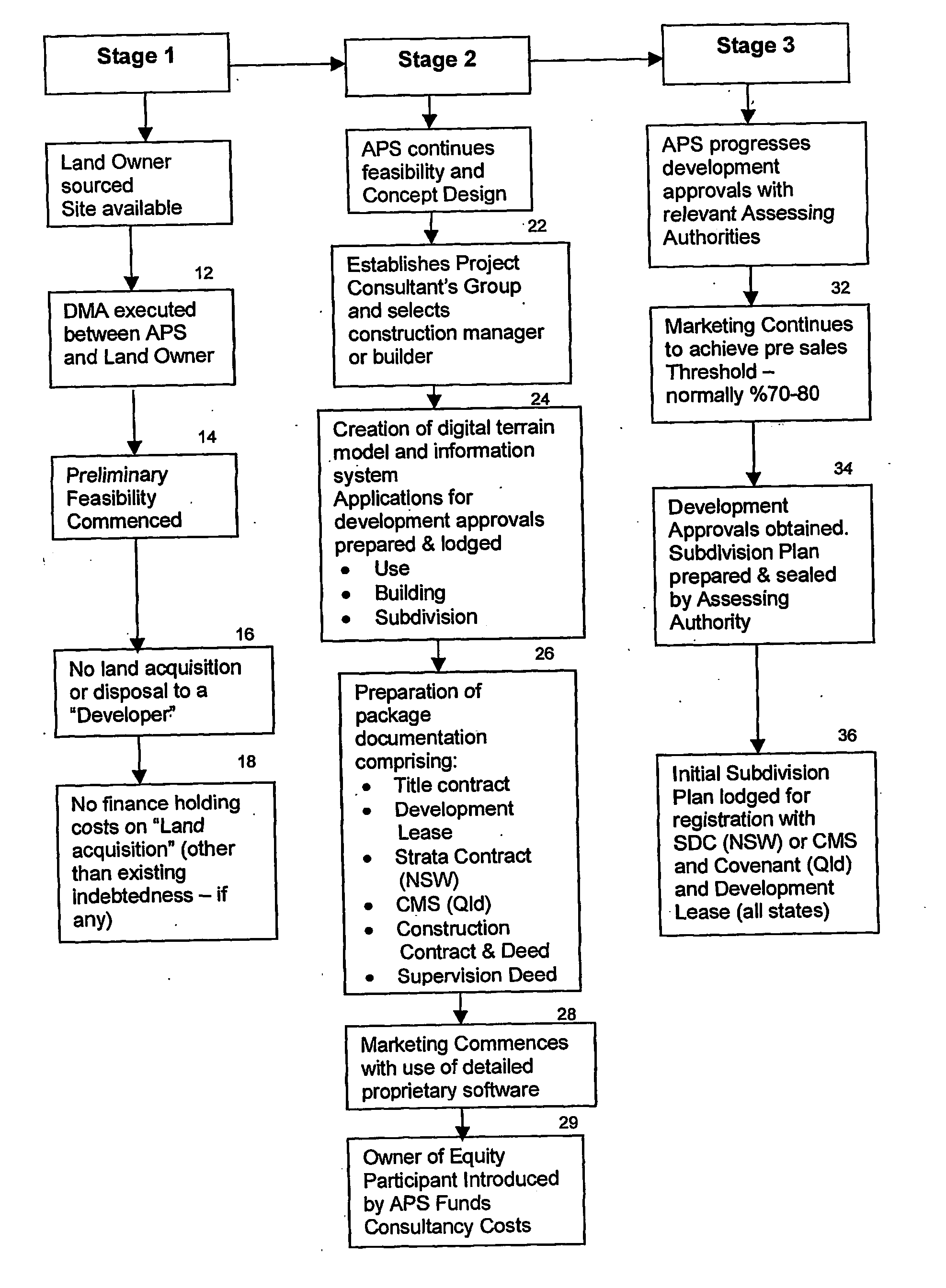

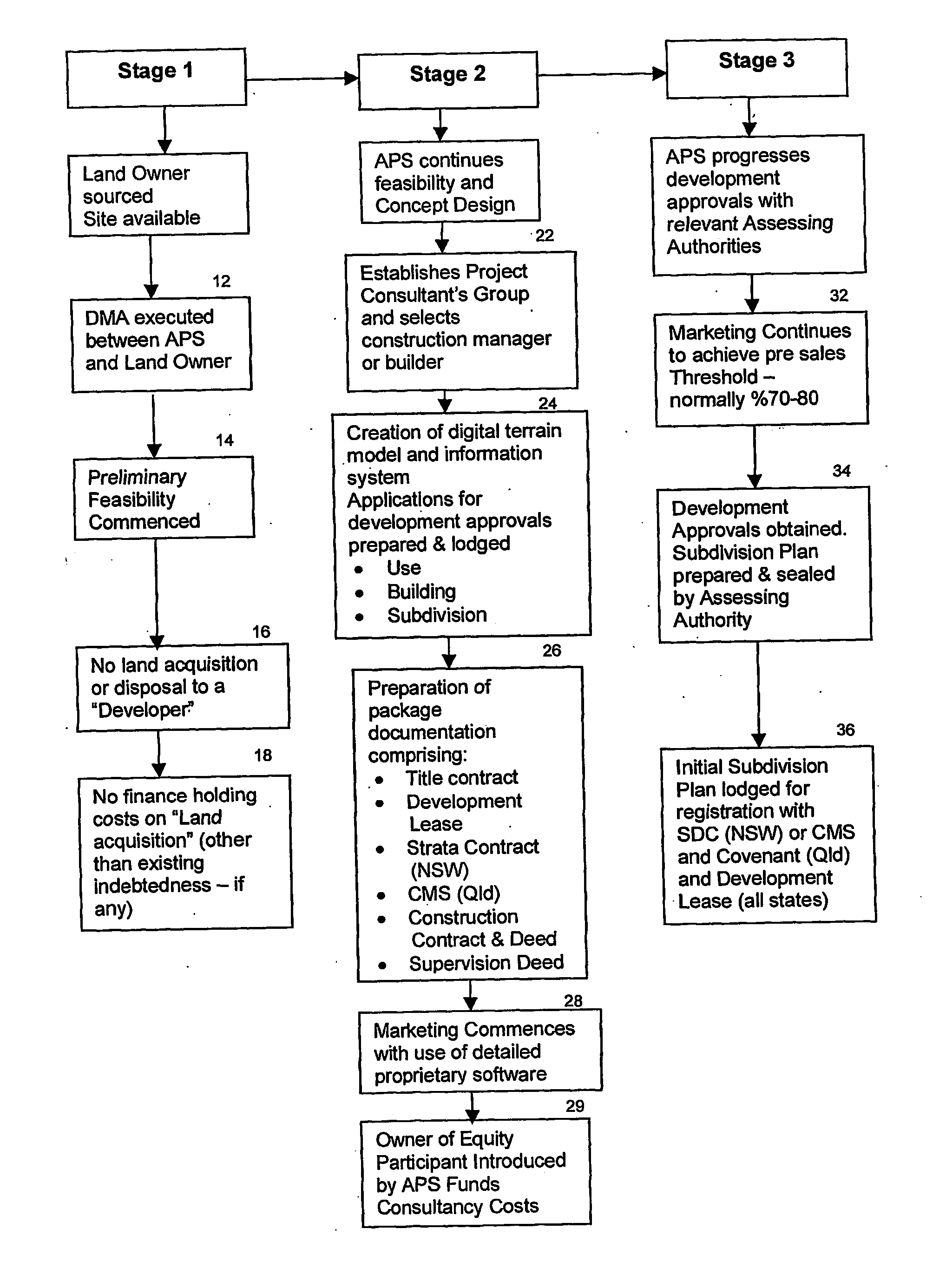

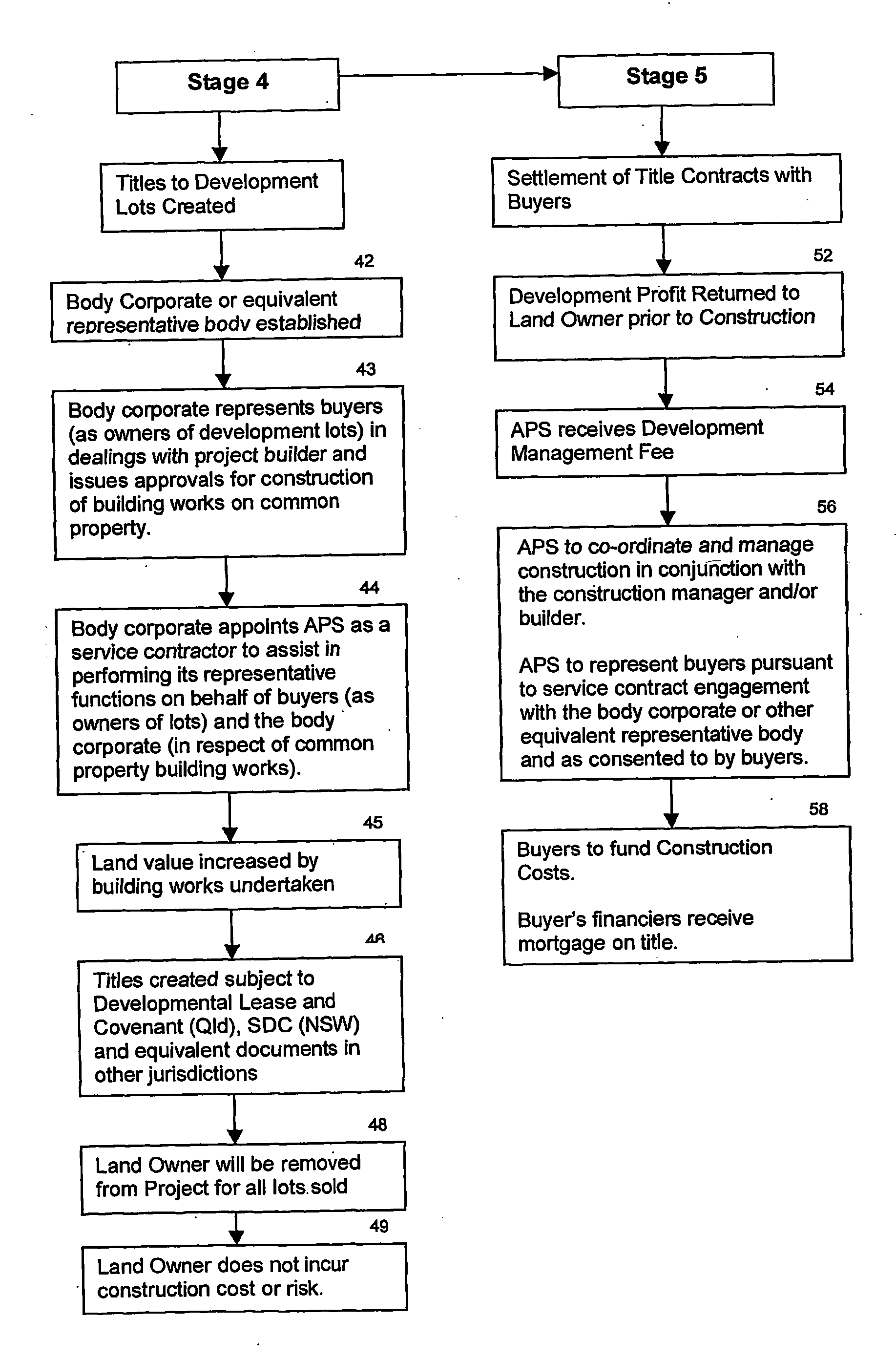

Method of managing property development

Inactive | US20040148294A1 | Enhance the image | Improve economy | Finance | Digital data processing details | Land cover | Engineering

A computerised method for developing real property in which a land owner, builder, end buyers and a development manager are given participatory roles in the development process, wherein returns produced by the development of land and the realisation of development rights attaching to land are accessible to the land owner and other profit participants. Realisation is not limited to receipt of a return on the land value only through the disposition of the land to a developer. The development can be carried out on a computer-generated model of the land together with any improvements thereon, official titles to the real property can be issued by relevant authorities, and a financial settlement can occur on the titles prior to commencing and/or completing any civil works or construction on the land.

Owner:AUSTRALIAN PROPERTY SYST NO 1

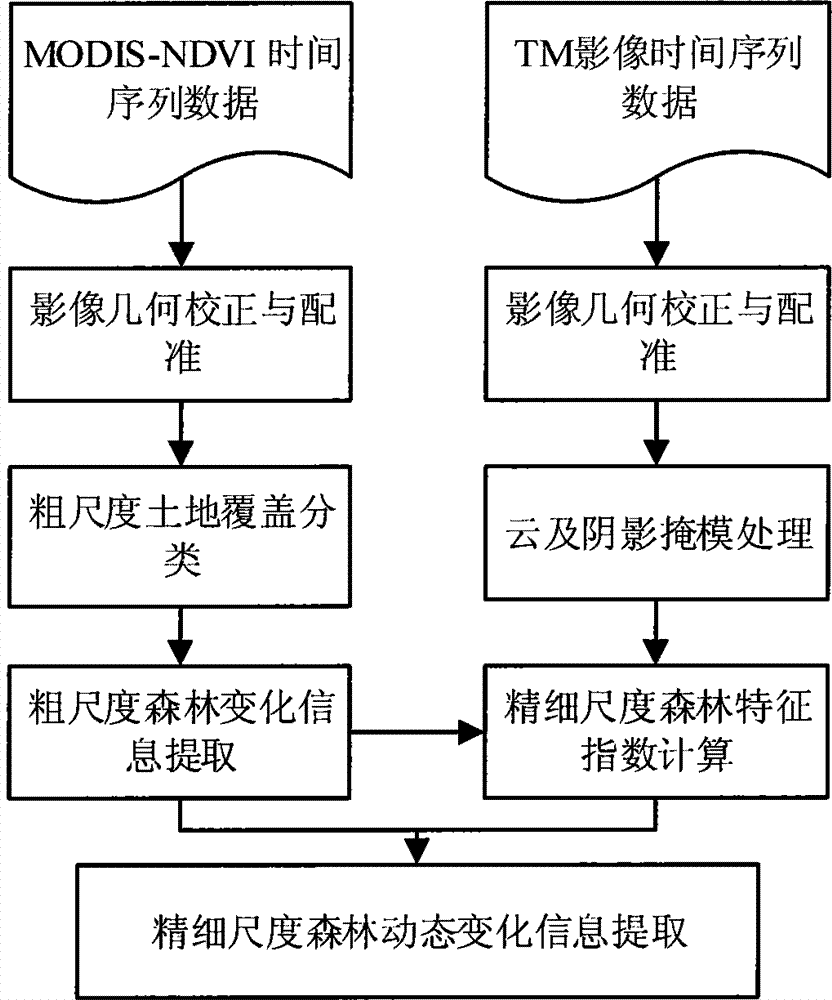

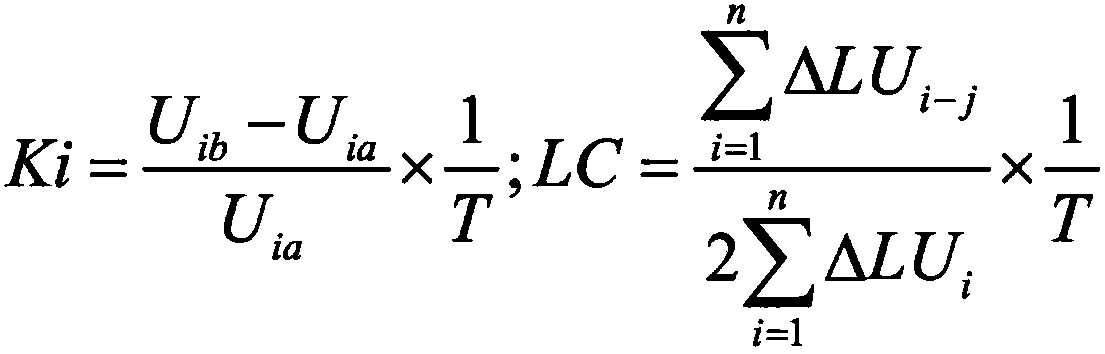

Multi-scale forest dynamic change monitoring method

Inactive | CN104851087A | Improve monitoring efficiency | Improve monitoring accuracy | Image enhancement | Image analysis | Sensing data | Forest dynamics

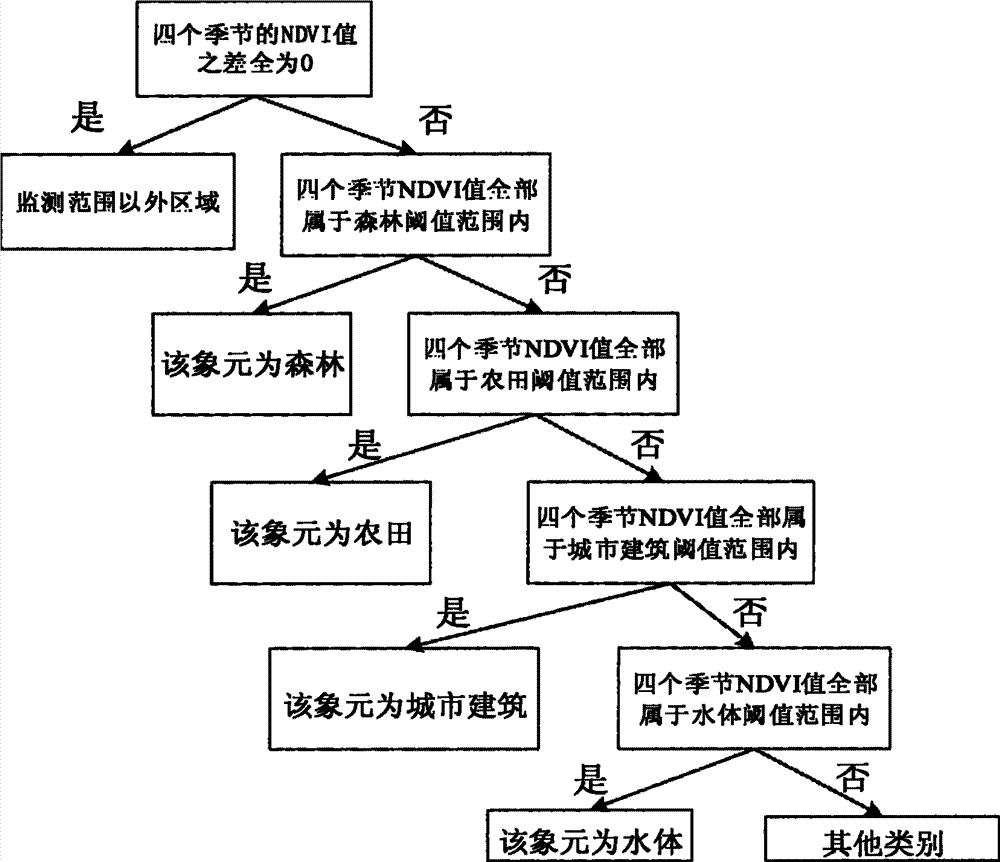

The present invention discloses a multi-scale forest dynamic change monitoring method. The method comprises carrying out geometric correction and rectification of remote sensing images; acquiring a land cover type map with a 1 km resolution by using MODIS time series NDVI data with a 1 km resolution from different seasons within one year; generating a coarse-scale land cover change map by using multiple years of land cover type maps with a 1 km resolution; establishing a coarse-scale forest cover change mask file by using the coarse-scale land cover change map; establishing forest cover characteristic indexes on TM images with a 30 m resolution according to the coarse-scale forest cover change mask file; and extracting forest dynamic change information by combining the coarse-scale land cover change map and the time series of forest characteristic indexes. By using time series remote sensing data with different spatial resolutions, the method realizes stepwise forest dynamic monitoring over large areas, from coarse-scale land cover change to fine-scale forest change, and raises both monitoring efficiency and monitoring accuracy.

Owner:HUAZHONG AGRI UNIV

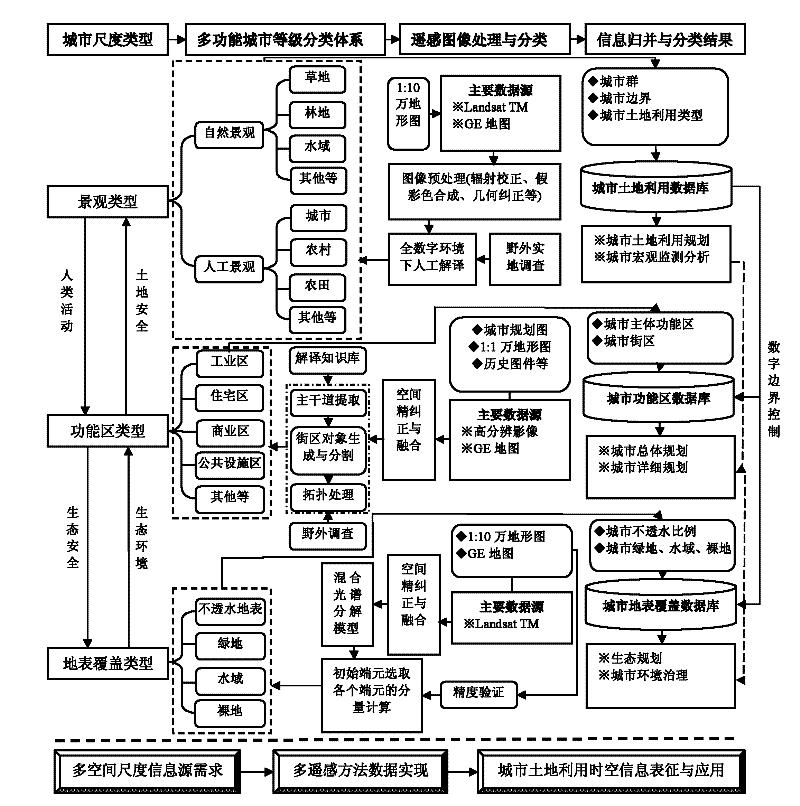

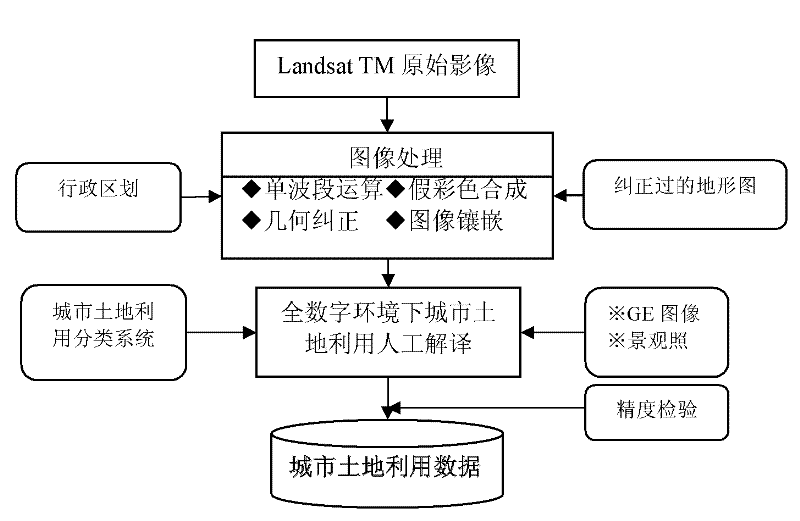

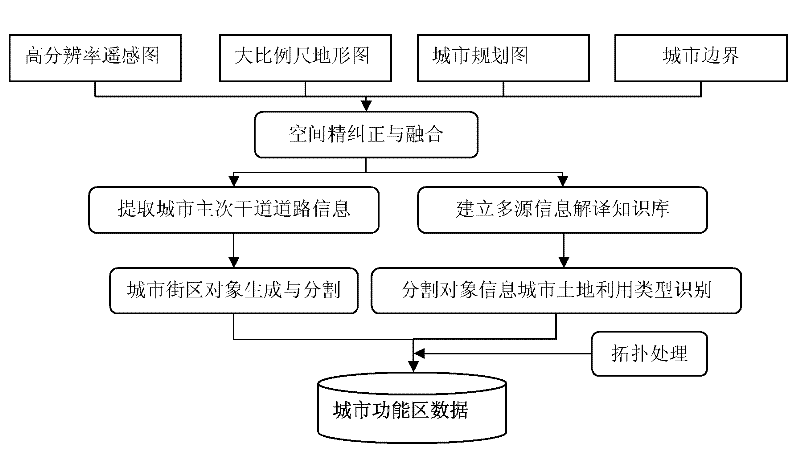

High-resolution remote-sensing multifunctional urban land spatial information generation method

The invention discloses a high-resolution remote-sensing multifunctional urban land spatial information generation method. According to the method, a complex system rank theory is introduced; an urban land spatial information classification system with three rank scales, i.e. a multifunctional target landscape type, a functional area type and a land cover type, which self-adapts to urban planning management and environmental renovation, is proposed; and on the basis of fine correction and alignment of remote-sensing images from Landsat TM, Google Earth and auxiliary maps, the Landsat TM is applied to carrying out urban landscape type classification, a three-level rank classification type-merged combination and an information mining knowledge base are constructed, the classified information is merged to form a first-level classification result of urban land, the digital functional areas are classified into a second level and the land cover is classified into a third level under the constrained control of higher-level classification information. The method has the characteristics of low cost, high accuracy of classification and strong targeted application, and thus, the requirements on target applications, such as ecological urban design, urban environmental management and the like, are better met.

Owner:INST OF GEOGRAPHICAL SCI & NATURAL RESOURCE RES CAS

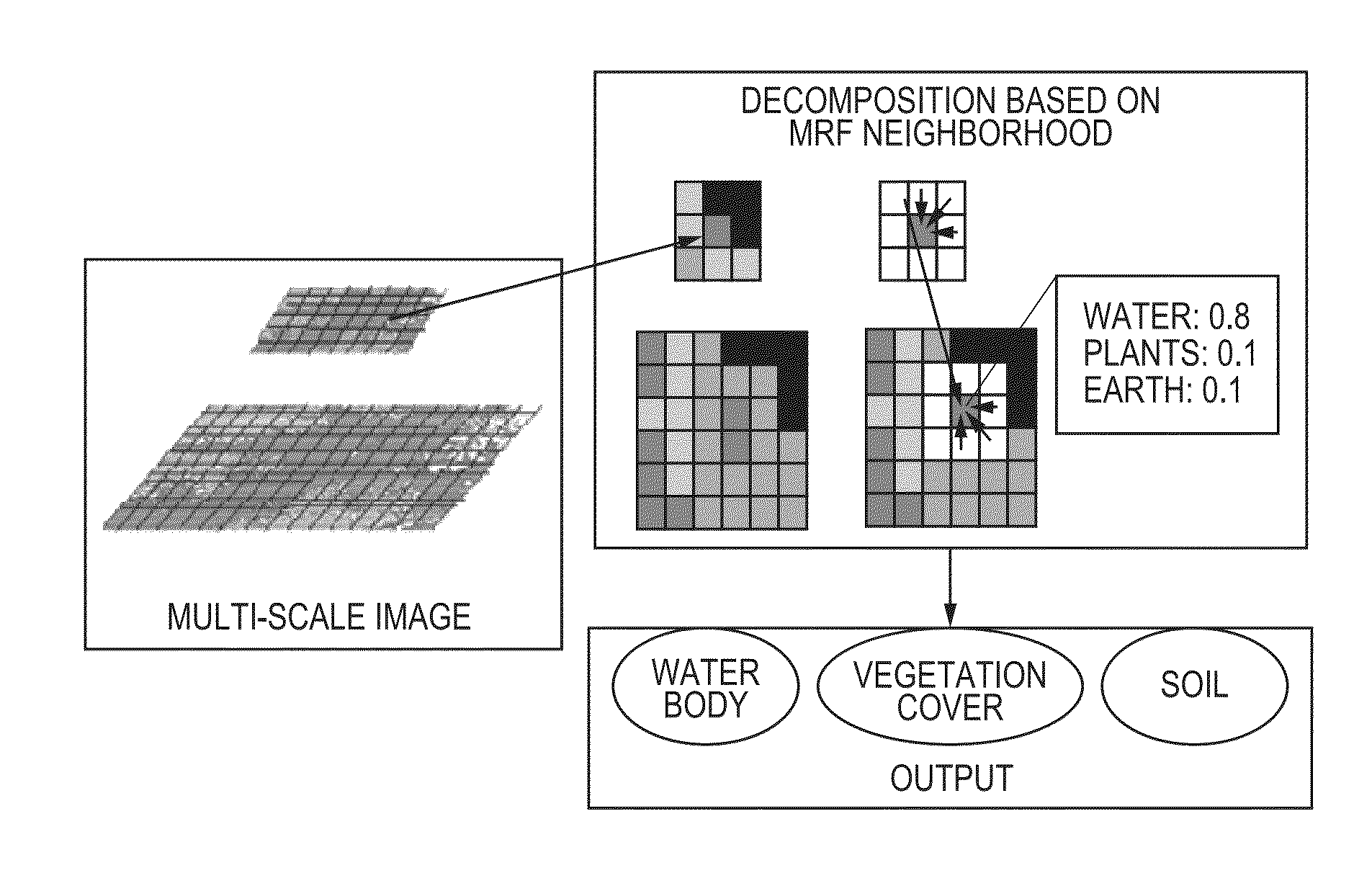

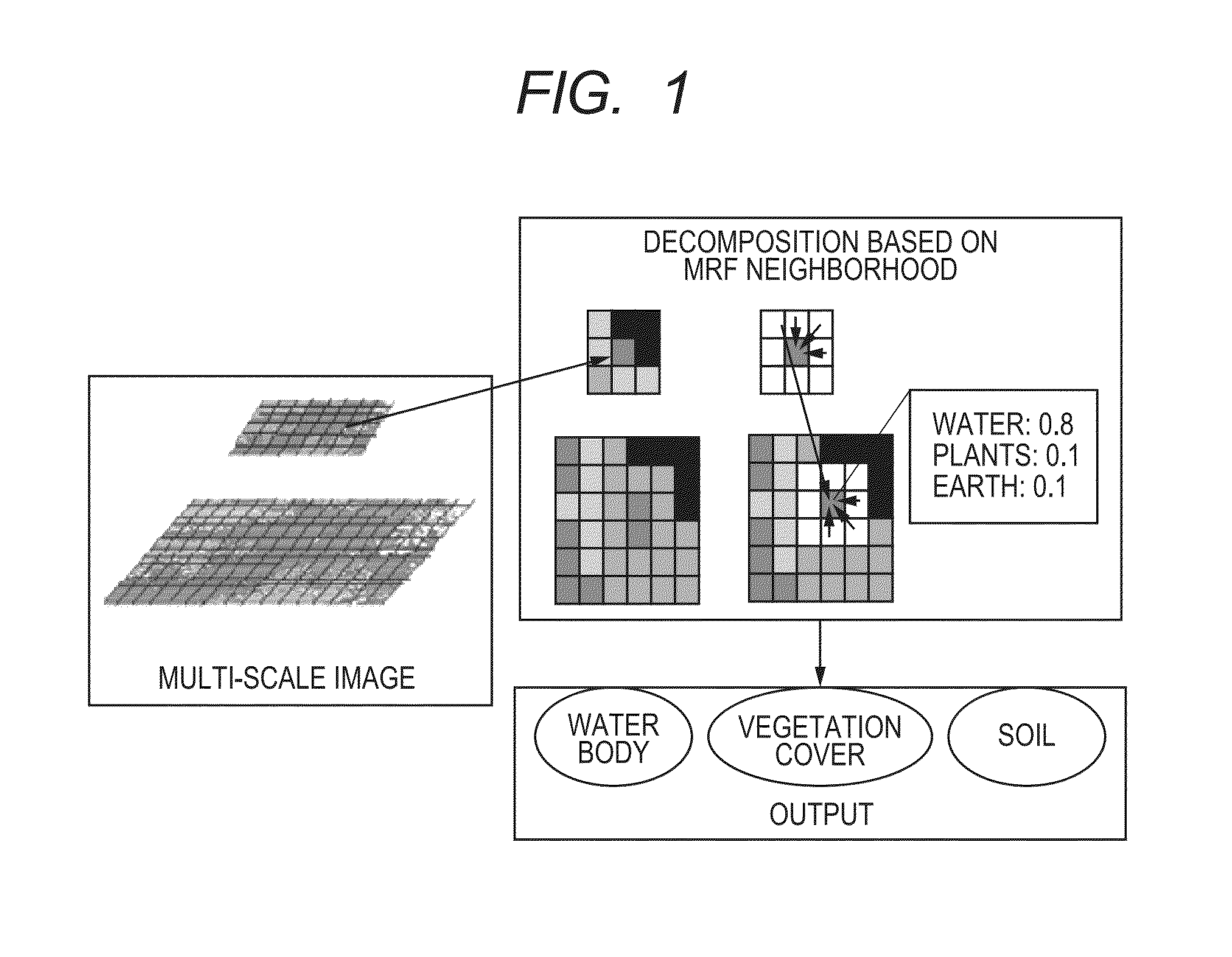

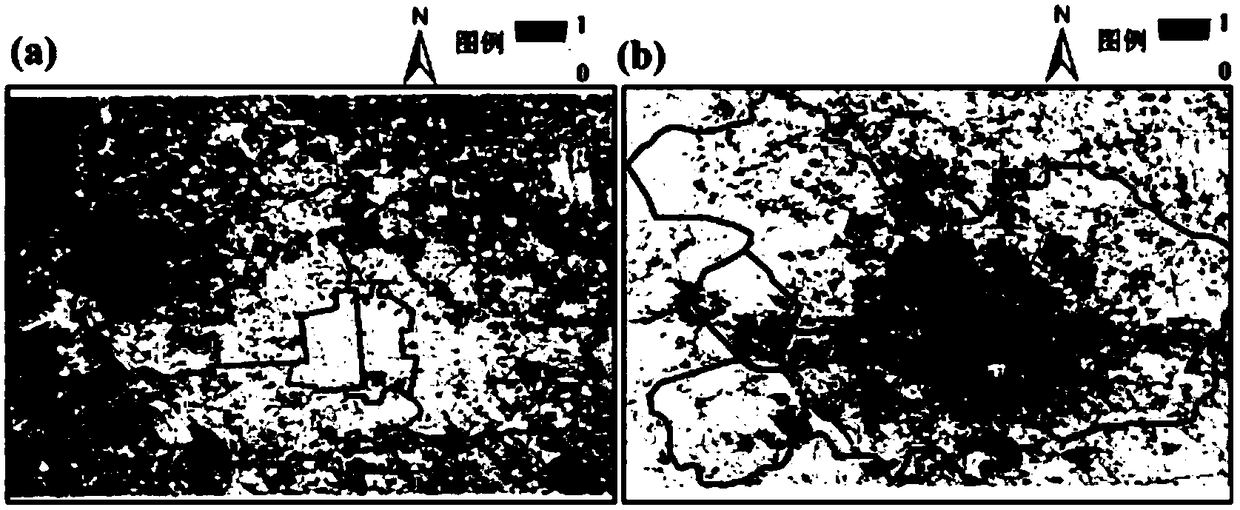

Decomposition apparatus and method for refining composition of mixed pixels in remote sensing images

Active | US20130336540A1 | Accurate land cover information | Improve accuracy | Image enhancement | Image analysis | Land cover | Decomposition

To achieve spatial correlation of pixel decomposition results and reduce the noise problem caused by isolation, there is provided a decomposition apparatus and method for refining composition of mixed pixels in remote sensing images, including: a preprocessing step for temporarily determining the probability value of the composition ratio of the different land cover types of each pixel in the image, based on a received remote sensing image and spectral information, to obtain first material composition information; and a neighborhood correlation calculation step for analyzing the correlation between a main pixel and the neighboring areas by using the first material composition information of each of the pixels present in the neighboring areas within a predetermined range in which the pixels are adjacent to each other, and optimizing the first material composition information of the main pixel by the result of the correlation analysis, to obtain second material composition information.

Owner:HITACHI LTD
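
The two-step idea in the abstract above (a first per-pixel composition estimate, then a refinement using neighbouring pixels) can be sketched as follows. This is only an illustration: the abstract does not give the correlation formula, so the blend with the mean of the surrounding pixels, the function name `refine` and the weight `alpha` are all assumptions.

```python
def refine(fractions, alpha=0.5):
    """Blend each pixel's class fractions with the mean of its neighbours.

    fractions: 2D grid (list of rows) of per-class fraction lists that sum to 1.
    alpha: hypothetical weight given to the neighbourhood mean.
    """
    rows, cols = len(fractions), len(fractions[0])
    classes = len(fractions[0][0])
    refined = []
    for r in range(rows):
        row_out = []
        for c in range(cols):
            # Collect the up-to-8 adjacent pixels (fewer at the image border).
            neighbours = [
                fractions[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            ]
            if neighbours:
                mean = [sum(n[k] for n in neighbours) / len(neighbours)
                        for k in range(classes)]
            else:
                mean = fractions[r][c]  # single-pixel image: nothing to blend
            blended = [(1 - alpha) * fractions[r][c][k] + alpha * mean[k]
                       for k in range(classes)]
            total = sum(blended)
            row_out.append([v / total for v in blended])  # renormalise to 1
        refined.append(row_out)
    return refined


# An isolated "class 1" pixel surrounded by "class 0" pixels is pulled
# towards its neighbourhood, which is the noise reduction the abstract targets.
grid = [[[1.0, 0.0] for _ in range(3)] for _ in range(3)]
grid[1][1] = [0.0, 1.0]
second = refine(grid)
```
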

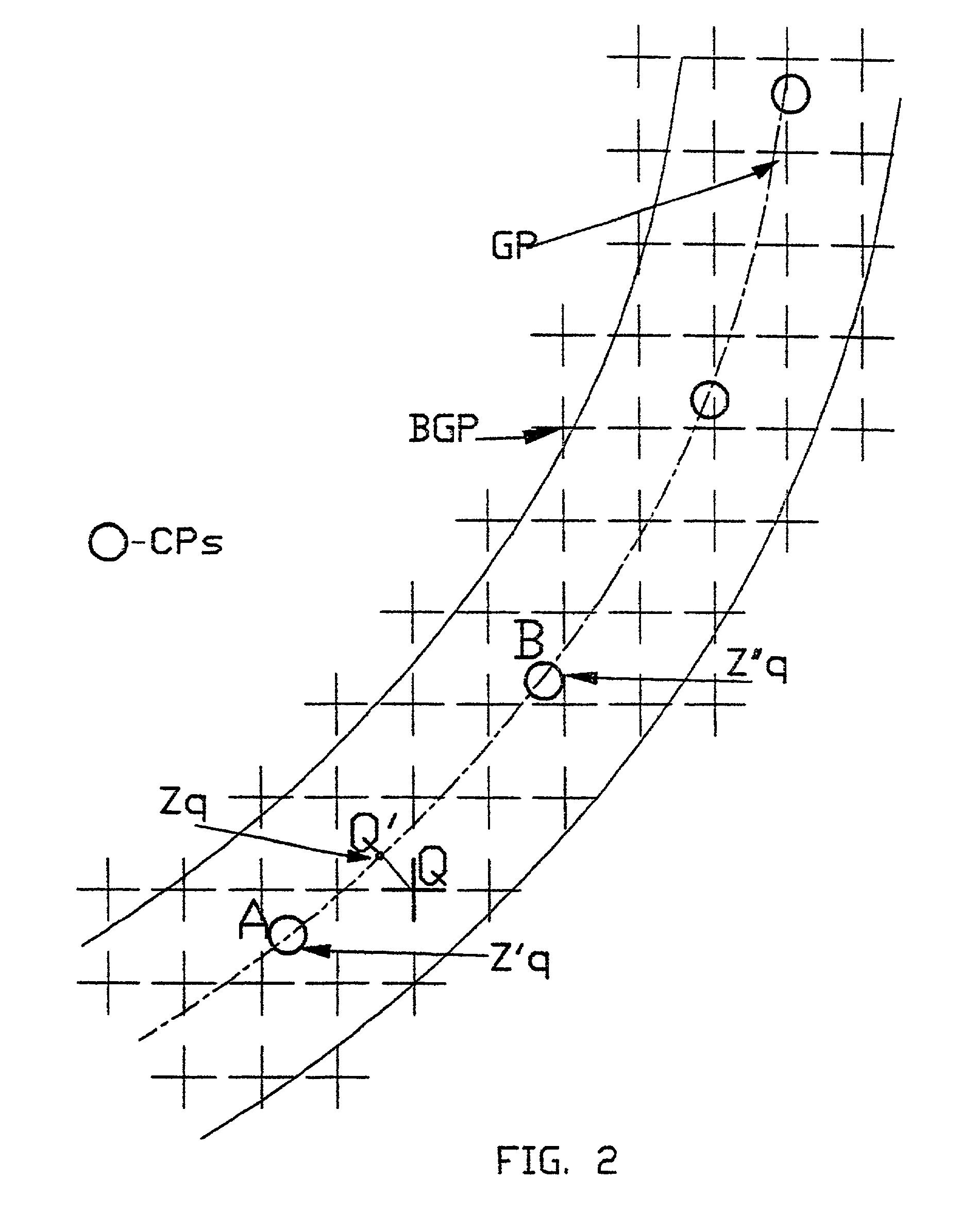

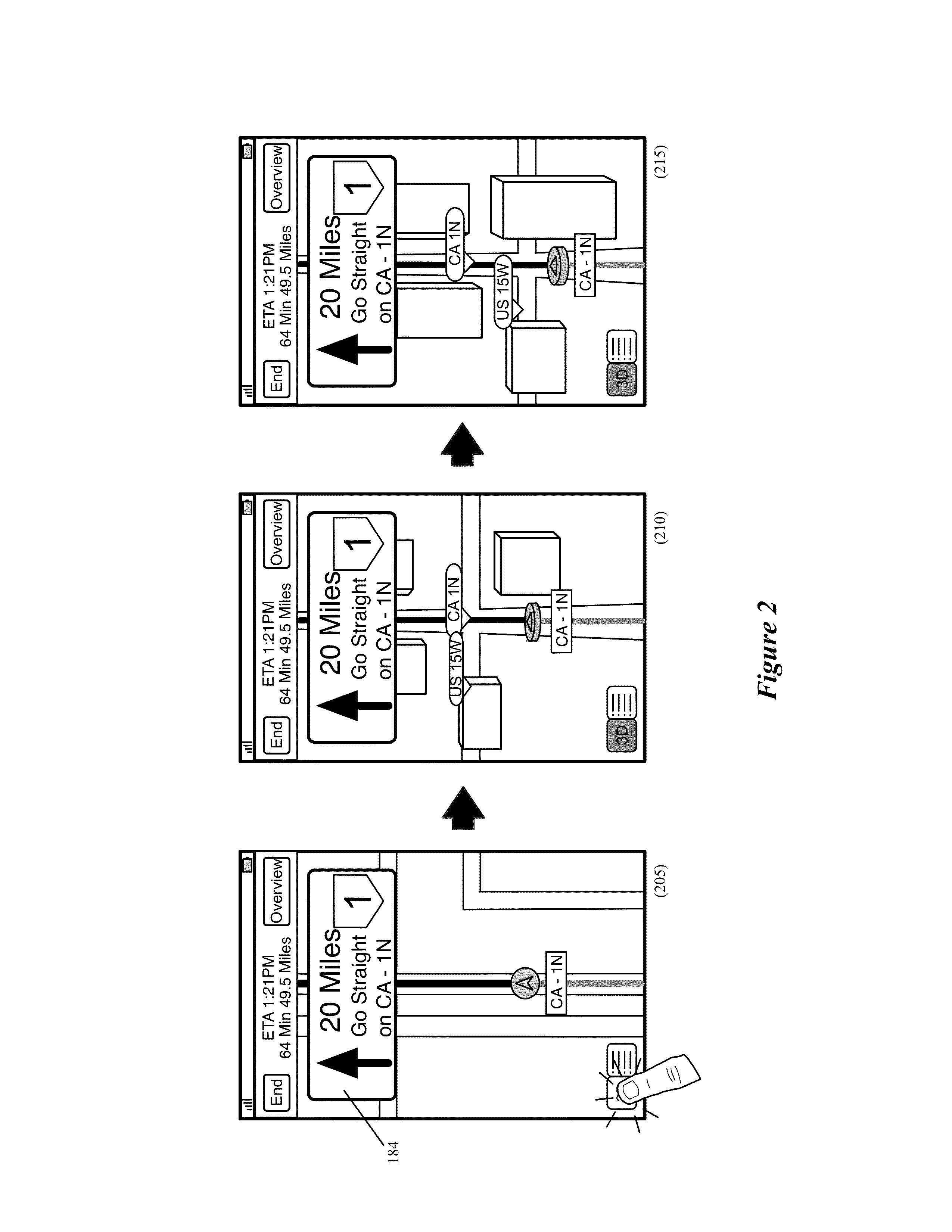

Computer-implemented method and system for designing transportation routes

Inactive | US6996507B1 | Facilitate comparison of different layouts | Great cost-effectiveness | Geometric CAD | Liquid/gas jet drilling | Land cover | Unit cost

A computer-implemented method and system for designing transportation routes, wherein design criteria to be met in respect of at least one of said transportation routes are supplied and route profile data are obtained showing land heights at sampled points along each of said at least one transportation route. Per-unit cost estimates in respect of land-cut and land-fill operations are supplied, and a height profile is computed of the at least one transportation route which meets the design criteria and for which the land-cut and land-fill operations are adjusted to give a minimum cost.

Owner:MAKOR ISSUES & RIGHTS
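
As a rough illustration of the cost criterion in the abstract above, the sketch below prices a candidate height profile against sampled land heights using per-unit cut and fill costs, then picks the cheapest of a few candidates. It assumes cost is proportional to the height difference at each sample point and ignores the design criteria and volumes; `earthwork_cost` and `cheapest_profile` are hypothetical names, not taken from the patent.

```python
def earthwork_cost(ground, profile, cut_cost, fill_cost):
    """Total cost of cutting or filling each sampled point to the profile height."""
    cost = 0.0
    for g, p in zip(ground, profile):
        if g > p:
            cost += (g - p) * cut_cost   # land above the route must be cut
        else:
            cost += (p - g) * fill_cost  # land below the route must be filled
    return cost


def cheapest_profile(ground, candidates, cut_cost, fill_cost):
    """Return the candidate profile with the lowest earthwork cost."""
    return min(candidates,
               key=lambda p: earthwork_cost(ground, p, cut_cost, fill_cost))


ground = [10.0, 12.0, 8.0]                   # sampled land heights
candidates = [[10.0, 10.0, 10.0], [12.0, 12.0, 12.0]]
best = cheapest_profile(ground, candidates, cut_cost=3.0, fill_cost=2.0)
```

A real design tool would search over continuous profiles subject to gradient and curvature limits; enumerating candidates just keeps the example short.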

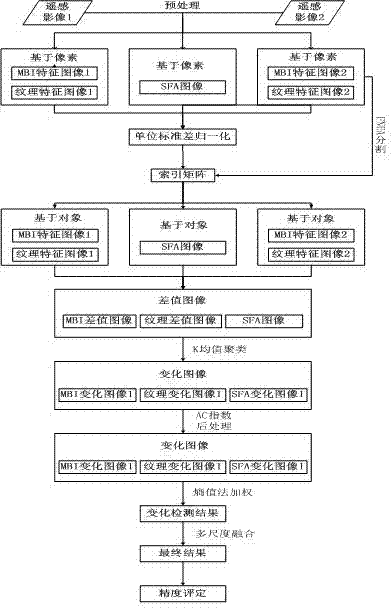

Object-oriented building change detection method based on multi-feature fusion

The invention discloses an object-oriented building change detection method based on multi-feature fusion. The method includes: solving MBIs (morphological building indexes), texture features and SFA (slow feature analysis) graphs of image pixels; performing FNEA (fractal net evolution approach) splitting through the MBIs and the texture features; solving three feature values of each object, performing differencing, and solving a threshold by a K-means clustering algorithm to obtain a feature change graph; performing post-processing through AC indexes; solving weights of different feature change graphs by an entropy method, and setting thresholds according to the weights to obtain change images; performing post-processing by a voting method to obtain change detection results. The method has the advantages that building changes are detected through the MBIs, the SFA graphs and the texture features, the MBIs and the texture features are added to the FNEA splitting, the AC indexes are provided, and post-processing is performed by the voting method. A novel way for the application of high-resolution remote sensing images, land coverage and urban expansion is provided.

Owner:WUHAN UNIV
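
The abstract above weights the feature change graphs by an entropy method. A minimal sketch of the standard entropy weight calculation is given below, assuming each feature is a list of non-negative change magnitudes (one per object) and that at least one feature is non-uniform; the feature names in the example are placeholders, and the patent's exact formulation may differ.

```python
import math


def entropy_weights(features):
    """Entropy weight method: features with more uneven values get more weight.

    features: dict mapping a feature name to a list of non-negative values.
    """
    divergences = {}
    for name, values in features.items():
        total = sum(values)
        probs = [v / total for v in values if v > 0]  # 0 * log(0) treated as 0
        entropy = -sum(p * math.log(p) for p in probs) / math.log(len(values))
        divergences[name] = 1.0 - entropy
    norm = sum(divergences.values())
    return {name: d / norm for name, d in divergences.items()}


weights = entropy_weights({
    "mbi": [1.0, 1.0, 1.0, 1.0],      # uniform: carries no information
    "texture": [1.0, 0.0, 0.0, 0.0],  # concentrated: maximally informative
})
```
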

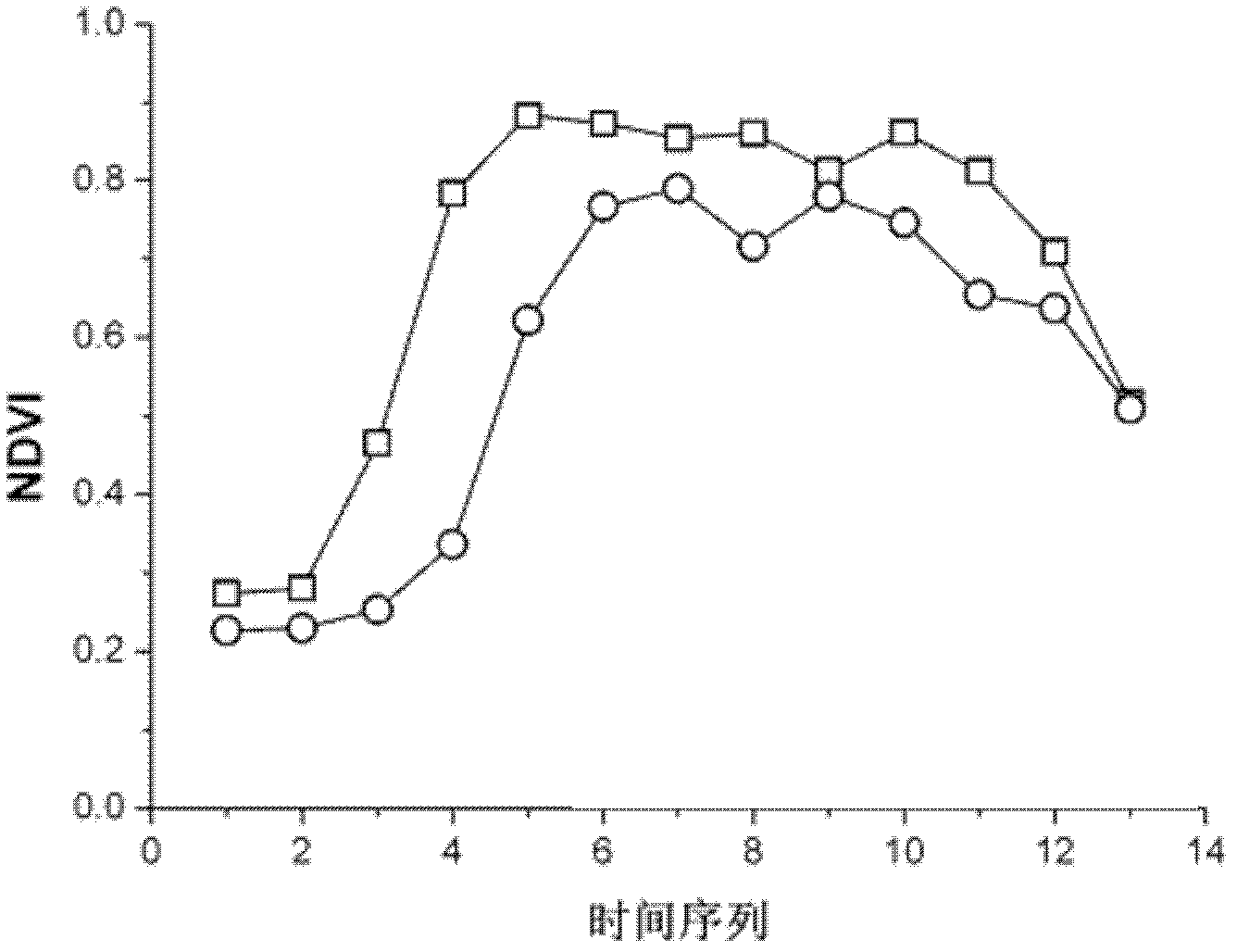

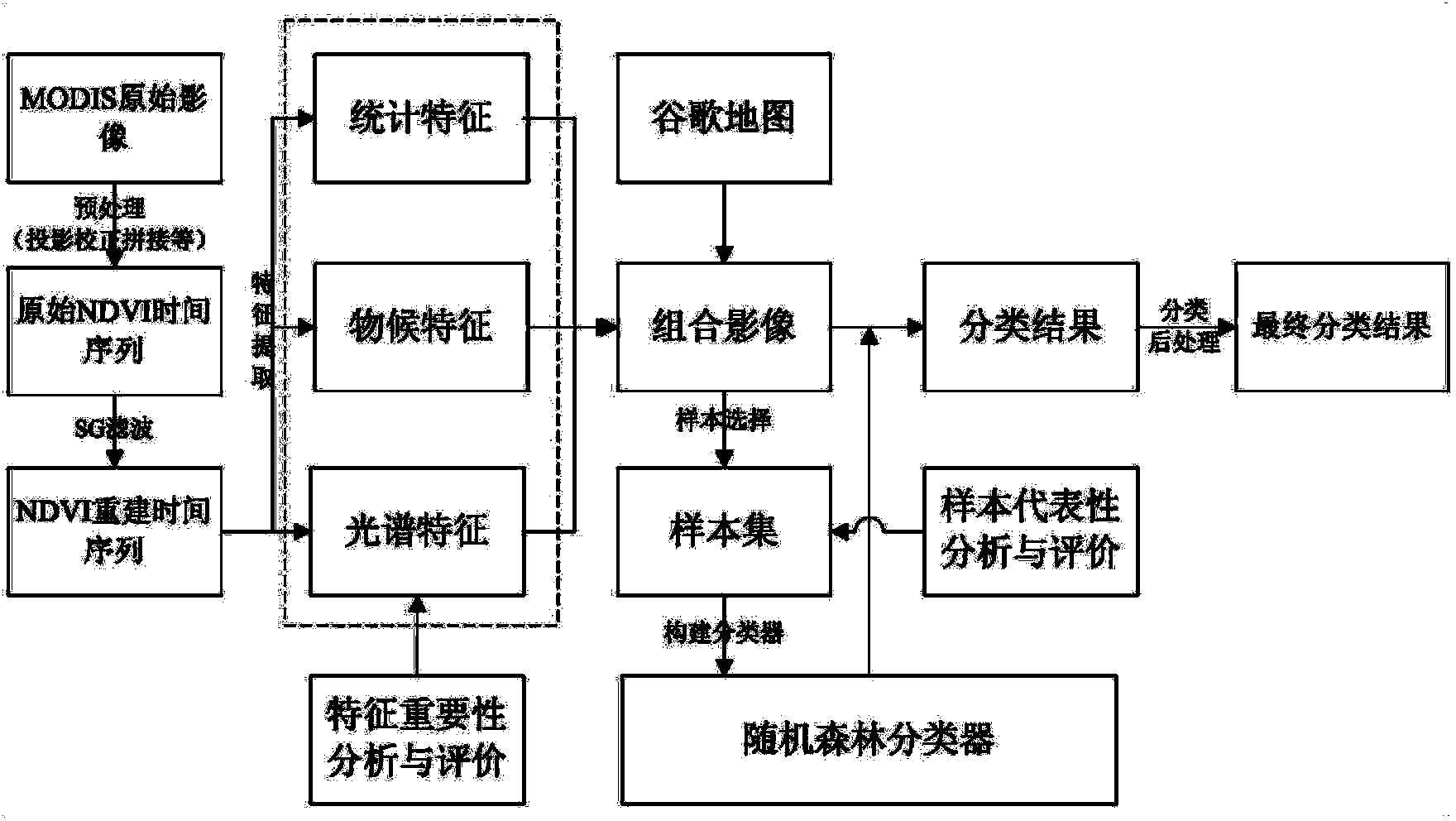

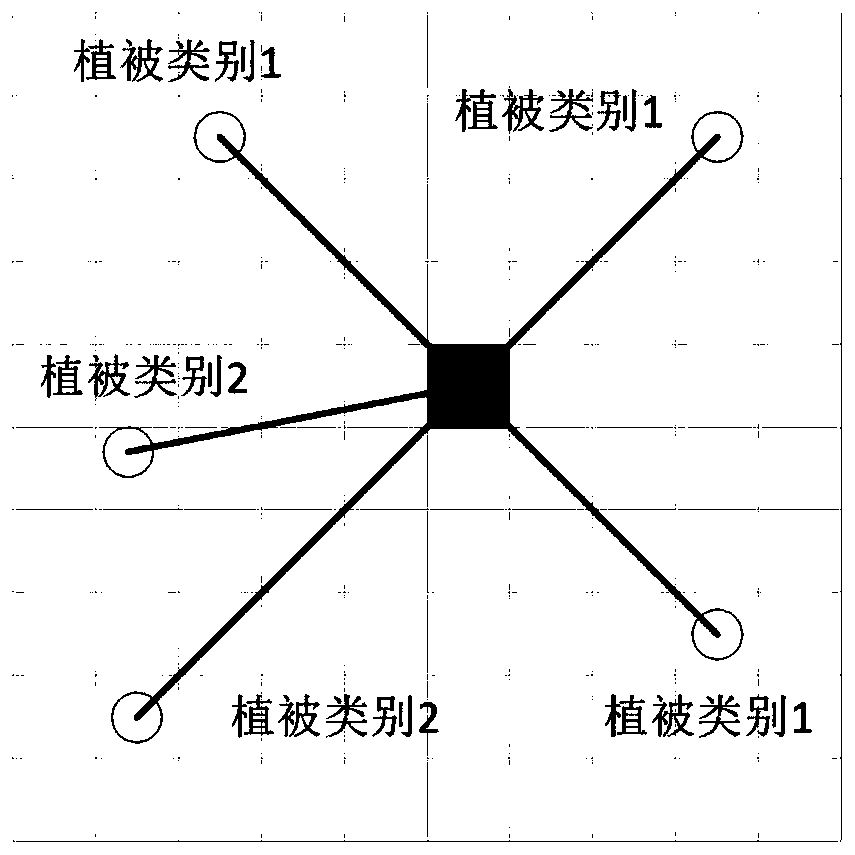

Method for classifying remote sensing images blended with high-space high-temporal-resolution data by object oriented technology

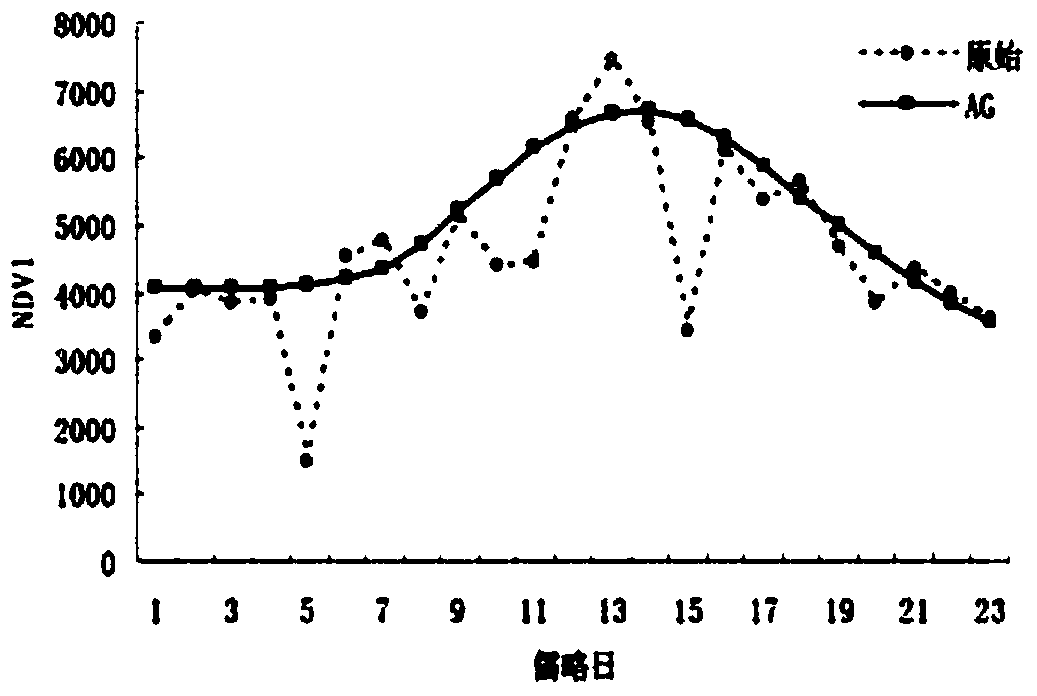

Inactive | CN102609726A | Overcome indistinguishable difficulties | Solve finely | Photogrammetry/videogrammetry | Character and pattern recognition | Land cover | Vegetation Index

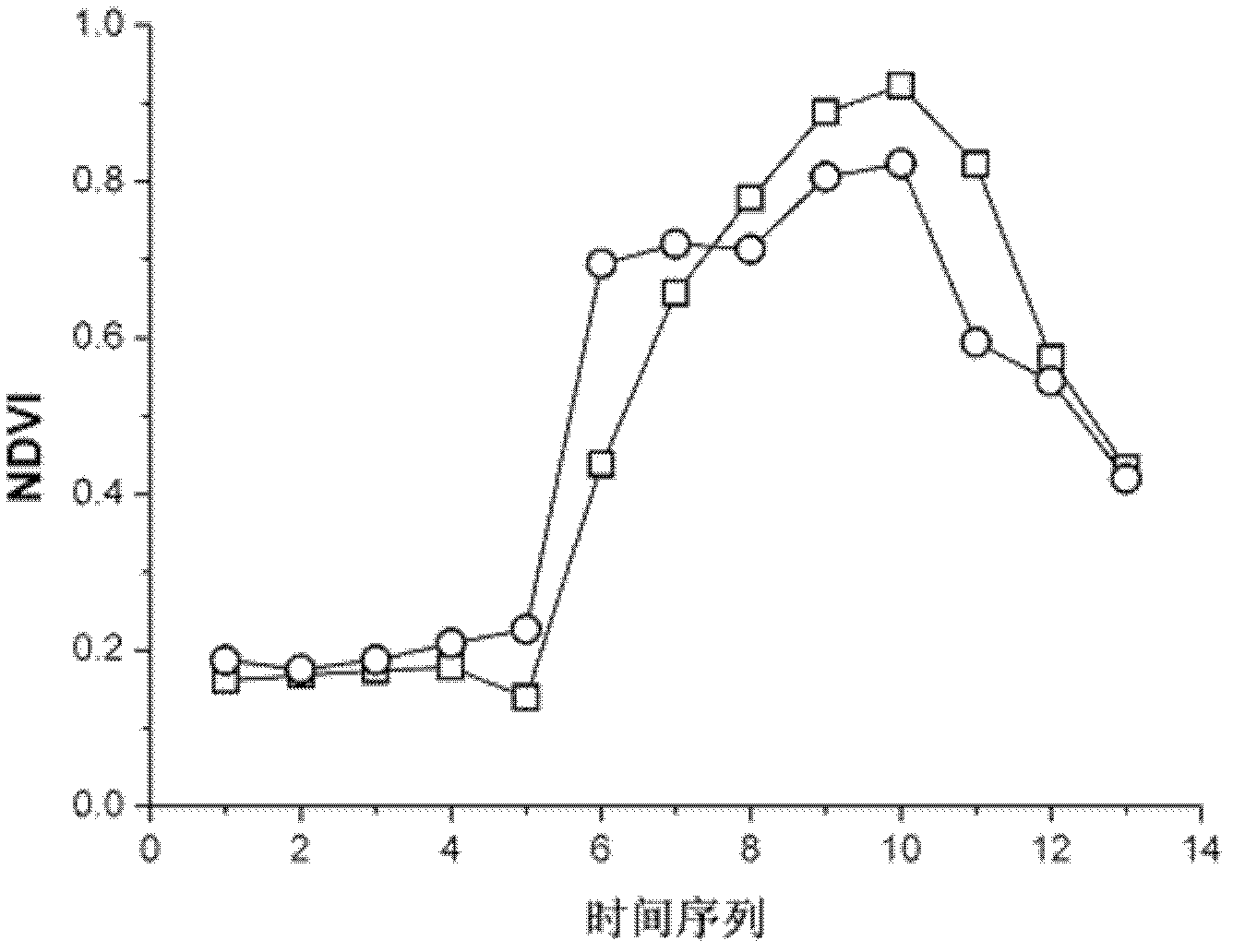

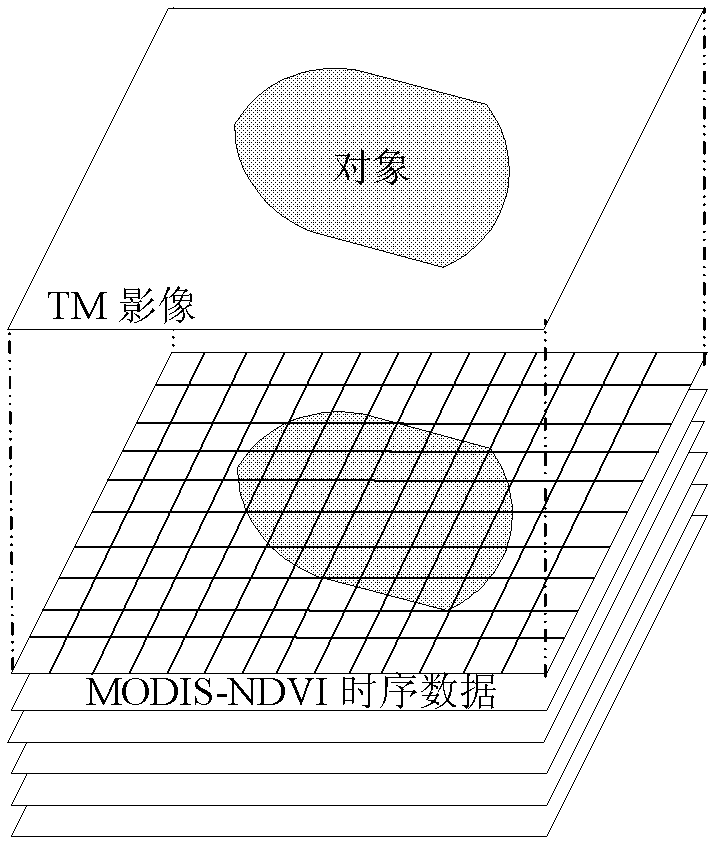

The invention discloses an object-oriented method for classifying remote sensing images that fuses high-spatial-resolution and high-temporal-resolution data. It addresses the problem that previous classification methods cannot distinguish land cover types that are different objects with the same spectrum, and are not suitable for remote sensing images with low to medium resolution. The method comprises the following steps: filtering with an SG (Savitzky-Golay) filter; determining the time series curves of typical vegetation from MODIS-NDVI (moderate resolution imaging spectroradiometer normalized difference vegetation index) data in the remote sensing image to be classified; segmenting a TM (thematic mapper) image, wherein each segmentation unit is used as an object; extracting the characteristic information of each object; extracting all non-vegetation objects; removing the non-vegetation objects, and using the remaining vegetation objects as polygon vectors to segment the MODIS-NDVI time series data, so as to obtain the phenological information corresponding to each vegetation object; determining the vegetation type to which each object belongs; and completing the land cover classification. The method can be used to distinguish land cover types.

Owner:NORTHEAST INST OF GEOGRAPHY & AGRIECOLOGY C A S

Land coverage change algorithm and system based on time-space analysis

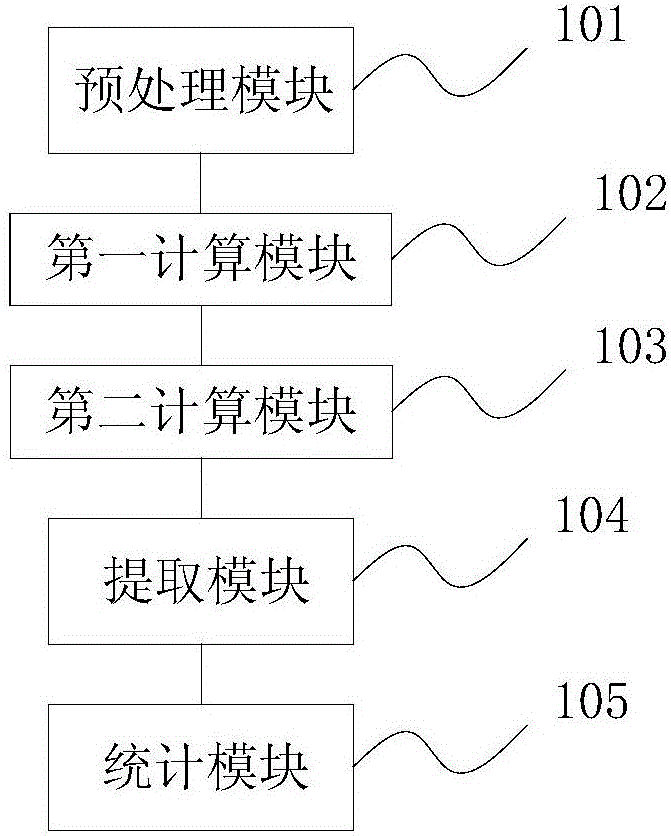

Inactive | CN106548146A | Improve automation | Improve monitoring accuracy | Image enhancement | Image analysis | Sensing data | Coverage Type

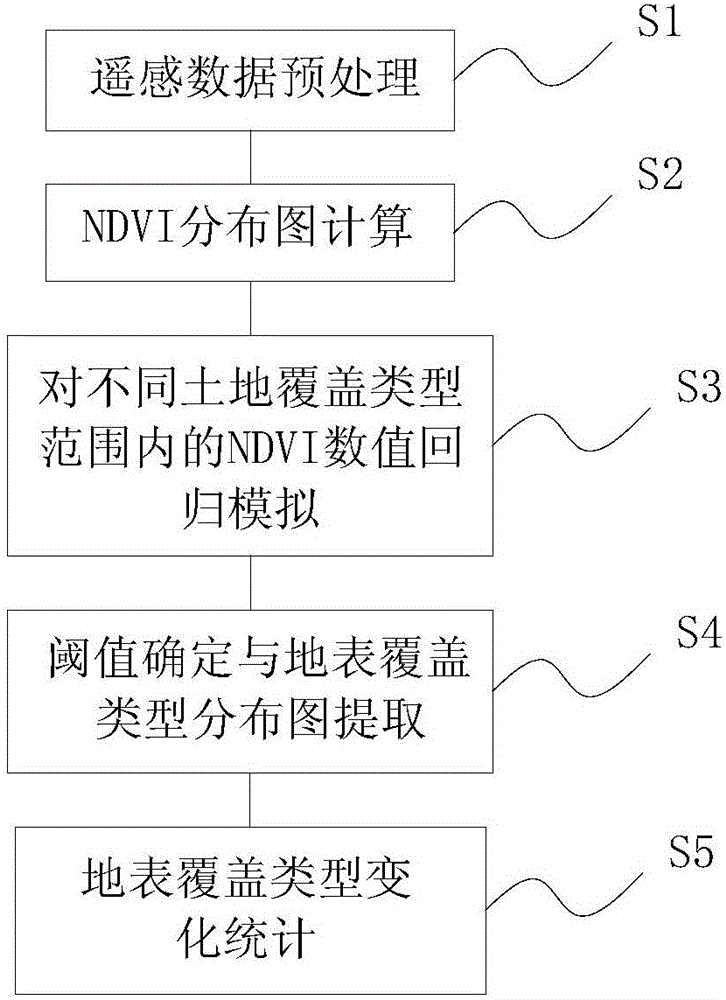

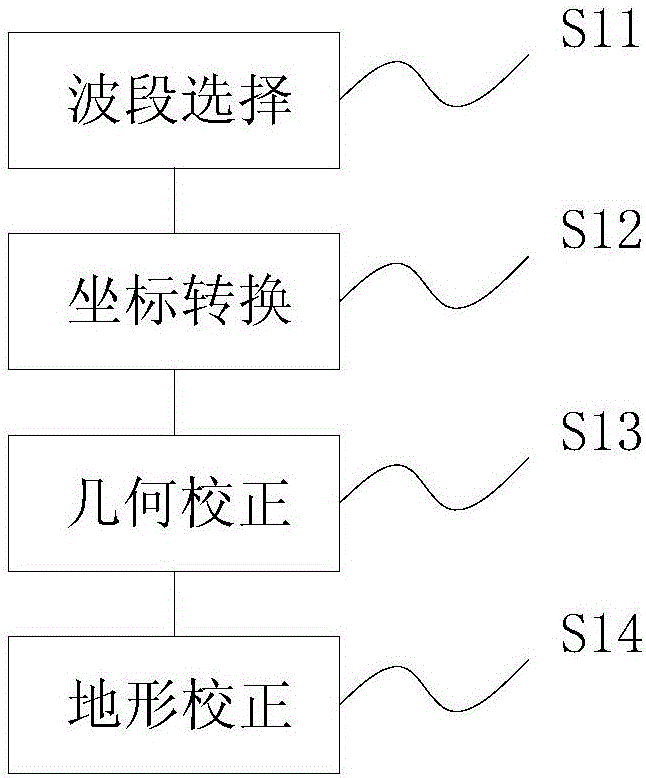

The invention discloses a land coverage change algorithm based on time-space analysis. The method comprises the following steps: S1, preprocessing remote sensing data; S2, calculating NDVI distribution maps; S3, performing regression simulation on NDVI values within the extent of each land coverage type; S4, determining thresholds and extracting land coverage type distribution maps; and S5, compiling land coverage type change statistics. Taking remote sensing images of different time phases and field-survey land data (such as the second national land survey data used in the embodiments of the invention) as a basis, the remote sensing images are preprocessed and normalized difference vegetation indices (NDVI) of the different time phases are obtained. Model analysis is performed on the NDVI data of the different time phases and the corresponding field-survey land data, the NDVI threshold range corresponding to each land type is determined, a clear land use type map is obtained, and land coverage change statistics are compiled.

Owner:BEIJING AEROSPACE TITAN TECH CO LTD
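
Steps S2, S4 and S5 of the abstract above can be sketched in a few lines. NDVI is computed from near-infrared and red reflectance as (NIR - red) / (NIR + red); the threshold ranges below are invented for illustration only, since the patent derives them by regression against field survey data (step S3), which is not reproduced here.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total


# Hypothetical NDVI ranges per land coverage type (lower bound inclusive).
THRESHOLDS = [
    ("water", -1.0, 0.0),
    ("built-up or bare", 0.0, 0.2),
    ("cropland or grass", 0.2, 0.5),
    ("forest", 0.5, 1.0),
]


def classify(value):
    """Map an NDVI value to the land coverage type whose range contains it."""
    for name, low, high in THRESHOLDS:
        if low <= value < high:
            return name
    return THRESHOLDS[-1][0]  # value == 1.0 falls in the top class


def change_statistics(before, after):
    """Count pixels for each (type_before, type_after) pair.

    before, after: lists of (nir, red) reflectance pairs for the same pixels
    at two time phases.
    """
    counts = {}
    for b, a in zip(before, after):
        key = (classify(ndvi(*b)), classify(ndvi(*a)))
        counts[key] = counts.get(key, 0) + 1
    return counts


stats = change_statistics(
    before=[(0.5, 0.1), (0.5, 0.1)],
    after=[(0.5, 0.1), (0.25, 0.2)],
)
```
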

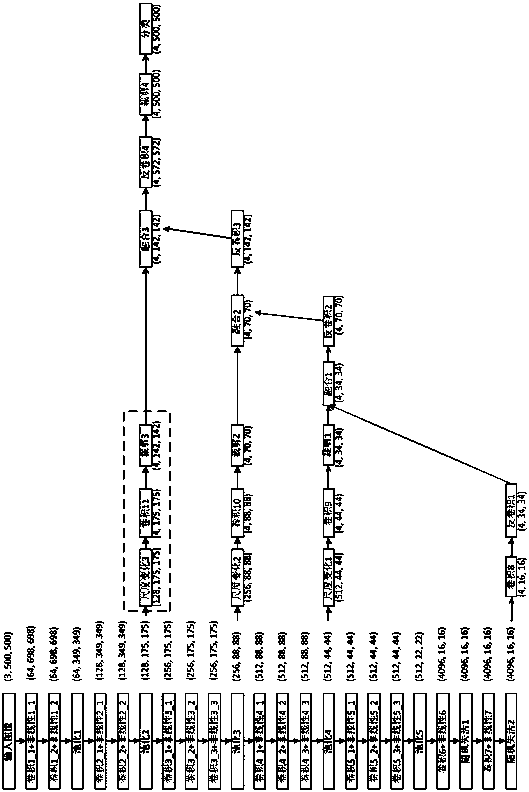

Full convolution network based remote-sensing image land cover classifying method

Inactive | CN108537192A | Improve classification performance | Practical | Scene recognition | Neural architectures | Stochastic gradient descent | Land cover

The invention relates to a full convolution network based remote-sensing image land cover classifying method. The method comprises the following steps: S1, performing data enhancement on a data set with limited data quantity, and generating a training set of which the data quantity and quality meet the training requirement; S2, combining the improved full convolution network FCN4s and the improved U-type full convolution network U-NetBN, and building a remote-sensing image land cover classifying model; S3, minimizing the cross entropy loss by stochastic gradient descent, and learning the optimal parameters of the model to obtain the trained remote-sensing image land cover classifying model; and S4, performing pixel-level classification prediction on the remote-sensing image to be predicted through the trained remote-sensing image land cover classifying model. According to the method, the properties of the FCN full convolution network and the U-Net full convolution network are combined, so that the remote-sensing image land cover classifying performance can be improved.

Owner:FUZHOU UNIV
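
The training objective named in the abstract above, a cross entropy loss over per-pixel class predictions, can be illustrated without any deep learning framework. The sketch below assumes the network outputs one raw score per class for each pixel; the function names are hypothetical and the networks themselves (FCN4s, U-NetBN) are not modelled.

```python
import math


def softmax(scores):
    """Convert raw class scores to probabilities (shifted for stability)."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def pixel_cross_entropy(score_map, label_map):
    """Mean cross entropy over pixels.

    score_map: list of per-pixel score lists (one score per class).
    label_map: list of true class indices, one per pixel.
    """
    losses = [-math.log(softmax(scores)[label])
              for scores, label in zip(score_map, label_map)]
    return sum(losses) / len(losses)


# With equal scores for two classes the loss is ln(2), the "no information" case.
uninformed = pixel_cross_entropy([[0.0, 0.0]], [0])
# A confident, correct prediction gives a loss close to zero.
confident = pixel_cross_entropy([[10.0, 0.0]], [0])
```
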

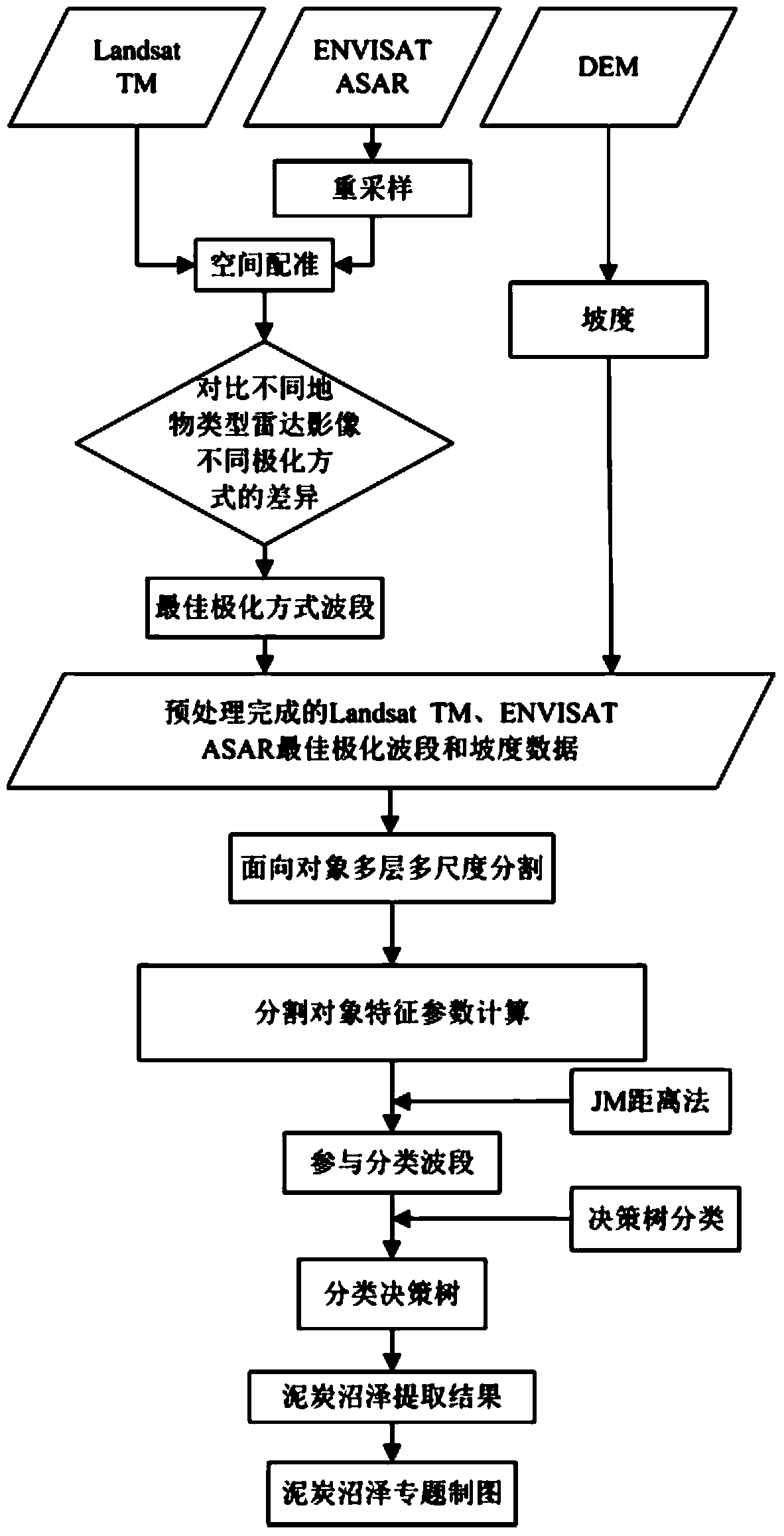

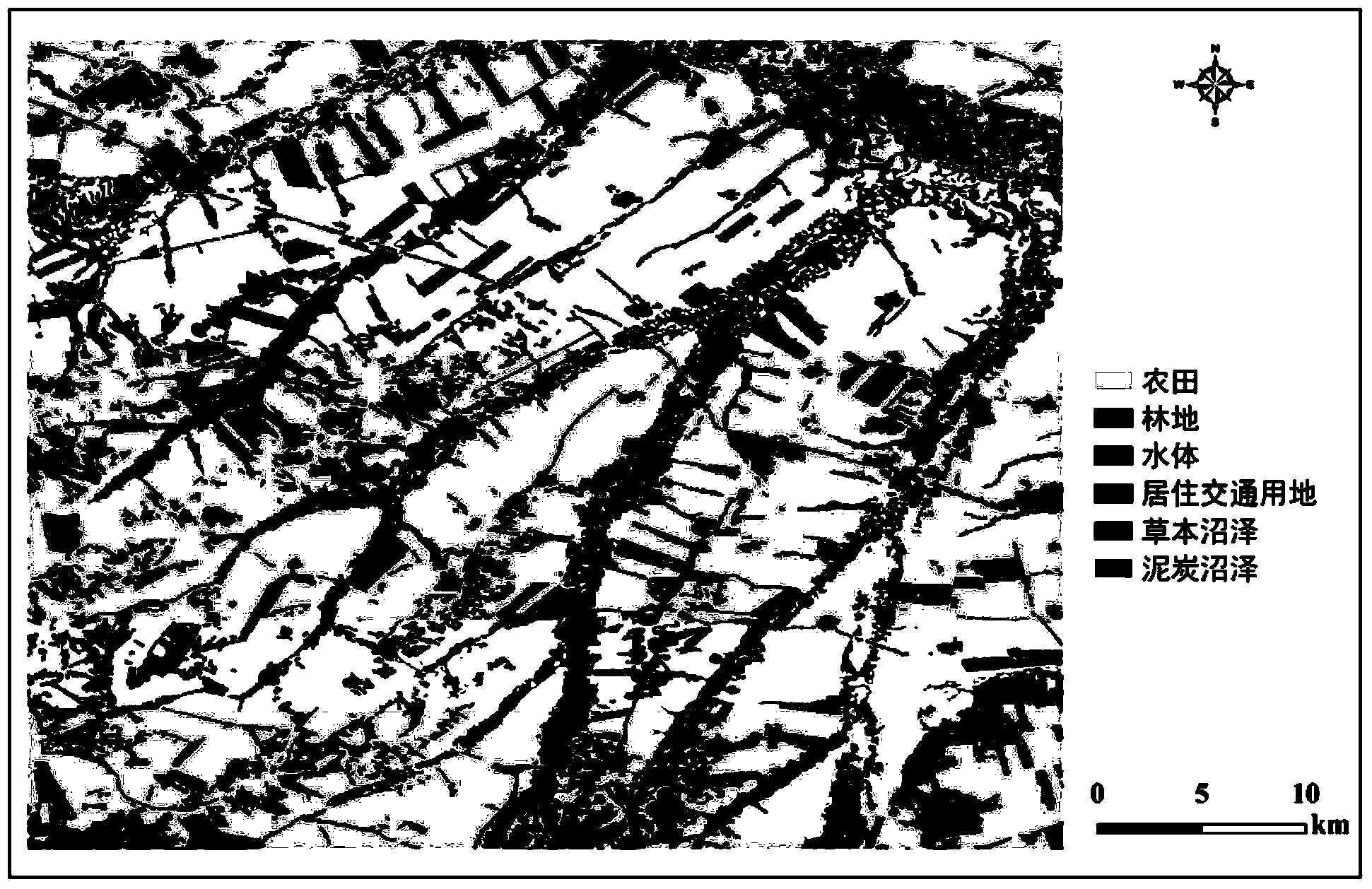

Peat bog information extracting method based on ENVISAT ASAR, Landsat TM and DEM data

Active | CN104361338A | Automatic fast and accurate extraction | Automatic Extraction Fast | Scene recognition | Land cover | Peat

The invention relates to peat bog information extracting methods, in particular to a peat bog information extracting method based on ENVISAT ASAR, Landsat TM and DEM data, and solves the problem that peat bog and other bog types cannot be distinguished by a conventional method. The peat bog information extracting method includes step 1, preprocessing Landsat TM data; step 2, preprocessing ENVISAT ASAR data; step 3, re-sampling the ENVISAT ASAR data; step 4, acquiring an ENVISAT ASAR image; step 5, acquiring gradient data; step 6, extracting the backscattering coefficient; step 7, determining the optimal polarization mode waveband of the ENVISAT ASAR image; step 8, acquiring a division unit; step 9, extracting feature parameters; step 10, determining the optimal classification waveband; step 11, establishing a classification decision-making tree; step 12, generating a land cover type vector file; step 13, making a peat bog map. The peat bog information extracting method is applied to the field of peat bog information extracting.

Owner:NORTHEAST INST OF GEOGRAPHY & AGRIECOLOGY C A S

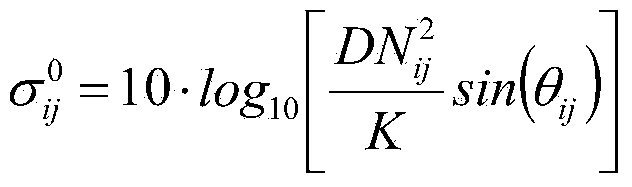

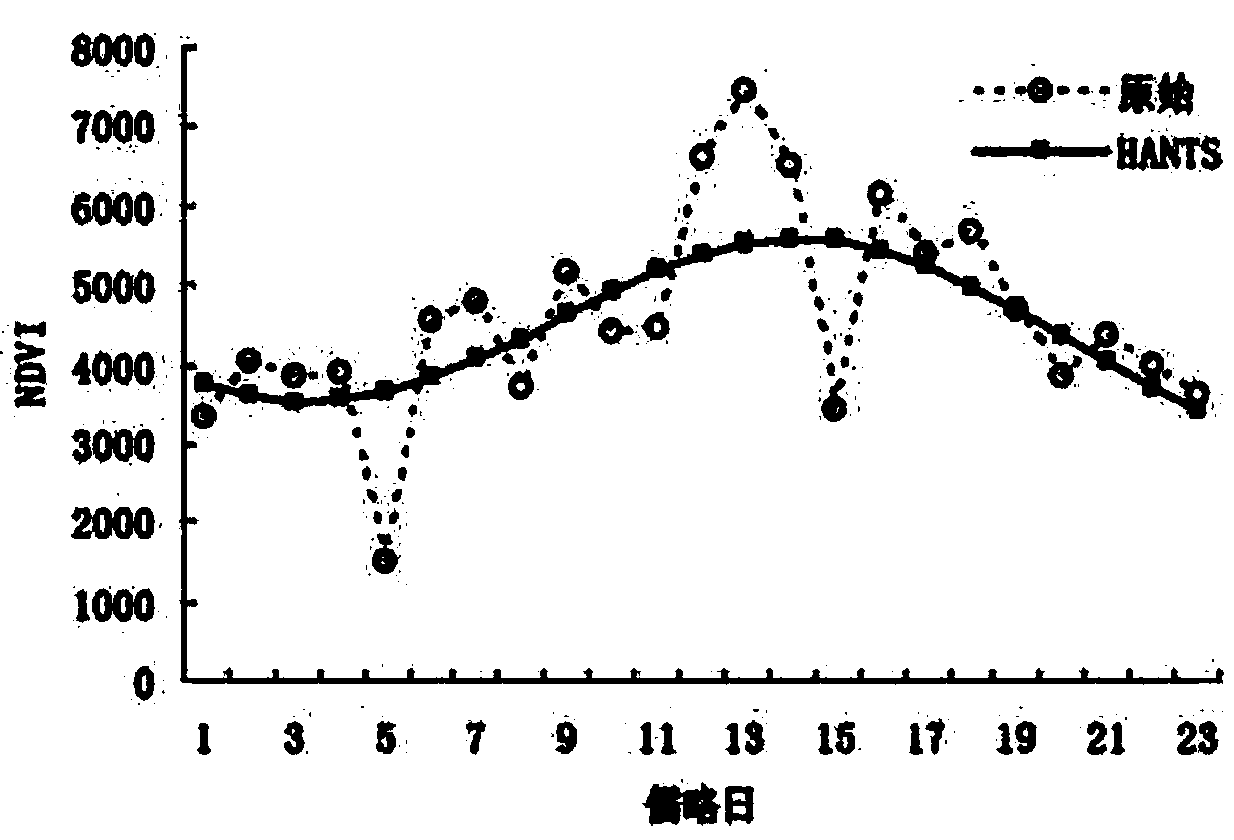

Land cover classification method based on MODIS time series data

Inactive | CN104318270A | High precision | Easy to identify | Character and pattern recognition | Vegetation | Land cover

The invention discloses a land cover classification method based on MODIS time series data, and relates to the field of land cover classification. The method aims at solving the problems that a traditional method takes a long time, produces a negative bias in the vegetation index, and reduces the accuracy of the SG reconstruction result. The land cover classification method based on the MODIS time series data specifically includes the following steps: (1) building an original curve; (2) filtering the original curve to form a fitted initial curve; (3) building a cloudless image two-dimensional array of pixels of the initial curve; (4) setting a threshold value T, wherein Y is not equal to y; (5) processing the initial curve; (6) obtaining a rebuilt NDVI annual variation curve; (7) extracting vegetation growth season parameters for forming a feature image; (8) determining a final voting classification result. The land cover classification method is used for the land cover classification field based on the MODIS time series data.

Owner:NORTHEAST FORESTRY UNIVERSITY

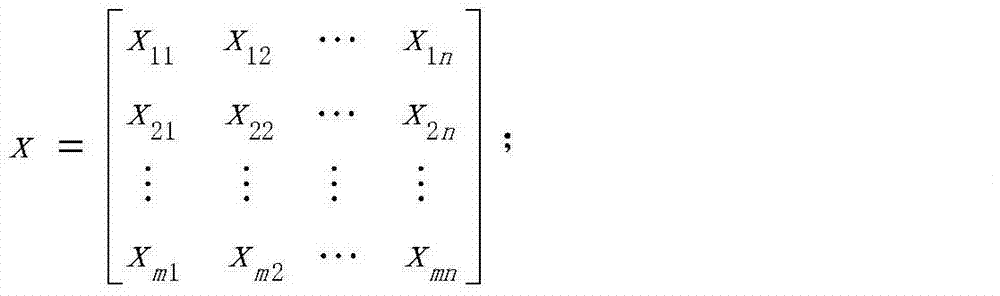

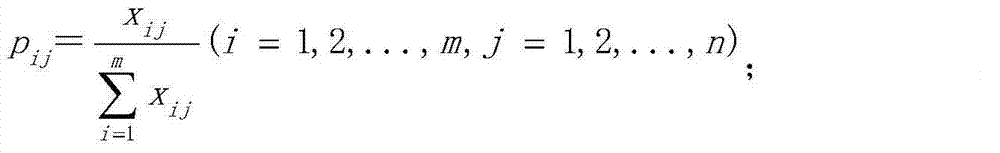

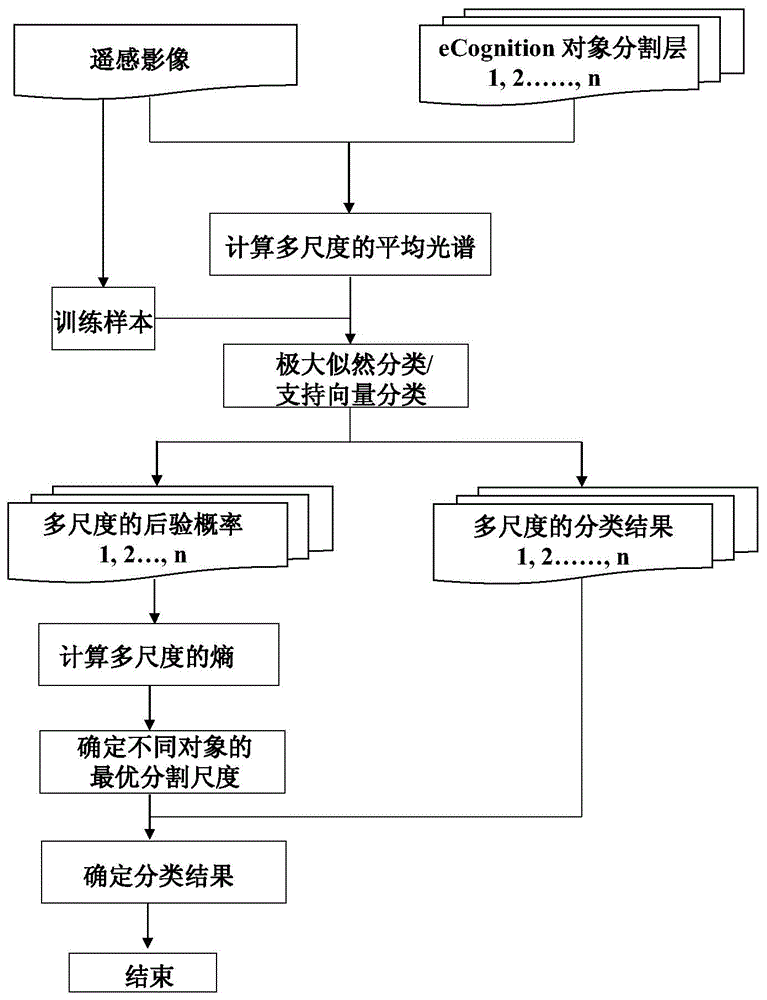

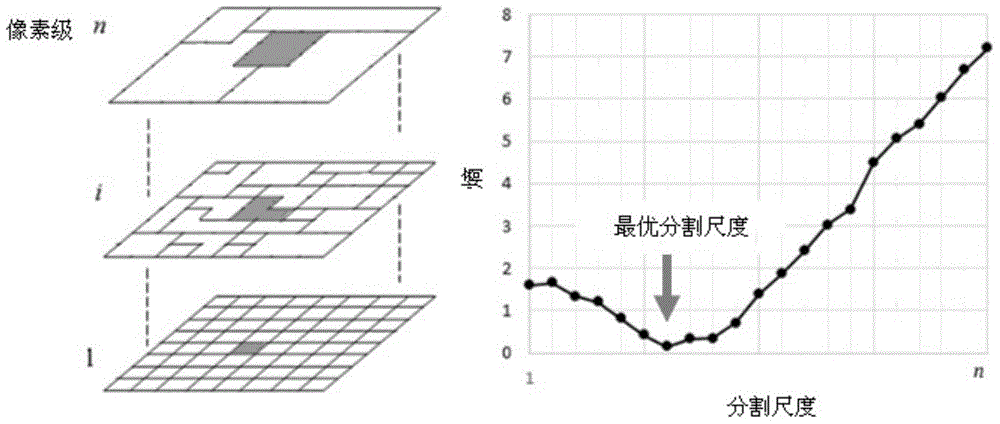

Optimum segmentation dimension determining method for remotely-sensed image land cover classification

Inactive | CN104881677A | Implement automatic selection | Overcoming the problem of 'salt and pepper' noise | Character and pattern recognition | Land cover | Classification methods

The invention provides an optimum segmentation dimension determining method for remotely-sensed image land cover classification. The method comprises steps of multi-dimension segmentation and classification of remotely-sensed images and optimum dimension selection based on entropy information. The method performs remotely-sensed image classification by integrating a pixel-based classification method and an object-oriented classification method and by fully utilizing sample information, effectively overcomes the problem that a conventional pixel-oriented method generates a large amount of salt-and-pepper noise, and achieves automatic selection of the optimum object dimension. The invention provides an effective method for land cover mapping.

Owner:BEIJING NORMAL UNIVERSITY

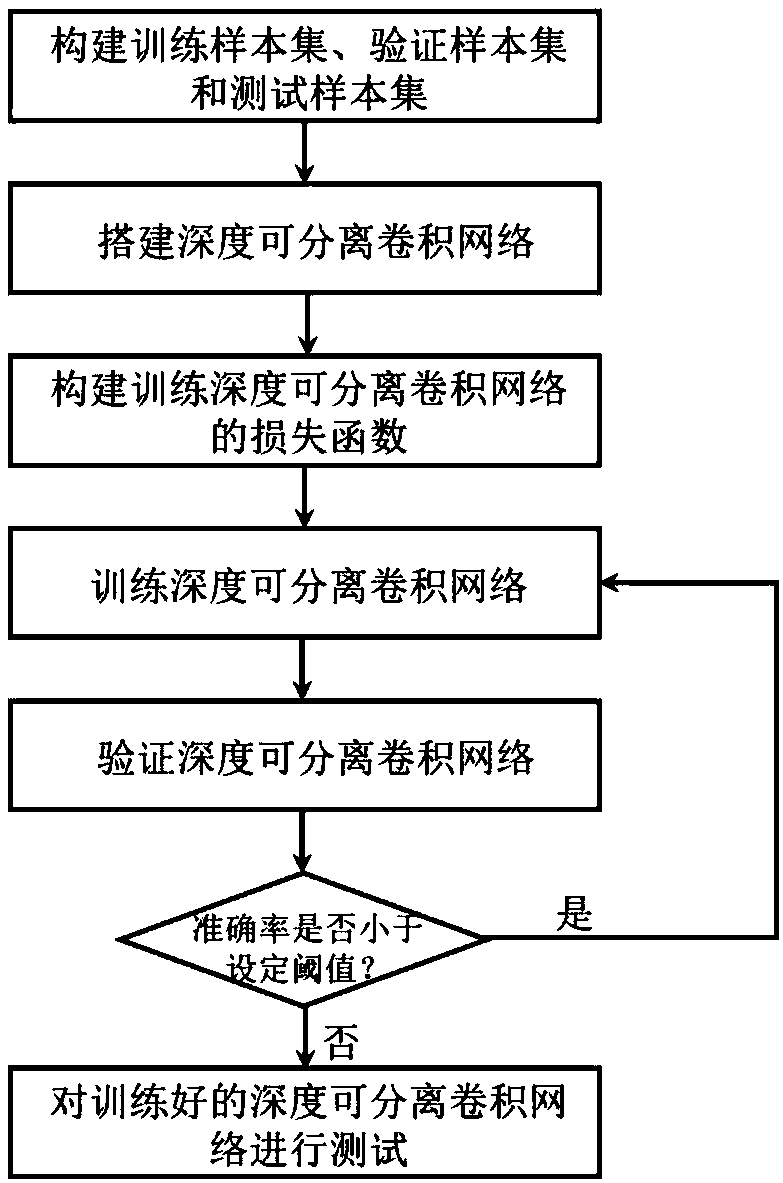

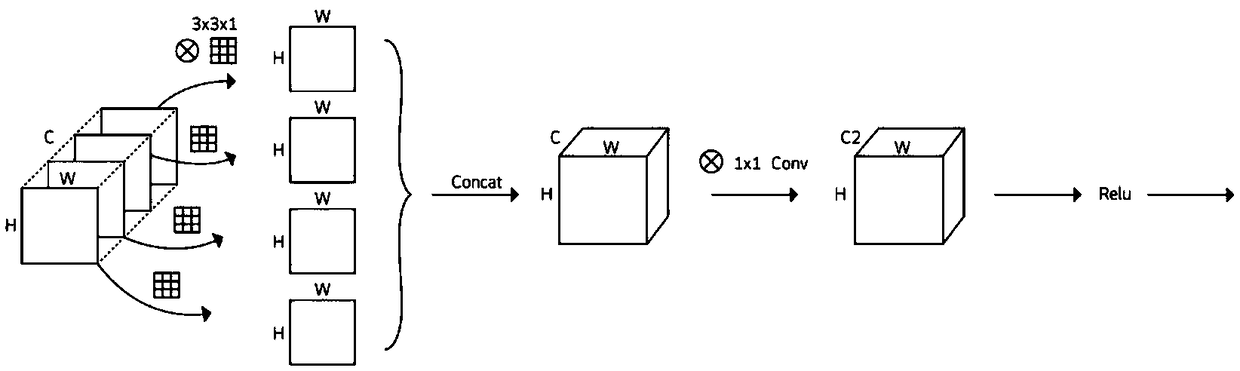

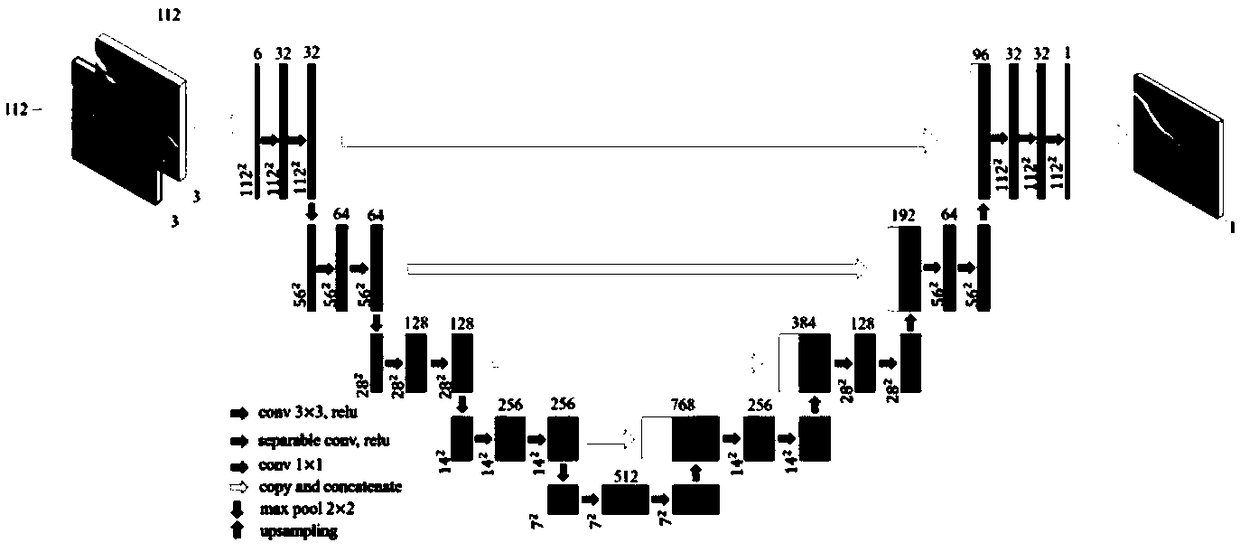

Image change detection method based on depth-separable convolution network

Active | CN108846835A | Enhance expressive ability | Strong discrimination | Image enhancement | Image analysis | Video monitoring | Pattern recognition

The invention provides an image change detection method based on a depth-separable convolution network, which is used for solving the technical problem of low detection accuracy existing in the conventional image change detection methods. The implementation steps include: constructing a training sample set, a verifying sample set and a test sample set; using a variant U-Net of a full convolution network as a basic network to establish a depth-separable convolution network; constructing a loss function for training the depth-separable convolution network; training, testing and verifying the depth-separable convolution network; and using the verified, finally trained depth-separable convolution network to obtain a change detection result graph. The depth-separable convolution network extracts rich image feature semantics and structure information, has strong image expression ability and discrimination, can improve the change detection accuracy, and can be used in the technical fields of land cover detection, disaster assessment, video monitoring and the like.

Owner:XIDIAN UNIV

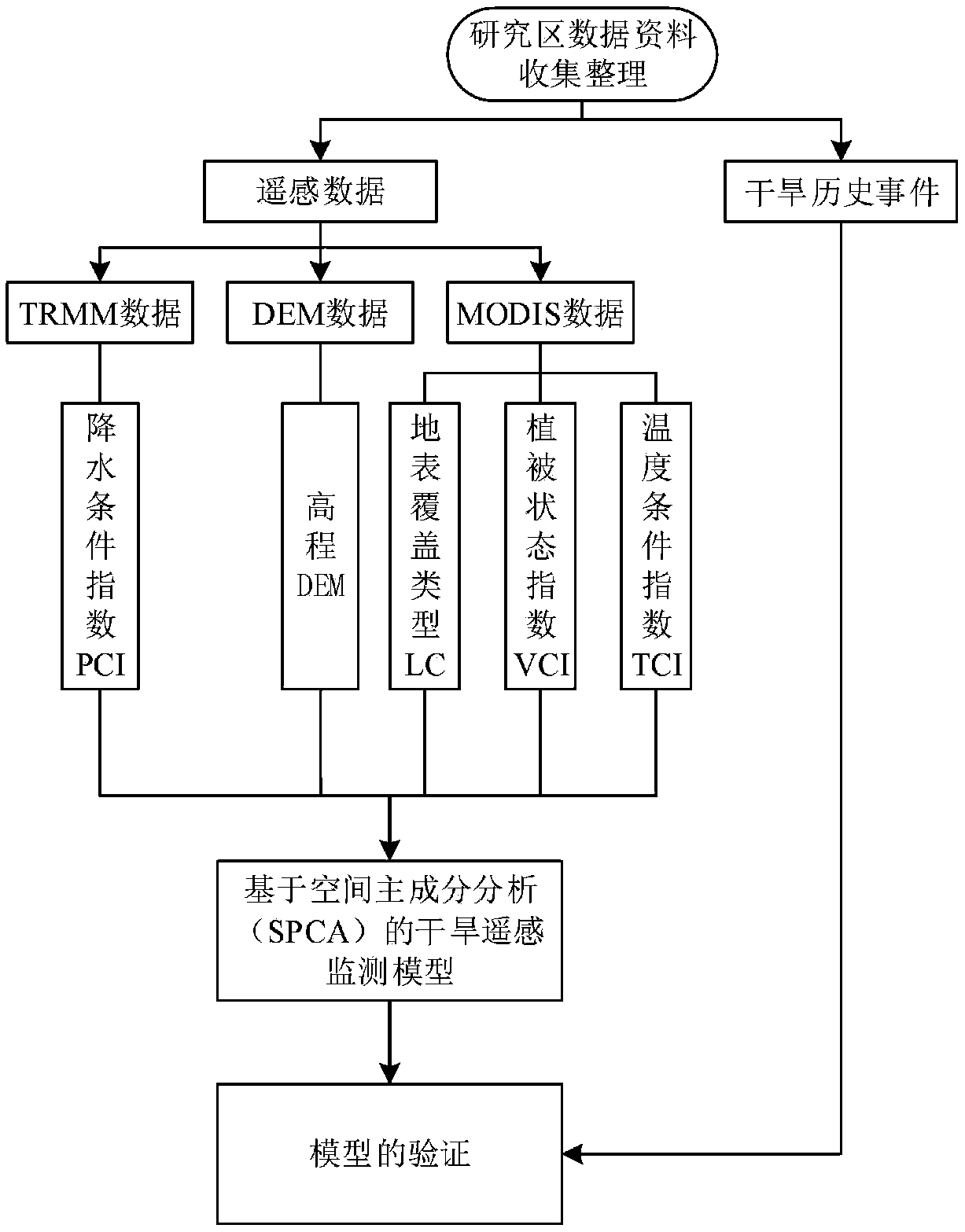

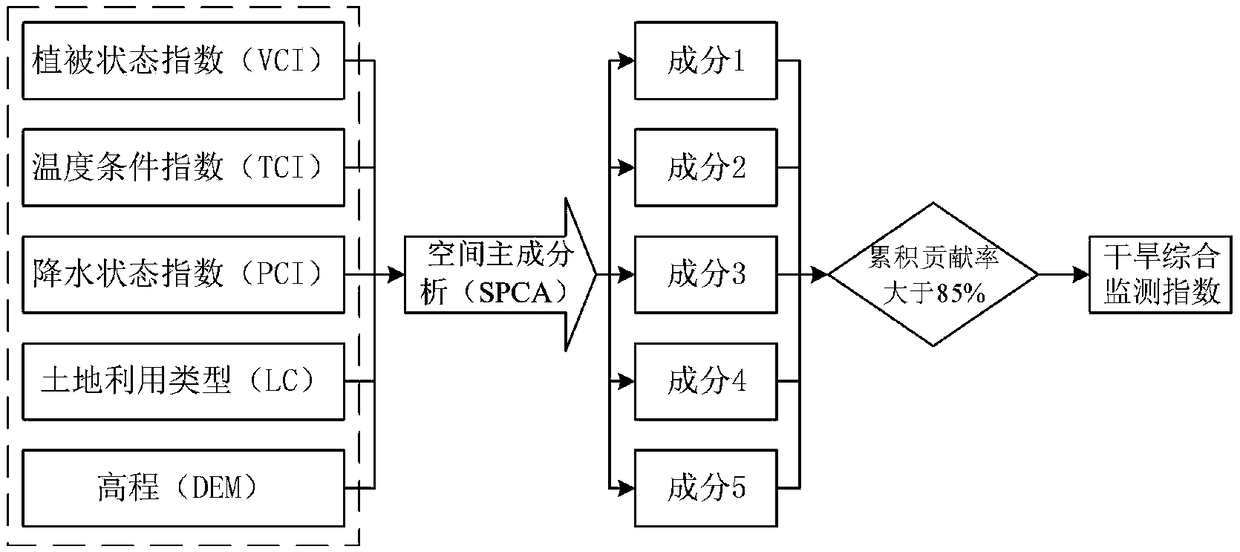

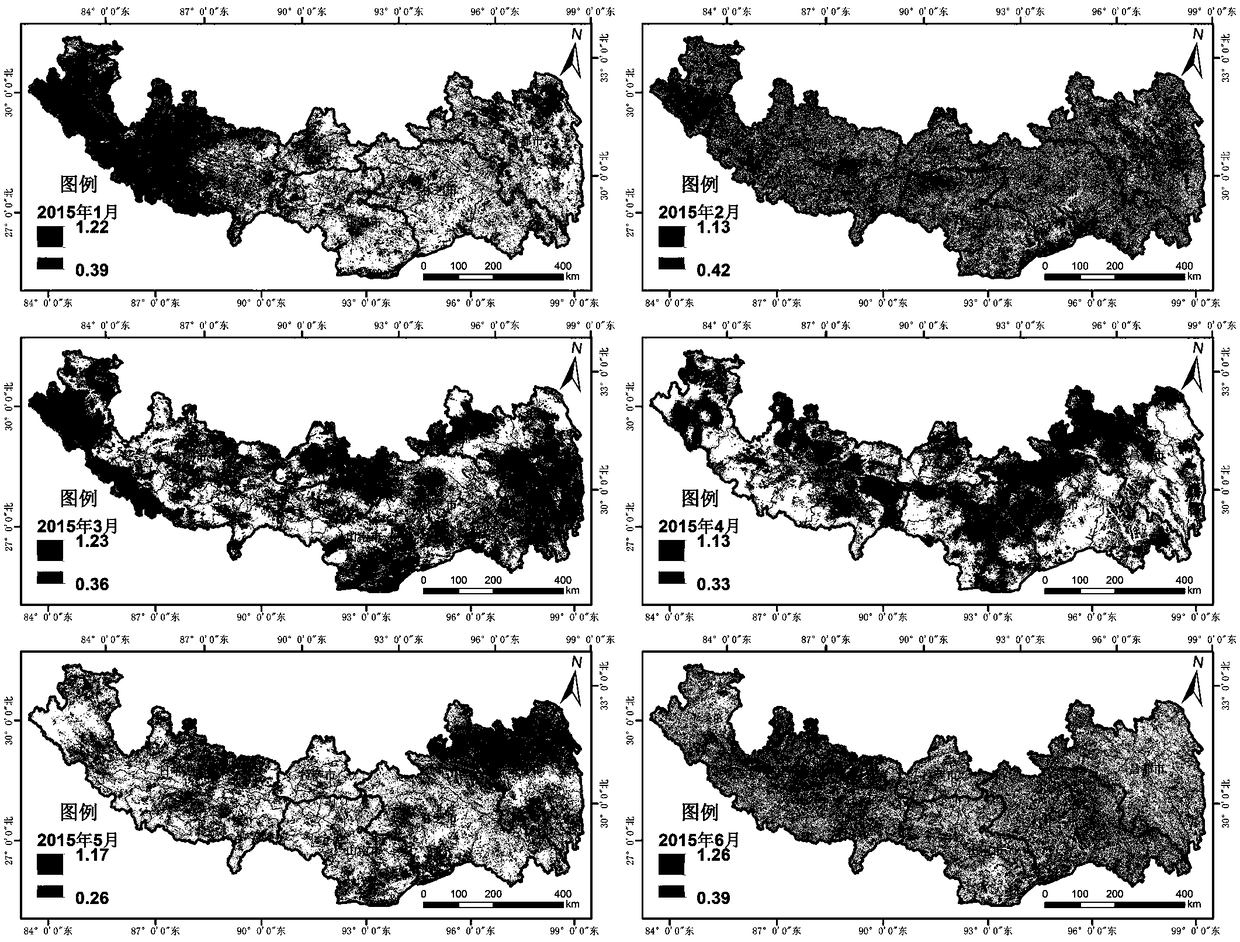

Drought remote sensing monitoring method suitable for high altitude areas

Active | CN108760643A | Reduce the impact of uneven distribution | Material analysis by optical means | Kernel principal component analysis | Vegetation

The invention discloses a drought remote sensing monitoring method suitable for high altitude areas. The method comprises: S10, collecting data of a target area, S20, preprocessing the data obtained in step S10 to obtain an enhanced vegetation index, a surface temperature index, a land cover type and downscaling rainfall data, S30, calculating a vegetation state index, a temperature condition index, a rainfall state index, a reclassified land cover type and elevation by the data in step S20, and S40, constructing a drought remote sensing monitoring model based on spatial principal component analysis. The method comprehensively considers various factors affecting drought, wherein the various factors include a vegetation factor, a surface temperature factor, a rainfall factor, a land cover type factor and a topographic factor, a drought monitoring model is constructed by a spatial principal component analysis method, can effectively eliminate variables with large correlation in the selected variables and can extract few unrelated comprehensive indicators.

Owner:SOUTHWEST PETROLEUM UNIV
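
The abstract above does not state how its vegetation, temperature and rainfall indices are computed. A common convention for such condition indices is min-max scaling of the current value against the historical range for the same pixel and period, inverted for temperature because hotter surfaces indicate more stress. The sketch below follows that convention as an assumption; `condition_index` is a hypothetical helper, not the patent's formula.

```python
def condition_index(value, history, inverted=False):
    """Scale a value to 0-100 against its historical minimum and maximum.

    inverted=True is used for quantities where a high value means worse
    conditions (for example land surface temperature).
    """
    low, high = min(history), max(history)
    if high == low:
        return 50.0  # no historical spread: treat as neutral
    if inverted:
        return 100.0 * (high - value) / (high - low)
    return 100.0 * (value - low) / (high - low)


# Vegetation index halfway through its historical range.
vegetation = condition_index(0.4, [0.2, 0.4, 0.6])
# Surface temperature (kelvin) at its historical midpoint, inverted scale.
temperature = condition_index(300.0, [290.0, 300.0, 310.0], inverted=True)
# Rainfall at the historical minimum scores 0.
rainfall = condition_index(5.0, [5.0, 20.0, 40.0])
```
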

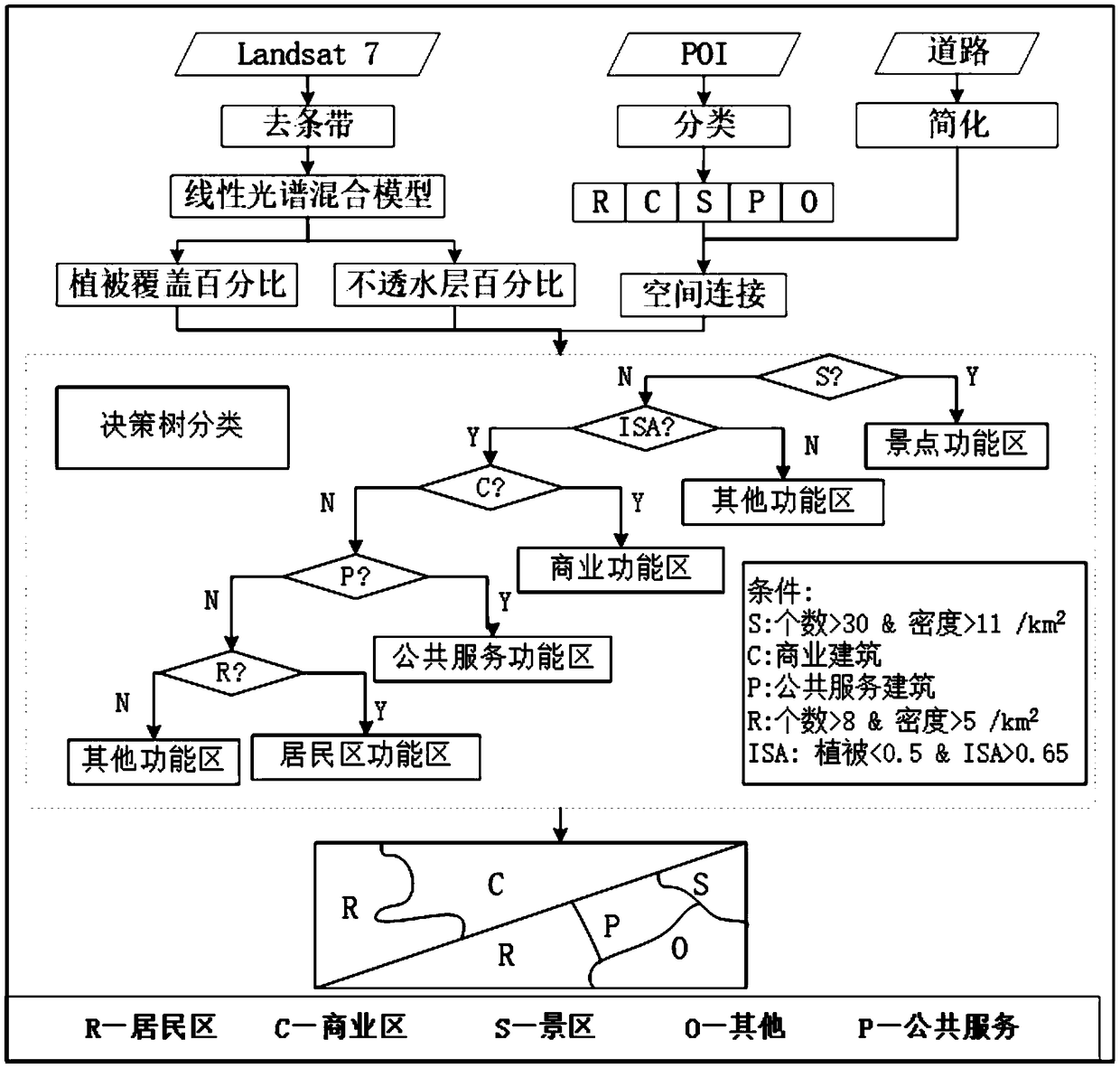

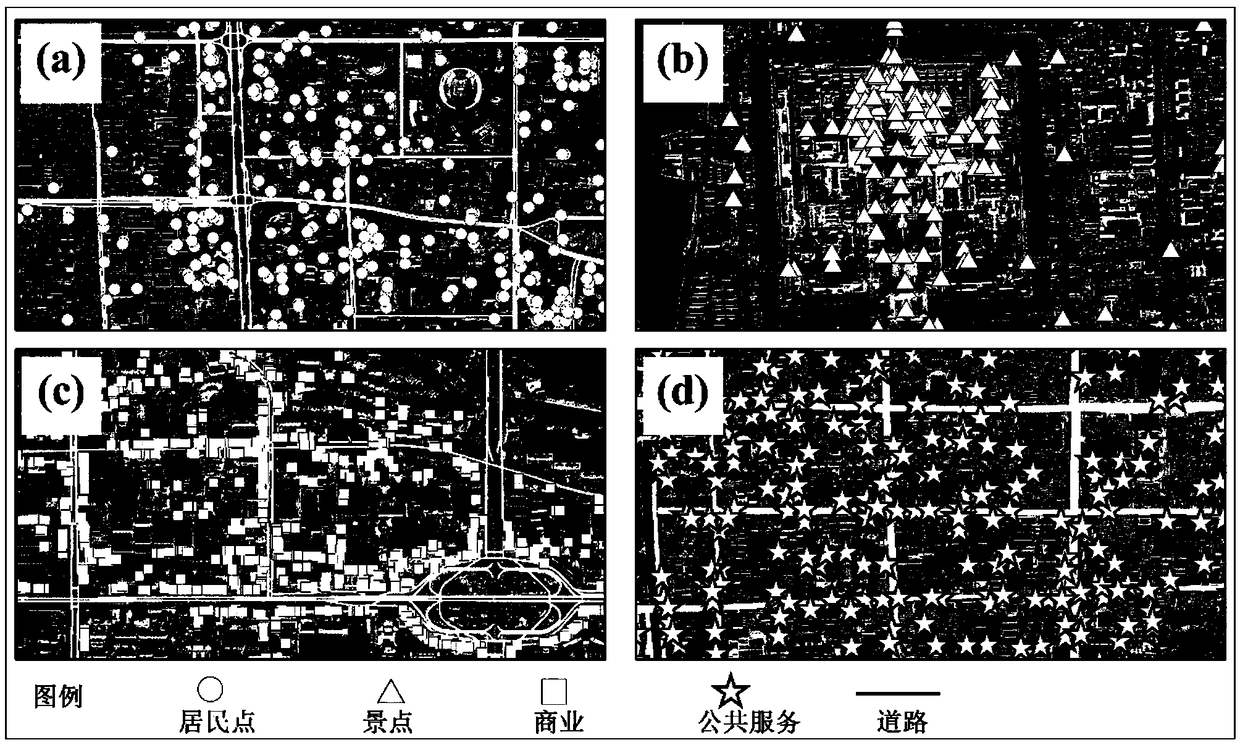

POI (Point of interest) and remote-sensing image integrated urban function zone division method

Active | CN108764193A | Quick division | Improve match | Character and pattern recognition | Sensing data | Land cover

The invention discloses a POI (Point of interest) and remote-sensing image integrated urban function zone division method. The method comprises the following steps of (1), obtaining POI data, remote-sensing images and space geographic entity data of a region; (2), selecting classes sensitive to urban function zone division from class attributes of the POI data, thereby forming evaluation classes, forming function POI data based on the POI data corresponding to the evaluation classes, matching the function POI data with the space geographic entity data, thereby obtaining an initial distribution structure of each class of POI data in each geographic entity; (3), extracting land cover data from the remote-sensing images of the region; (4), making a decision tree classification rule based on the initial distribution structure of each class of POI data in the (2) and the land cover data in the (3); and (5), dividing urban function zones of the region according to the decision tree classification rule. According to the method, on the basis of a decision tree classification algorithm, the POI data and Landsat remote-sensing data are combined, so the urban function zones are rapidly divided.

Owner:BEIJING NORMAL UNIVERSITY

Land cover classification method for high-resolution remote sensing image based on parallel algorithm

Active | CN107909039A | Improve adaptability | Capable of parallel computing | Image enhancement | Image analysis | Land cover | Parallel algorithm

The invention discloses a land cover classification method for a high-resolution remote sensing image based on a parallel algorithm. The method comprises the steps that S1, high-resolution remote sensing image data is segmented according to the number of computers, and high-resolution remote sensing image blocks after segmentation are obtained; S2, all the high-resolution remote sensing image blocks are allocated to m processors based on an OpenMP parallel framework, and land cover classification processing is executed concurrently; and S3, according to the data segmentation principle, all high-resolution remote sensing image block data is merged, and a final land cover classification result is obtained. Through the method, the data is automatically segmented according to the data size and computer memory using conditions, a configuration file is used to organize a classification algorithm process, a parallel classification algorithm is realized, and therefore the method can adapt to a high-resolution land cover charting task with an extremely large data volume and land object space fine division.

Owner:WUHAN UNIV
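
The split, classify concurrently, merge pattern in steps S1 to S3 above can be sketched with Python's standard `concurrent.futures` module. Note the substitution: the patent uses OpenMP, while this illustration uses a thread pool, and the per-pixel threshold classifier is only a stand-in for a real land cover classifier. All function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def split_rows(image, blocks):
    """S1: cut the image (a list of pixel rows) into row blocks."""
    size = max(1, -(-len(image) // blocks))  # ceiling division, at least 1 row
    return [image[i:i + size] for i in range(0, len(image), size)]


def classify_block(block, threshold=0.5):
    """Stand-in classifier: label each pixel 1 if it reaches the threshold."""
    return [[1 if px >= threshold else 0 for px in row] for row in block]


def classify_parallel(image, workers=2):
    """S2 and S3: classify blocks concurrently, then merge them in order."""
    blocks = split_rows(image, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(classify_block, blocks))  # map keeps block order
    return [row for block in results for row in block]


image = [[0.1, 0.9], [0.6, 0.2], [0.7, 0.7]]
labels = classify_parallel(image, workers=2)
```

Because `pool.map` returns results in submission order, the merge step reduces to concatenating the blocks, which mirrors merging "according to the data segmentation principle".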

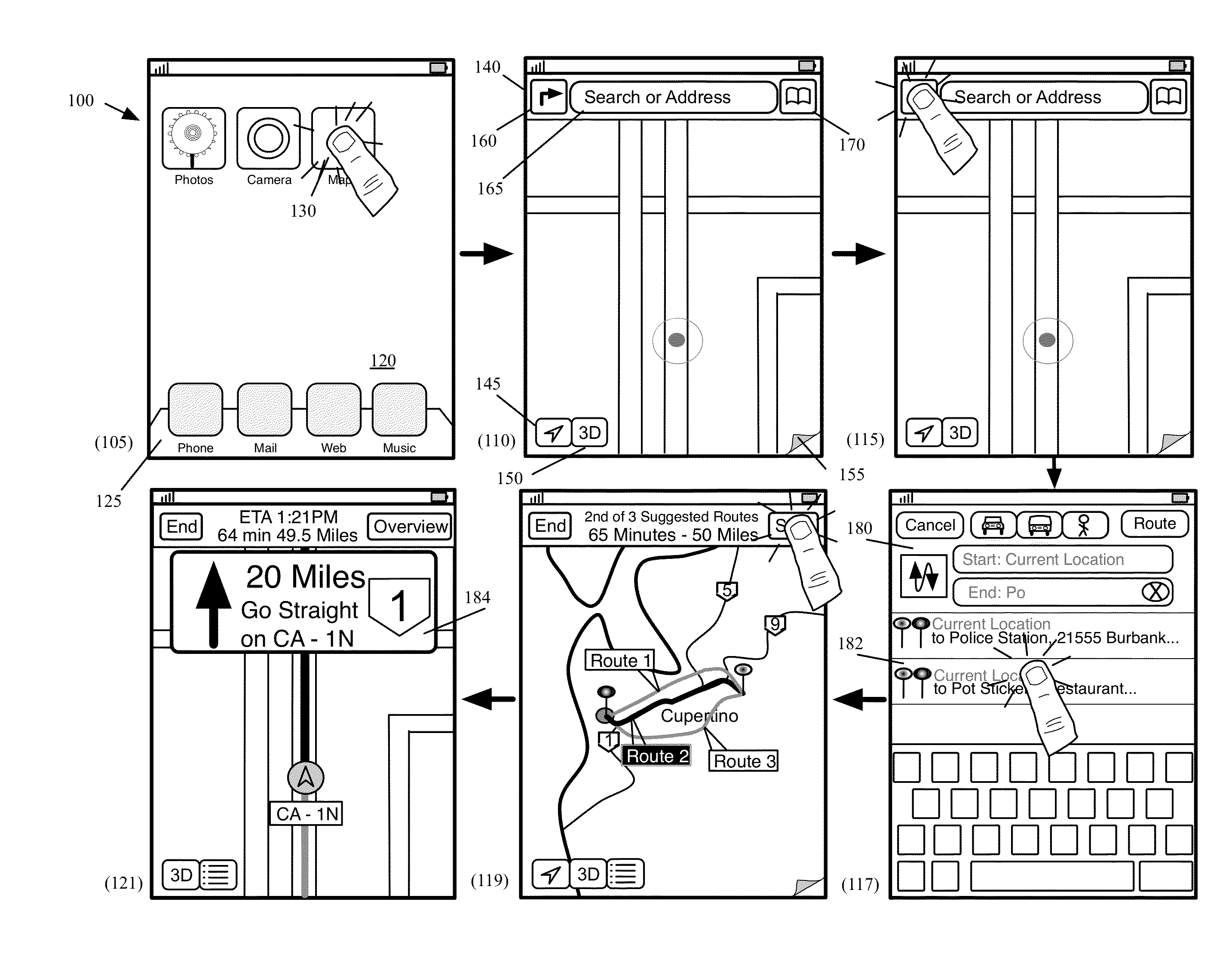

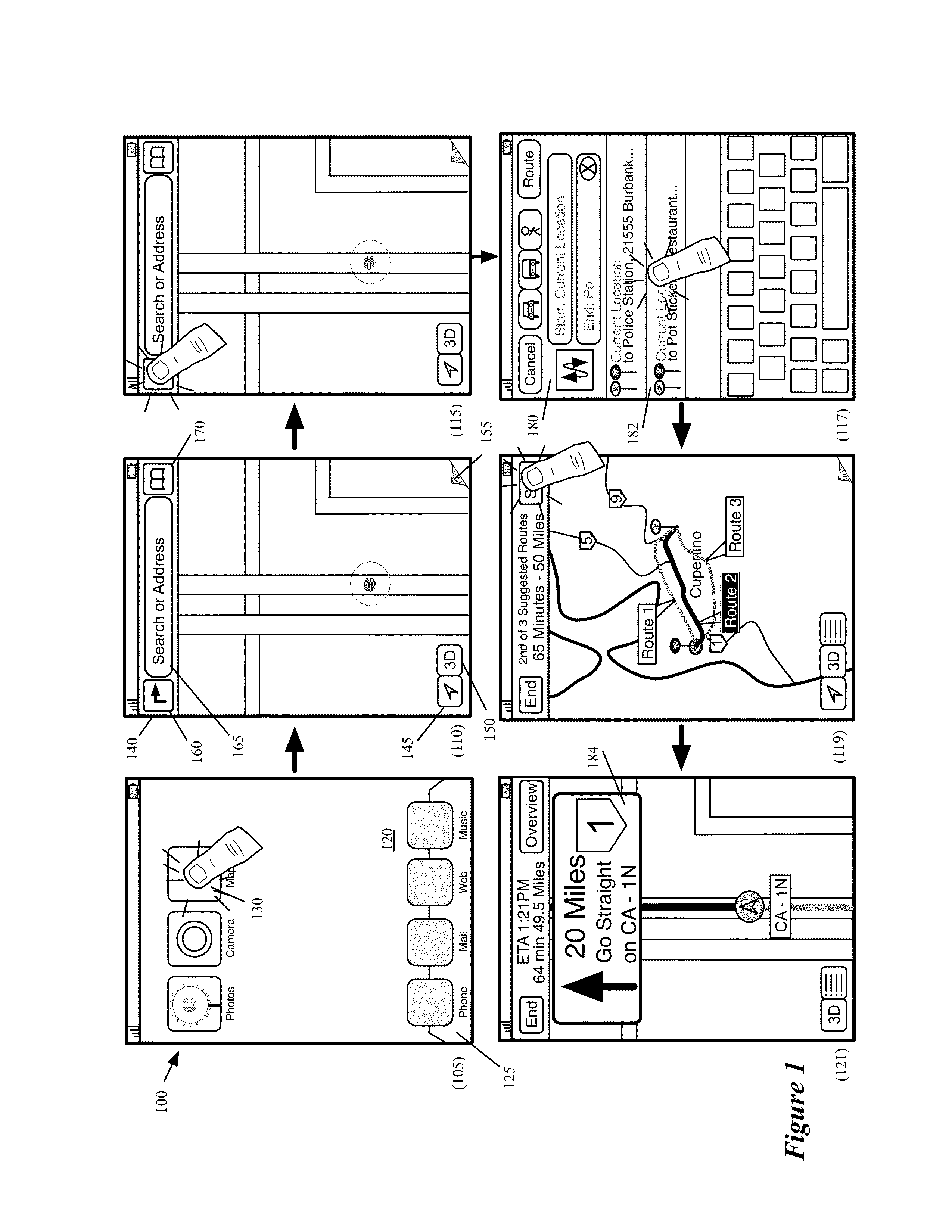

Generating Land Cover for Display by a Mapping Application

Active | US20130328883A1 | Instruments for road network navigation | Drawing from basic elements | Land cover | Web mapping

Some embodiments provide a method for conflating geometries to a road in a map region for an electronic mapping service. The method receives a first geometry representing a road. The method receives several geometries arranged such that a gap representing the road is between the geometries. The gap is not aligned with the first geometry representing the road. The method expands the geometries toward the first geometry such that the geometries converge at the first geometry. The road geometry is for drawing over the plurality of other geometries by a client mapping application.

Owner:APPLE INC

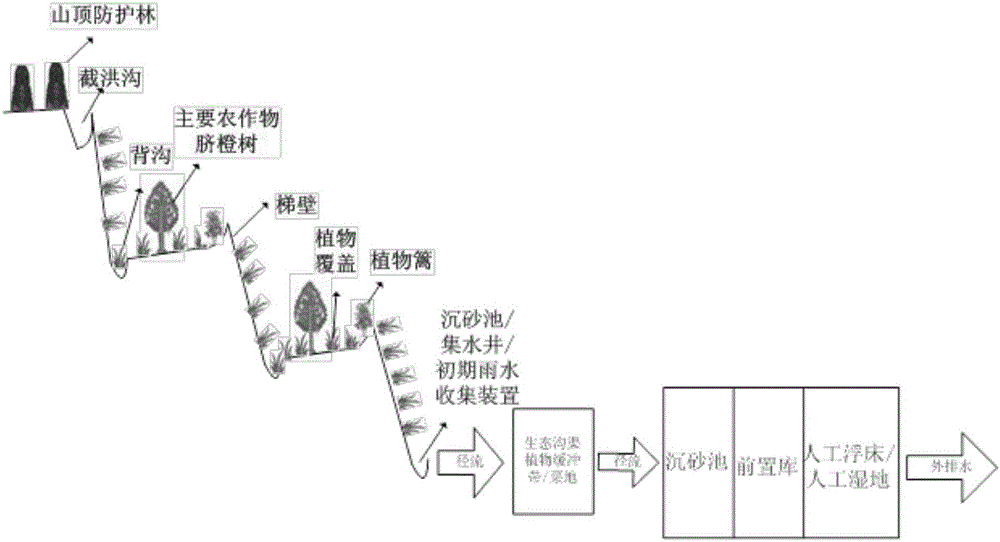

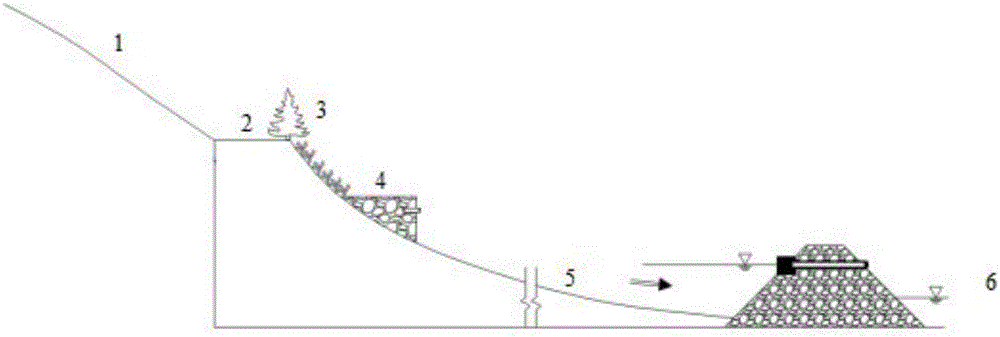

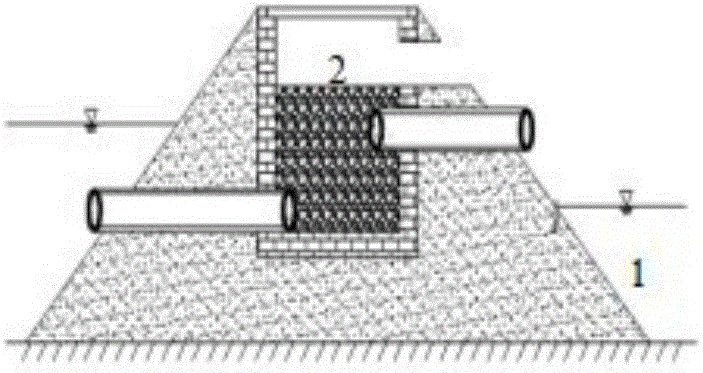

Method for treating agricultural non-point source pollution in hilly areas

The invention provides a systematic method for treating agricultural non-point source pollution in hilly areas. According to the systematic method, hilly uplands are reclaimed as counter-slope terrace lands, terrace lands per line are kept at equal height, and different lines of terrace lands are parallel mutually and are perpendicular to the inclination direction of upslope lands. The systematic method includes source reduction, runoff interception, process control and nutrient utilization, wherein source reduction refers to substitution for and reduction of chemical pesticides and chemical fertilizers; runoff interception is implemented by planting hedgerow on the external slope surfaces of the counter-slope terrace lands and planting land cover plants on the slope surfaces, dorsal furrows and terrace ridges; in process control, flood intercepting trenches, longitudinal drainage ditches and the dorsal furrows are formed and grit chambers, water-collecting wells and primary rainwater collecting devices are arranged to form a network; nutrient utilization is implemented by a pretreatment unit and a main treatment unit. The systematic method for treating the agricultural non-point source pollution in the hilly areas has the advantages that onsite terrain conditions are fully utilized, and corresponding ecological control and treatment methods are adopted in different forming phases of runoff and pollution, so that runoff nutrient cycling utilization efficiency is improved and production and discharge of the non-point source pollution are reduced.

Owner:JIANGXI ACADEMY OF ENVIRONMENTAL PROTECTION SCI

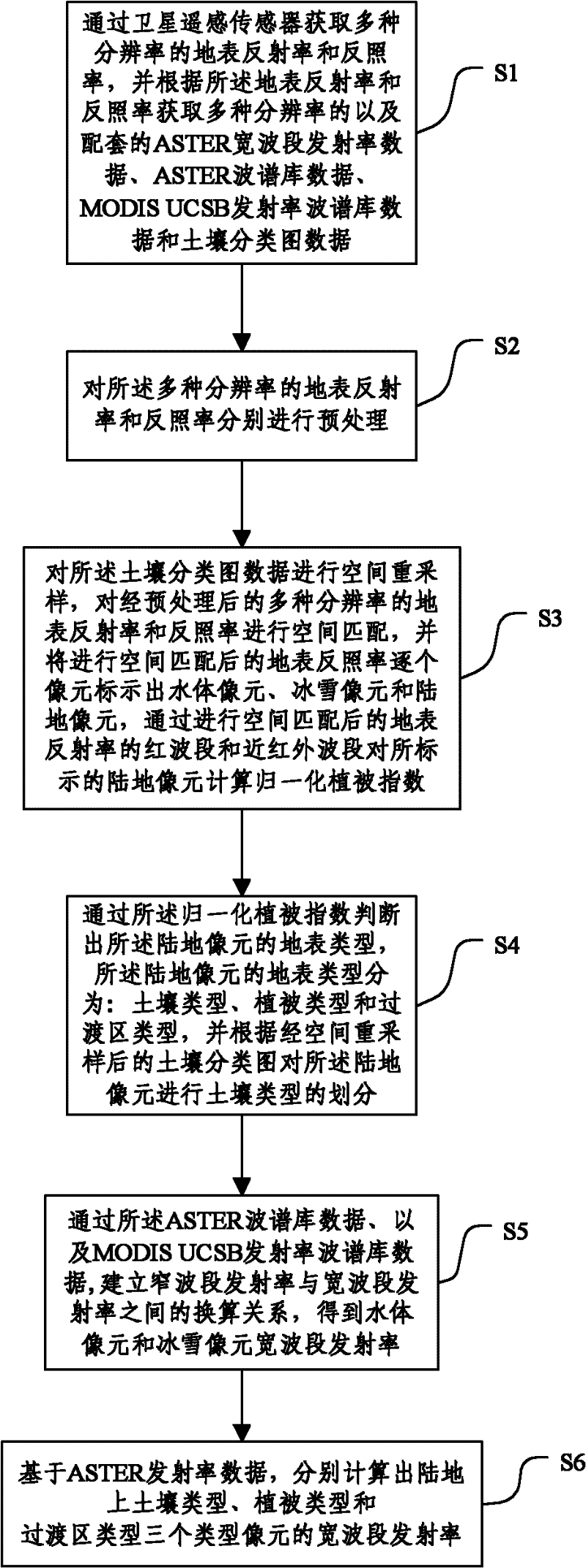

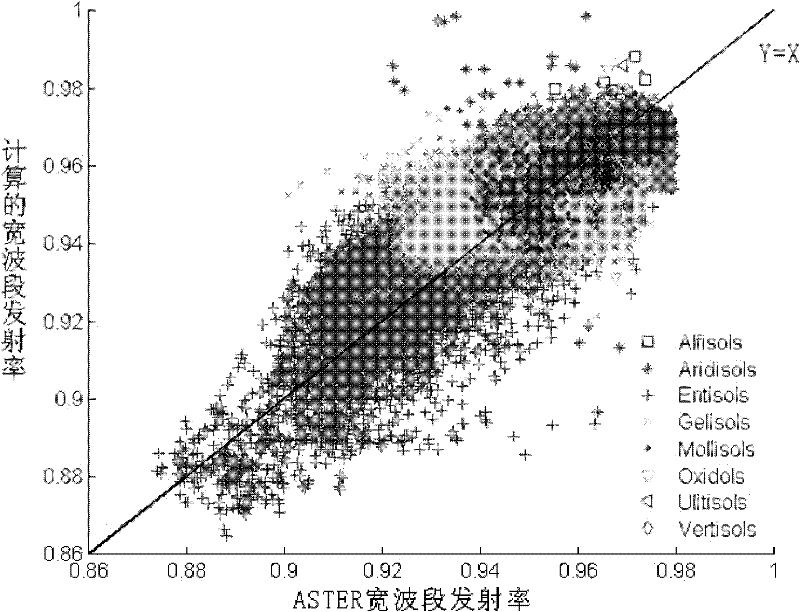

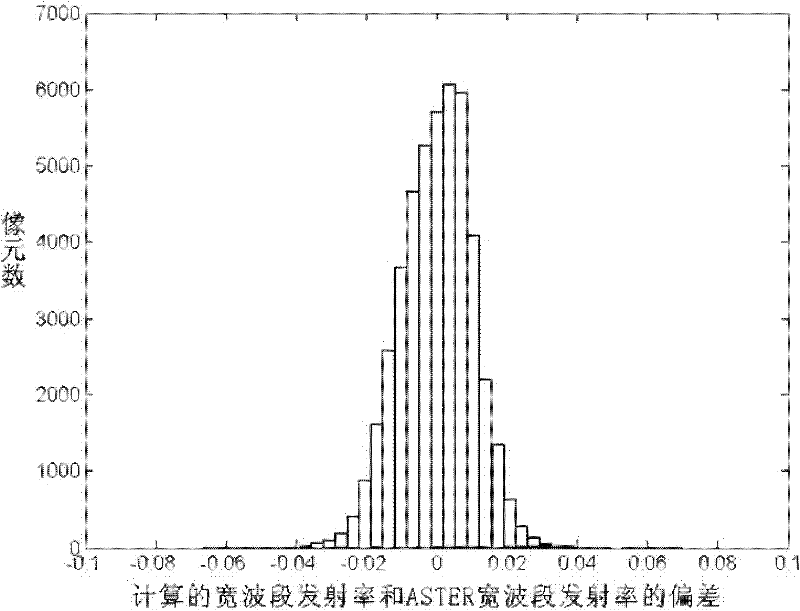

Global Land Surface Broadband Emissivity Inversion Method and System

The invention relates to the field of satellite remote sensing technology, and especially to a global land cover broadband emissivity inversion method and system. The method comprises the following steps: S1. acquiring surface reflectivity and albedo at a plurality of resolutions and acquiring matched soil classification graph data; S2. preprocessing the surface reflectivity and the albedo at each resolution; S3. spatially resampling the soil classification graph data, spatially matching the preprocessed surface reflectivity and albedo, and identifying each pixel of the spatially matched surface albedo; S4. determining the surface type of each land pixel; S5. establishing a conversion relation between the narrowband emissivity and the broadband emissivity so as to obtain the broadband emissivity of the water body pixels and the ice and snow pixels; S6. calculating the broadband emissivity of each pixel. In the invention, the precision of broadband emissivity inversion can be improved through processing reflectivity data and albedo data.

Owner:BEIJING NORMAL UNIVERSITY

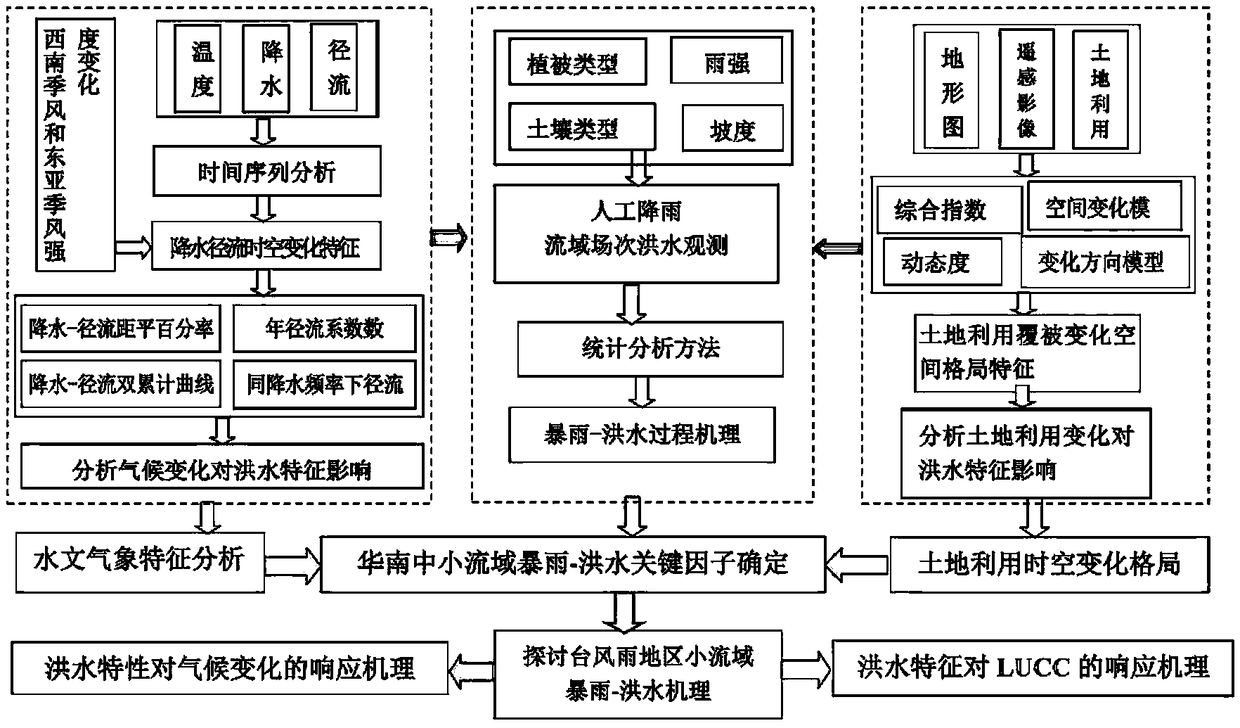

Method for establishing model of response of flood characteristics of medium and small river basins to changing environment

Inactive | CN108154270A | Rapid determination | Accurate measurement | Forecasting | ICT adaptation | Data set | Land cover

The invention discloses a method for establishing a model of a response of flood characteristics of medium and small river basins to the changing environment. According to the method, Meihua-River upstream basins of typhoon-rainstorm prone areas of south China serve as research areas, basin flood characteristics under conditions of climatic changes and land use and land cover changes and the response of the basin flood characteristics to the changing environment are analyzed; a physical geography data set of the research areas is established, then a field observation test is carried out in cooperation with historical screening flood data, the relationships between key runoff-generation-and-confluence parameters and environment factors are determined in cooperation with historical observation data, and the rainstorm-flood runoff generation mechanism in the changing environment of the relationships is discussed; the influence of the climatic changes and the land use and land cover changes on the flood characteristics of the typhoon-rainstorm areas is analyzed; a scientific basis and an infrastructural support are provided for prevention and management of flood damage of the medium and small river basins in typhoon rainstorm areas of south China and a land utilization optimization scheme in the future period.

Owner:GUANGZHOU INST OF GEOGRAPHY GUANGDONG ACAD OF SCI

Land utilization category determination method fusing in streetscape images

Active | CN110263717A | Accurate discrimination | High precision | Data processing applications | Scene recognition | Land cover | Classification methods

The invention discloses a land utilization category determination method fusing in streetscape images. The method comprises the following steps of firstly, extracting the fine ground object category information in the street view image sampling points through a deep learning convolutional neural network mode; meanwhile, preprocessing the remote sensing image, and obtaining a land cover map by using a supervised classification method; secondly, through the spectrum, the texture, the shape and the geographic distribution information of the pixels where the streetscape sampling points are located, deducing the category condition of the adjacent pixels; and finally, fusing the pixel classification information and the land cover map to obtain a fine multi-class land utilization result. According to the method, by starting from the remote sensing based on the pixel classification, the fine streetscape information is combined, and the precision of the classification result is high.

Owner:INST OF GEOGRAPHICAL SCI & NATURAL RESOURCE RES CAS

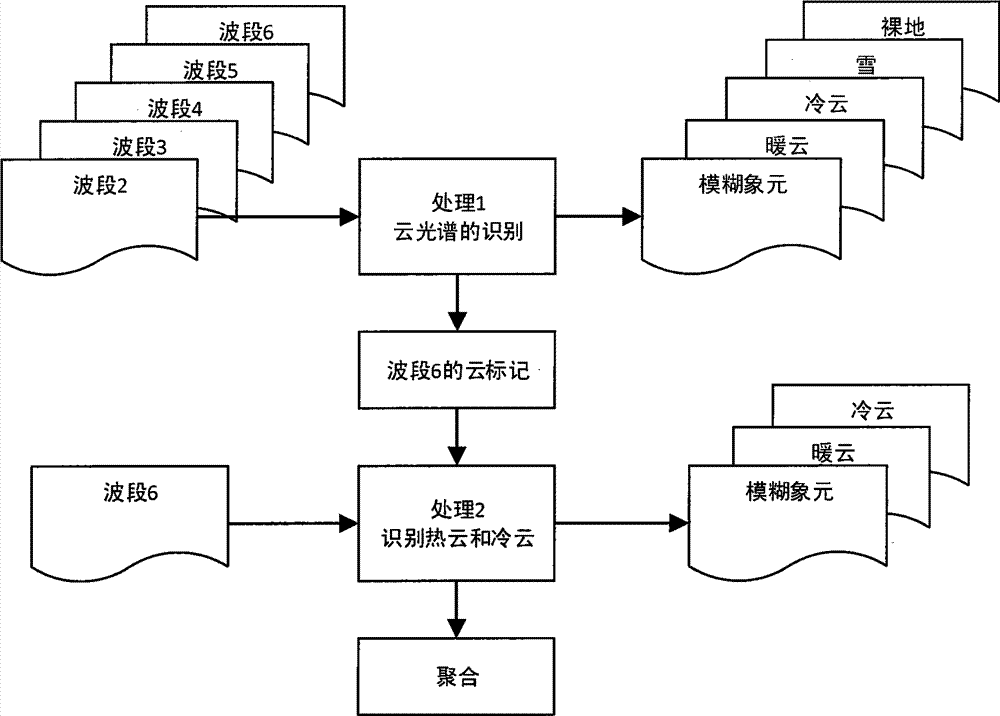

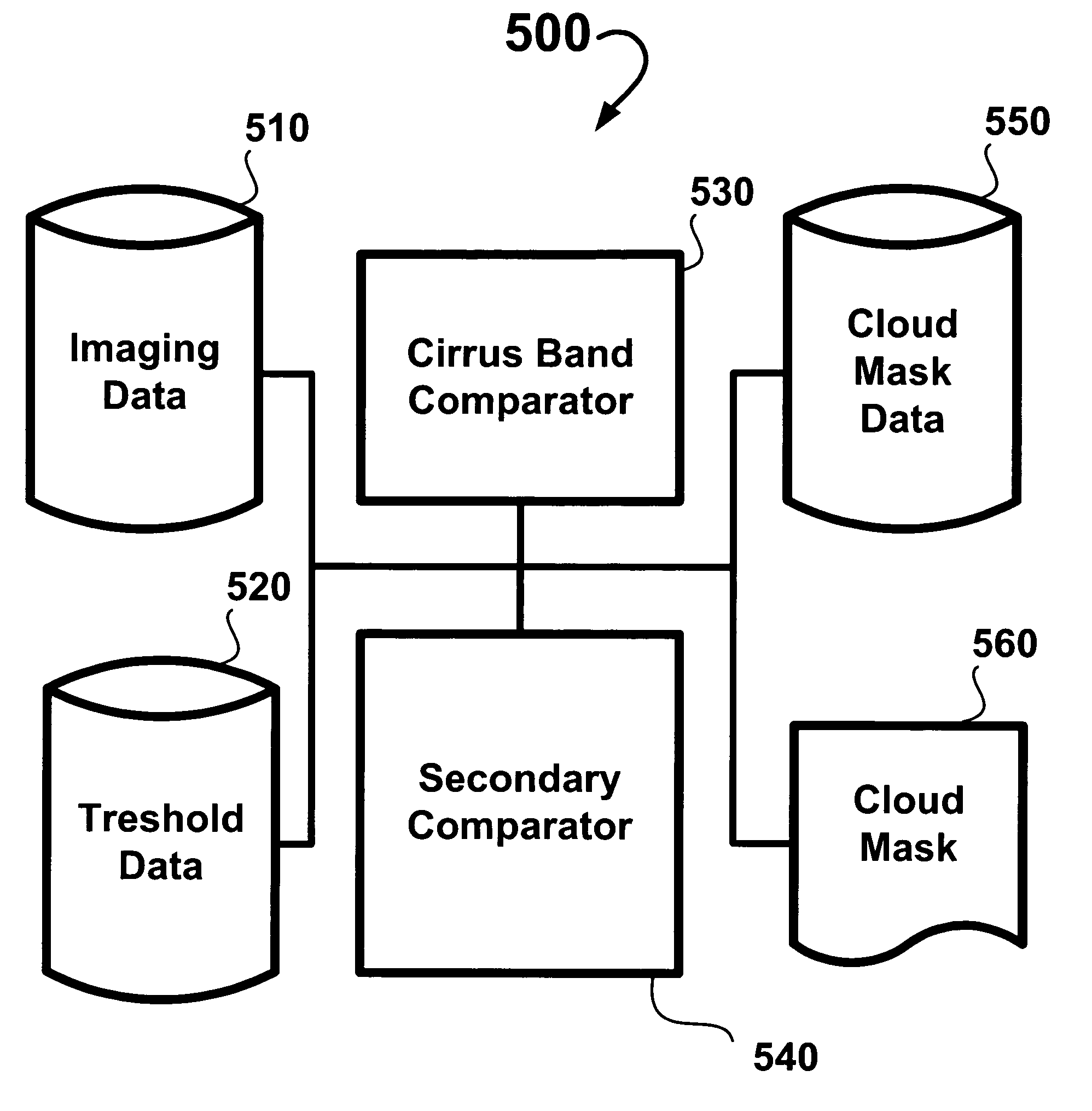

Cloud cover assessment: VNIR-SWIR

Inactive | US20050111692A1 | Simple calculation | Not impose an unreasonable operational computing workload | Scene recognition | Special data processing applications | Land cover | Visible near infrared

Methods, a computer-readable medium, and a system are provided for determining whether a data point indicates a presence of a cloud using visible near-infrared data and short wavelength infrared data. A first comparison of a cirrus-band reflectance of the data point with a threshold cirrus-band reflectance value is made, classifying the data point as a cloud point if the cirrus-band reflectance of the data point exceeds the threshold cirrus-band reflectance value. When the comparing of the cirrus-band reflectance of the data point with the threshold cirrus-band reflectance value does not classify the data point as a cloud point, a further analysis is performed, including performing a second or more comparisons of additional cloud indicators derived from at least one of the visible, near-infrared, and short wavelength infrared data with related empirically-derived, landcover-dependent thresholds for classifying the data point as a cloud point or a non-cloud point.

Owner:THE BOEING CO
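
The two-stage decision described in the abstract above (a cirrus-band test first, then further tests against land-cover-dependent thresholds) might look like the sketch below. The indicator names, the threshold values and the rule that every secondary indicator must exceed its threshold are assumptions made for illustration; the abstract only says that one or more additional comparisons are performed.

```python
def is_cloud(point, cirrus_threshold, landcover_thresholds):
    """Classify one data point as cloud (True) or non-cloud (False).

    point: dict with a "cirrus" reflectance, a "landcover" label and any
           additional indicator values.
    landcover_thresholds: dict mapping a land cover label to a dict of
           indicator name -> threshold (hypothetical, empirically derived).
    """
    # First comparison: the cirrus band alone can settle the question.
    if point["cirrus"] > cirrus_threshold:
        return True
    # Further analysis: compare the remaining indicators with thresholds
    # that depend on the underlying land cover.
    limits = landcover_thresholds[point["landcover"]]
    return all(point[name] > limit for name, limit in limits.items())


THRESHOLDS = {
    "forest": {"visible": 0.3},  # dark background: bright pixels are suspect
    "snow": {"visible": 0.8},    # bright background: demand more brightness
}

over_forest = is_cloud({"cirrus": 0.01, "visible": 0.5, "landcover": "forest"},
                       cirrus_threshold=0.03, landcover_thresholds=THRESHOLDS)
over_snow = is_cloud({"cirrus": 0.01, "visible": 0.5, "landcover": "snow"},
                     cirrus_threshold=0.03, landcover_thresholds=THRESHOLDS)
```

The same visible reflectance yields different answers over forest and over snow, which is the point of making the thresholds land-cover dependent.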

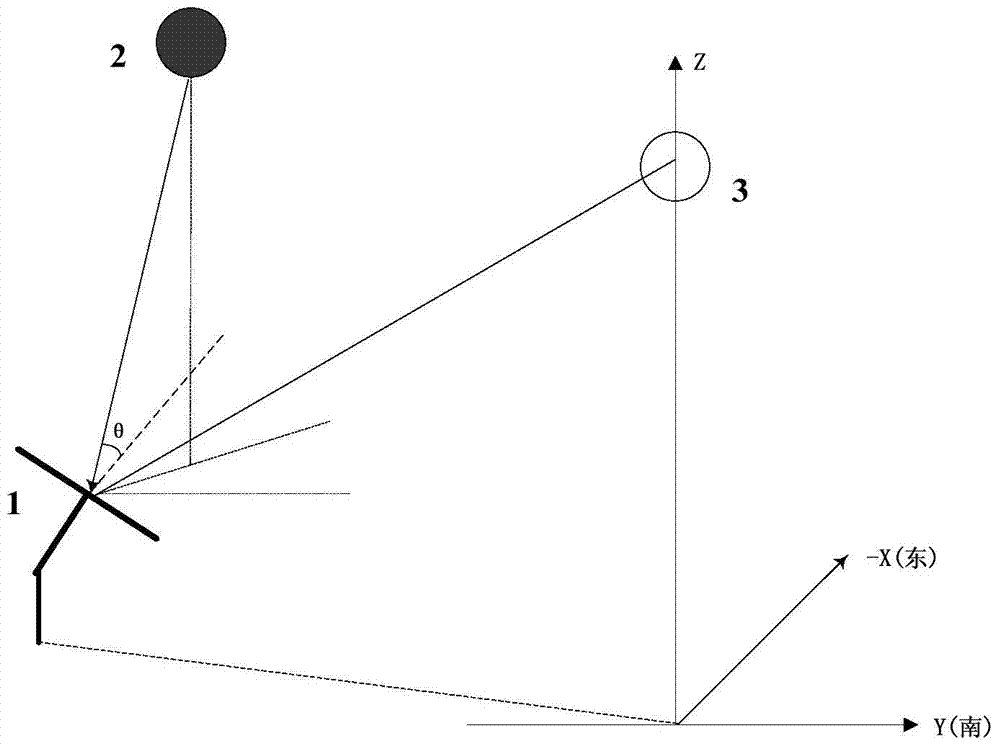

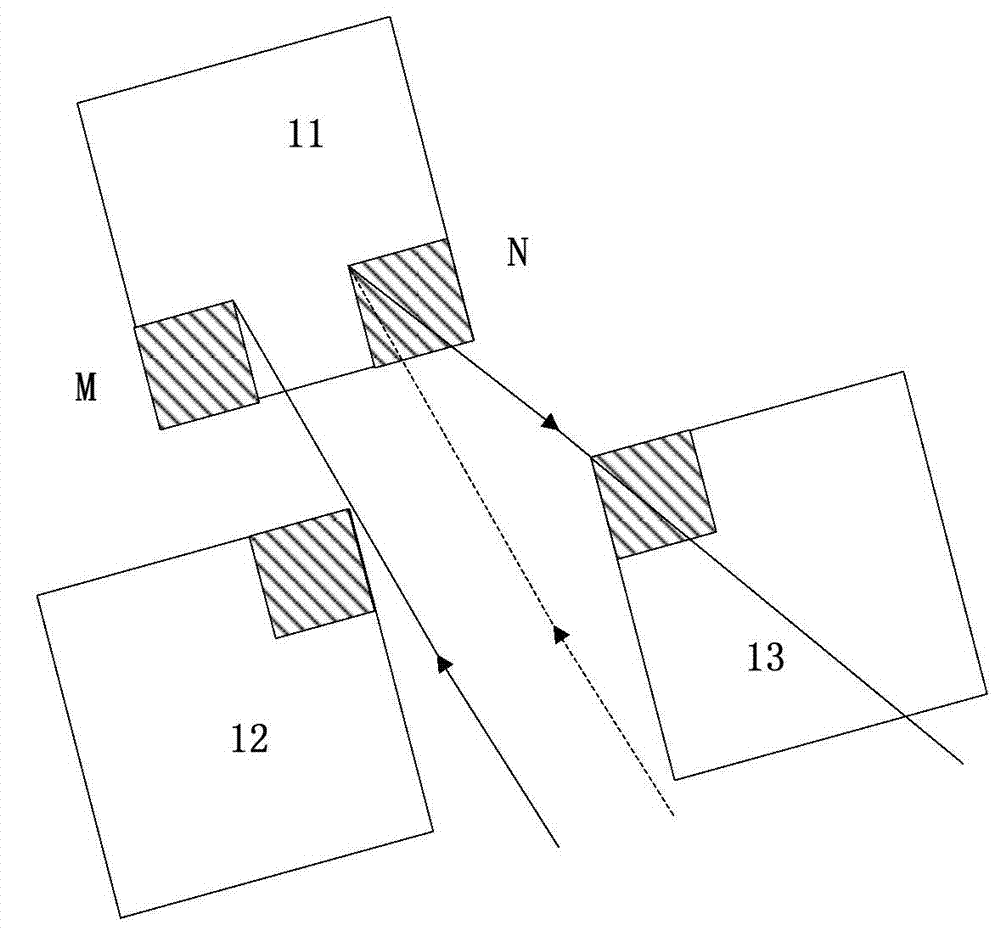

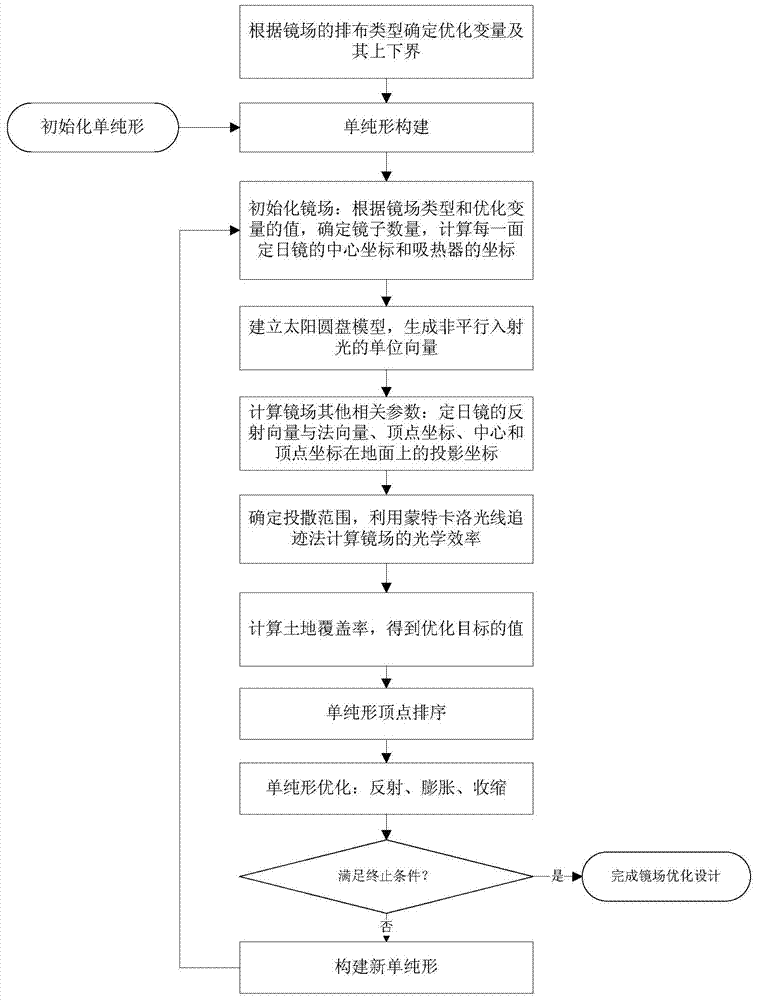

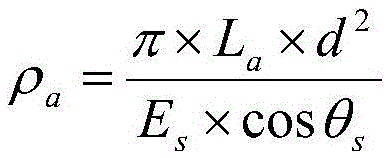

Mirror field optimization design method of cornfield and tower type solar thermoelectric system

Active | CN103500277A | Optimize layout | Improve efficiency | Special data processing applications | Land cover | Heliostat

The invention discloses a mirror field optimization design method of a cornfield and tower type solar thermoelectric system. The mirror field optimization design method includes the steps of using the product of the comprehensive optical efficiency of a mirror field and a land coverage rate of the mirror field as an optimization target, and optimizing the distance between the first row of heliostats in the mirror field and a heat absorber in the mirror field, the distance between every two rows of the heliostats and the distance between every two lines of the heliostats through a simplex algorithm so that the value of the optimization target can be the largest when the number of the heliostats and the arrangement of the heliostats are under a specific condition. According to the mirror field optimization design method, the contradiction between the number of the heliostats and the comprehensive optical efficiency of the mirror field is solved, and the mirror field optimization design method has better robustness and stability through the simplex algorithm. The comprehensive optical efficiency of each cornfield type small mirror field arranged through the mirror field optimization design method is more than 80% and is improved by 10% compared with the comprehensive optical efficiency of an existing ordinary mirror field.

Owner:ZHEJIANG UNIV
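
The optimization target in the abstract above, optical efficiency multiplied by land coverage rate, can be illustrated with a toy model. Everything numeric here is an assumption: the heliostat size, the efficiency formula (a placeholder in which losses shrink as spacing grows), and the omission of the first-row distance. A coarse grid search stands in for the simplex algorithm named in the abstract, which is not reproduced here.

```python
import math
from itertools import product

MIRROR_SIZE = 2.0  # assumed square heliostat edge length, in metres


def land_coverage(row_gap, col_gap):
    """Mirror area divided by the land cell that one heliostat occupies."""
    return MIRROR_SIZE ** 2 / (row_gap * col_gap)


def toy_efficiency(gap):
    """Placeholder loss model: 0 when mirrors touch, approaching 1 as the gap grows."""
    return 1.0 - math.exp(-(gap - MIRROR_SIZE) / MIRROR_SIZE)


def objective(row_gap, col_gap):
    """Optimization target: optical efficiency times land coverage rate."""
    efficiency = toy_efficiency(row_gap) * toy_efficiency(col_gap)
    return efficiency * land_coverage(row_gap, col_gap)


def grid_search(lo=2.0, hi=8.0, step=0.1):
    """Evaluate every (row_gap, col_gap) pair on a grid and keep the best one."""
    n = int(round((hi - lo) / step)) + 1
    gaps = [lo + i * step for i in range(n)]
    return max(product(gaps, gaps), key=lambda g: objective(*g))


best_row_gap, best_col_gap = grid_search()
```

The product form captures the trade-off: packing mirrors tightly raises coverage but lowers efficiency, and spreading them out does the reverse, so the maximum falls at an intermediate spacing.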

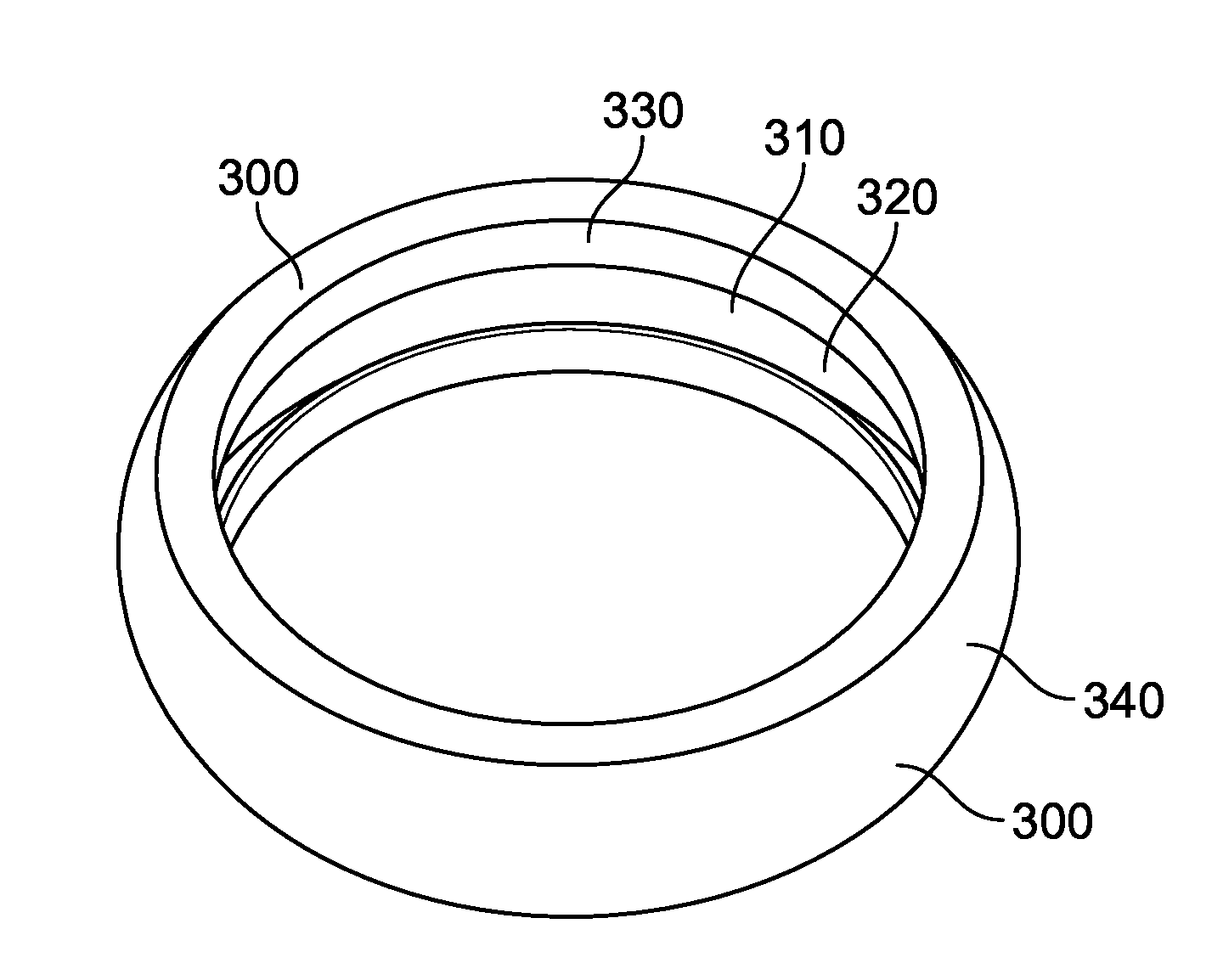

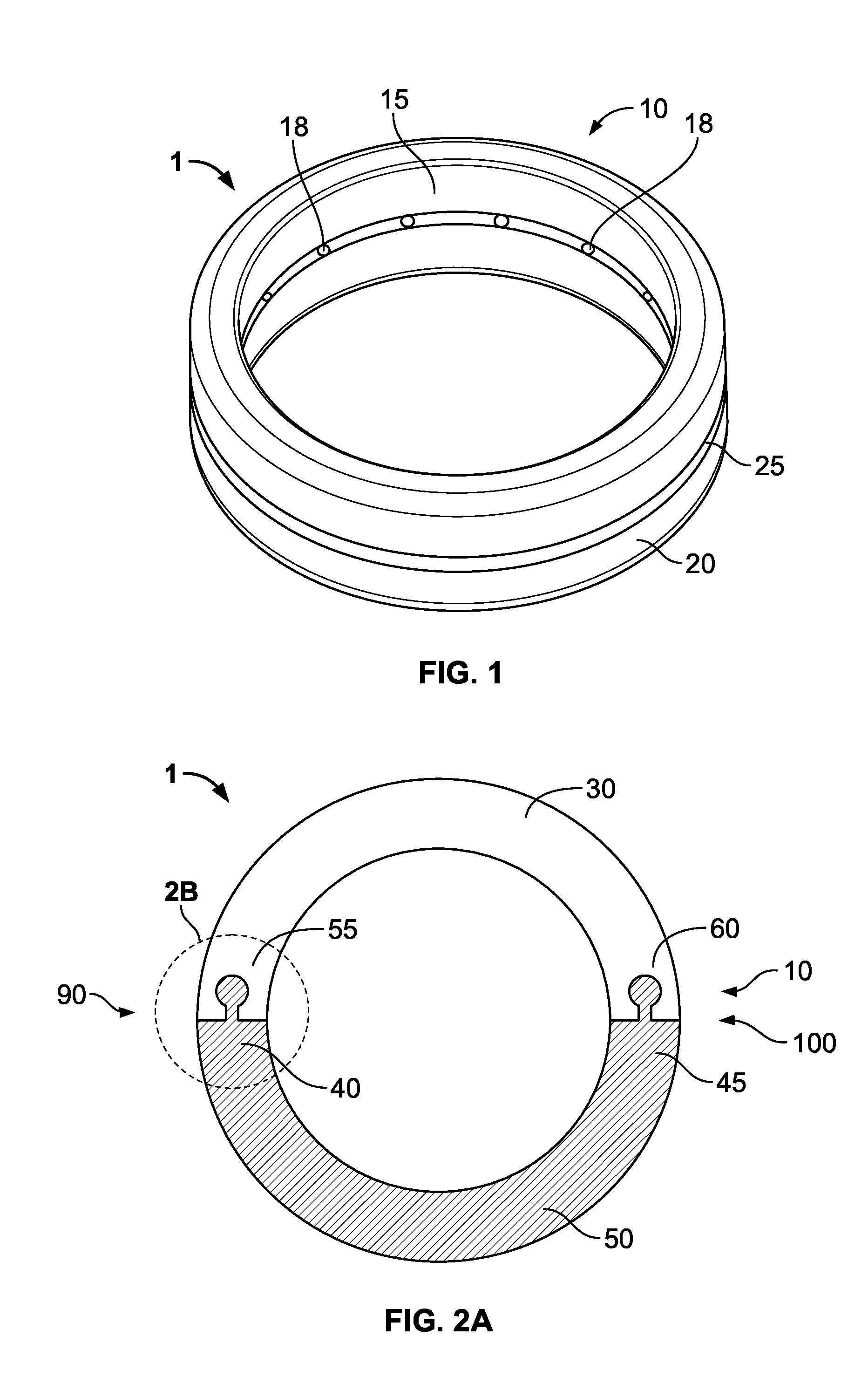

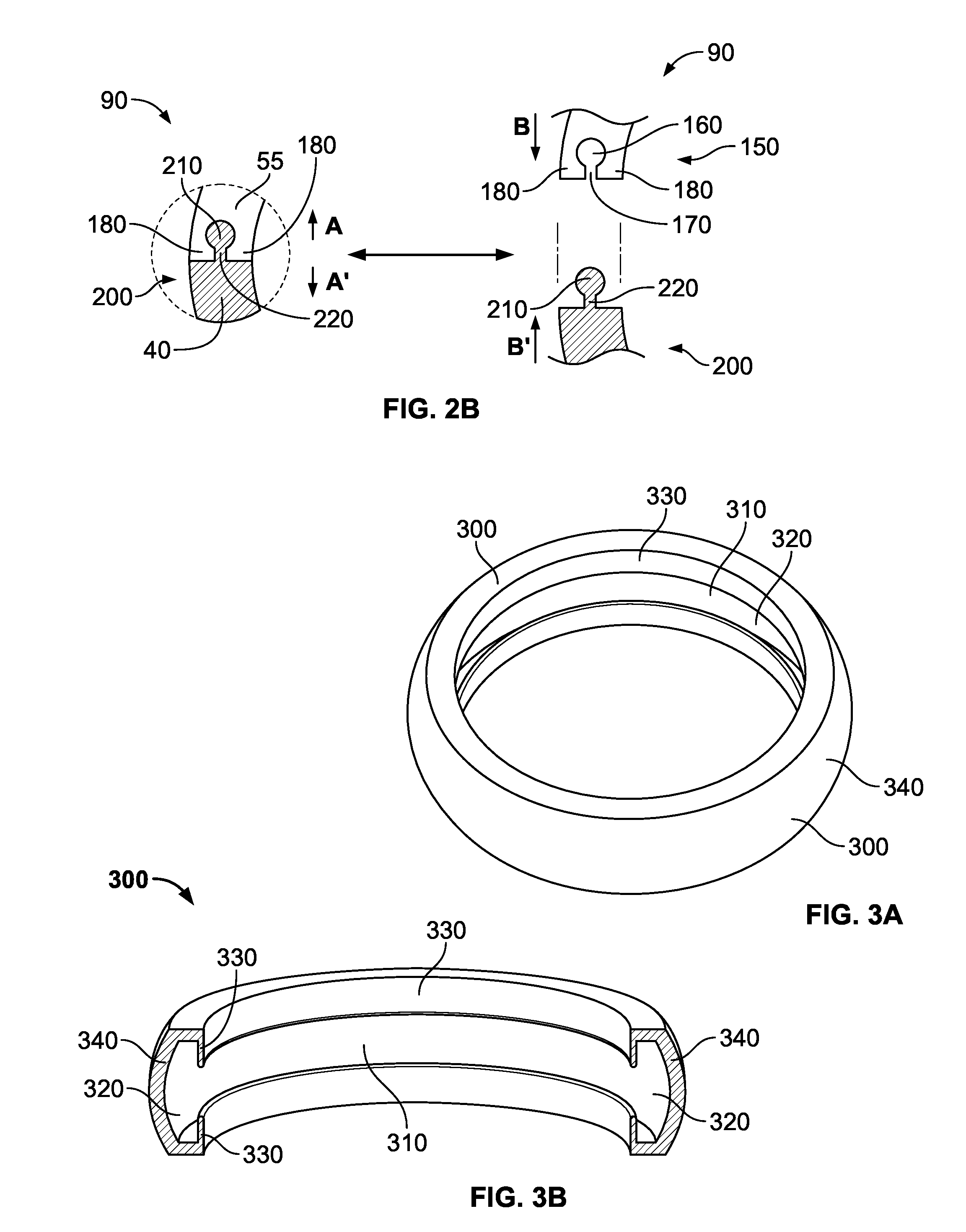

Tactical Finger Band

Active | US20140083135A1 | Preventing inadvertently losing | Easy to use | Finger-rings | Sports activity | Pull force

A tactical finger band is described and taught having a band body which has one segment or is longitudinally dividable into multiple segments. The band body is openable due to the use of one or more socket-protrusion assembly as connecting mechanisms. In addition, the tactical finger band may also include a band cover or other accessories. The tactical finger band disclosed here is particularly suitable for people engaging in industrial work, medical careers, sports activities, military missions, and law enforcement and fire-fighting operations. With a sufficient pulling force applied to the band body, the finger band may be opened due to the disengagement of the socket-protrusion assembly, preventing injury to the wearer. Moreover, the band body and / or the band cover may bear patterns, colors, logos, and words appropriate for the occasion in which the band is used and the person wearing the band.

Owner:MARTINEZ JESUS

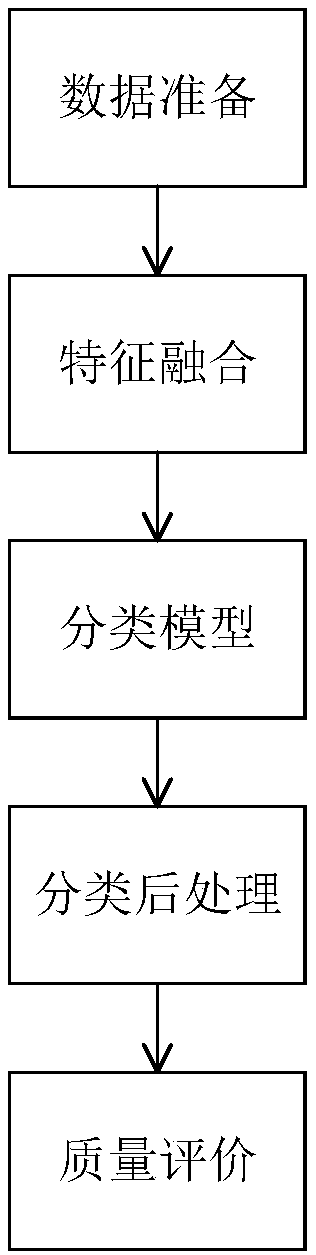

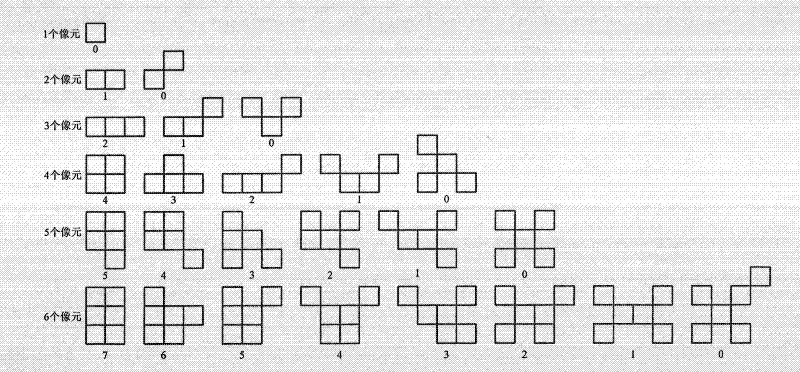

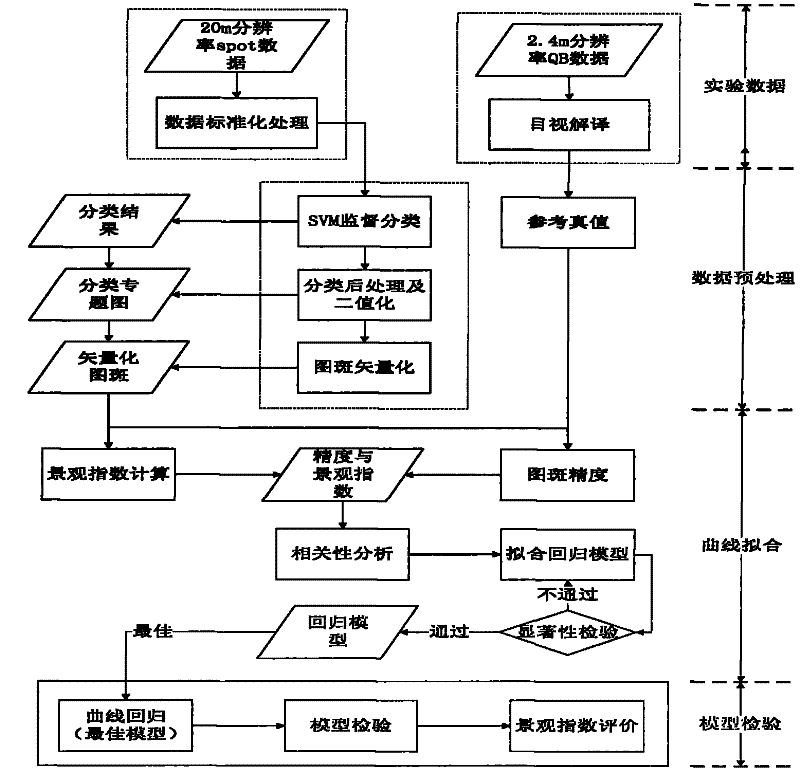

A Method for Analyzing the Effect of Landscape Features on the Accuracy of Remote Sensing Classification Spots

The invention provides a method for analyzing the influence of landscape features on the accuracy of remote sensing classification spots. Step 1 acquires data, including standardizing the original images. Step 2 performs image recognition on the data from step 1, including classification and post-classification processing, where the classification process includes determining the classification pattern. Step 3 calculates the correlation between the classification pattern and the true-value data pattern for the data from step 2 and fits a regression curve to establish a regression model, including defining the landscape index that expresses the landscape features. Step 4 statistically tests the regression model established in step 3 and evaluates the classification error expressed by the landscape index. Building on accuracy evaluation theory based on the spatial features of remote sensing classification, the invention uses the landscape features of the remote sensing classification map to describe and express classification accuracy, and provides a basis and guidance for applications and research related to land cover thematic maps.

Owner:BEIJING NORMAL UNIVERSITY
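
Steps 3 and 4 of the abstract above can be sketched as an ordinary least-squares fit of classification error against a landscape index, with the coefficient of determination as a simple goodness-of-fit measure. The linear model form and the function name `fit_line` are assumptions made for illustration. The abstract does not say which regression curve or which statistical test is used, and a formal significance test is omitted here.

```python
def fit_line(index_values, errors):
    """Least-squares fit of errors = intercept + slope * index_values.

    Returns (slope, intercept, r_squared).
    """
    n = len(index_values)
    mean_x = sum(index_values) / n
    mean_y = sum(errors) / n
    sxx = sum((x - mean_x) ** 2 for x in index_values)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(index_values, errors))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R^2 compares residual scatter with the total scatter of the errors.
    ss_res = sum((y - (intercept + slope * x)) ** 2
                 for x, y in zip(index_values, errors))
    ss_tot = sum((y - mean_y) ** 2 for y in errors)
    r_squared = 1.0 - ss_res / ss_tot
    return slope, intercept, r_squared
```

Here `index_values` would hold one landscape index value per map unit and `errors` the corresponding disagreement with the true-value data. A high `r_squared` would suggest the index explains much of the variation in classification error.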

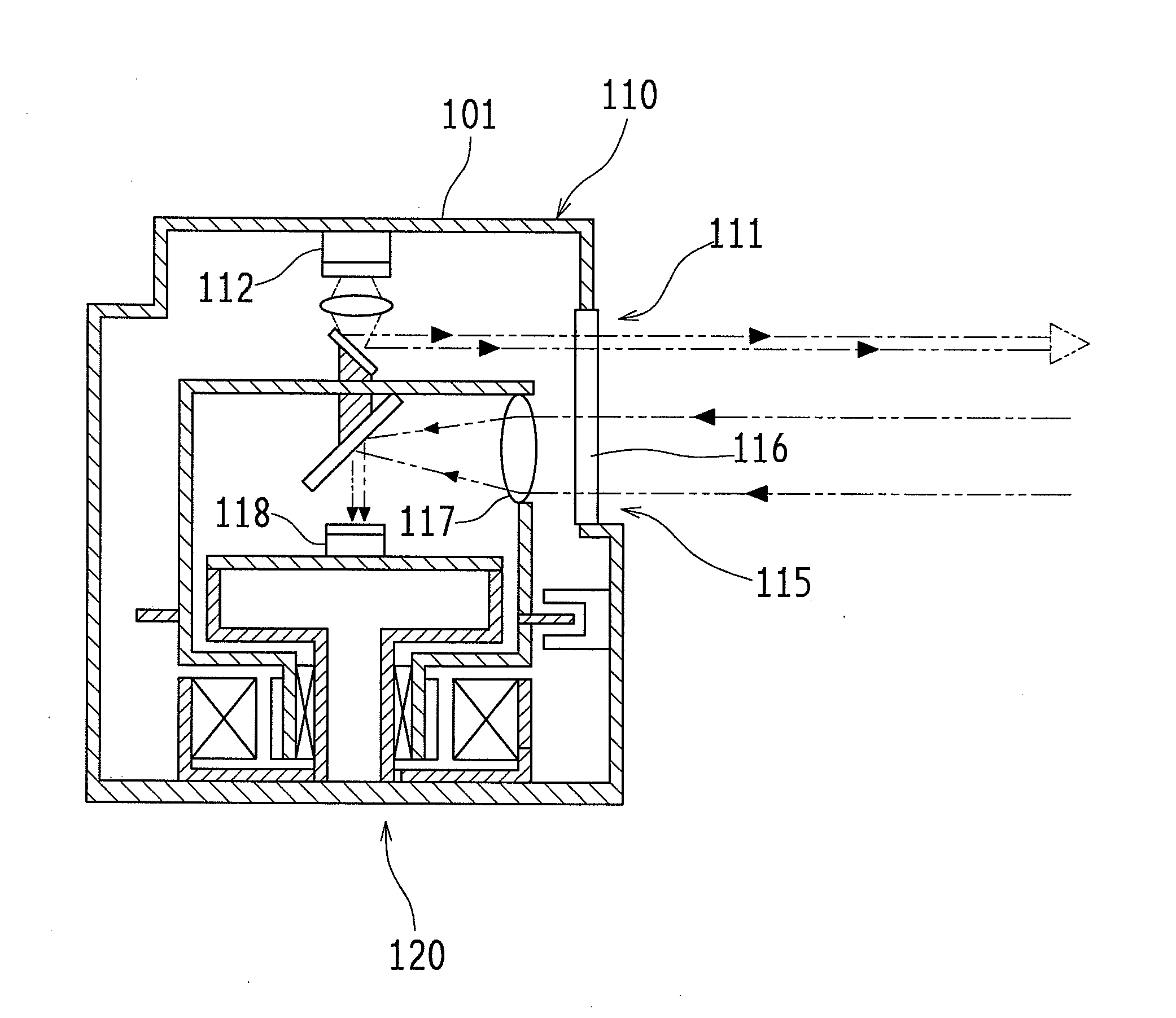

Laser scanning sensor

A laser scanning sensor (100) of an embodiment of the present invention includes a laser range finder (110), a scanning mechanism (120), a data acquisition portion (130), a dirt determination portion (140), an alert output control portion (150) and a memory (160). The laser range finder (110) is arranged inside a housing (101) having an opening portion, and the opening portion is covered with a lens cover (116) that can transmit laser light. In the dirt determination portion (140), a predetermined threshold to be compared with a received light level is changed based on maximum detection distance information in each measurement direction.

Owner:OPTEX CO LTD

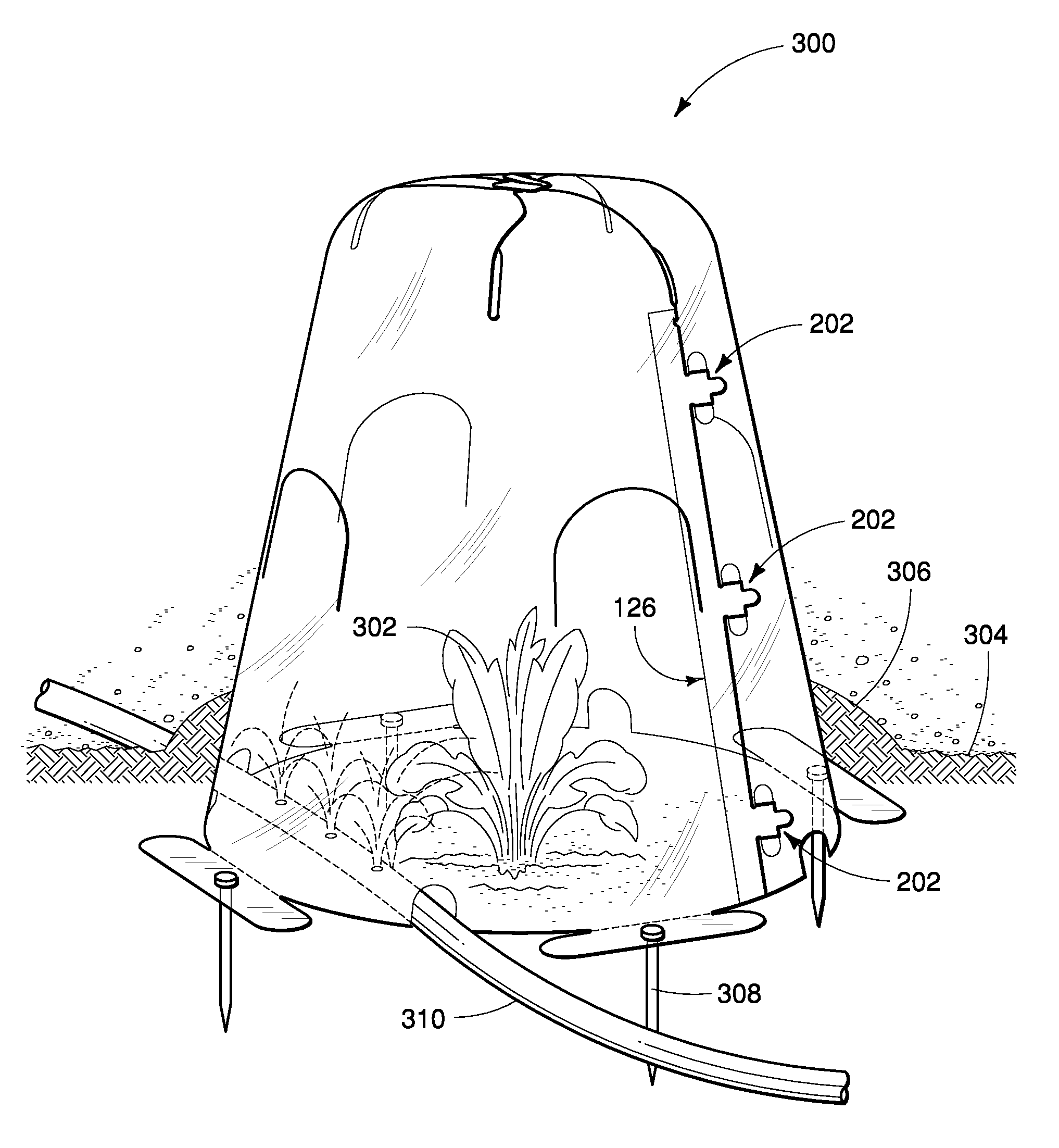

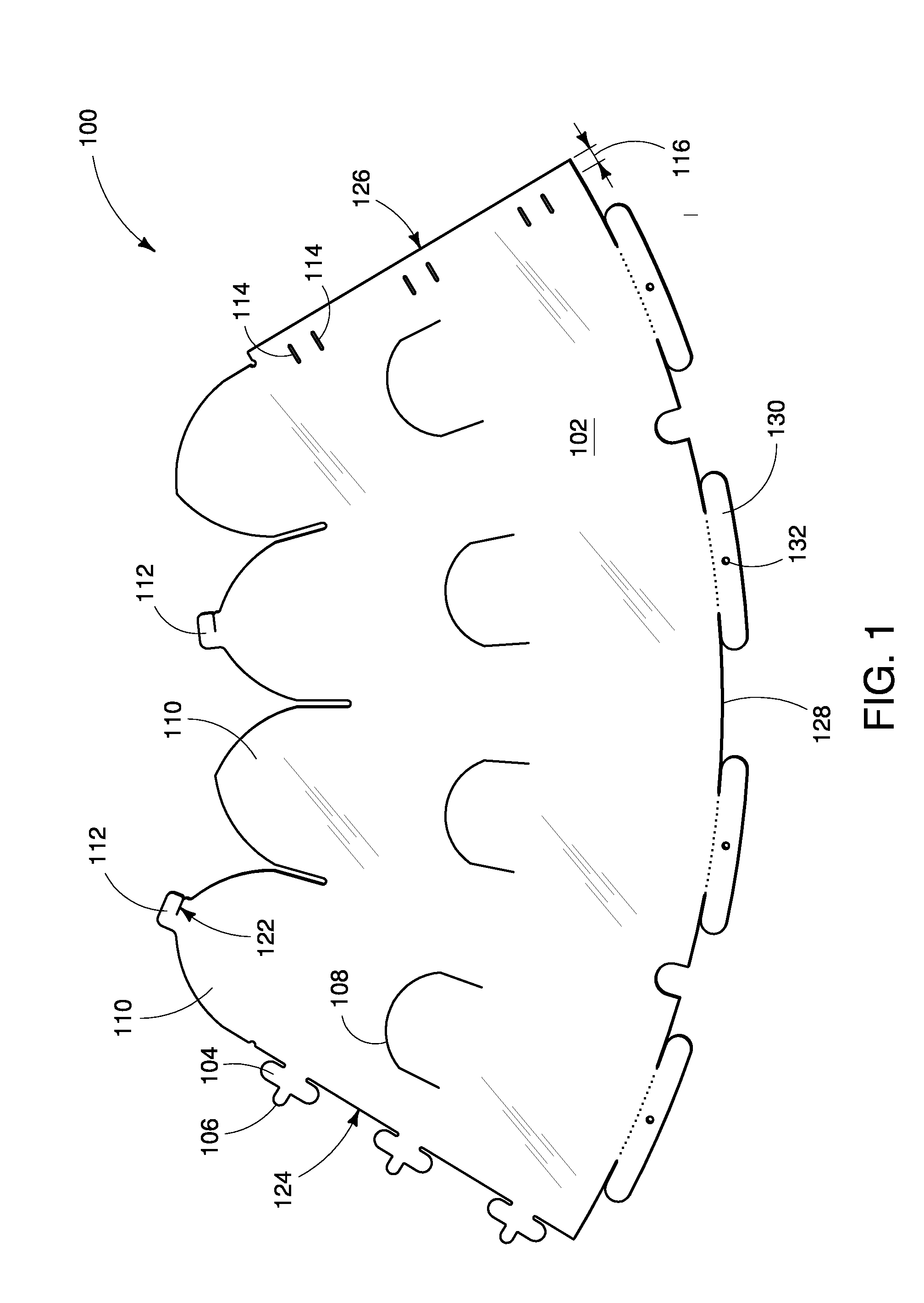

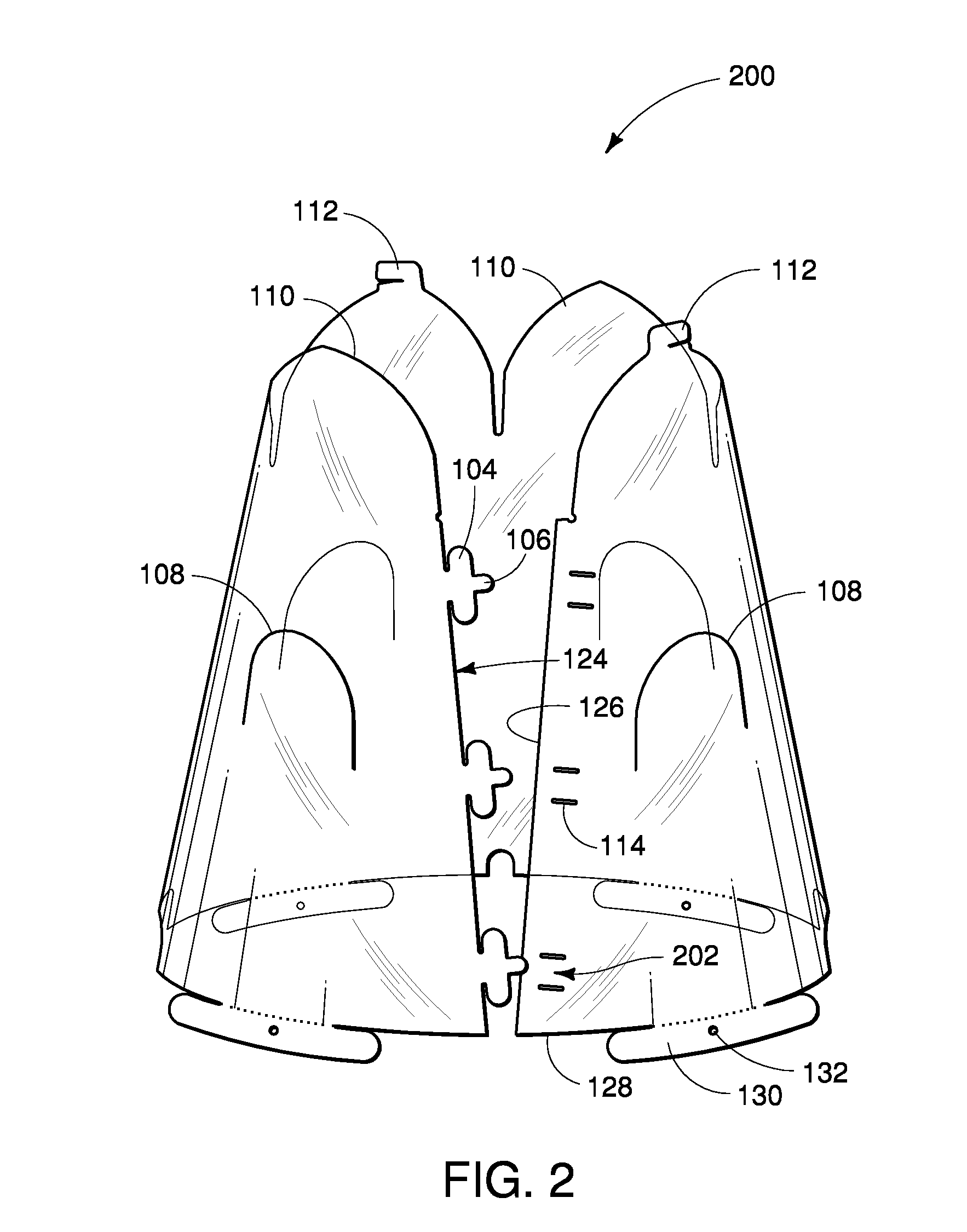

Flexible plant protector

Inactive | US8683741B2 | Easily and quickly erected | Improve wind resistance | Living organism packaging | Other accessories | Land cover | Engineering

Described are devices and methods for providing protection to plants from cold and other weather. A plant cover or protector is constructed of a translucent or other material, a flexible or bendable material, cut into a two-dimensional blank or shape and provides an easily assembled, self-supporting structure to be placed over one or more plants or a location. The plant protector is erected without the use of tools or need for a separate frame or support. The plant protector may be used with stakes or spikes. The plant protector may be collapsed to a planar configuration. The plant protector may be easily and inexpensively manufactured. The plant protector may be repeatedly used from season to season. A top portion of each plant protector may be easily and quickly closed or opened such as by manual manipulation. The plant protector comprises one or more supports or feet for increased contact with the ground and resilience against wind. The plant protector may be folded from a blank into a three-dimensional frustum or conical shape and placed in a desired location. Other shapes such as hexagonal and octagonal cross sections are possible. A preferred form is tapered from bottom to top.

Owner:CASTAGNO LEO +1

High-precision land cover classification method based on high-resolution satellite image

Active | CN105608473A | Accurate extraction | Guaranteed integrity | Character and pattern recognition | Land cover | Plaque area

The invention provides a land cover classification method comprising the steps of: (1) determining plaque areas with similar characteristics in domestic remote sensing images and then carrying out reasonable image segmentation; (2) calculating image characteristics for the areas divided in step (1) from spectral, texture, and geometric characteristics; (3) automatically obtaining ground object samples by combining historical land use data and a ground object spectral library with the image characteristics from step (2); (4) extracting the various types of ground objects with a decision tree and boosting, with the ground object samples from step (3) as an information entropy; and (5) calculating the areas of the various ground object plaques, merging and eliminating plaques according to a set value, and obtaining the final classification result. The method addresses wrong land cover classification and excessive fragmented plaques, improving operation efficiency by 50% and raising classification accuracy to 90%.

Owner:CHINA CENT FOR RESOURCES SATELLITE DATA & APPL
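
Step (5) of the abstract above, merging and eliminating plaques below a set size, can be illustrated on a one-dimensional scanline of class labels. This is a deliberate simplification: the patent works on two-dimensional plaques, and the rule used here (absorb an undersized run into its longer neighbour) is an assumption, as is the function name `eliminate_small_runs`.

```python
from itertools import groupby


def eliminate_small_runs(labels, min_len):
    """Absorb runs shorter than min_len into the longer adjacent run."""
    runs = [[cls, len(list(group))] for cls, group in groupby(labels)]
    changed = True
    while changed and len(runs) > 1:
        changed = False
        for i, (_, length) in enumerate(runs):
            if length >= min_len:
                continue
            # Pick the longer neighbour (the left one wins a tie).
            candidates = []
            if i > 0:
                candidates.append(runs[i - 1])
            if i + 1 < len(runs):
                candidates.append(runs[i + 1])
            target = max(candidates, key=lambda run: run[1])
            target[1] += length
            del runs[i]
            # Fuse neighbours that now carry the same class.
            fused = []
            for run in runs:
                if fused and fused[-1][0] == run[0]:
                    fused[-1][1] += run[1]
                else:
                    fused.append(run)
            runs = fused
            changed = True
            break
    return [cls for cls, length in runs for _ in range(length)]
```

Each pass removes one undersized run, so the loop ends once every remaining run meets the minimum length or only one run is left.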

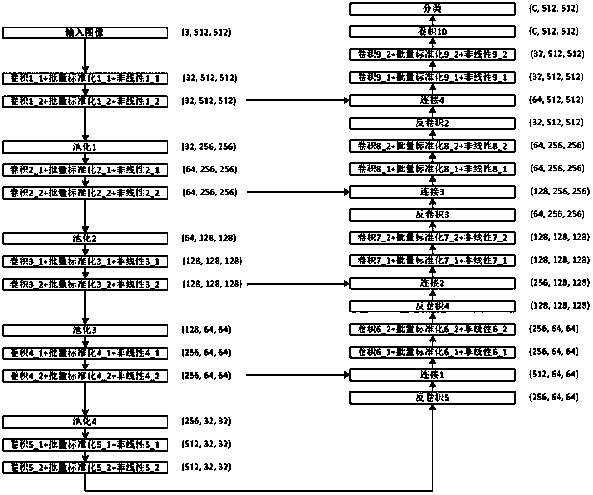

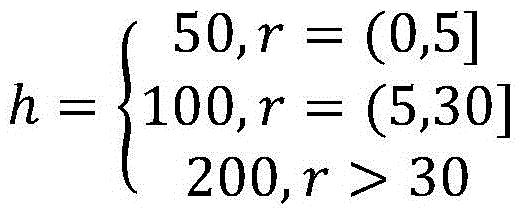

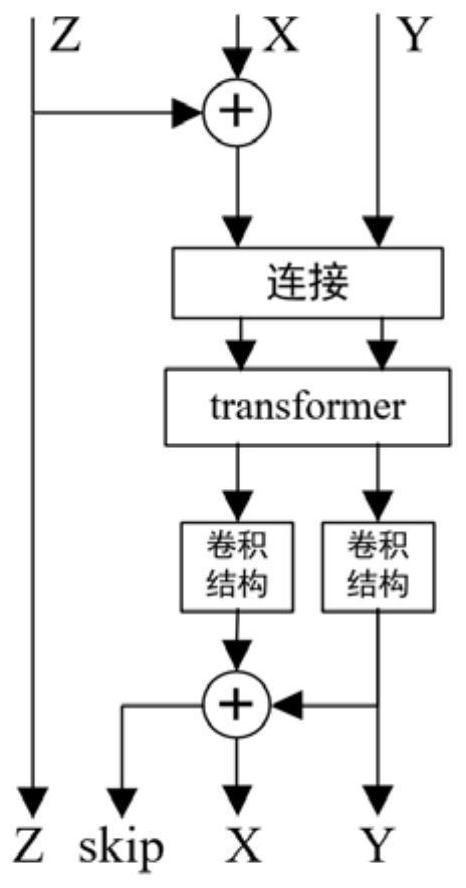

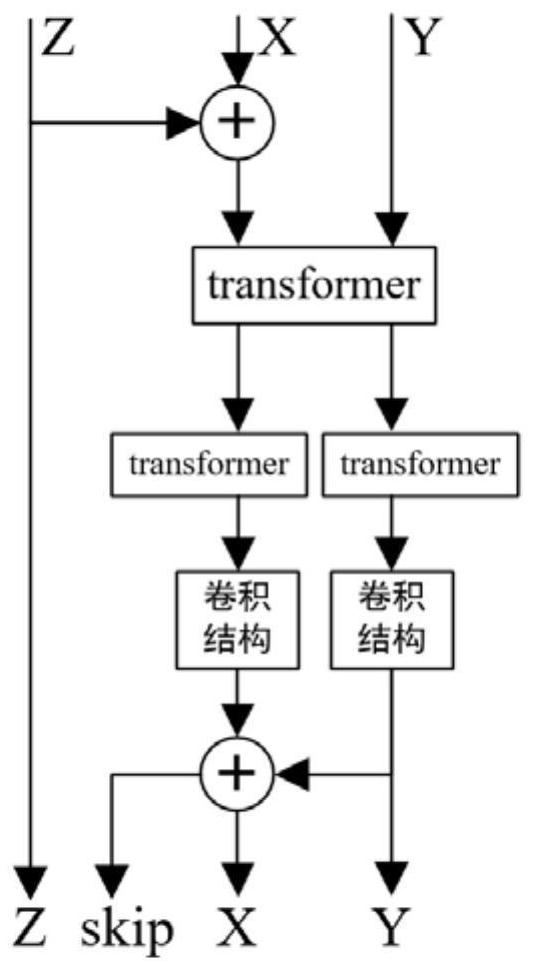

Land cover classification method based on deep fusion of multi-modal remote sensing data

Pending | CN113469094A | Achieve integration | High precision | Character and pattern recognition | Neural architectures | Sensing data | Land cover

The invention relates to a land cover classification method based on deep fusion of multi-modal remote sensing data. The method comprises the following steps: (1) constructing a remote sensing image semantic segmentation network based on multi-modal information fusion, wherein the network comprises an encoder for extracting ground object features, a depth feature fusion module, a spatial pyramid module, and an up-sampling decoder; the depth feature fusion module comprises an ACF3 module and a CACF3 module, which simultaneously fuse three modalities of information (RGB, DSM, and NDVI); the ACF3 module is a self-attention convolution fusion module based on transformer and convolution, and the CACF3 module is a cross-modal convolution fusion module based on transformer and convolution; (2) training the network constructed in step (1); and (3) using the network model trained in step (2) to predict the ground object category of the remote sensing image. Compared with conventional methods, the method markedly improves accuracy on land cover classification tasks and has broad application prospects.

Owner:上海中科辰新卫星技术有限公司
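
The three modalities named in the abstract above (RGB, DSM, and NDVI) have to be assembled before any fusion network can consume them. The sketch below covers only that preparation step, using the standard NDVI definition, (NIR - Red) / (NIR + Red). It assumes a separate near-infrared band is available, which the abstract does not state, and the function names are hypothetical. The ACF3 and CACF3 fusion modules themselves are not reproduced.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel."""
    total = nir + red
    # Guard against division by zero on pixels with no signal.
    return 0.0 if total == 0 else (nir - red) / total


def build_modal_inputs(rgb, dsm, nir):
    """Assemble the three modal inputs as a dict of 2-D grids.

    rgb: rows of (r, g, b) tuples; dsm and nir: rows of floats, same shape.
    """
    ndvi_band = [
        [ndvi(n, pixel[0]) for n, pixel in zip(nir_row, rgb_row)]
        for nir_row, rgb_row in zip(nir, rgb)
    ]
    return {"RGB": rgb, "DSM": dsm, "NDVI": ndvi_band}
```

Keeping the modalities as separate entries, rather than stacking them into one array, mirrors the abstract's description of a dedicated fusion module that combines them inside the network.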

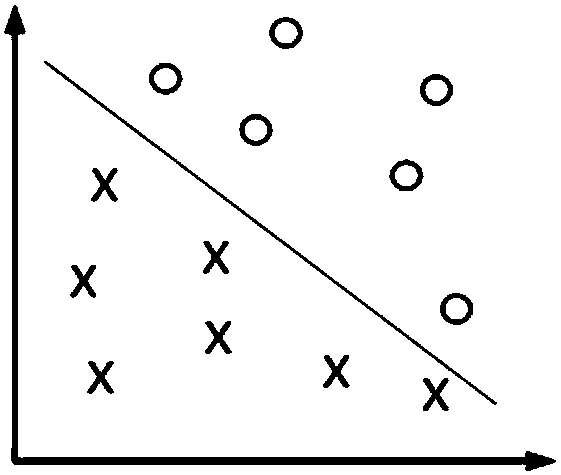

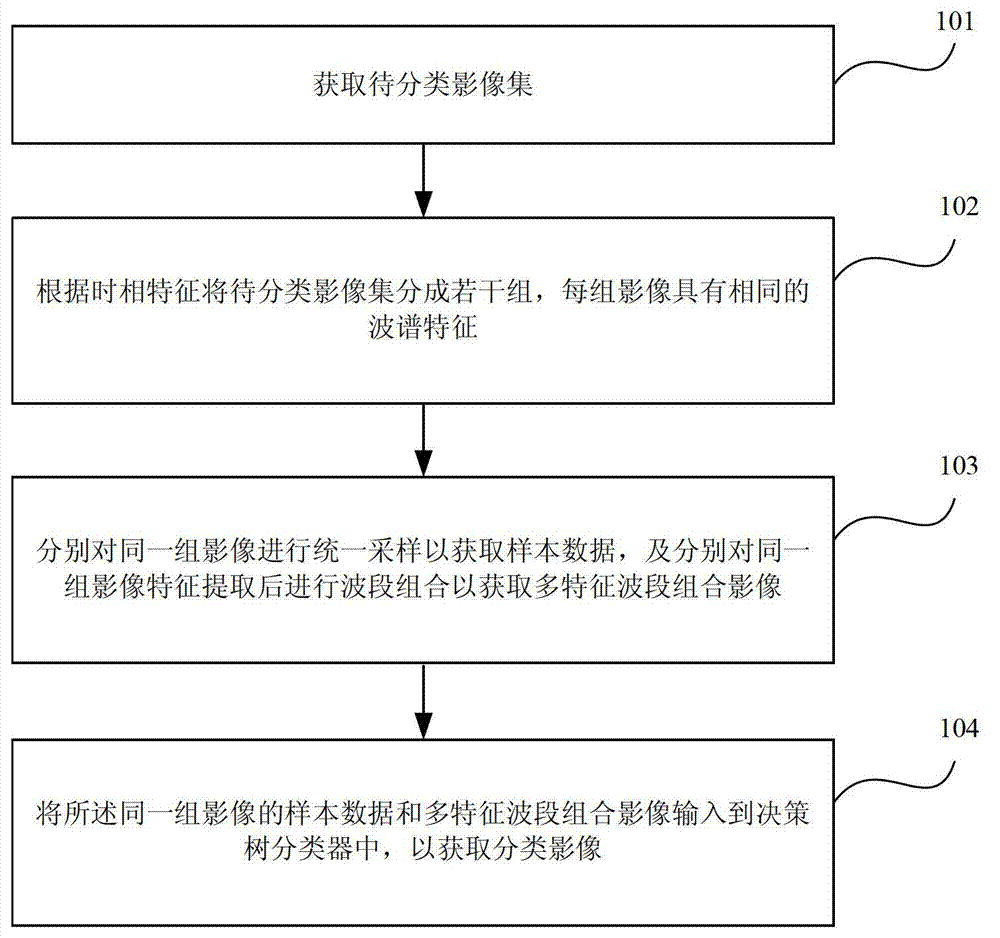

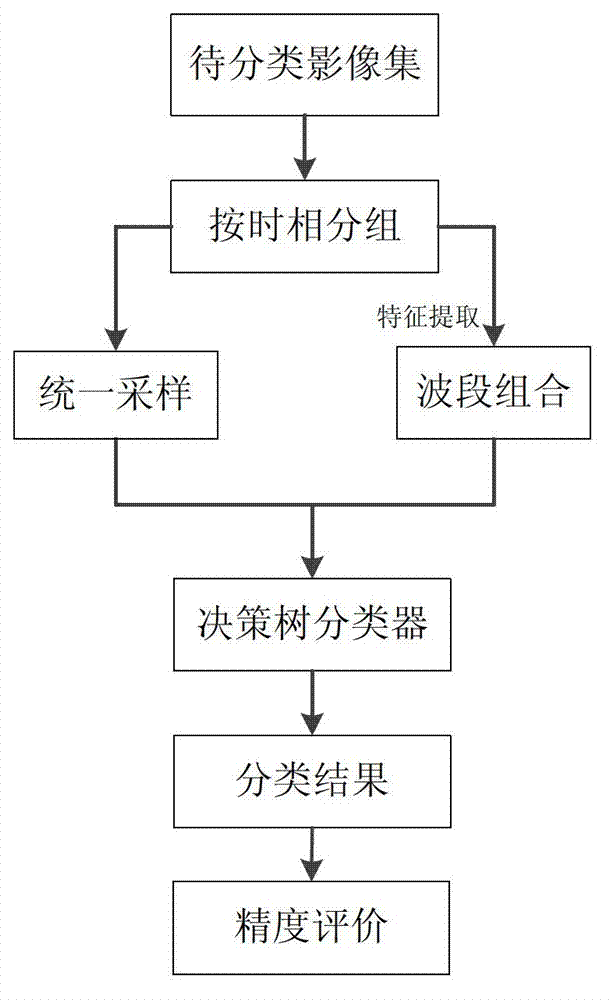

Method and device for decision tree based wide-area remote sensing image classification

Active | CN102831440A | Improve classification accuracy | Character and pattern recognition | Wide area | Based wide

An embodiment of the invention provides a method and a device for decision tree based wide-area remote sensing image classification. The method includes: acquiring an image set to be classified; dividing the image set into multiple groups according to time phase characteristics, so that the images in each group share the same spectral characteristics; uniformly sampling the images in each group to acquire sample data, extracting features from the images in each group, and then combining bands to obtain multi-feature band-combination images; and inputting the sample data and the multi-feature band-combination images of each group into a decision tree classifier to obtain classified images. The method and the device provide a decision tree based approach to wide-area land cover classification and improve the classification accuracy of wide-area remote sensing images.

Owner:CHINESE ACAD OF SURVEYING & MAPPING
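
The grouping and classification flow in the abstract above can be sketched in miniature. Images are grouped by a time phase label, and a depth-one decision tree (a decision stump) is trained on the samples of one group. The stump is a stand-in for the full decision tree classifier, and the `"phase"` key, the feature layout, and the function names are assumptions made for illustration.

```python
from collections import defaultdict


def group_by_phase(scenes):
    """Group scene records by their time phase label."""
    groups = defaultdict(list)
    for scene in scenes:
        groups[scene["phase"]].append(scene)
    return dict(groups)


def train_stump(samples):
    """Find the single feature threshold with the fewest training errors.

    samples: list of (feature_tuple, label).
    Returns (feature_index, threshold, left_label, right_label).
    """
    best = None
    for f in range(len(samples[0][0])):
        for thr in sorted({x[f] for x, _ in samples}):
            left = [y for x, y in samples if x[f] <= thr]
            right = [y for x, y in samples if x[f] > thr]
            if not left or not right:
                continue
            # Each side predicts its majority label.
            left_label = max(set(left), key=left.count)
            right_label = max(set(right), key=right.count)
            errors = (sum(y != left_label for y in left)
                      + sum(y != right_label for y in right))
            if best is None or errors < best[0]:
                best = (errors, f, thr, left_label, right_label)
    if best is None:
        raise ValueError("samples cannot be split on any feature")
    return best[1:]


def predict(stump, features):
    f, thr, left_label, right_label = stump
    return left_label if features[f] <= thr else right_label
```

In the flow the abstract describes, one classifier would be trained per time phase group, so that images with the same spectral characteristics share thresholds.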

