919 results about "Shared service" patented technology

Definition: Shared services is the consolidation of business operations that are used by multiple parts of the same organization. Shared services are cost-efficient because they centralize back-office operations used by multiple divisions of the same company and eliminate redundancy.

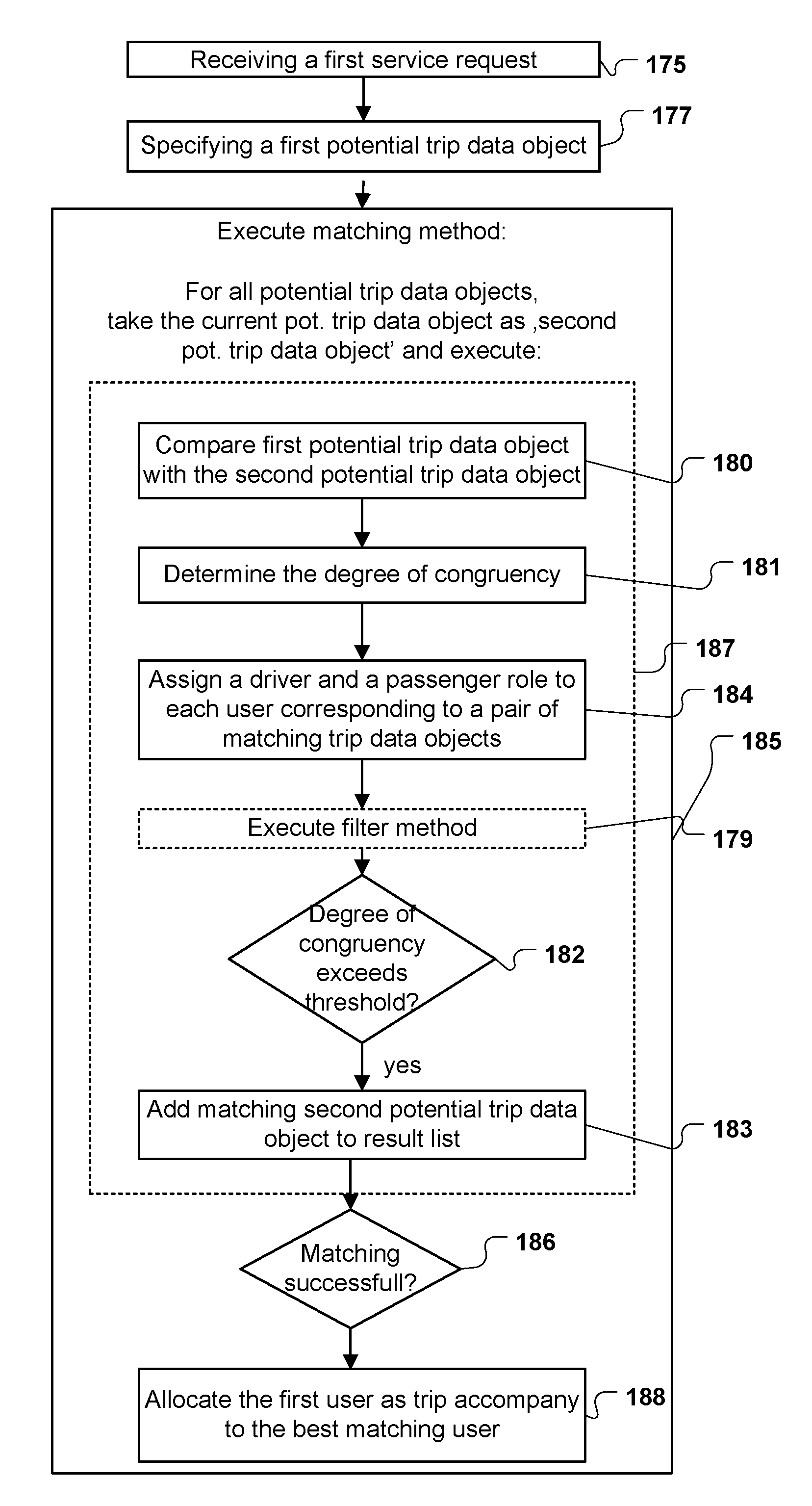

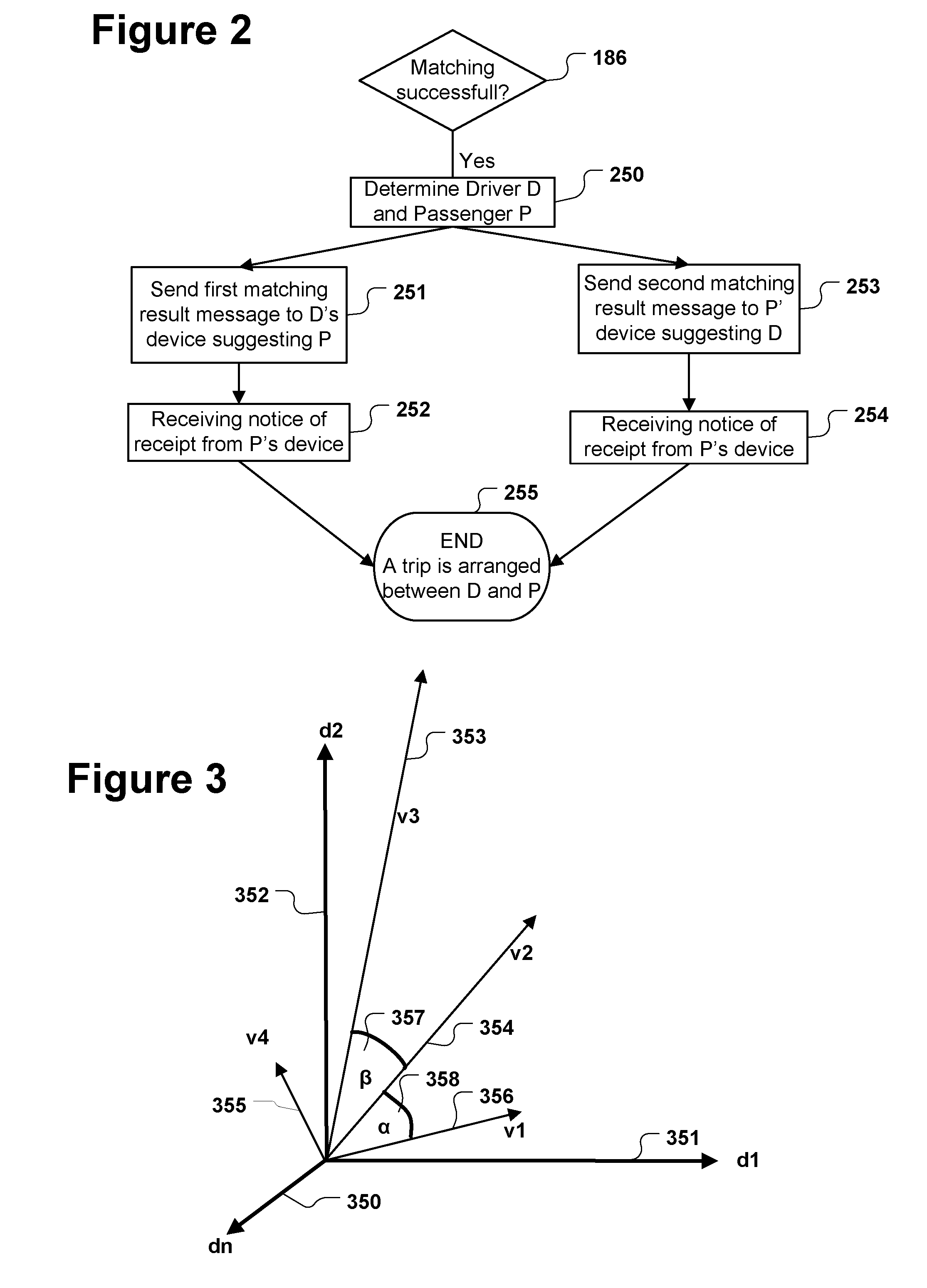

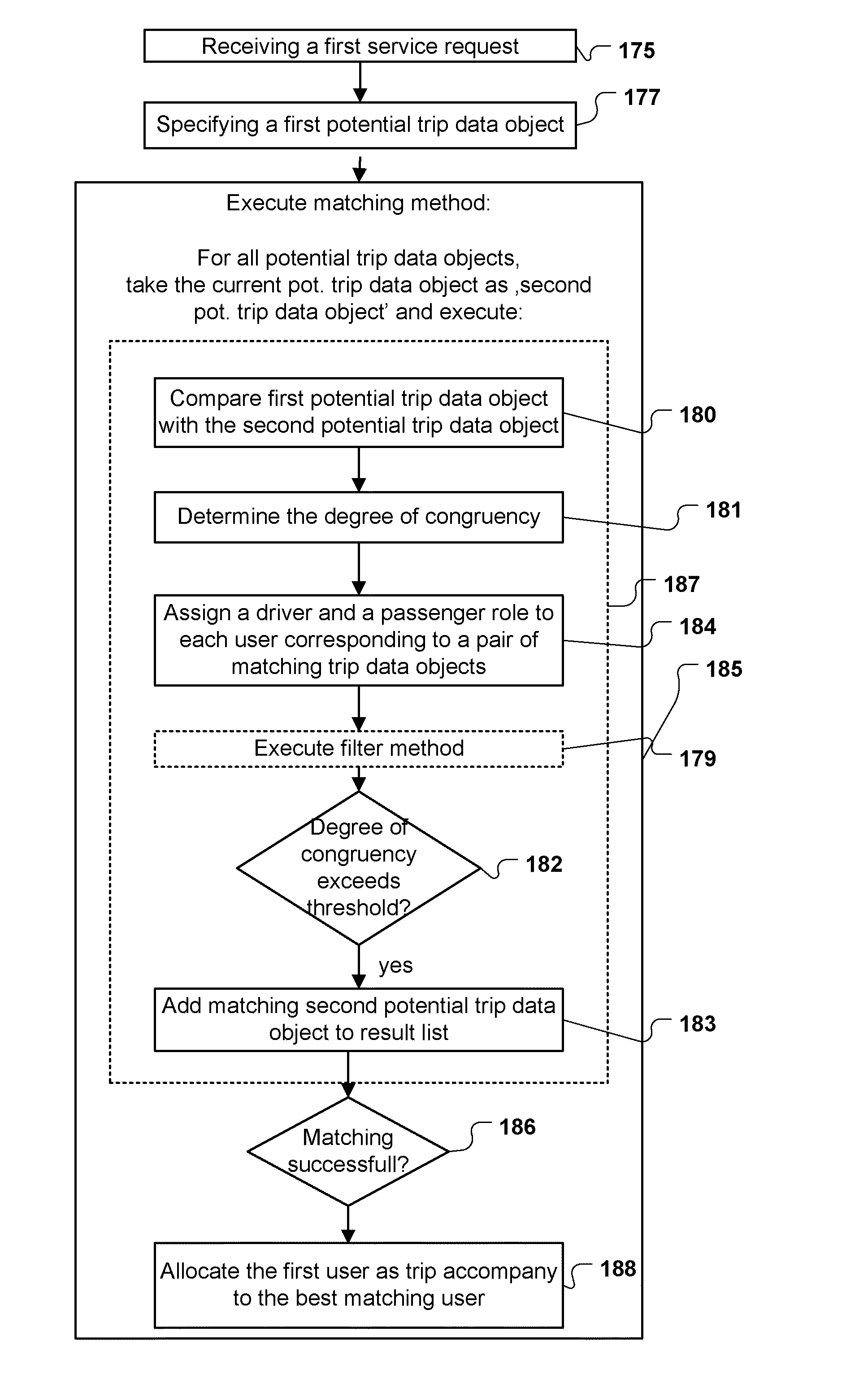

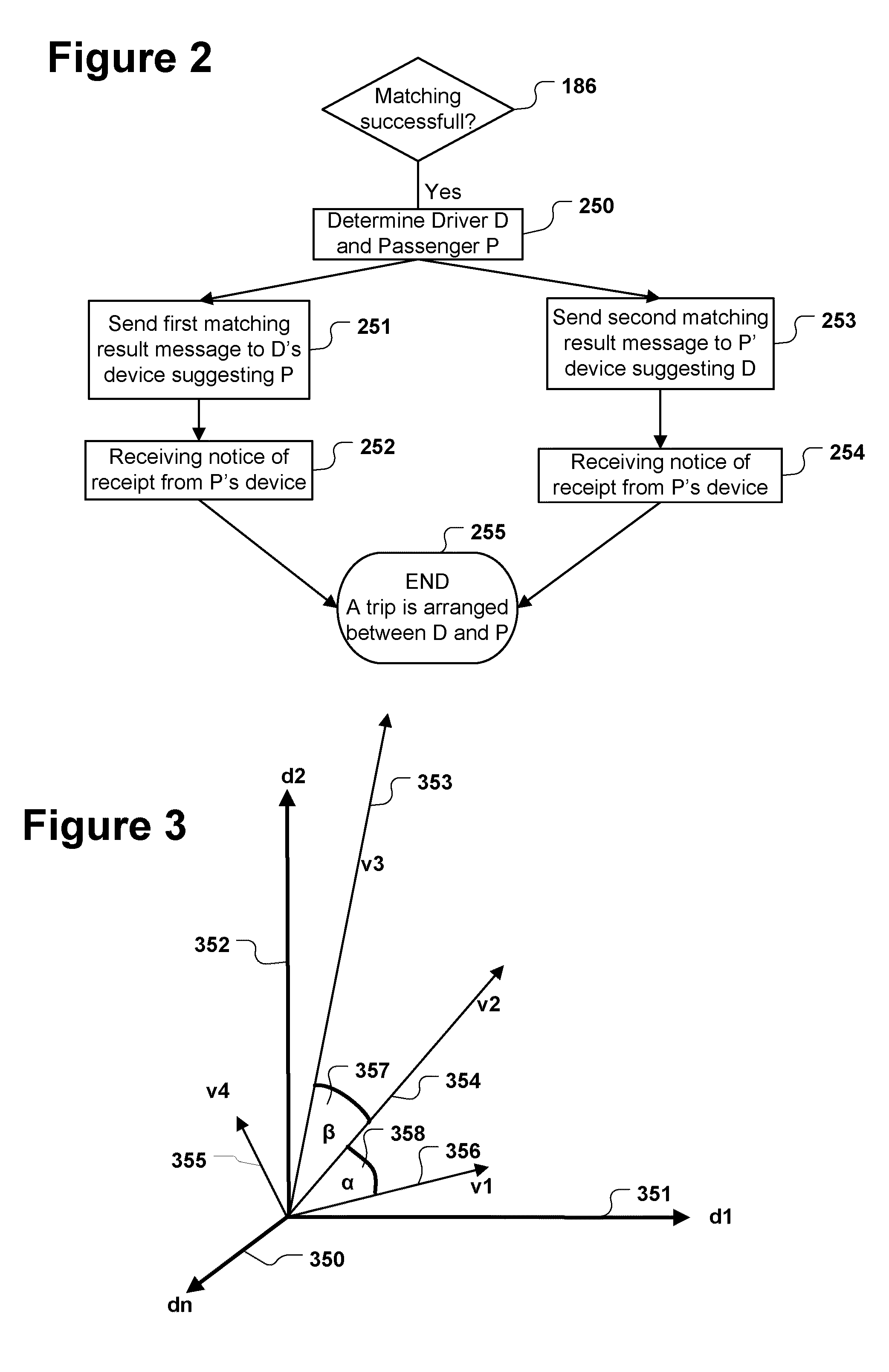

Computer implemented method for allocating drivers and passengers sharing a trip

Active | US20110153629A1 | Considerable effort | Considerable time | Input/output for user-computer interaction | Digital data processing details | Driver/operator | Result list

A computer implemented method for allocating drivers and passengers sharing a trip, the method being executed by a trip sharing service, the method comprising: receiving a first service request; specifying a first potential trip data object by the trip sharing service; executing a matching method, the matching method checking the first potential trip data object against at least a second potential trip data object, the matching method comprising for each checking of the first against the second potential trip data objects the steps of: comparing the specifications of the first potential trip data object with the specifications of the at least one second potential trip data object, determining the degree of congruency of the specifications of the compared potential trip data objects, assigning one role to the first and the second user; adding the second potential trip data object to a result list in case the determined degree of congruency between the first and the second potential trip data object exceeds a predefined threshold.
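The matching step in the abstract above can be illustrated with a minimal Python sketch. Everything concrete here is an assumption made for illustration: the `PotentialTrip` fields, the scoring weights in `congruency`, the rule in `assign_roles`, and the default threshold are hypothetical and are not taken from the patent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PotentialTrip:
    """Hypothetical potential trip data object."""
    user: str
    origin: str
    destination: str
    departure_minute: int  # minutes after midnight
    has_car: bool


def congruency(a, b, max_gap=60):
    """Score in [0, 1]; the weights are illustrative assumptions."""
    score = 0.0
    if a.origin == b.origin:
        score += 0.4
    if a.destination == b.destination:
        score += 0.4
    gap = abs(a.departure_minute - b.departure_minute)
    score += 0.2 * max(0.0, 1.0 - gap / max_gap)
    return score


def assign_roles(a, b):
    """Assumed rule: whoever has a car drives (the first user wins ties)."""
    if a.has_car:
        return {a.user: "driver", b.user: "passenger"}
    if b.has_car:
        return {b.user: "driver", a.user: "passenger"}
    return None  # nobody can drive, so the pair is not usable


def match(first, candidates, threshold=0.7):
    """Check the first trip against each candidate and build the result list."""
    result_list = []
    for second in candidates:
        score = congruency(first, second)
        roles = assign_roles(first, second)
        # Keep the candidate only if the congruency exceeds the threshold.
        if roles is not None and score > threshold:
            result_list.append((second, score, roles))
    return result_list
```

Calling `match(first, candidates)` returns only those candidates whose score exceeds the threshold, each paired with its score and the assigned roles.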

Owner: SAP AG

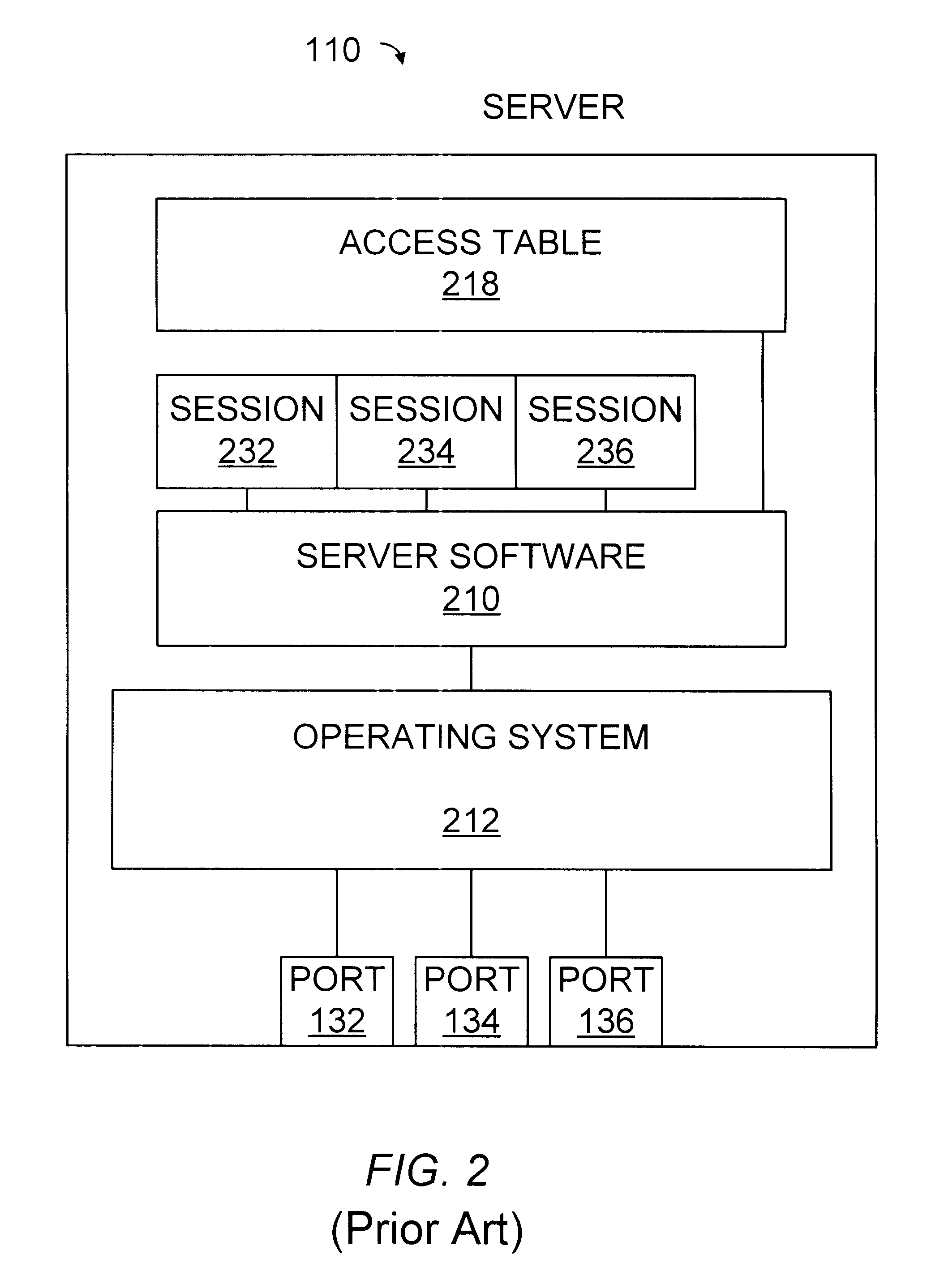

System using session data stored in session data storage for associating and disassociating user identifiers for switching client sessions in a server

A method and apparatus allows clients to share ports on a server. The server can maintain more sessions than server ports. When a client sends a command directed to the server, a resource manager inserted between the clients and the server intercepts the command and directs the server to select the session associated with a client prior to or at the same time that the resource manager forwards the intercepted command to the server. Responses from the server are forwarded by the resource manager to the client that sent the command to which the response relates. The resource manager may be coupled to multiple clients, and one or more ports of one or more servers.
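As a rough illustration of the idea in this abstract, the sketch below shows a resource manager that lets more client sessions than server ports coexist by selecting the client's session on a port before forwarding each command. The `Server` and `ResourceManager` classes, the round-robin port choice, and the reply format are all hypothetical simplifications, not the patented design.

```python
class Server:
    """Toy server: a fixed number of ports, any number of sessions."""

    def __init__(self, num_ports):
        self.num_ports = num_ports
        self.sessions = {}   # session id -> list of commands seen so far
        self.selected = {}   # port -> session id currently selected on it

    def select_session(self, port, session_id):
        # Create the session on first use, then bind it to the port.
        self.sessions.setdefault(session_id, [])
        self.selected[port] = session_id

    def execute(self, port, command):
        session_id = self.selected[port]
        self.sessions[session_id].append(command)
        return f"{session_id}:{command}:ok"


class ResourceManager:
    """Sits between clients and the server and multiplexes the ports."""

    def __init__(self, server):
        self.server = server
        self.next_port = 0

    def send(self, client_id, command):
        # Assumed policy: pick ports round-robin.
        port = self.next_port
        self.next_port = (self.next_port + 1) % self.server.num_ports
        # Direct the server to the client's session, then forward the command.
        self.server.select_session(port, client_id)
        # The reply goes back to the client that issued the command.
        return self.server.execute(port, command)
```

With a two-port server, three clients can each keep their own session because the session is re-selected on every forwarded command.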

Owner: ORACLE INT CORP

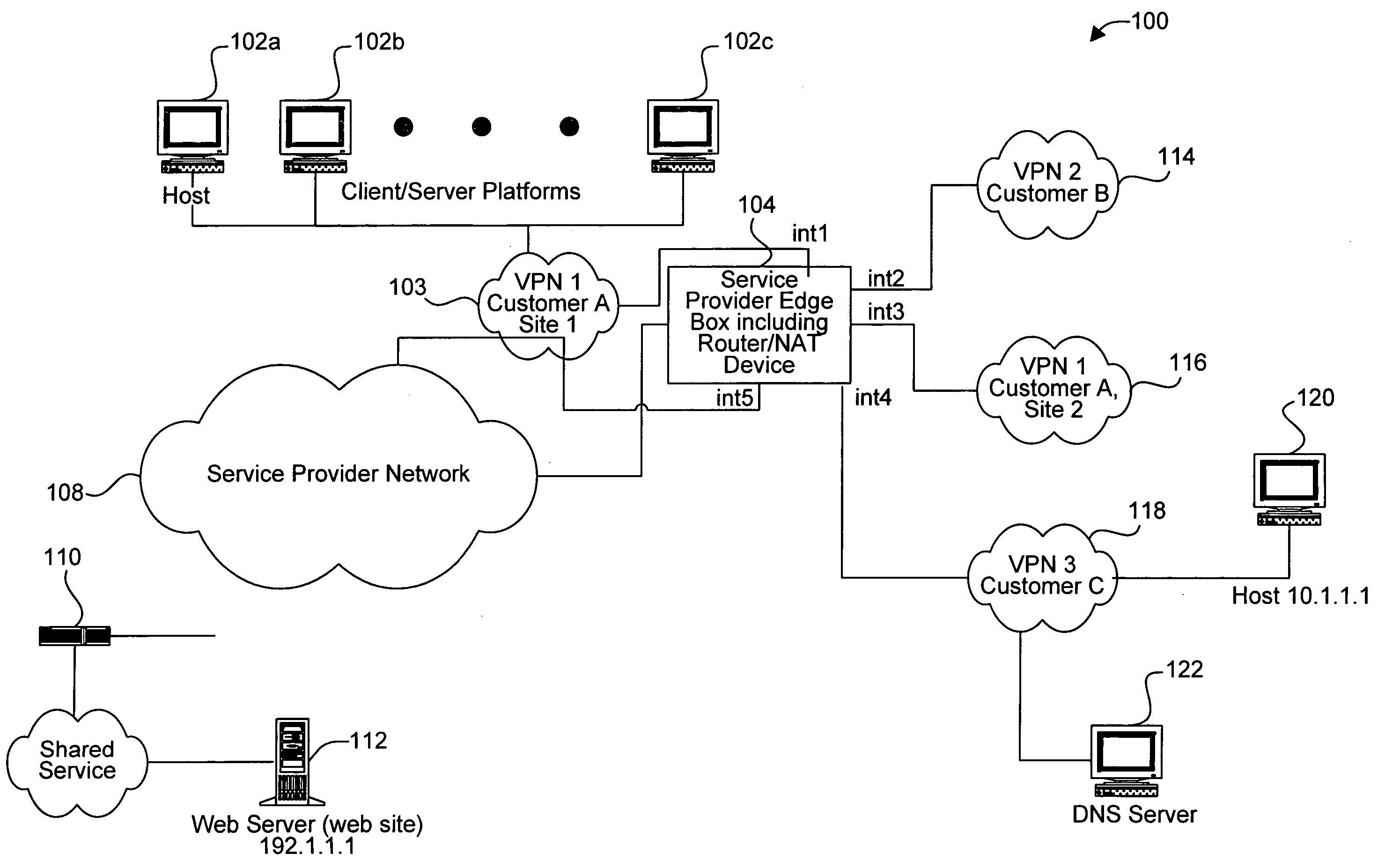

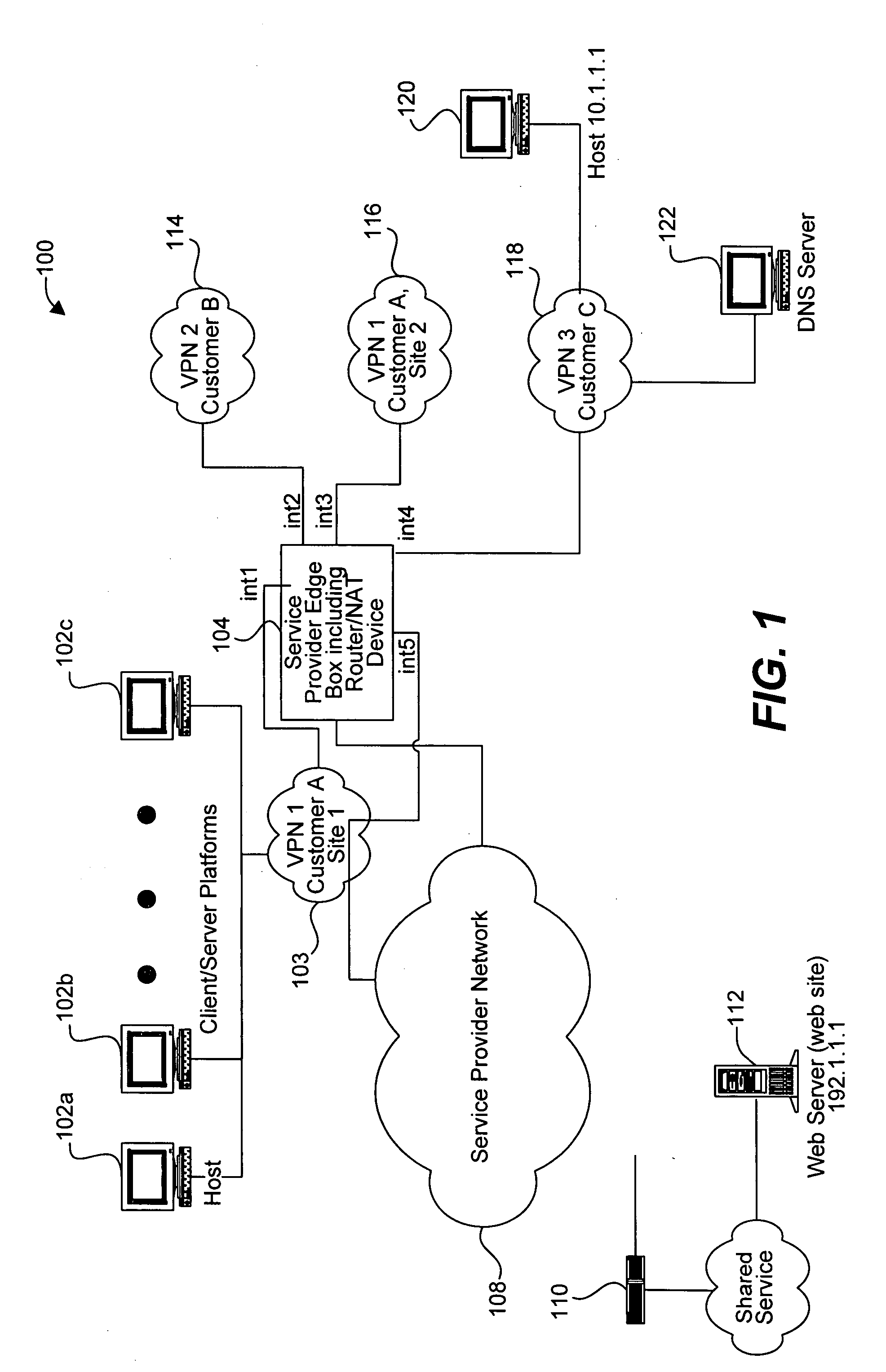

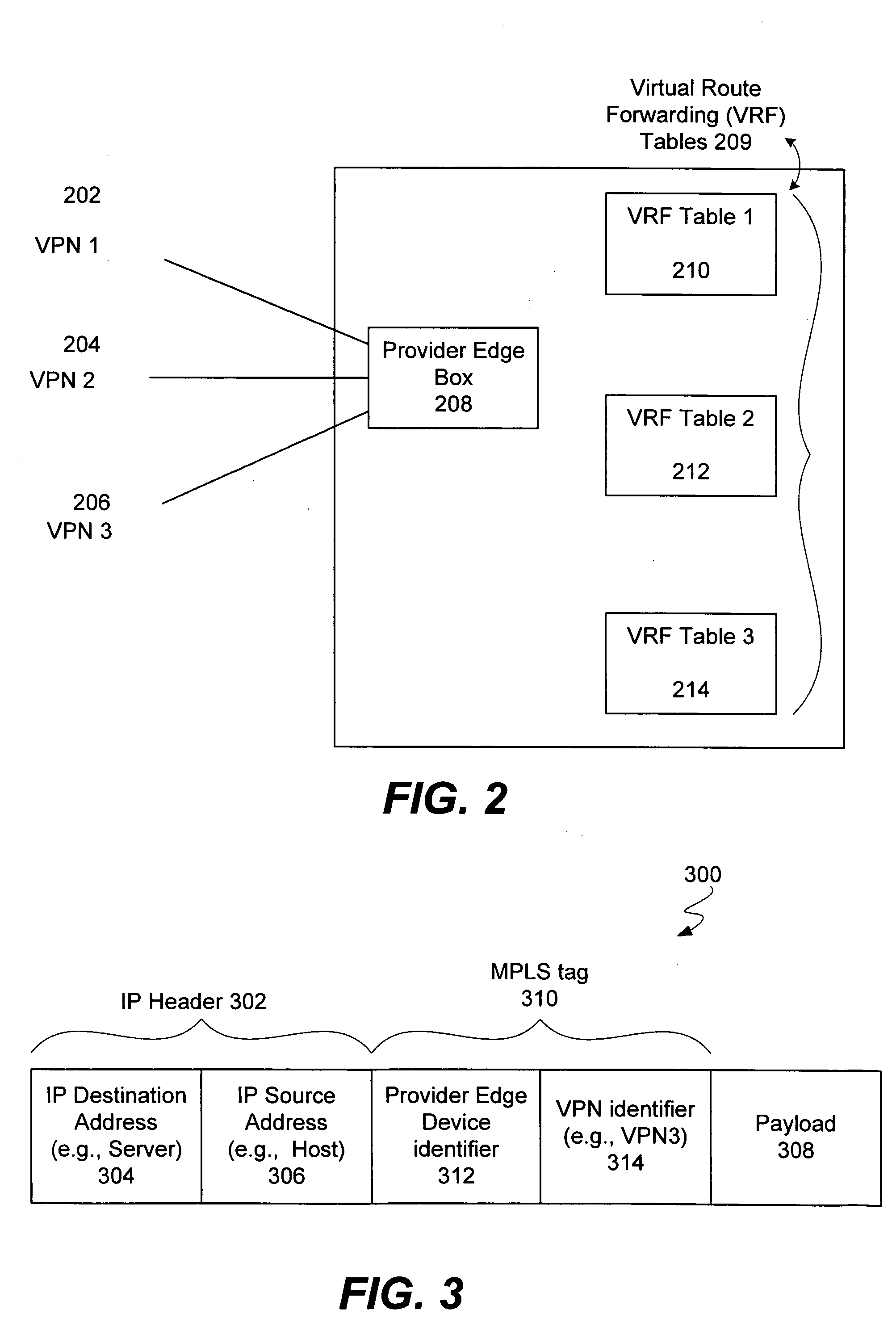

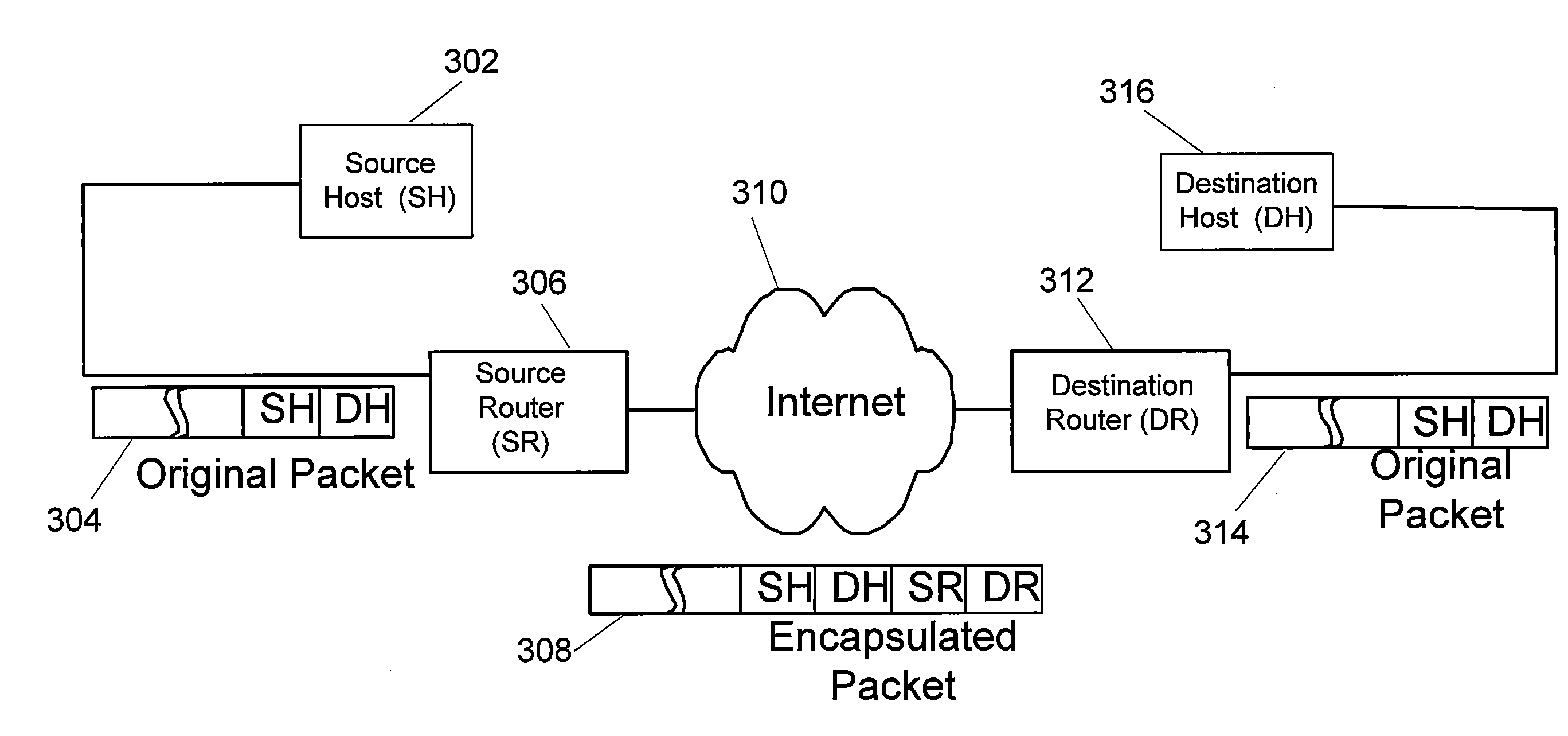

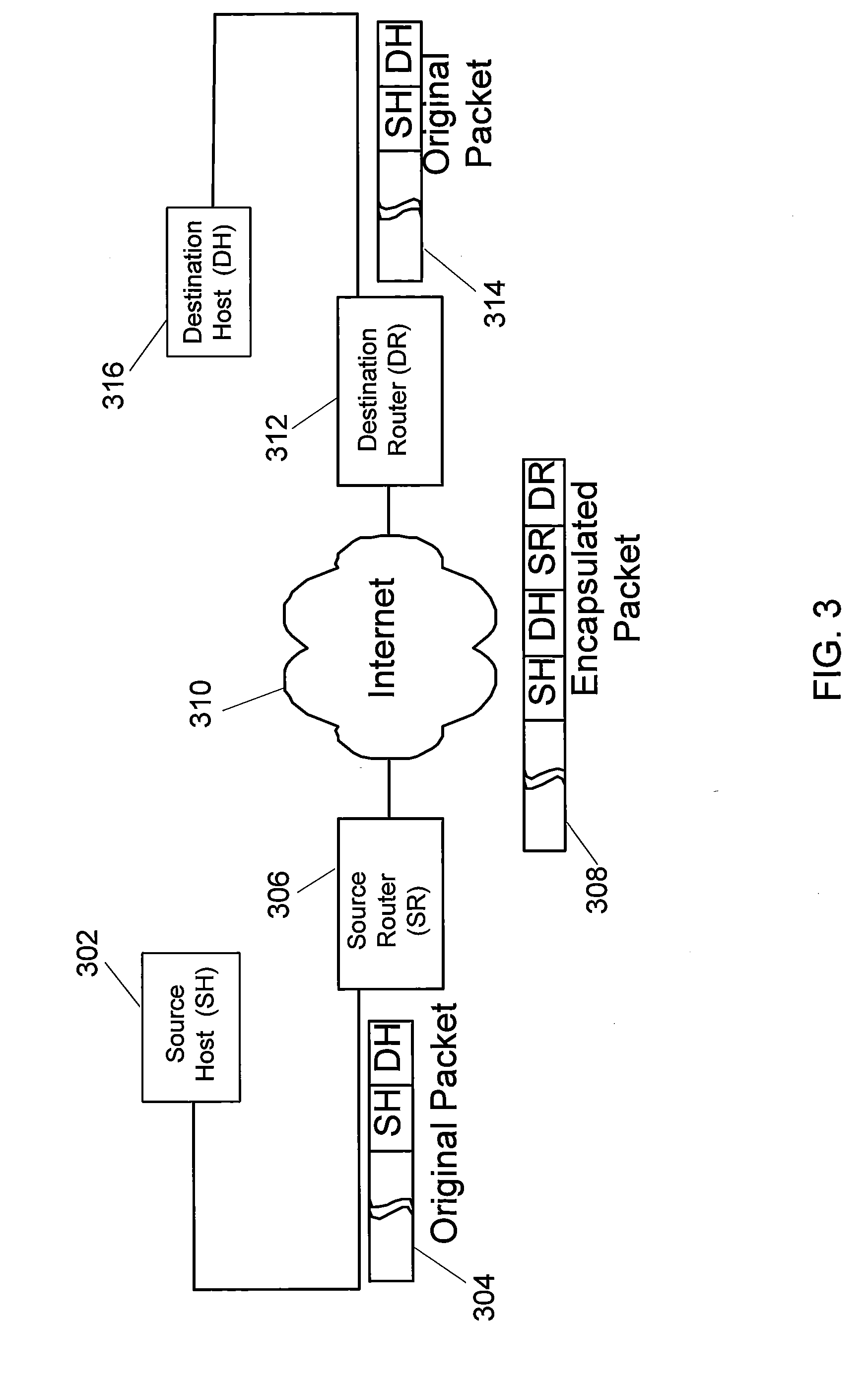

Apparatus and methods for handling shared services through virtual route forwarding (VRF)-aware NAT

Methods and apparatus for performing NAT are disclosed. Specifically, NAT is performed at a service provider network device associated with an interface of a service provider network. When a packet is sent from a VPN to a node outside the service provider network (e.g., to access a shared service), the packet includes a VPN identifier (or VRF identifier). In accordance with various embodiments, each packet includes an MPLS tag that includes the VPN identifier. The VPN identifier is stored in a translation table entry. Storing the VPN identifier enables a reply packet from the shared service network to the customer VPN to be routed using a routing table identified by the VPN identifier.
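The role of the VPN identifier in the translation table can be sketched in a few lines of Python. This is a toy model under stated assumptions: the `VrfAwareNat` class, the port-based translation key, the starting port number, and the dictionary-based routing tables are hypothetical and stand in for real packet processing.

```python
class VrfAwareNat:
    """Toy NAT whose translation entries remember the source VPN."""

    def __init__(self, public_ip, routing_tables):
        self.public_ip = public_ip
        # vpn_id -> {private destination ip: next hop}, one table per VPN
        self.routing_tables = routing_tables
        # public port -> (vpn_id, private ip, private port)
        self.translations = {}
        self.next_port = 40000  # arbitrary starting port for the sketch

    def outbound(self, vpn_id, src_ip, src_port):
        """Translate a packet leaving a VPN toward the shared service."""
        public_port = self.next_port
        self.next_port += 1
        # Store the VPN identifier alongside the private address.
        self.translations[public_port] = (vpn_id, src_ip, src_port)
        return self.public_ip, public_port

    def inbound(self, public_port):
        """Translate a reply and route it with the VPN's own routing table."""
        vpn_id, private_ip, private_port = self.translations[public_port]
        next_hop = self.routing_tables[vpn_id][private_ip]
        return vpn_id, private_ip, private_port, next_hop
```

Because each entry carries the VPN identifier, two customers that both use the private address `10.0.0.5` still receive their replies through the correct routing table.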

Owner: CISCO TECH INC

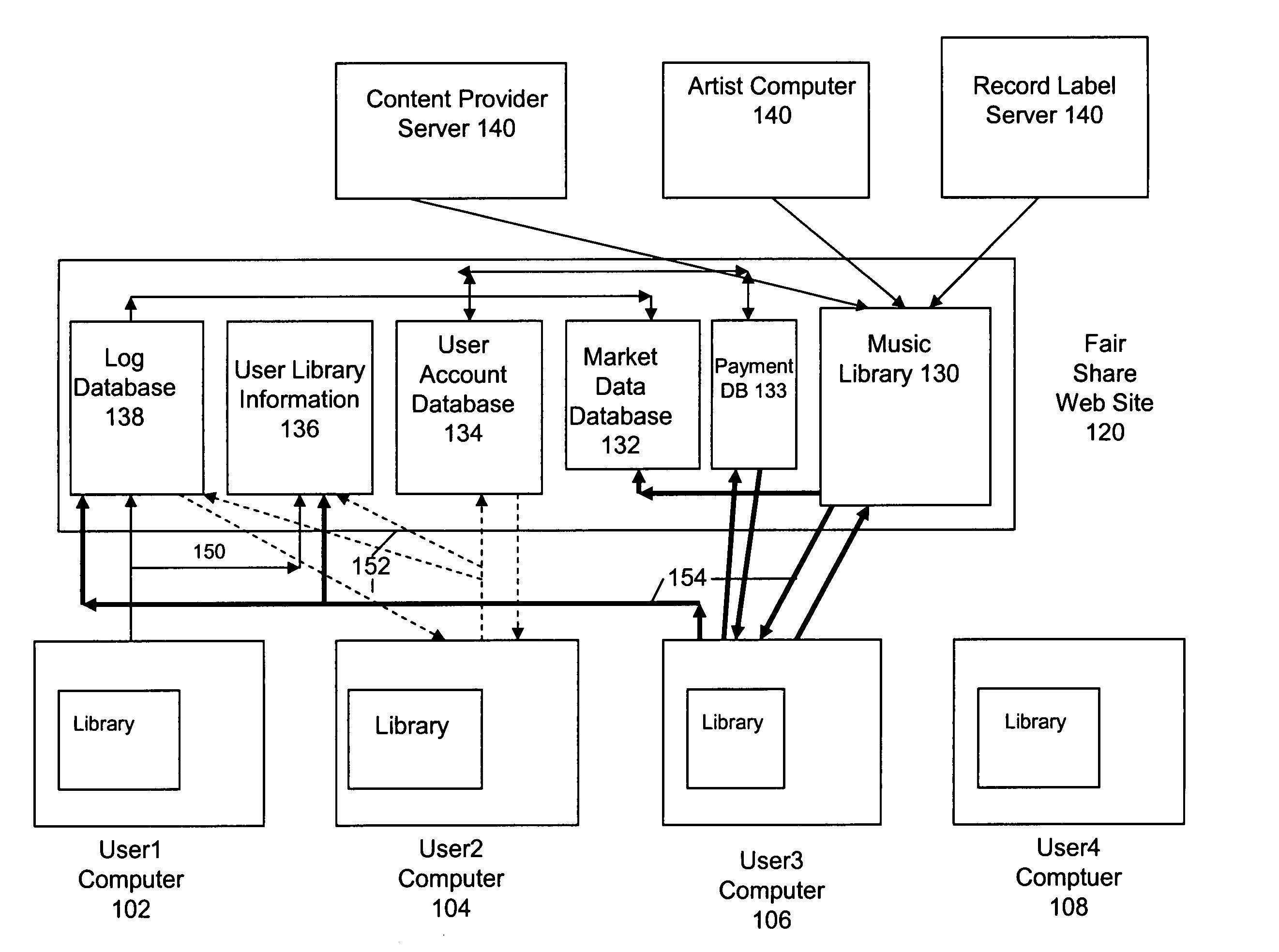

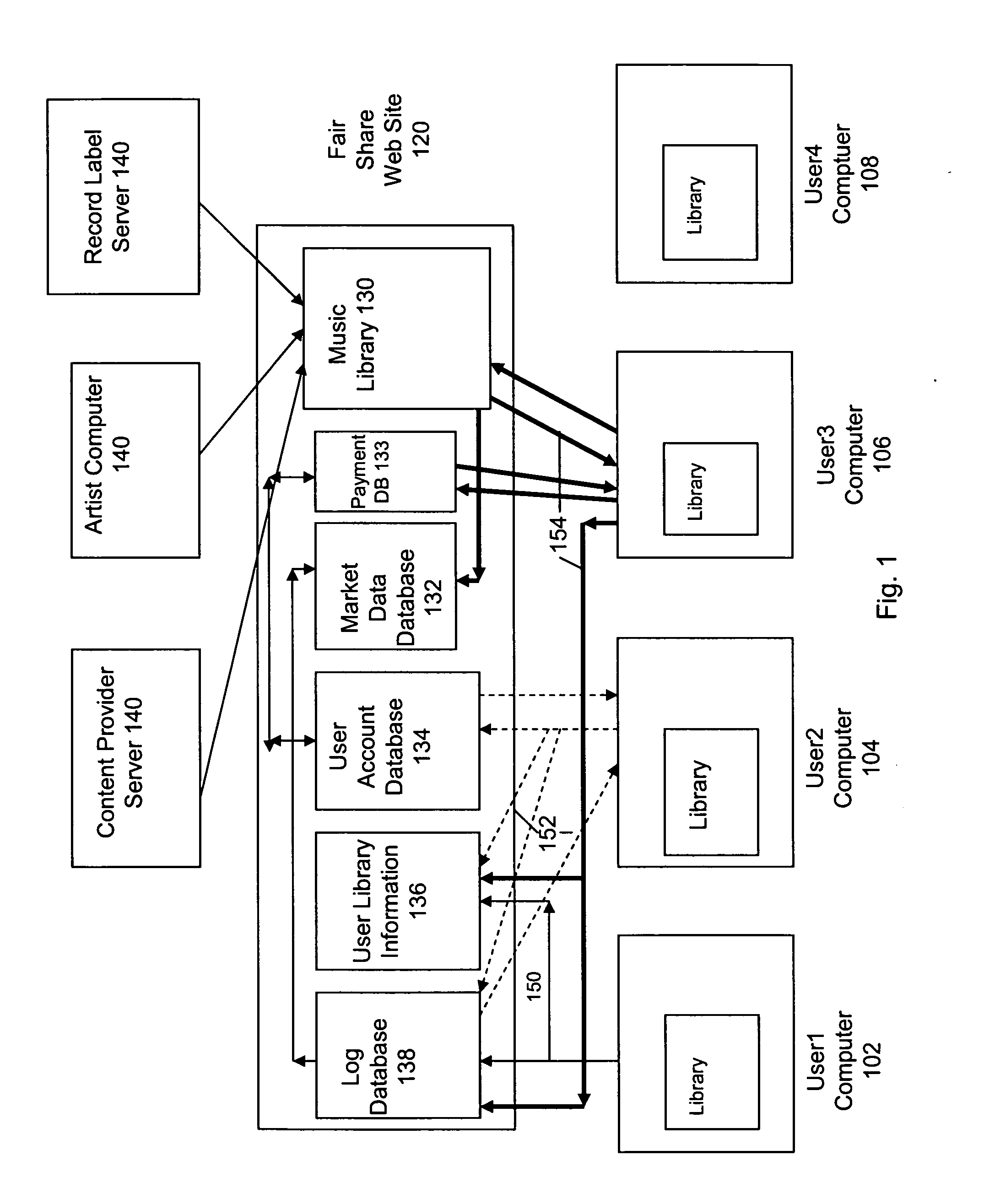

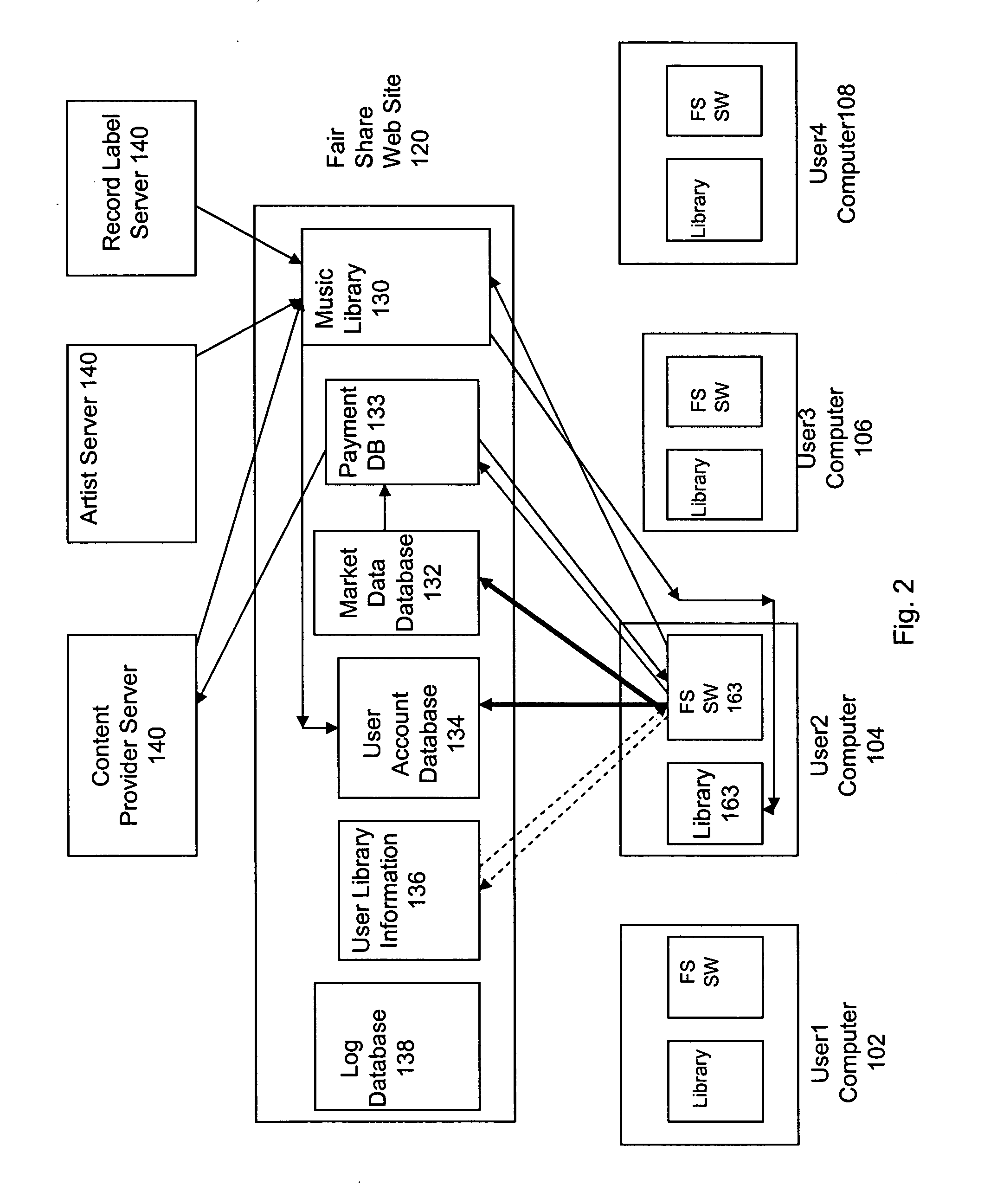

Digital media distribution and trading system used via a computer network

A digital media file sharing system includes a fair share server, a user computer, and a second user computer. The fair share server includes a user library information database and a media library, the media library storing digital media files. A user computer includes a user computer media library and the user computer media library stores user digital media files. A second user computer includes a second user computer media library and requests the downloading of a selected digital media file from the user library information database. The second user computer downloads the selected digital media file from the user computer media library. The user computer is allocated a credit in the payment database of the fair share server for providing the selected digital media file to the second user computer.

Owner: FAIR SHARE DIGITAL MEDIA DISTRIBUTION

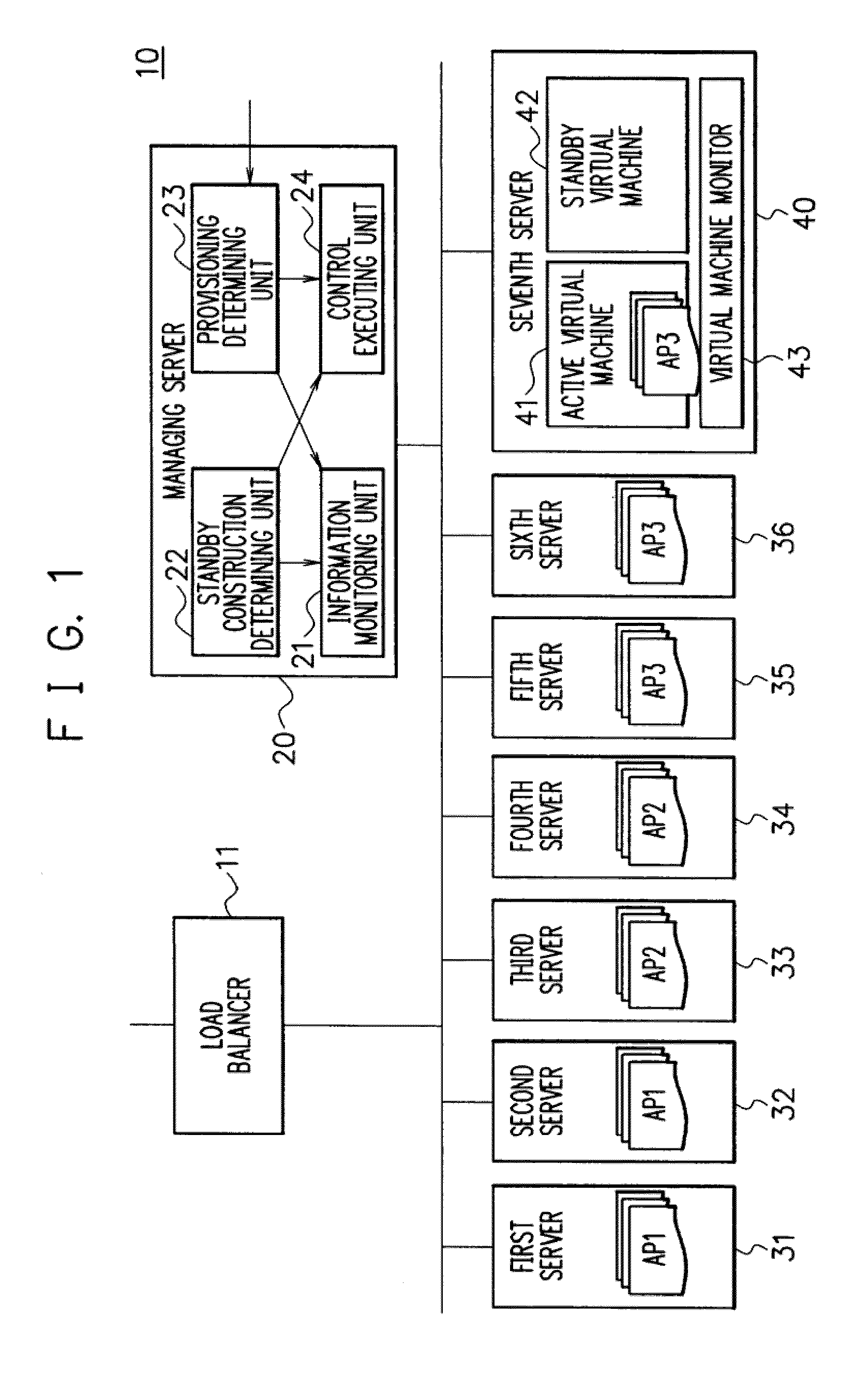

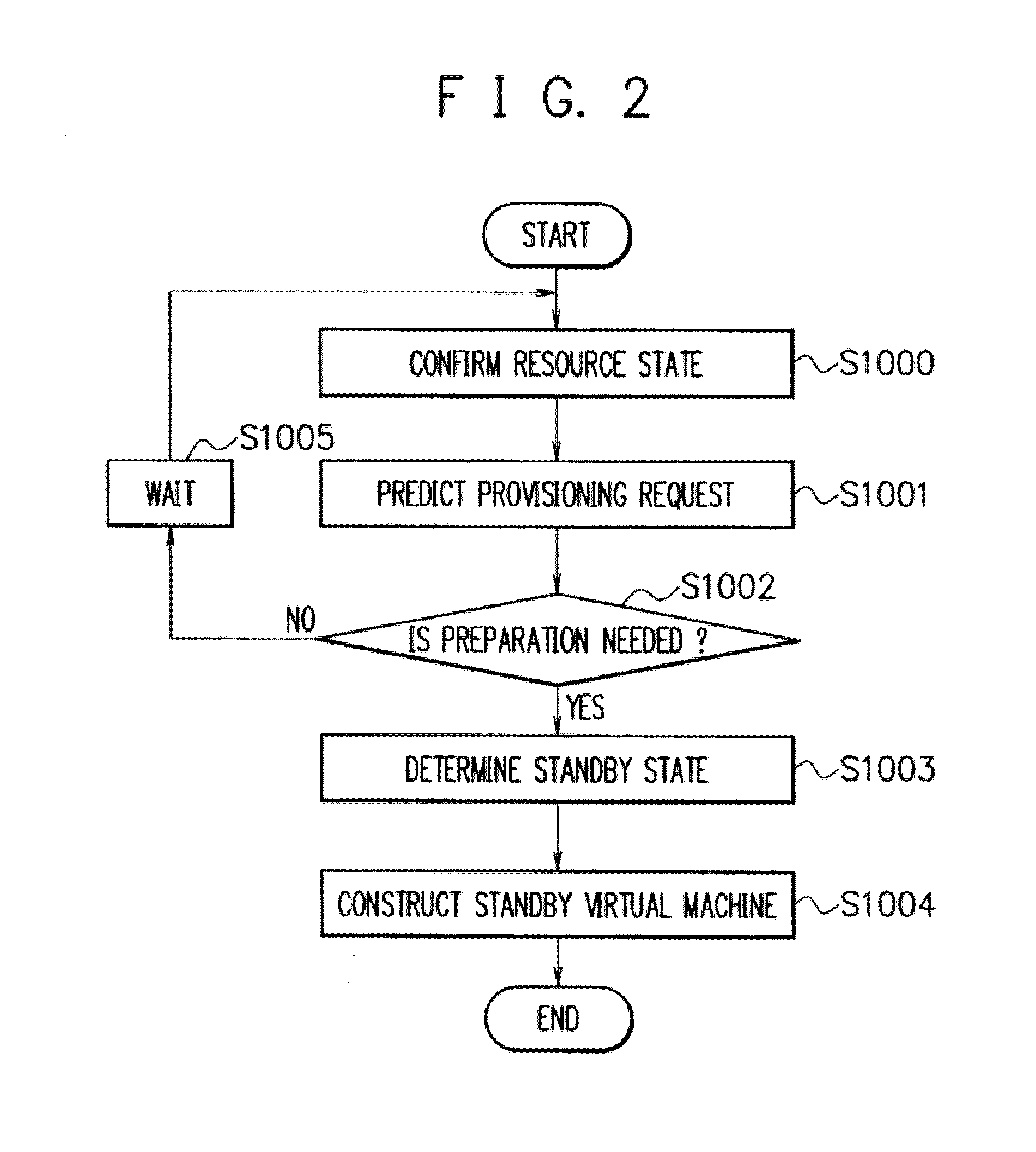

Provisioning system, method, and program

Active | US20100058342A1 | Addressing slow performance | Easy to use | Resource allocation | Software simulation/interpretation/emulation | Resource allocation | Computer science

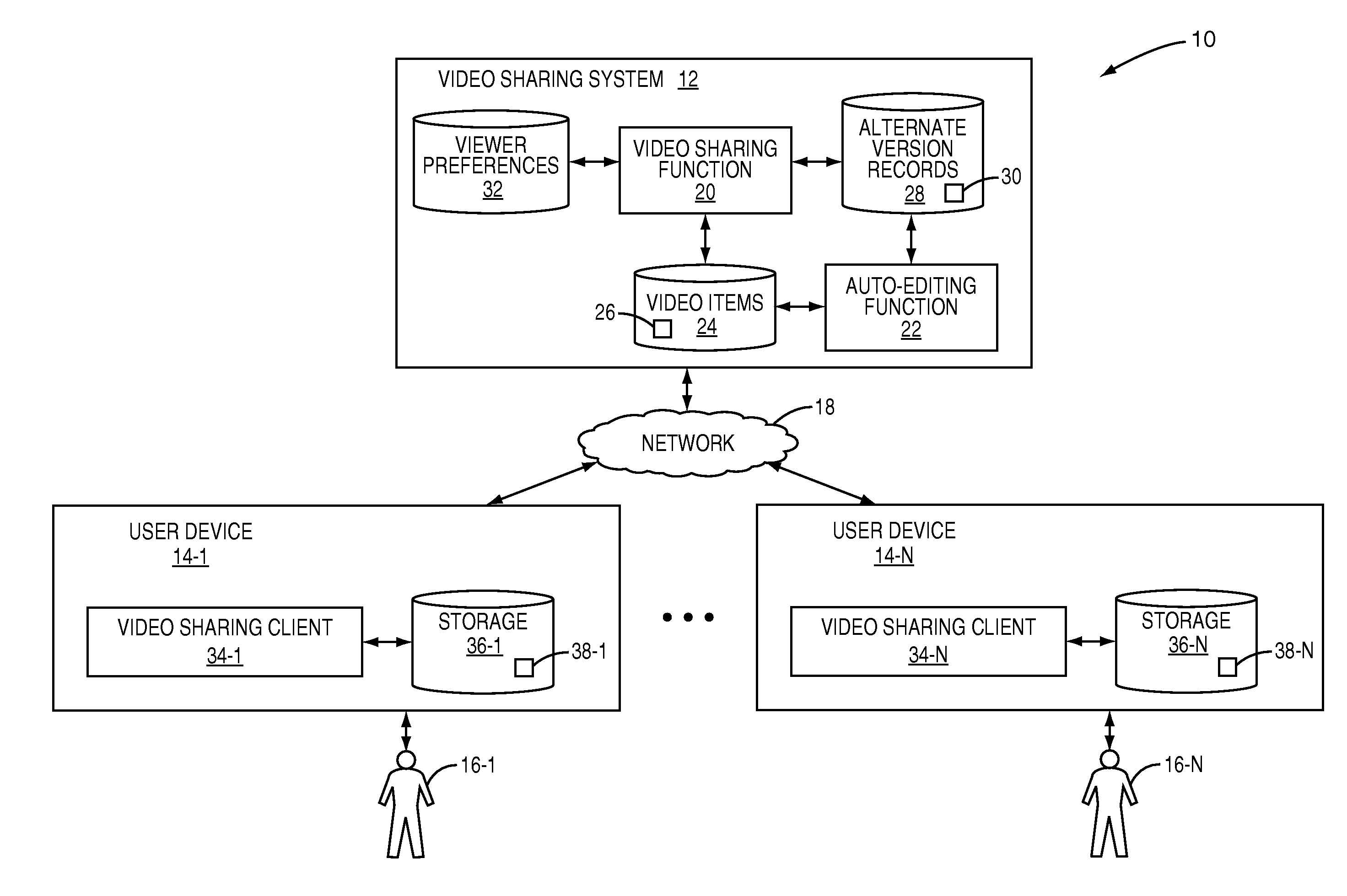

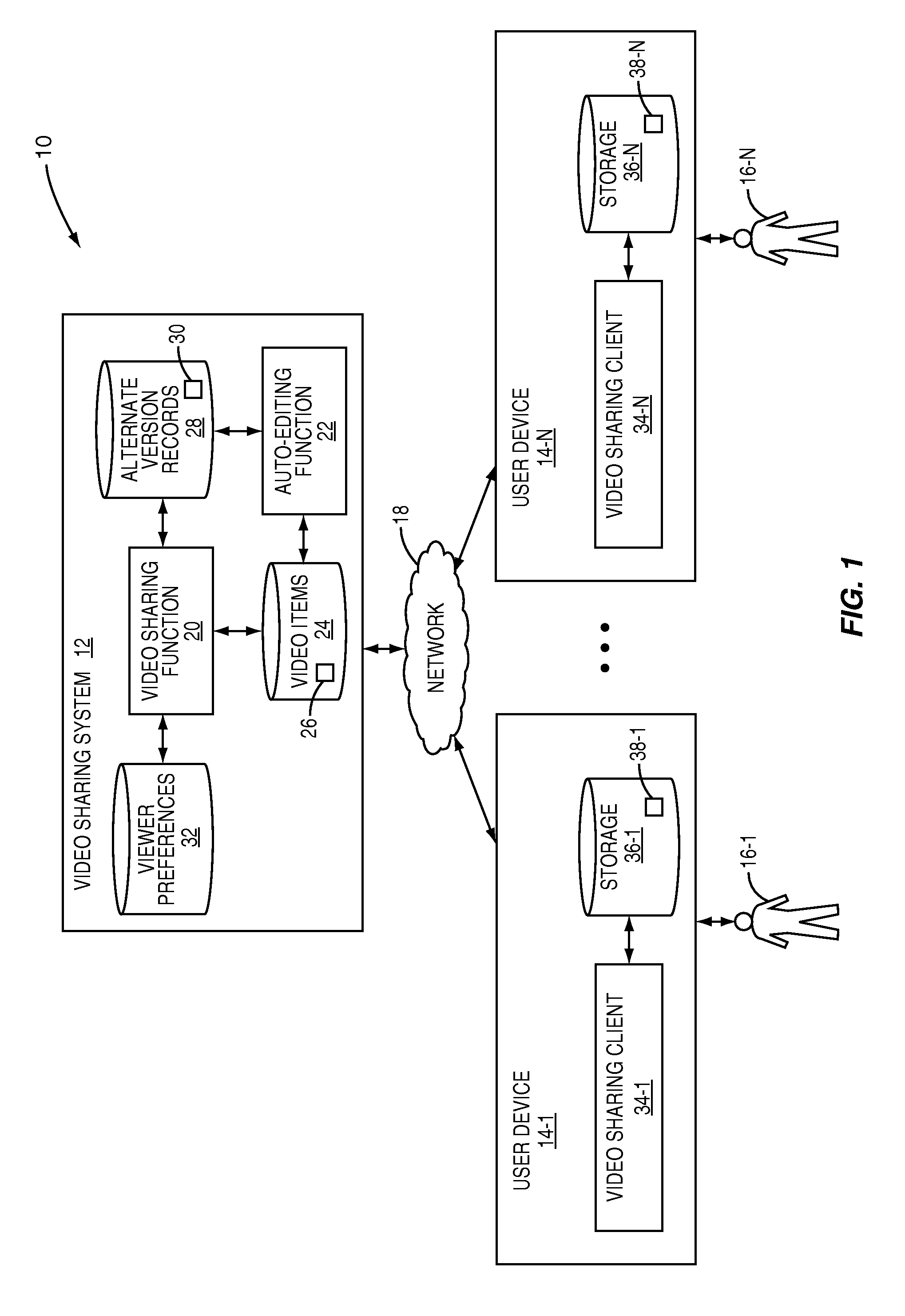

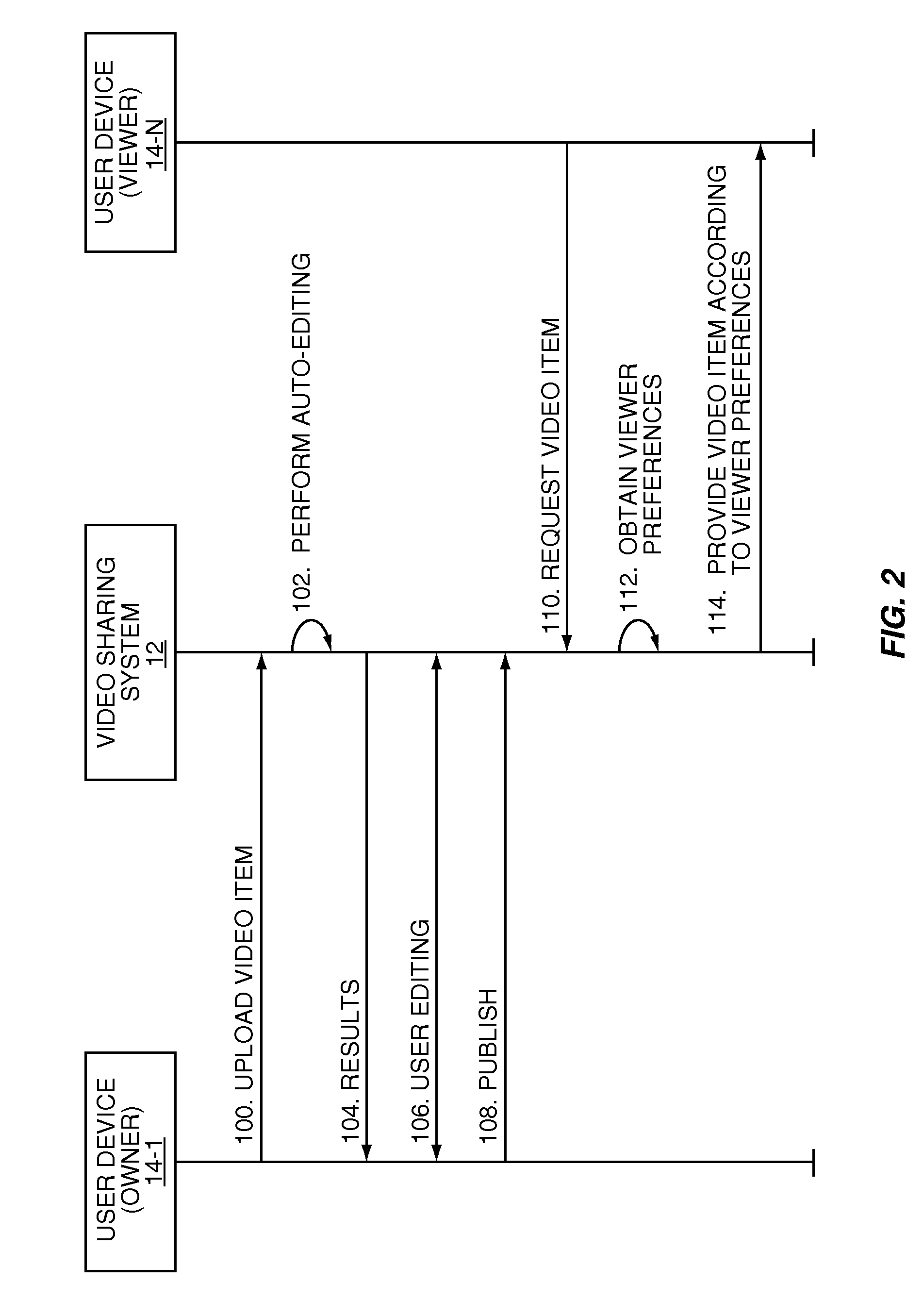

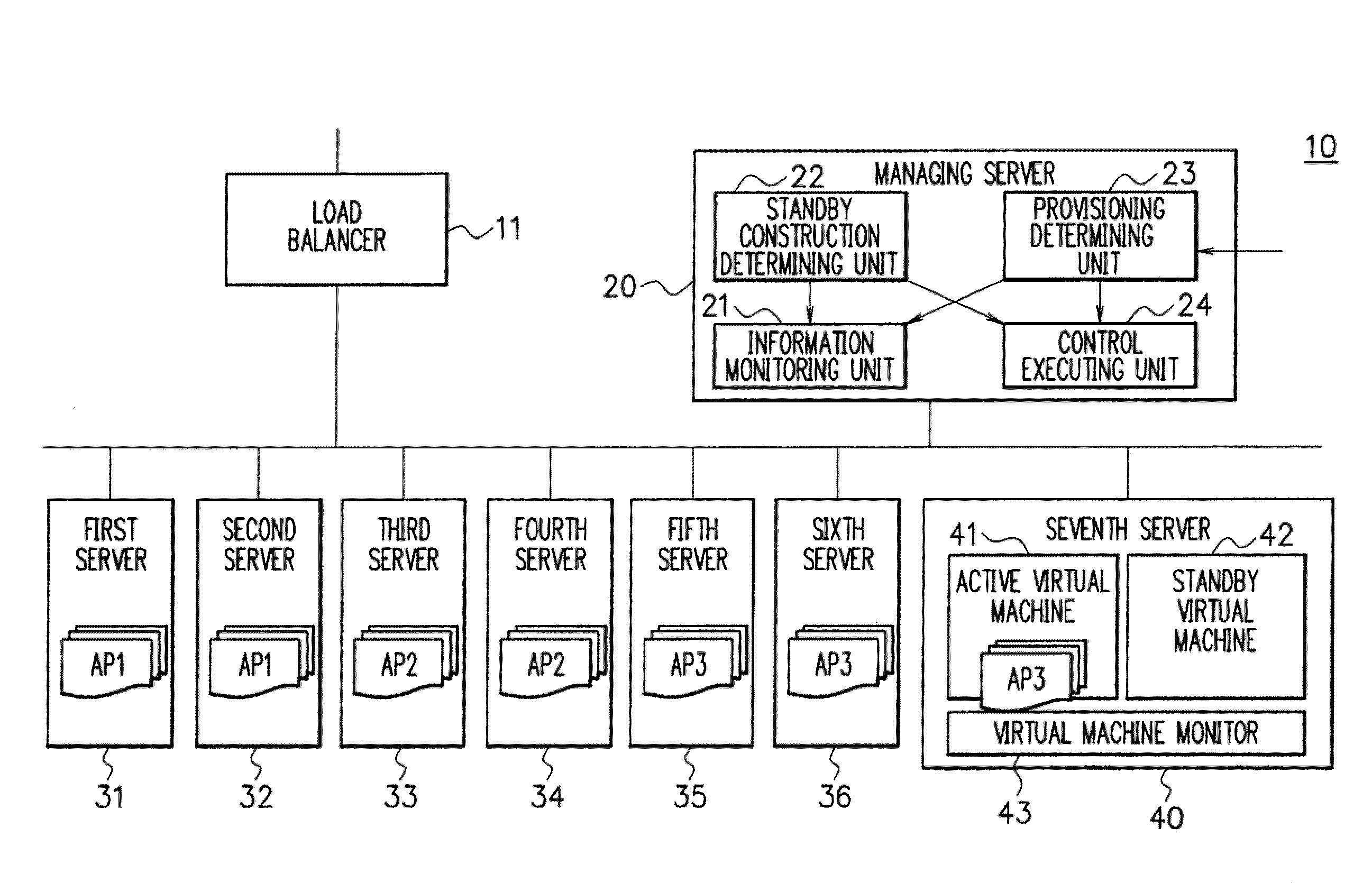

A shared server 40 includes an active virtual machine 41, to which a sufficient amount of resources is allocated for operating an application system, and a standby virtual machine 42 that starts with a minimum amount of resources. When it is predicted that a provisioning request will be generated, a standby construction determining unit 22 executes provisioning on the standby virtual machine 42 in advance, starting an OS and an application or changing the settings of a network apparatus. A provisioning determining unit 23 changes the resource allocation amounts of the active virtual machine 41 and the standby virtual machine 42, allocates a sufficient amount of resources to the standby virtual machine 42, registers the standby virtual machine 42 as a target of load balancing in a load balancer 11, and executes provisioning.

Owner: NEC CORP

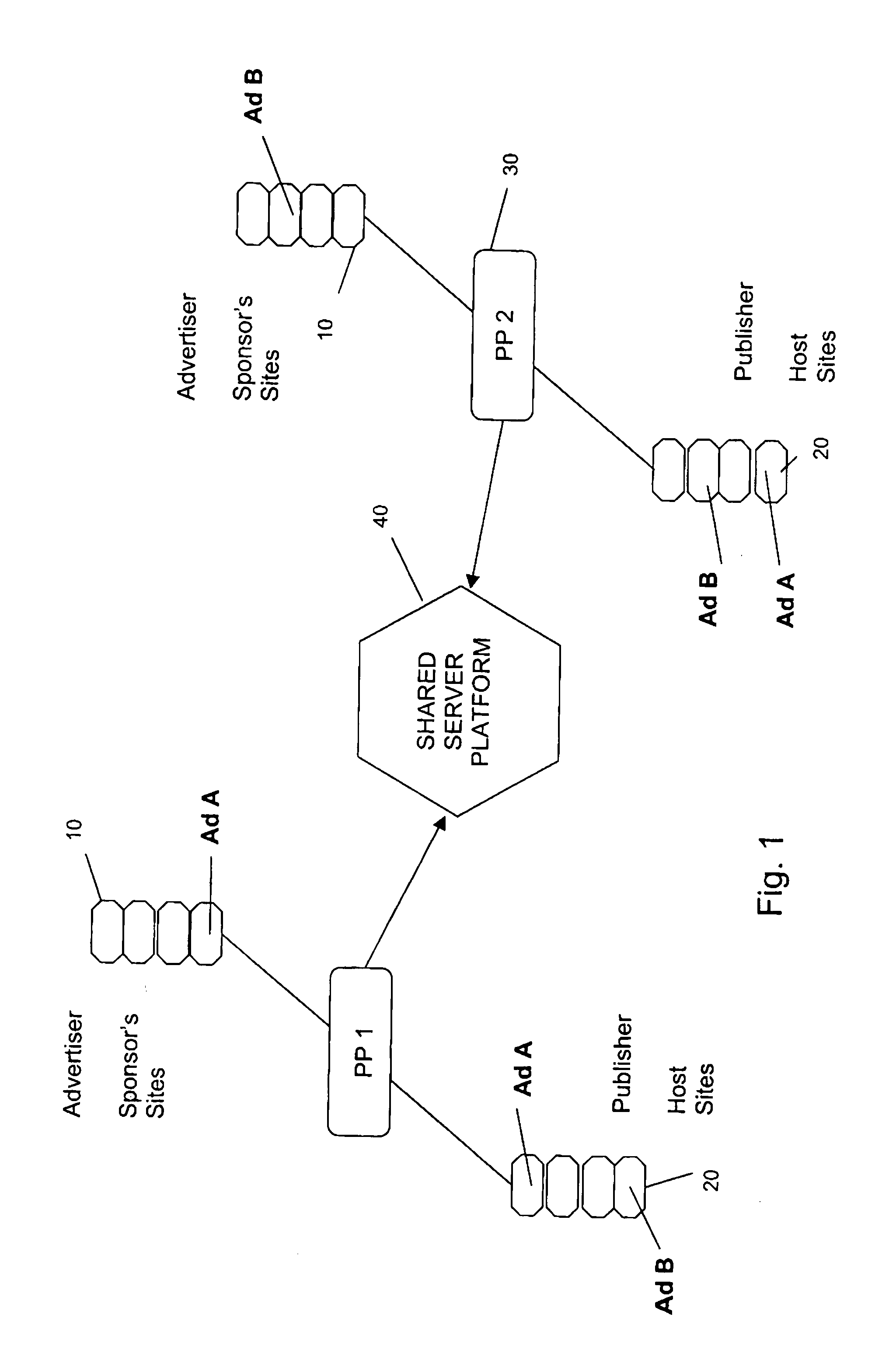

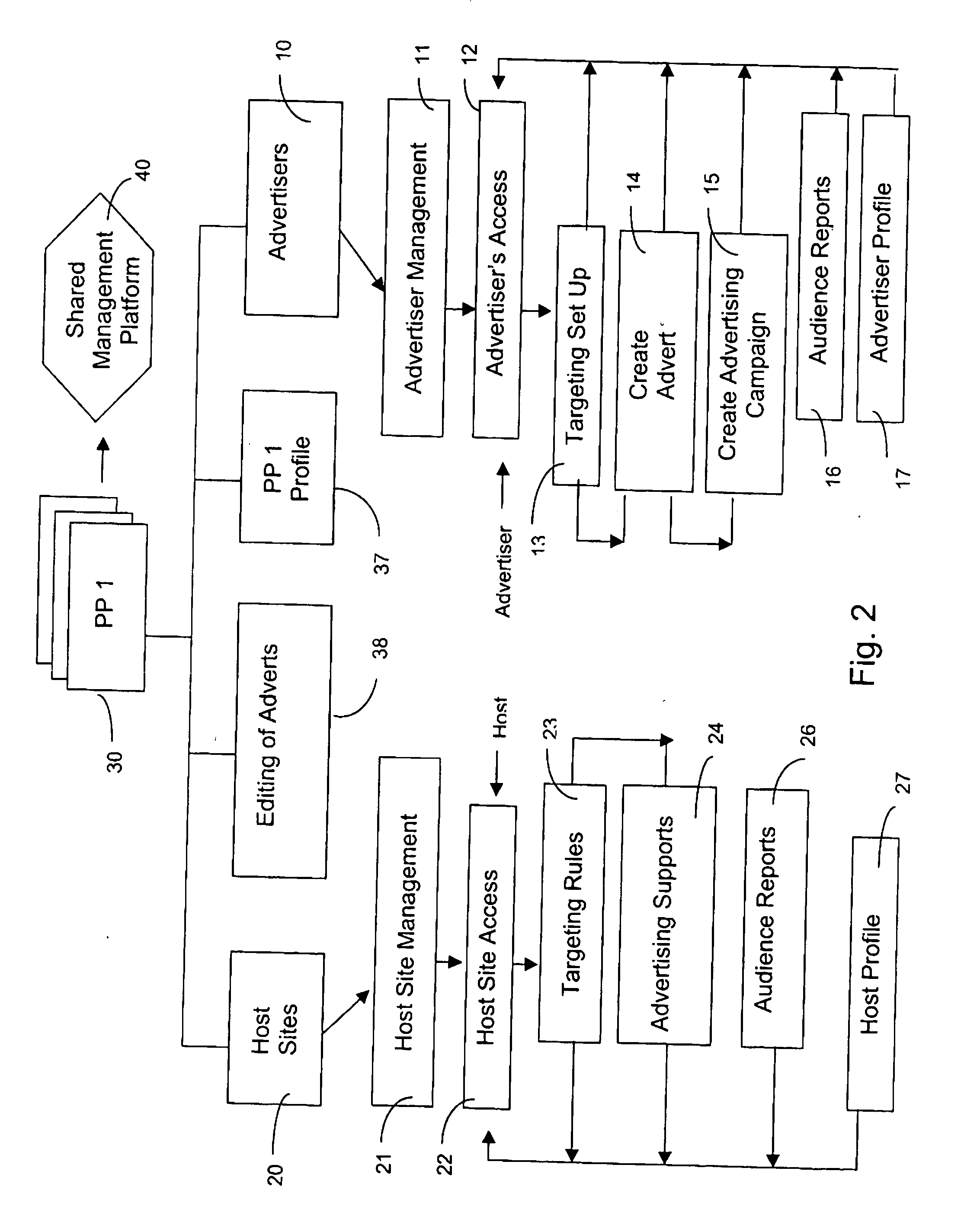

Platform for managing the targeted display of advertisements in a computer network

Inactive | US20050209874A1 | Increase flexibility | Website content management | Special data processing applications | Web site | Distributed computing

A system for the targeted contextual display of advertisements in a distributed computer network such as the Internet comprises management platforms (30, PP1, PP2) each having a sponsor site management unit (11) accessible to sponsors, a host site management unit (21) accessible to hosts and means for matching keywords, categories and/or parameters of the sponsor sites with those of the host sites for the targeted contextual display of advertising links from sponsor sites (10) on host sites (20). A shared server platform (40) is common to and interconnects the management platforms (30, PP1, PP2). Each management platform (30, PP1, PP2) is associated with its own sponsor sites (10) and its own host sites (20) and constitutes an autonomous system for management of the targeted contextual display of advertising links, that can be operated under the operator's own brand. The shared server platform (40) is arranged to enable the targeted contextual display of advertising links (Ad A, Ad B) from sponsor sites (10) on host sites (20) associated with different management platforms (30, PP1, PP2).

Owner: ADSCLICK

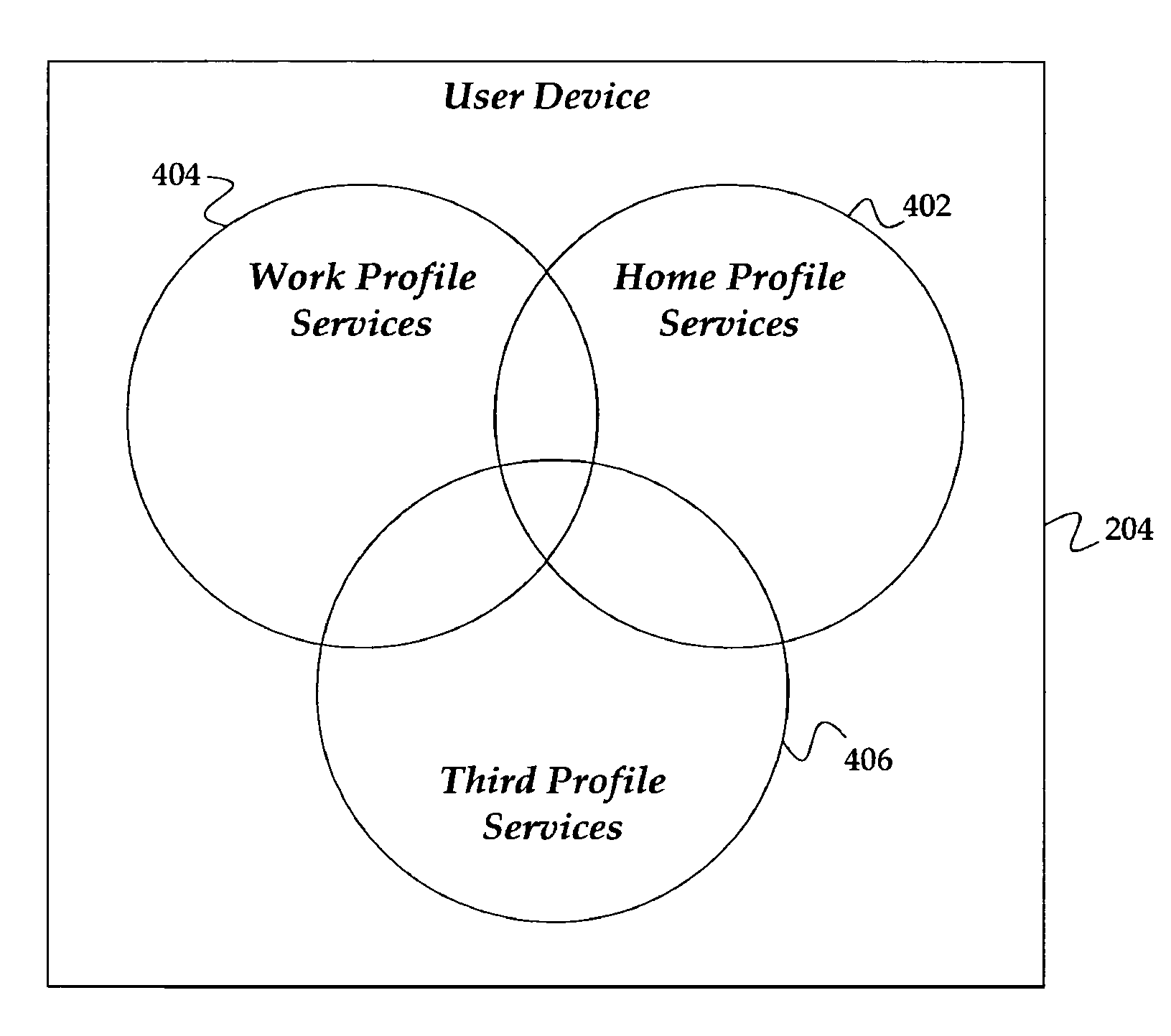

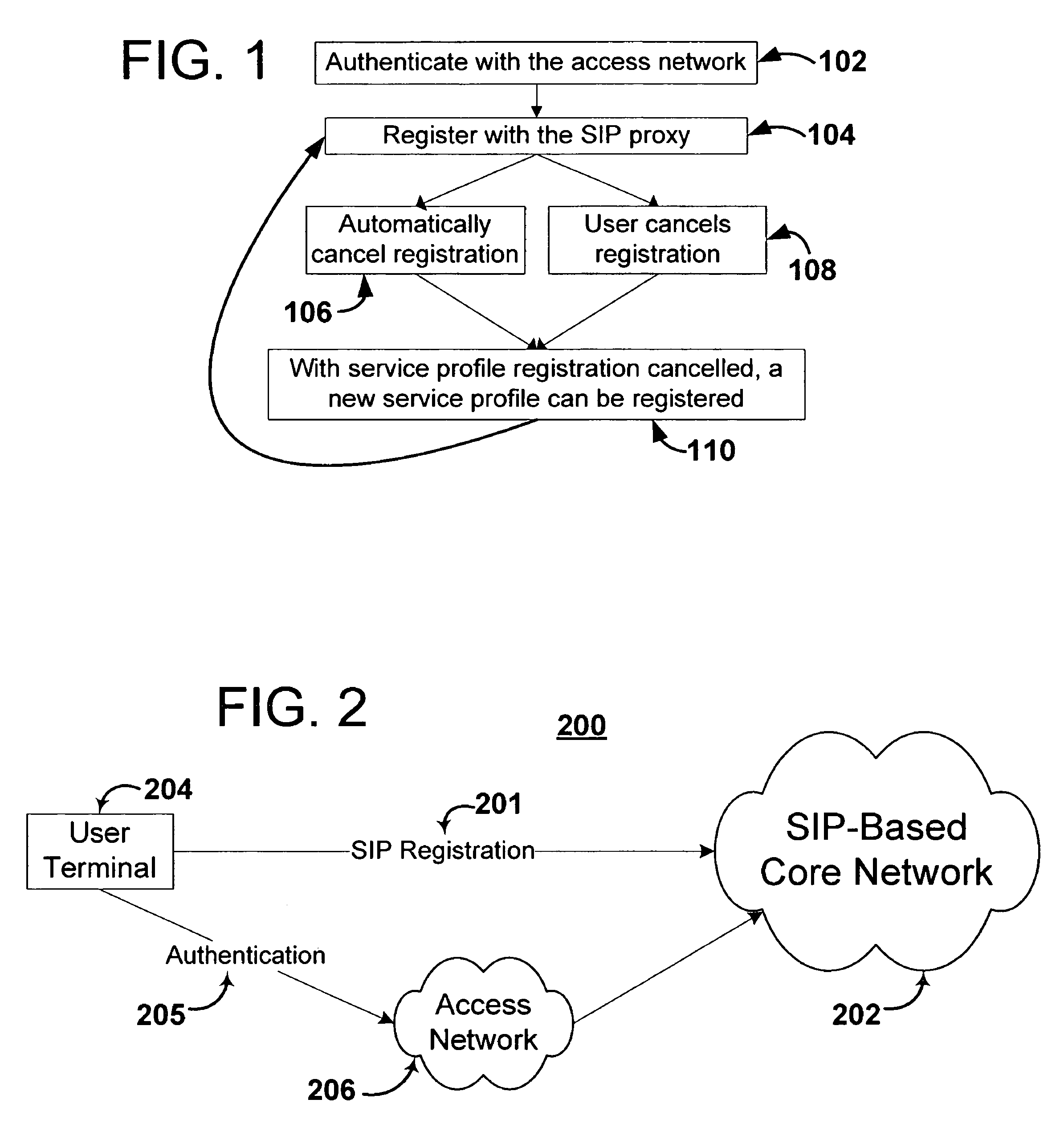

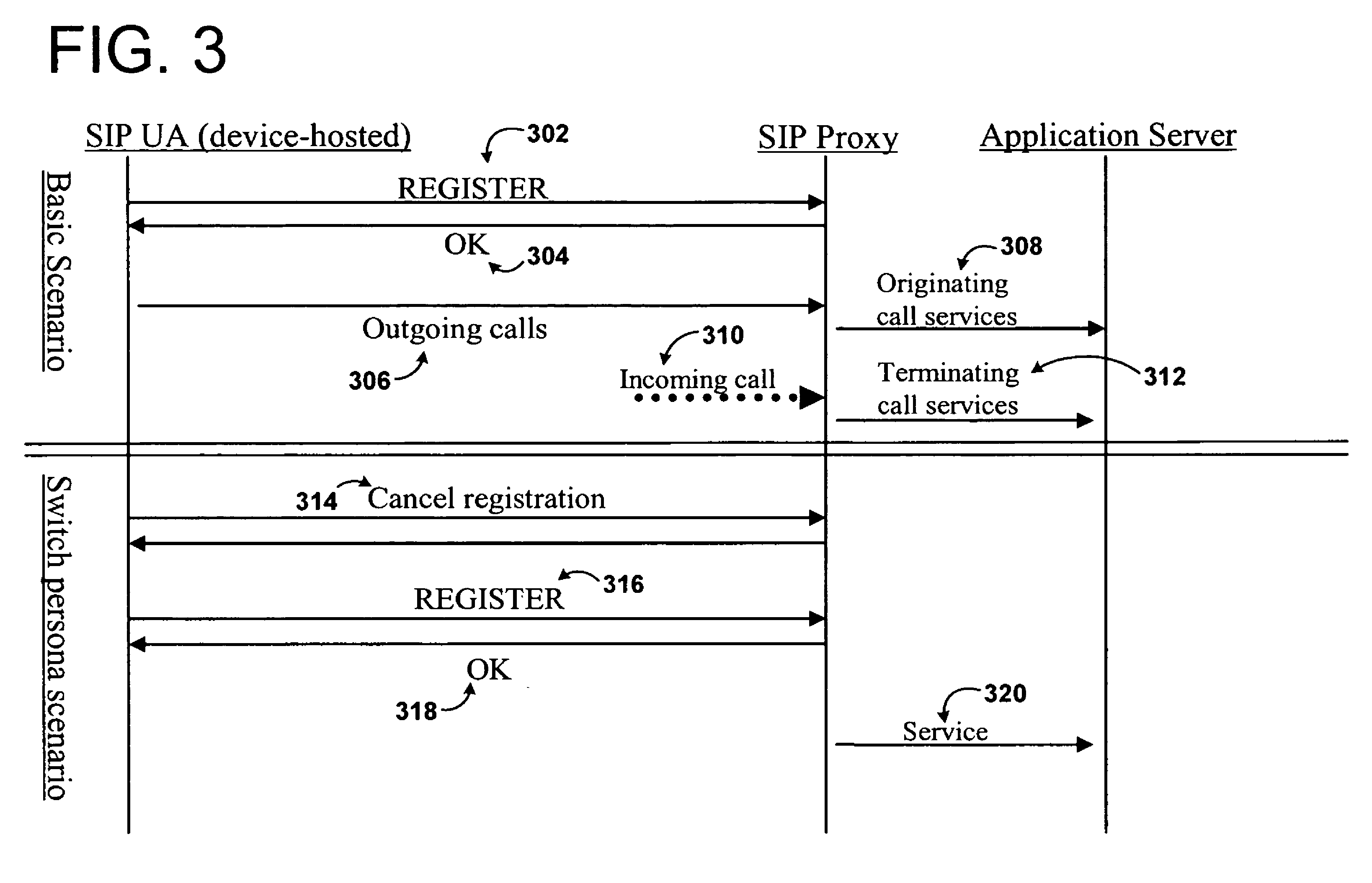

Profile sharing across persona

The embodiments disclosed include a system and method for sharing services between service profiles on a single telecommunications device resulting in improved ease of access for a user who wishes to access services through different service profiles. The user can access services in multiple service profiles with a single device. In one embodiment, the techniques described below are enabled through a Session Initiation Protocol (“SIP”)-based next-generation network (“NGN”), such as the IP Multimedia Subsystem (“IMS”) architecture.

Owner: SBC KNOWLEDGE VENTURES LP

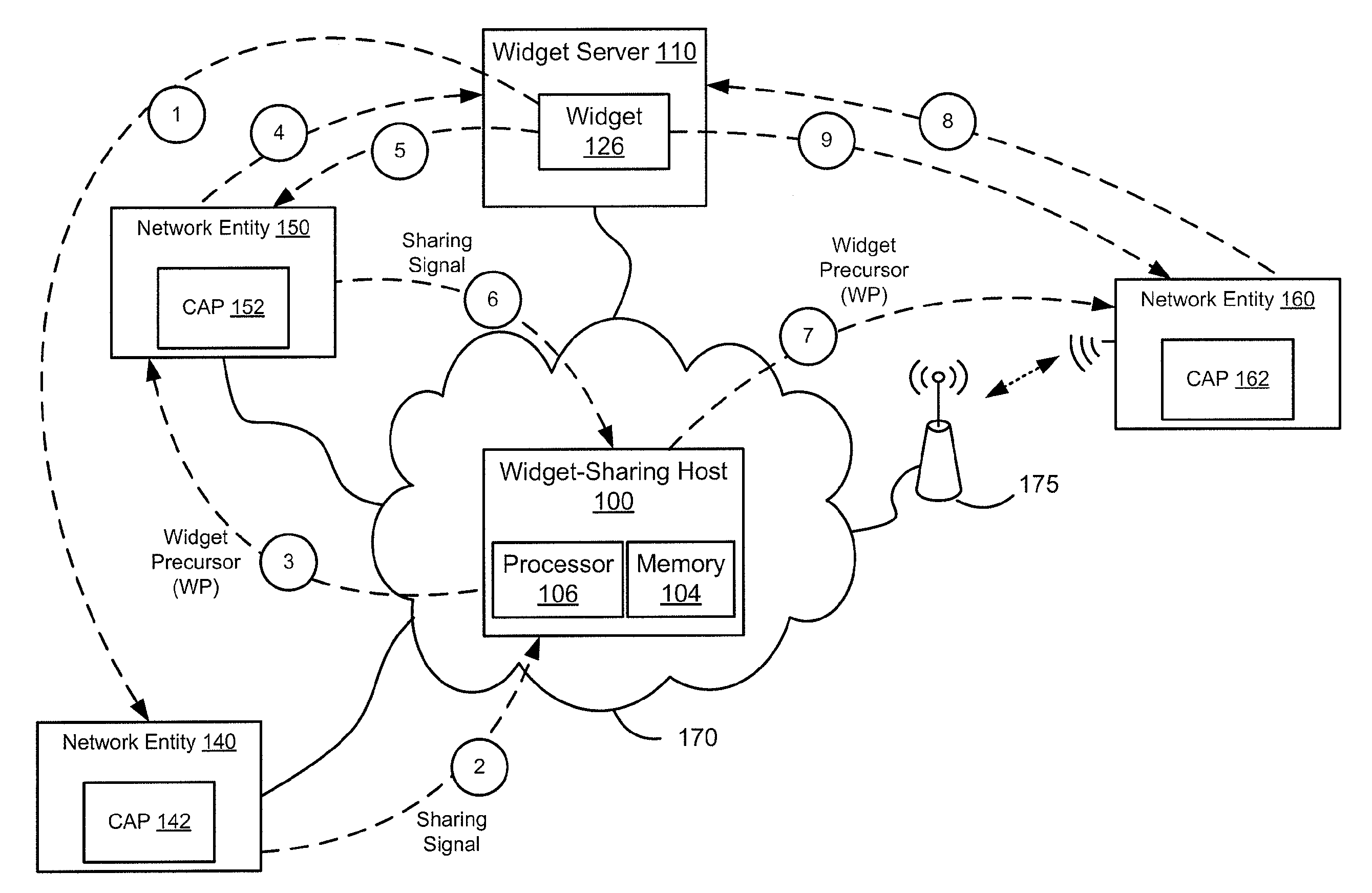

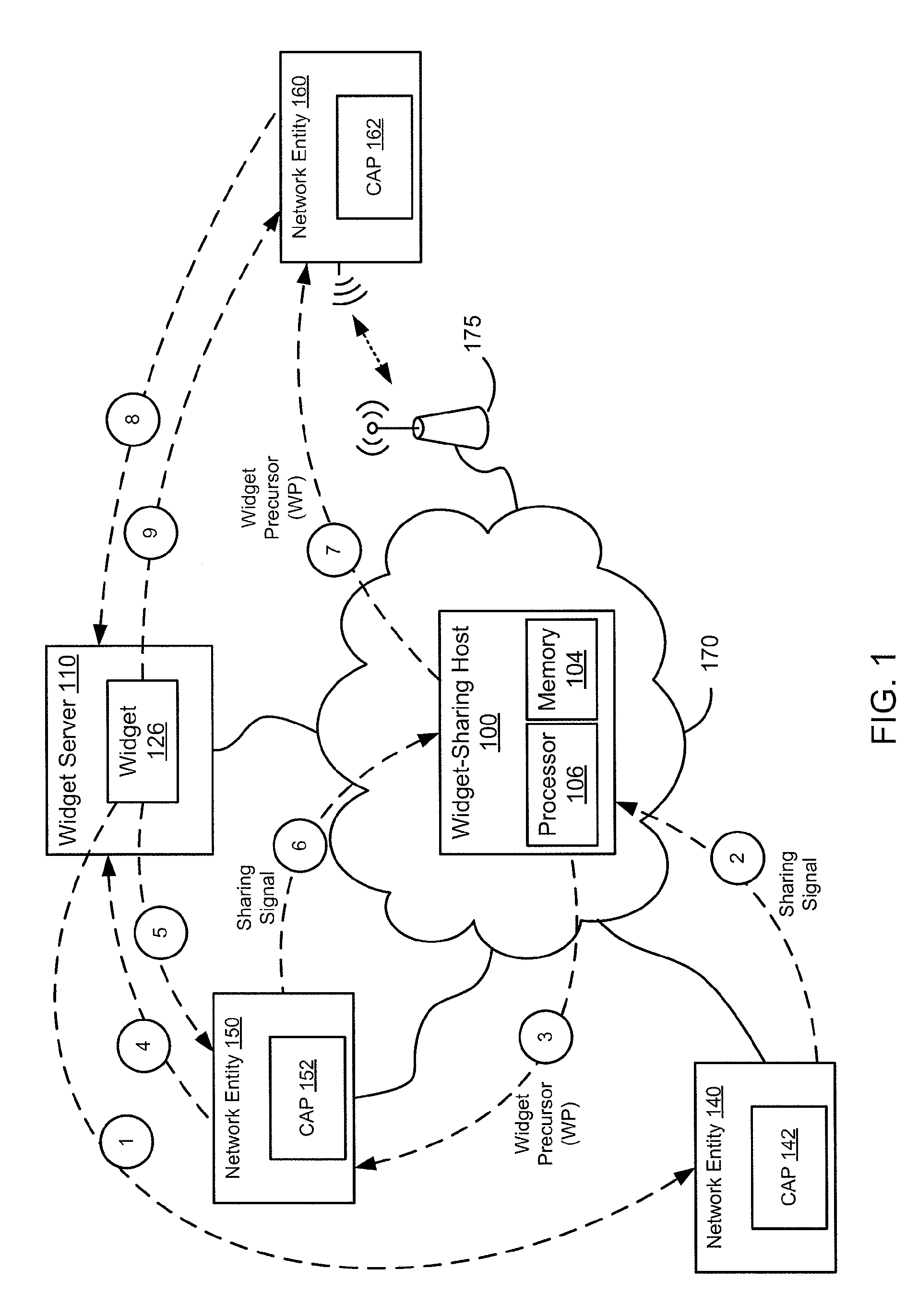

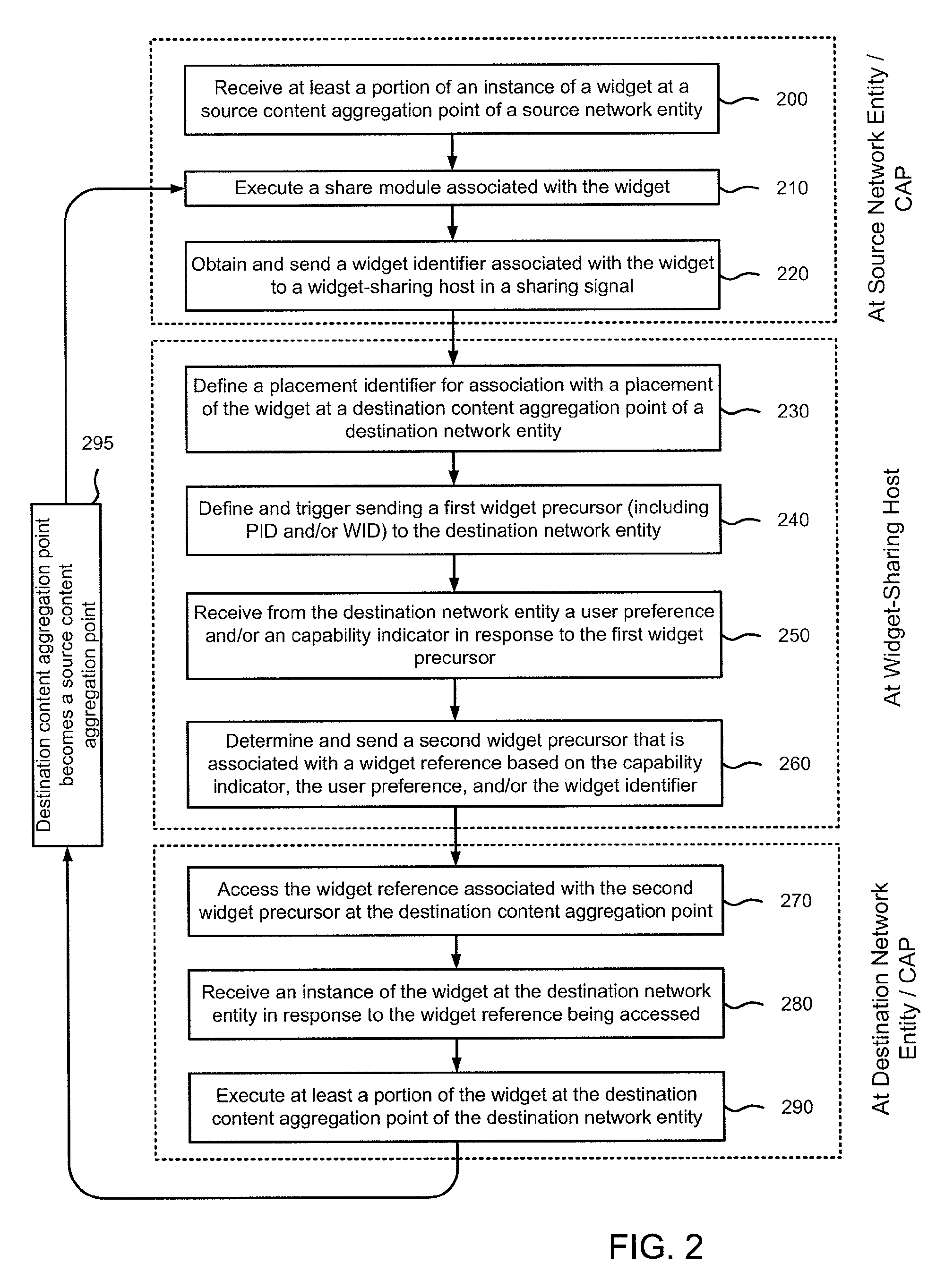

Methods and apparatus for widget sharing between content aggregation points

In one embodiment, a method includes receiving a request from a processing device to send a widget to a handheld mobile device. The request can be defined after at least a portion of an instance of the widget has been processed at the processing device. The request can be associated with a widget identifier. The method can also include defining a widget precursor at a widget-sharing server in response to the request from the processing device. The widget precursor can be associated with the widget identifier and a placement identifier.

Owner: ORACLE INT CORP

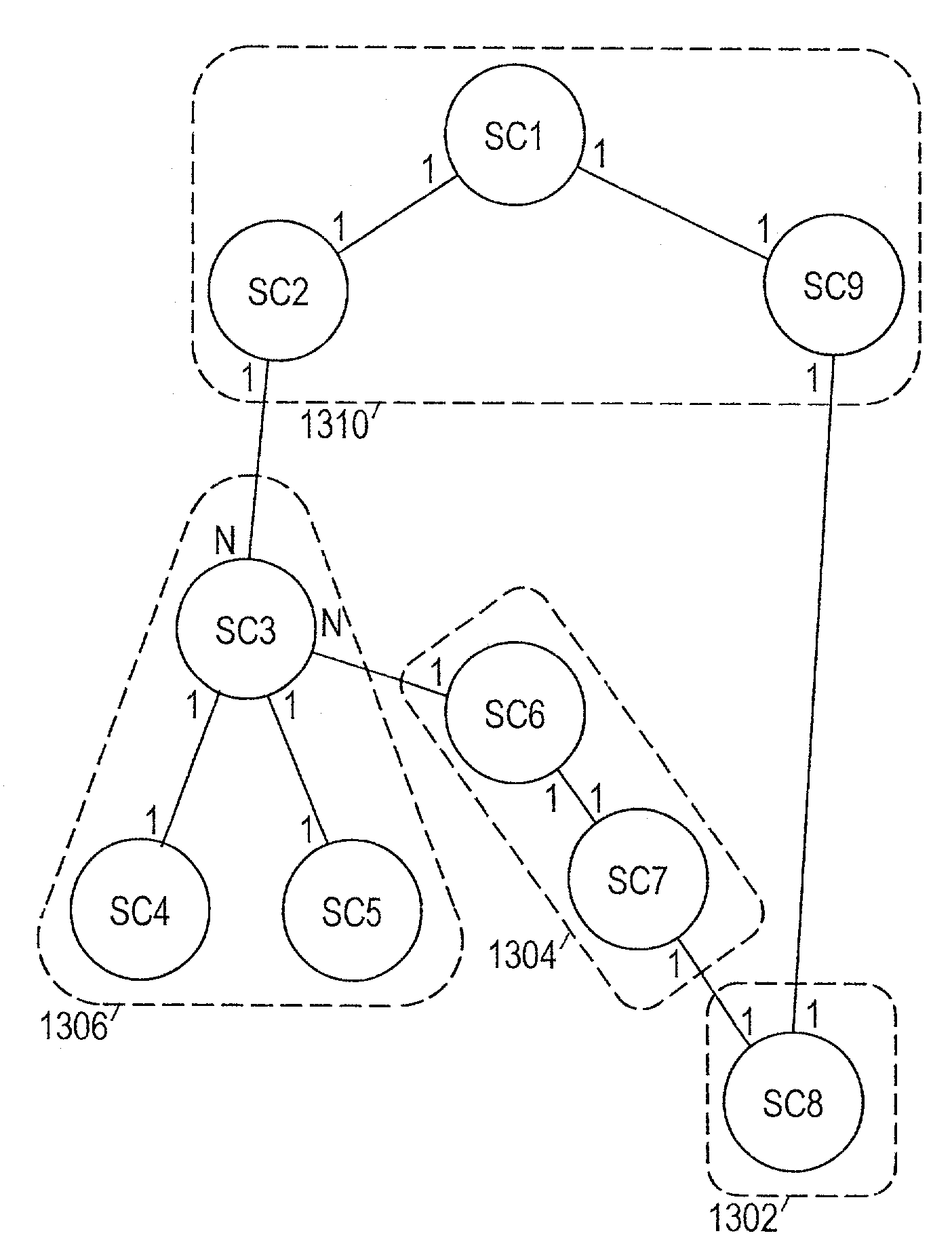

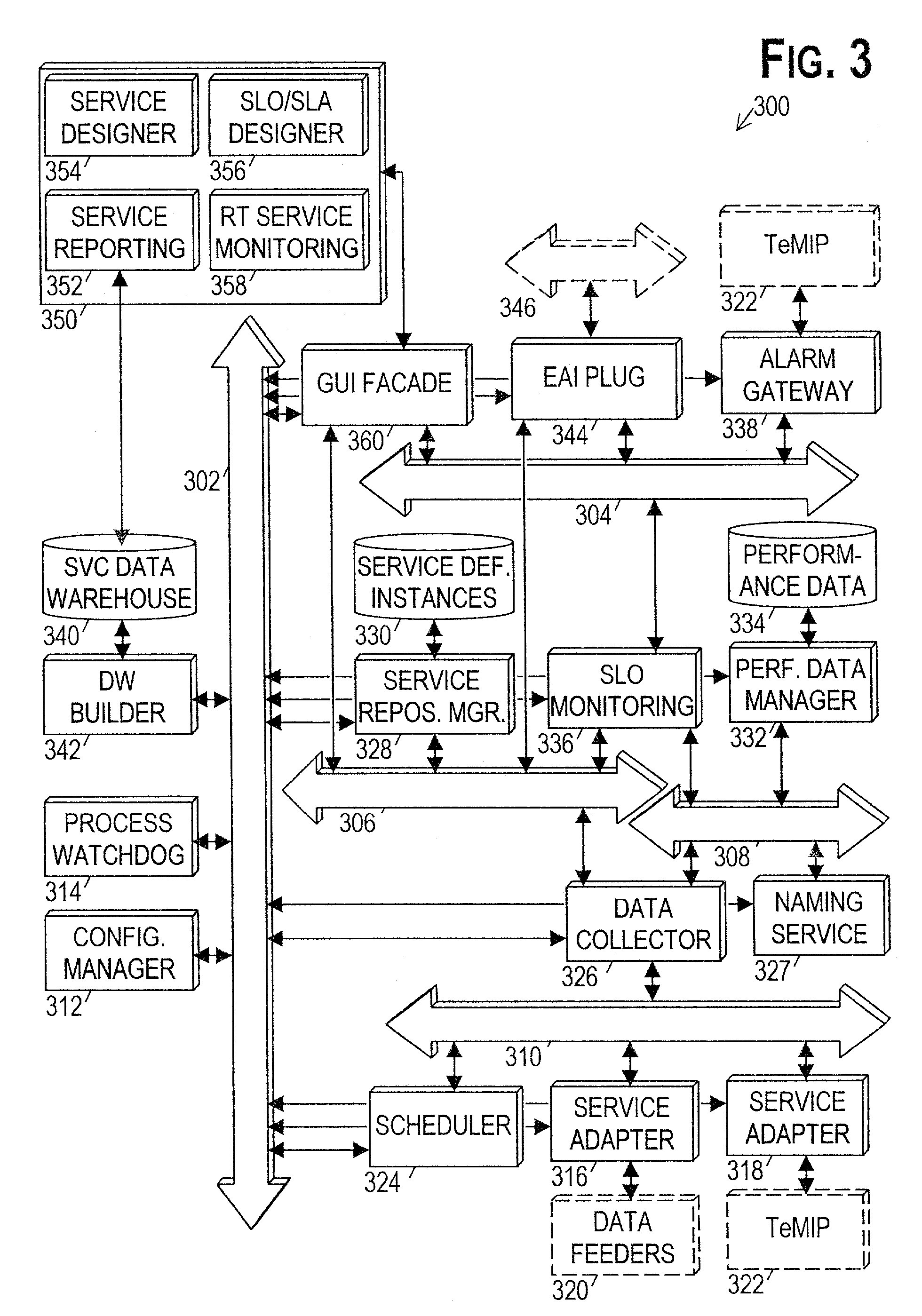

Real-time monitoring of service performance through the use of relational database calculation clusters

Inactive | US7099879B2 | Data processing applications | Digital data processing details | Service model | Telecommunications network

A system and method for monitoring service performance are disclosed. In a preferred embodiment, the method comprises: (a) collecting service information from one or more sources in a telecommunications network; (b) converting the service information into values of primary parameters of a service model; and (c) calculating values of secondary parameters of the service model from the primary parameter values, wherein the calculating is performed using relational database tables based on calculation clusters. The calculation clusters are preferably determined from a hierarchical model of the service by dividing the hierarchy in a manner that separates shared service components from the service components that share them, and that separates service components related by 1-to-n and n-to-1 relationships.

Owner: VALTRUS INNOVATIONS LTD +1

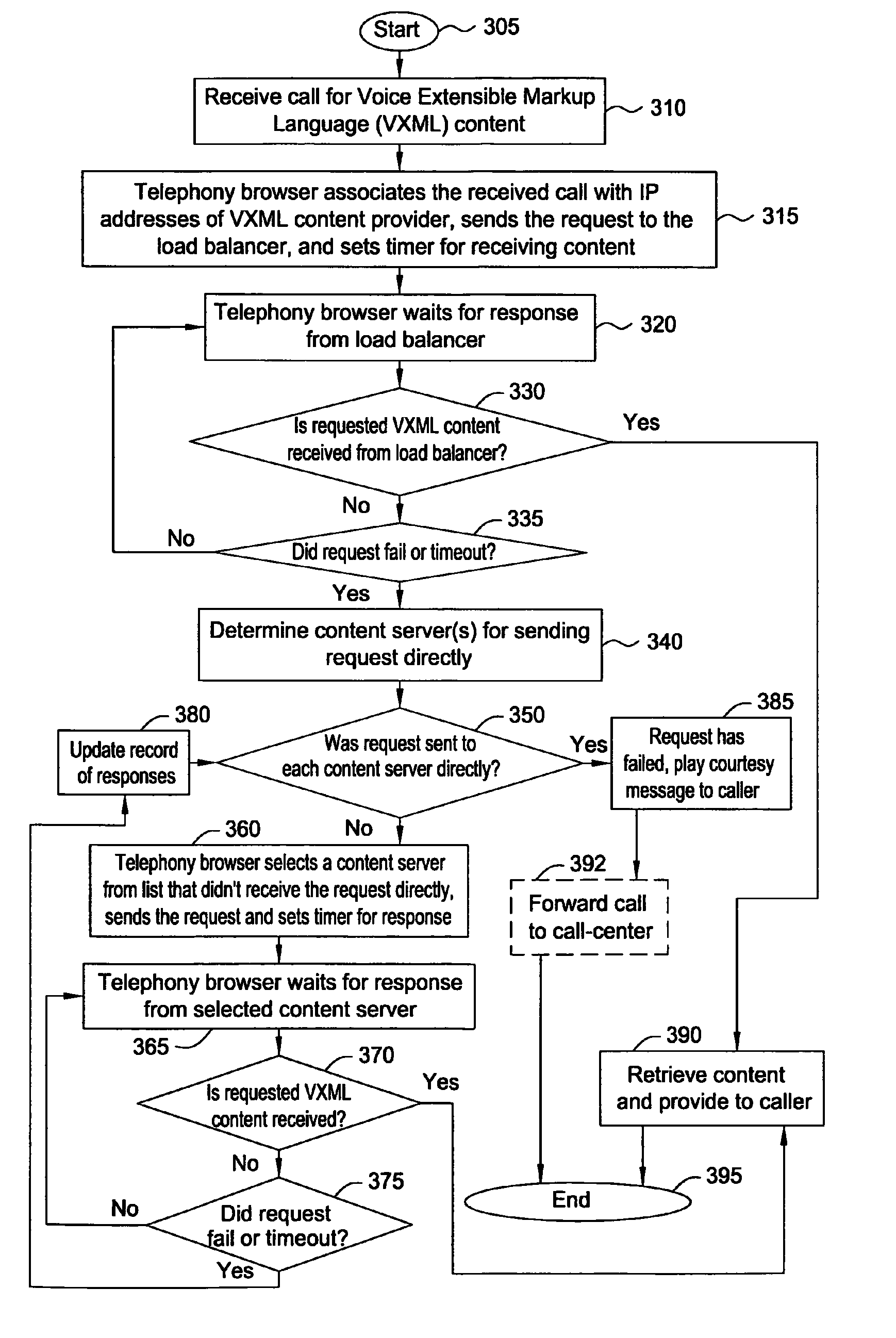

Method and apparatus for providing a reliable voice extensible markup language service

Inactive | US8576712B2 | Error prevention | Frequency-division multiplex details | Extensible markup | Computer science

A method and apparatus for providing a reliable Voice Extensible Markup Language (VXML) service over packet networks, such as Voice over Internet Protocol (VoIP) and Service over Internet Protocol (SoIP) networks, are disclosed. For example, a service provider may utilize a plurality of content servers that can be accessed by at least one telephony browser. The telephony browser can reach the content servers directly as well as through a shared server that may load balance among the content servers. When a request for VXML content, e.g., a VXML application, is received, the telephony browser sends the request to the shared server. If the request fails or a response is not received prior to expiration of a predetermined time interval, then the telephony browser sends a second request directly to one of the content servers that is capable of providing the requested content.
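The fallback behaviour described in this abstract resembles a common try-then-retry pattern, sketched below in Python. The function name `fetch_vxml`, the callable-based server interface, and the default timeout are hypothetical; trying the content servers one after another until one answers is an assumption of this sketch that goes slightly beyond the single second request mentioned in the abstract.

```python
def fetch_vxml(document, shared_server, content_servers, timeout_s=2.0):
    """Request VXML content via the shared server, falling back to direct access.

    Each server is modelled as a callable taking (document, timeout_s) and
    either returning the content or raising TimeoutError / ConnectionError.
    """
    try:
        # First request: go through the load-balancing shared server.
        return shared_server(document, timeout_s)
    except (TimeoutError, ConnectionError):
        pass  # fall through to the direct path

    # Second request: contact content servers directly.
    for server in content_servers:
        try:
            return server(document, timeout_s)
        except (TimeoutError, ConnectionError):
            continue
    raise ConnectionError(f"no server could provide {document!r}")
```

In a real telephony browser the callables would wrap network requests with a timeout; plain functions are enough to exercise the control flow.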

Owner: AMERICAN TELEPHONE & TELEGRAPH CO

Communications network with converged services

A communications network provides one or more shared services, such as voice or video, to customers over a respective virtual private network (VPN). At the same time, each customer may have its own private data VPN for handling private company data. The shared service VPN permits users from different customers to communicate directly over the shared service VPN. Trust and security are established at the edge of the network, as the information enters from the customer's site. As a result, no additional security measures are required within the shared service VPN for the communications between users. This architecture results in a fast, high quality, shared service.

Owner: ONVOY

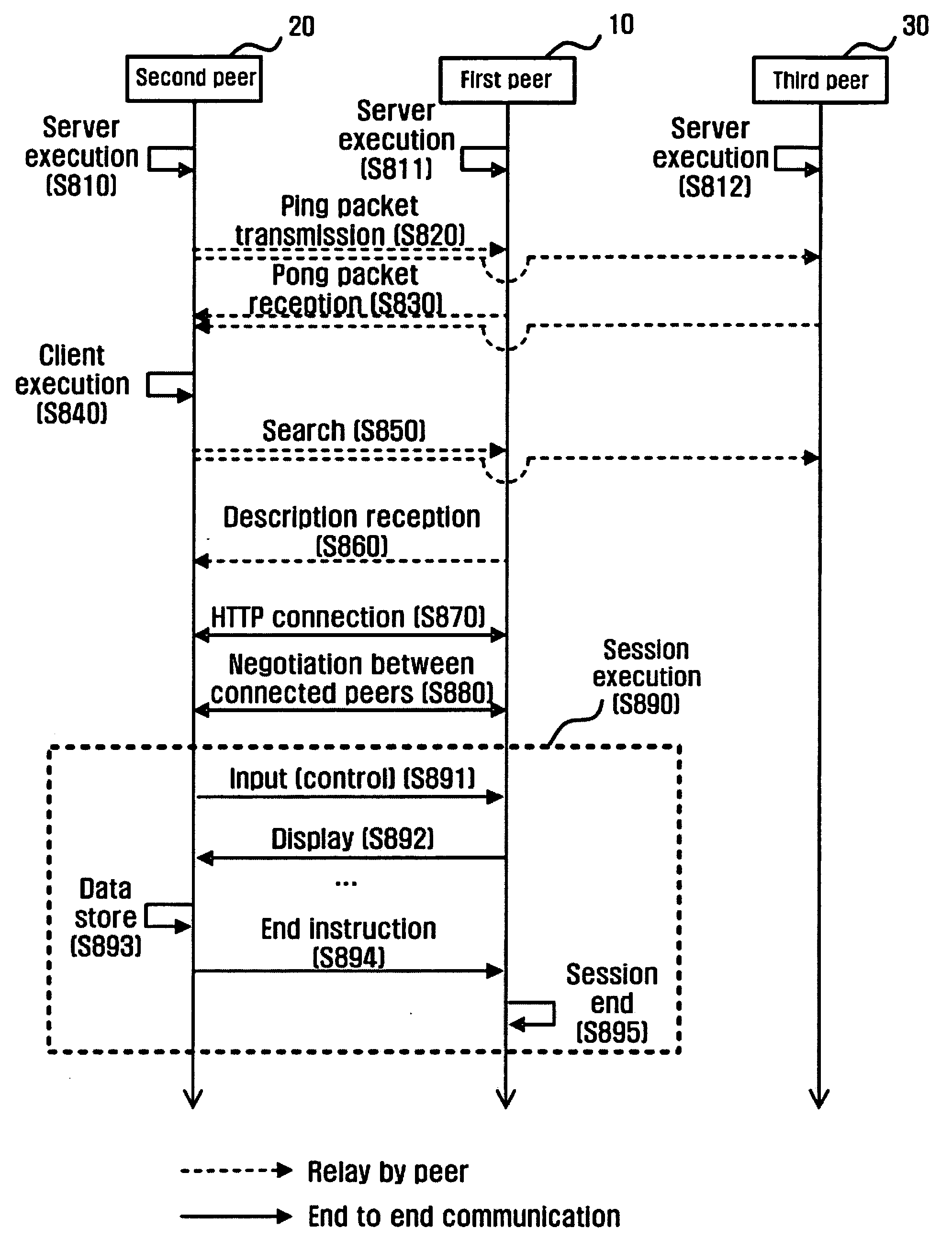

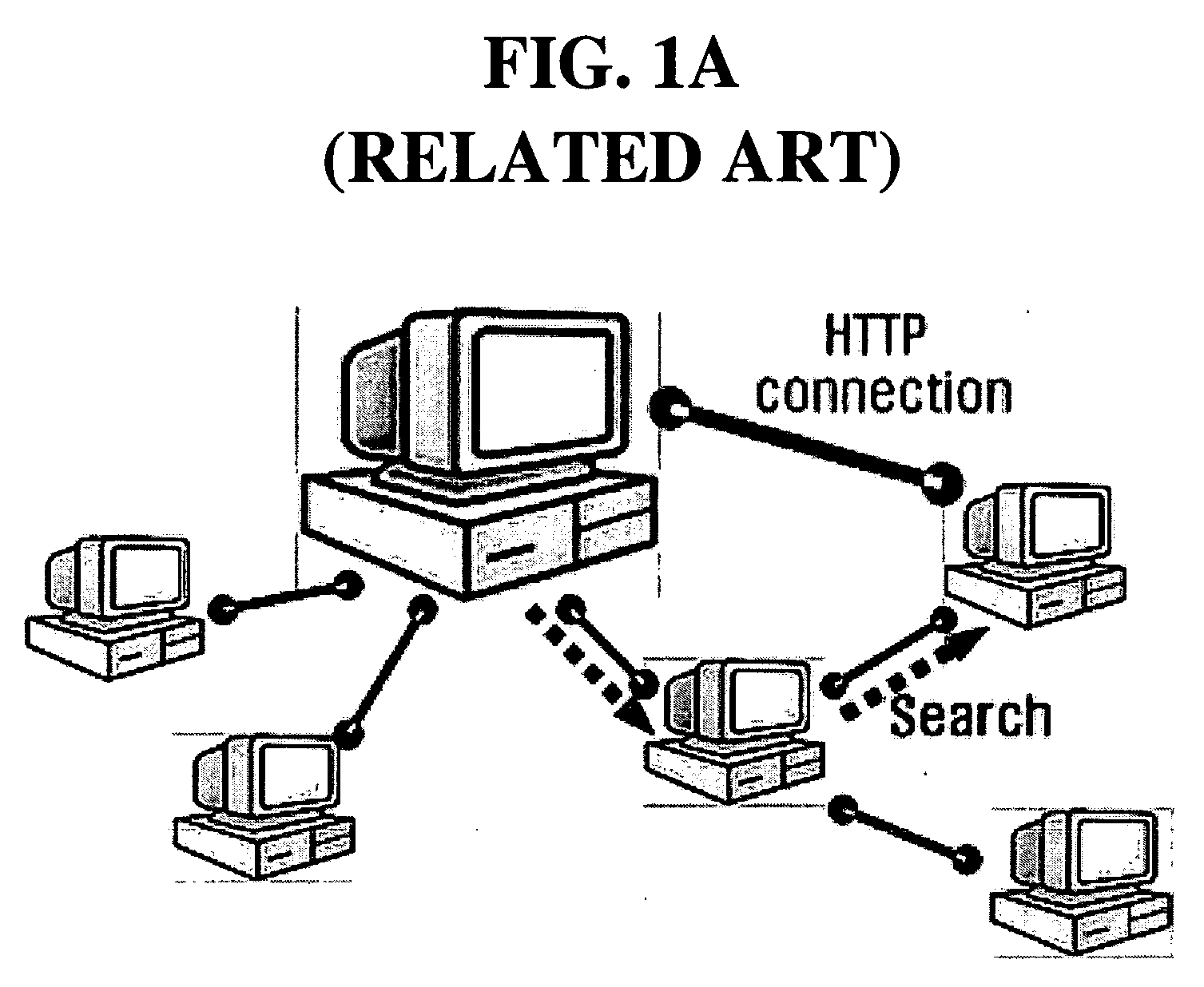

Method and apparatus for sharing applications using P2P protocol

Inactive | US20050120073A1 | Efficient sharing | Data processing applications | Digital data processing details | Client-side | Peer-to-Peer Protocol

A method of sharing applications using a peer-to-peer protocol includes registering, by a first peer, applications to be shared; determining whether the registered application files meet a search condition included in an application search instruction that is received from a second peer, and transmitting a description file to the second peer in response to the determination results; making a connection to the second peer through a predetermined protocol to perform a service pertaining to an application meeting the search condition; and executing a session for providing a remote display service pertaining to the application for the second peer, such that client peers can share idle resources in server peers, and can use applications that cannot be executed with their current resources or in their current environments.

Owner: SAMSUNG ELECTRONICS CO LTD

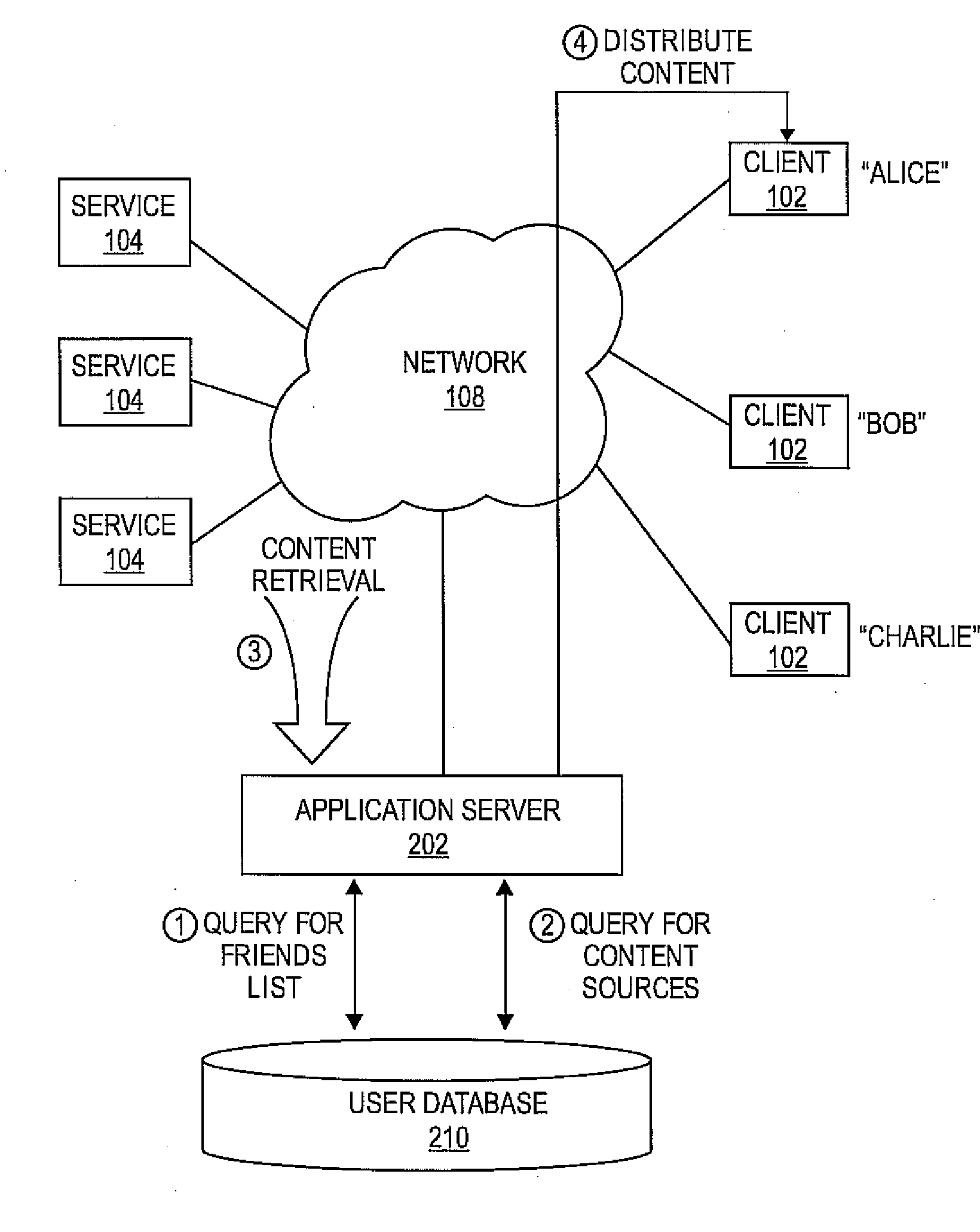

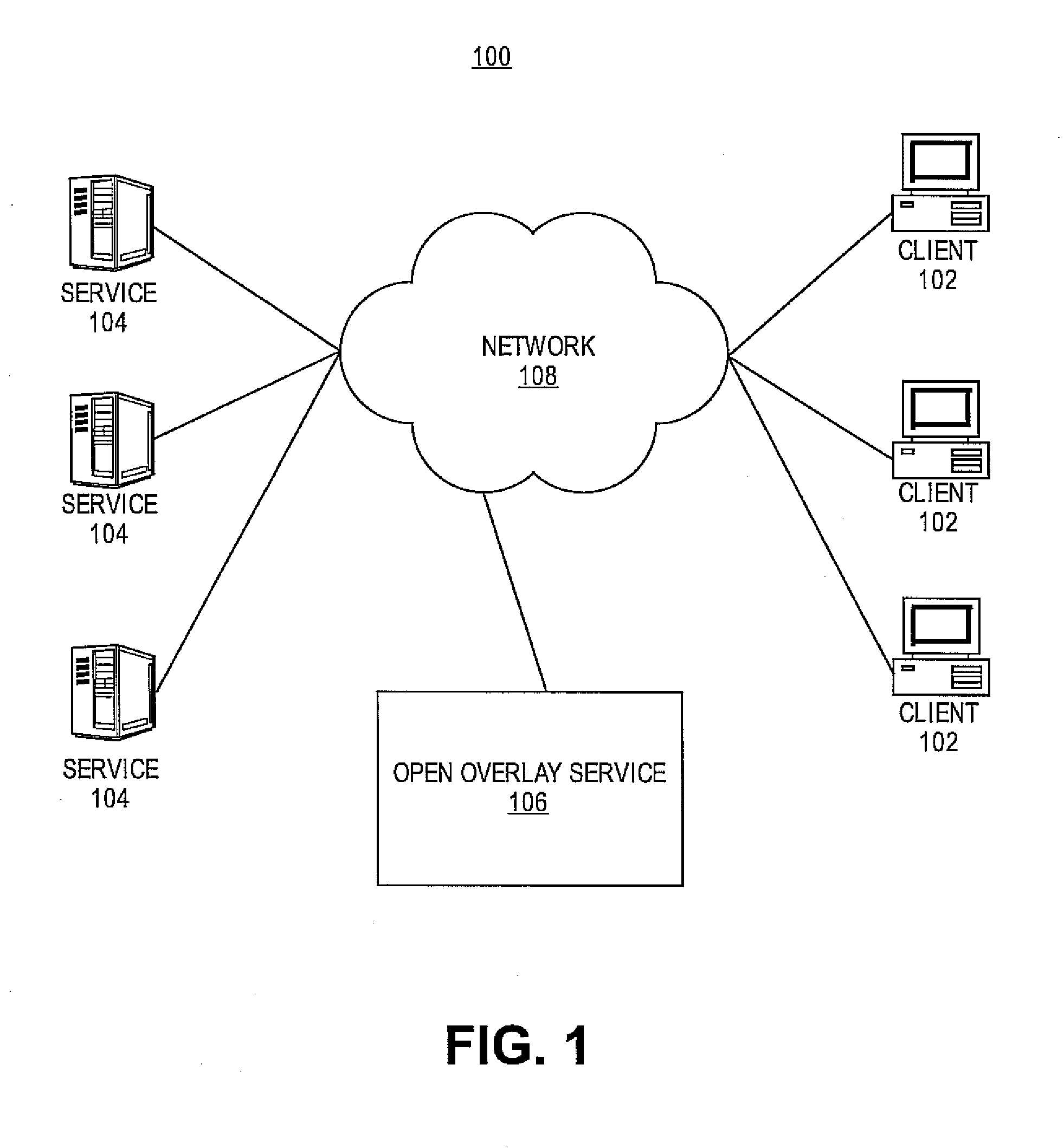

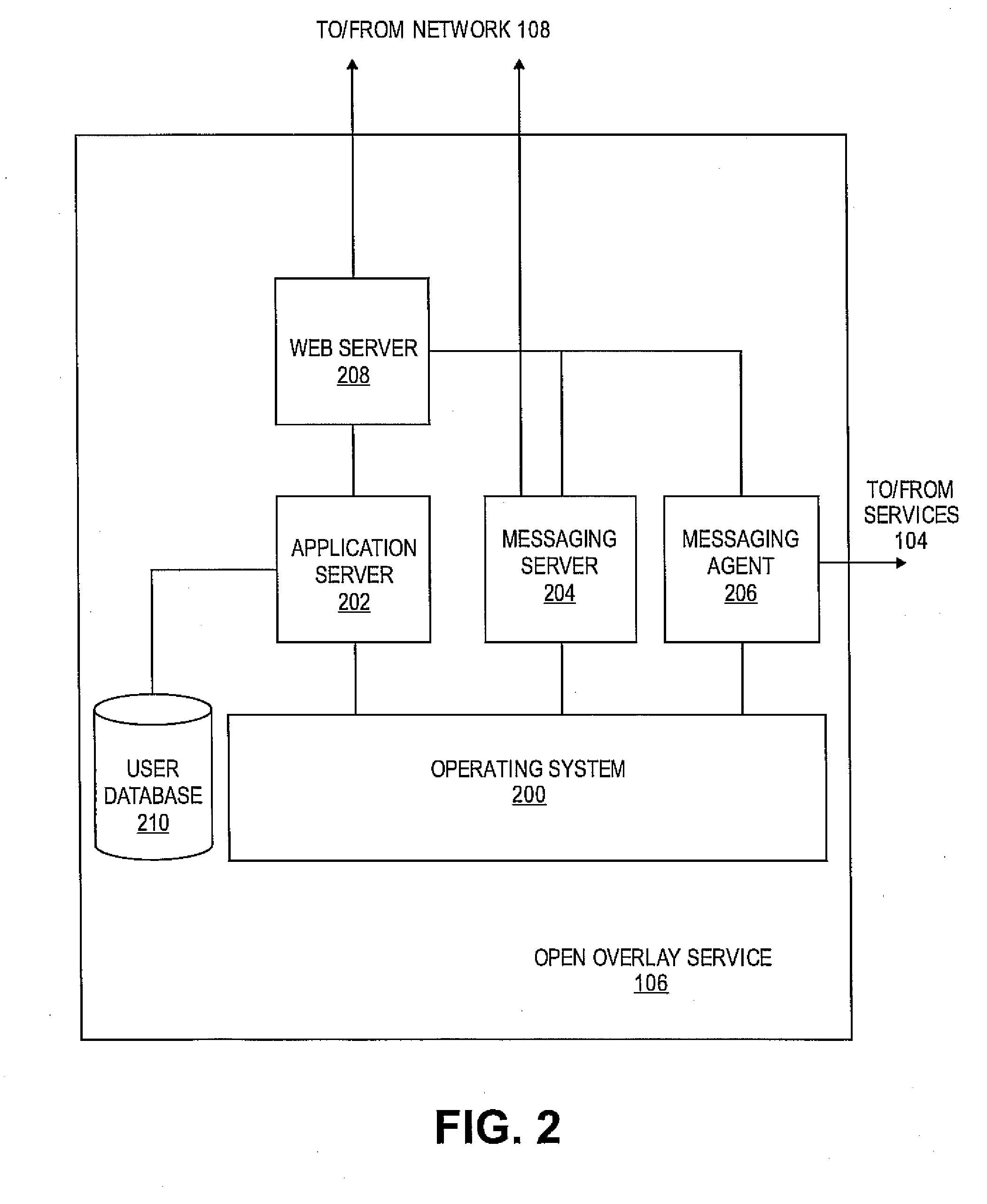

Automated screen saver with shared media

Active | US20080133649A1 | Cathode-ray tube indicators | Multiple digital computer combinations | Hard disc drive | Image sharing

Embodiments of the present invention provide users in a social network with a screen saver constructed from media shared by their contacts and groups in the social network. The present invention provides a shared photo album that automatically displays images from a user's own photo collection and from those of their social network. For a user, the social network service queries its database to retrieve a list of photo sources. The sources of images may be online photo sharing services, other computers with photos on their local hard drives, and public peer-to-peer storage services. The images may be displayed to the user and may optionally be accompanied by information, such as the owner of the photo or descriptive phrases or comments about the photo. The social network service may be configured to continuously or periodically request photos to update the screen saver.

Owner: RED HAT

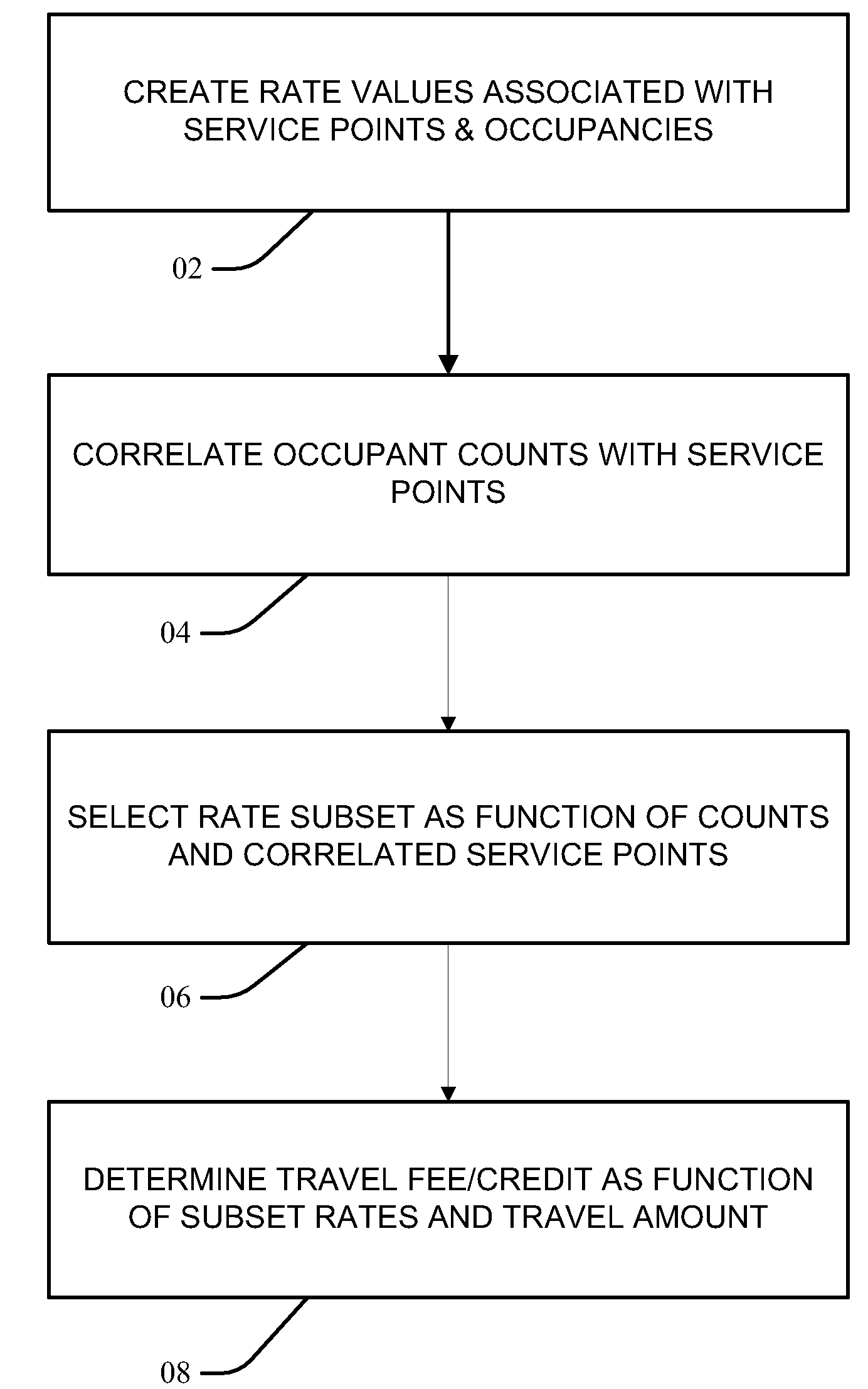

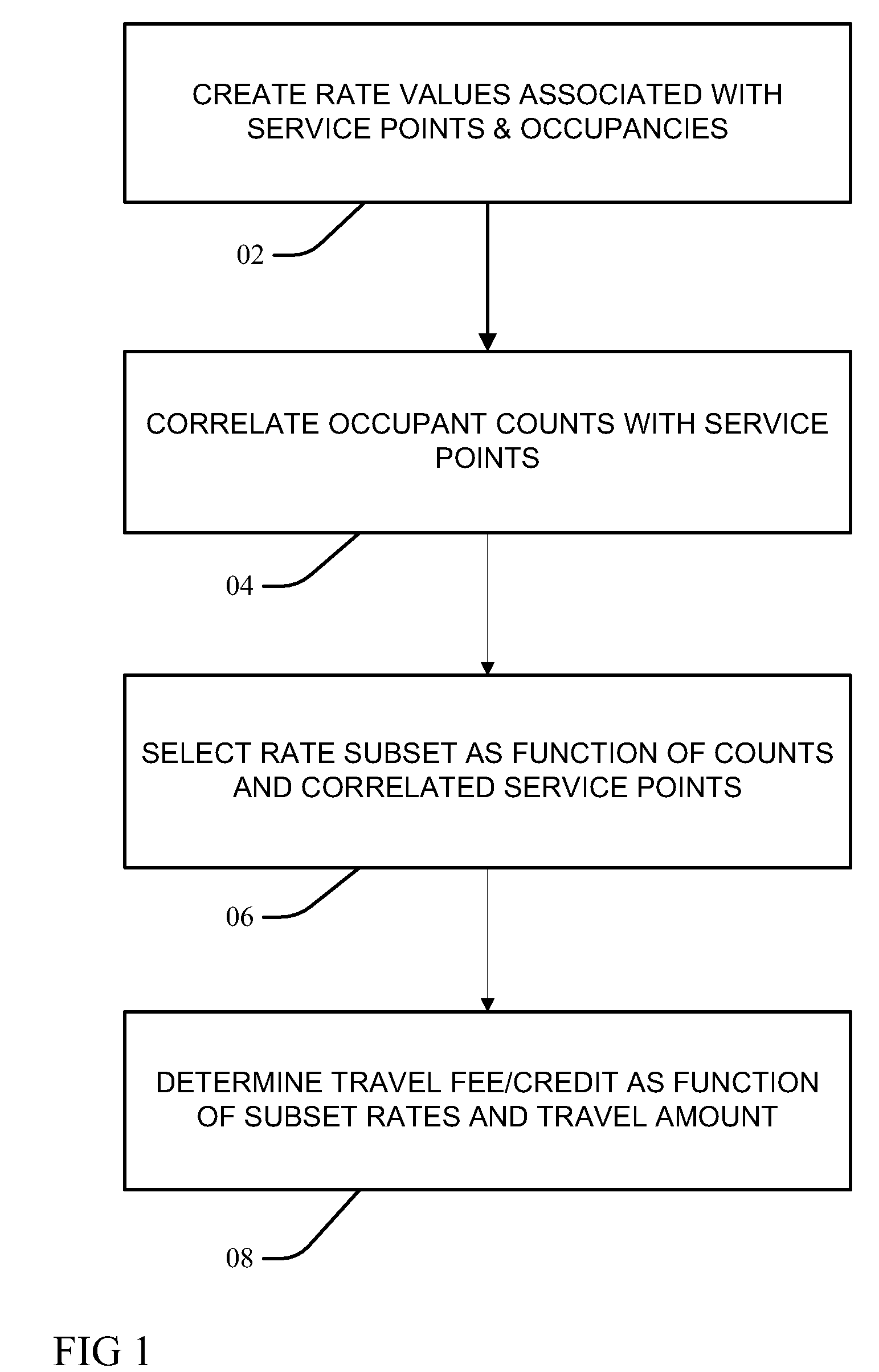

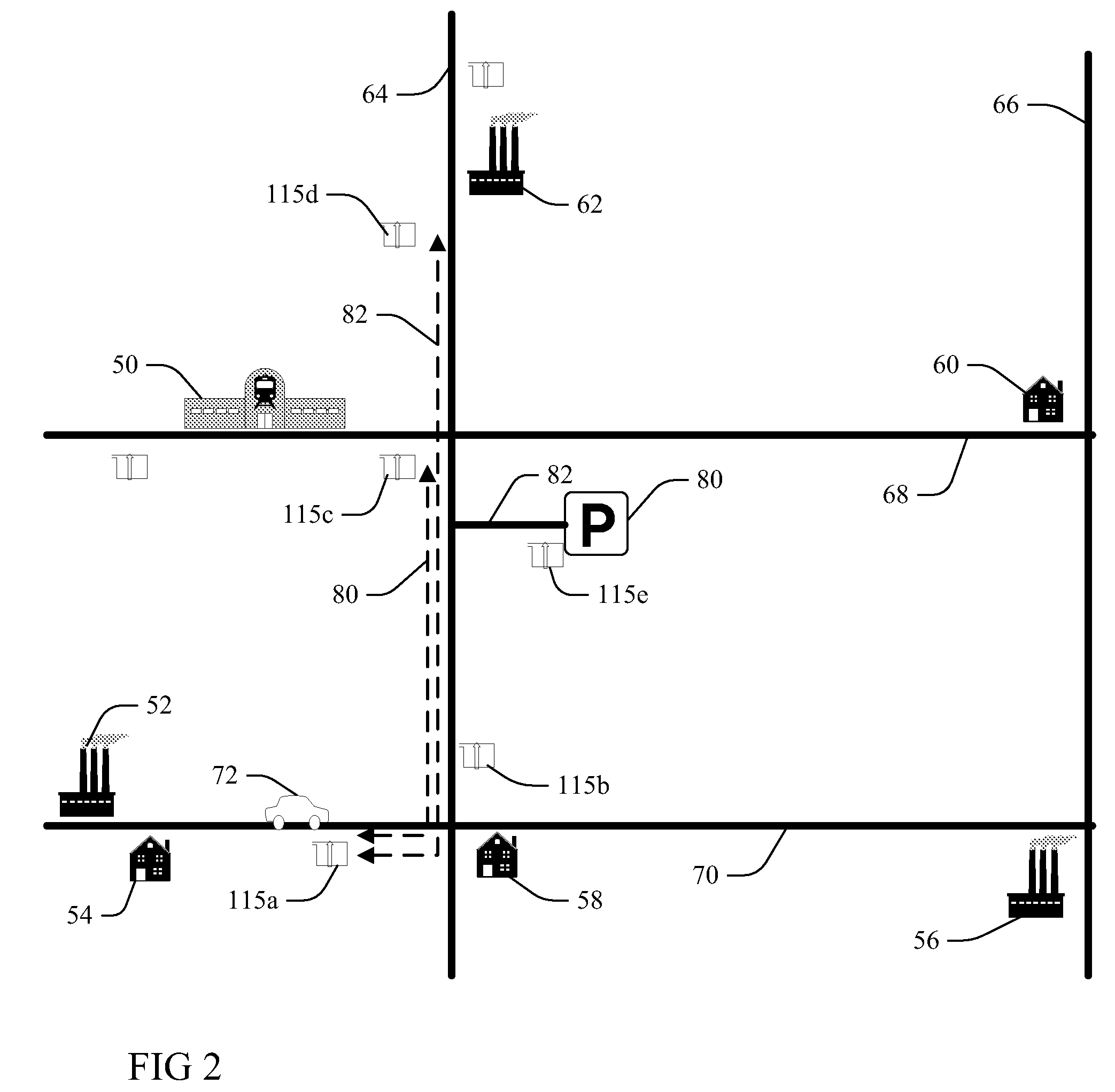

Variable rate travel fee based upon vehicle occupancy

Inactive | US20100161392A1 | Arrangements for variable traffic instructions | Optical signalling | Engineering | E infrastructure

Owner: IBM CORP

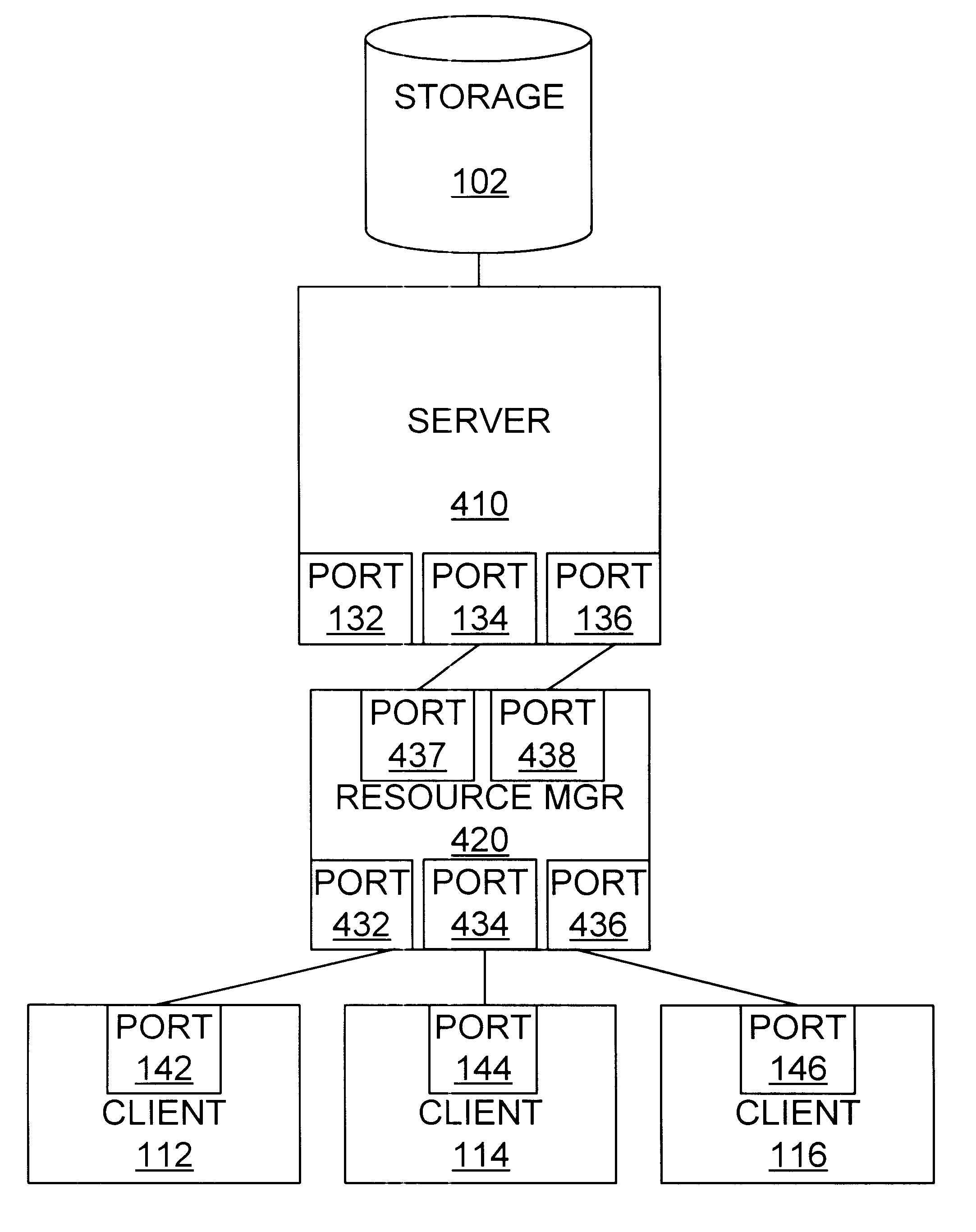

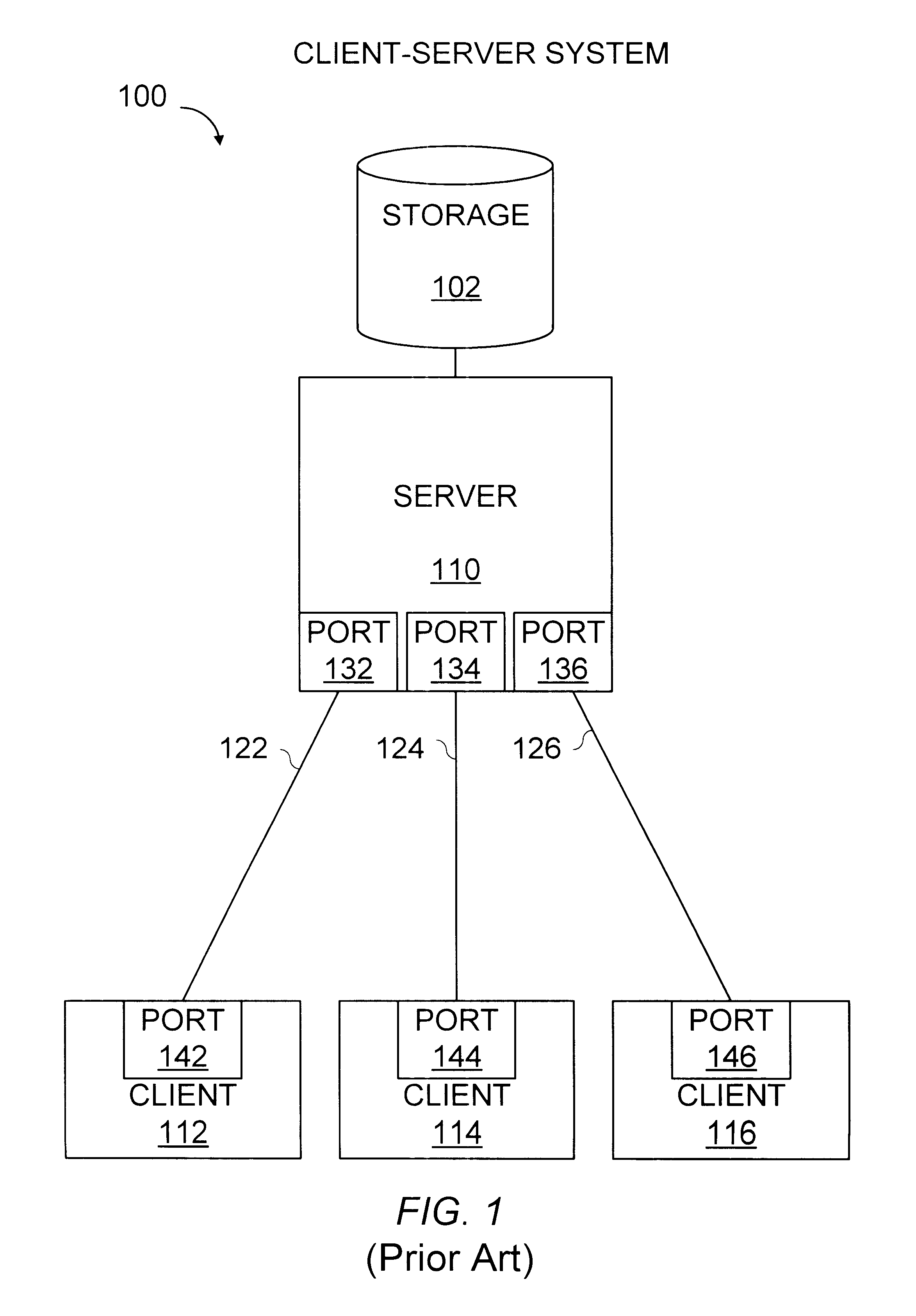

Method and apparatus for coupling clients to servers

A method and apparatus allows clients to share ports on a server. The server can maintain more sessions than server ports. When a client sends a command directed to the server, a resource manager inserted between the clients and the server intercepts the command and directs the server to select the session associated with a client prior to or at the same time that the resource manager forwards the intercepted command to the server. Responses from the server are forwarded by the resource manager to the client that sent the command to which the response relates. The resource manager may be coupled to multiple clients, and one or more ports of one or more servers.

Owner: ORACLE INT CORP

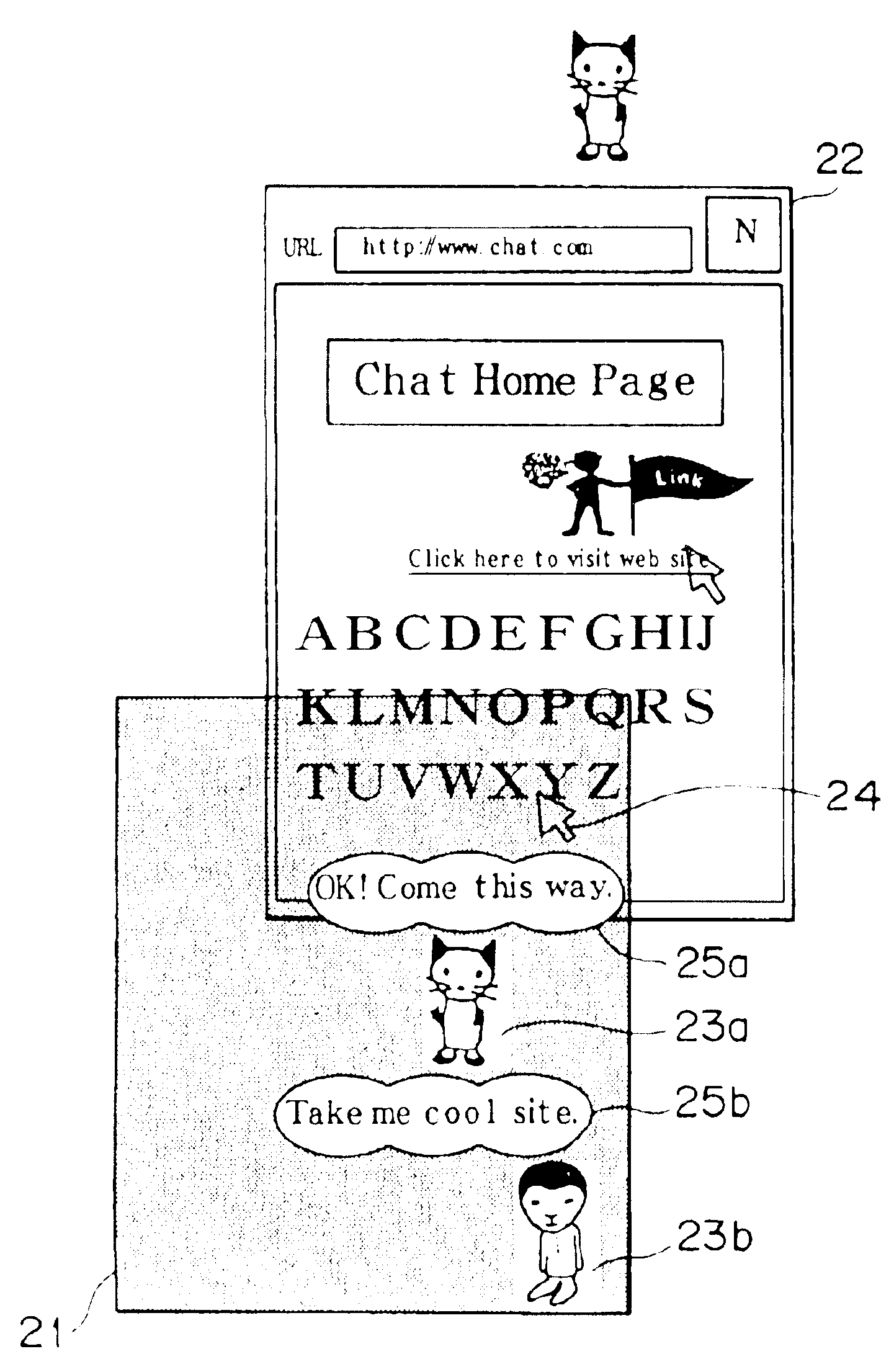

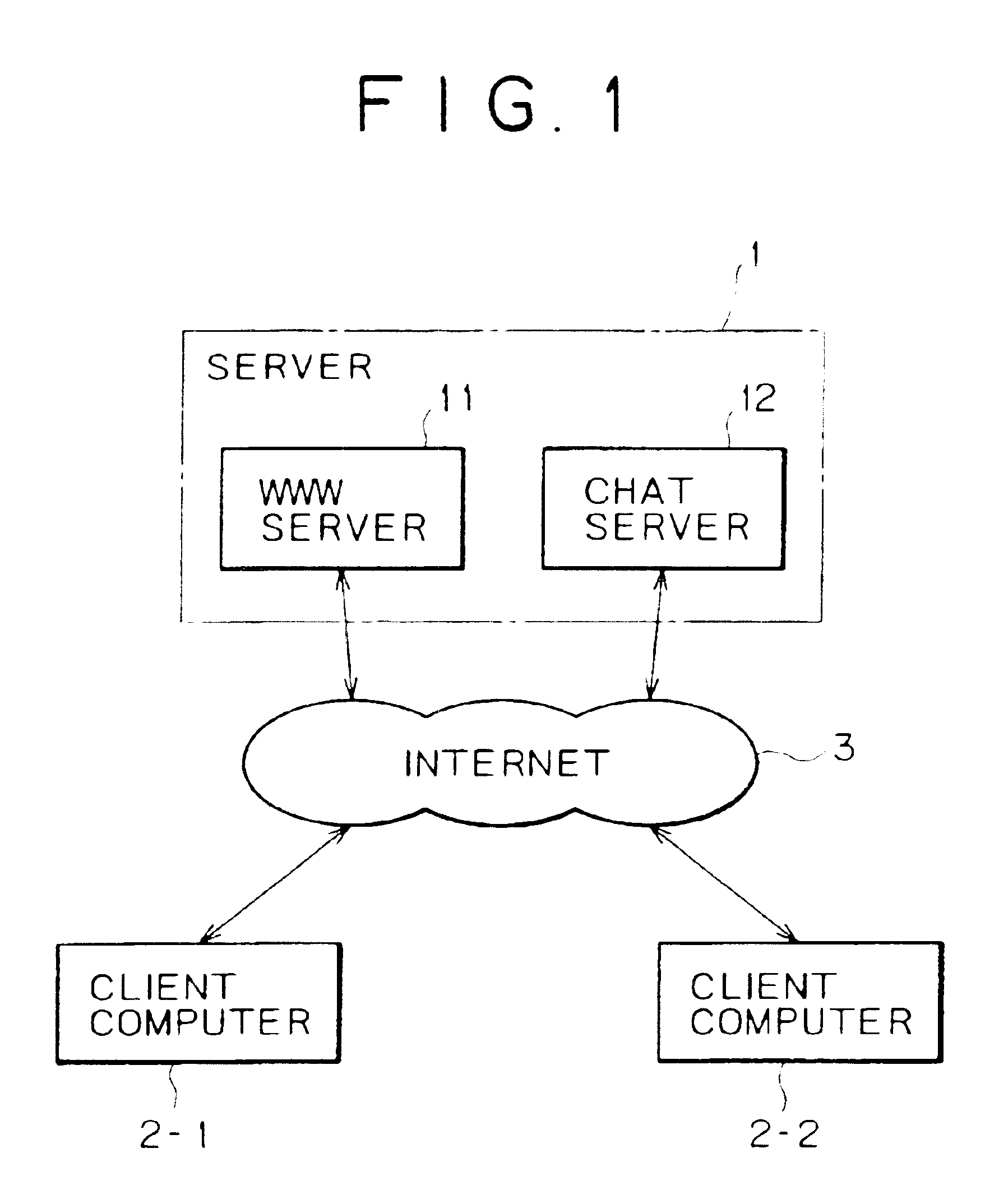

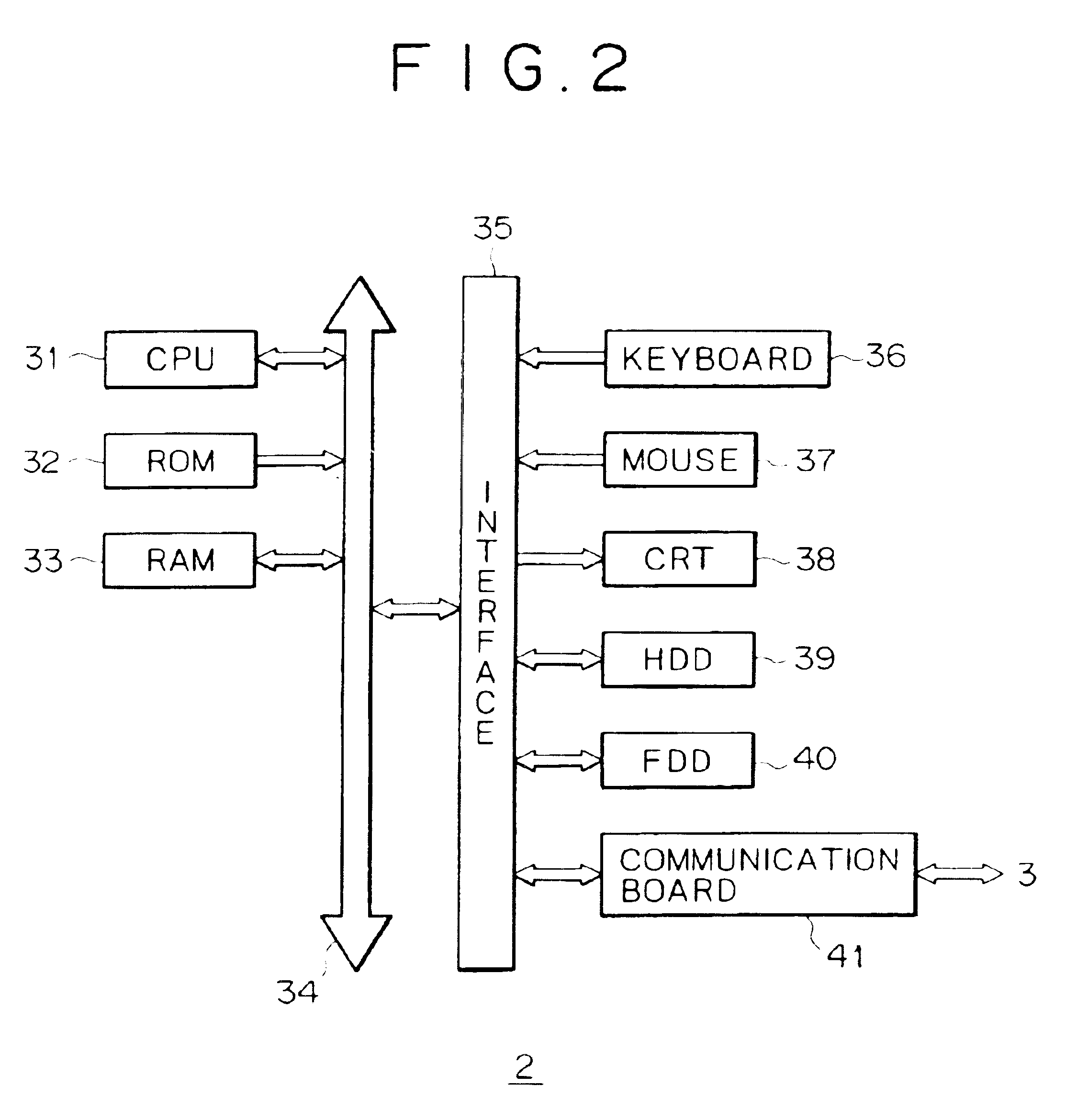

Information sharing processing method, information sharing processing program storage medium, information sharing processing apparatus, and information sharing processing system

Inactive | US6954902B2 | Easy to manufacture | Easy access | Cathode-ray tube indicators | Web data navigation | Information processing | Information sharing

There is provided an information sharing processing method comprising: a page display processing step for acquiring a file from a predetermined server on a network and displaying the file as a page, wherein the file is described in a predetermined page description language and includes a description of link information to another file on the network; a common-screen display processing step for displaying an icon representing a user at a position on a common screen shared with the user and displaying a message issued by the user accessing the same page as the page displayed at the page display processing step, wherein information on the position and the message are specified by the user in shared data transmitted by the user by way of a shared server on the network; and a screen superposition processing step for superposing the common screen displayed at the common-screen display processing step on the page displayed at the page display processing step. Accordingly, it is possible to access the web page with ease while participating in a chat. In addition, any one of the users is capable of immediately knowing whether another user is accessing the same web page.

Owner: SONY CORP

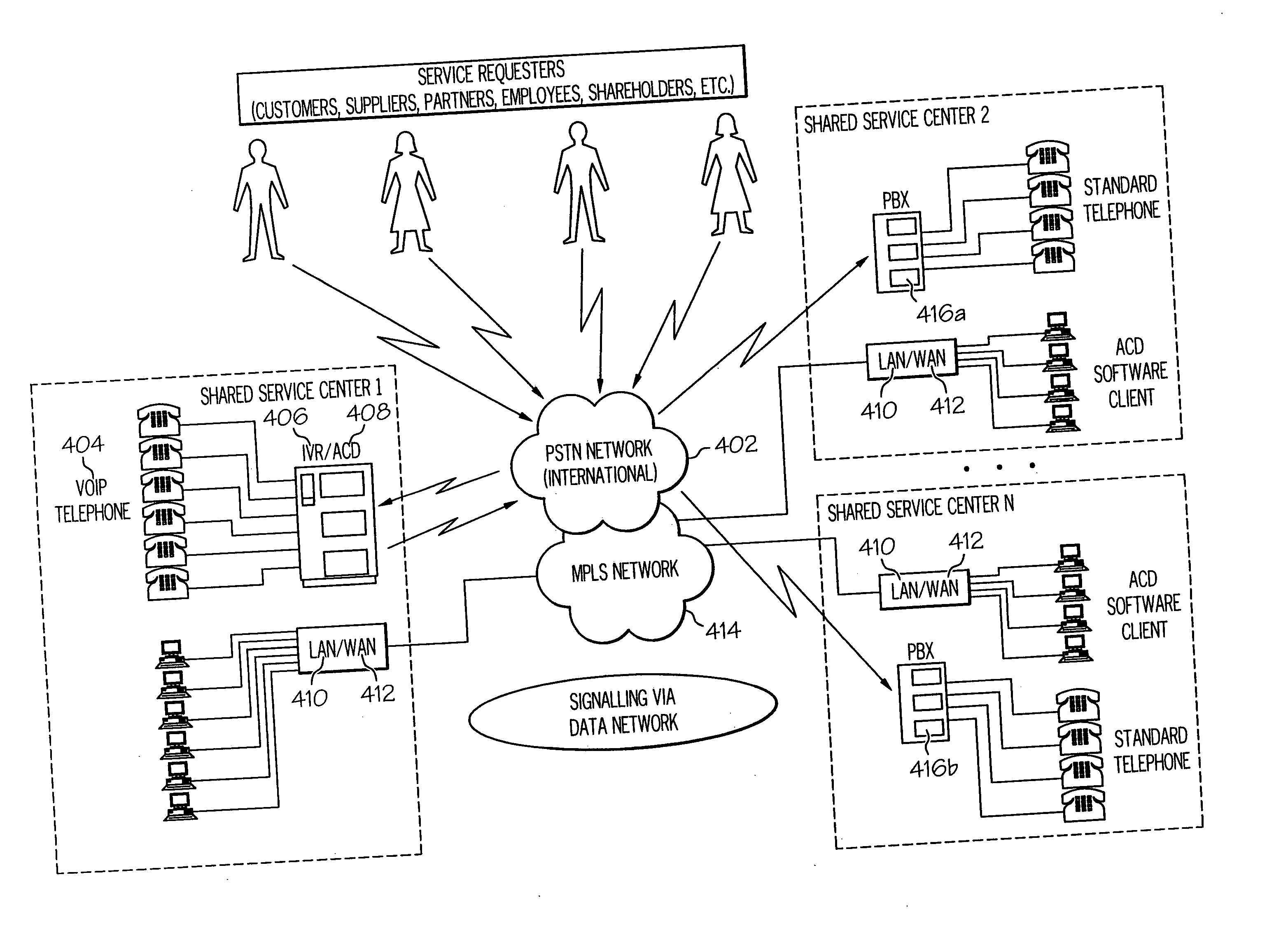

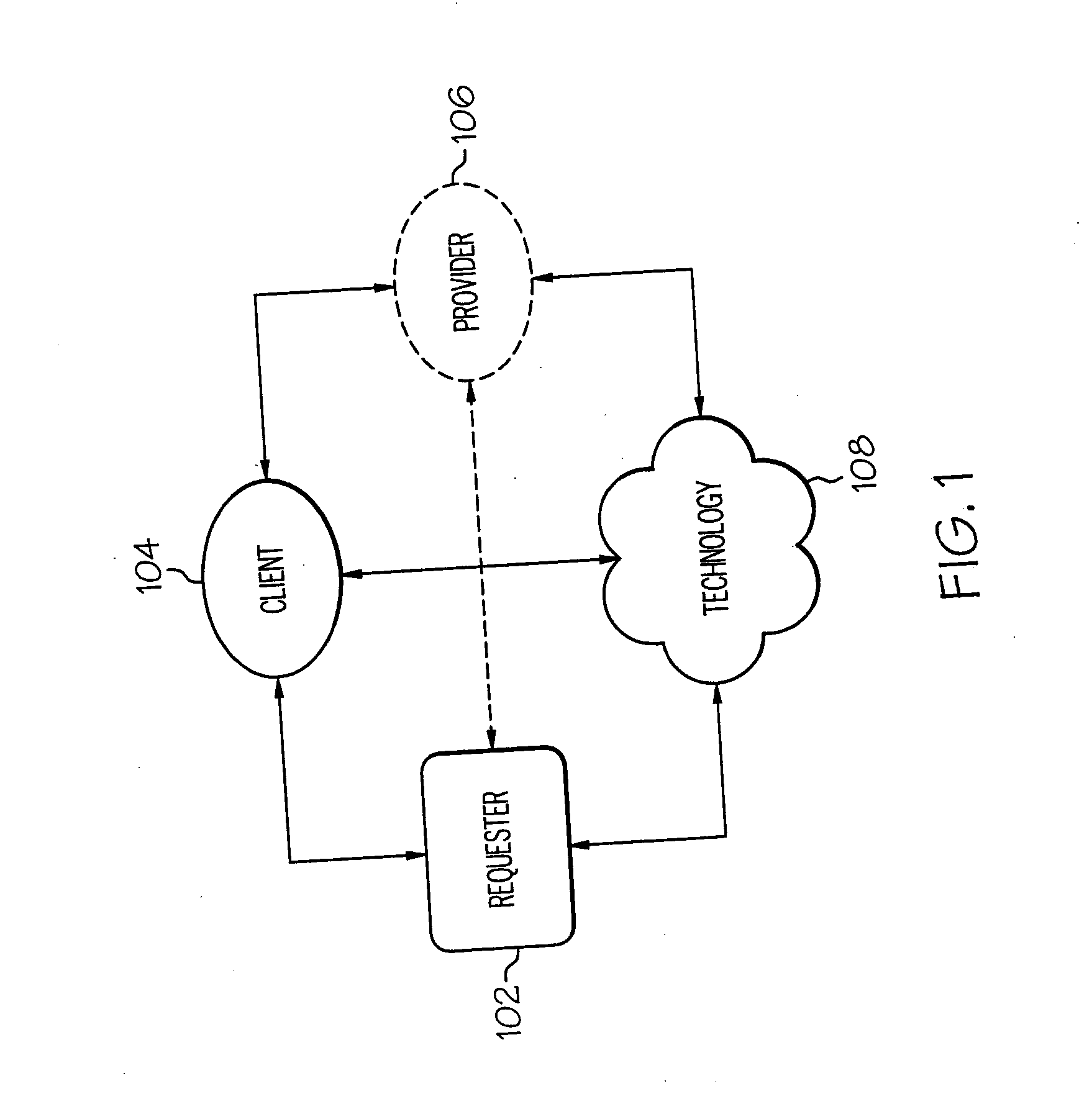

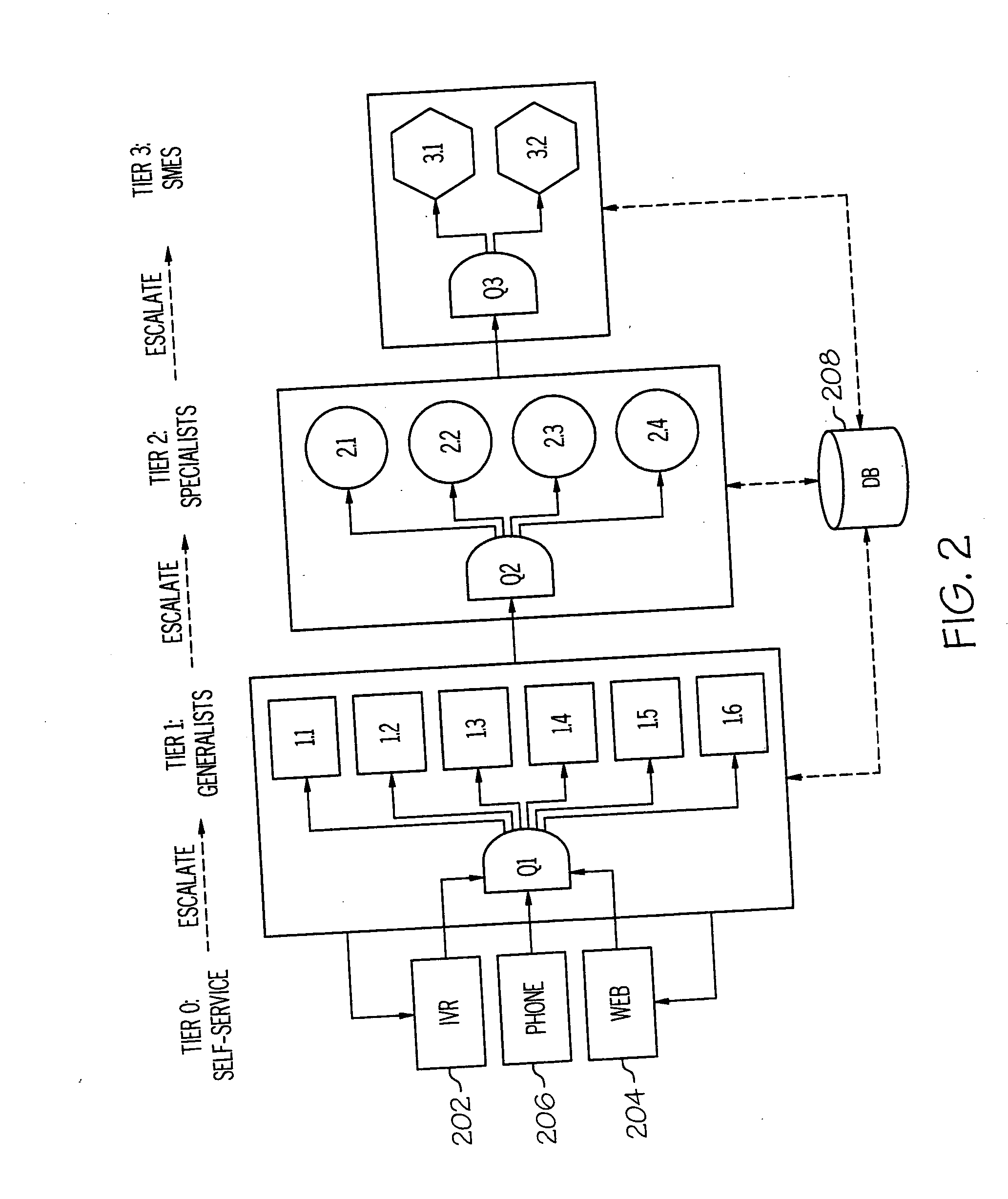

Call routing between shared service centers

Active | US20070064912A1 | Easy to route | Improve efficiency | Manual exchanges | Automatic exchanges | Speech sound | Call routing

A method and system to optimally route telephone calls between shared service centers is presented. Using a combination of service tiers, Agent Directory, Instant Messaging (IM), and Voice over Internet Protocol (VoIP) provides optimal routing of incoming calls for assistance. The method utilizes different protocols during normal operations, transitional operations, and emergency operations, and addresses Shared Service Center (SSC) planning and management.

Owner: KYNDRYL INC

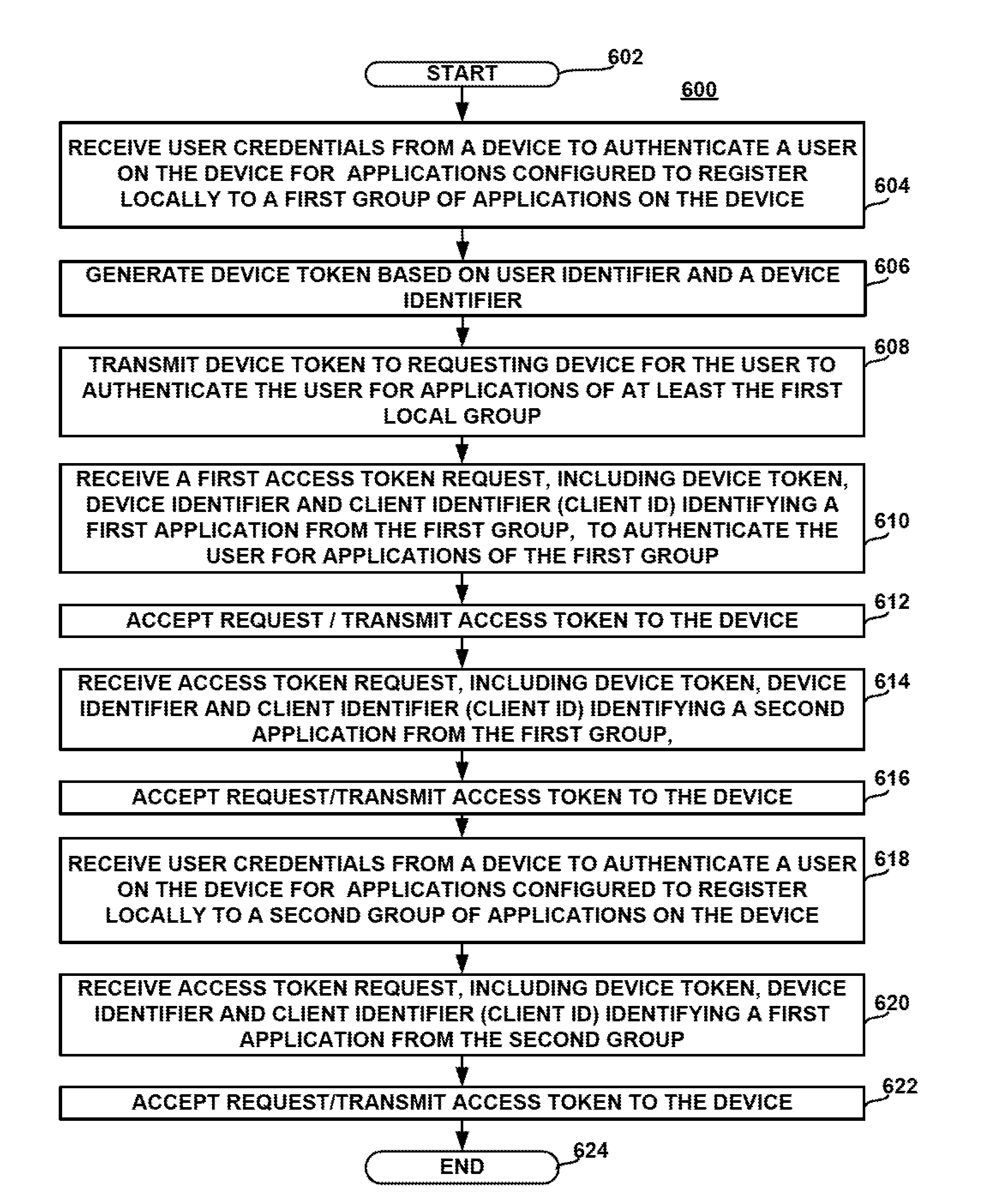

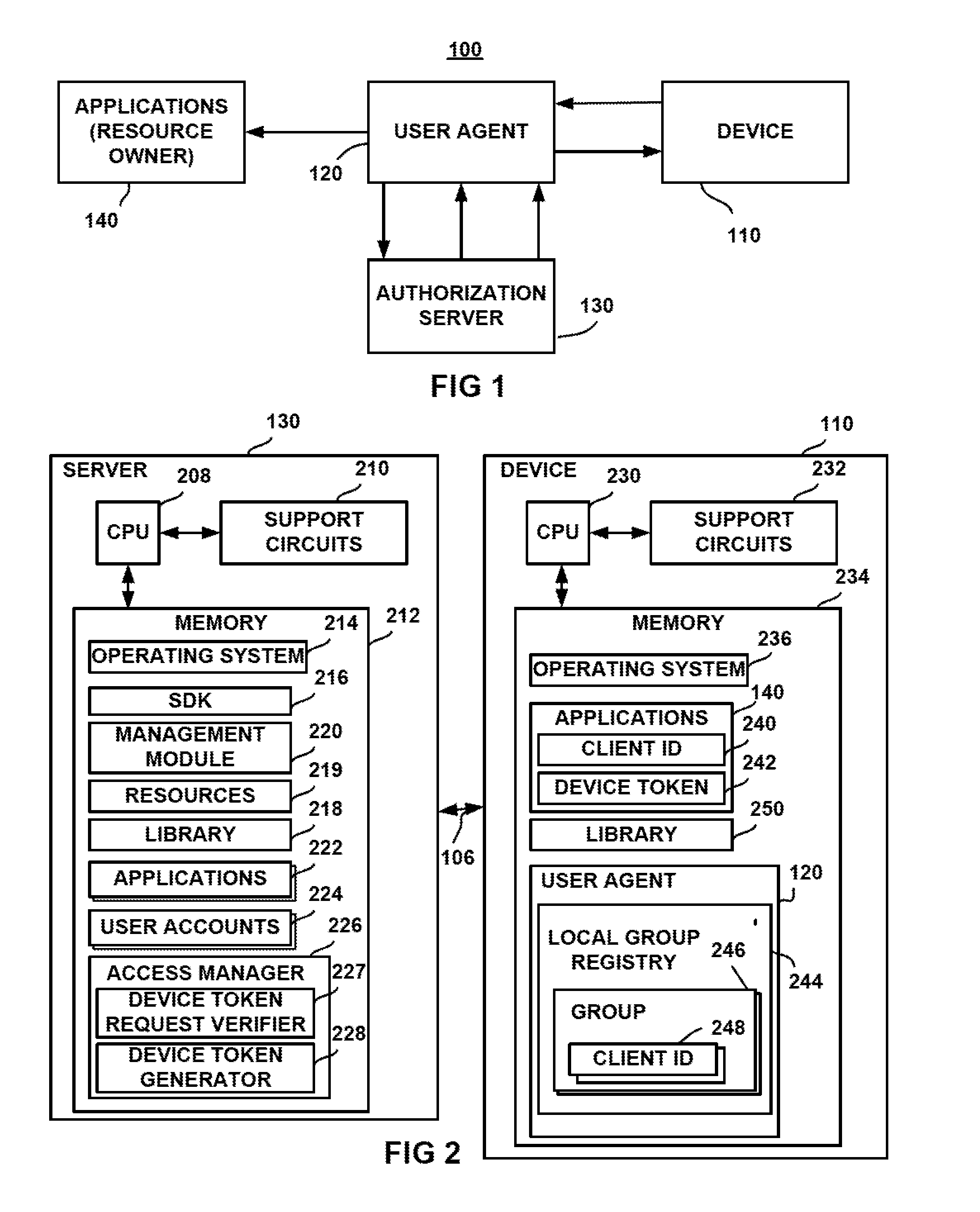

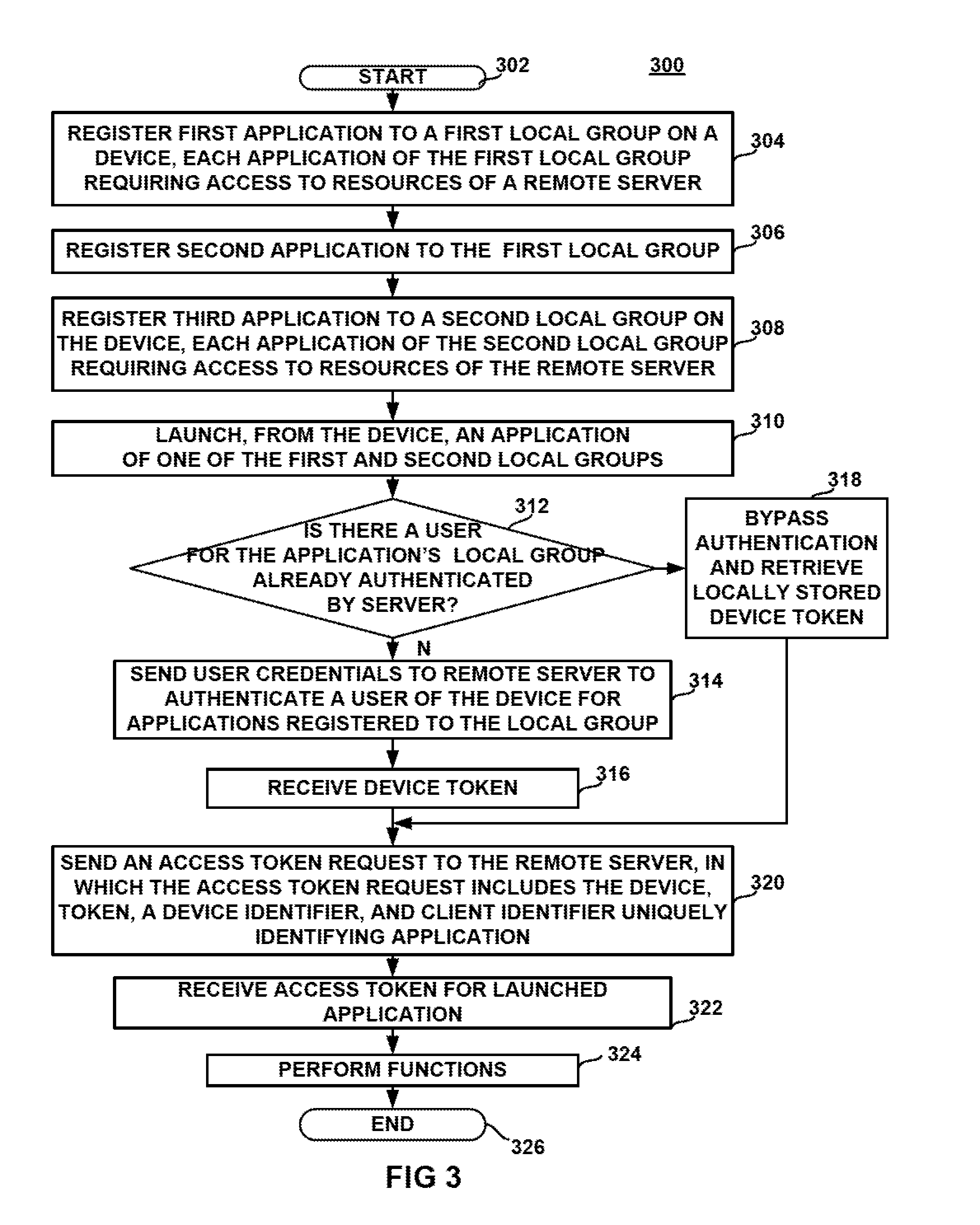

Method and apparatus for sharing server resources using a local group

Active | US20150365399A1 | Well formed | Digital data processing details | Computer security arrangements | User needs | Local Group

A computer implemented method and apparatus for sharing server resources. One or more applications are registered to a first local group on a device, and one or more applications are registered to a second local group on the device. If a user and device have been authenticated, and a device token already acquired for obtaining authorization for a first application of the first local group to access resources from a server, the same device token is available for use in obtaining authorization for a second application of the first group to access (share) resources from the server. Thus, the user need not re-submit authentication credentials to the authorization server. When the user signs out of an application of the same group, the sign out procedure is processed locally for all applications of the group. A device token is surrendered when it is not needed by applications of any other group.
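The token-sharing behaviour in this abstract can be sketched as a small bookkeeping class. This is a simplified illustration under assumptions: the `LocalGroupTokens` class and its method names are hypothetical, the authentication step is reduced to a callable, and the sketch assumes a second group can reuse a token already held on the device, which the abstract does not spell out.

```python
class LocalGroupTokens:
    """Tracks one device token shared by applications in local groups."""

    def __init__(self, authenticate):
        self._authenticate = authenticate  # callable returning a device token
        self._group_of = {}                # application -> local group
        self._active_groups = set()        # groups currently signed in
        self.device_token = None

    def register(self, app, group):
        self._group_of[app] = group

    def token_for(self, app):
        """Return the device token, authenticating only when none is held."""
        group = self._group_of[app]
        if group not in self._active_groups:
            if self.device_token is None:
                # Only here does the user have to submit credentials.
                self.device_token = self._authenticate()
            self._active_groups.add(group)
        return self.device_token

    def sign_out(self, app):
        """Sign out locally for every application in the app's group."""
        self._active_groups.discard(self._group_of[app])
        # Surrender the token once no other group still needs it.
        if not self._active_groups:
            self.device_token = None
```

A second application in the same group gets the existing token without a new authentication call, and the token is dropped only after the last active group signs out.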

Owner: ADOBE INC

Computer implemented method for allocating drivers and passengers sharing a trip

Active | US8126903B2 | High degree | Shorten the time | Digital data processing details | Buying/selling/leasing transactions | Result list | Database

Techniques for allocating drivers and passengers sharing a trip. The techniques may include a trip sharing service comprising receiving a first service request; specifying a first potential trip data object by the trip sharing service and executing a matching method. Matching may include checking a first potential trip data object against at least a second potential trip data object. Matching may further include comparing the specifications of the first potential trip data object with the specifications of the at least one second potential trip data object, determining the degree of congruency of the specifications of the compared potential trip data objects, assigning one role to a first and a second user, and adding the second potential trip data object to a result list in case the determined degree of congruency between the first and the second potential trip data object exceeds a predefined threshold.

Owner: SAP AG

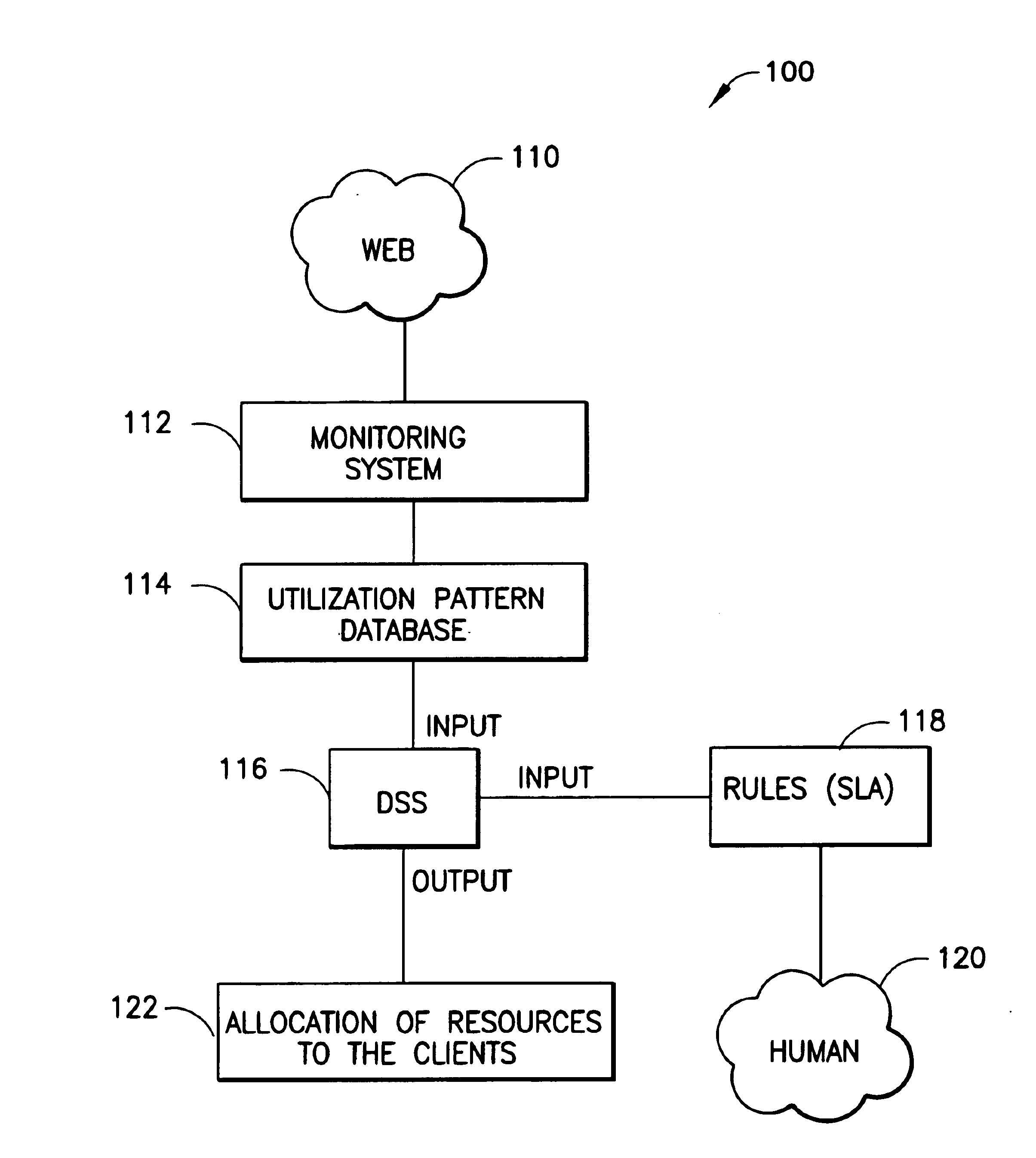

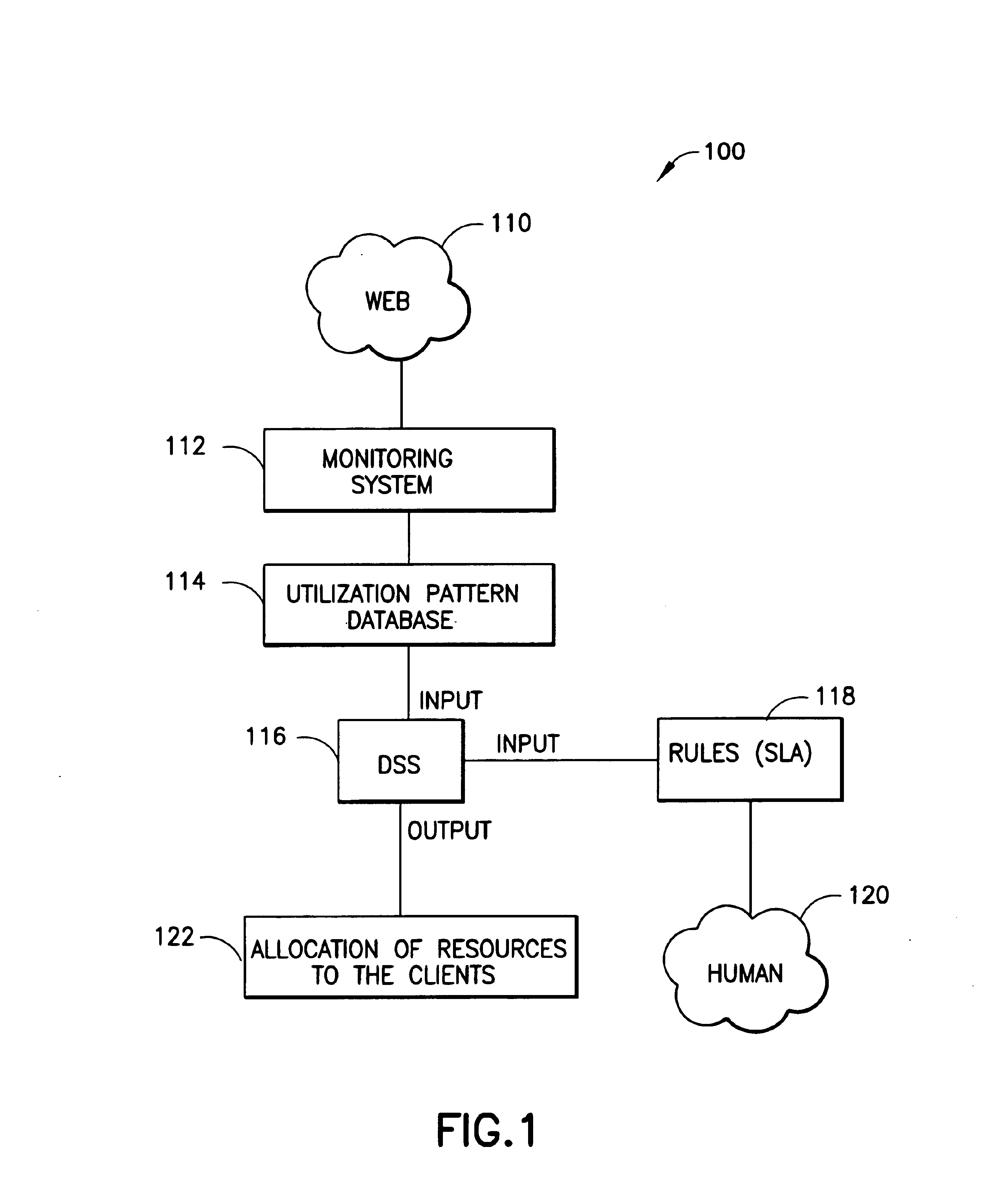

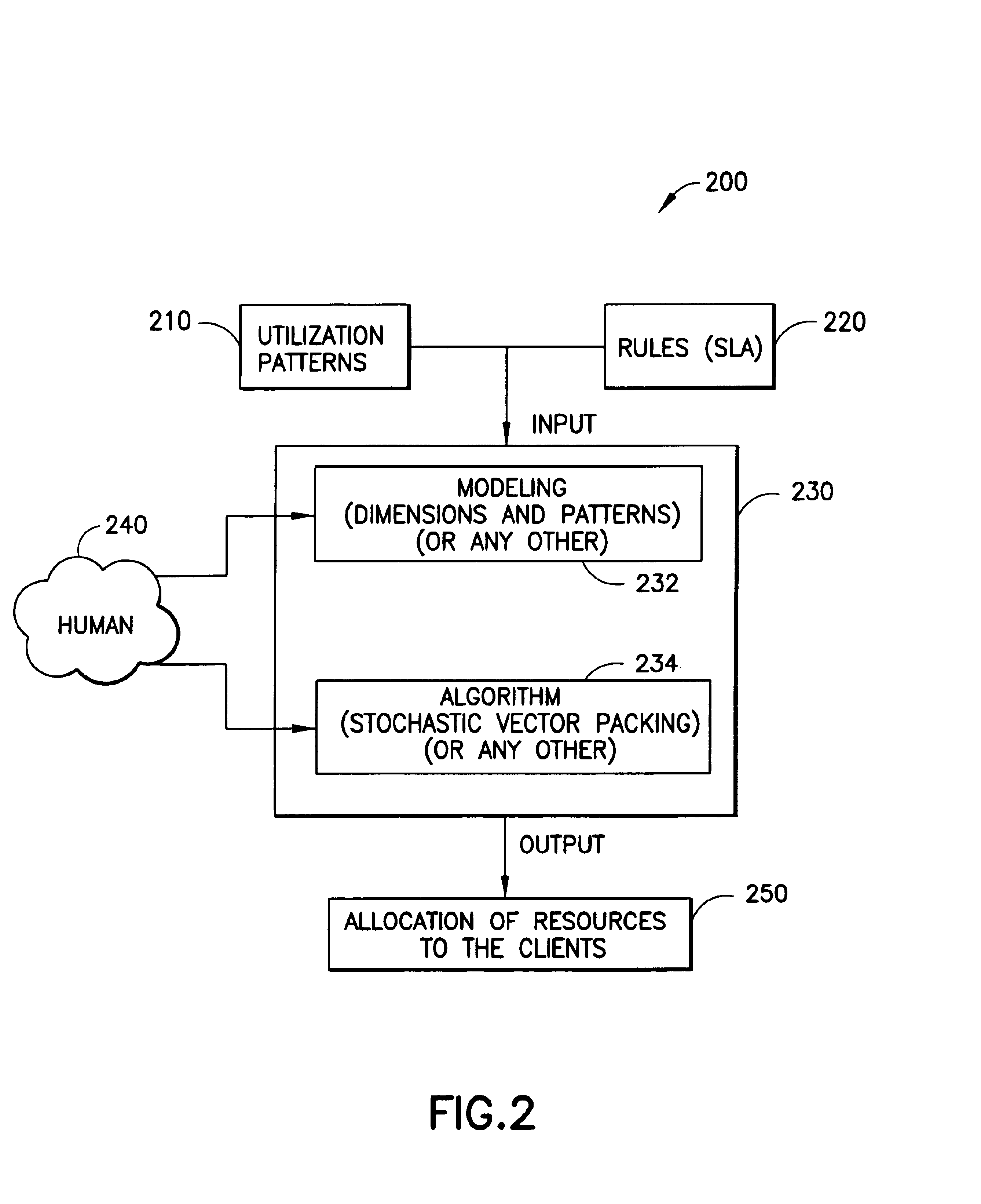

System for optimal resource allocation and planning for hosting computing services

Inactive | US6877035B2 | Facilitates optimal management of resource | Multiprogramming arrangements | Multiple digital computer combinations | Client-side | Shared service

A method, apparatus, computer program product, and decision support system for allocating hosting-service resources to clients in at least one shared server. The method comprises discovering utilization patterns of the clients; monitoring the clients to discover the utilization patterns; providing bounds specifying minimum and maximum hosting-service resources for each of the clients; modeling dimensions for client user measures and the utilization patterns; and allocating the resources to the clients dependent on the utilization patterns. The step of allocating is also dependent upon the bounds. The method further includes packing the clients using stochastic vectors, wherein the packing step utilizes at least one of a Roof Avoidance process, a Minimized Variance process, a Maximized Minima process, and a Largest Combination process, and wherein the hosting-service resources relate to at least one hosting comprising one of collaborative hosting services, commerce hosting services, and e-business hosting services.

Owner: IBM CORP

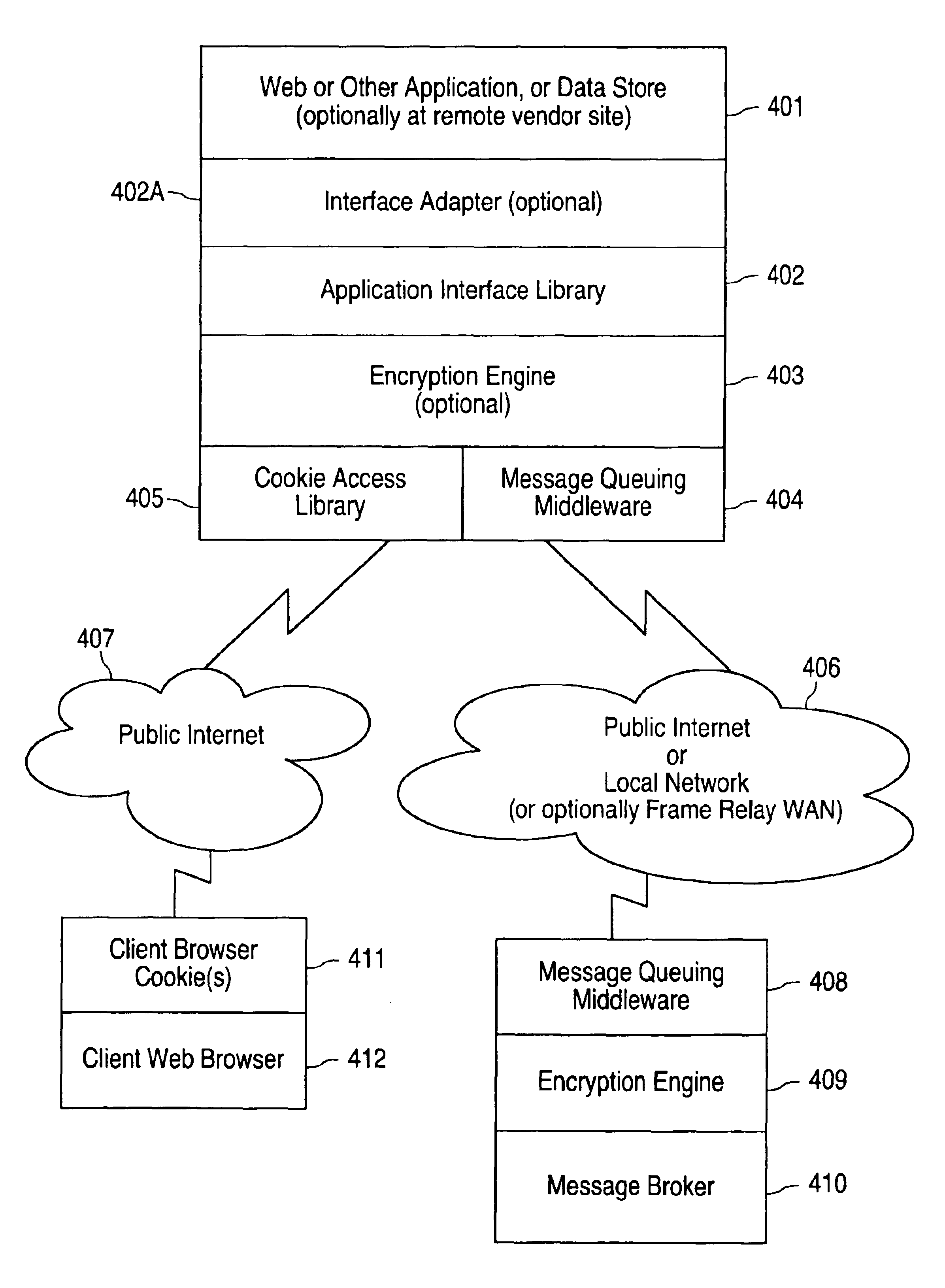

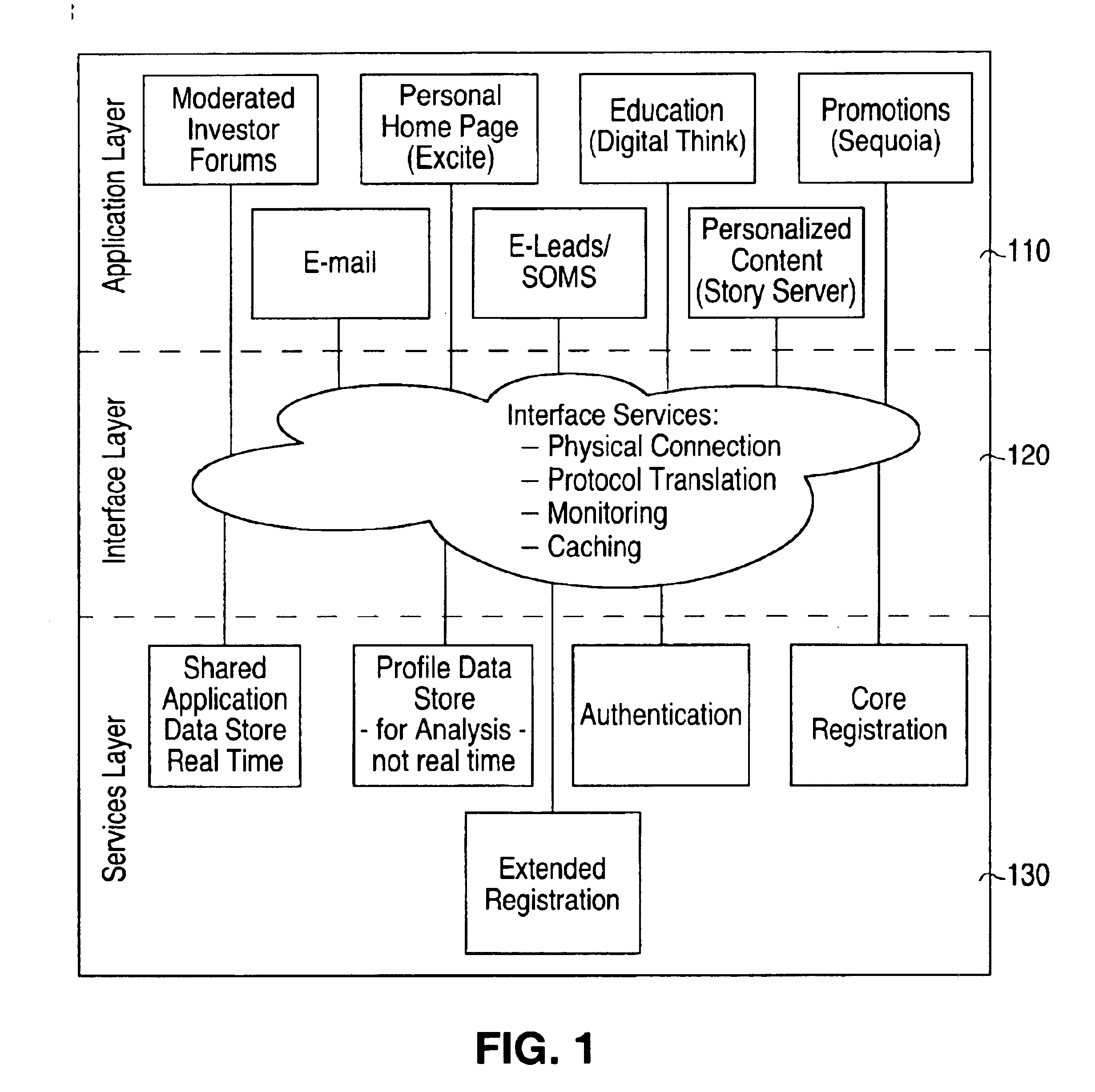

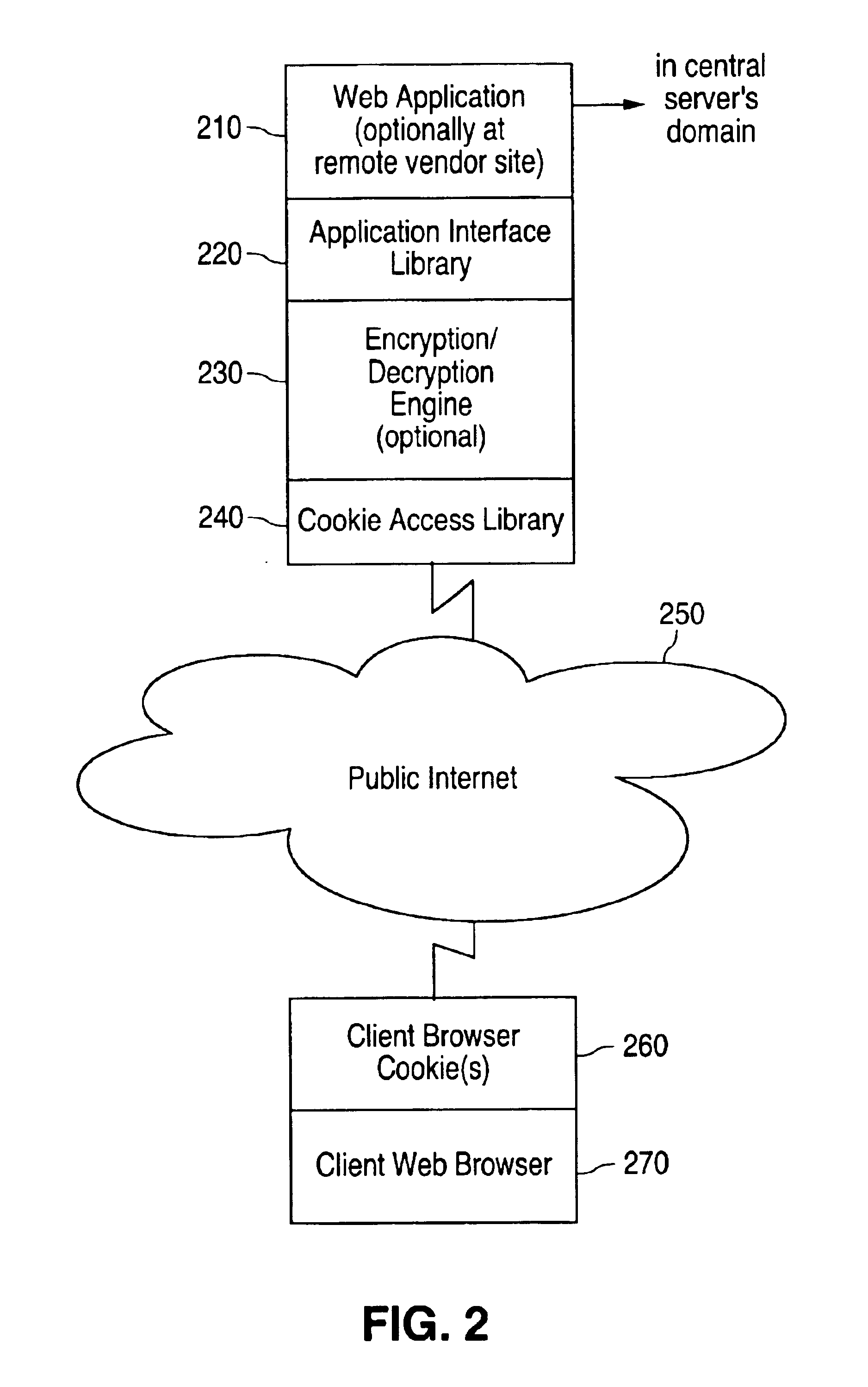

Method and apparatus for integrating distributed shared services system

Inactive | US6954799B2 | Multiple digital computer combinations | Digital data authentication | Web site | Access time

A method and apparatus are provided for integrating a distributed shared services system, which integrates web-based applications with each other and with other centralized applications to provide a single sign-on approach to authentication and authorization services for distributed web sites, requiring no access time back to the authentication/authorization server.

Owner: CHARLES SCHWAB & CO INC

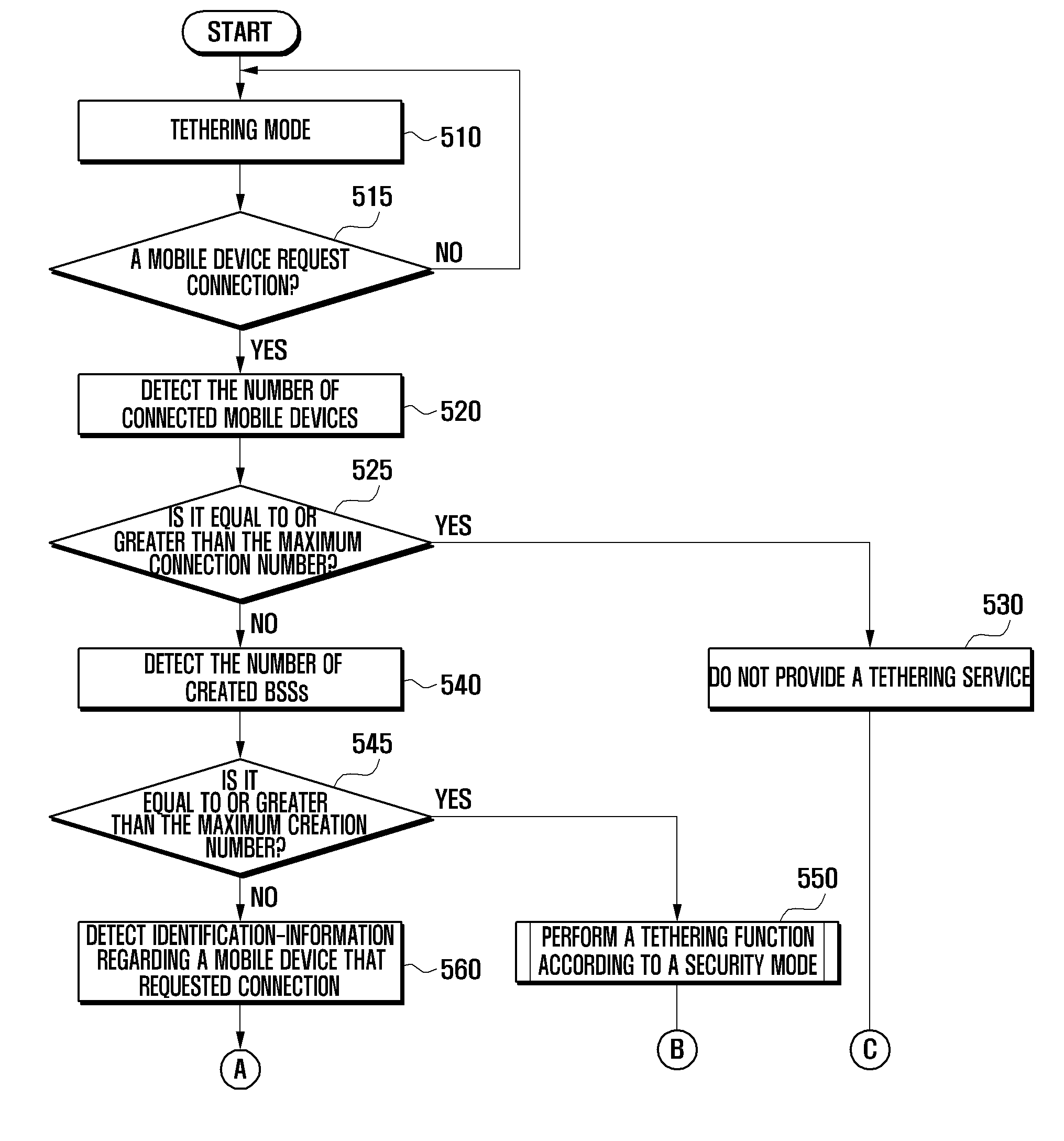

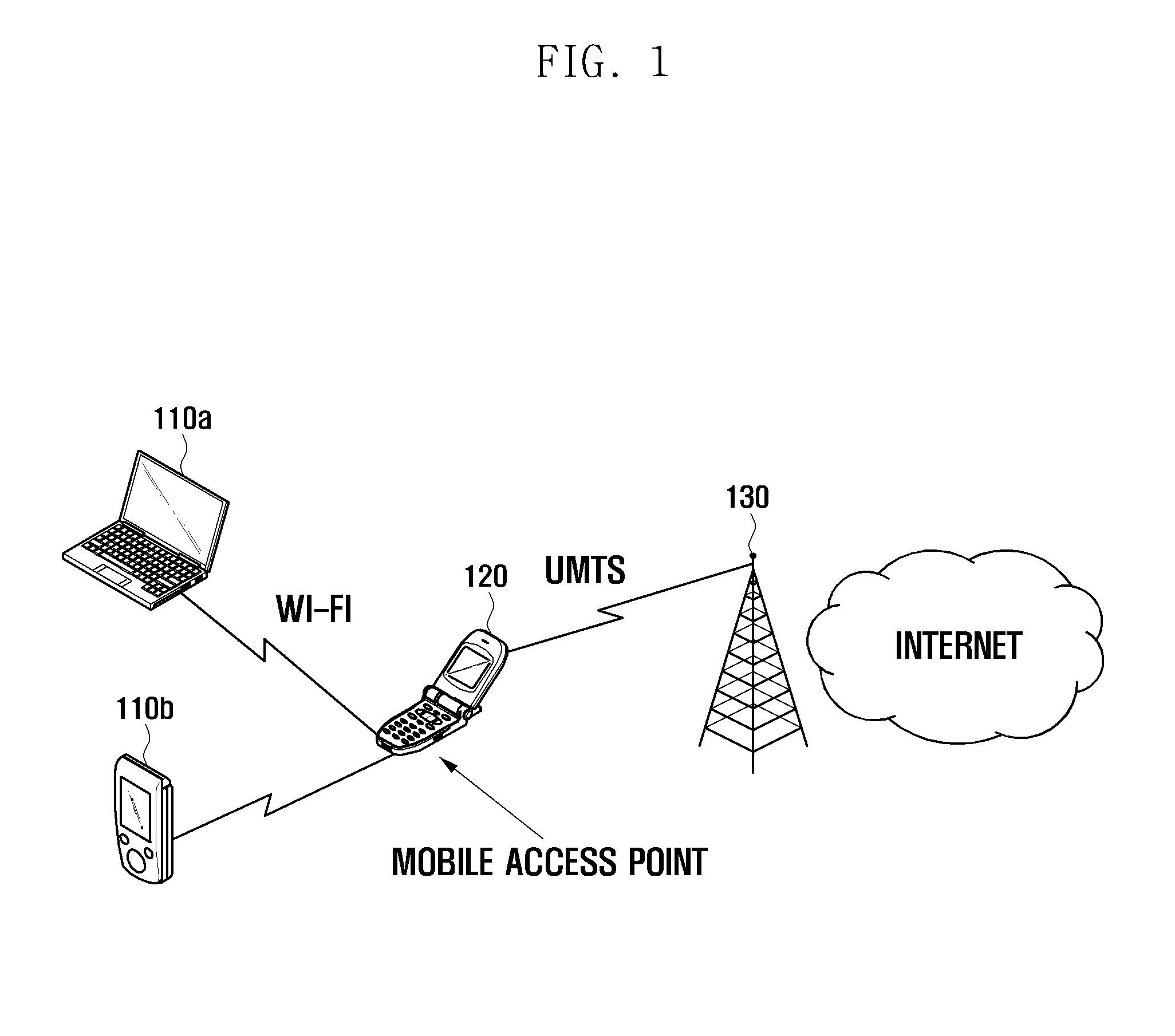

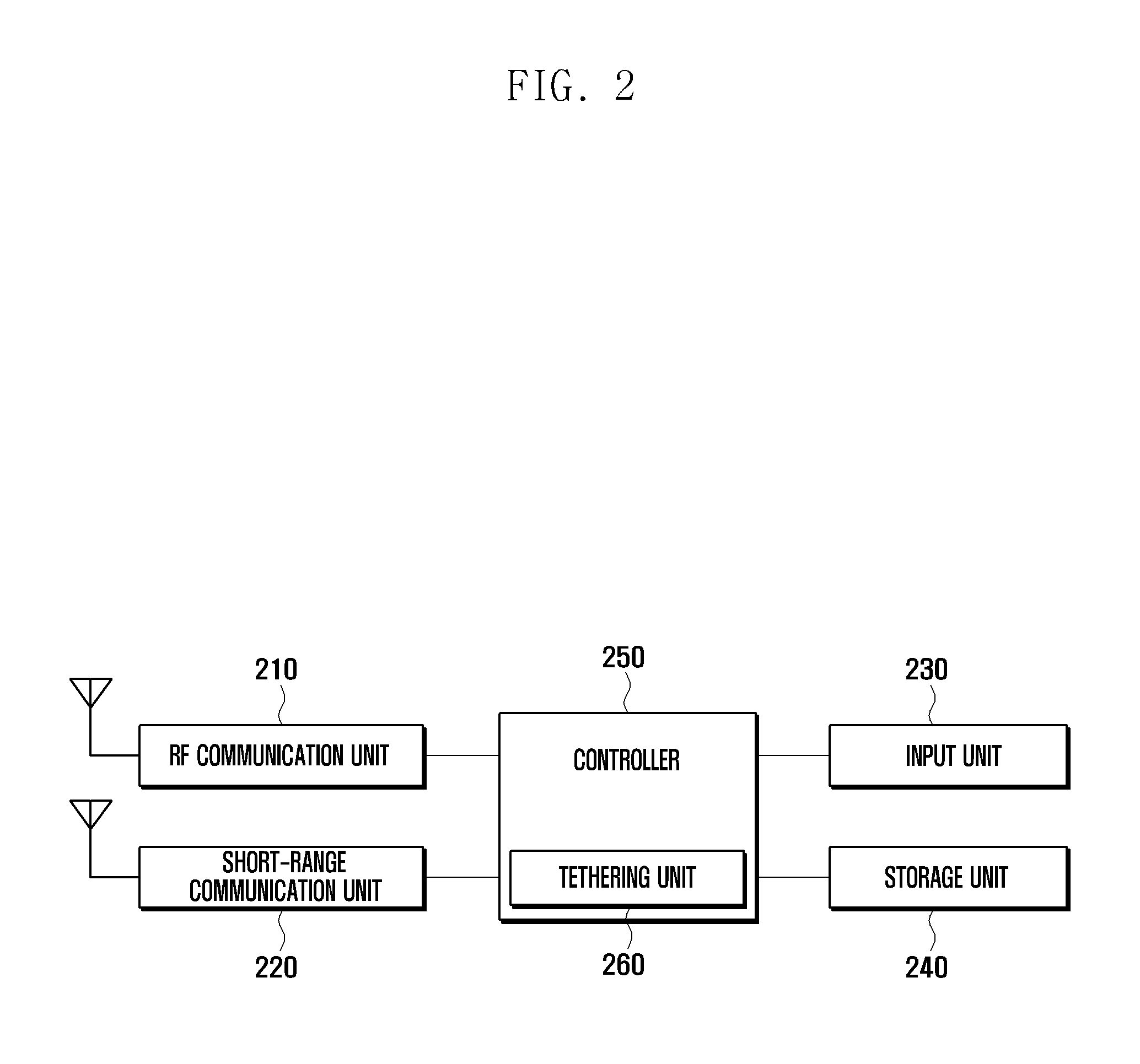

Tethering method and mobile device adapted thereto

Active | US20110283001A1 | Network traffic/resource management | Connection management | Basic service | Connection number

A mobile device and a method for providing a tethering service via a security mode and a list of preferred mobile devices are provided. The method includes determining, when the mobile device receives a connection request from a client mobile device, a number of client mobile devices that are currently connected to the mobile device, determining, when the number of connected client mobile devices is less than a preset maximum connection number, the number of created Basic Service Sets (BSSs), determining, when the number of BSSs is less than a preset maximum creation number, the identification-information regarding the client mobile device that requested connection, and providing a tethering service to the client mobile device according to the determined identification-information.
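The chain of checks in this abstract can be written as a short admission function. This is only a sketch: the function name `admit_client`, the default limits, and the use of a preferred-device set as the identification check are assumptions for illustration (the abstract says only that service is provided according to the determined identification information).

```python
def admit_client(client_id, connected, bss_count, preferred,
                 max_connections=5, max_bss=2):
    """Decide whether a tethering request from client_id should be served.

    connected  -- collection of client ids currently connected
    bss_count  -- number of Basic Service Sets already created
    preferred  -- set of client ids on the preferred-device list (assumed check)
    """
    # Step 1: the number of connected clients must be below the maximum.
    if len(connected) >= max_connections:
        return False
    # Step 2: the number of created BSSs must be below the maximum.
    if bss_count >= max_bss:
        return False
    # Step 3: decide based on the requesting client's identification.
    return client_id in preferred
```

Each check is evaluated only if the previous one passed, mirroring the order in which the abstract lists the determinations.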

Owner: SAMSUNG ELECTRONICS CO LTD

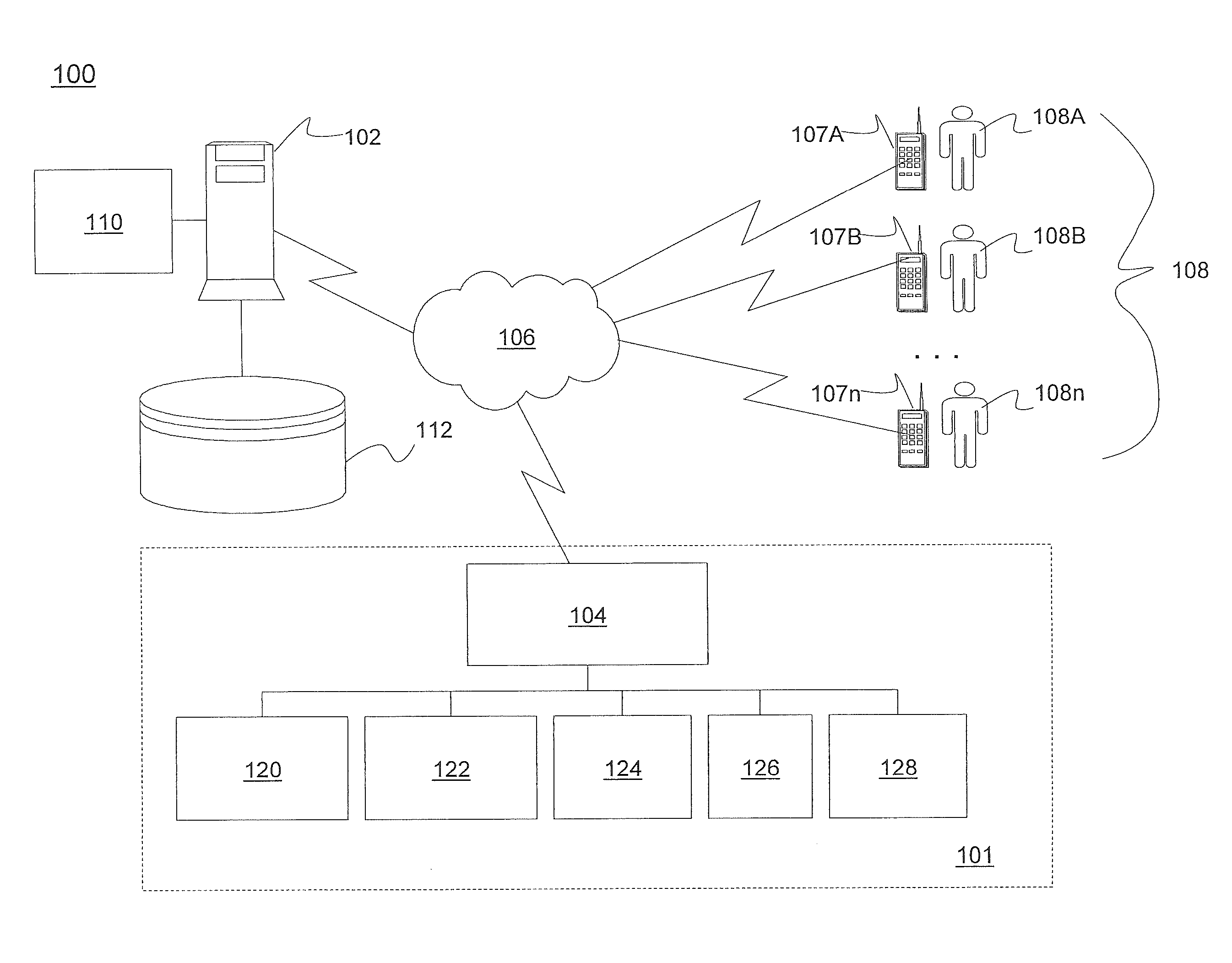

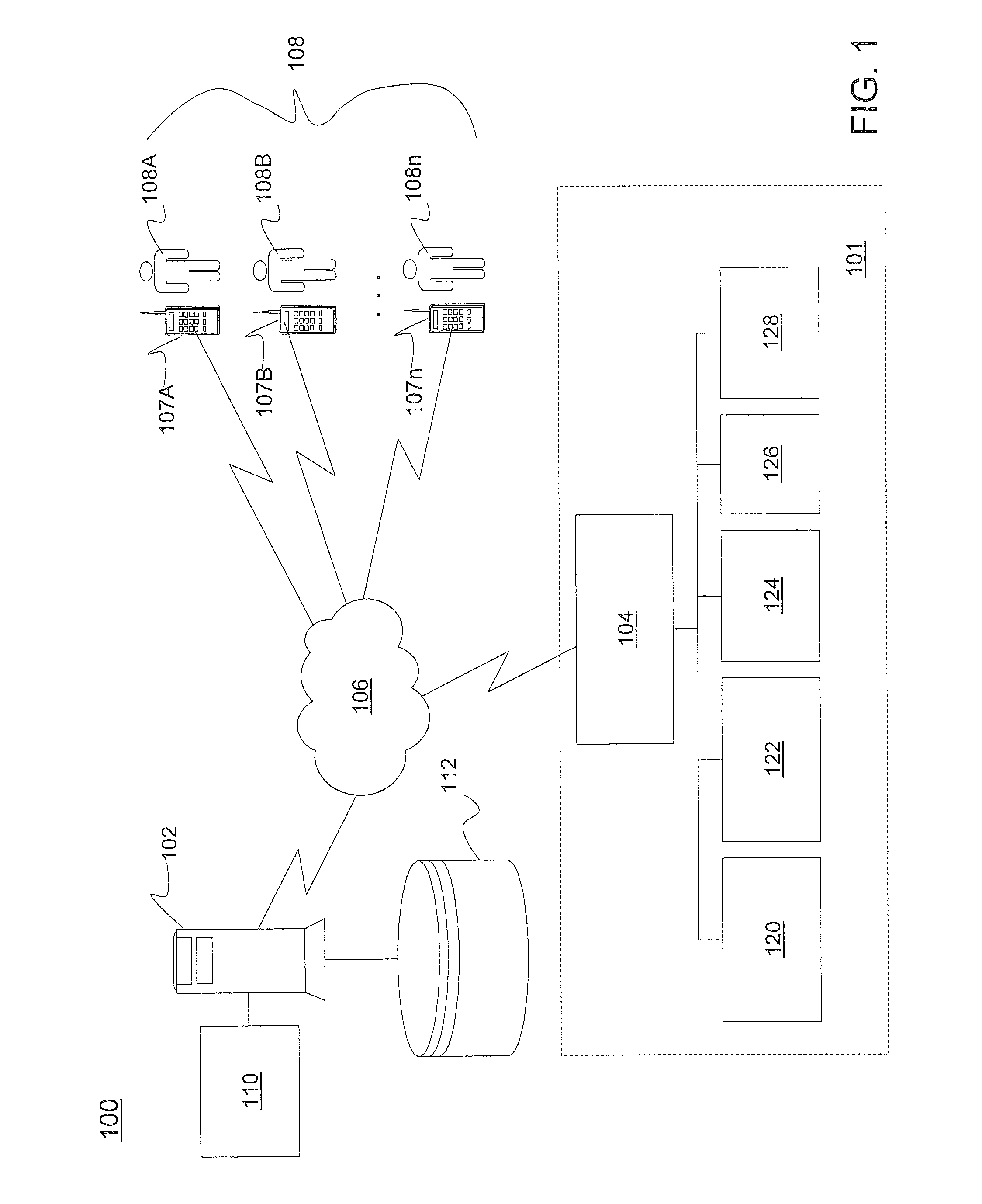

Ride-share service

Active | US20130096827A1 | Instruments for road network navigation | Road vehicles traffic control | World Wide Web | Shared service

Implementing a ride share service includes determining a route for an operator of the vehicle and accessing user preferences of the operator, the user preferences including characteristics of a ride share event and prospective ride share individuals. The ride share service also includes comparing the user preferences with information provided by individuals seeking transportation, each of the individuals providing a request for the transportation. The ride share service further includes identifying qualified candidates for the ride share event from the comparing by determining a threshold level of characteristics matching information provided by the individuals. In response to receiving a selection of a qualified candidate from the qualified candidates, the ride share service includes transmitting a communication to the selected qualified candidate accepting the request.

Owner: GM GLOBAL TECH OPERATIONS LLC

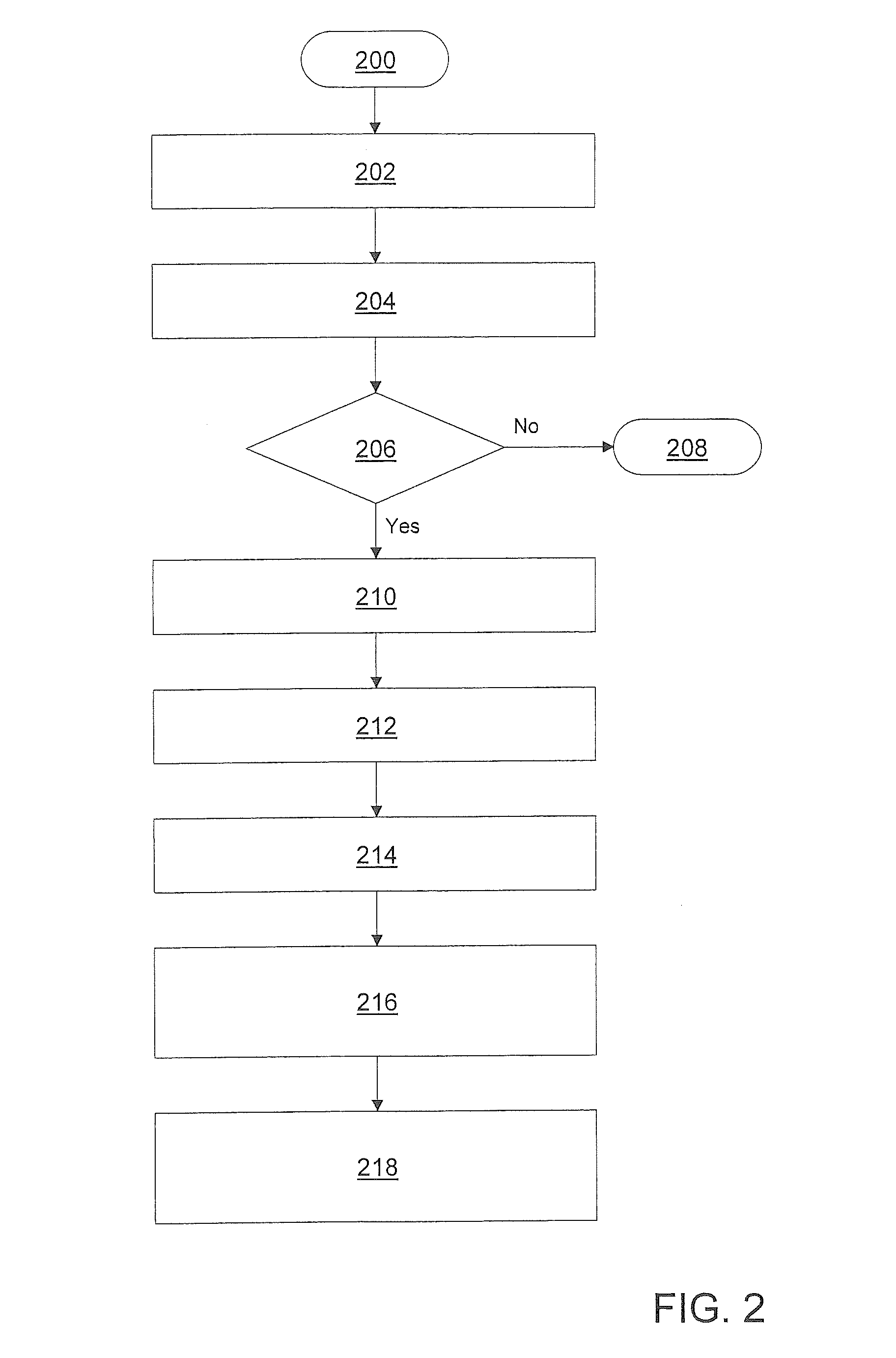

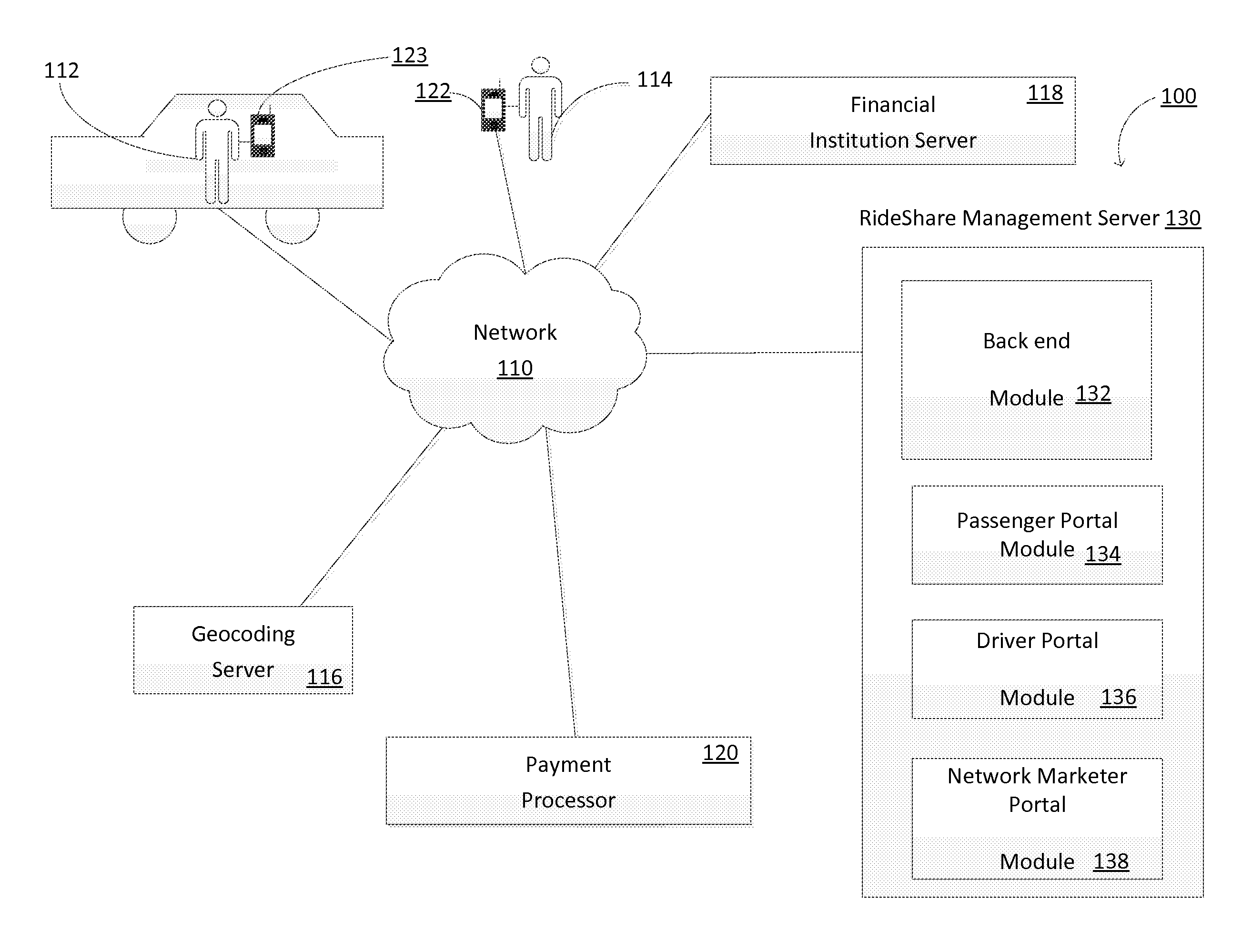

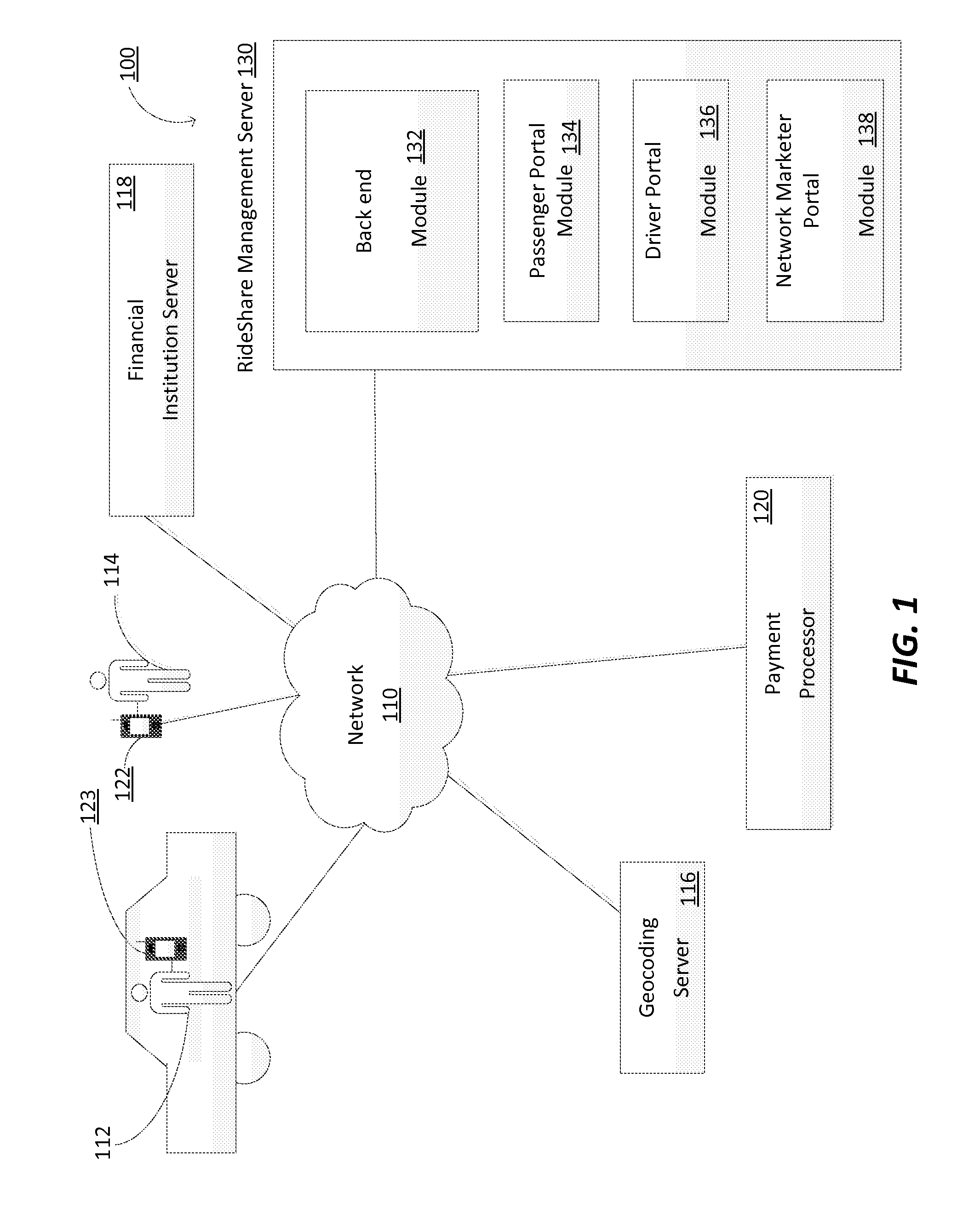

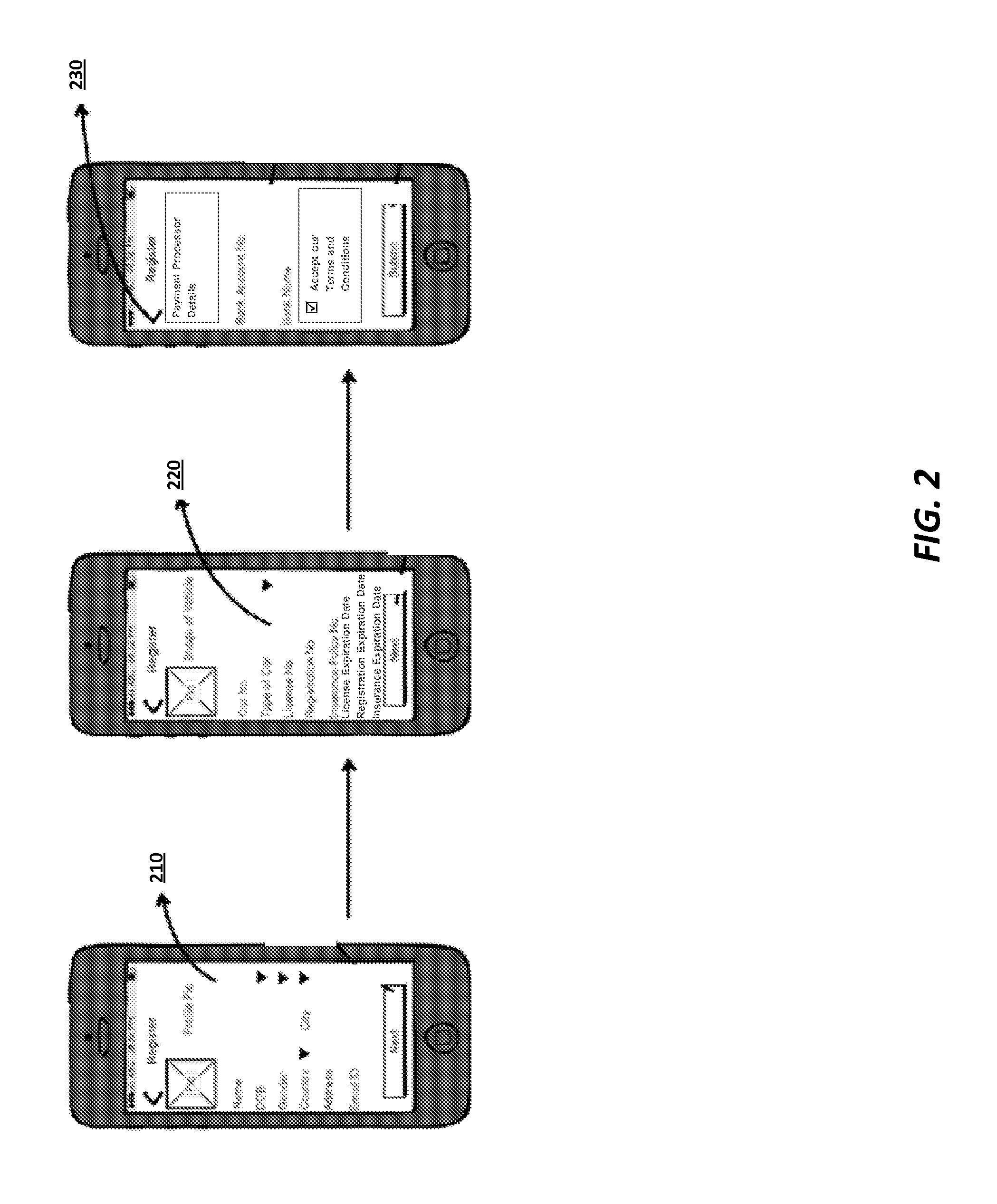

Method And System For Ride Shares Involving Hierarchical Driver Referrals

Inactive | US20160171574A1 | Efficient use of | Instruments for road network navigation | Ticket-issuing apparatus | Mobile apps | Driver/operator

Owner: YUR DRIVERS NETWORK

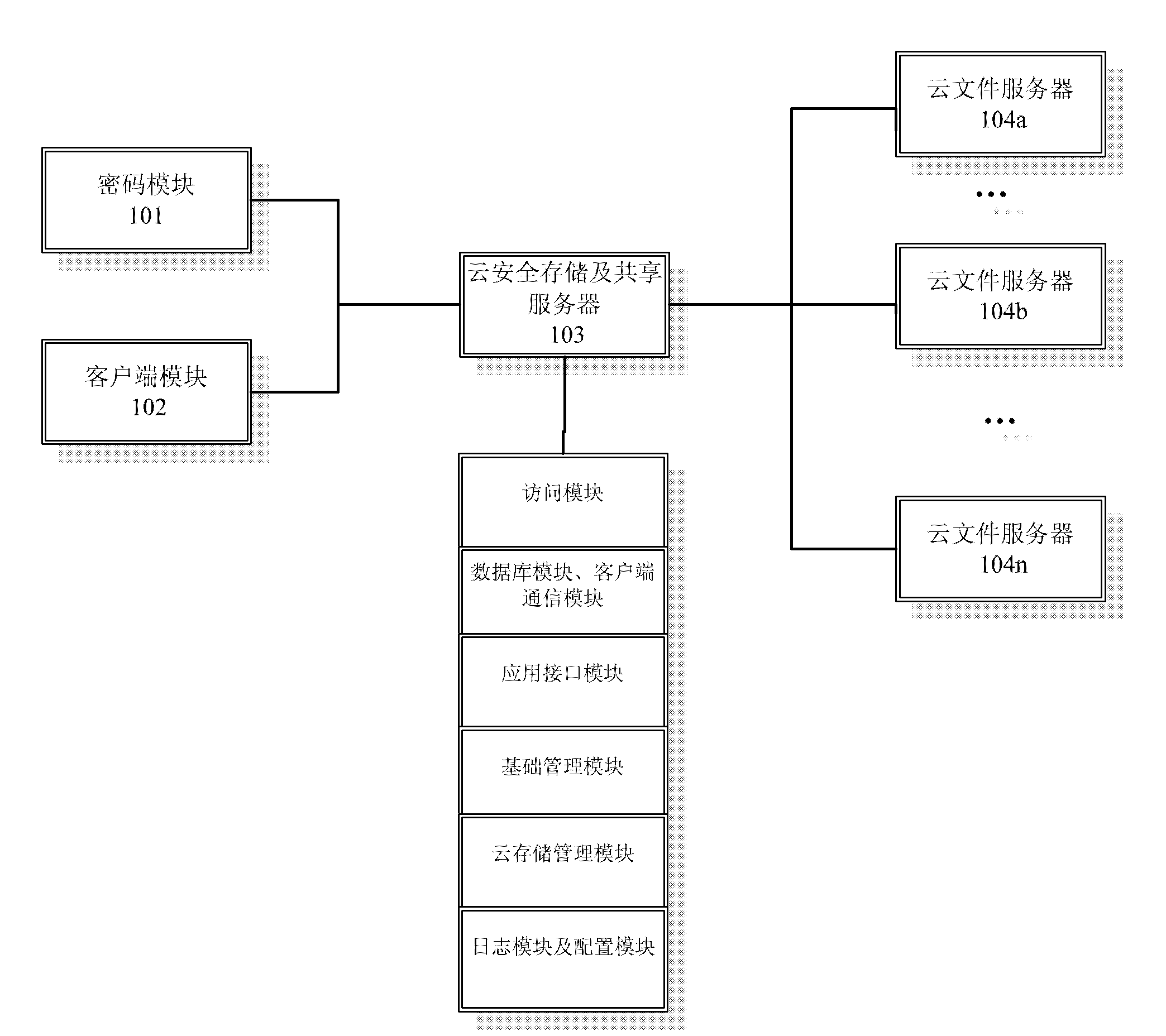

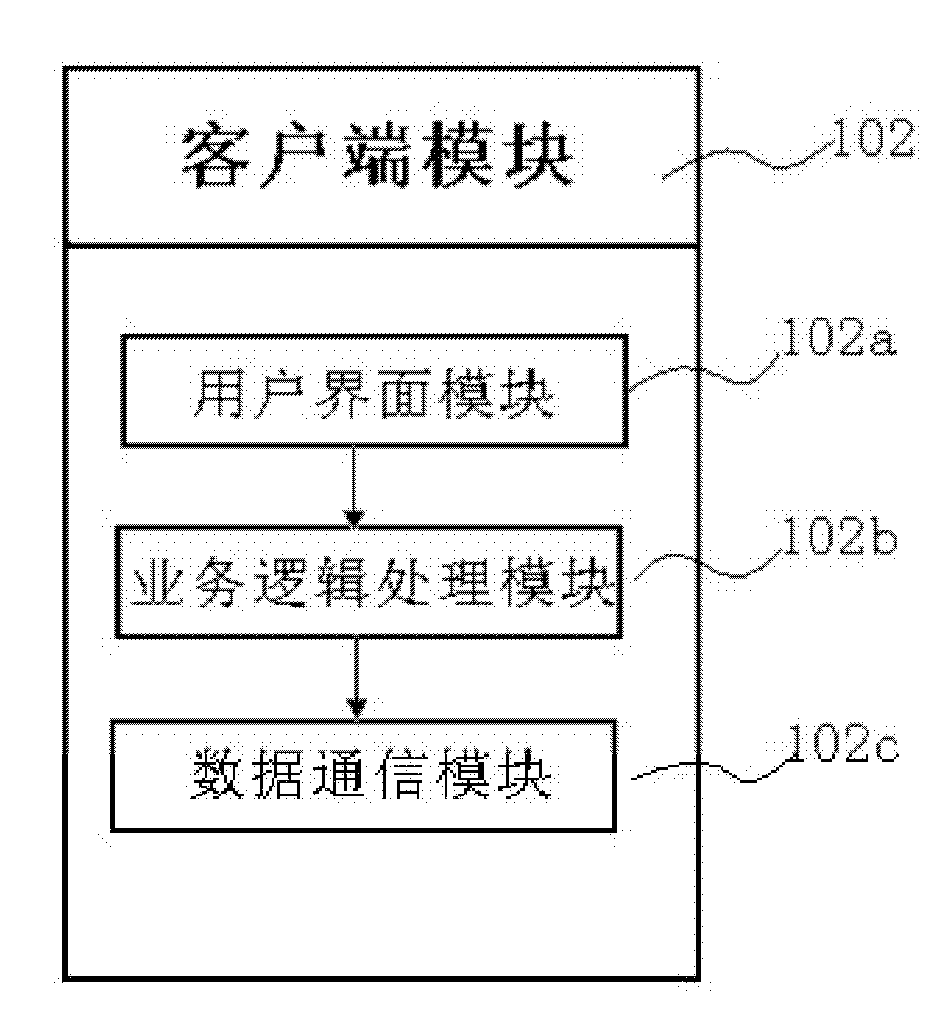

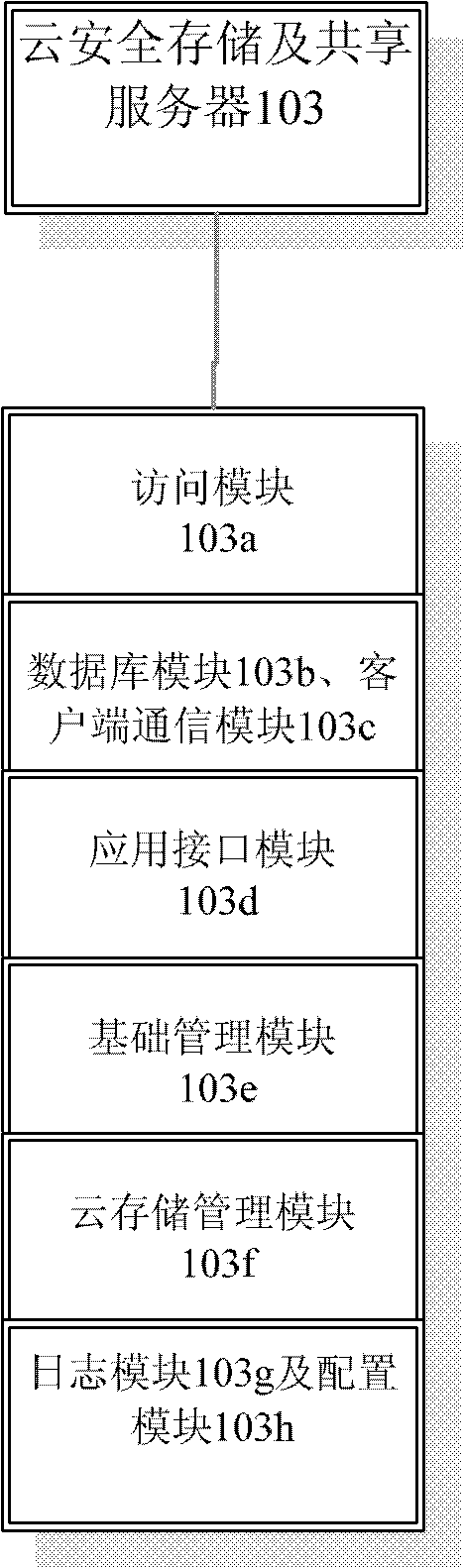

Cloud security storage and sharing service platform

The invention discloses a cloud security storage and sharing service platform comprising a cryptographic module, a client module, a cloud security storage and sharing server and a cloud file server. The cloud security storage and sharing server comprises an access module for the cloud file server, a database module, a client communication module, an application interface module, a basic management module, a cloud storage management module, a log module and a configuration module. The cryptographic module cooperates with the client module to carry out file-related operations, and communicates with the cloud security storage and sharing server to carry out operations on the files in the cloud file server. The cloud security storage and sharing service platform disclosed by the invention can realize three different protection mechanisms for three different types of files and meet the requirements of cloud security storage and sharing service.

Owner: KOAL SOFTWARE CO LTD

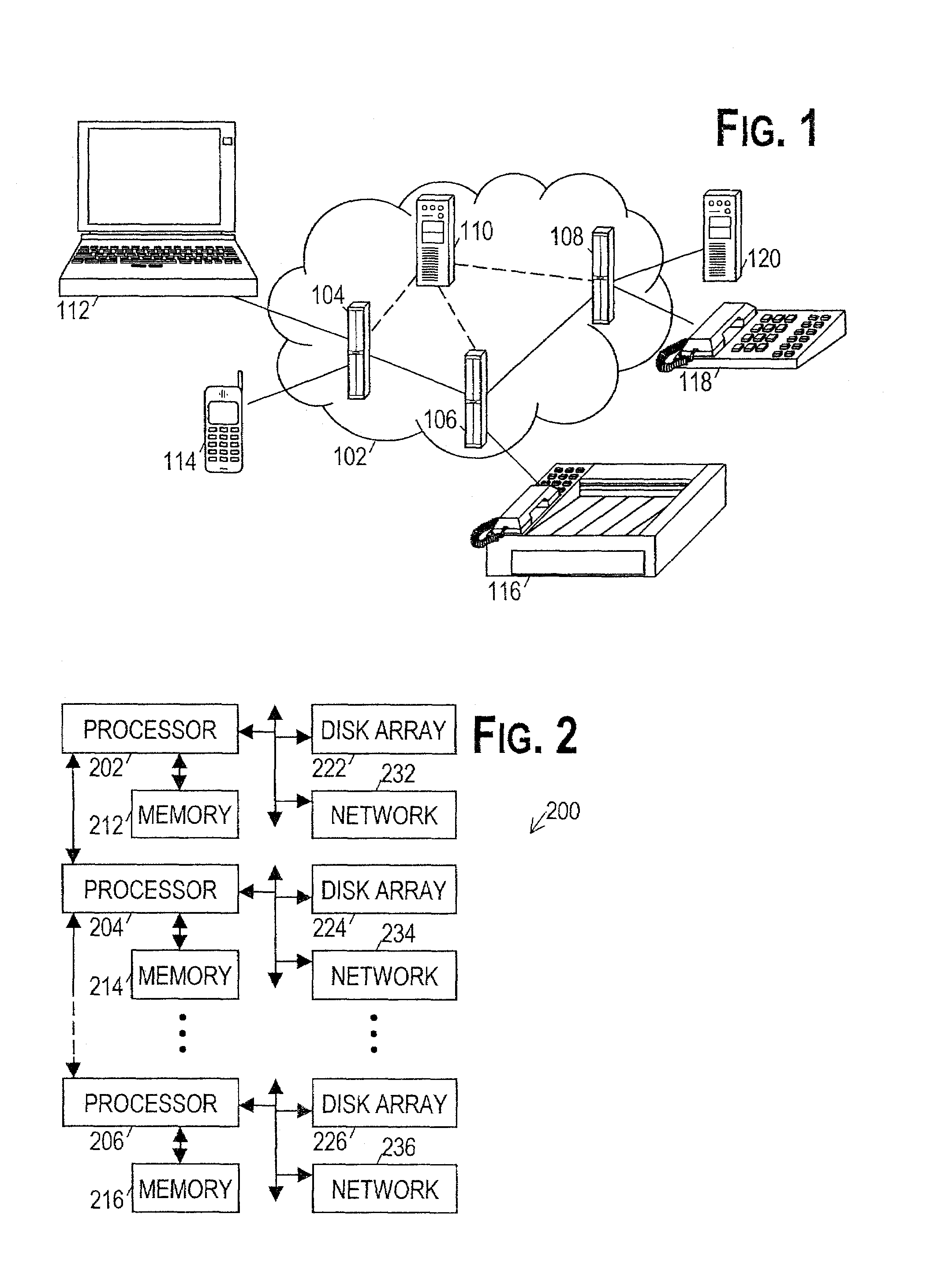

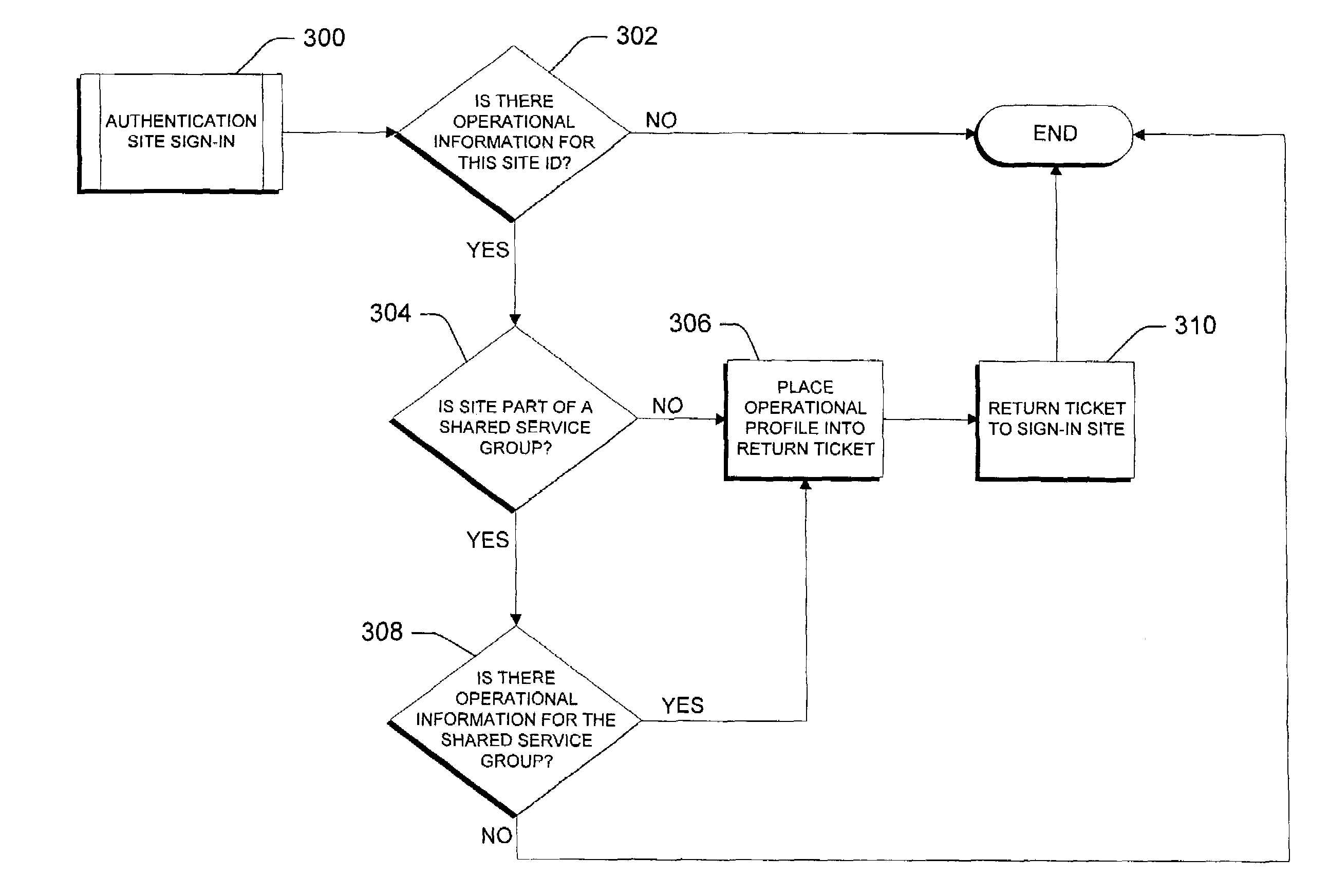

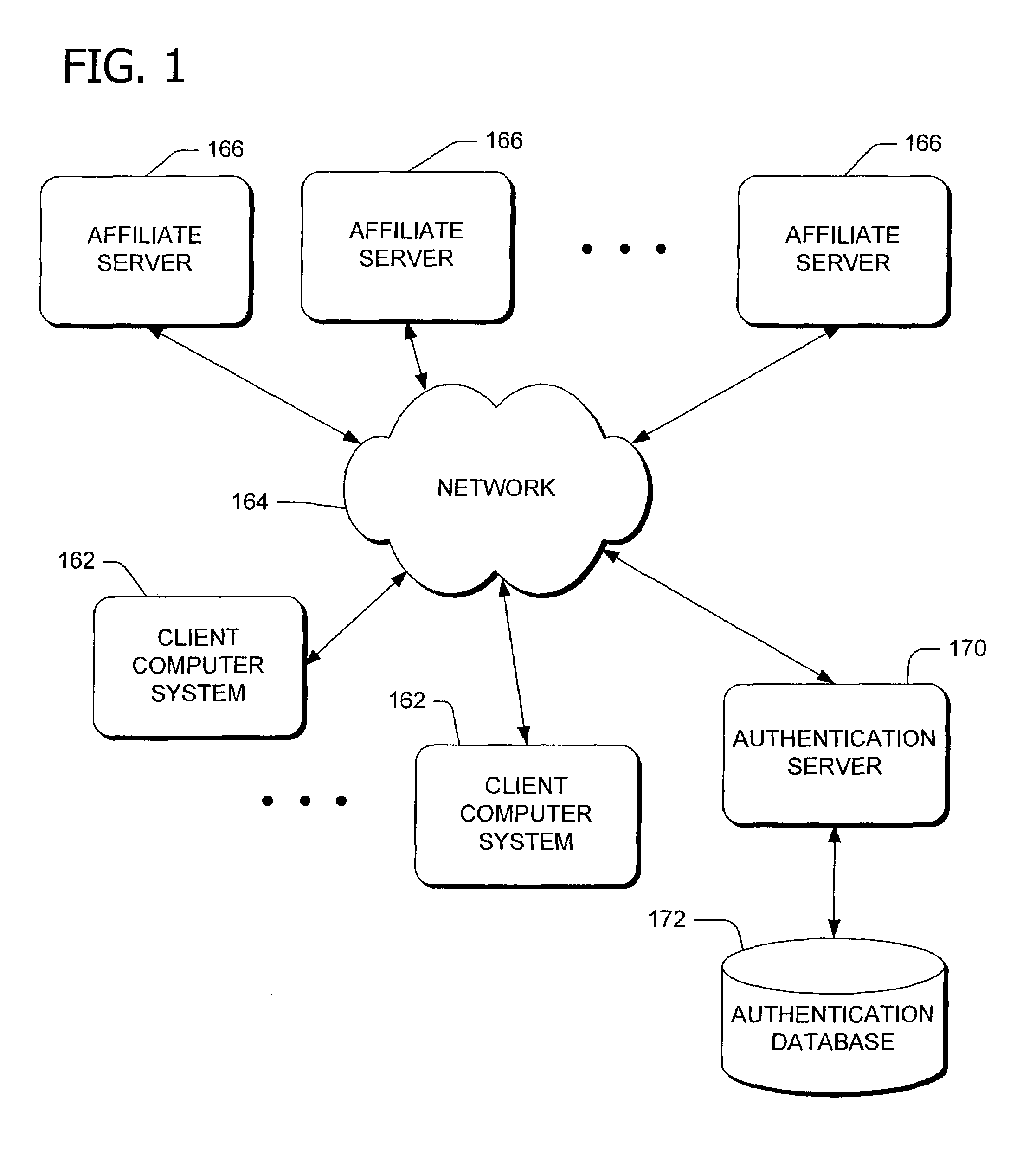

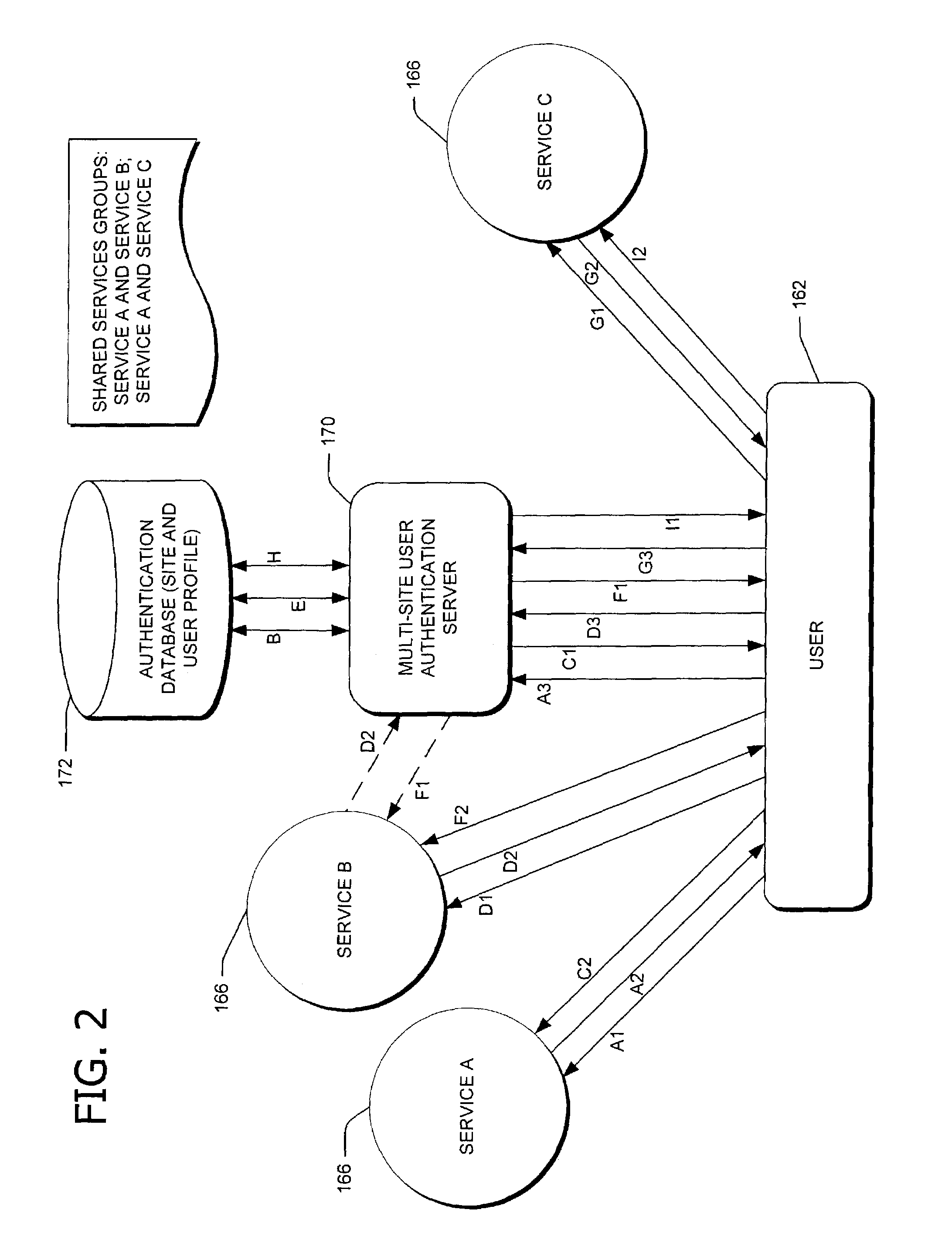

Shared services management

Active | US7334013B1 | Enhance information sharing | Less labor | Multiple digital computer combinations | Transmission | Service product management | Client-side

Methods and system of sharing information among network servers coupled to a data communication network for providing services to a user via a client on the network and data structure for use therewith. Related services provided by the network servers are grouped into service groups. A database stores user-specific information, including operational information to be shared within the service groups. A central server coupled to the network receives a request from the user for a selected service and determines whether the selected service belongs to one of the service groups. In response to the request, the central server retrieves user-specific information identifying the user with respect to the selected service. The retrieved information includes operational information to be shared within each of the service groups to which the selected service belongs.
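The request handling in this abstract can be illustrated with a small lookup sketch. The group names, service names, and the dictionary standing in for the database are hypothetical placeholders; only the overall flow (find the groups a service belongs to, then return the user's information shared within those groups) follows the abstract.

```python
# Hypothetical grouping of related services into service groups.
SERVICE_GROUPS = {
    "communication": {"mail", "chat"},
    "media": {"music", "chat"},
}


def groups_for(service, service_groups=SERVICE_GROUPS):
    """Return the names of all service groups the service belongs to."""
    return sorted(g for g, members in service_groups.items() if service in members)


def handle_request(user, service, database, service_groups=SERVICE_GROUPS):
    """Central-server step: collect the user's information shared per group.

    database maps (user, group) to the operational information that is
    shared among all services of that group.
    """
    profile = {}
    for group in groups_for(service, service_groups):
        profile[group] = database.get((user, group), {})
    return profile
```

A service that belongs to several groups, such as `chat` in this example, receives the shared information of each of them, while an ungrouped service receives nothing.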

Owner: MICROSOFT TECH LICENSING LLC

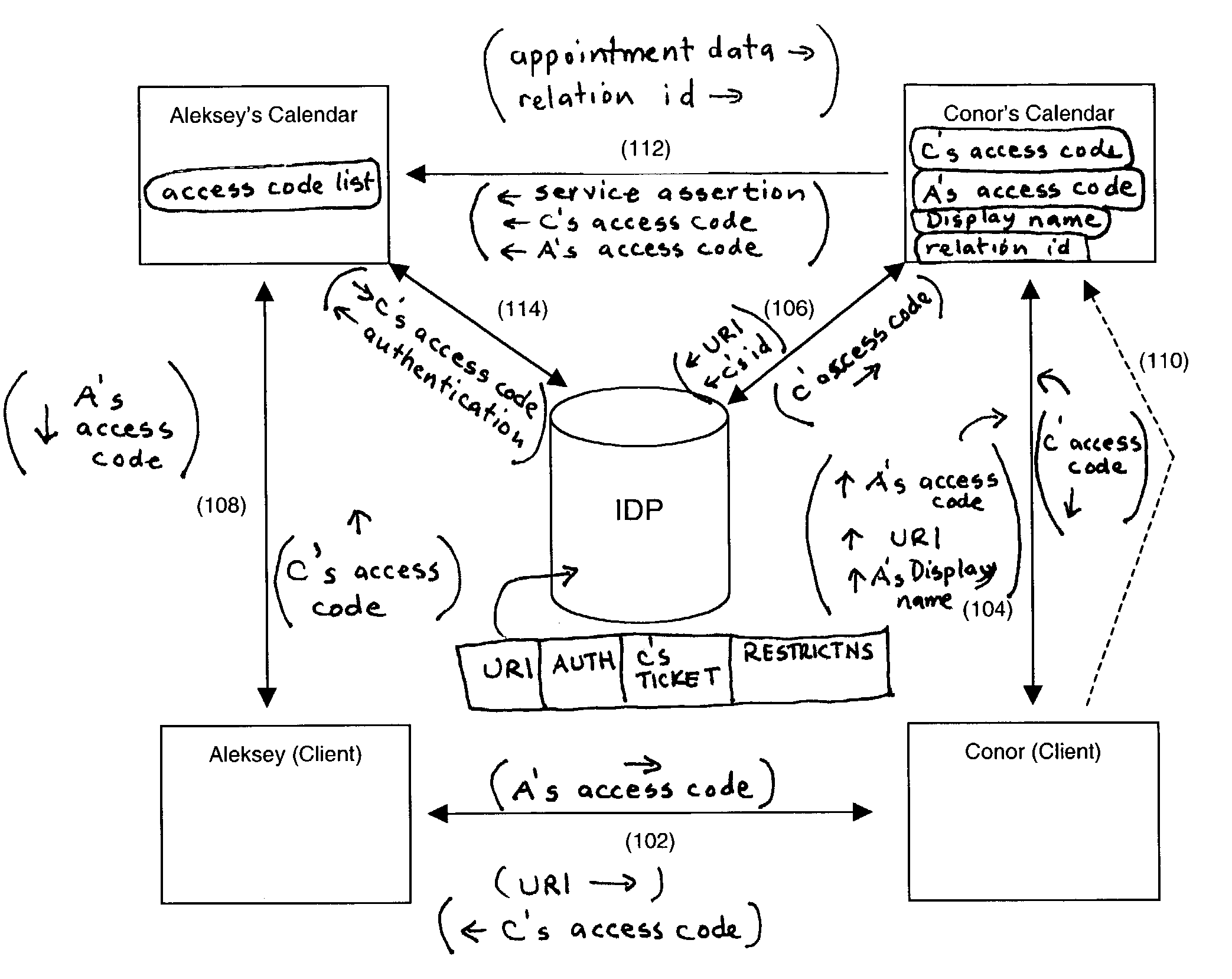

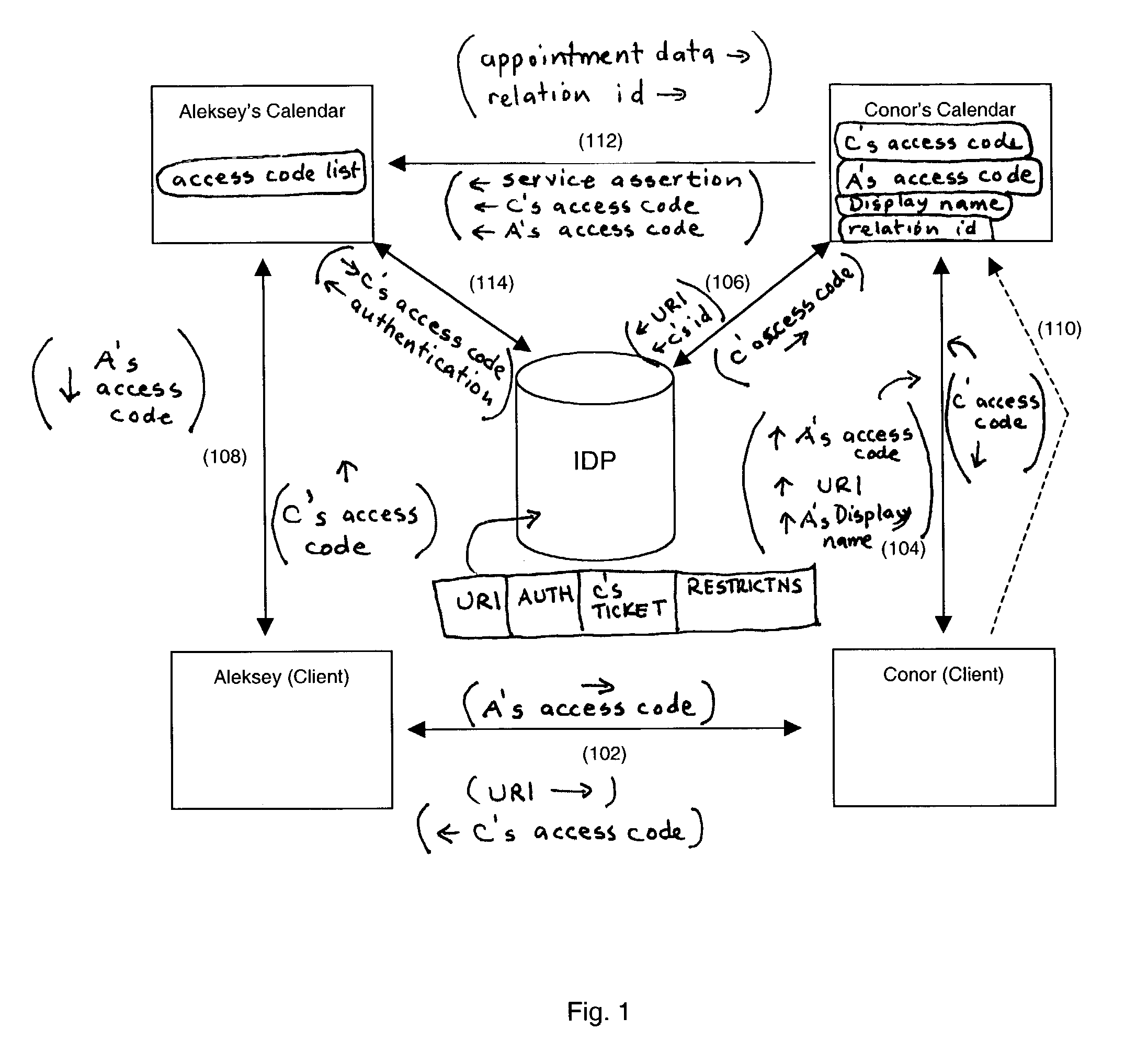

Use of pseudonyms vs. real names

Inactive | US7107447B2 | Simple and secure way | Digital data processing details | User identity/authority verification | Network service | Electronic mail

An apparatus and method are provided for allowing users to share services without sharing identities. Specifically, the apparatus and method allow users to share pseudonyms instead of actual user names, thus protecting both users from unwanted emails, IM messages, and the like. The invention provides an introduction scheme, which comprises a simple and secure way of establishing a user-to-user link. A preferred embodiment incorporates services of a linked federation network service, such as AOL's Liberty Alliance service, without exposing real user names to other users.

Owner: META PLATFORMS INC

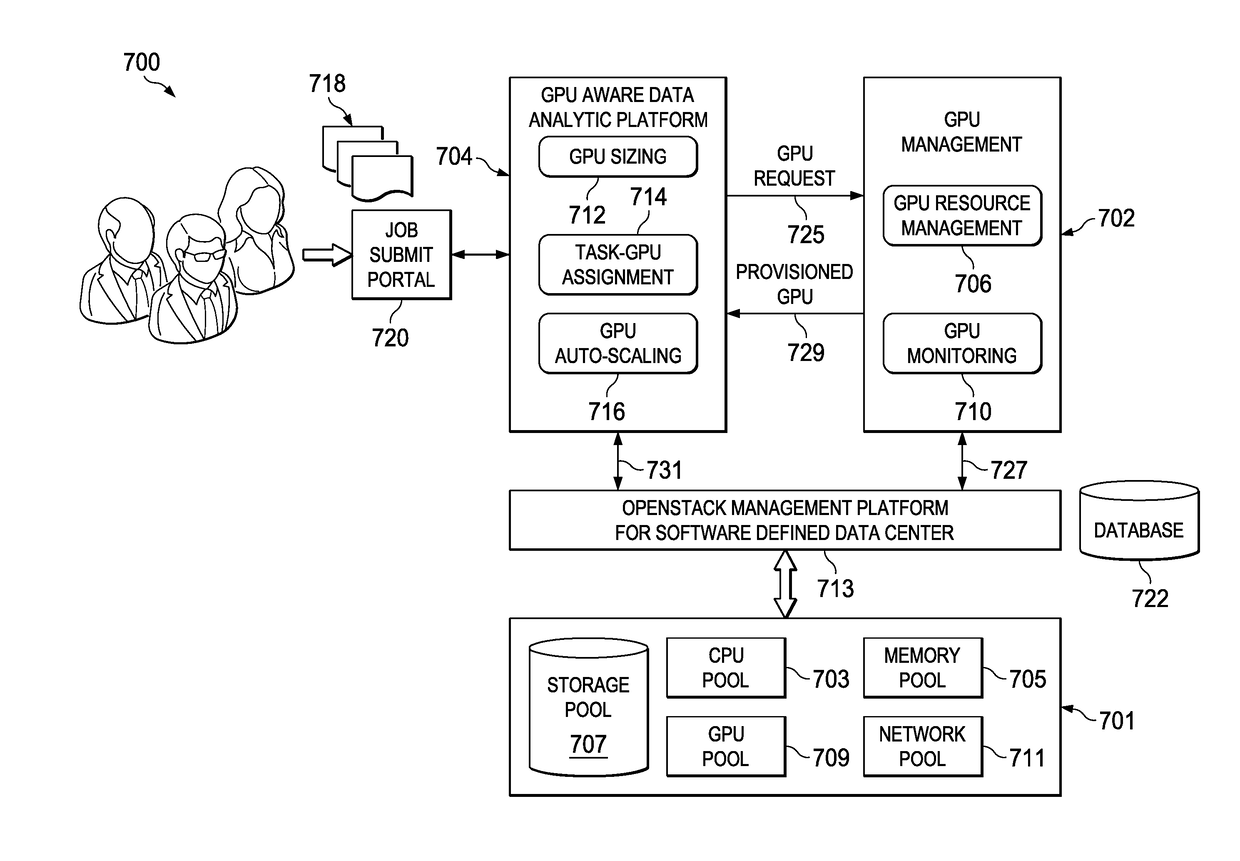

Dynamically provisioning and scaling graphic processing units for data analytic workloads in a hardware cloud

Active | US20170293994A1 | Improves GPU utilization | Fine granularity and agile | Processor architectures/configuration | Program control | Resource pool | Graphics

Server resources in a data center are disaggregated into shared server resource pools, including a graphics processing unit (GPU) pool. Servers are constructed dynamically, on-demand and based on workload requirements, by allocating from these resource pools. According to this disclosure, GPU utilization in the data center is managed proactively by assigning GPUs to workloads in a fine-grained, agile way, and de-provisioning them when no longer needed. The approach is especially advantageous for automatically provisioning GPUs for data analytic workloads. It thus provides a “micro-service” enabling data analytic workloads to automatically and transparently use GPU resources without exposing the underlying provisioning details (e.g., to the data center customer). Preferably, the approach dynamically determines the number and the type of GPUs to use, and then during runtime auto-scales the GPUs based on workload.

Owner: IBM CORP

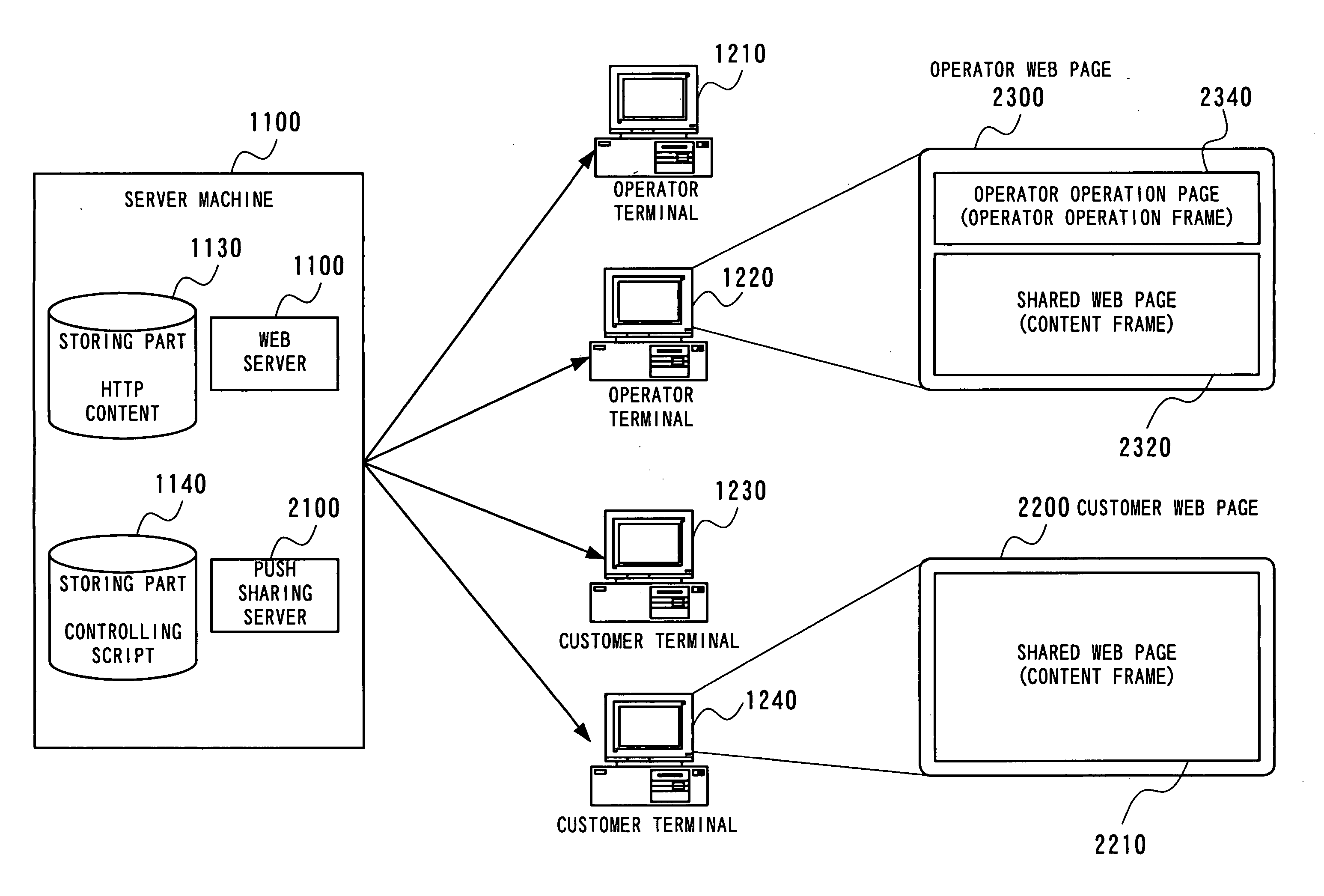

Real-time web sharing system

Active | US20060015763A1 | Error detection/correction | Multiple digital computer combinations | Computer hardware | Web page

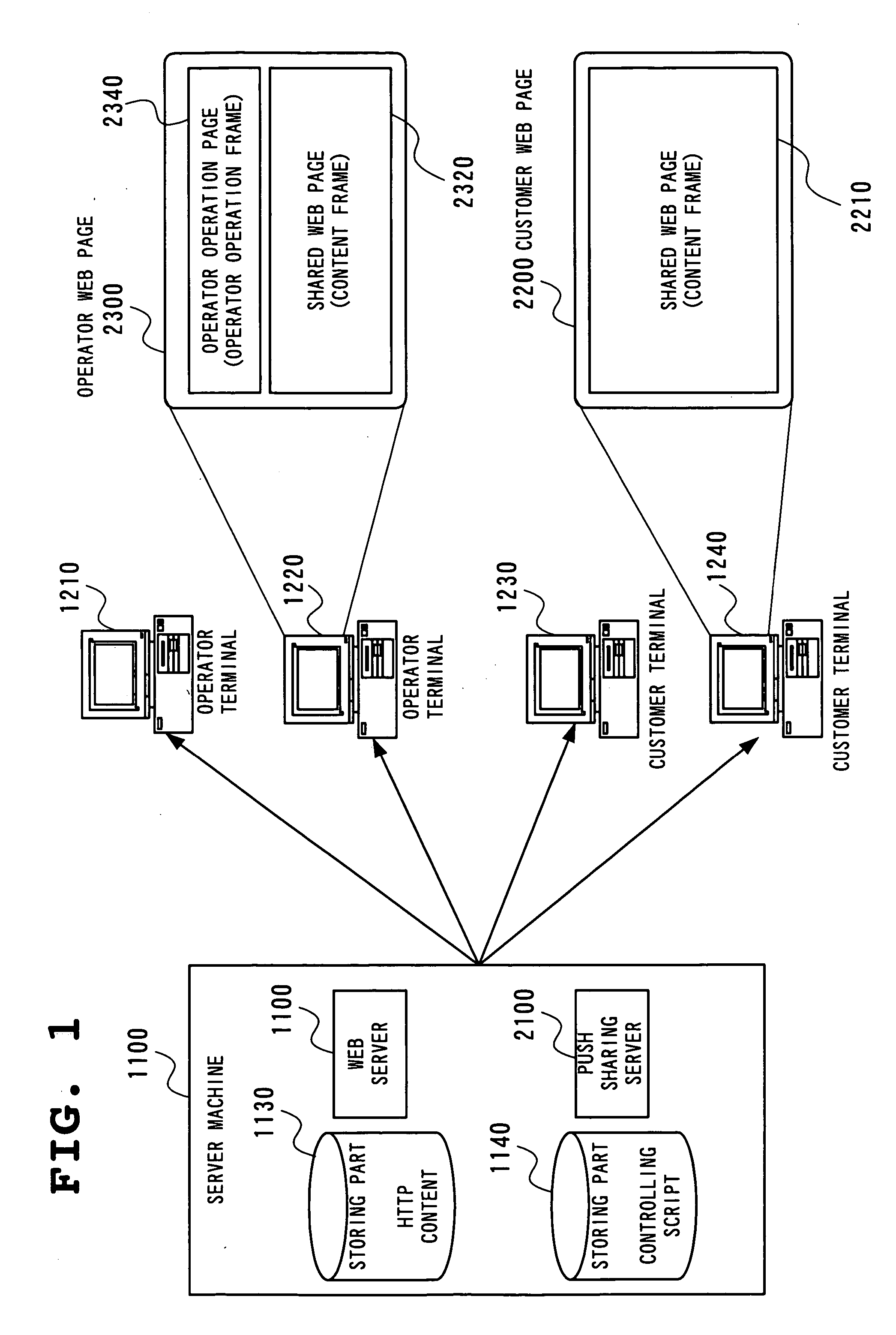

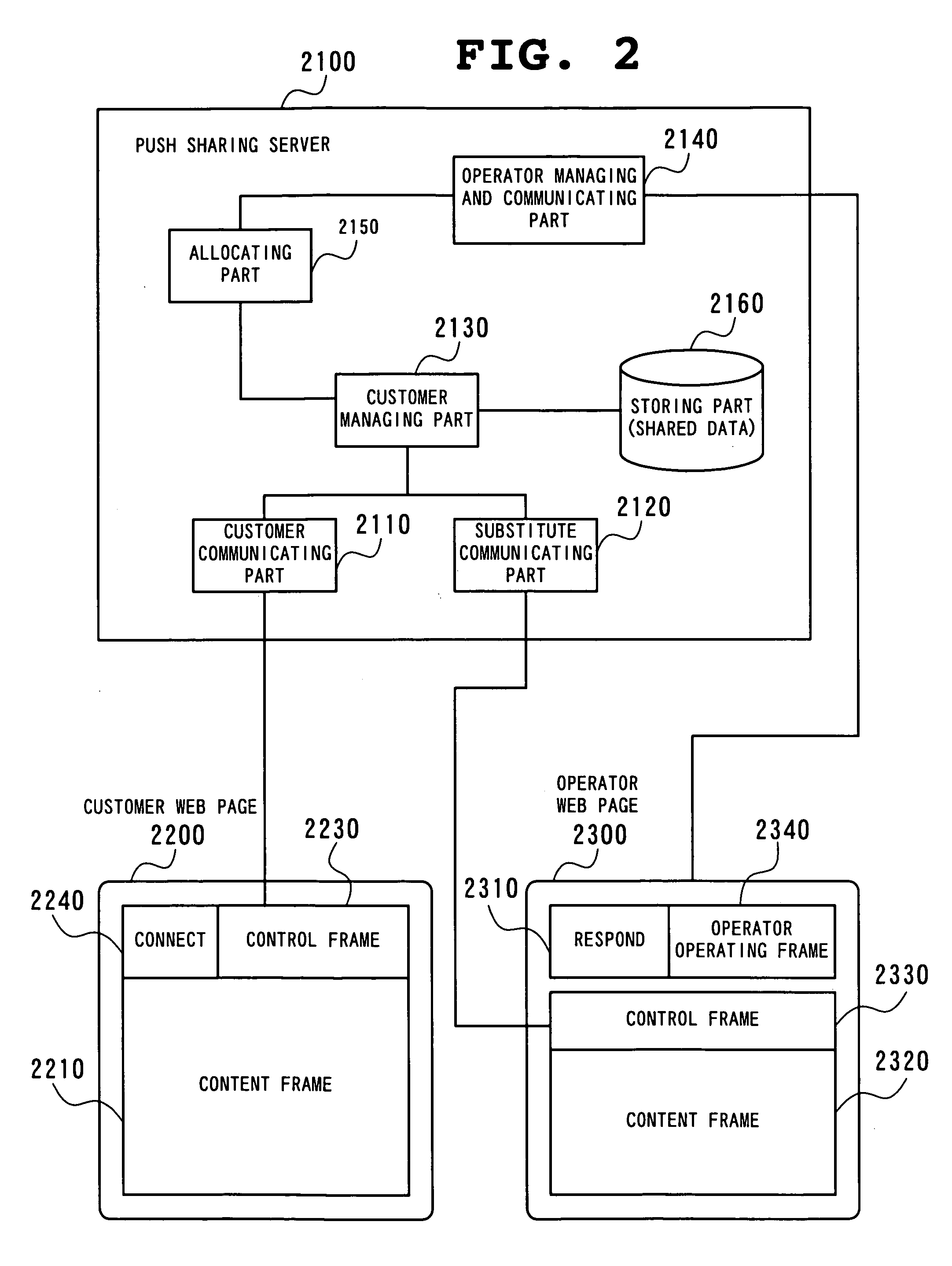

When the customer presses the Connect button (2240) on the customer terminal (1230), a connection request is sent to an operator terminal (1210) via the push sharing server (2100). On receiving this notification, the operator terminal (1210) changes the Respond button (2310) to the Incoming button. When the operator presses the Respond button (2310) on the operator web page (2300), the push sharing server (2100) transmits a difference notification command to the operator terminal (1210), and the operator terminal displays the same web page as the one shown on the customer terminal (1230).

Owner: ASTRAZEMECA +1
