36 results about "Corresponding conditional" patented technology

In logic, the corresponding conditional of an argument (or derivation) is a material conditional whose antecedent is the conjunction of the argument's (or derivation's) premises and whose consequent is the argument's conclusion. An argument is valid if and only if its corresponding conditional is a logical truth. It follows that an argument is valid if and only if the negation of its corresponding conditional is a contradiction. Therefore, the construction of a corresponding conditional provides a useful technique for determining the validity of an argument.
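
The validity test described above can be sketched with a brute-force truth table. This is a minimal illustration for propositional arguments only; the helper names (`implies`, `corresponding_conditional`, `is_logical_truth`, `is_valid`) are hypothetical and chosen for this example.

```python
from itertools import product


def implies(p, q):
    """Material conditional: false only when p is true and q is false."""
    return (not p) or q


def corresponding_conditional(premises, conclusion):
    """Build (premise_1 AND ... AND premise_n) -> conclusion as a function of an assignment."""
    def conditional(env):
        return implies(all(p(env) for p in premises), conclusion(env))
    return conditional


def is_logical_truth(formula, variables):
    """A formula is a logical truth if it holds under every truth assignment."""
    return all(
        formula(dict(zip(variables, values)))
        for values in product([False, True], repeat=len(variables))
    )


def is_valid(premises, conclusion, variables):
    """An argument is valid iff its corresponding conditional is a logical truth."""
    return is_logical_truth(corresponding_conditional(premises, conclusion), variables)


# Modus ponens: p -> q, p, therefore q.
modus_ponens = is_valid(
    [lambda e: implies(e["p"], e["q"]), lambda e: e["p"]],
    lambda e: e["q"],
    ["p", "q"],
)

# Affirming the consequent: p -> q, q, therefore p.
affirming_consequent = is_valid(
    [lambda e: implies(e["p"], e["q"]), lambda e: e["q"]],
    lambda e: e["p"],
    ["p", "q"],
)
```

Here `modus_ponens` evaluates to `True`, while `affirming_consequent` evaluates to `False` because the assignment p = false, q = true makes both premises true and the conclusion false.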

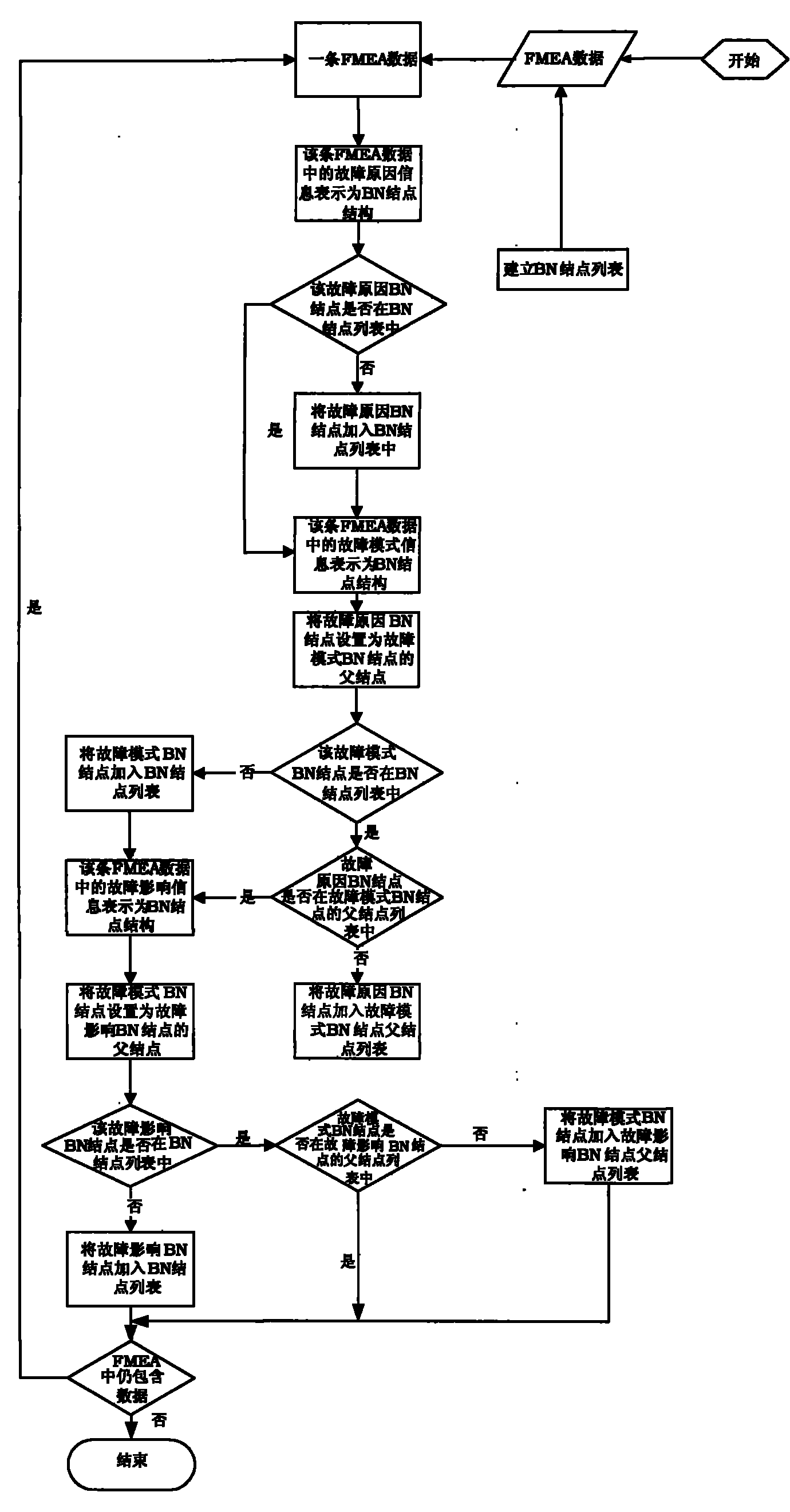

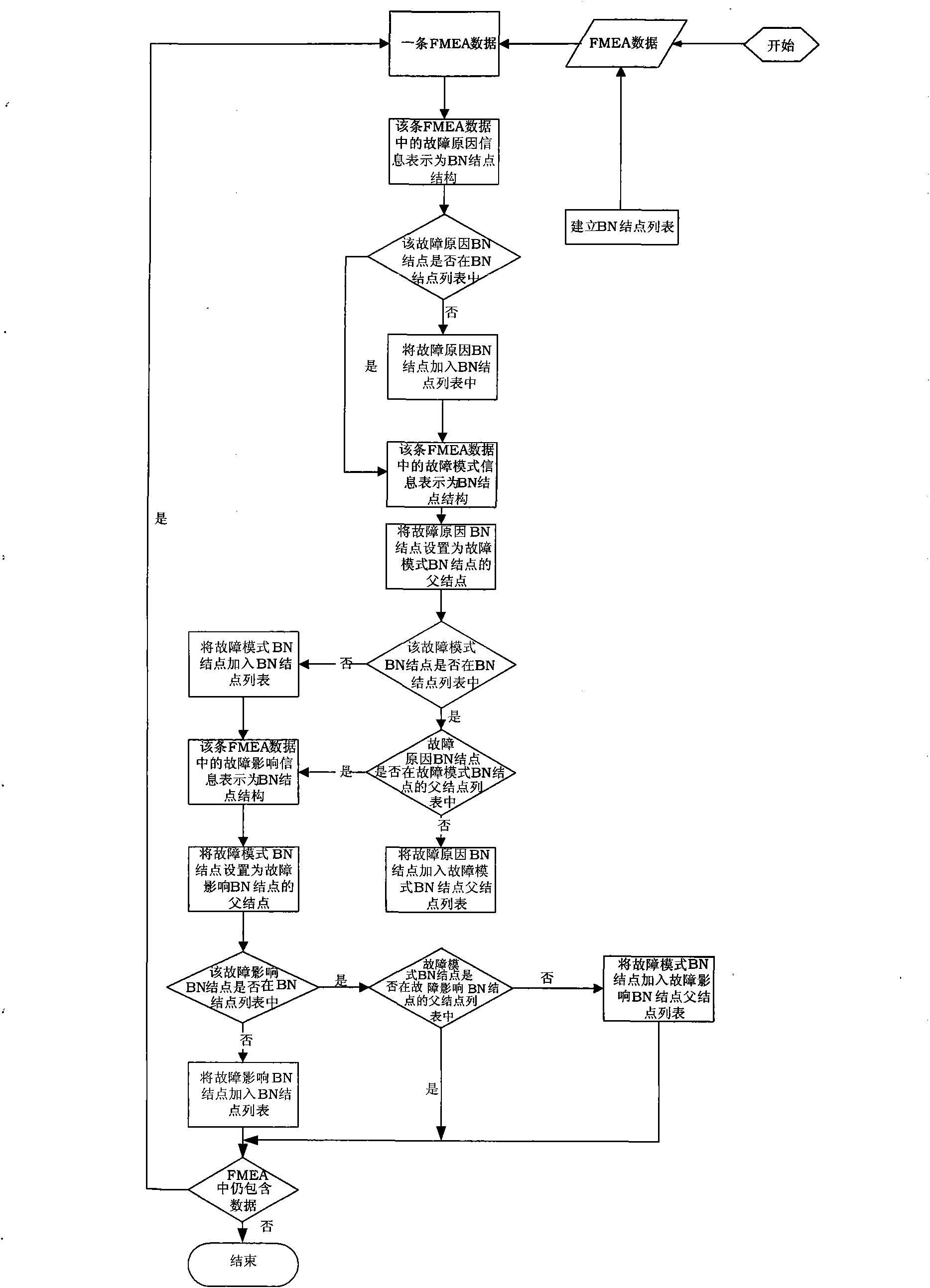

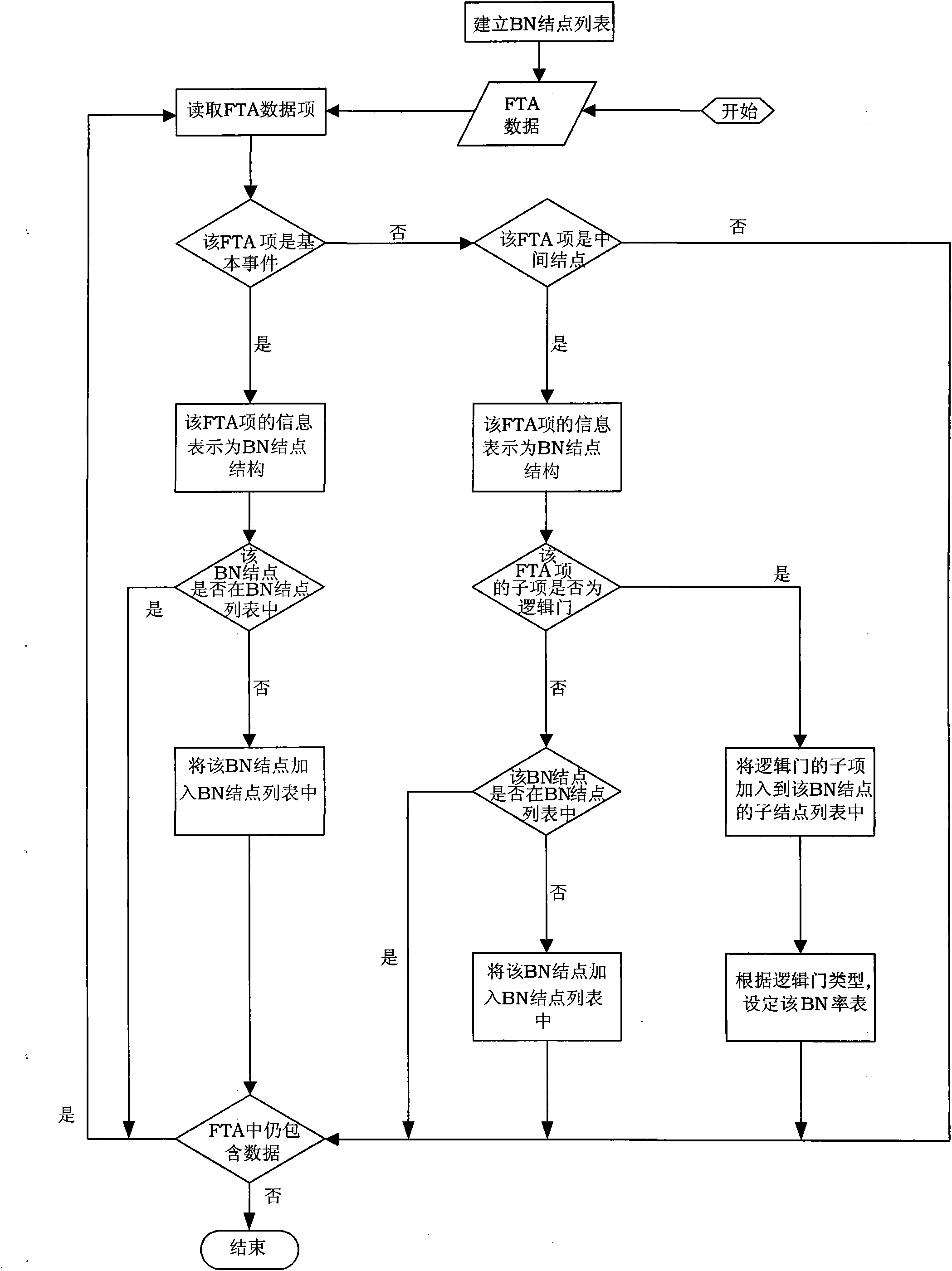

Method for performing fault diagnosis by using model conversion

Active | CN101814114A | Easy to use | Improve diagnostic accuracy | Mathematical models | Special data processing applications | Corresponding conditional | Logic gate

The invention discloses a method for performing fault diagnosis by using model conversion. In the method, the processed information of a fault mode effect analysis model is converted into a corresponding Bayesian network model by using a self-defined data structure while keeping the data complete; the elementary events, logic gates and intermediate events of a fault tree in a fault tree analysis model are each converted into nodes in a Bayesian network; and the corresponding conditional probability tables in the Bayesian network are set. Fault diagnosis is then performed with the converted Bayesian network model. The method expands the use of Bayesian network models in fault diagnosis, improves the diagnostic accuracy of a fault diagnosis model in practical application, ensures the universality of model conversion, and enables cross-tool conversion among fault mode effect analysis results, fault tree analysis results and the generated Bayesian network.

Owner:BEIHANG UNIV
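
As a rough illustration of the gate-to-node conversion mentioned in the abstract above, the sketch below builds a deterministic conditional probability table for an AND or OR gate and marginalizes over its parents. It is not the patented procedure: the function names are hypothetical, and the basic events are assumed to be independent with known prior failure probabilities.

```python
from itertools import product


def gate_cpt(gate, n_inputs):
    """Deterministic CPT: maps each tuple of parent states to P(gate output = 1)."""
    if gate not in ("AND", "OR"):
        raise ValueError(f"unsupported gate: {gate}")
    combine = all if gate == "AND" else any
    return {
        states: 1.0 if combine(states) else 0.0
        for states in product((0, 1), repeat=n_inputs)
    }


def gate_output_probability(gate, priors):
    """Marginal P(gate output = 1), assuming independent parents (an assumption of this sketch)."""
    cpt = gate_cpt(gate, len(priors))
    total = 0.0
    for states, p_output in cpt.items():
        weight = 1.0
        for state, prior in zip(states, priors):
            weight *= prior if state else 1.0 - prior
        total += weight * p_output
    return total


# Two basic events with illustrative failure probabilities 0.1 and 0.2.
p_or = gate_output_probability("OR", [0.1, 0.2])    # 1 - 0.9 * 0.8
p_and = gate_output_probability("AND", [0.1, 0.2])  # 0.1 * 0.2
```

A real conversion would also carry intermediate events and gate types other than AND and OR, which this sketch leaves out.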

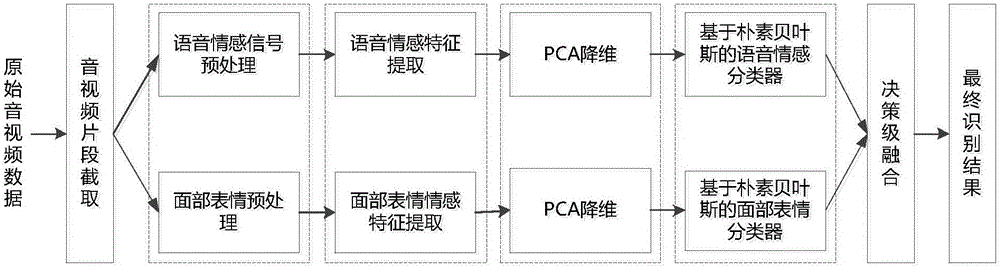

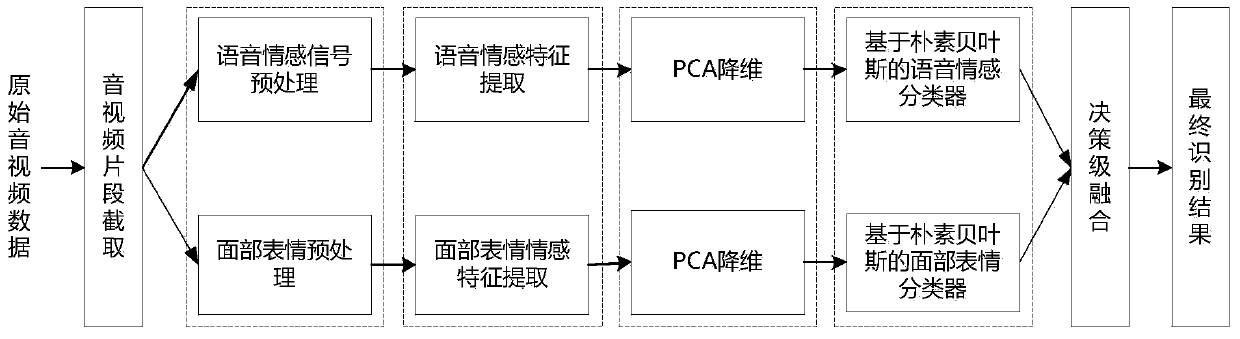

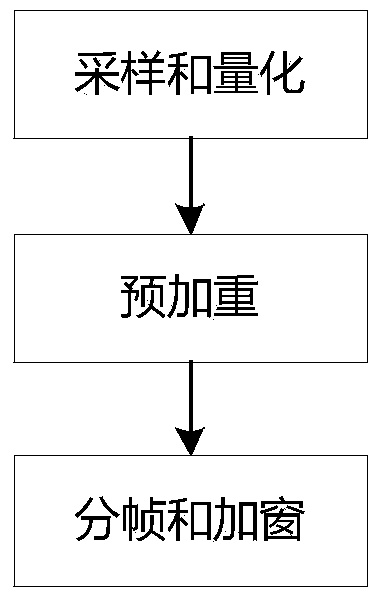

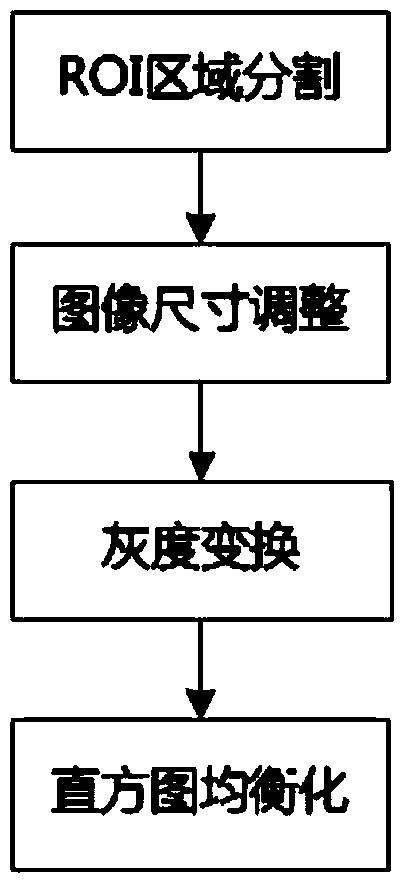

Voice-and-facial-expression-based identification method and system for dual-modal emotion fusion

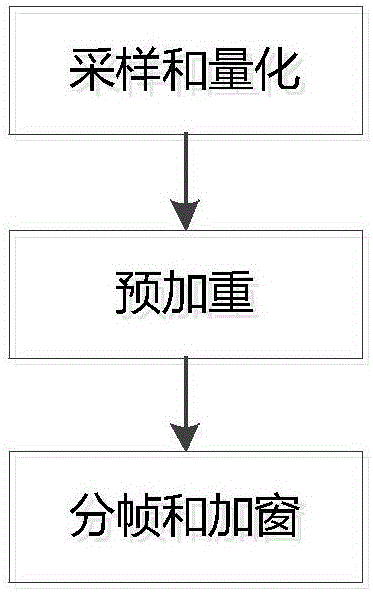

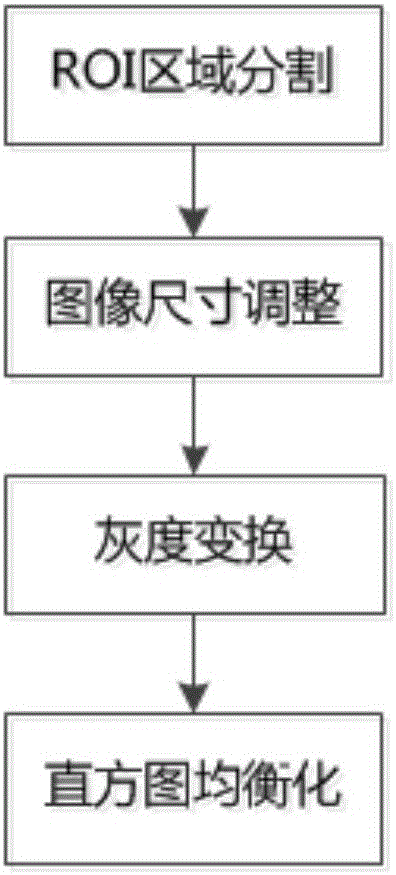

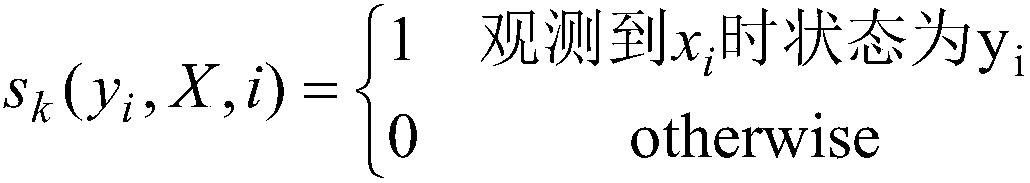

Active | CN105976809A | Improve accuracy | Improve reliability | Speech recognition | Corresponding conditional | Dimensionality reduction

The invention relates to a voice-and-facial-expression-based identification method for dual-modal emotion fusion. The method comprises: S1, audio data and video data of a to-be-identified object are obtained; S2, a face expression image is extracted from the video data and segmentation of an eye region, a nose region, and a mouth region is carried out; S3, a facial expression feature in each regional image is extracted from images of the three regions; S4, PCA analysis and dimensionality reduction is carried out on voice emotion features and the facial expression features; and S5, naive Bayesian emotion voice classification is carried out on samples of two kinds of modes and decision fusion is carried out on a conditional probability to obtain a final emotion identification result. According to the invention, fusion of the voice emotion features and the facial expression features is carried out by using a decision fusion method, so that accurate data can be provided for corresponding conditional probability calculation carried out at the next step; and an emotion state of a detected object can be obtained precisely by using the method, so that accuracy and reliability of emotion identification can be improved.

Owner:CHINA UNIV OF GEOSCIENCES (WUHAN)
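
The abstract above does not spell out the fusion rule, so the following is only one plausible reading: each modality's classifier yields a probability per emotion class, and the two are combined with a normalized product rule. The function name `fuse_decisions` and the example probabilities are assumptions made for illustration.

```python
def fuse_decisions(voice_probs, face_probs):
    """Combine per-class probabilities from two modalities with a normalized product rule.

    The product rule is an assumption of this sketch, not the rule stated in the patent.
    """
    labels = voice_probs.keys() & face_probs.keys()
    scores = {label: voice_probs[label] * face_probs[label] for label in labels}
    total = sum(scores.values())
    if total == 0:
        raise ValueError("modalities assign zero joint probability to every class")
    fused = {label: score / total for label, score in scores.items()}
    best = max(fused, key=fused.get)
    return best, fused


# Illustrative classifier outputs for one sample.
voice = {"happy": 0.6, "sad": 0.4}
face = {"happy": 0.3, "sad": 0.7}
label, fused = fuse_decisions(voice, face)
```

With these inputs the fused decision is `"sad"`, since 0.4 × 0.7 exceeds 0.6 × 0.3 before normalization.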

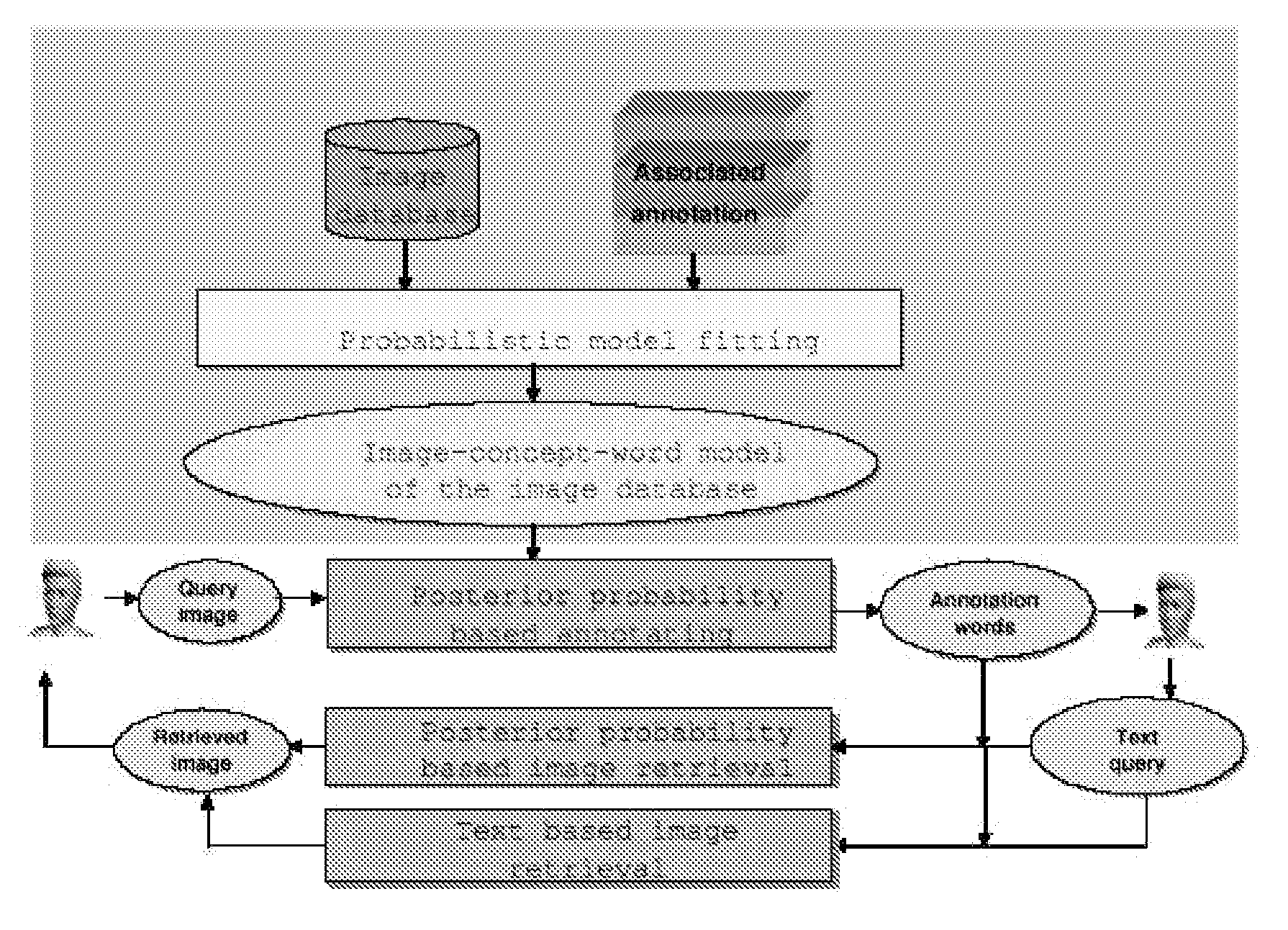

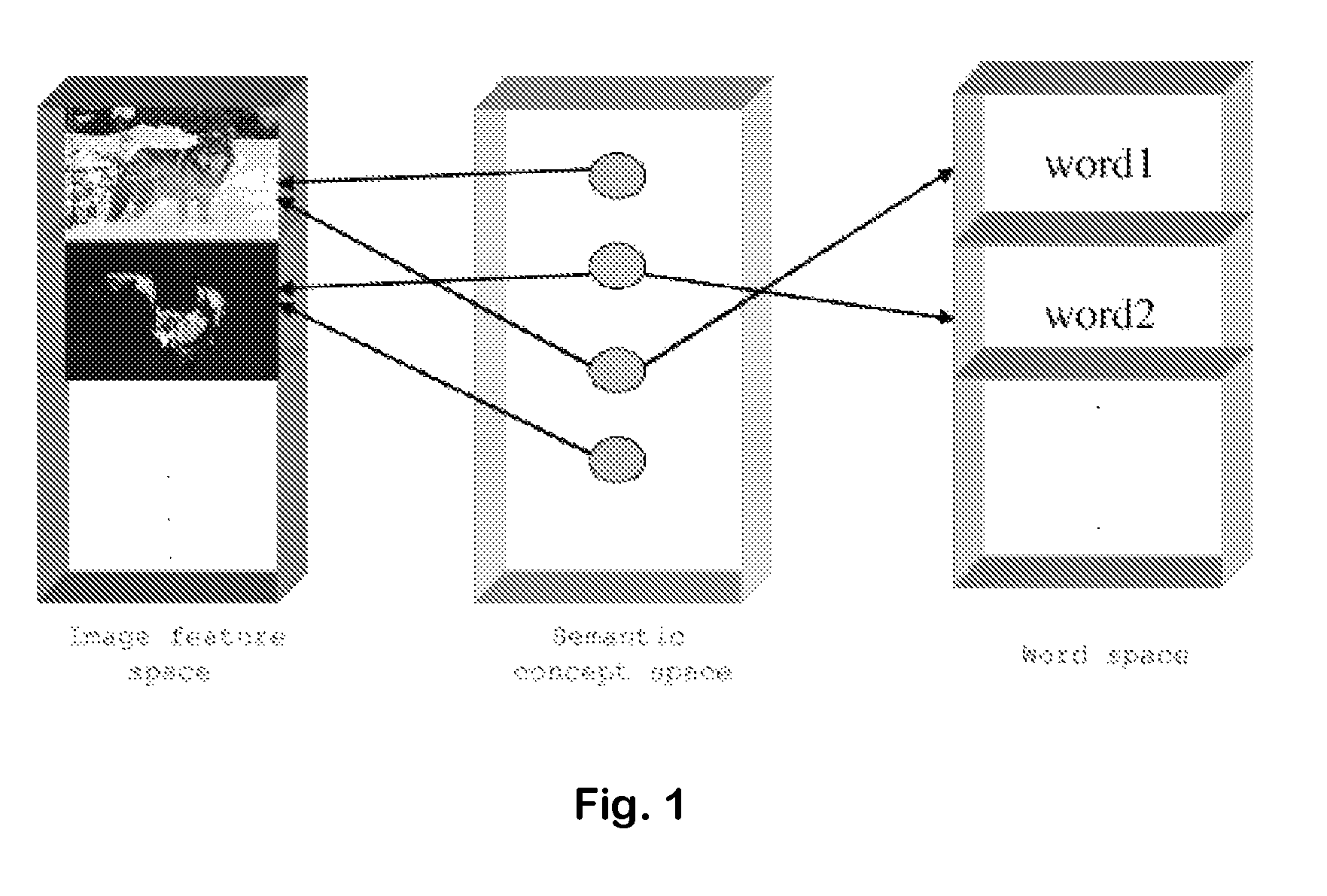

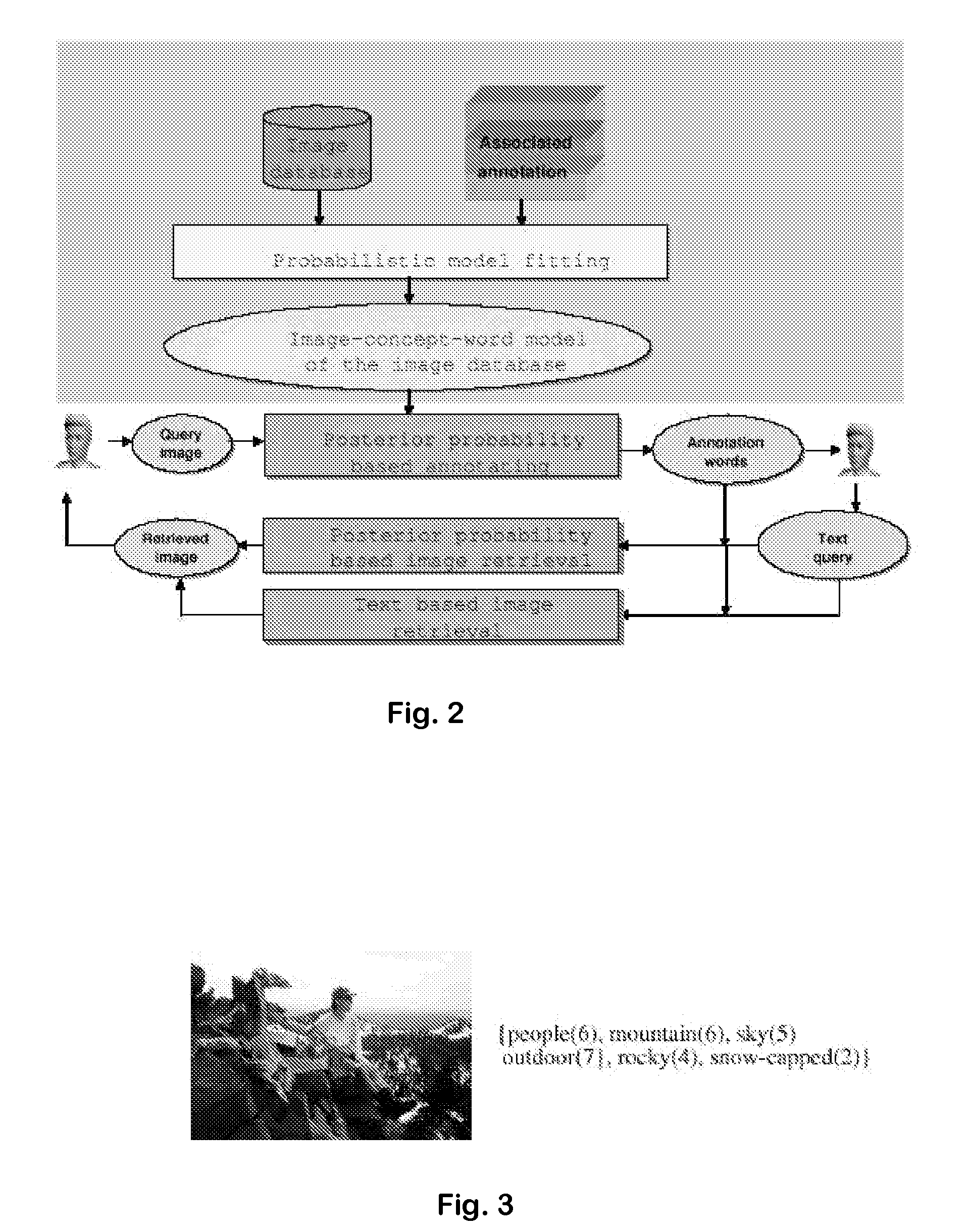

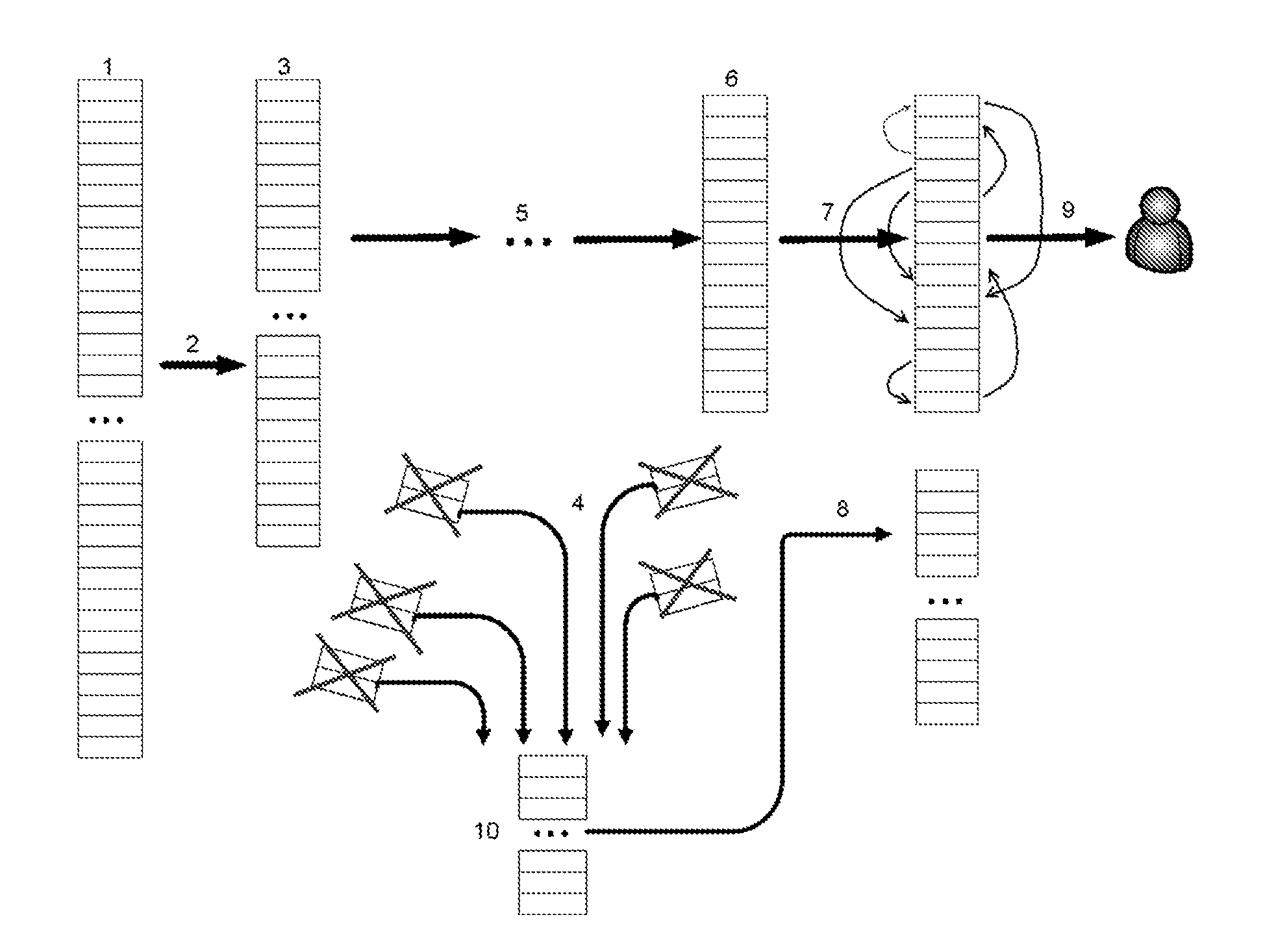

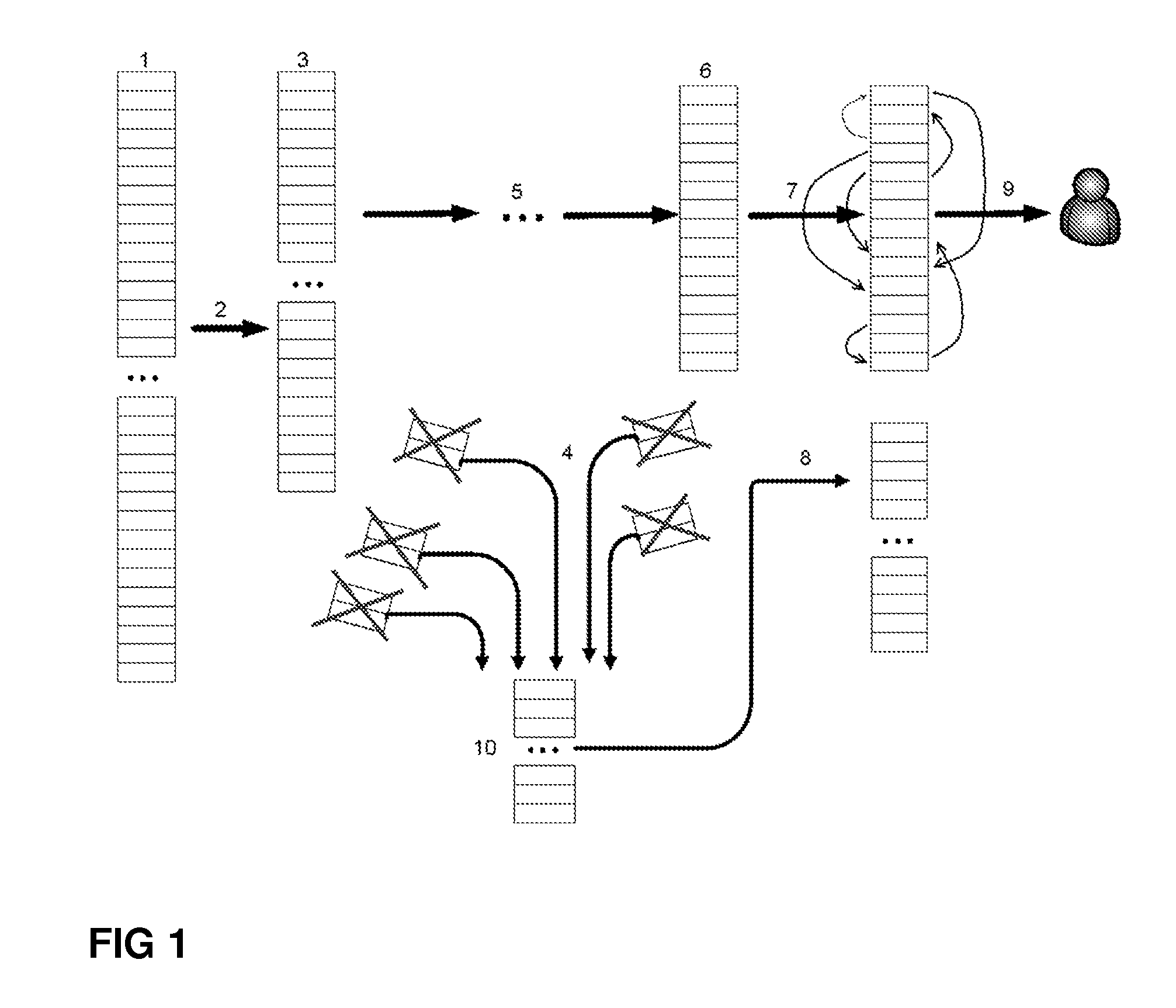

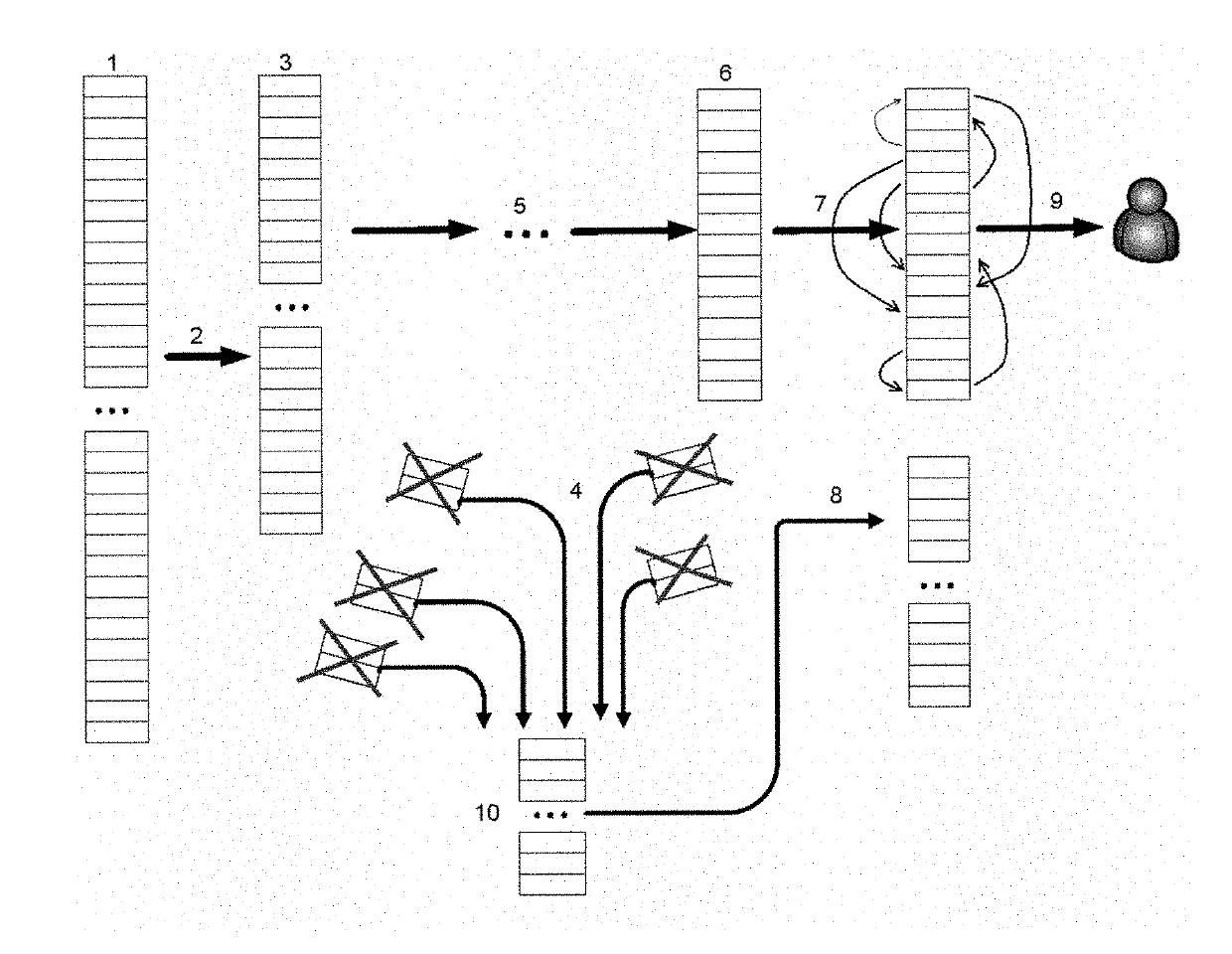

System and method for image annotation and multi-modal image retrieval using probabilistic semantic models

Active | US7814040B1 | Easy to annotate and retrieve | Evaluation less | Mathematical models | Digital data information retrieval | Algorithm | Corresponding conditional

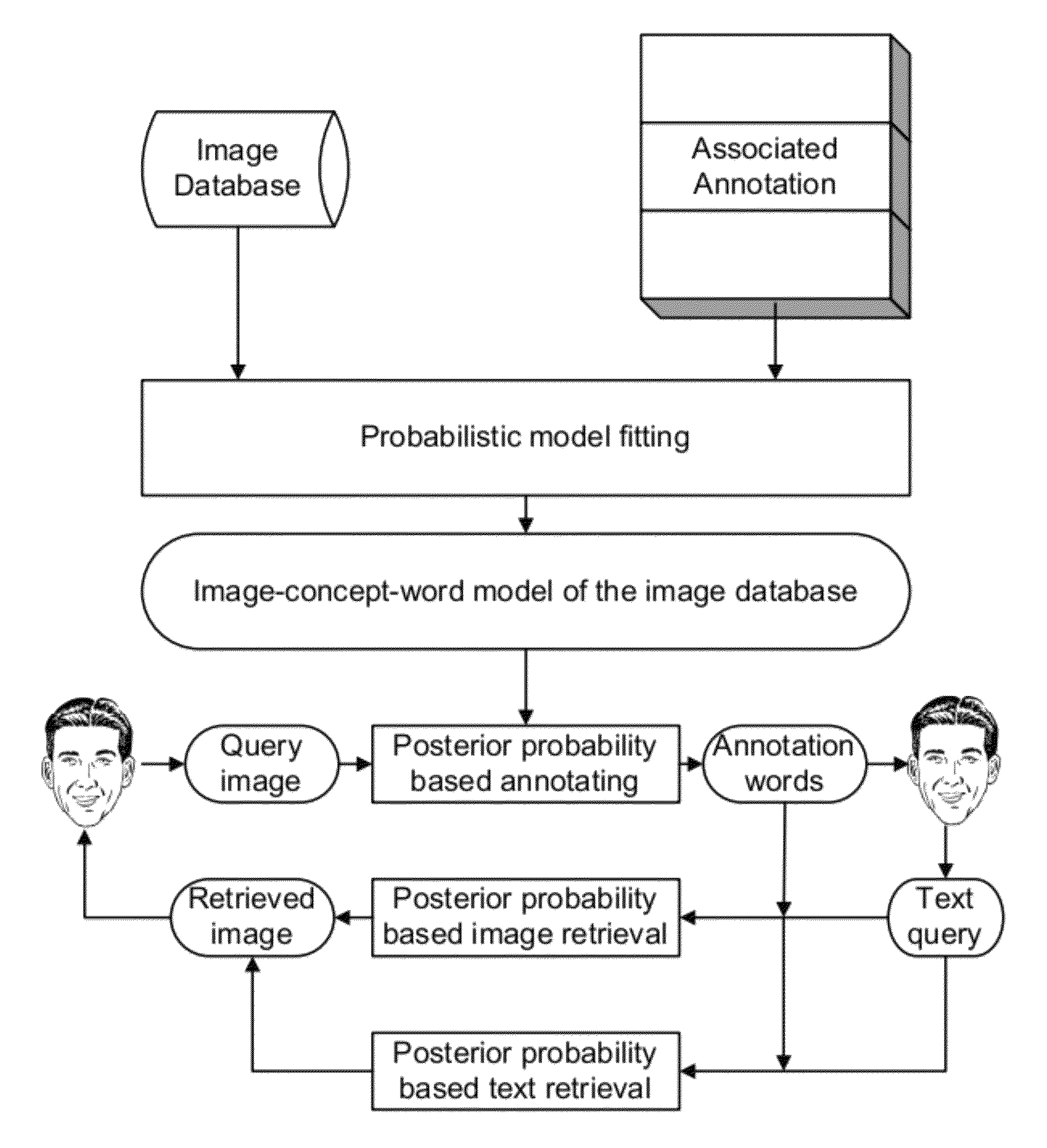

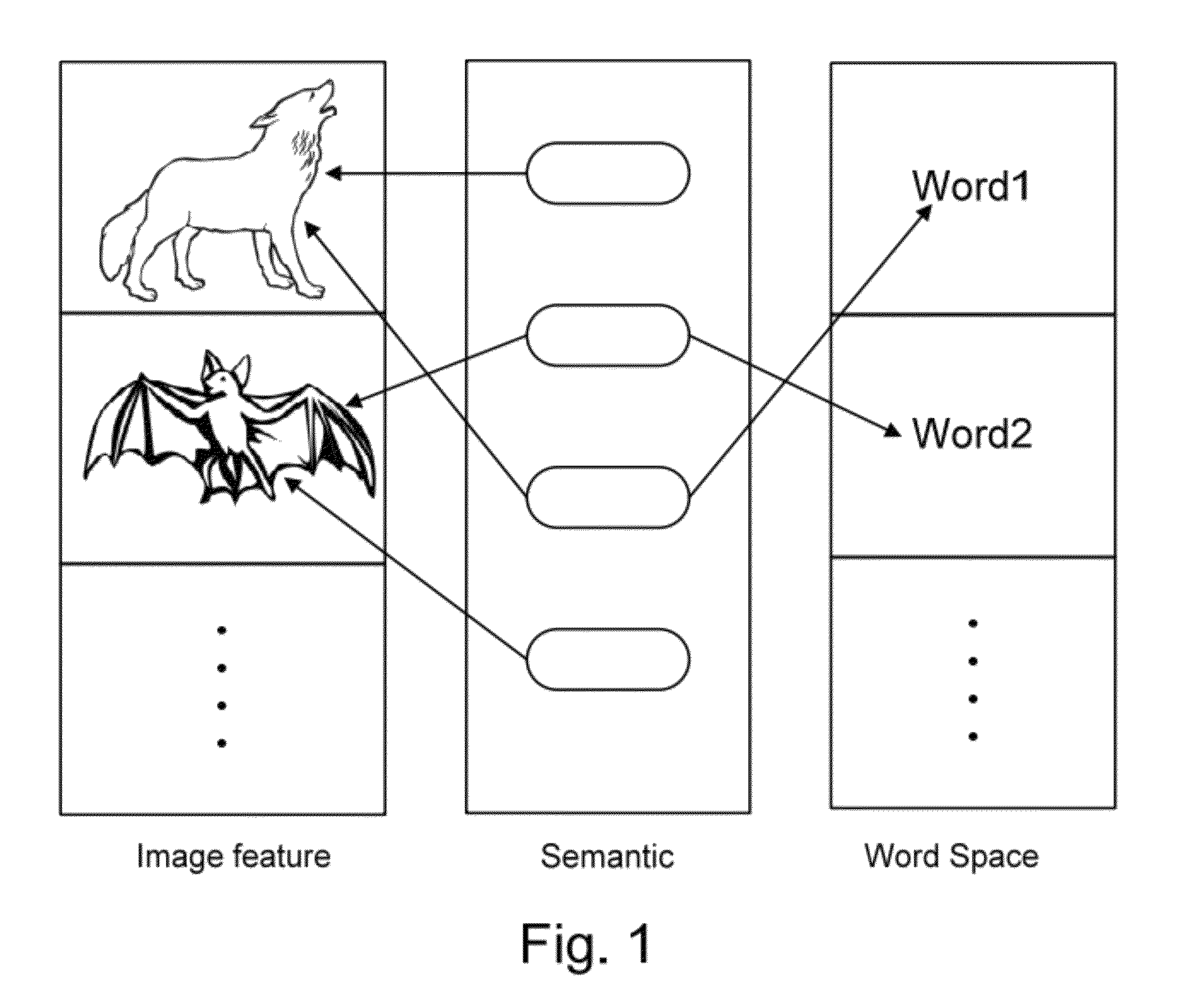

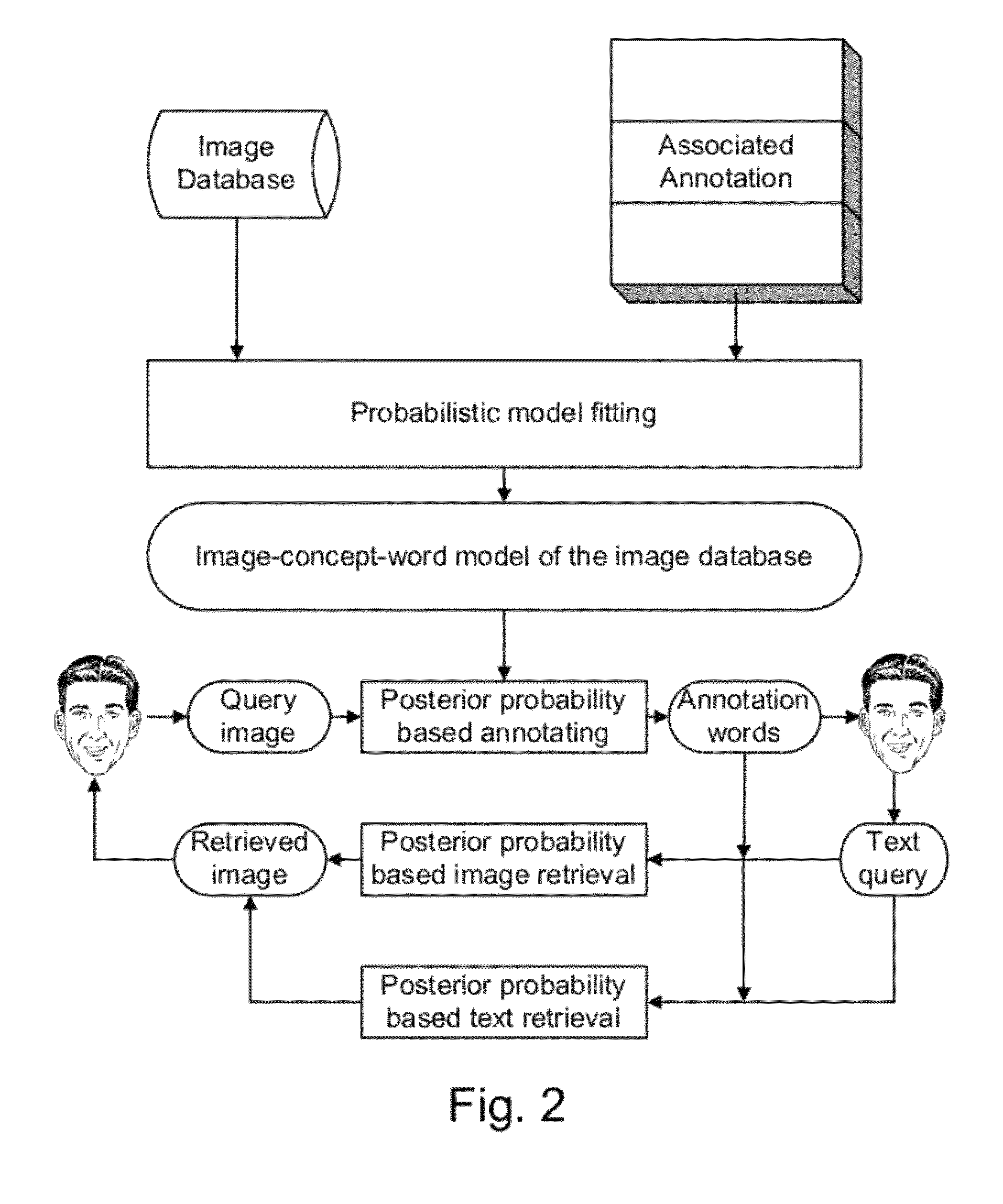

Systems and Methods for multi-modal or multimedia image retrieval are provided. Automatic image annotation is achieved based on a probabilistic semantic model in which visual features and textual words are connected via a hidden layer comprising the semantic concepts to be discovered, to explicitly exploit the synergy between the two modalities. The association of visual features and textual words is determined in a Bayesian framework to provide confidence of the association. A hidden concept layer which connects the visual feature(s) and the words is discovered by fitting a generative model to the training image and annotation words. An Expectation-Maximization (EM) based iterative learning procedure determines the conditional probabilities of the visual features and the textual words given a hidden concept class. Based on the discovered hidden concept layer and the corresponding conditional probabilities, the image annotation and the text-to-image retrieval are performed using the Bayesian framework.

Owner:THE RES FOUND OF STATE UNIV OF NEW YORK

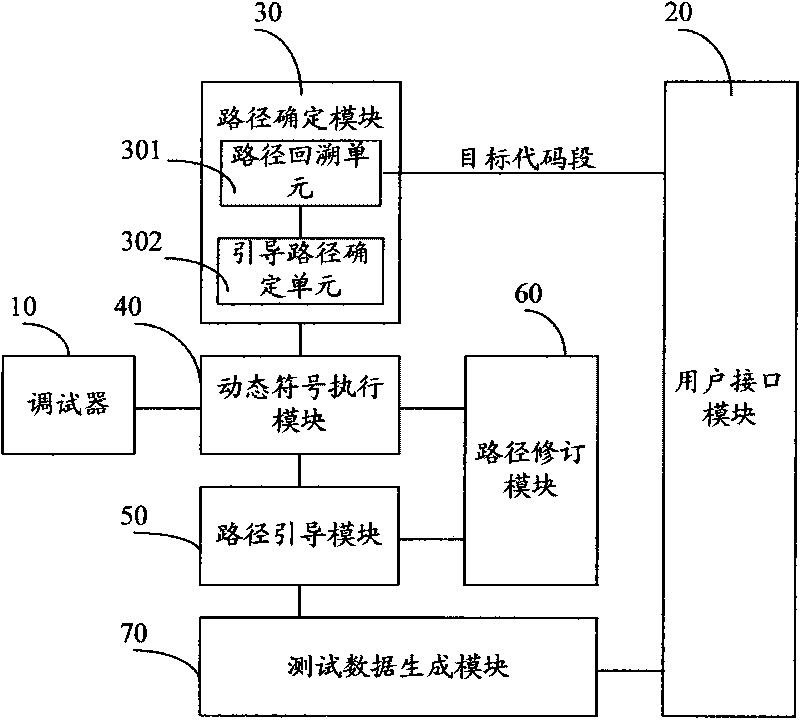

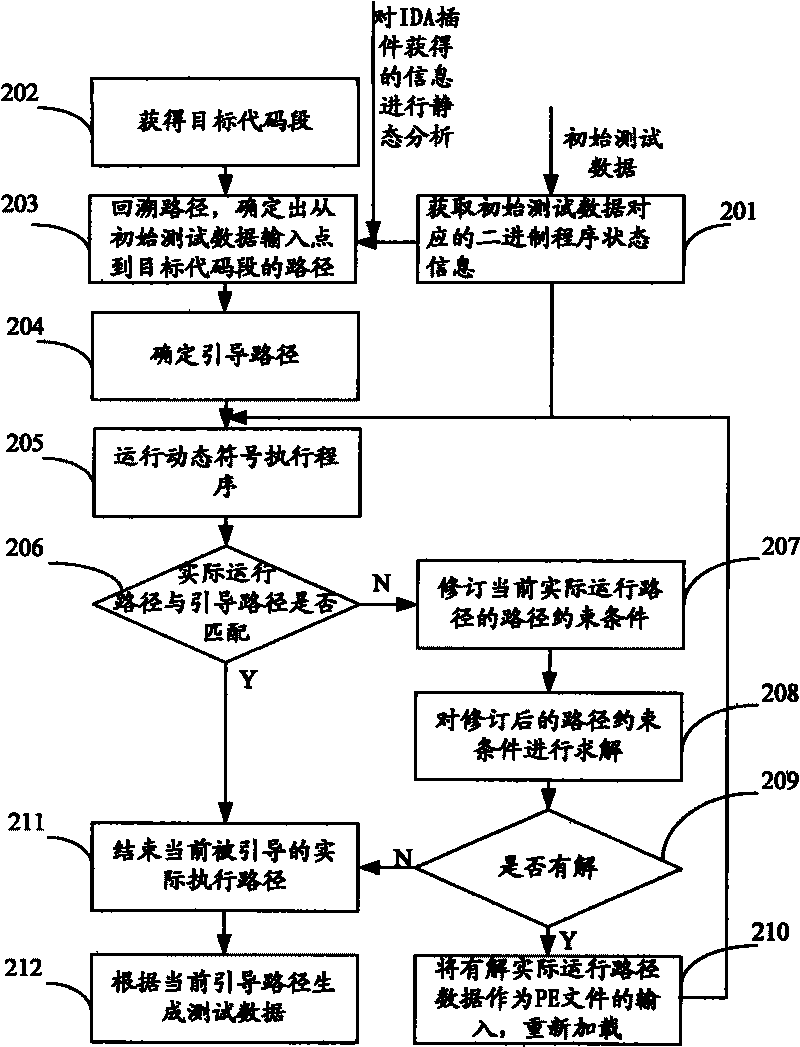

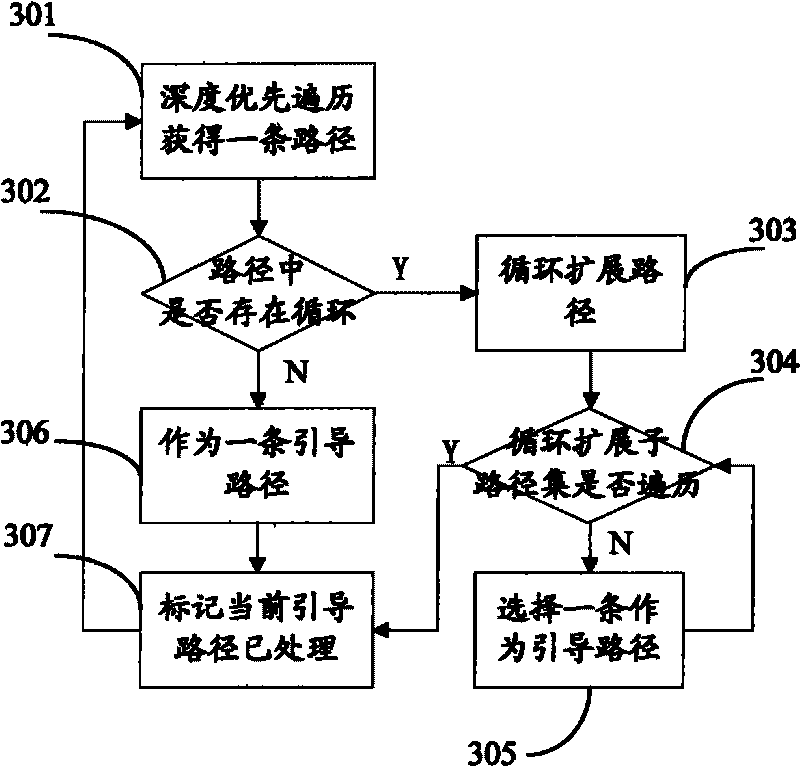

Test data generating device and method based on binary program

Inactive | CN101714119A | Improve effectiveness | Improve accuracy | Software testing/debugging | Corresponding conditional | Sensitivity analyses

The invention relates to test data generating device and method based on a binary program. The test data generating method mainly comprises the following steps of: operating a dynamic symbol executing program for the state information of the binary program which corresponds to a guide path and initial test data; obtaining corresponding conditional jump address information according to an operating result; matching an actual operation path which corresponds to the initial test data with the guide path on the basis of the obtained conditional jump address information; and generating the test data which corresponds to the actual operation path and is matched with the guide path. The invention combines the advantages of static analysis and dynamic analysis and can enhance the availability and the accuracy of symbolic execution and the accuracy degree of the generated test data and generate the test data used for carrying out path sensitivity analysis on a key code segment, thereby effectively mitigating the problem of path explosion in the symbolic execution.

Owner:BEIJING UNIV OF POSTS & TELECOMM

System and method for image annotation and multi-modal image retrieval using probabilistic semantic models comprising at least one joint probability distribution

Active | US8204842B1 | Easy to annotate and retrieve | Evaluation less | Mathematical models | Digital data information retrieval | Hidden layer | Probabilistic semantics

Systems and Methods for multi-modal or multimedia image retrieval are provided. Automatic image annotation is achieved based on a probabilistic semantic model in which visual features and textual words are connected via a hidden layer comprising the semantic concepts to be discovered, to explicitly exploit the synergy between the two modalities. The association of visual features and textual words is determined in a Bayesian framework to provide confidence of the association. A hidden concept layer which connects the visual feature(s) and the words is discovered by fitting a generative model to the training image and annotation words. An Expectation-Maximization (EM) based iterative learning procedure determines the conditional probabilities of the visual features and the textual words given a hidden concept class. Based on the discovered hidden concept layer and the corresponding conditional probabilities, the image annotation and the text-to-image retrieval are performed using the Bayesian framework.

Owner:THE RES FOUND OF STATE UNIV OF NEW YORK

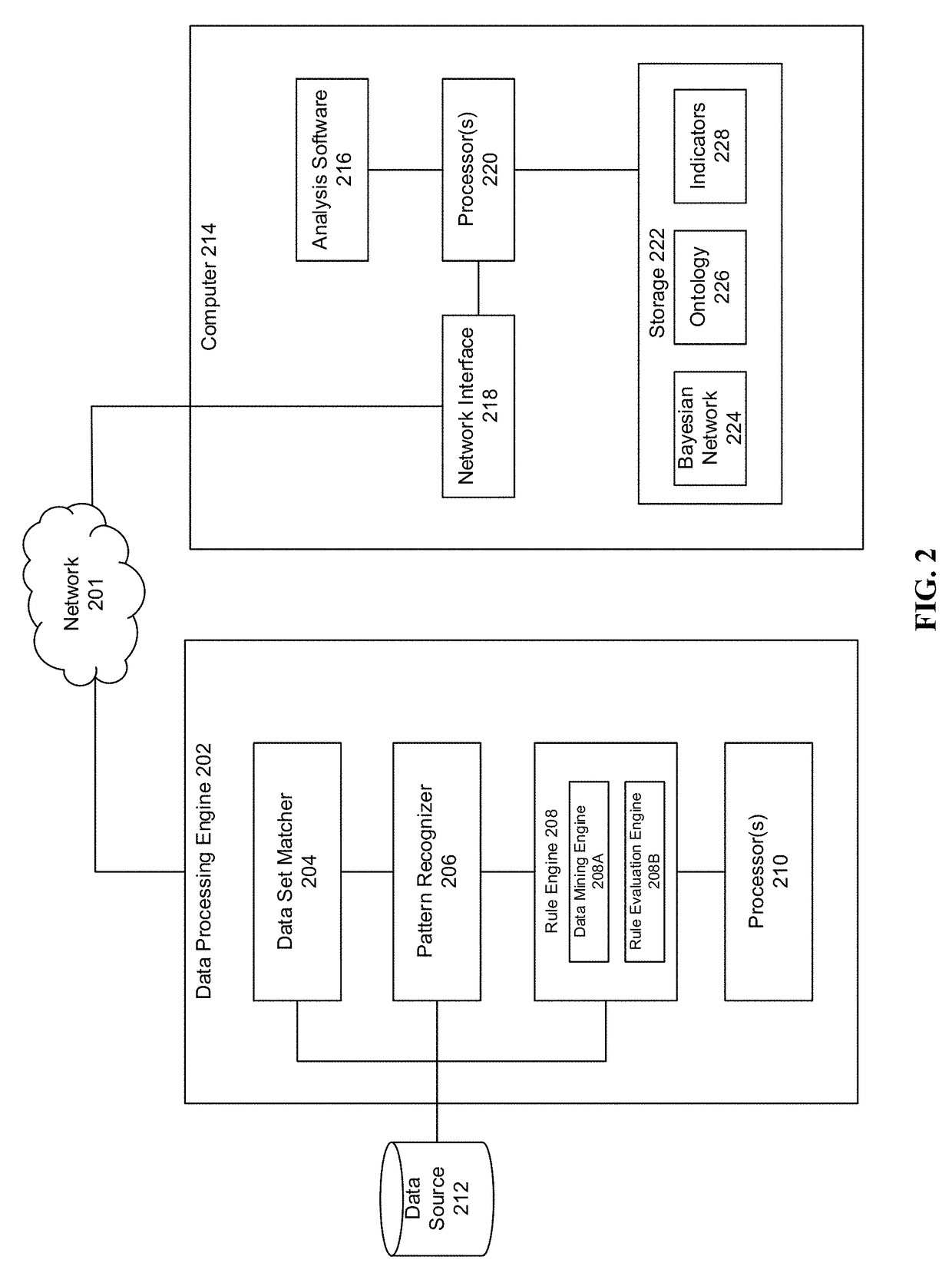

Root cause analysis in a communication network via probabilistic network structure

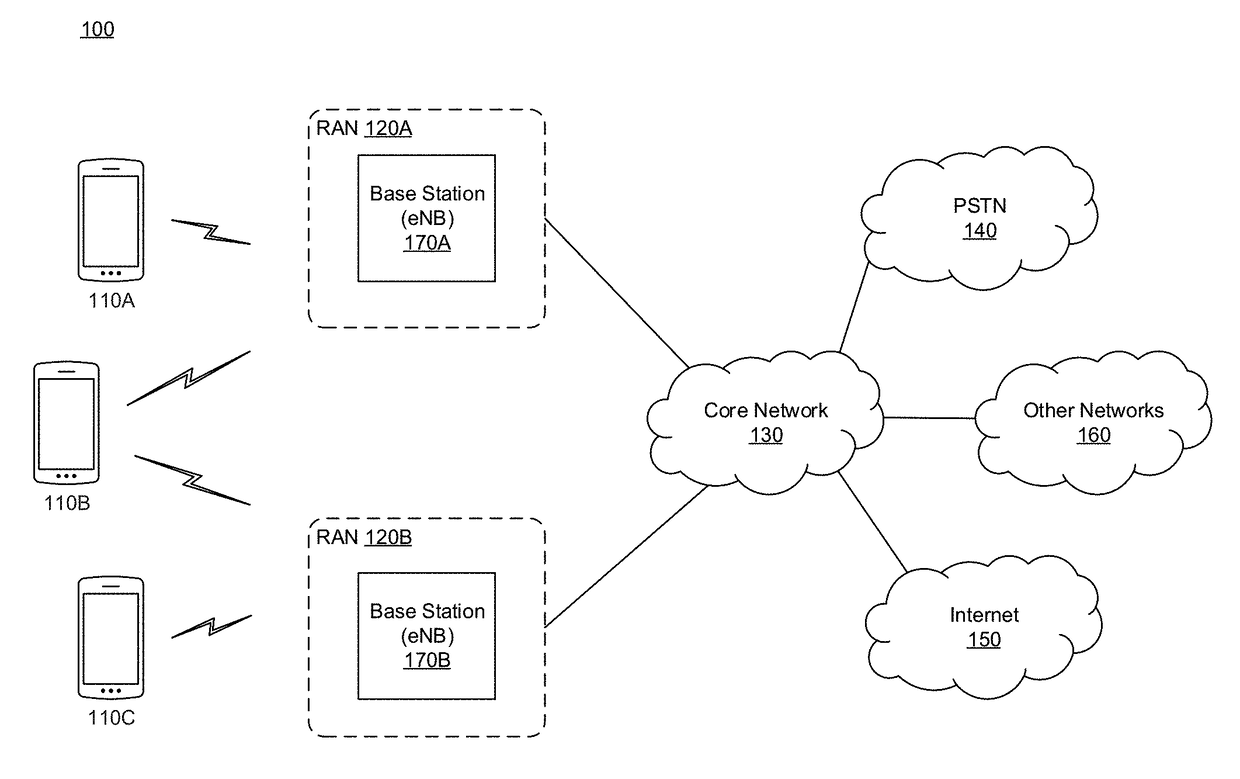

Inactive | US20170364819A1 | Well formed | Probabilistic networks | Knowledge representation | Corresponding conditional | Anomalous behavior

The disclosure relates to technology for determining a root cause of anomalous behaviors in networks. First indicators (KQIs) are categorized into first groups (states) and second indicators (KPIs) are categorized into second groups. A conditional probability is estimated by calculating a probability that the second indicators will result in degradation of the first indicators based on historical data using association rule learning. The second indicators having the conditional probability associated with degradation of the first indicators are mapped to a corresponding one of the first groups in a probabilistic network structure based on a detected degradation of the first indicators in the historical data. Then it is determined whether the second indicators mapped to the corresponding first groups satisfy a threshold when degradation of the first indicators is detected, and each of the second indicators resulting in degradation of the first indicator are ranked according to a corresponding conditional probability.

Owner:FUTUREWEI TECH INC
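
The estimation and ranking steps in the abstract above can be approximated as follows. This sketch treats the conditional probability as the confidence of the association rule "KPI anomalous implies KQI degraded", computed from boolean historical records. The record layout, field names and threshold value are hypothetical.

```python
def rank_kpis(records, kqi, kpis, threshold=0.5):
    """Rank KPIs by the estimated P(KQI degraded | KPI anomalous).

    Each record is a dict of booleans; this layout is an assumption of the sketch.
    """
    ranked = []
    for kpi in kpis:
        anomalous = [r for r in records if r[kpi]]
        if not anomalous:
            continue  # no evidence for this KPI in the history
        confidence = sum(1 for r in anomalous if r[kqi]) / len(anomalous)
        if confidence >= threshold:
            ranked.append((kpi, confidence))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Illustrative history: five observation windows.
history = [
    {"latency_high": True, "loss_high": False, "kqi_degraded": True},
    {"latency_high": True, "loss_high": True, "kqi_degraded": True},
    {"latency_high": True, "loss_high": False, "kqi_degraded": False},
    {"latency_high": False, "loss_high": True, "kqi_degraded": True},
    {"latency_high": False, "loss_high": False, "kqi_degraded": False},
]
ranking = rank_kpis(history, "kqi_degraded", ["latency_high", "loss_high"])
```

In this toy history `loss_high` ranks first with confidence 1.0 and `latency_high` second with confidence 2/3.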

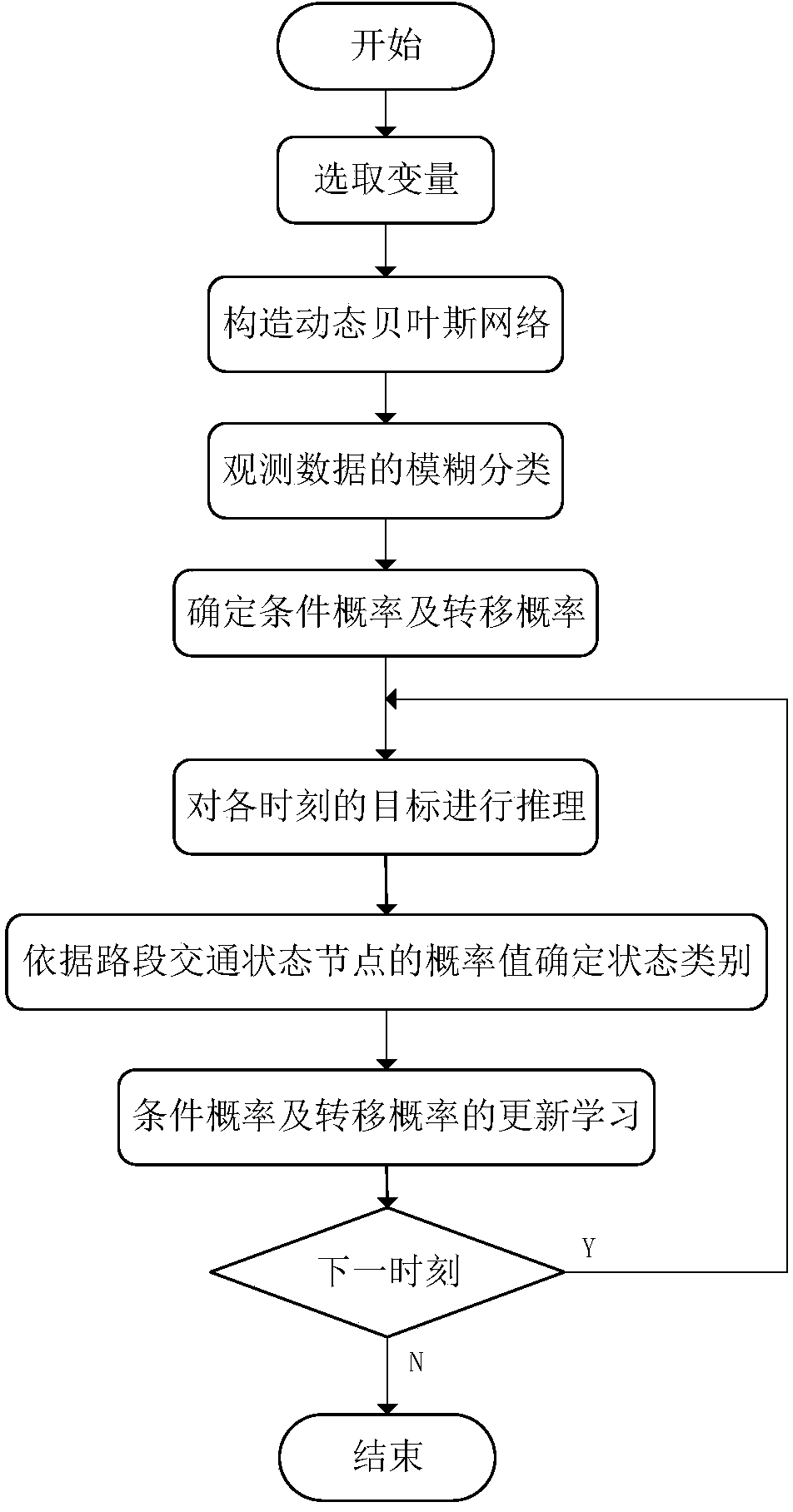

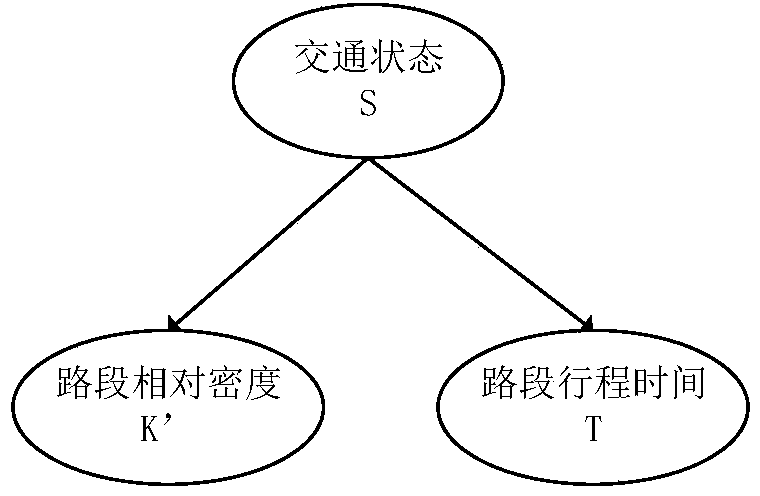

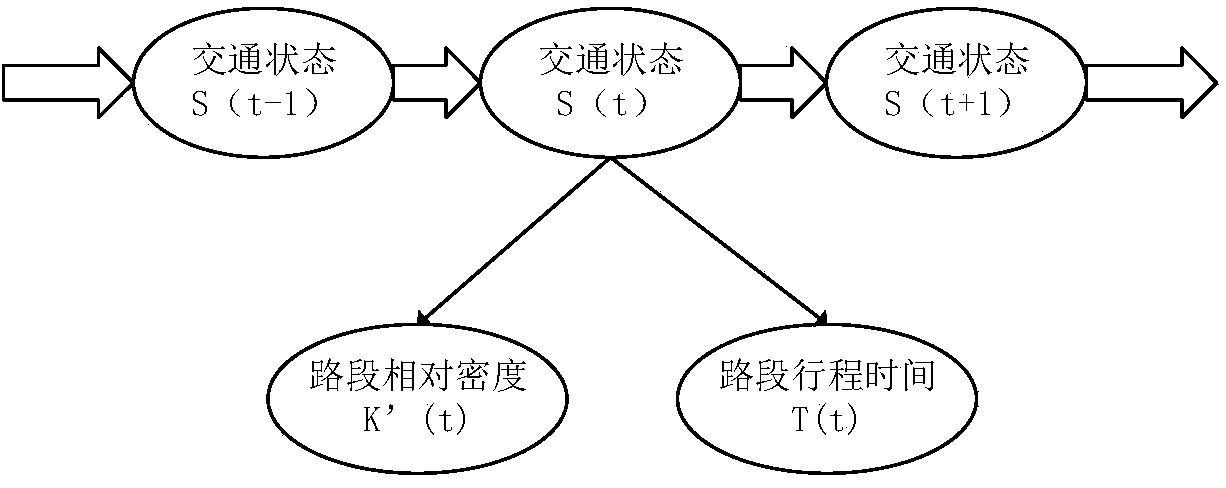

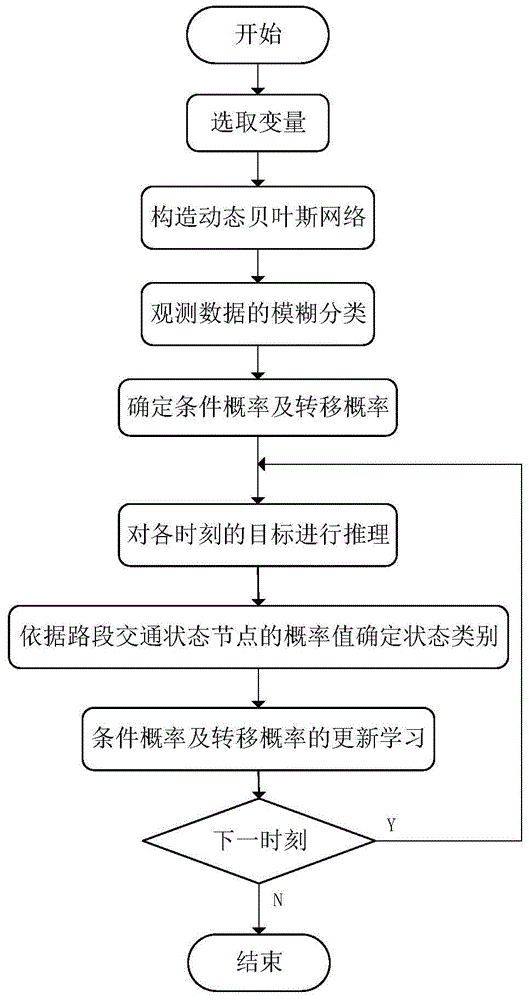

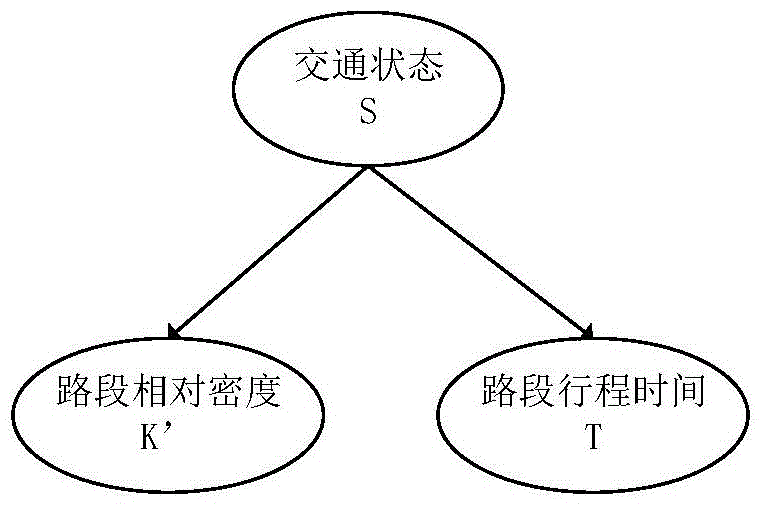

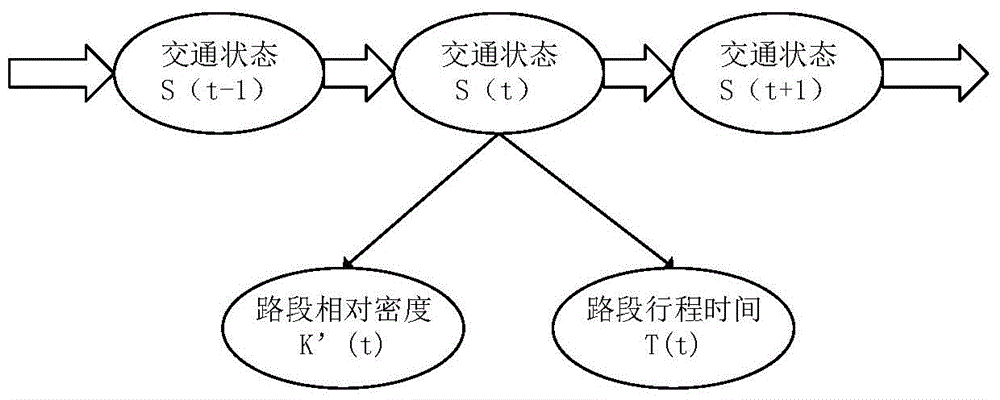

Expressway road traffic state estimation method based on dynamic Bayesian network

Active | CN104809879A | Good effect | Improve reliability | Detection of traffic movement | Special data processing applications | Corresponding conditional | Estimation methods

The invention belongs to the technical field of road traffic detection and discloses an expressway road traffic state estimation method based on a dynamic Bayesian network. The method comprises the following steps: (1) extracting relevant parameters of the road traffic state as nodes; (2) determining the interrelationships among the nodes and establishing the dynamic Bayesian network; (3) carrying out a fuzzy classification on the data of the observable nodes, analyzing the historical data to obtain a clustering center for each class, and determining the membership degree of the observable-node data in each class; (4) for a target node selected in the dynamic Bayesian network, acquiring the corresponding conditional probability and transition probability and establishing a characteristic table of the selected target node for each moment; (5) inputting the road traffic flow parameters of the current moment into the dynamic Bayesian network and triggering inference on the target at each moment to obtain a traffic state estimation result. The method addresses the uncertainty of estimating the state from a single parameter and also takes the correlation within the traffic state into account, so that the road traffic state is estimated with better effect and reliability.

Owner:重庆科知源科技有限公司

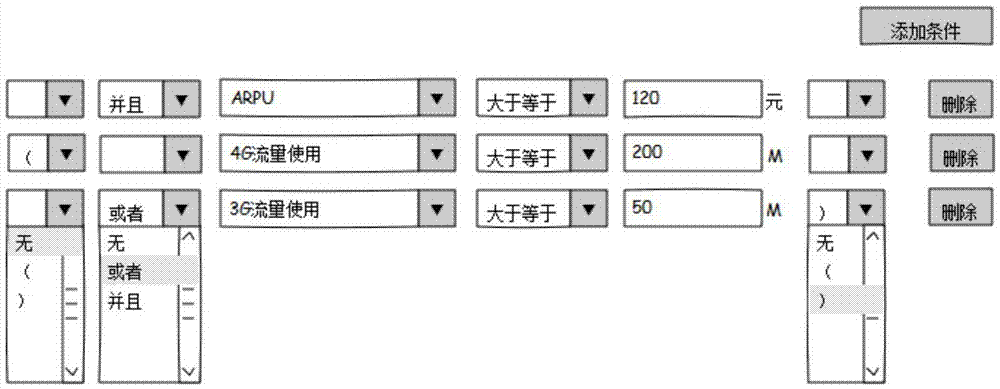

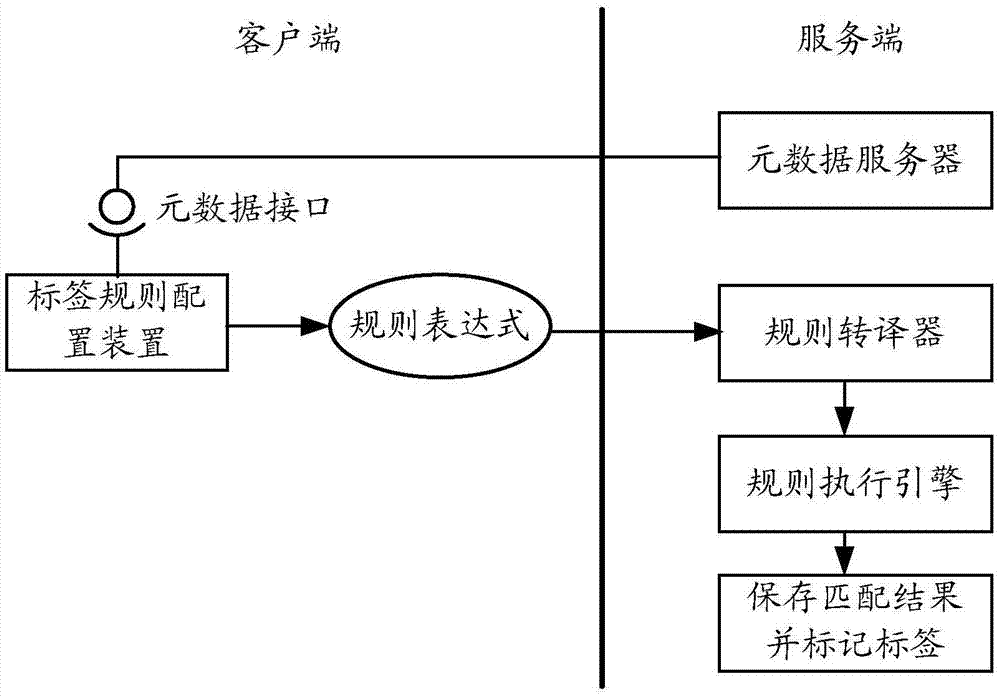

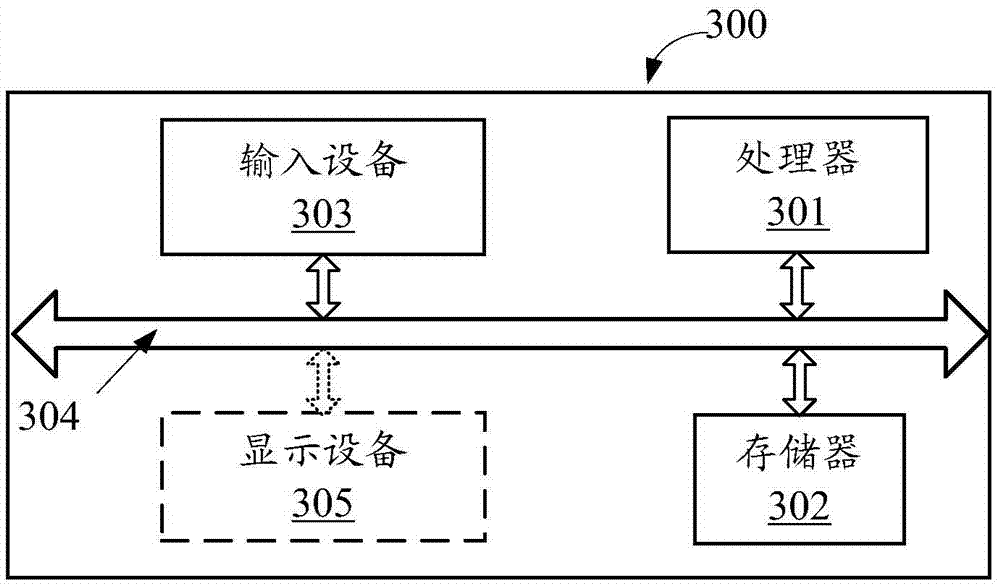

Screening rule configuration method, display method and client

Active | CN106933889A | Flexible configuration | Flexible settings | Special data processing applications | Marketing | Relationship - Father | Corresponding conditional

The embodiment of the invention provides a screening rule configuration method, a display method and a client. The configuration method comprises the steps that an operation object is determined according to first input of a user, wherein the operation object is a property or tag; metadata of the operation object is acquired, wherein the metadata comprises the type of the operation object; a conditional item used for rule configuration is determined according to the type of the operation object; the conditional item determined this time and existing conditional items form a tree structure, a father node of the conditional item determined this time in the tree structure is a logic operation node, and the logic operation node is used for expressing a logic relation between conditional items on child nodes of the logic operation node; and a rule expression is generated according to the tree structure. According to the embodiment, the corresponding conditional item is determined through the operation object, the conditional item determined this time and the existing conditional items form the tree structure, the logic relation of multilevel nested conditions can be flexibly set, and therefore screening rules can be flexibly configured.

Owner:HUAWEI TECH CO LTD
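
The tree structure described in the abstract above, with conditional items as leaves and logic operation nodes as their parents, might be modeled as below. The class names, field names and expression syntax are all hypothetical; the patent text does not specify them.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Condition:
    """A leaf: one conditional item on a property or tag (hypothetical layout)."""
    name: str
    operator: str
    value: object


@dataclass
class LogicNode:
    """An inner node expressing the logic relation between its child conditions."""
    op: str  # "AND" or "OR"
    children: List[Union["LogicNode", Condition]] = field(default_factory=list)


def to_expression(node):
    """Render the tree as a parenthesized rule expression."""
    if isinstance(node, Condition):
        return f"{node.name} {node.operator} {node.value!r}"
    joined = f" {node.op} ".join(to_expression(child) for child in node.children)
    return f"({joined})"


# age > 30 AND (city == 'Paris' OR tag == 'vip')
rule = LogicNode("AND", [
    Condition("age", ">", 30),
    LogicNode("OR", [
        Condition("city", "==", "Paris"),
        Condition("tag", "==", "vip"),
    ]),
])
expression = to_expression(rule)
```

Because every conditional item hangs off a logic node, nesting a new group only requires adding another `LogicNode` as a child, which mirrors the multilevel nesting the abstract describes.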

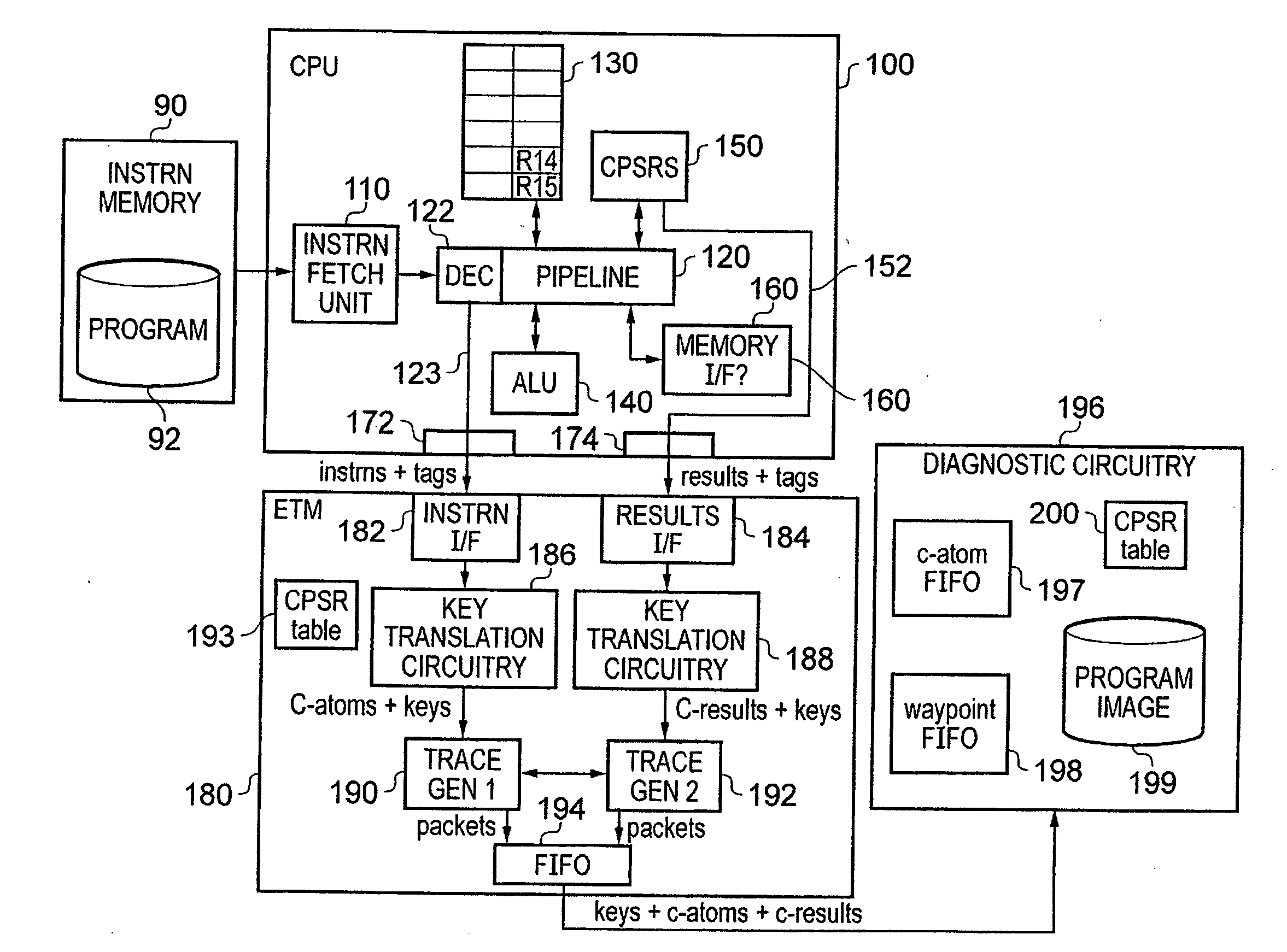

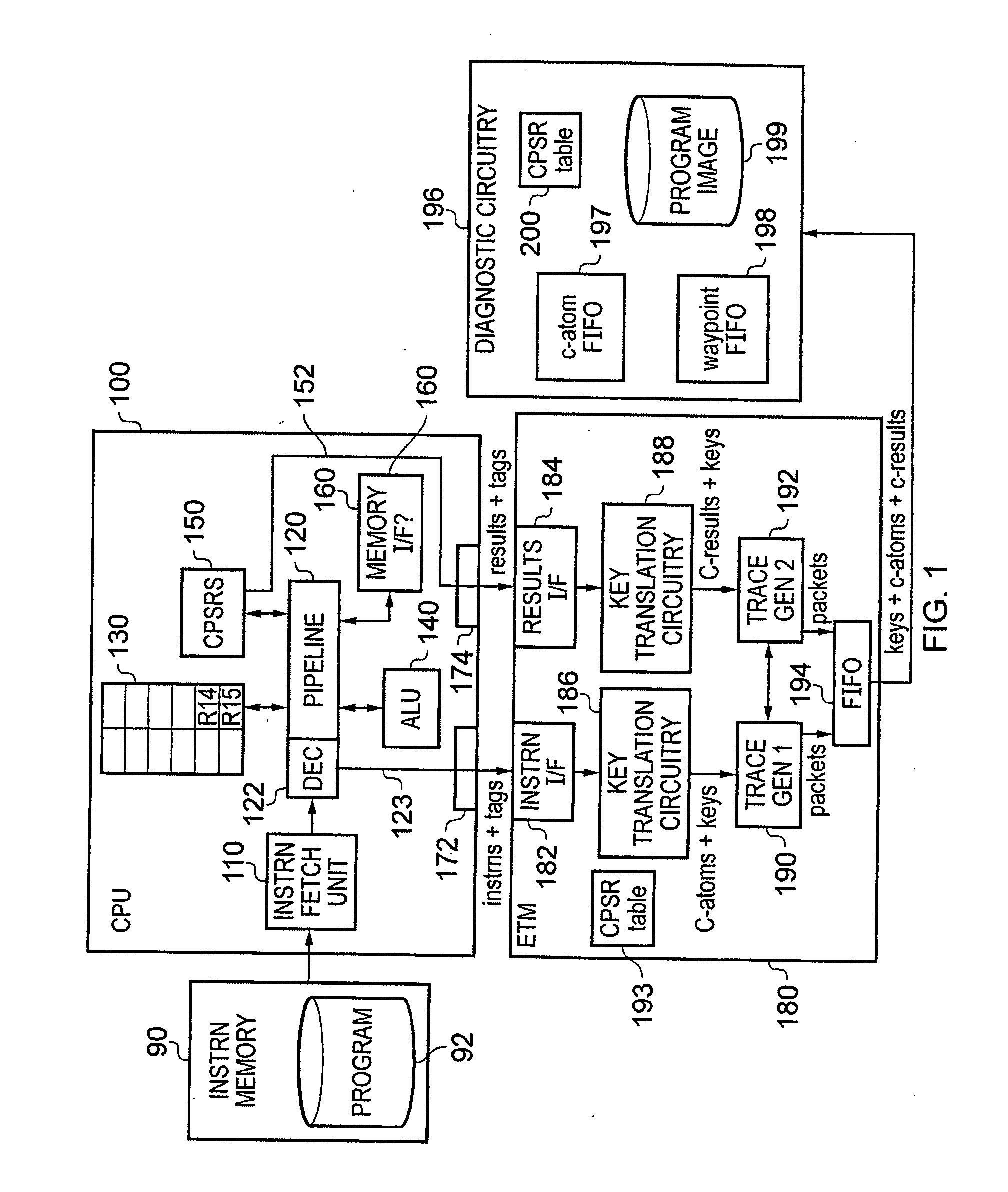

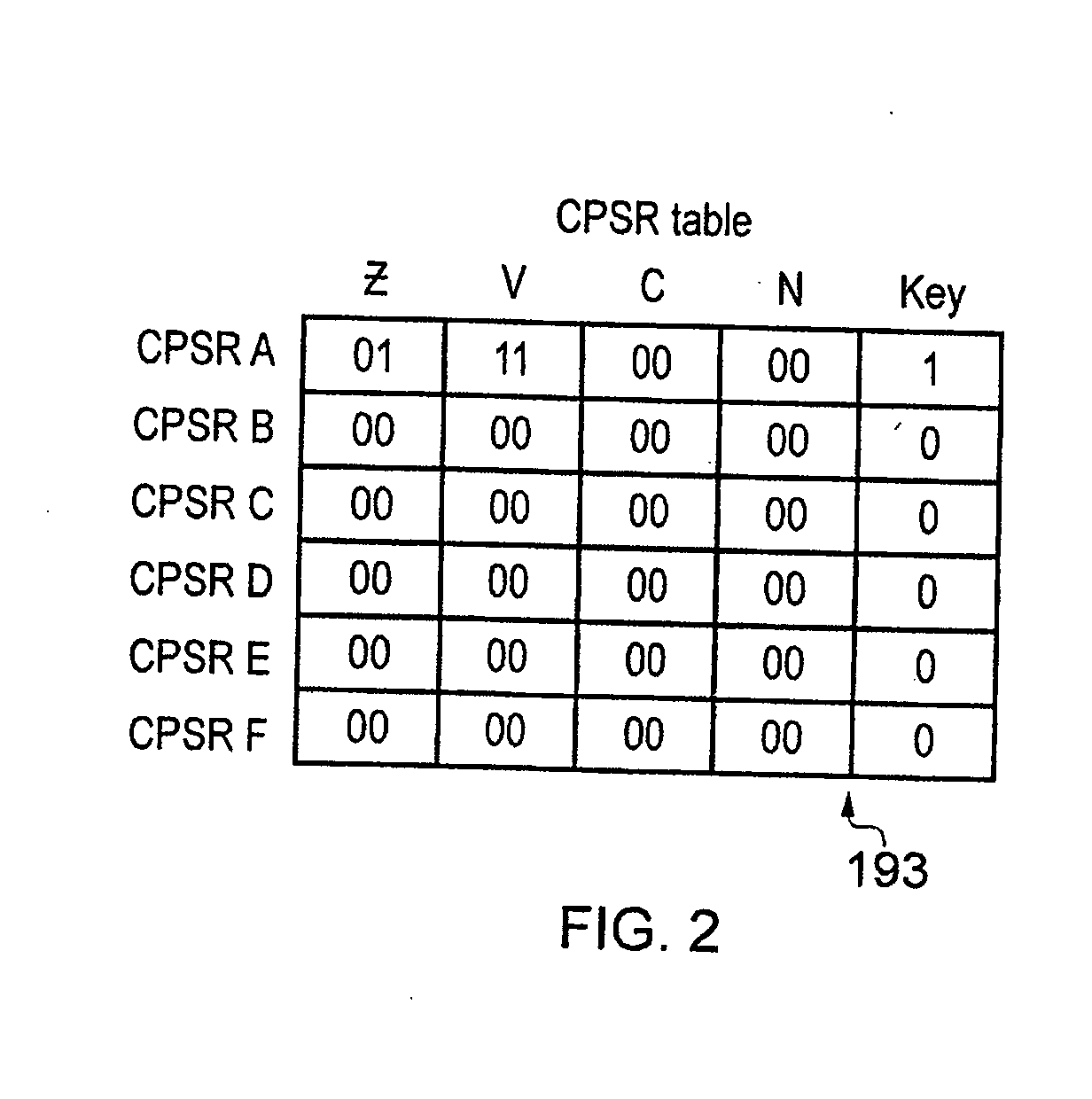

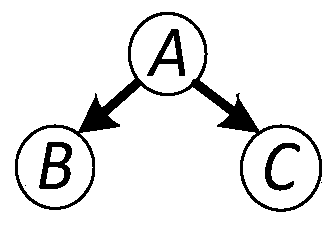

Tracing of a data processing apparatus

Active | US20120185734A1 | Reduced trace bandwidth | Lower the volume | Conditional code generation | Instruction analysis | Corresponding conditional | Parallel computing

A trace unit, diagnostic apparatus and data processing apparatus are provided for tracing of conditional instructions. The data processing apparatus generates instruction observed indicators indicating execution of conditional instructions and result output indicators indicating output by the data processing apparatus of results of executing respective conditional instructions. The instruction observed indicators and result output indicators are received by a trace unit that is configured to output conditional instruction trace data items and independently output conditional result trace data items, enabling separate trace analysis of conditional instructions and corresponding conditional results by a diagnostic apparatus. The instruction observed indicator is received at the trace unit in a first processing cycle of the data processing apparatus, whilst the result output indicator is received in a second, different processing cycle.

Owner:ARM LTD

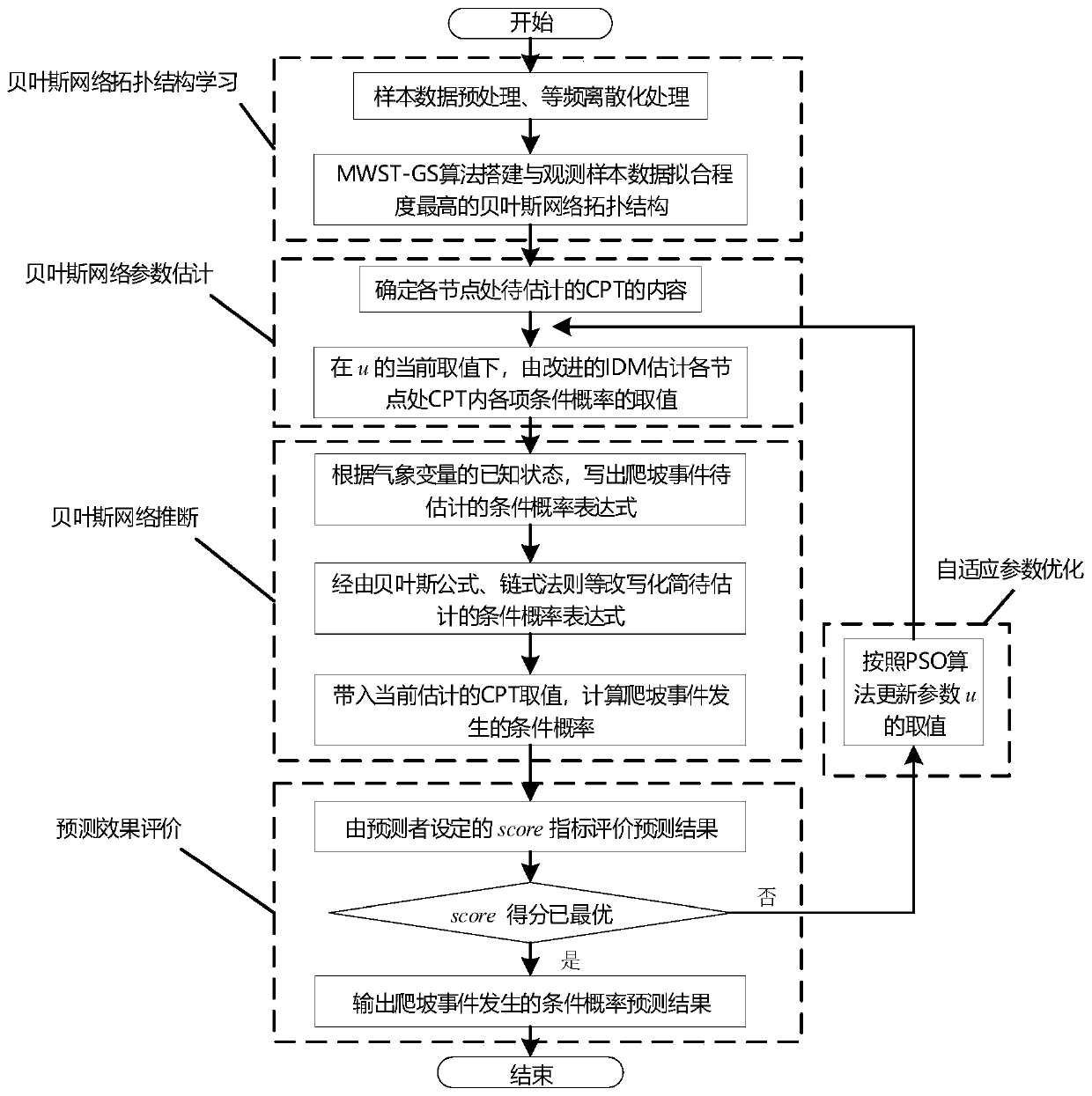

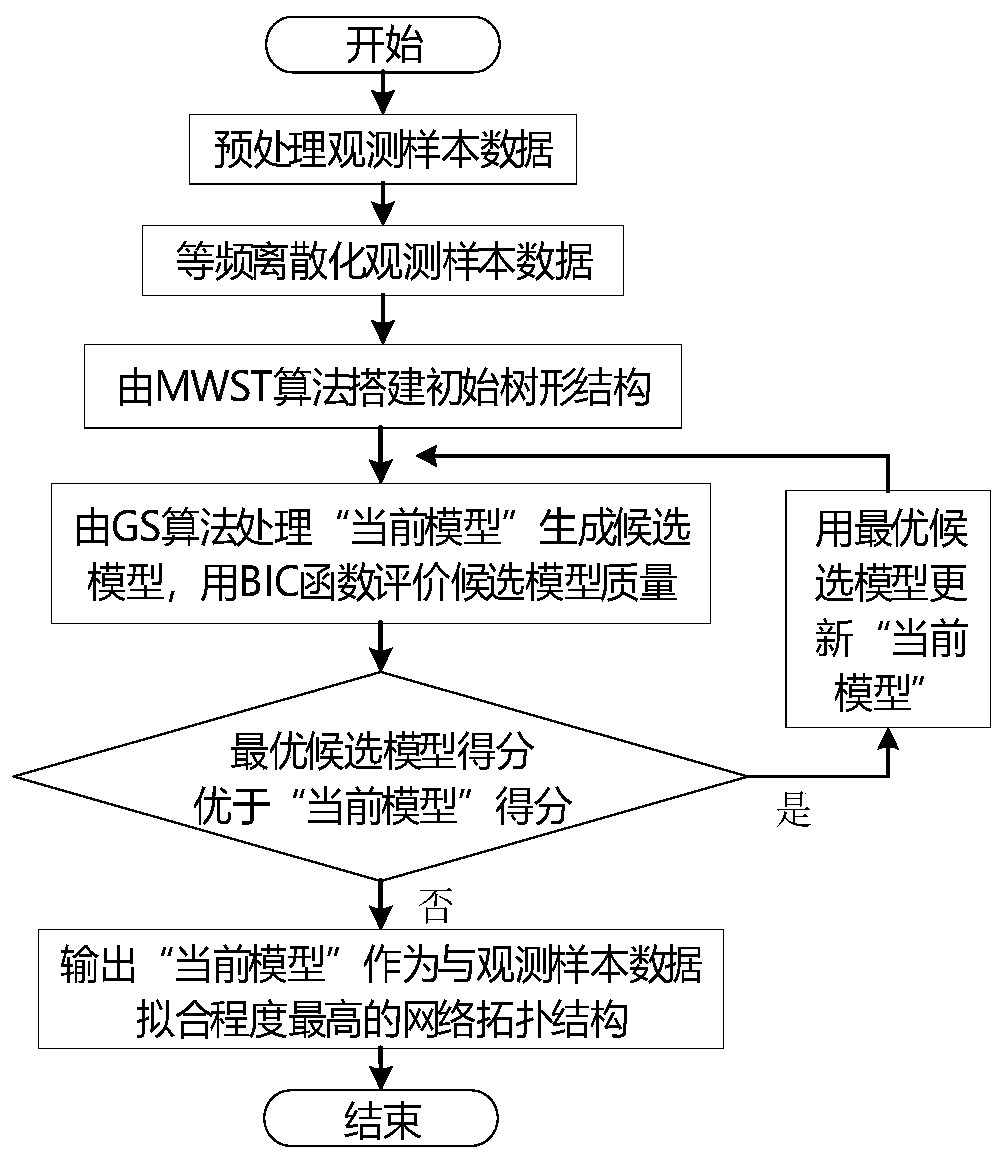

Wind power climbing event probability prediction method and system based on Bayesian network

Active | CN109978222A | Minimize prediction error | Avoid Cumulative Errors | Forecasting | Character and pattern recognition | NODAL | Gaussian network model

The invention discloses a wind power climbing event probability prediction method and system based on a Bayesian network. The method comprises the steps of: mining the dependency relationship between a wind power climbing event and related meteorological influence factors such as wind speed, wind direction, temperature, air pressure and humidity, and building the Bayesian network topological structure with the highest degree of fit to the sample data; quantitatively describing the conditional dependency relationship between the climbing event and each meteorological factor, estimating the value of each conditional probability in the conditional probability table at each node of the Bayesian network, and forming, together with the Bayesian network topological structure, a Bayesian network model for predicting the wind power climbing event; and inferring the probability of occurrence of each state of the climbing event from the numerical weather forecast information available for the prediction time. The value of the corresponding conditional probability at each node is adaptively adjusted, so that the inferred conditional probability of each state of the climbing event is optimized and a compromise between the reliability and the sensitivity of the prediction result is achieved.

Owner:ELECTRIC POWER RESEARCH INSTITUTE OF STATE GRID SHANDONG ELECTRIC POWER COMPANY +3

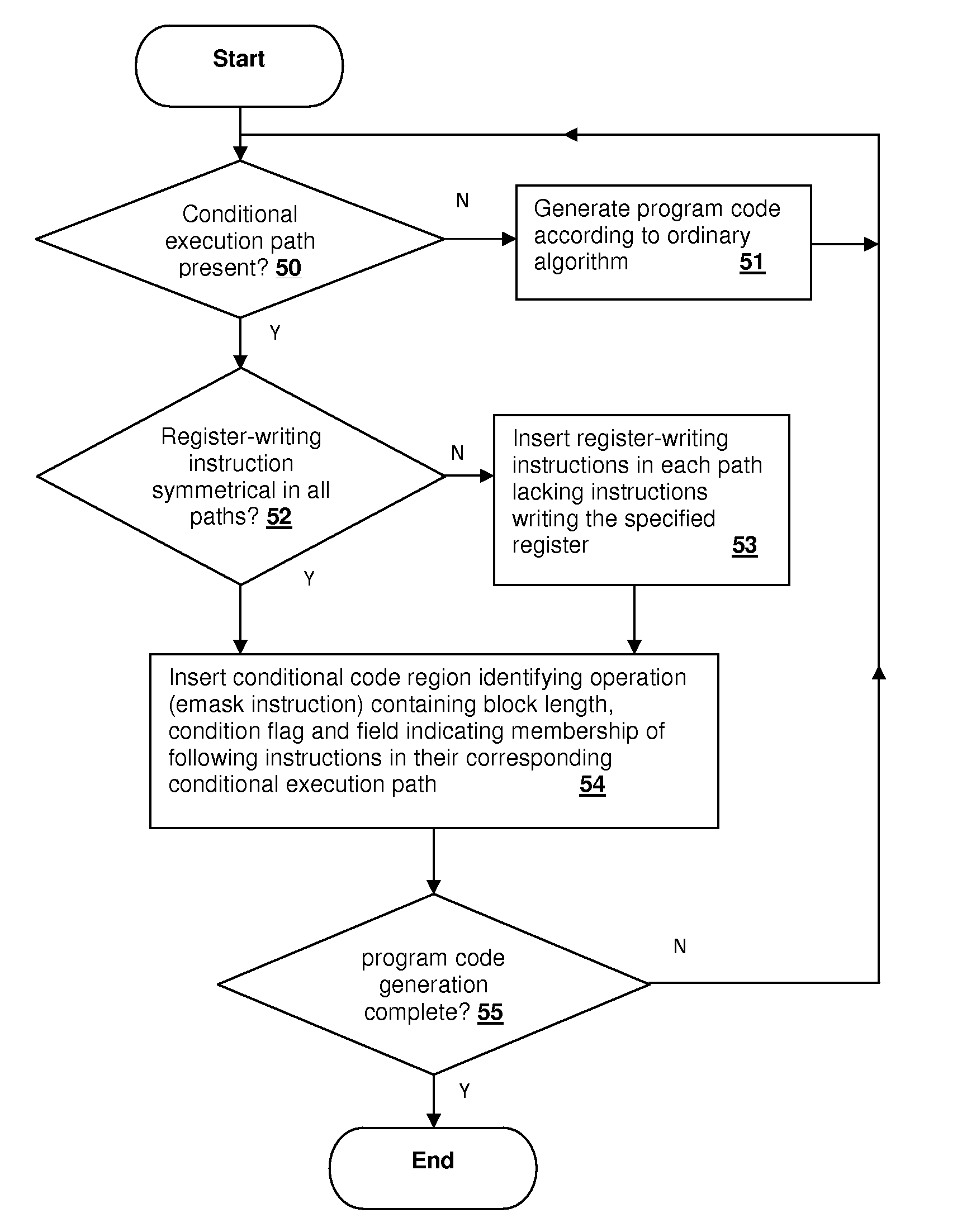

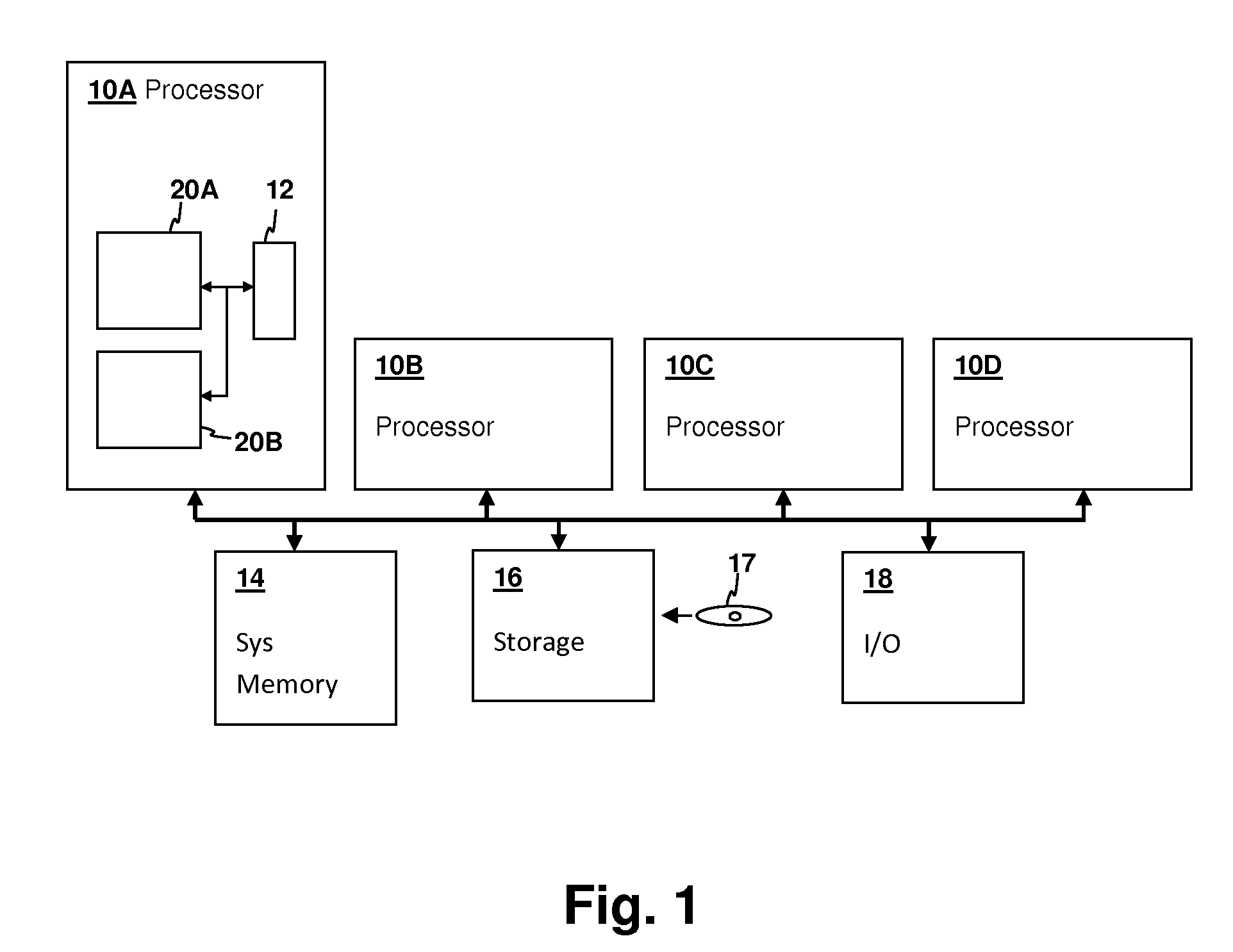

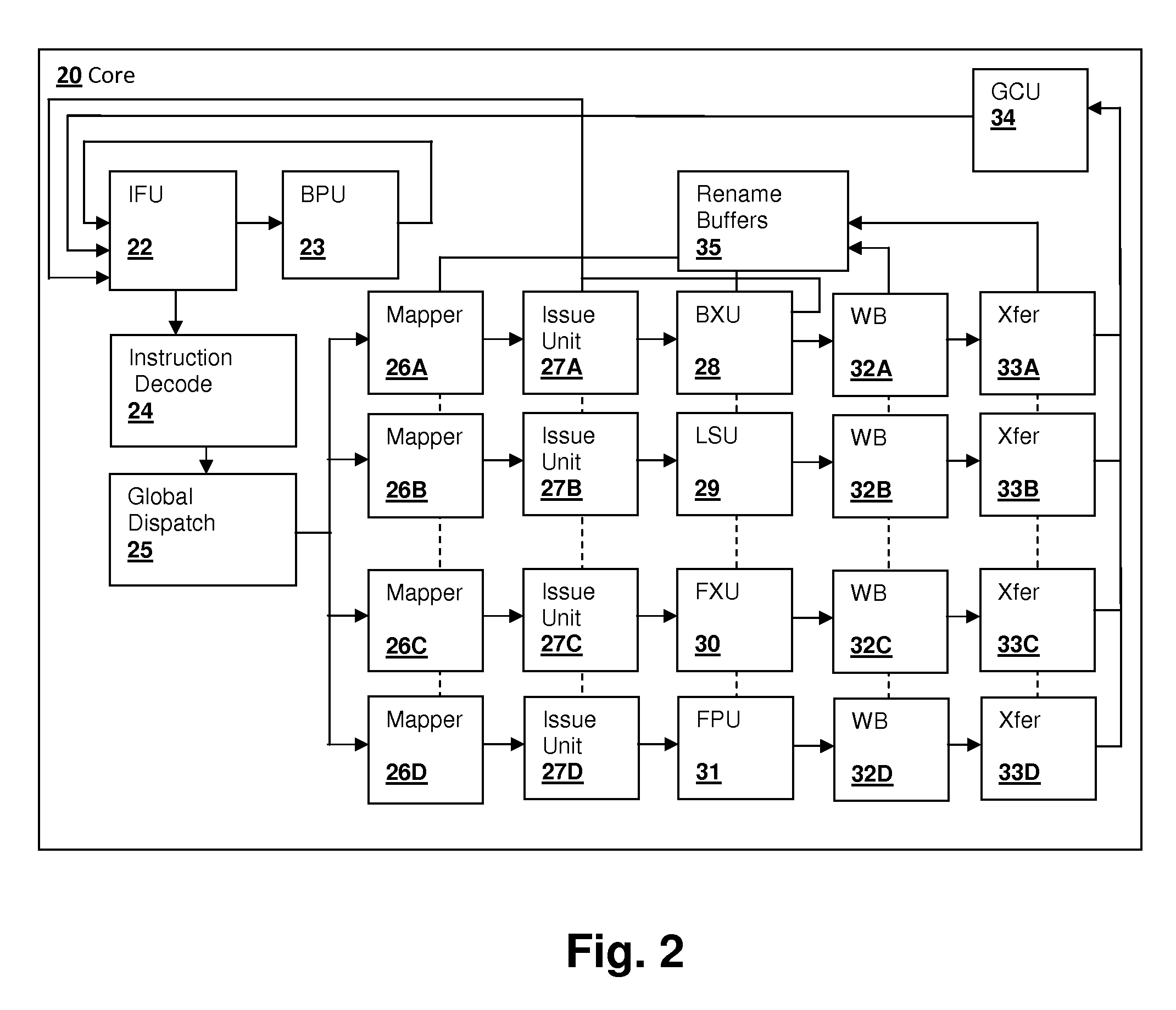

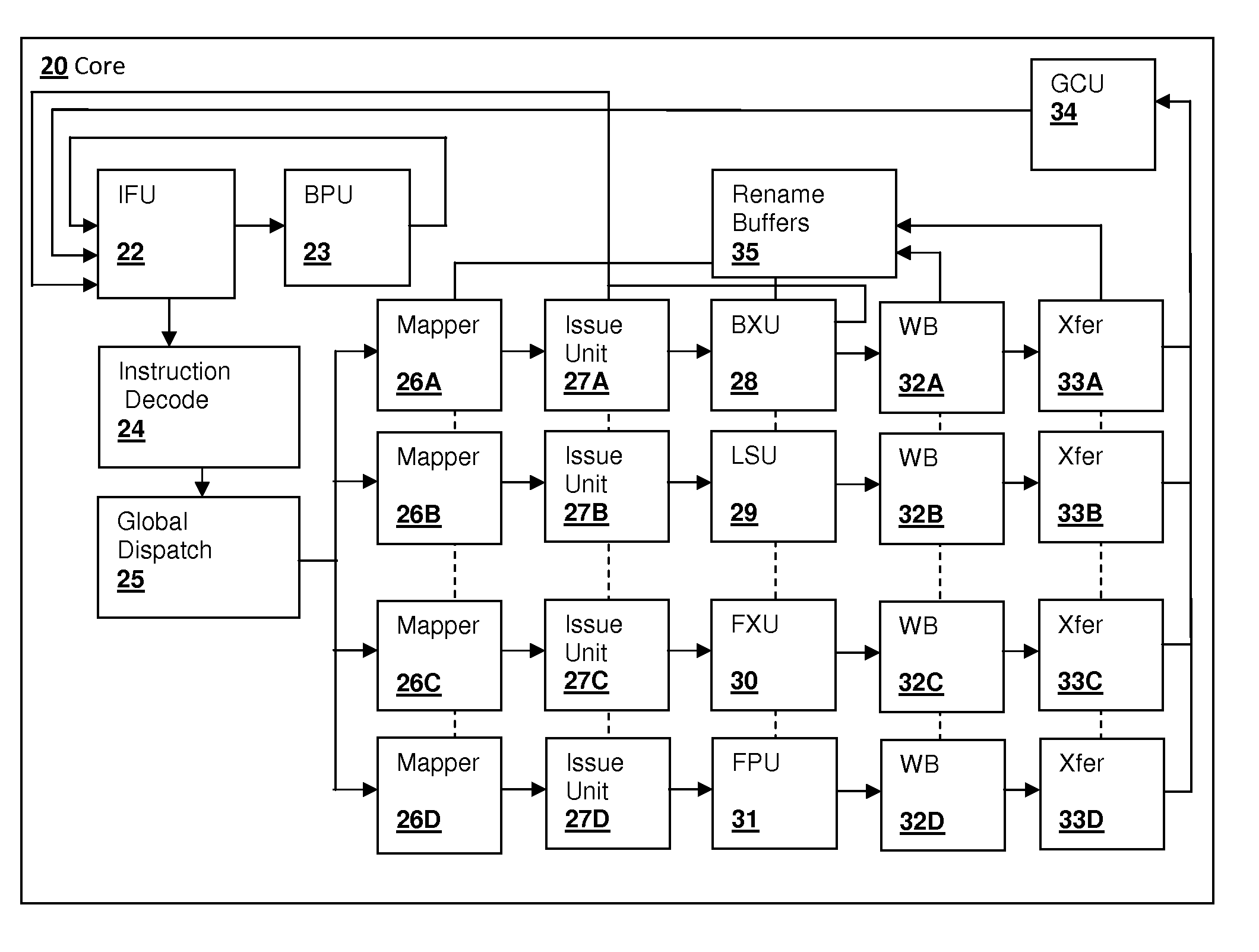

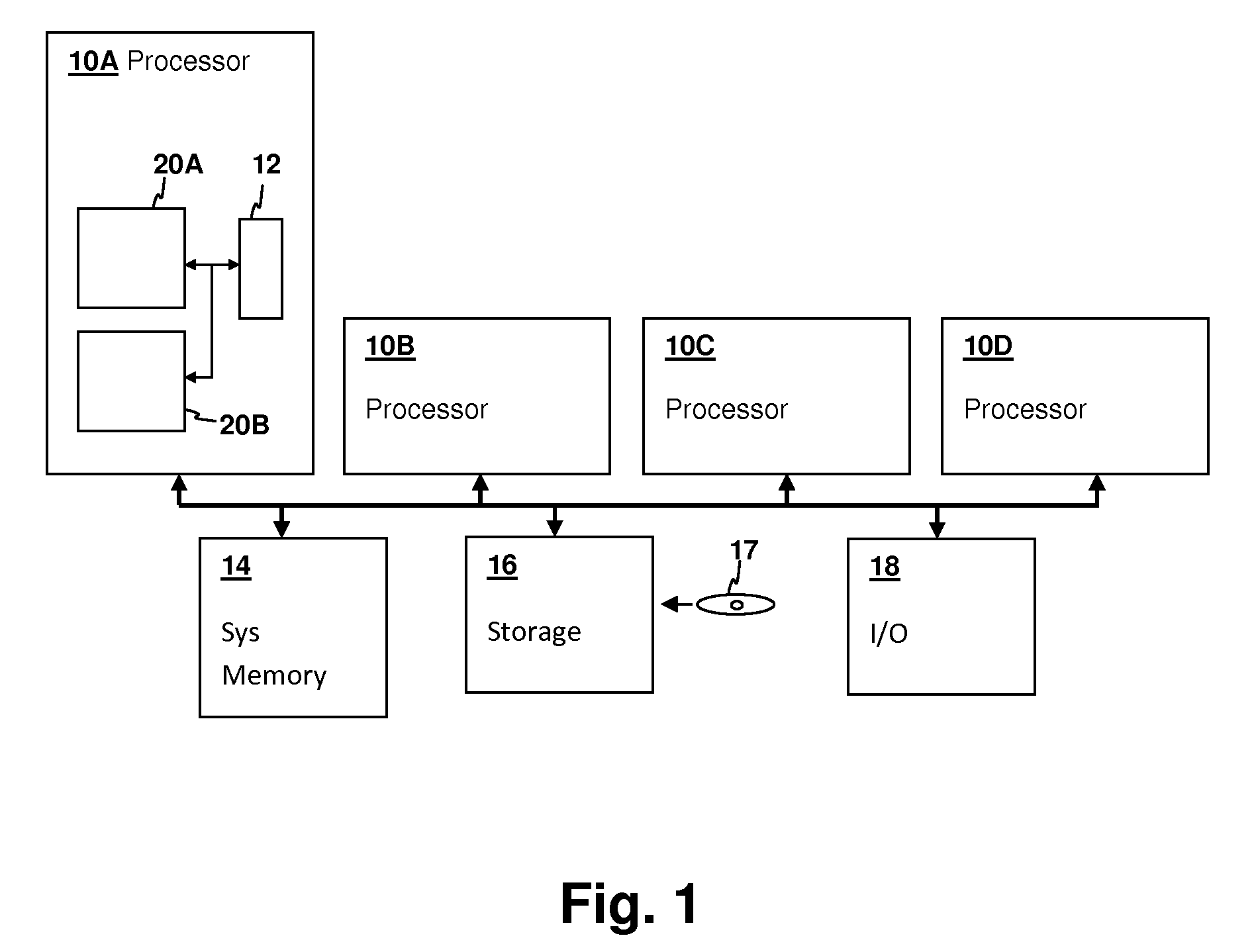

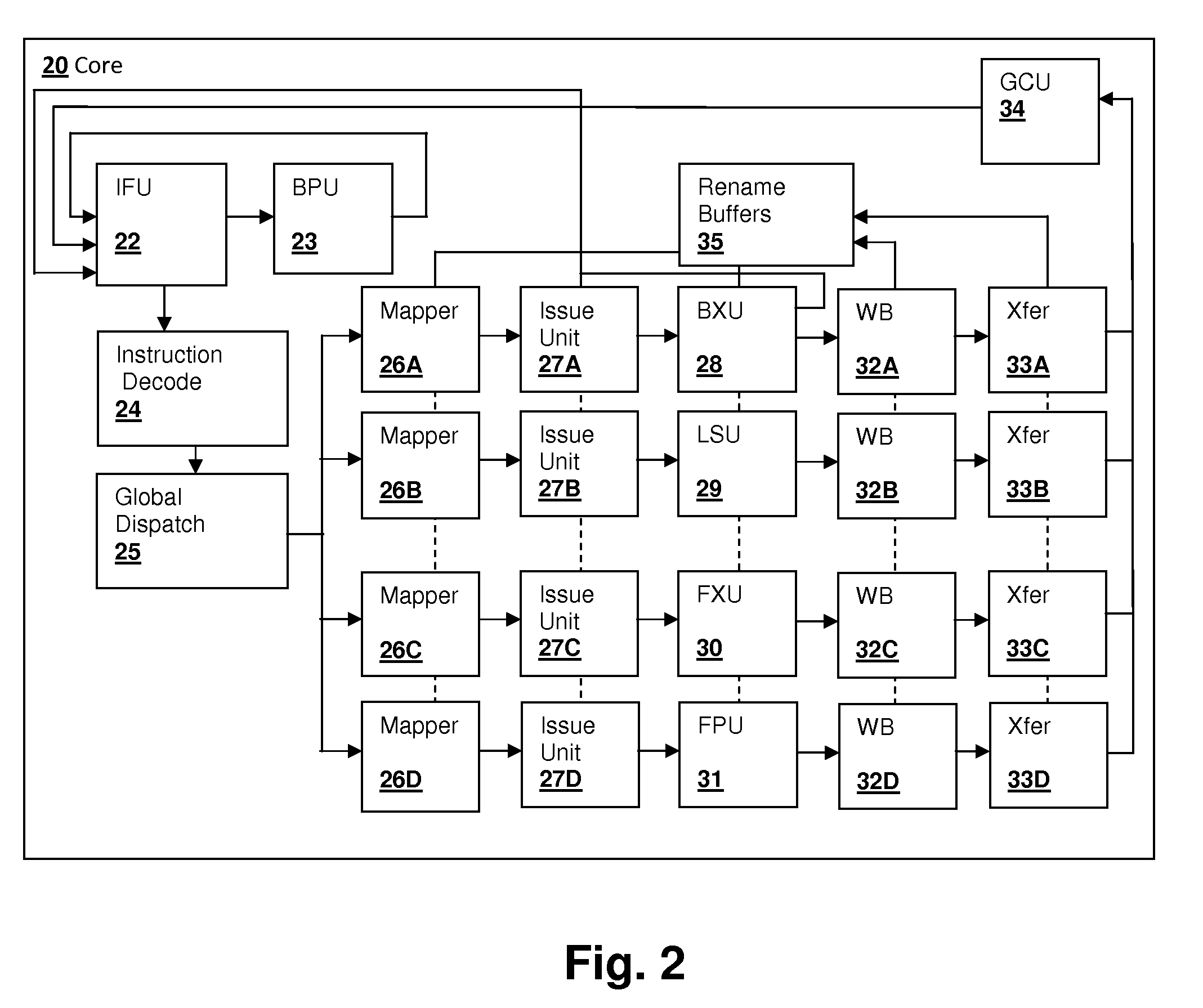

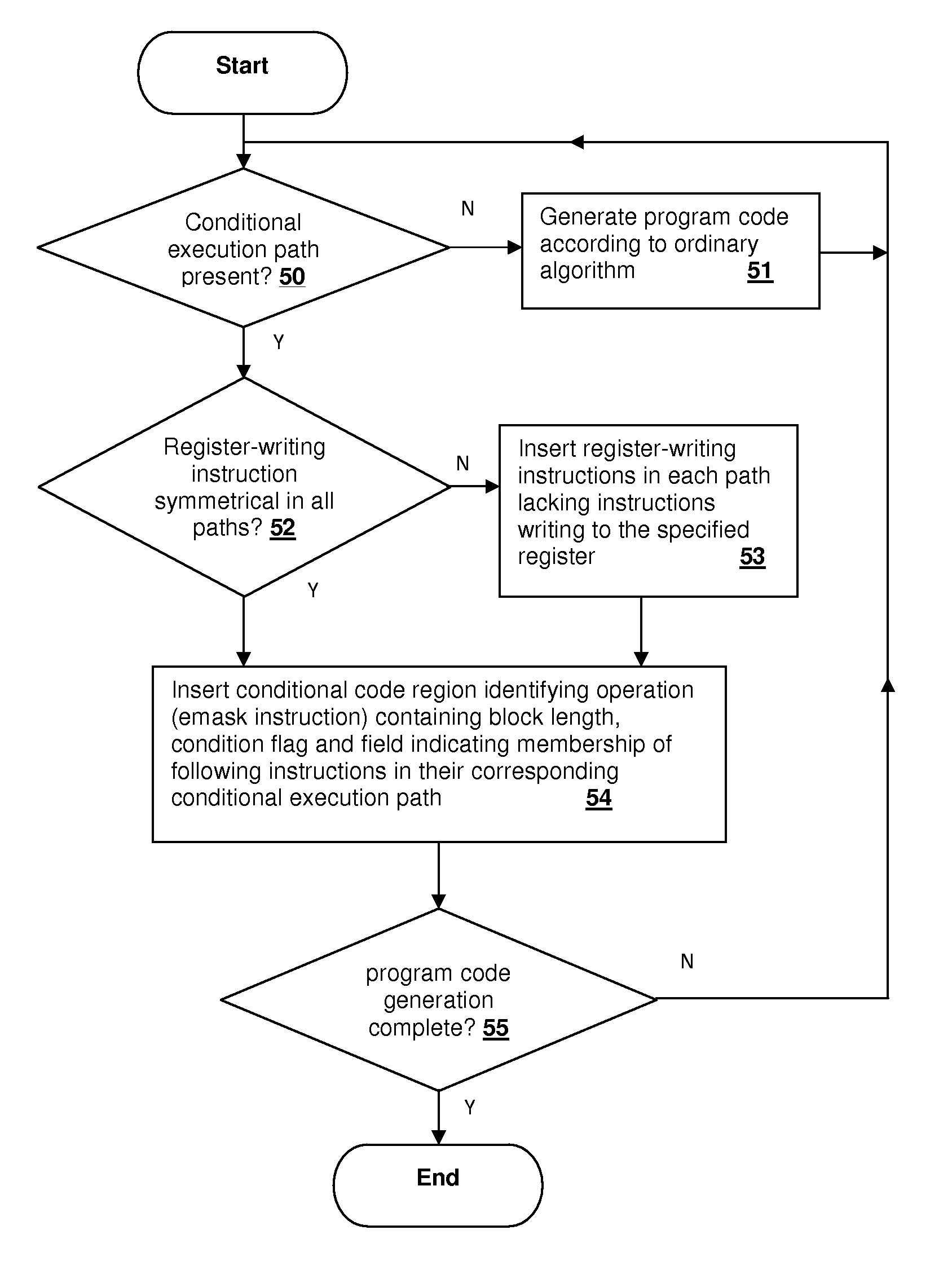

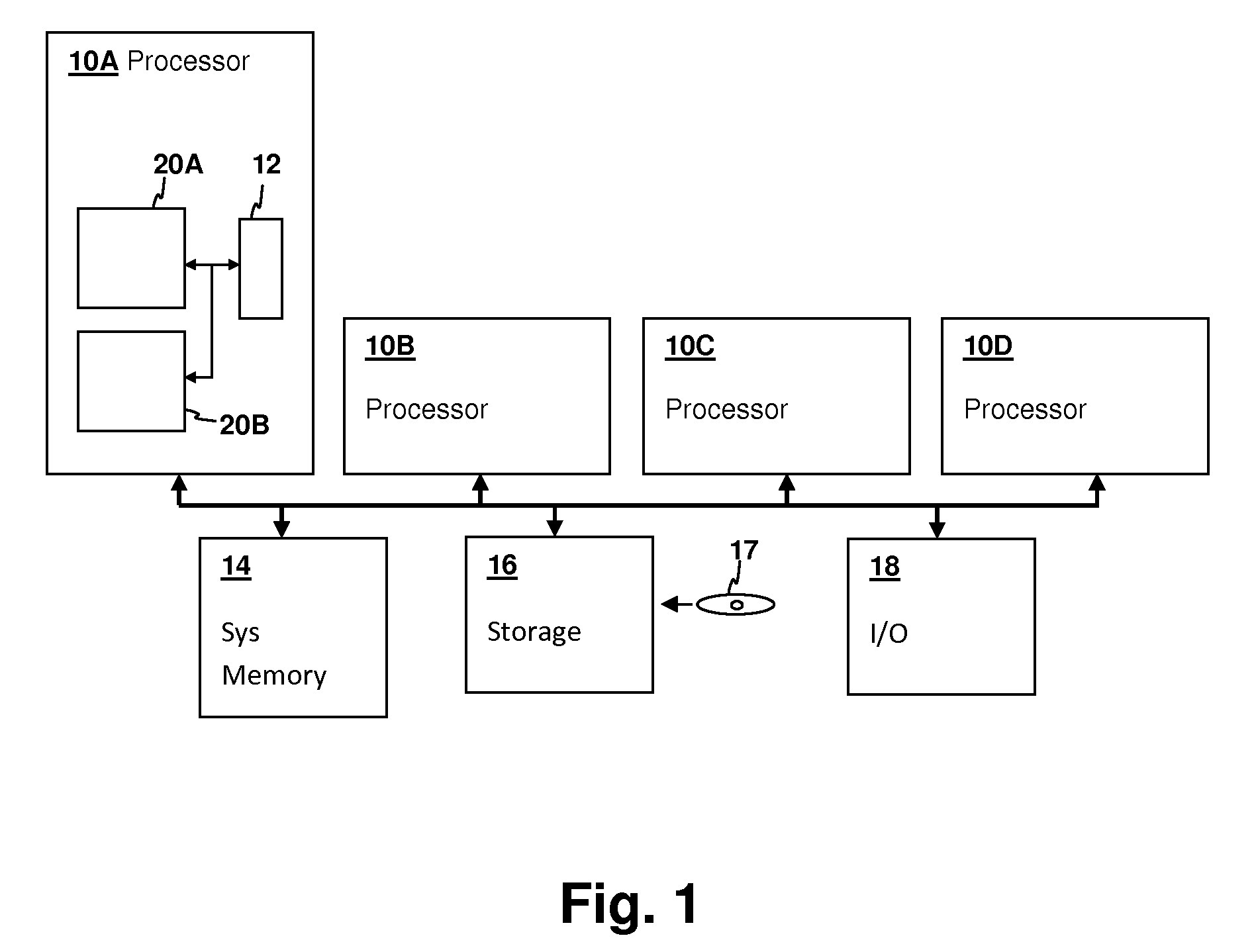

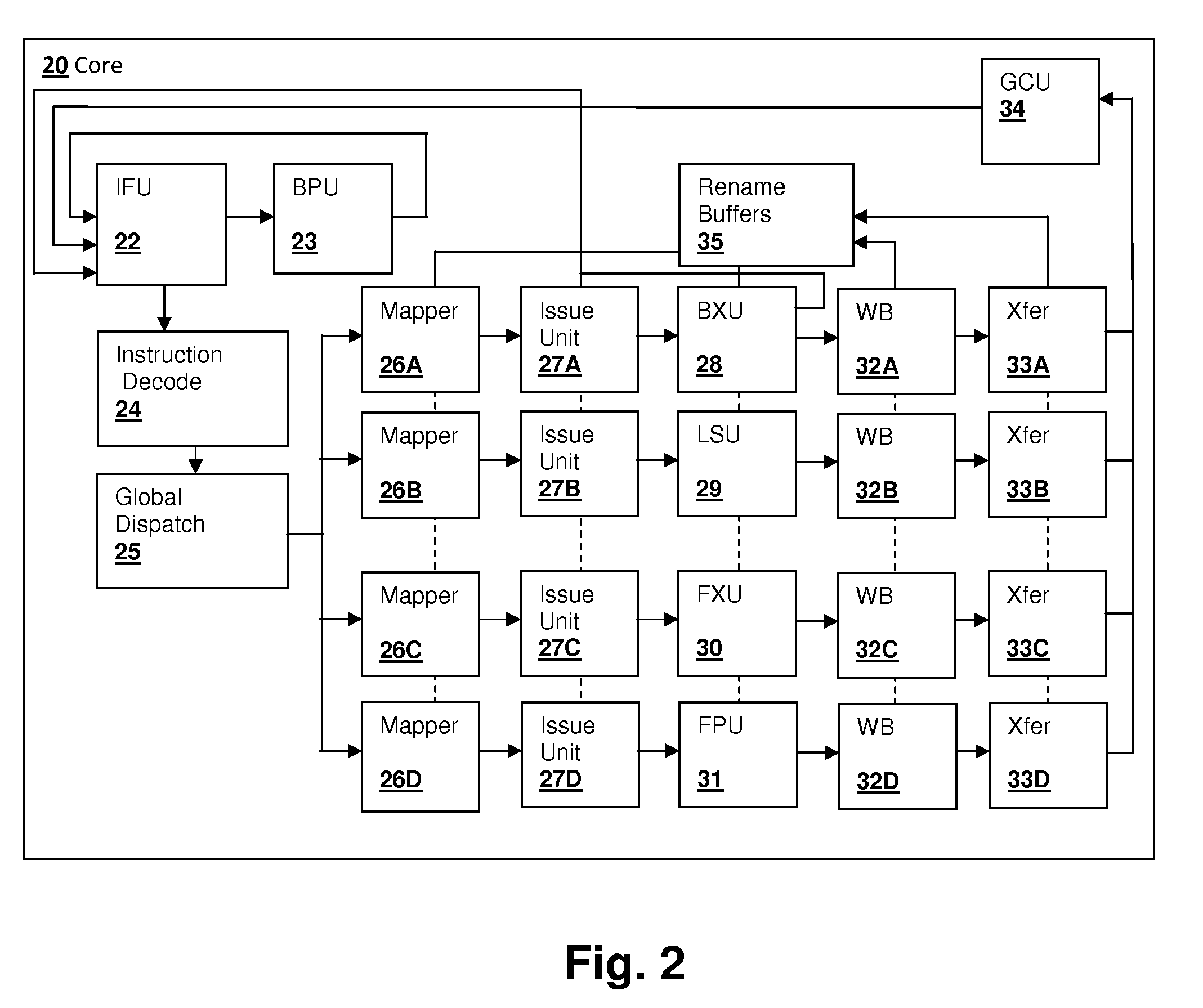

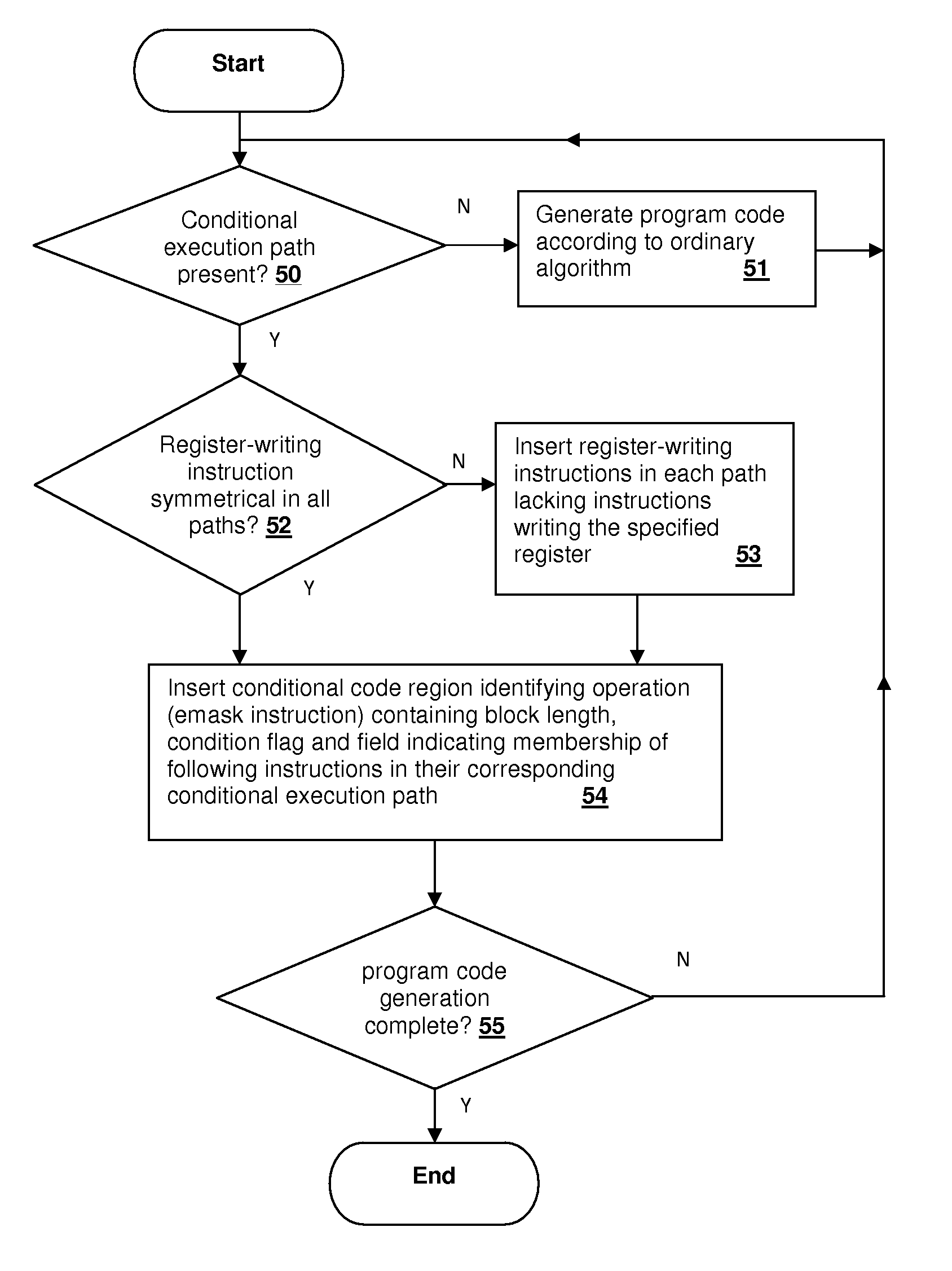

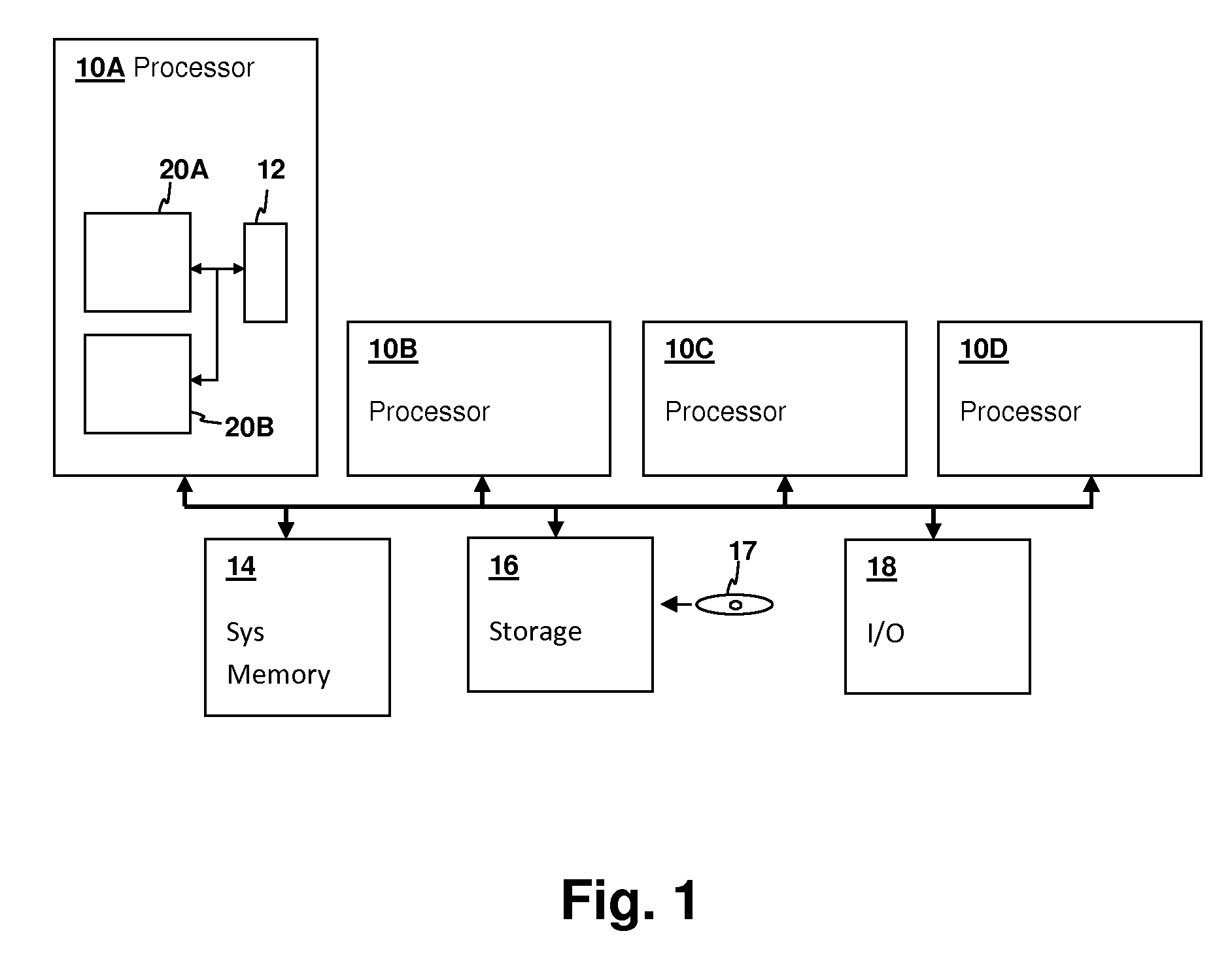

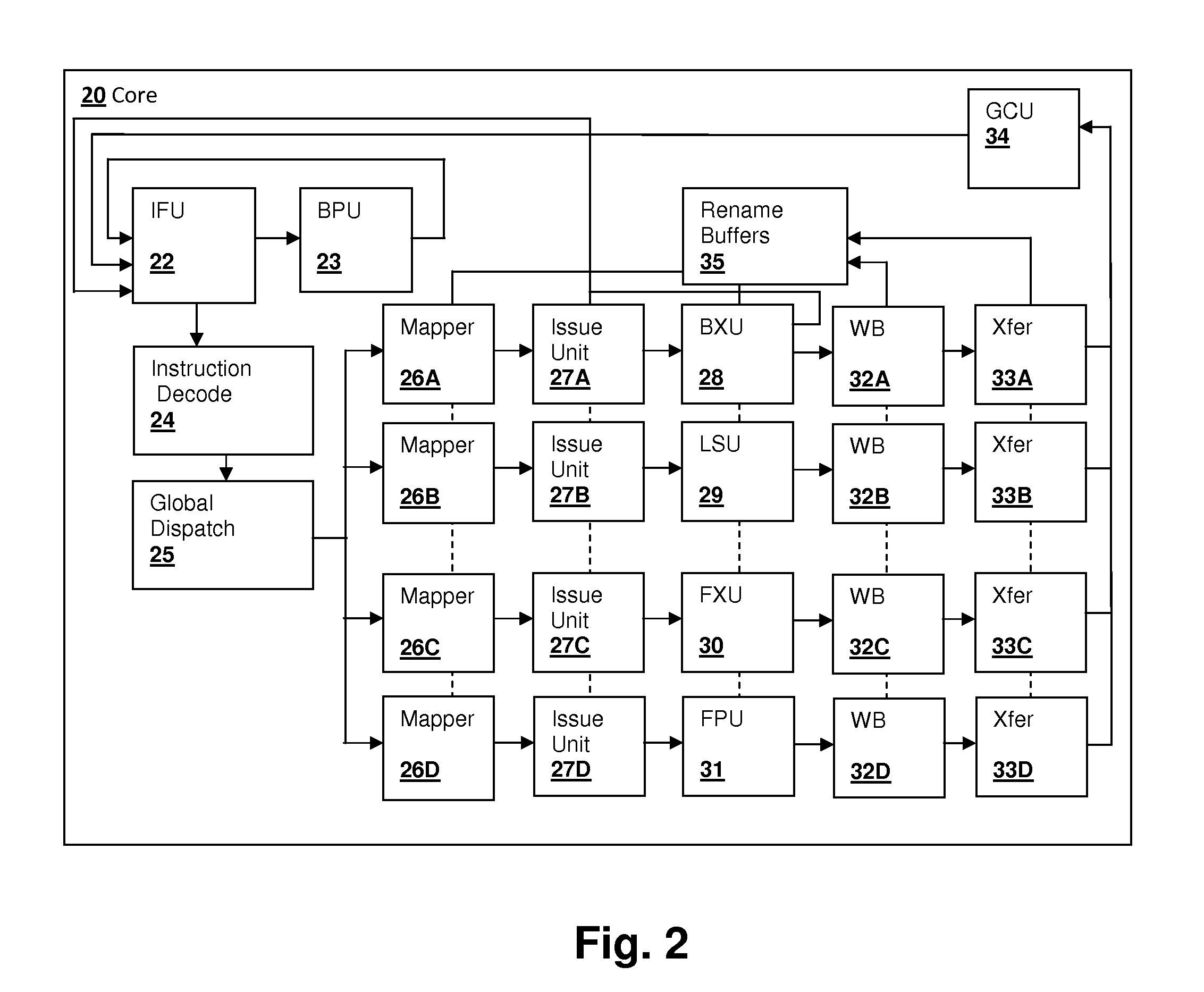

Predication supporting code generation by indicating path associations of symmetrically placed write instructions

Active | US20090288063A1 | Software engineering | Specific program execution arrangements | Corresponding conditional | Image resolution

A predication technique for out-of-order instruction processing provides efficient out-of-order execution with low hardware overhead. A special op-code demarks unified regions of program code that contain predicated instructions that depend on the resolution of a condition. Field(s) or operand(s) associated with the special op-code indicate the number of instructions that follow the op-code and also contain an indication of the association of each instruction with its corresponding conditional path. Each conditional register write in a region has a corresponding register write for each conditional path, with additional register writes inserted by the compiler if symmetry is not already present, forming a coupled set of register writes. Therefore, a unified instruction stream can be decoded and dispatched with the register writes all associated with the same re-name resource, and the conditional register write is resolved by executing the particular instruction specified by the resolved condition.

Owner:IBM CORP

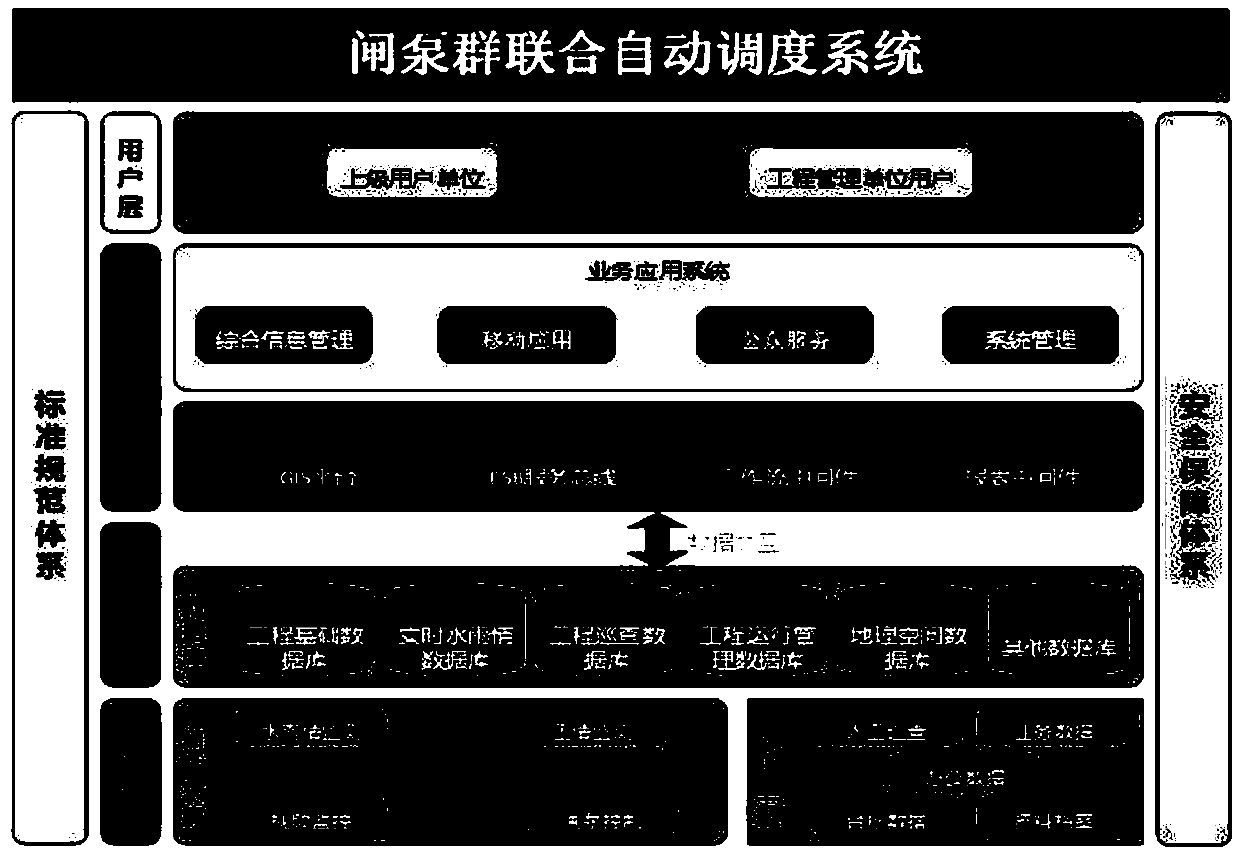

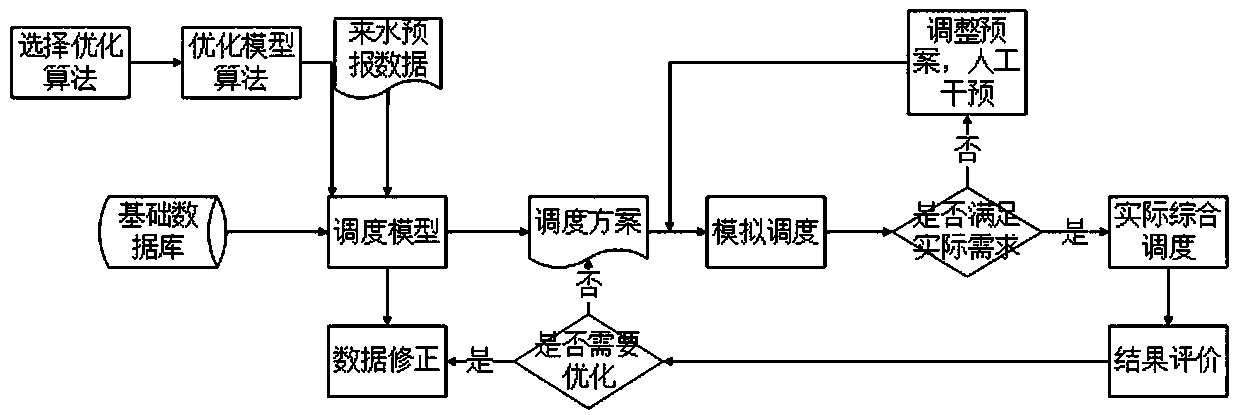

Combined automatic scheduling method for gate pump group based on automatic generation plan

Inactive | CN107609787A | Automatically generate scheduling plans | Realize the goal of "unmanned watch" | Climate change adaptation | Resources | Automatic Generation Control | Corresponding conditional

The invention relates to a combined automatic scheduling method for gate pump group based on an automatic generation plan. When the combined scheduling of the gate pump group meets a scheduling condition, a system selects a corresponding conditional algorithm from a model algorithm. The method comprises the steps: carrying out the analysis and selection of corresponding model data through combining with the functions of flood control safety, ecological water compensation and water distribution when the incoming water forecast and the water level of a gate pump group channel reach a certain value; outputting the whole gate pump group combined scheduling plan after calculation of a mathematic model; carrying out the simulation demonstration through the simulation of the scheduling demonstration, correspondingly correcting and optimizing an optimization scheduling plan through manual adjustment or according to a demonstration result if the demonstration result does not meet the requirements, and carrying out the simulation till the actual requirements are met; switching to a next step of actual comprehensive scheduling when the simulation demonstration result meets the requirements, and carrying out the real-time monitoring in the process of the actual comprehensive scheduling.

Owner:SICHUANG TECH CO LTD

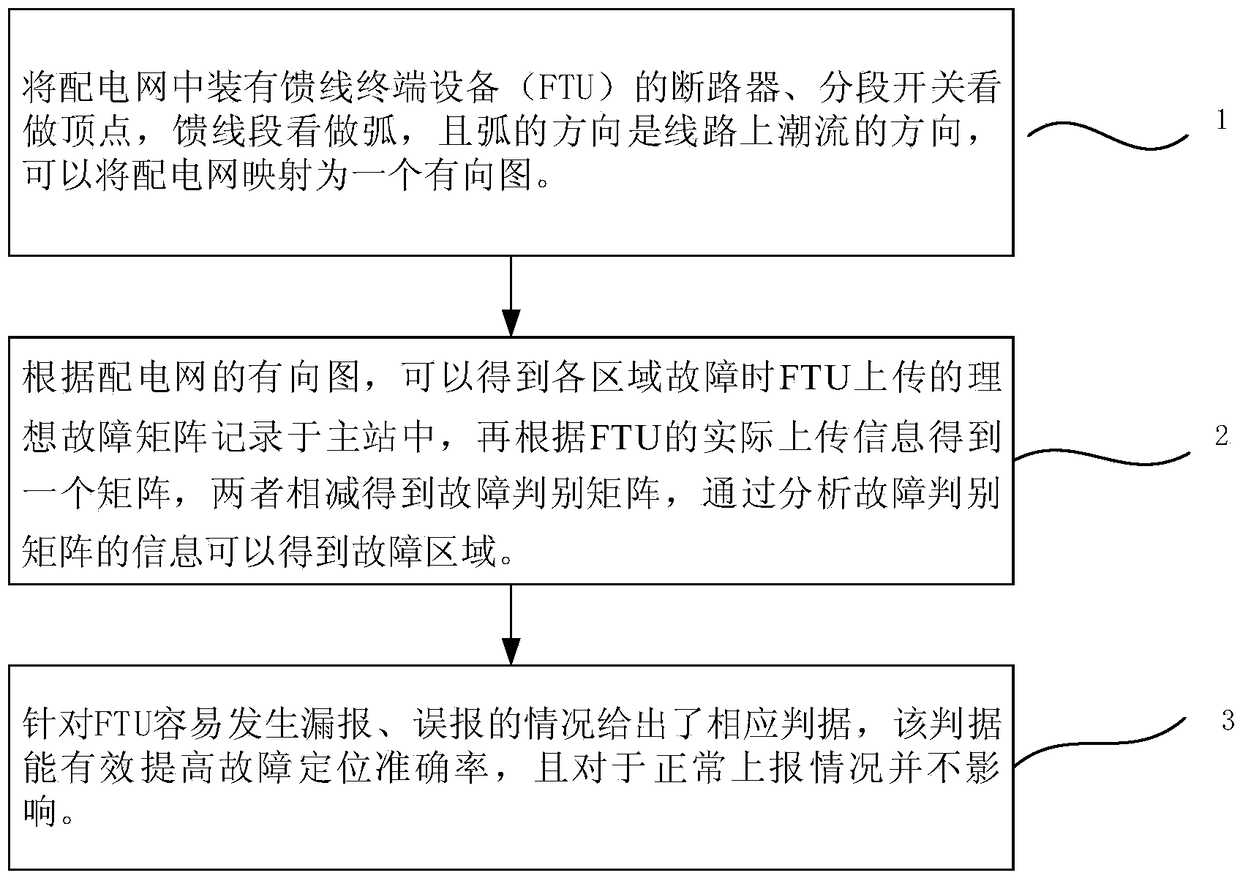

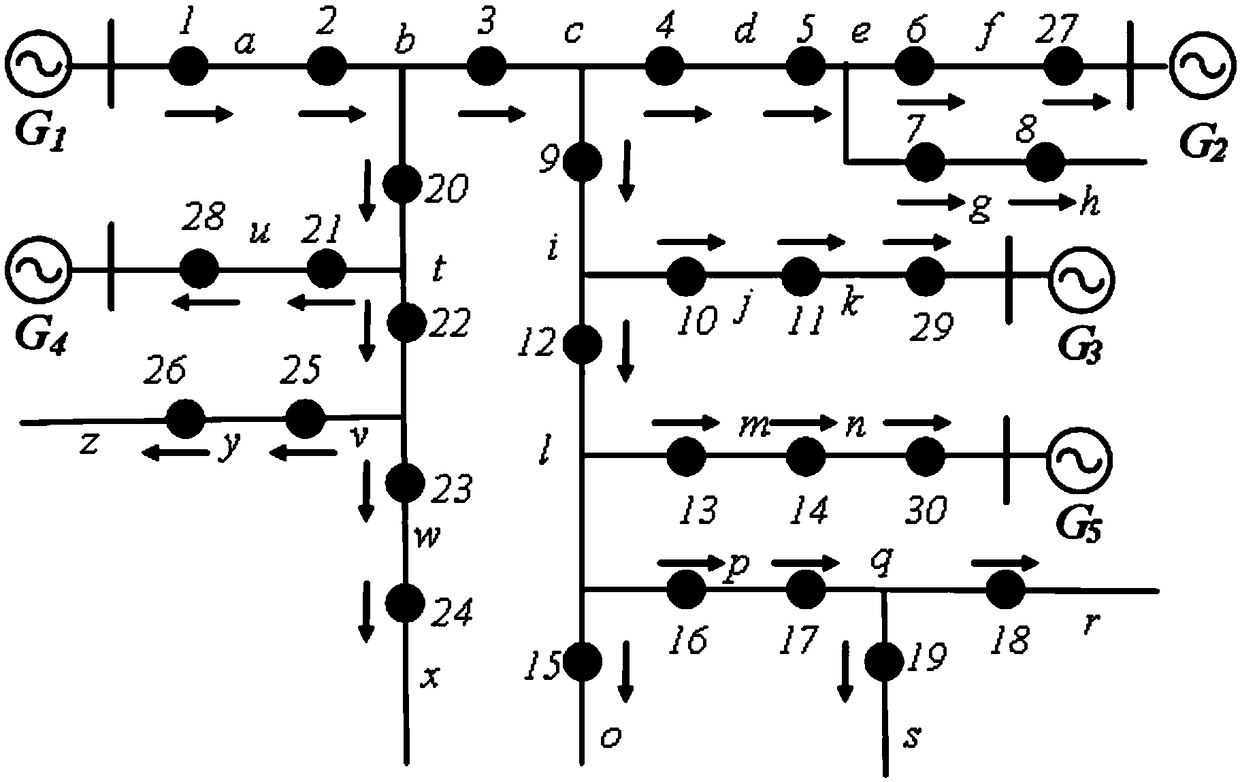

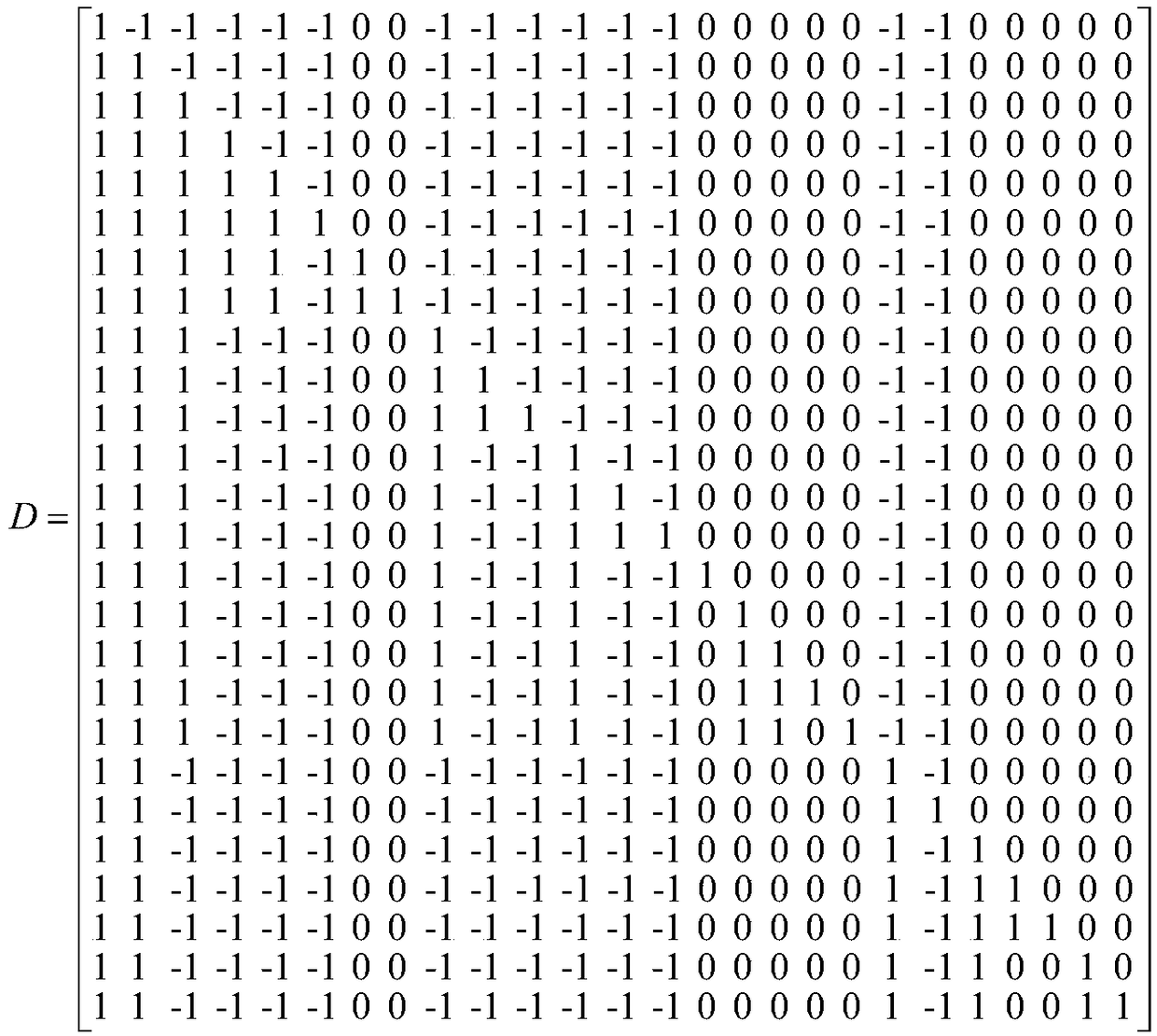

Distribution network fault location method with high fault-tolerant rate

Inactive | CN108931709A | Improve fault tolerance | Improved fault location accuracy | Fault location by conductor types | Fault tolerance | Power flow

The invention discloses a distribution network fault location method with a high fault-tolerant rate, belonging to the technical field of AC distribution network fault diagnosis. The method locates the fault by comparing the overcurrent information matrix uploaded by the FTU during a fault with an overcurrent information matrix formed according to the network topology. Specifically, it includes: mapping the distribution network into a directed graph; obtaining an ideal fault matrix D from the directed graph of the distribution network; obtaining a reported information matrix G from the information actually uploaded by the FTU; subtracting the reported information matrix G from the ideal fault matrix D to obtain a fault discriminant matrix P, thereby obtaining a fault region; and, to account for missed reporting and false reporting, establishing a fault location criterion and simulating fault conditions in which the FTU produces missed and false reports according to the fault location criterion and a corresponding conditional probability. The method is simple in reasoning and good in fault tolerance. Particularly for missed and false reporting by the FTU, the provided fault location discrimination method and the expanded fault location region can improve the positioning accuracy.

Owner:NORTH CHINA ELECTRIC POWER UNIV (BAODING) +3

Predication support in an out-of-order processor by selectively executing ambiguously renamed write operations

Inactive | US20090287908A1 | Software engineering | Digital computer details | Corresponding conditional | Image resolution

A predication technique for out-of-order instruction processing provides efficient out-of-order execution with low hardware overhead. A special op-code demarks unified regions of program code that contain predicated instructions that depend on the resolution of a condition. Field(s) or operand(s) associated with the special op-code indicate the number of instructions that follow the op-code and also contain an indication of the association of each instruction with its corresponding conditional path. Each conditional register write in a region has a corresponding register write for each conditional path, with additional register writes inserted by the compiler if symmetry is not already present, forming a coupled set of register writes. Therefore, a unified instruction stream can be decoded and dispatched with the register writes all associated with the same re-name resource, and the conditional register write is resolved by executing the particular instruction specified by the resolved condition.

Owner:IBM CORP

Predication support in an out-of-order processor by selectively executing ambiguously renamed write operations

Inactive | US7886132B2 | Software engineering | Interprogram communication | Image resolution | Corresponding conditional

Owner:INT BUSINESS MASCH CORP

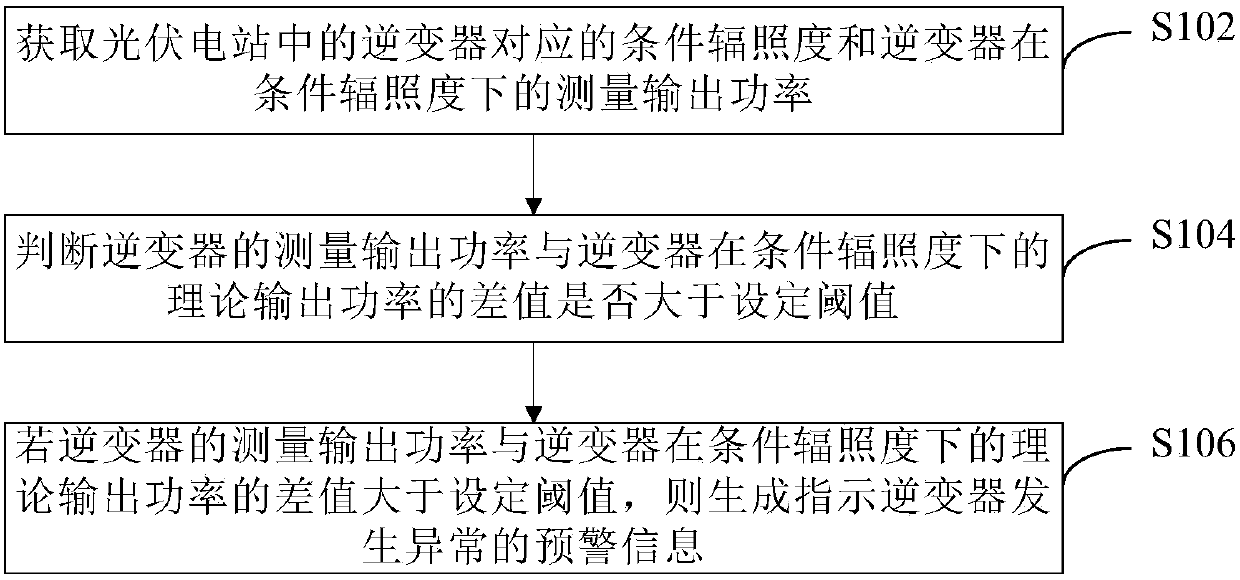

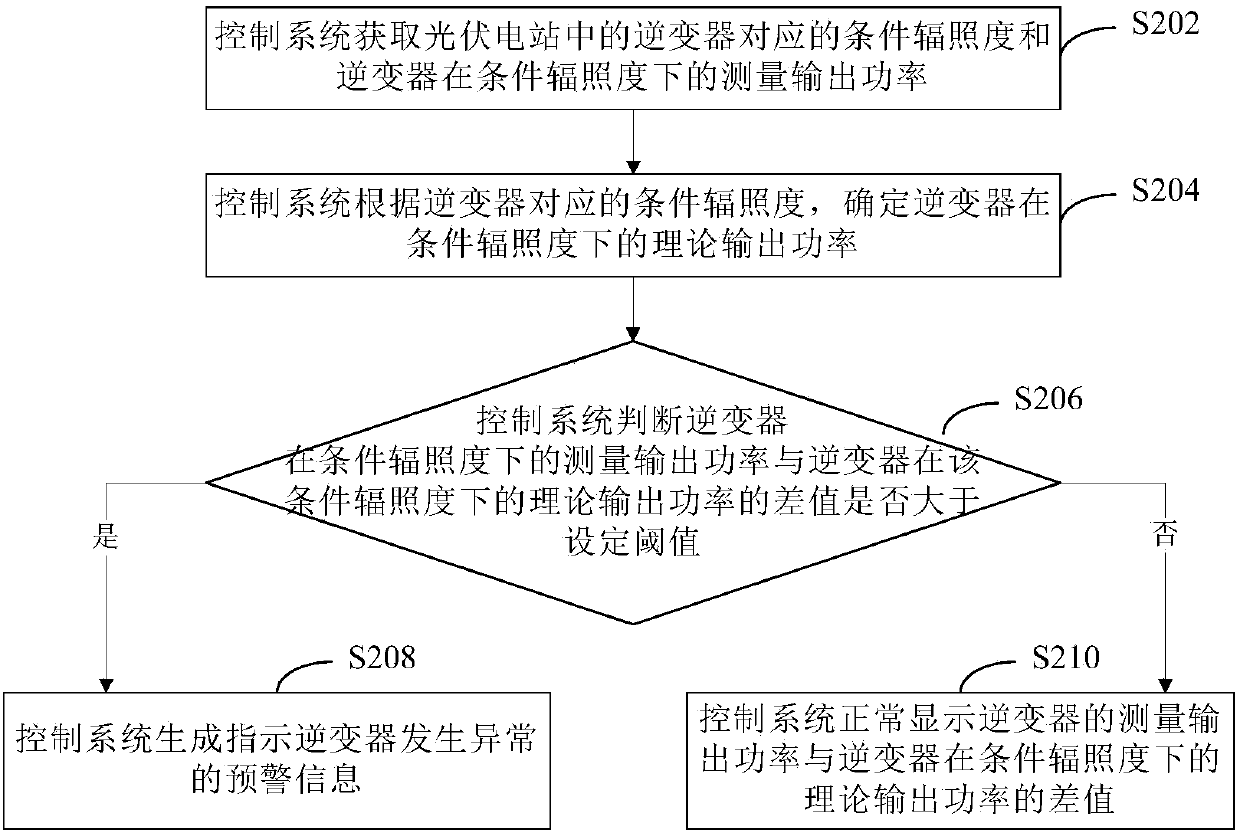

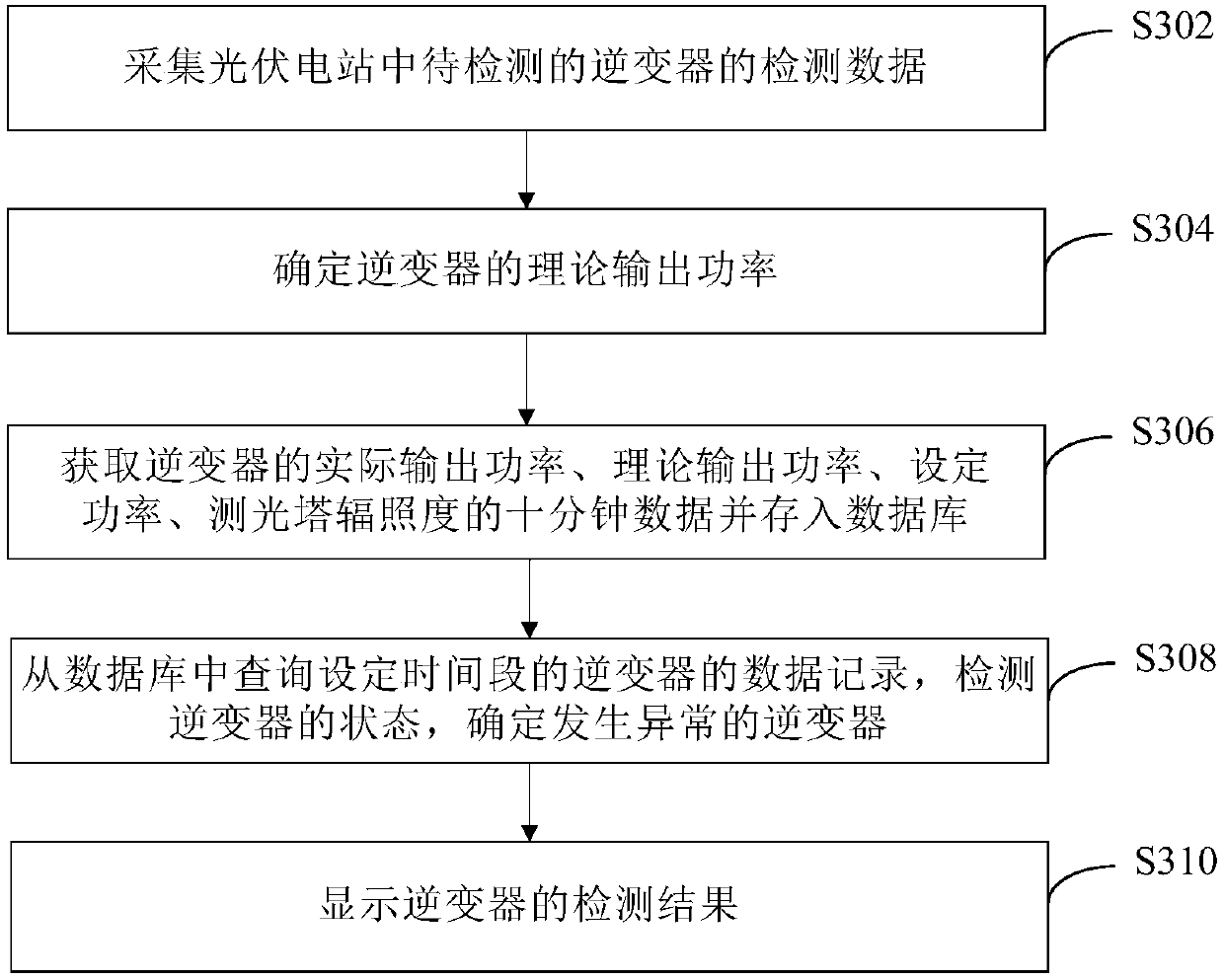

Abnormality detection method and device for inverter in photovoltaic power station

Inactive | CN107589318A | Improve operational efficiency | Improve maintenance efficiency | Electrical testing | Power inverter | Anomaly detection

The embodiment of the invention provides an abnormality detection method and device for an inverter in a photovoltaic power station. The method comprises the steps of: obtaining the corresponding conditional irradiance of the inverter in the photovoltaic power station and the measured output power of the inverter under that conditional irradiance; judging whether the difference between the measured output power of the inverter and the theoretical output power of the inverter under the conditional irradiance is greater than a set threshold value; and generating early warning information indicating an abnormality of the inverter if the difference is greater than the set threshold value. According to the embodiment of the invention, the method achieves timely and effective efficiency detection of the inverter in the photovoltaic power station, achieves prediction and early warning of the state of the inverter, and greatly improves the operation and maintenance efficiency of the photovoltaic power station.

Owner:XINJIANG GOLDWIND SCI & TECH
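
The threshold check in the abstract above is simple enough to sketch directly. The abstract does not say how the theoretical output power is obtained, so the linear irradiance model below is purely an assumption, as are the function names and the example figures.

```python
def theoretical_power_kw(irradiance_w_m2, rated_kw, reference_irradiance_w_m2=1000.0):
    """Assumed linear model: rated power scaled by irradiance relative to a reference level."""
    return rated_kw * irradiance_w_m2 / reference_irradiance_w_m2


def inverter_is_abnormal(measured_kw, irradiance_w_m2, rated_kw, threshold_kw):
    """Flag the inverter when measured and theoretical power differ by more than the threshold."""
    expected_kw = theoretical_power_kw(irradiance_w_m2, rated_kw)
    return abs(expected_kw - measured_kw) > threshold_kw


# A 500 kW inverter at 800 W/m^2 is expected to deliver 400 kW under the assumed model.
warn_low_output = inverter_is_abnormal(350.0, 800.0, 500.0, threshold_kw=30.0)
warn_normal_output = inverter_is_abnormal(390.0, 800.0, 500.0, threshold_kw=30.0)
```

The first reading is 50 kW short of the expected value and triggers a warning; the second is only 10 kW short and does not.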

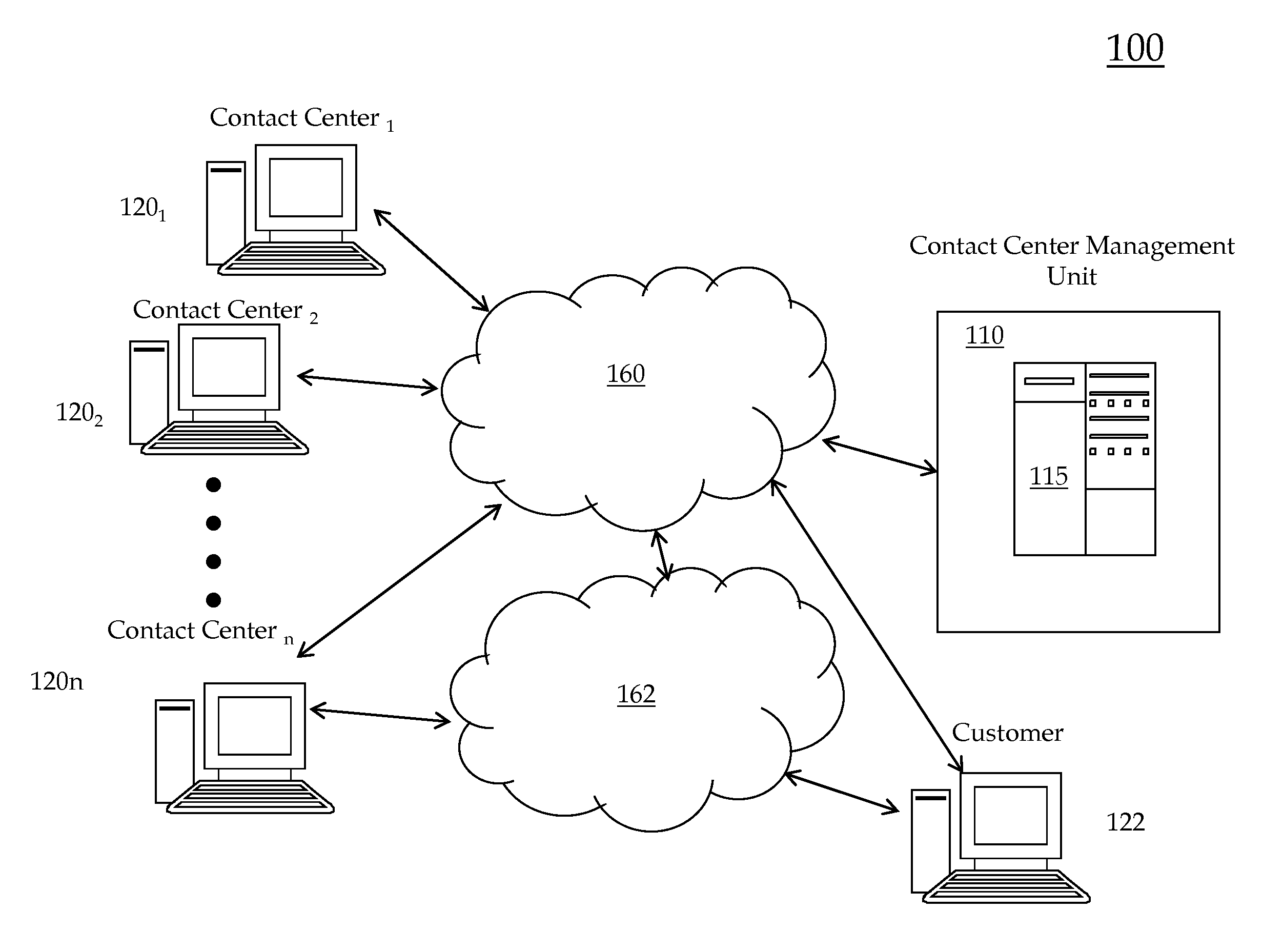

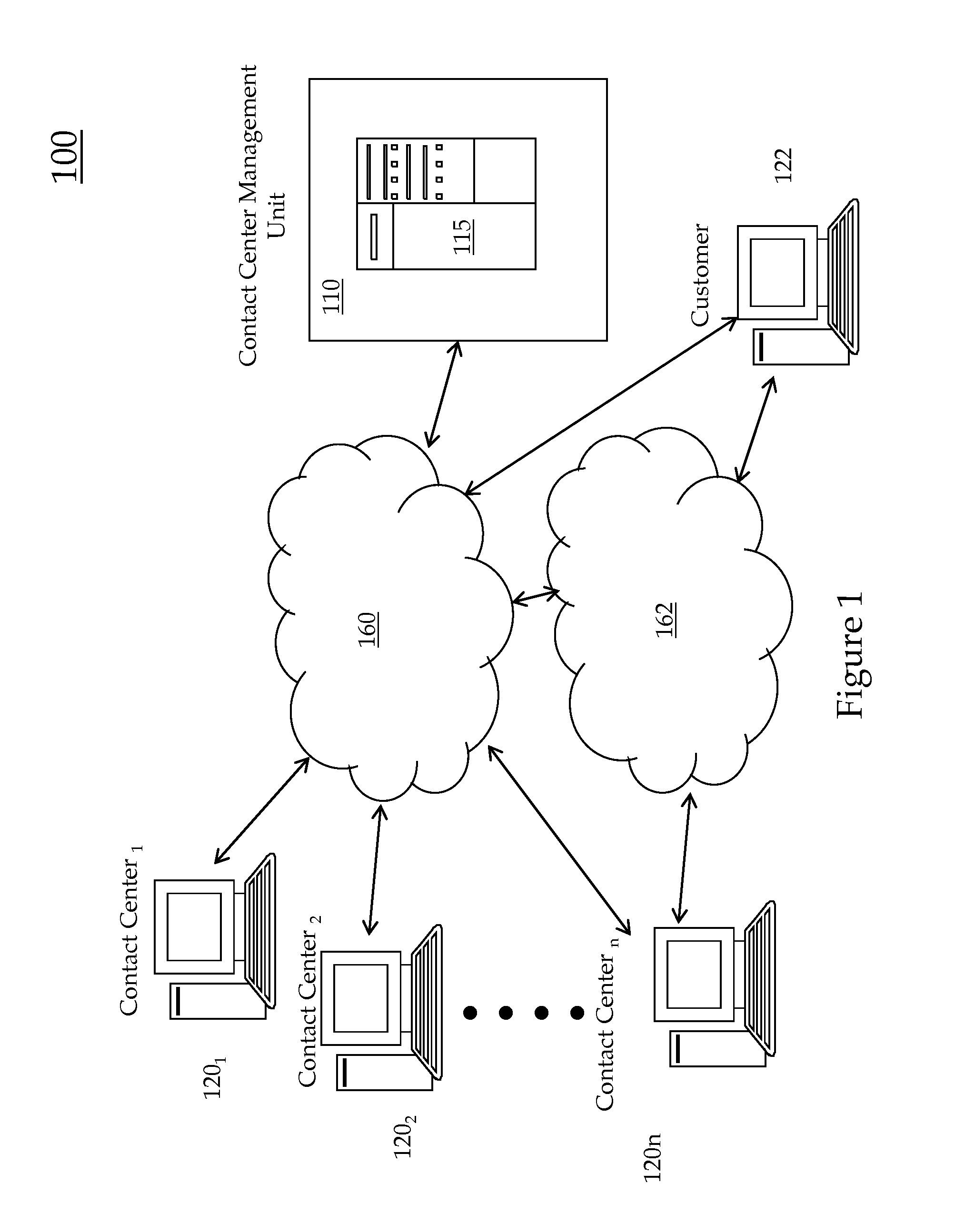

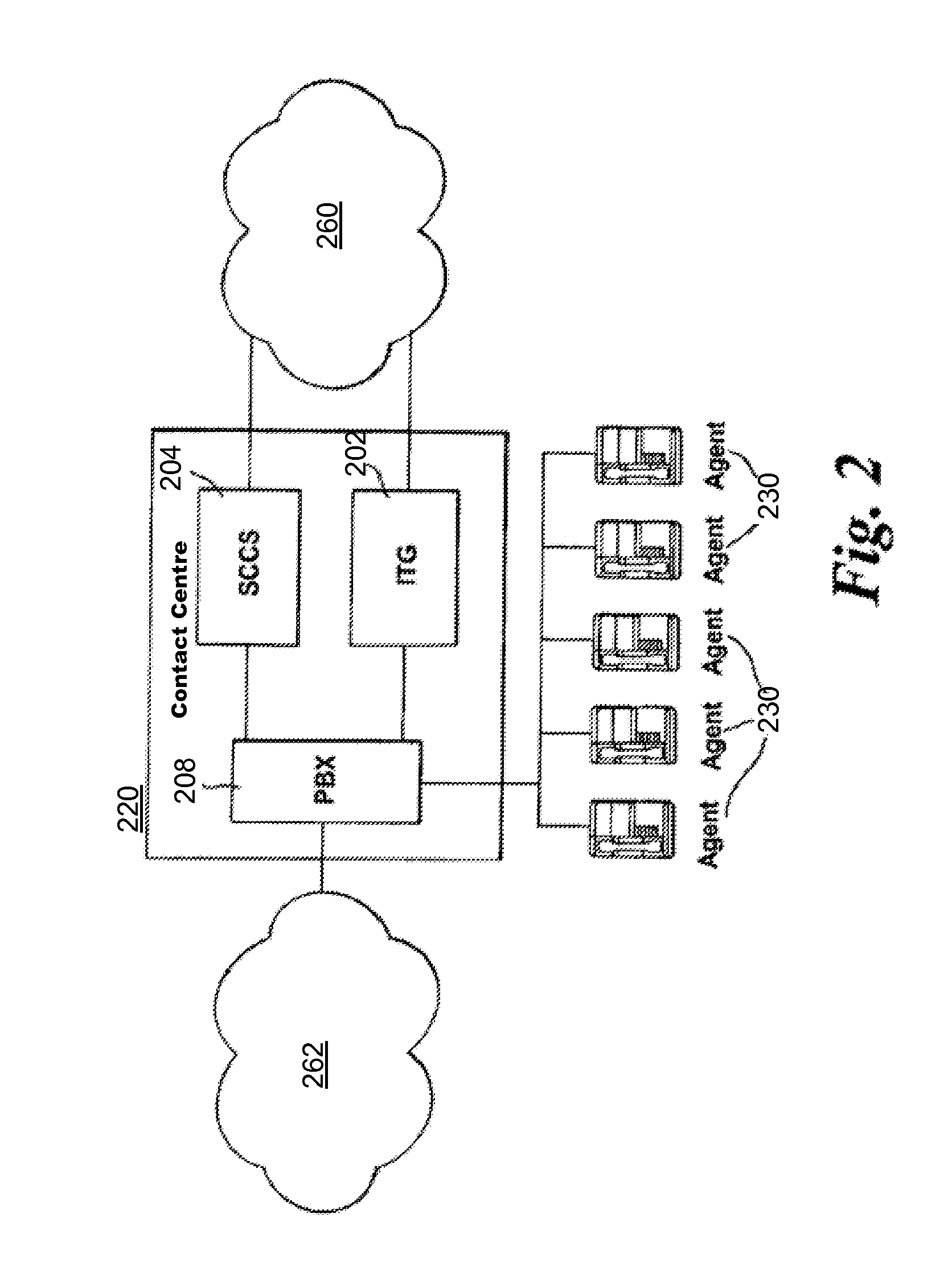

System and method for responding to changing conditions in contact centers

Embodiments of the present invention relate to a business continuity system and method where conditional profiles and processes can be set for multiple contact centers with a set of object types while each contact center object is capable of responding uniquely to a changed condition. In accordance with one embodiment, there is provided a contact center management system for managing conditions in a contact center, the system comprising a computer system database for storing conditional profiles, each conditional profile corresponding to a set of varying types of objects associated with a contact center; a condition monitoring unit for receiving condition notifications from the contact center; and a response unit for responding to a received condition notification with a corresponding conditional profile having the set of objects associated with that conditional profile, wherein multiple object types in the set of objects are capable of uniquely responding to the received condition.

Owner:AVAYA INC

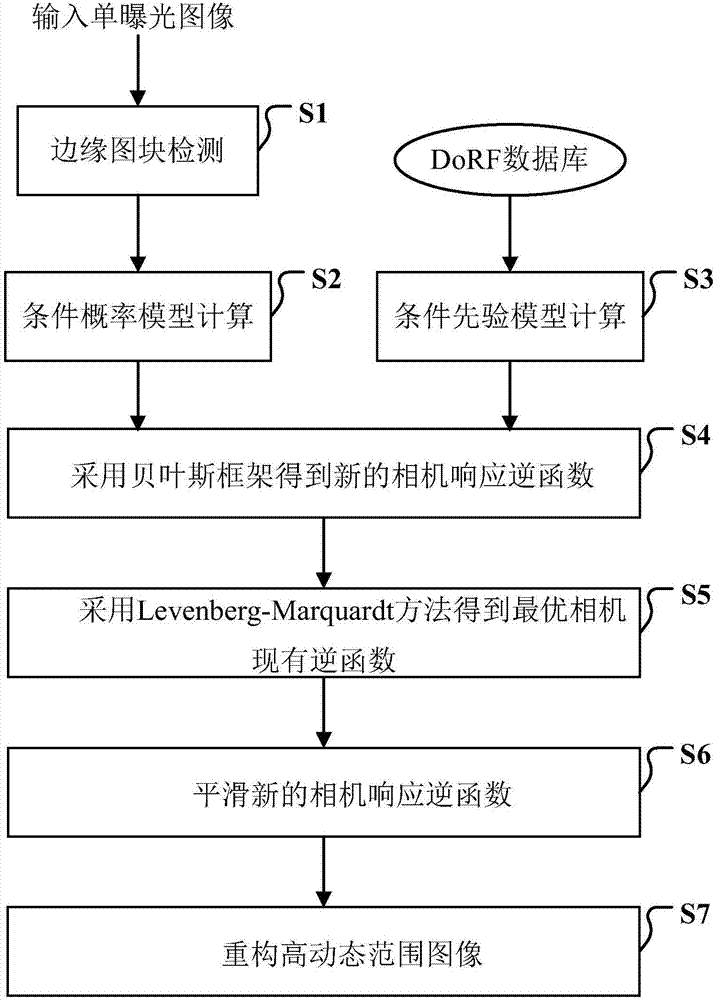

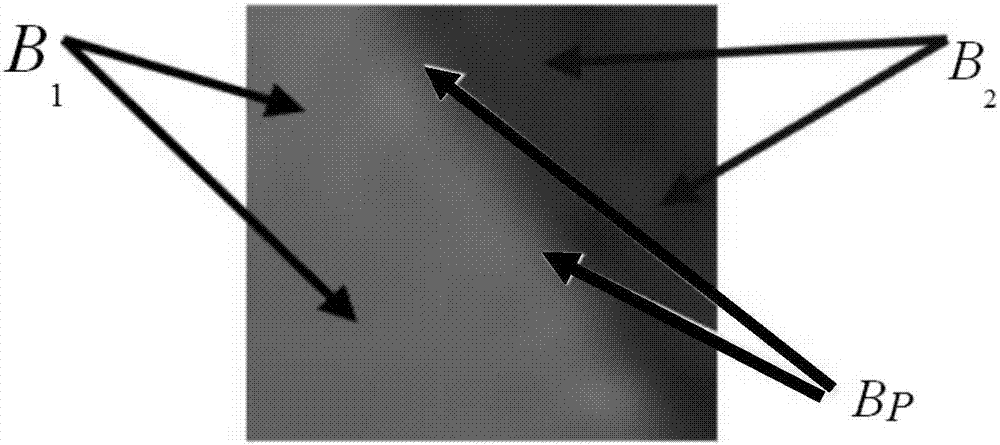

Single-frame image-based high-dynamic range image generation method

Inactive | CN107451970A | Avoid artifacts | Image enhancement | Television system details | Corresponding conditional | Maximum a posteriori estimation

The invention discloses a single-frame image-based high-dynamic range image generation method. According to the method, an edge image block set is found through edge detection, and the total distance of the edge image block set is calculated under a camera response inverse function; a corresponding conditional probability model is constructed; a sampling Gaussian mixture model is constructed according to 201 camera response curves in a DoRF database so as to describe a priori probability model; a maximum posteriori probability model is obtained by using a Bayesian framework; new camera response inverse functions are obtained under a condition that the maximum posteriori probability model is maximum; a Levenberg-Marquardt method is adopted to iteratively solve the new camera response inverse functions to obtain an optimal camera response inverse function; and reconstruction is performed according to the optimal camera response inverse function, so that a high-dynamic range image can be obtained. Image dynamic range expansion is performed in a high-brightness area and a low-brightness area simultaneously, and therefore, the problem of artifacts and details loss at the dark area of the image caused by a method only performing image dynamic range expansion in the high-brightness area can be avoided.

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

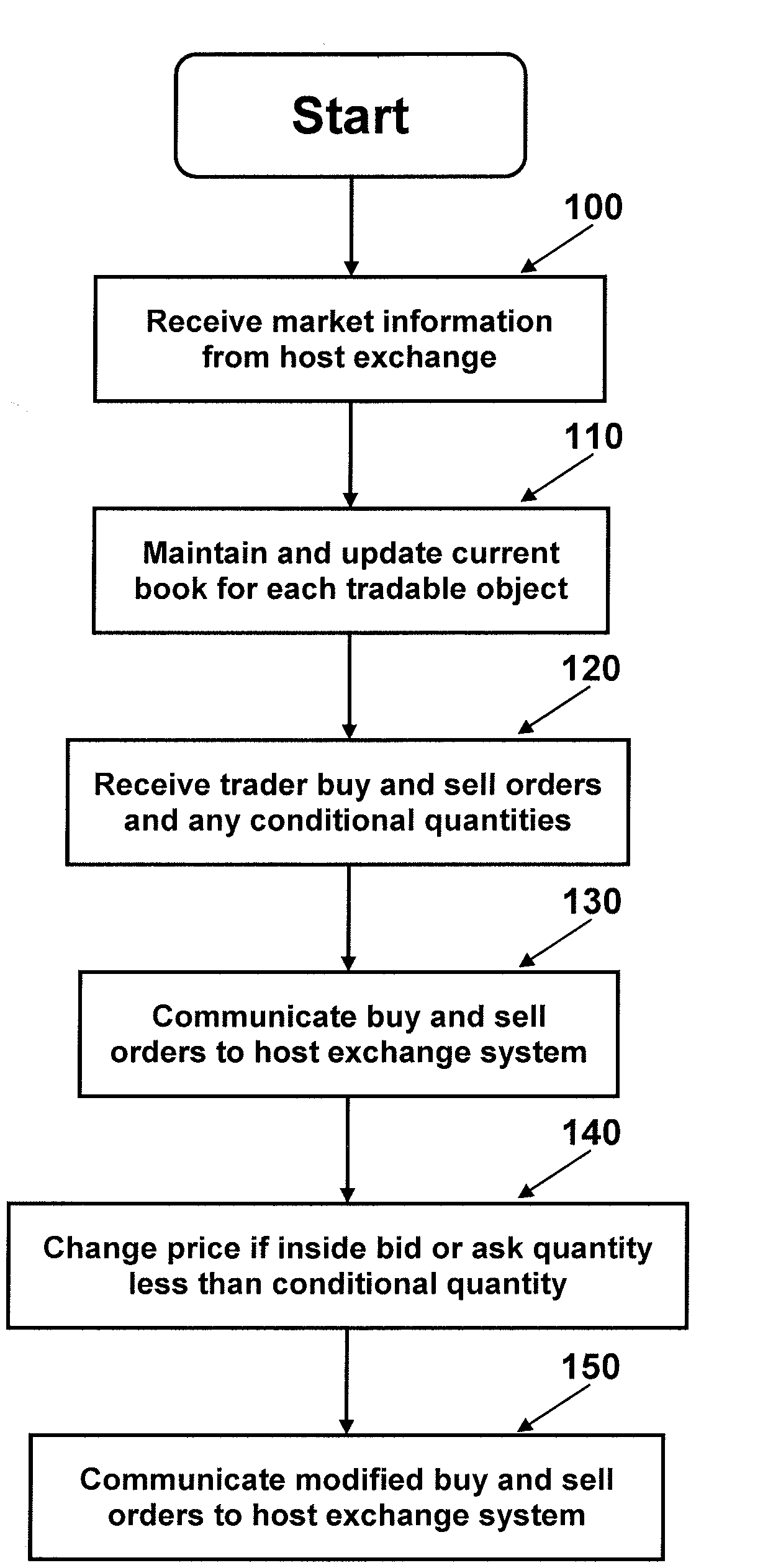

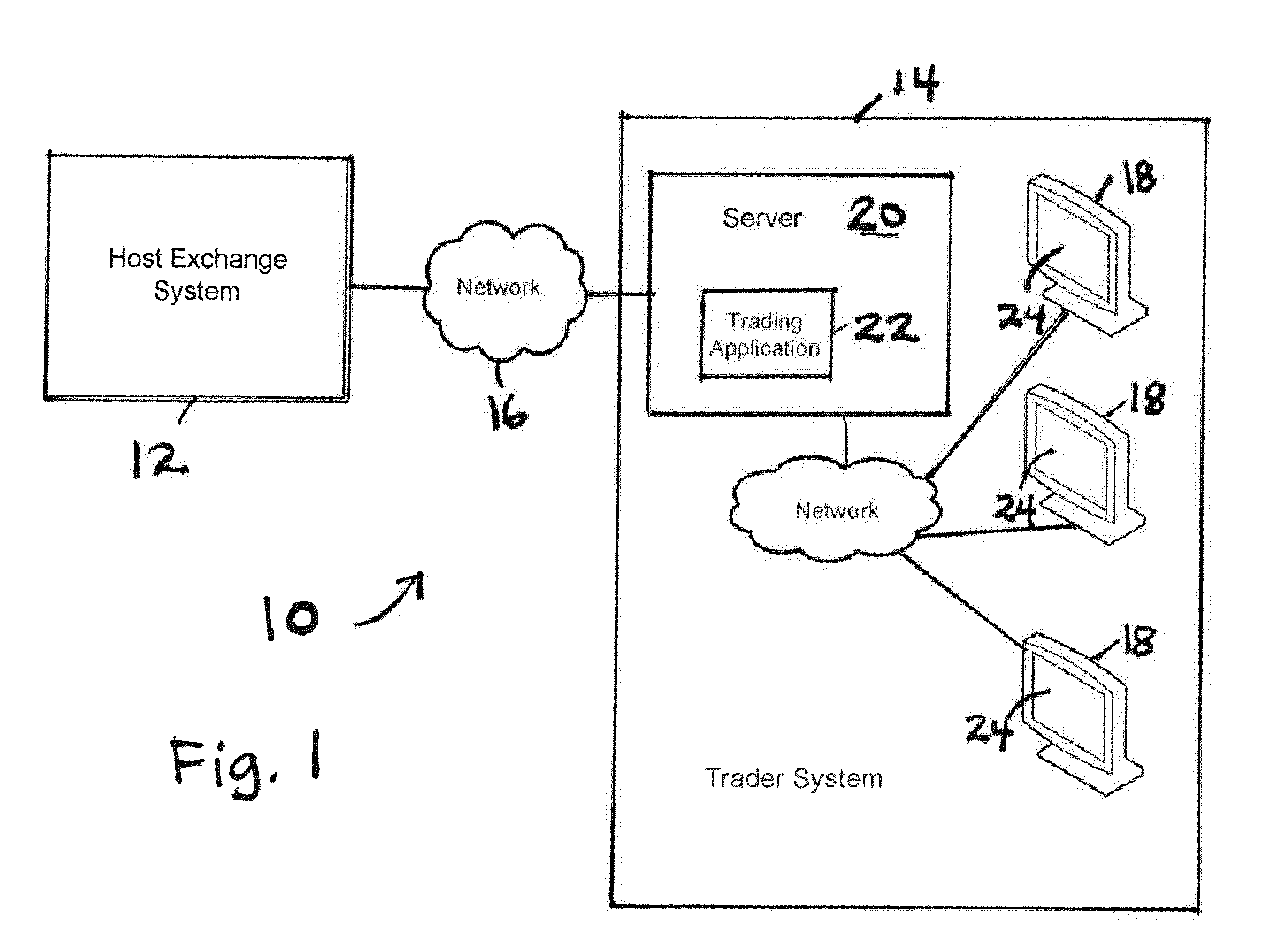

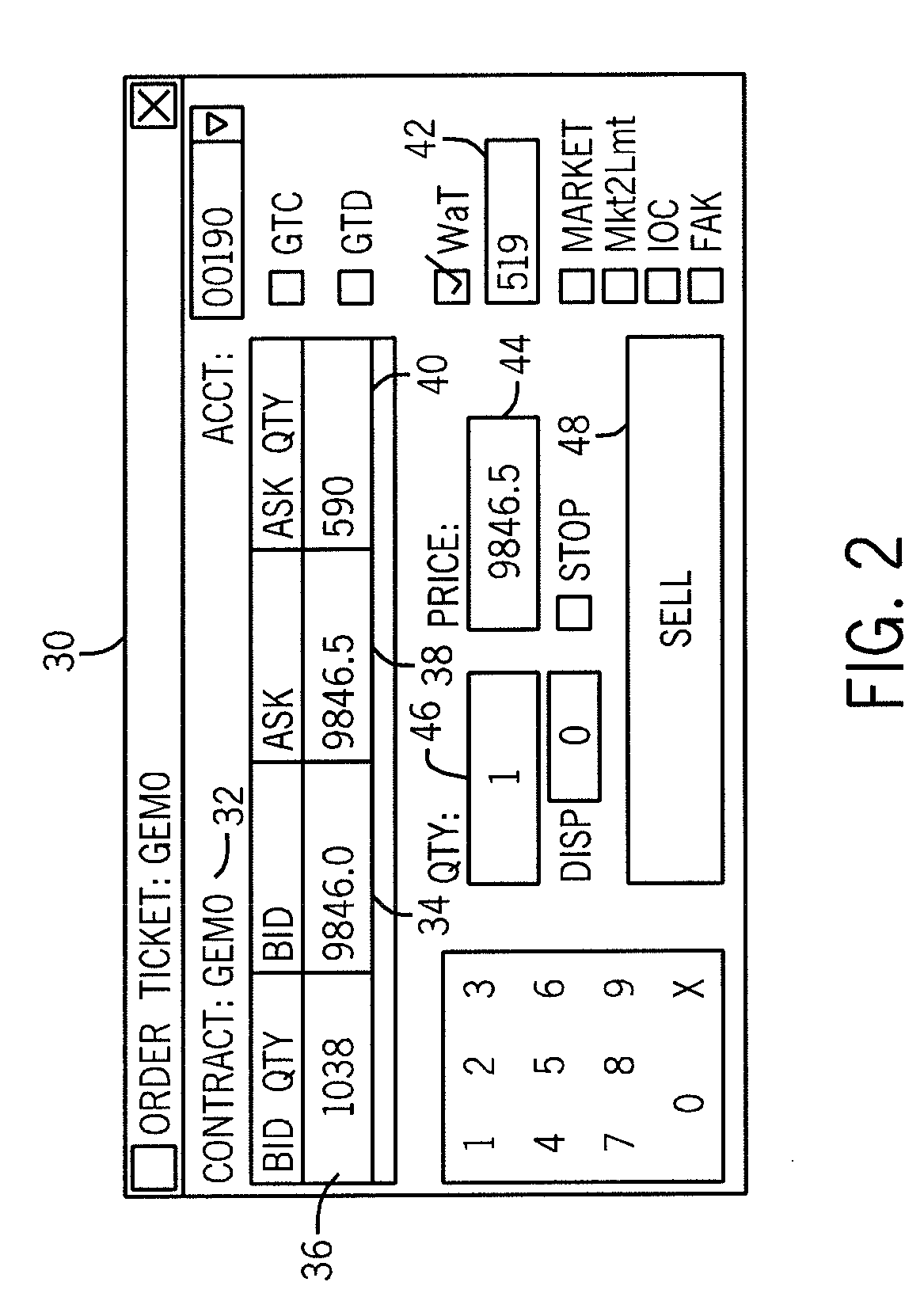

System and Method for Conditional Modification of Buy and Sell Orders in Electronic Trading Exchange

Inactive | US20100312716A1 | Raise the possibility | Good price | Finance | Multiple digital computer combinations | User device | Corresponding conditional

A system and method for automatically modifying buy and sell orders in an electronic trading exchange is described. The trader system includes a user device for submitting trader buy and sell orders and conditional quantities, and a trading application which receives the trader buy and sell orders and conditional quantities and communicates these to a host exchange system. For each conditional quantity associated with a trader buy order, the trading application monitors an inside ask quantity associated with the identified desired tradable object of that trader buy order such that if the associated inside ask quantity becomes less than the conditional quantity, then the trading application modifies the trader buy order to increase the desired buy price by a predetermined user-defined increment or to the inside ask price associated with the identified desired tradable object and communicates the modified buy order to the host exchange system. Similar operation occurs for a trader sell order, with the desired inside sell price being decreased if an associated inside bid quantity becomes less than the corresponding conditional quantity.

Owner:TRADING TECH INT INC
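
The buy-side rule in the abstract above can be expressed as a small pure function. This is a sketch of the stated condition only: the `BuyOrder` type, its fields and the function name are hypothetical, and communication with the host exchange is left out.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class BuyOrder:
    """Hypothetical order record with the trader's conditional quantity attached."""
    price: float
    quantity: int
    conditional_quantity: int


def maybe_modify_buy(order: BuyOrder, inside_ask_price: float, inside_ask_qty: int,
                     increment: Optional[float] = None) -> BuyOrder:
    """Raise the buy price once the inside ask quantity falls below the conditional quantity.

    With an increment, the price rises by that amount; otherwise it moves to the inside ask.
    """
    if inside_ask_qty >= order.conditional_quantity:
        return order  # condition not met, leave the order untouched
    new_price = inside_ask_price if increment is None else order.price + increment
    return replace(order, price=new_price)


order = BuyOrder(price=100.0, quantity=10, conditional_quantity=50)
unchanged = maybe_modify_buy(order, inside_ask_price=100.5, inside_ask_qty=60)
moved_to_ask = maybe_modify_buy(order, inside_ask_price=100.5, inside_ask_qty=40)
stepped_up = maybe_modify_buy(order, inside_ask_price=100.5, inside_ask_qty=40, increment=0.25)
```

The sell side would mirror this logic, lowering the price when the inside bid quantity drops below the conditional quantity.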

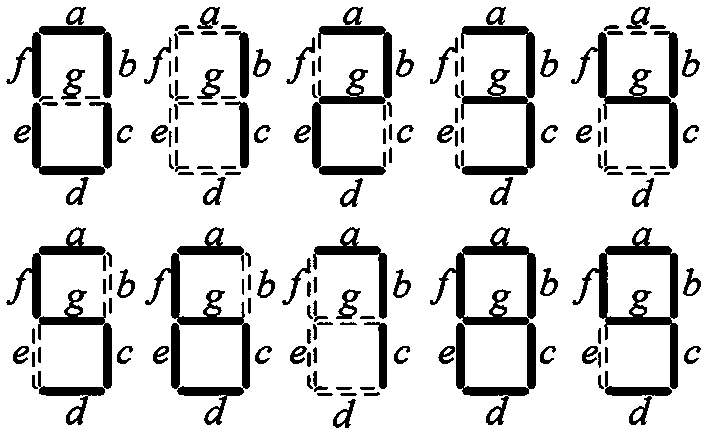

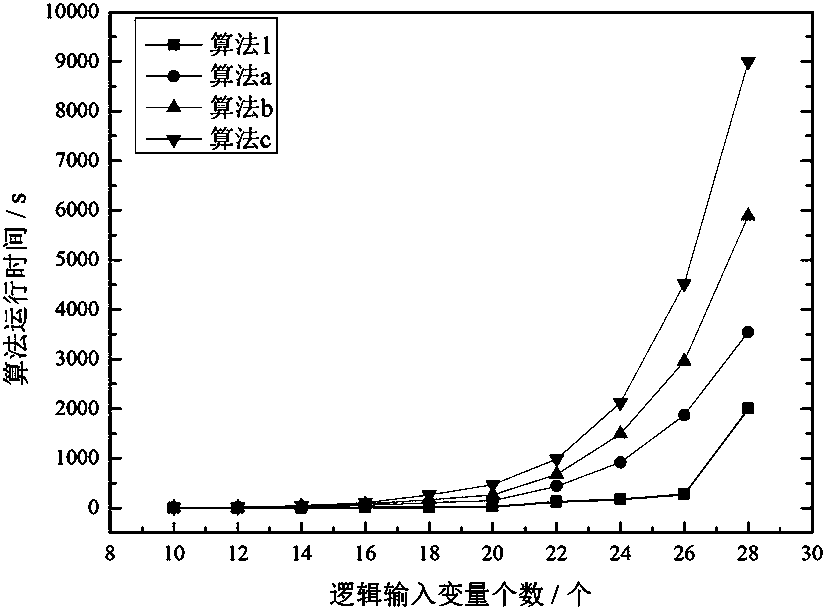

Form vector-based reduction method for multi-input multi-output truth table

Pending | CN110263368A | Correctness statement | Guaranteed to minimize | Special data processing applications | Multi input | Truth value

The invention discloses a form vector-based reduction method for a multi-input multi-output truth table, and belongs to the technical field of logic circuit optimization. The technical problem to be solved is to provide a form vector-based reduction method for a multi-input multi-output truth table. The technical scheme for solving the technical problem comprises the following steps: 1, converting a truth table into a decision form background; 2, solving all single-attribute non-zero form vectors according to the conditional form background and the decision form background, and respectively storing the vectors into a conditional form vector set and a decision form vector set; 3, for any conditional form vector and decision form vector, if a rule reduction condition is met, calculating a heuristic operator of the corresponding conditional form vector, and storing the conditional form vector completing the rule extraction operation into a redundant form vector set. The method is used for optimizing the logic relation between the input and the output of the digital logic circuit.

Owner:TAIYUAN UNIV OF TECH

Method for Selecting Valid Variants in Search and Recommendation Systems (Variants)

Inactive | US20160004775A1 | Reduce computational complexity | The result is accurate | Web data indexing | Digital data processing details | Corresponding conditional | Method selection

Selecting and ranking valid variants in search and recommendation systems selects and ranks variants with accuracy and speed. Criteria for evaluating the relevance of a variant to the search request are generated. A set of procedures for the selection and ranking of variants and a sequence for performing said procedures for the selection of variants evaluated as the most valid are established. An evaluation of each variant is based on relevance to search request criteria. The variants are then ranked by assigning a rank to each variant based on the condition of correspondence to the greatest number of criteria in decreasing order. Then the variants are selected and ranked in at least two stages using the superposition method, and the variants are selected, ranked and excluded until all of the established selection procedures have been used and the selected group of variants is evaluated as being the most valid.

Owner:FEDERALNOE GOSUDARSTVENNOE AVTONOMNOE OBRAZOVATELNOE UCHREZHDENIE VYSSHEGO PROFESSIONALNOGO OBRAZOVANIJA NATSIONALNYJ ISSLEDOVATELSKIJ UNIV VYSSHAJA SHKOLA EHKONOMIKI

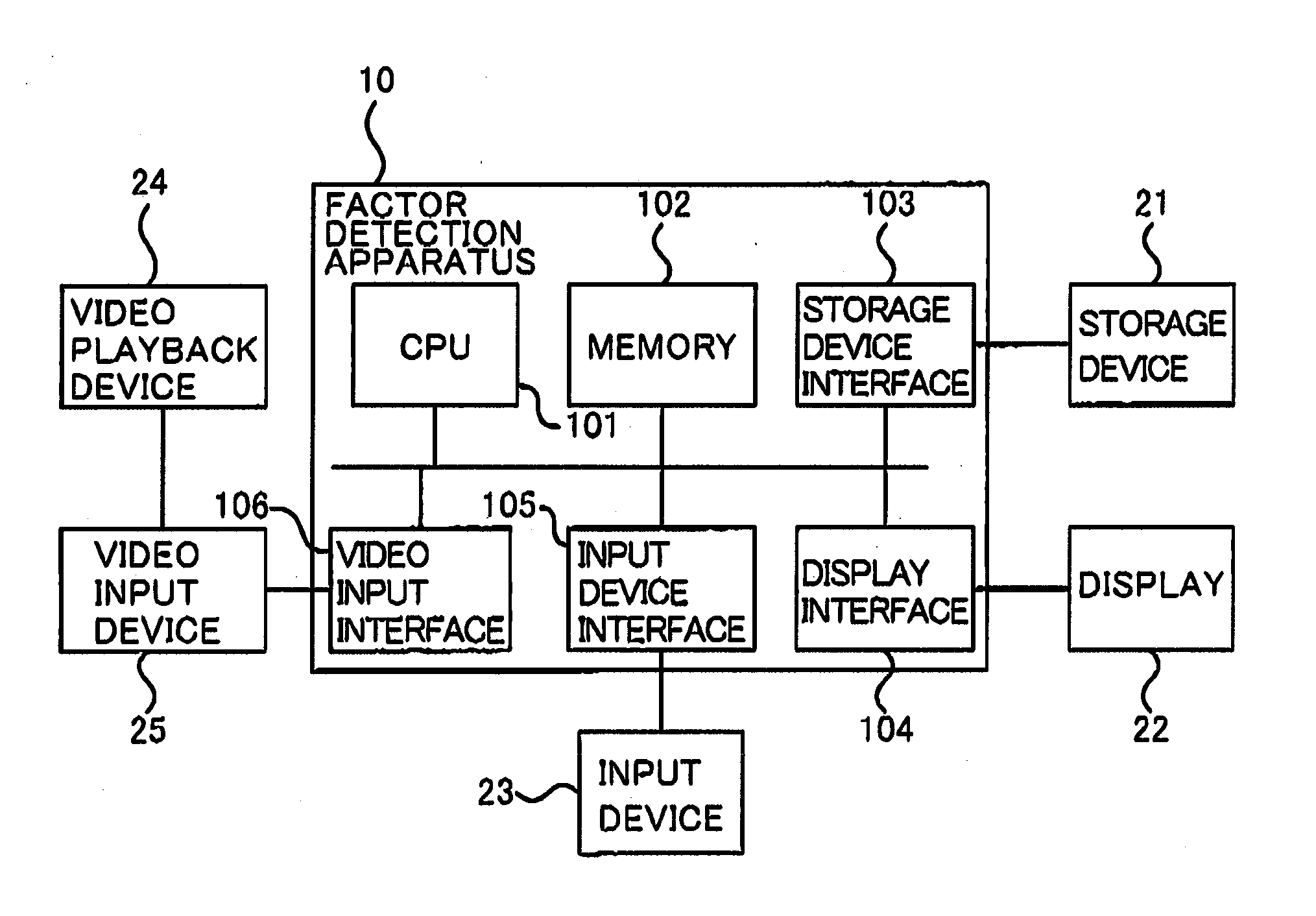

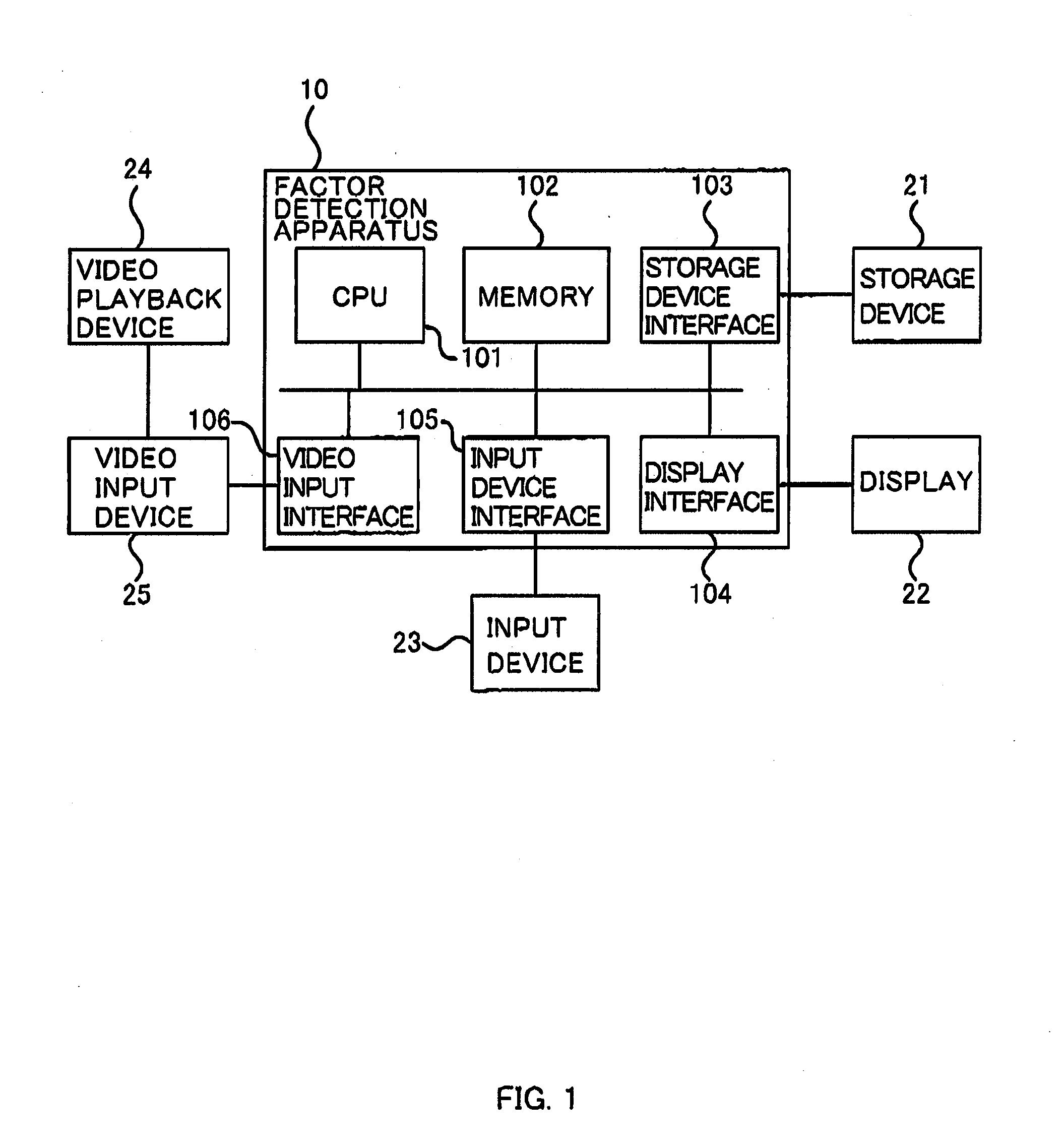

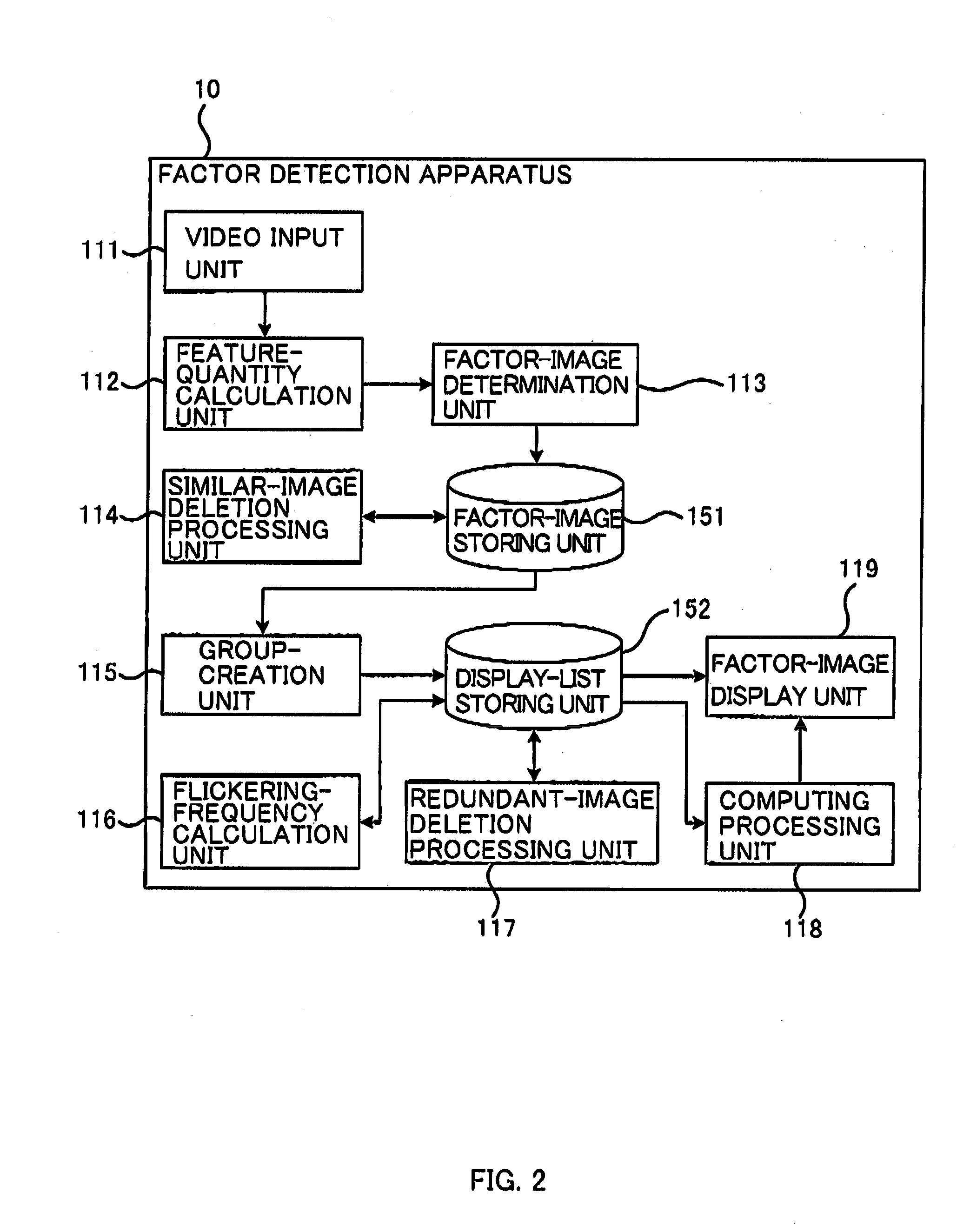

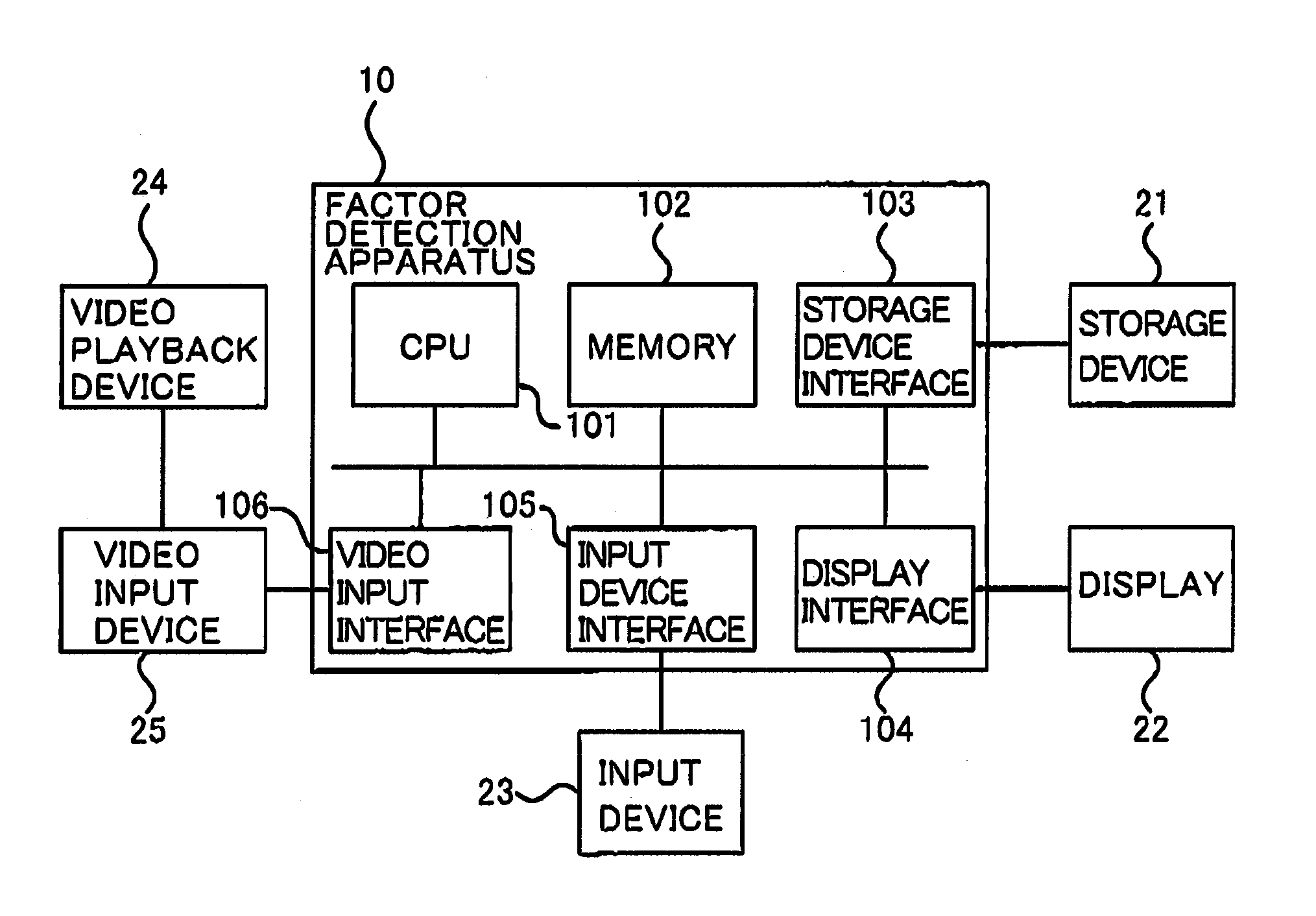

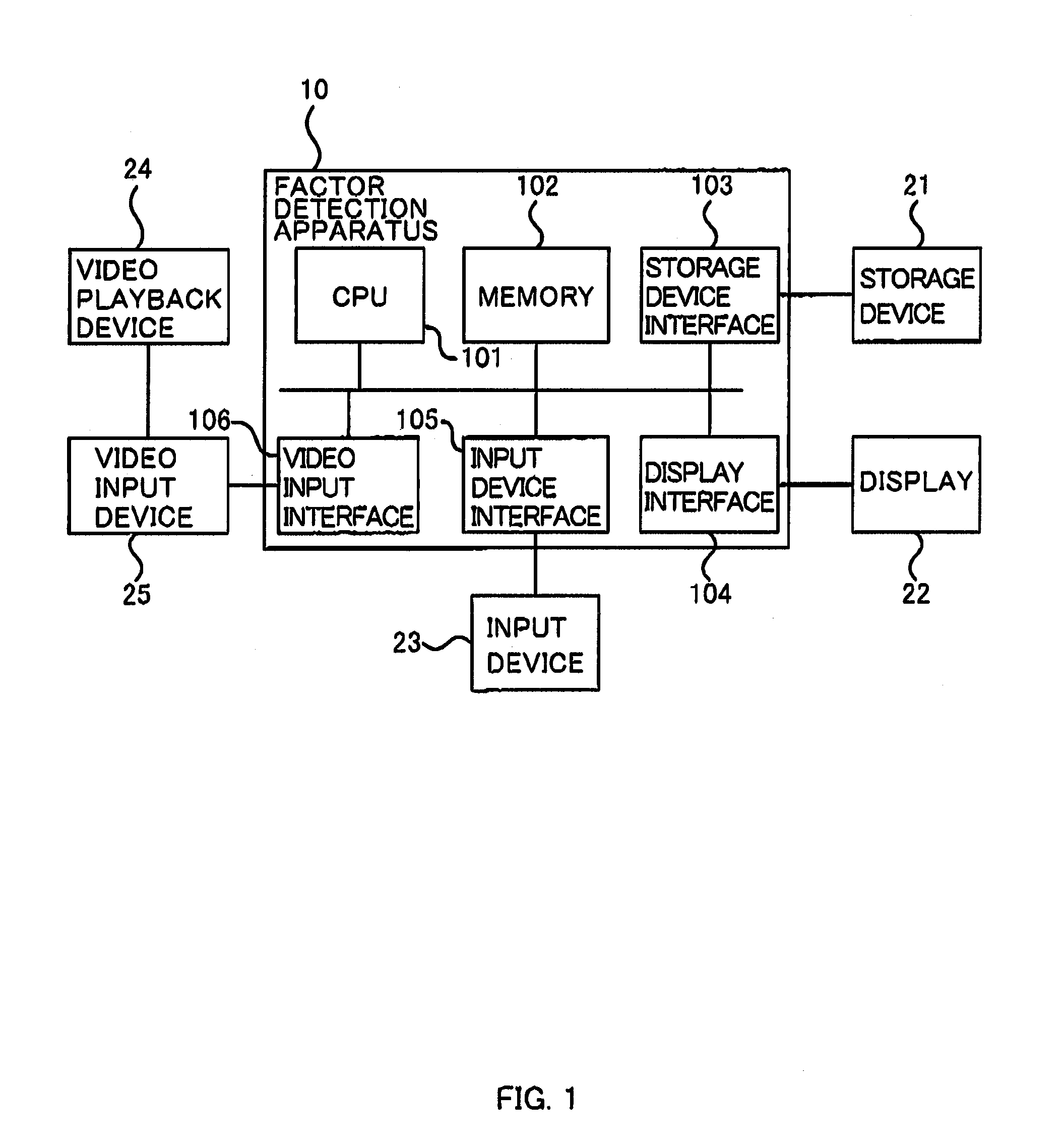

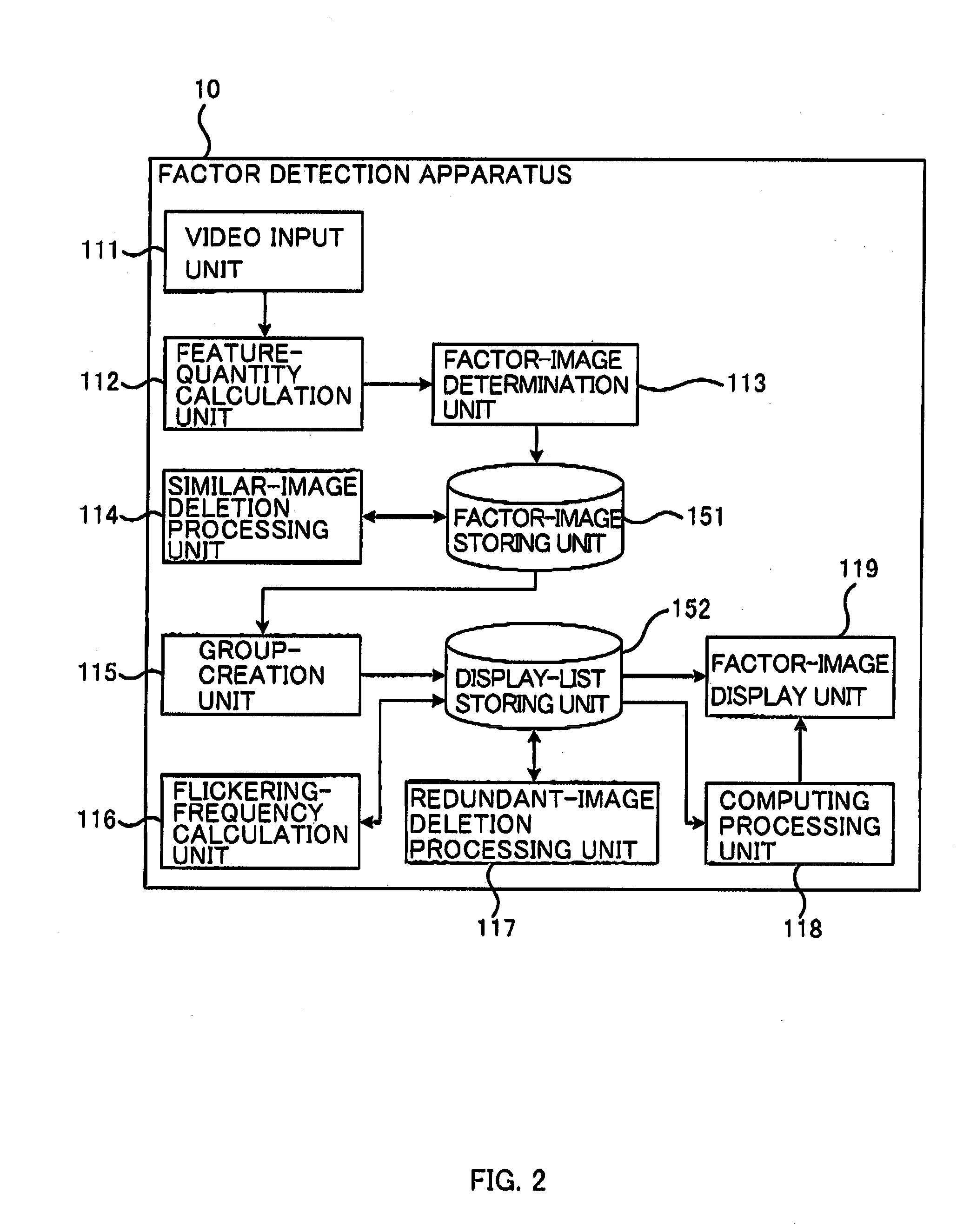

Information processor, method of detecting factor influencing health, and program

Inactive | US20110002512A1 | Easy to master | Electronic editing digitised analogue information signals | Record information storage | Pattern recognition | Corresponding conditional

Provided is an information processor which detects factors, in a video, influencing health, and which includes: an image input unit which receives a series of image data to process a plurality of consecutive still images constituting the video; a feature-quantity calculation unit which calculates a feature quantity of each still image on the basis of the image data; a conditional-expression storing unit which stores a conditional expression, for each factor, to determine whether each of the still images produces the factor on the basis of the corresponding feature quantity; a factor determination unit which determines, for each factor, whether each of the still images produces the factor on the basis of the corresponding feature quantity and the corresponding conditional expression; and an image-list display unit which displays, for each factor, a list of information indicating the still images determined to produce the factor.

Owner:HITACHI CONSULTING
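The factor determination step described in the abstract can be sketched as a lookup of one conditional expression per factor, applied to each still image's feature quantity. This is a minimal illustration only: the factor names, feature fields, and thresholds below are hypothetical and are not taken from the patent.

```python
from typing import Callable, Dict, List

# Hypothetical feature quantities computed for one still image.
Features = Dict[str, float]

# Conditional-expression store: one predicate per factor (illustrative thresholds).
CONDITIONS: Dict[str, Callable[[Features], bool]] = {
    "flash": lambda f: f["luminance_delta"] > 0.2,
    "red_saturation": lambda f: f["red_ratio"] > 0.8,
}

def determine_factors(frames: List[Features]) -> Dict[str, List[int]]:
    """For each factor, list the indices of frames whose features satisfy its condition."""
    return {
        factor: [i for i, feats in enumerate(frames) if cond(feats)]
        for factor, cond in CONDITIONS.items()
    }

frames = [
    {"luminance_delta": 0.05, "red_ratio": 0.10},
    {"luminance_delta": 0.35, "red_ratio": 0.90},
    {"luminance_delta": 0.25, "red_ratio": 0.30},
]
result = determine_factors(frames)
```

The per-factor index lists in `result` correspond to what the image-list display unit would show for each factor.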

Information processor, method of detecting factor influencing health, and program

Inactive · US8059875B2 · Easily grasp what factor the displayed still image produces · Easy to master · Electronic editing digitised analogue information signals · Record information storage · Pattern recognition · Corresponding conditional

Provided is an information processor which detects factors, in a video, influencing health, and which includes: an image input unit which receives a series of image data to process a plurality of consecutive still images constituting the video; a feature-quantity calculation unit which calculates a feature quantity of each still image on the basis of the image data; a conditional-expression storing unit which stores a conditional expression, for each factor, to determine whether each of the still images produces the factor on the basis of the corresponding feature quantity; a factor determination unit which determines, for each factor, whether each of the still images produces the factor on the basis of the corresponding feature quantity and the corresponding conditional expression; and an image-list display unit which displays, for each factor, a list of information indicating the still images determined to produce the factor.

Owner:HITACHI CONSULTING

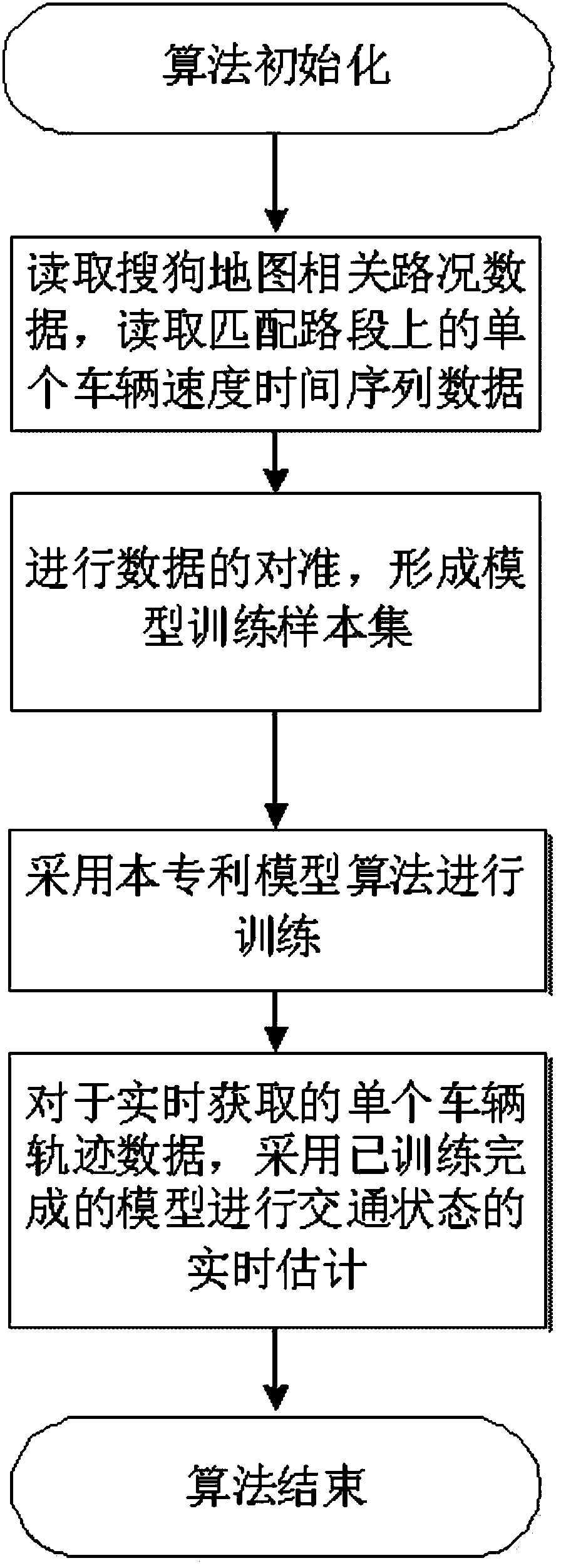

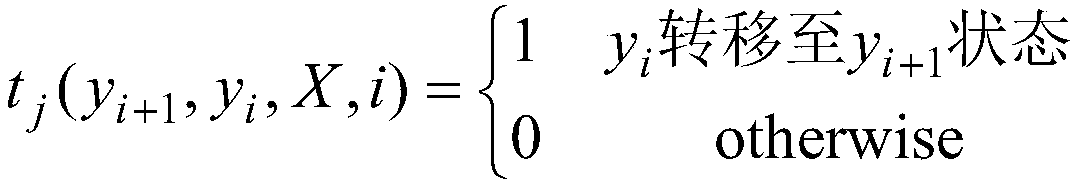

Conditional random field based real time traffic state estimation method

Inactive · CN107767036A · Less stringent requirements · Improve estimation performance · Forecasting · Resources · Conditional random field · Estimation methods

The invention discloses a conditional random field based real-time traffic state estimation method. By learning from historical data, the method constructs the correspondence between how a single vehicle's speed sequence changes during its motion and the degree of road congestion. A conditional random field model is used to learn the implication relation between the change in the speed sequence and the degree of congestion, and the learning result is a corresponding conditional random field model. After the model is constructed, the traffic condition of a specific road segment can be estimated accurately in real time from the speed sequence data of a single vehicle acquired in that segment. The strict requirements for data acquisition are therefore reduced, and a good estimation effect can be achieved under comparatively simple collection conditions (for example, when only the speed sequence data of a single vehicle is needed).

Owner:北斗导航位置服务(北京)有限公司

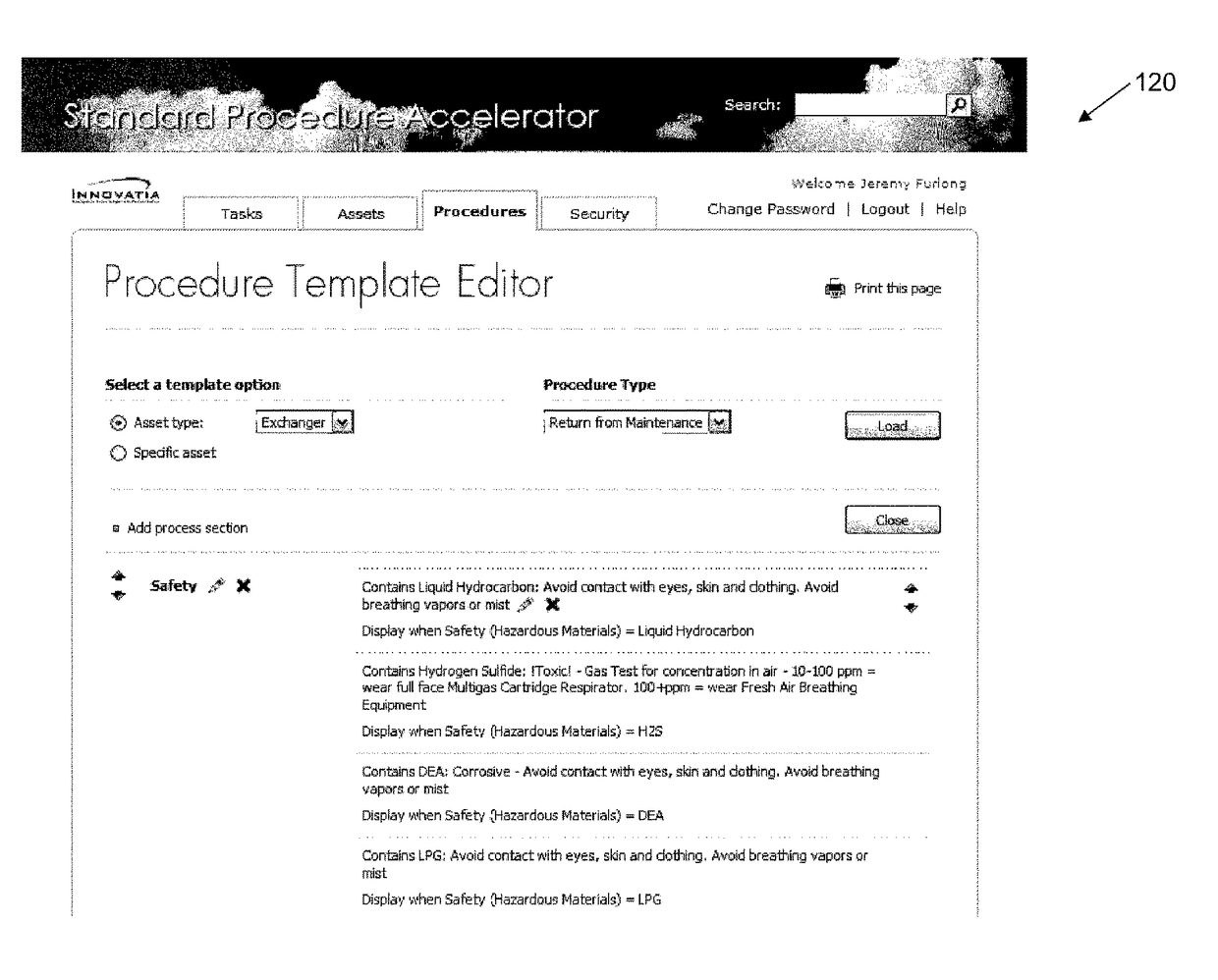

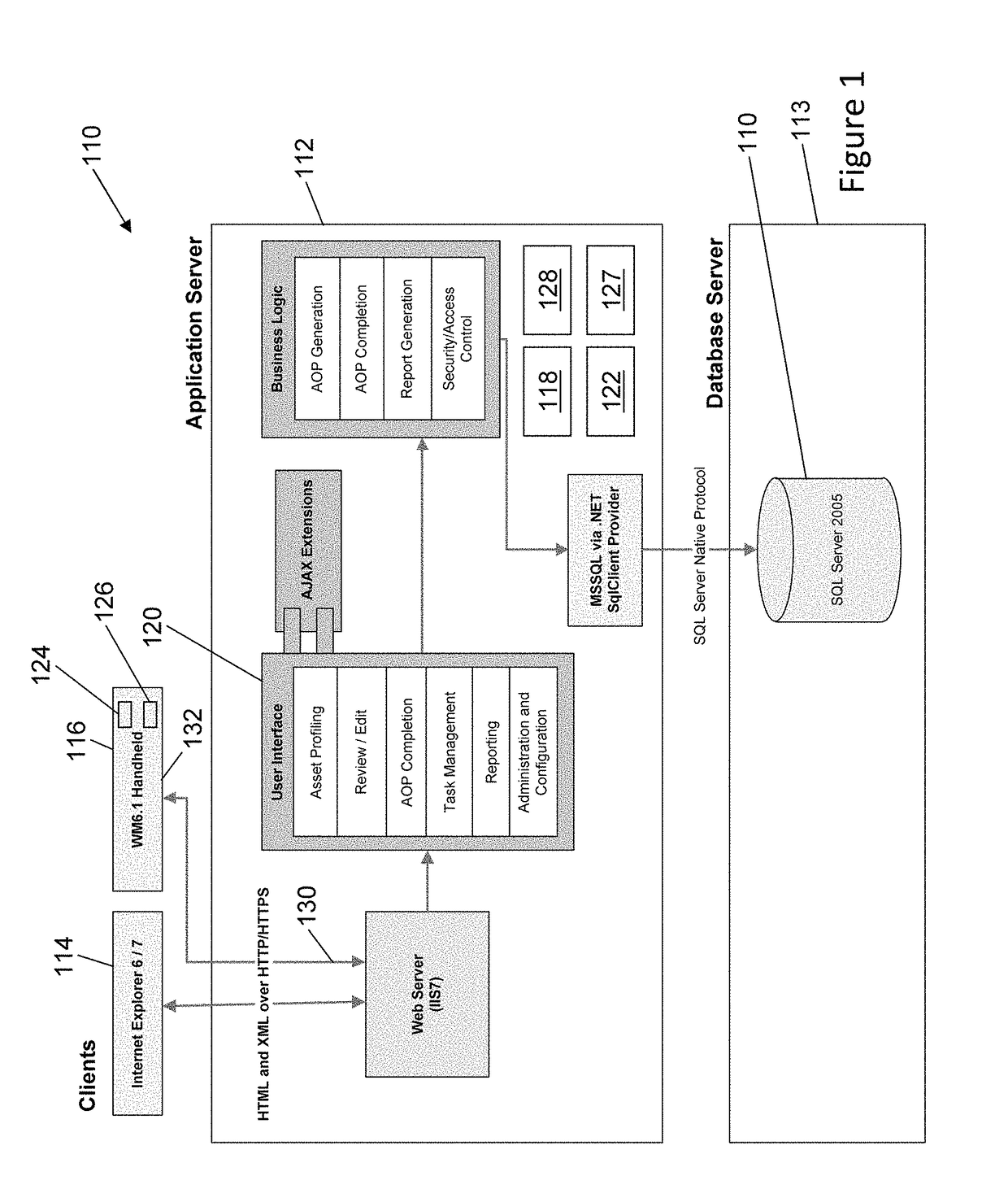

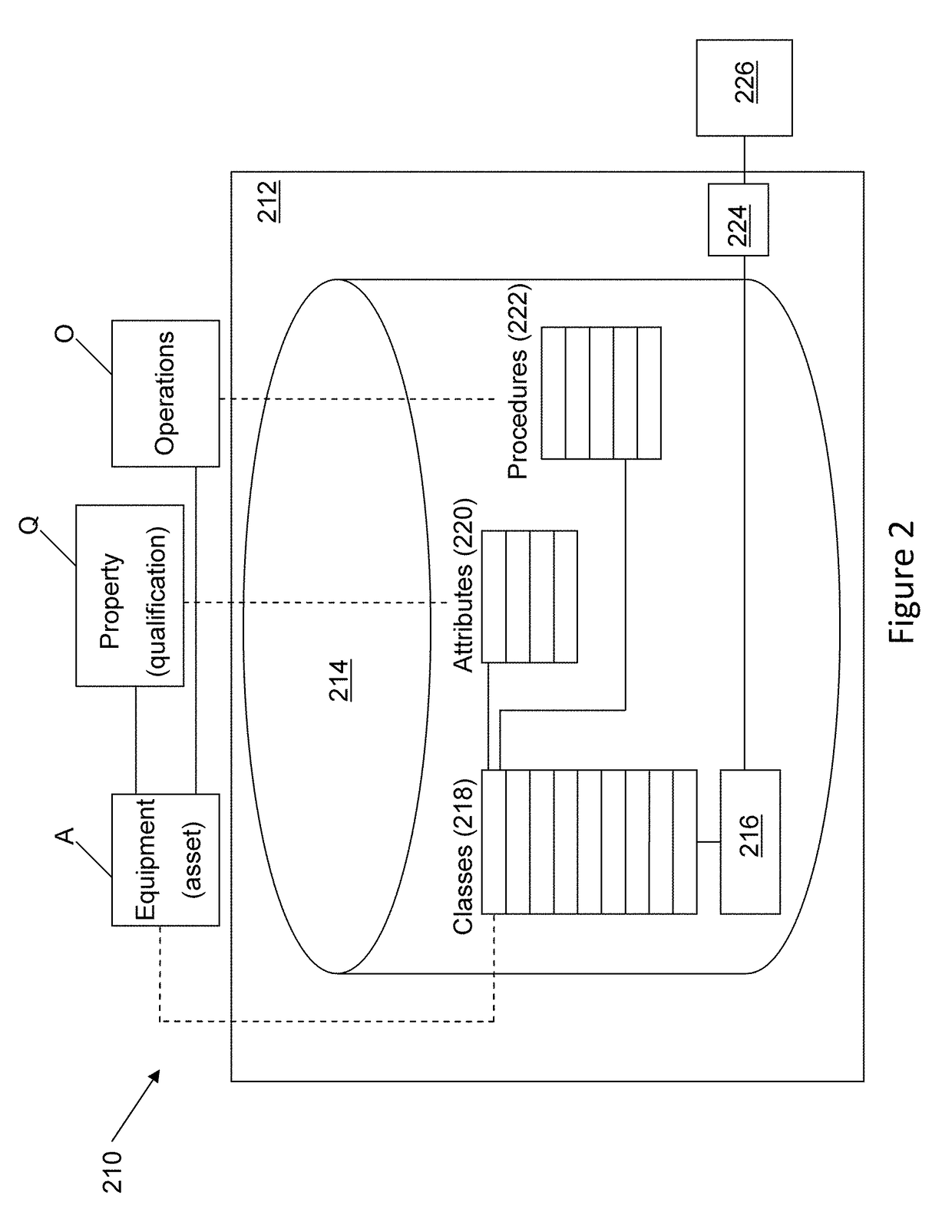
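To make the estimation step of the conditional random field entry above concrete, the sketch below decodes the most likely congestion labels for one vehicle's speed observations with a linear-chain model and Viterbi decoding. It covers decoding only, not learning: the two states, the discretised observations (`fast`, `slow`), and all weights are hand-set assumptions for illustration, whereas the patent describes learning the model from historical data.

```python
from typing import Dict, List

STATES = ["free", "congested"]

# Hypothetical log-potentials; a trained model would supply these.
EMIT: Dict[str, Dict[str, float]] = {
    "free": {"fast": 2.0, "slow": -1.0},
    "congested": {"fast": -1.0, "slow": 2.0},
}
TRANS: Dict[str, Dict[str, float]] = {
    "free": {"free": 1.0, "congested": -0.5},
    "congested": {"free": -0.5, "congested": 1.0},
}

def viterbi(obs: List[str]) -> List[str]:
    """Return the highest-scoring state sequence for the observed speed symbols."""
    score = {s: EMIT[s][obs[0]] for s in STATES}
    back = []  # one back-pointer table per step after the first
    for o in obs[1:]:
        prev, score, ptr = score, {}, {}
        for s in STATES:
            best = max(STATES, key=lambda p: prev[p] + TRANS[p][s])
            score[s] = prev[best] + TRANS[best][s] + EMIT[s][o]
            ptr[s] = best
        back.append(ptr)
    # Trace the best path backwards from the best final state.
    path = [max(STATES, key=score.get)]
    for ptr in reversed(back):
        path.append(ptr[path[-1]])
    return path[::-1]

labels = viterbi(["fast", "fast", "slow", "slow"])
```

With these illustrative weights, a run of slow observations is labelled as congested, while the transition scores discourage flipping state on every sample.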

System and method for dynamic generation of procedures

Active · US9858535B2 · Simple and elegant · Maintain large · Digital computer details · Memory systems · Corresponding conditional · User interface

A system and method for dynamic generation of procedures is disclosed. The method comprises: (a) storing asset types defining attributes; asset instances, inheriting attributes of one of the asset types and having attribute-values; procedure statements being associated to conditional rule(s) to be applied to an attribute-value of an asset instance; and procedure templates, each defining a unique combination of asset type and a group of said procedure statements. The method further comprises (b) for a given asset instance and a given procedure template: (i) iteratively reading each of the procedure statements of the group of procedure statements being associated to the given procedure template; and (ii) presenting, on a user interface, each one of the procedure statements where a condition is met when the corresponding conditional rule(s) is applied to the attribute-values of the given asset instance, in order to dynamically generate an asset specific procedure.

Owner:HEXAGON TECH CENT GMBH
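Step (b) of the method above can be sketched as a filter over a template's procedure statements, keeping each statement whose conditional rule holds for the asset instance's attribute-values. The class name, the example template, and the attribute names are hypothetical; the patent does not prescribe a data model at this level of detail.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Attributes = Dict[str, object]

@dataclass
class ProcedureStatement:
    text: str
    # Conditional rule applied to the asset instance's attribute-values.
    rule: Callable[[Attributes], bool]

def generate_procedure(template: List[ProcedureStatement], asset: Attributes) -> List[str]:
    """Read each statement of the template and keep those whose rule is met for the asset."""
    return [stmt.text for stmt in template if stmt.rule(asset)]

# Hypothetical template for a "pump" asset type.
pump_template = [
    ProcedureStatement("Isolate electrical supply.", lambda a: True),
    ProcedureStatement("Drain coolant loop.", lambda a: a.get("cooled") is True),
    ProcedureStatement("Wear hearing protection.", lambda a: a.get("noise_db", 0) >= 85),
]

# Hypothetical asset instance with its attribute-values.
pump_17 = {"cooled": False, "noise_db": 92}
steps = generate_procedure(pump_template, pump_17)
```

Because the rules are evaluated at read time, the same template yields a different asset-specific procedure for each asset instance.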

Recognition method and system based on dual-modal emotion fusion of voice and facial expression

Active · CN105976809B · Emotion recognition results are accurate · Improve emotion recognition rate · Speech recognition · Corresponding conditional · Dimensionality reduction

The invention relates to a voice-and-facial-expression-based identification method for dual-modal emotion fusion. The method comprises: S1, audio data and video data of a to-be-identified object are obtained; S2, a facial expression image is extracted from the video data and segmented into an eye region, a nose region, and a mouth region; S3, a facial expression feature is extracted from the image of each of the three regions; S4, PCA analysis and dimensionality reduction are carried out on the voice emotion features and the facial expression features; and S5, naive Bayesian emotion classification is carried out on the samples of the two modalities and decision fusion is carried out on the conditional probabilities to obtain a final emotion identification result. According to the invention, the voice emotion features and the facial expression features are fused by a decision fusion method, so that accurate data can be provided for the corresponding conditional probability calculation carried out in the next step; the emotion state of a detected object can be obtained precisely, so that the accuracy and reliability of emotion identification can be improved.

Owner:CHINA UNIV OF GEOSCIENCES (WUHAN)
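Step S5 combines the per-modality conditional probabilities into one decision. The abstract does not state which fusion rule is used, so the sketch below assumes a simple product rule with renormalisation; the emotion labels and probability values are made up for illustration.

```python
from typing import Dict

def fuse(voice: Dict[str, float], face: Dict[str, float]) -> Dict[str, float]:
    """Product-rule fusion (an assumption): multiply per-class probabilities and renormalise."""
    joint = {label: voice[label] * face[label] for label in voice}
    total = sum(joint.values())
    return {label: p / total for label, p in joint.items()}

# Hypothetical class probabilities from the two naive Bayes classifiers.
voice_probs = {"happy": 0.5, "sad": 0.3, "angry": 0.2}
face_probs = {"happy": 0.6, "sad": 0.1, "angry": 0.3}

fused = fuse(voice_probs, face_probs)
prediction = max(fused, key=fused.get)
```

Other decision-fusion rules, such as weighted sums or voting, would slot into `fuse` in the same way.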

Traffic State Estimation Method for Expressway Sections Based on Dynamic Bayesian Network

Active · CN104809879B · Good effect · Improve reliability · Detection of traffic movement · Special data processing applications · NODAL · Corresponding conditional

Owner:重庆科知源科技有限公司

Method for selecting valid variants in search and recommendation systems (variants)

Inactive · US10275418B2 · Low computing complexity · The result is accurate · Web data indexing · Knowledge representation · Corresponding conditional · Ranking

Selecting and ranking valid variants in search and recommendation systems selects and ranks variants with accuracy and speed. Criteria for evaluating the relevance of a variant to the search request are generated. A set of procedures for the selection and ranking of variants and a sequence for performing said procedures for the selection of variants evaluated as the most valid are established. An evaluation of each variant is based on relevance to search request criteria. The variants are then ranked by assigning a rank to each variant based on the condition of correspondence to the greatest number of criteria in decreasing order. Then the variants are selected and ranked in at least two stages using the superposition method, and the variants are selected, ranked and excluded until all of the established selection procedures have been used and the selected group of variants is evaluated as being the most valid.

Owner:FEDERALNOE GOSUDARSTVENNOE AVTONOMNOE OBRAZOVATELNOE UCHREZHDENIE VYSSHEGO PROFESSIONALNOGO OBRAZOVANIJA NATSIONALNYJ ISSLEDOVATELSKIJ UNIV VYSSHAJA SHKOLA EHKONOMIKI
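The ranking and staged exclusion described in the abstract can be sketched as follows: each stage ranks the remaining variants by how many of that stage's criteria they satisfy, in decreasing order, and excludes those below a cut. The abstract does not detail the superposition method, so the stage structure, the `keep` cut-offs, and the example criteria here are assumptions for illustration.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Variant = Dict[str, float]
Criterion = Callable[[Variant], bool]
Stage = Tuple[Sequence[Criterion], int]  # (criteria, number of variants to keep)

def rank_by_matches(variants: Sequence[Variant], criteria: Sequence[Criterion]) -> List[Variant]:
    """Order variants by the number of criteria they satisfy, most matches first."""
    return sorted(variants, key=lambda v: sum(c(v) for c in criteria), reverse=True)

def staged_selection(variants: Sequence[Variant], stages: Sequence[Stage]) -> List[Variant]:
    """Apply every stage in sequence, excluding variants that fall below each stage's cut."""
    selected = list(variants)
    for criteria, keep in stages:
        selected = rank_by_matches(selected, criteria)[:keep]
    return selected

# Hypothetical variants and criteria.
items = [
    {"id": 1, "price": 40, "rating": 4.5, "distance": 2.0},
    {"id": 2, "price": 90, "rating": 4.8, "distance": 1.0},
    {"id": 3, "price": 30, "rating": 3.0, "distance": 8.0},
    {"id": 4, "price": 55, "rating": 4.1, "distance": 3.5},
]
stages = [
    ([lambda v: v["price"] <= 60, lambda v: v["rating"] >= 4.0], 3),
    ([lambda v: v["distance"] <= 3.0], 2),
]
best = staged_selection(items, stages)
```

Python's `sorted` is stable, so variants that match the same number of criteria keep their earlier relative order from one stage to the next.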

Predication supporting code generation by indicating path associations of symmetrically placed write instructions

Active · US9262140B2 · Software engineering · Specific program execution arrangements · Image resolution · Processor register

A predication technique for out-of-order instruction processing provides efficient out-of-order execution with low hardware overhead. A special op-code demarks unified regions of program code that contain predicated instructions that depend on the resolution of a condition. Field(s) or operand(s) associated with the special op-code indicate the number of instructions that follow the op-code and also contain an indication of the association of each instruction with its corresponding conditional path. Each conditional register write in a region has a corresponding register write for each conditional path, with additional register writes inserted by the compiler if symmetry is not already present, forming a coupled set of register writes. Therefore, a unified instruction stream can be decoded and dispatched with the register writes all associated with the same re-name resource, and the conditional register write is resolved by executing the particular instruction specified by the resolved condition.

Owner:IBM CORP

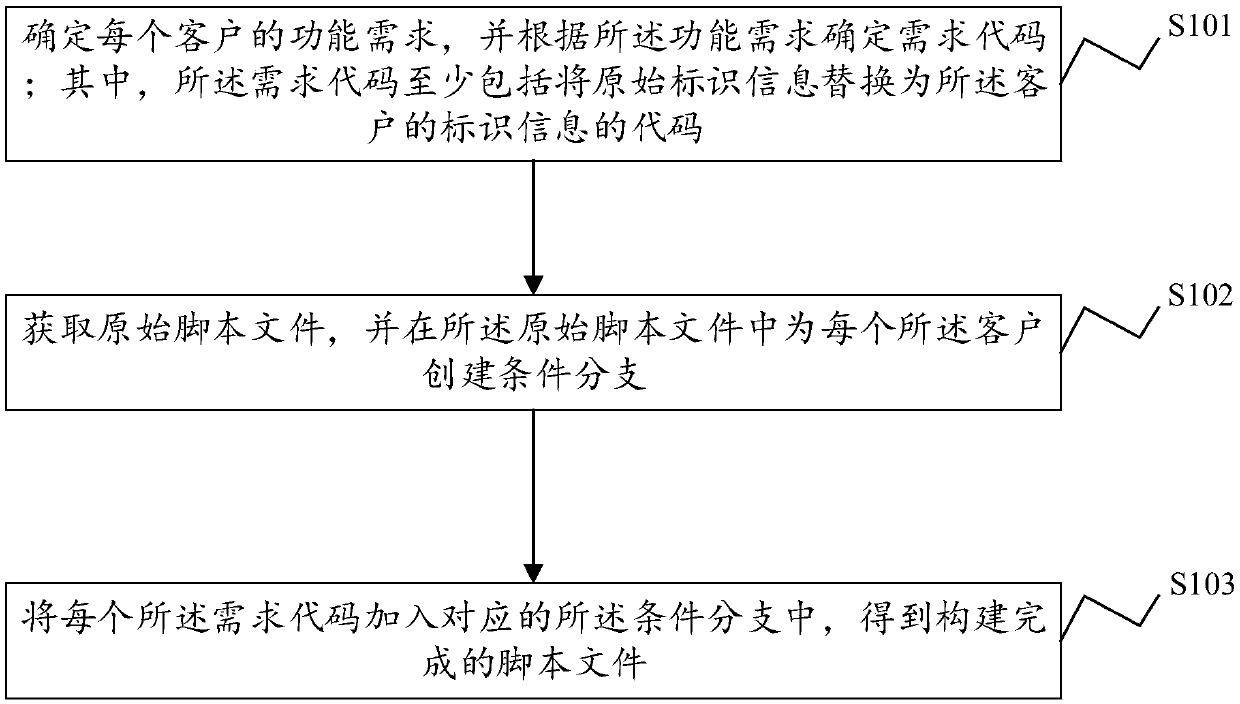

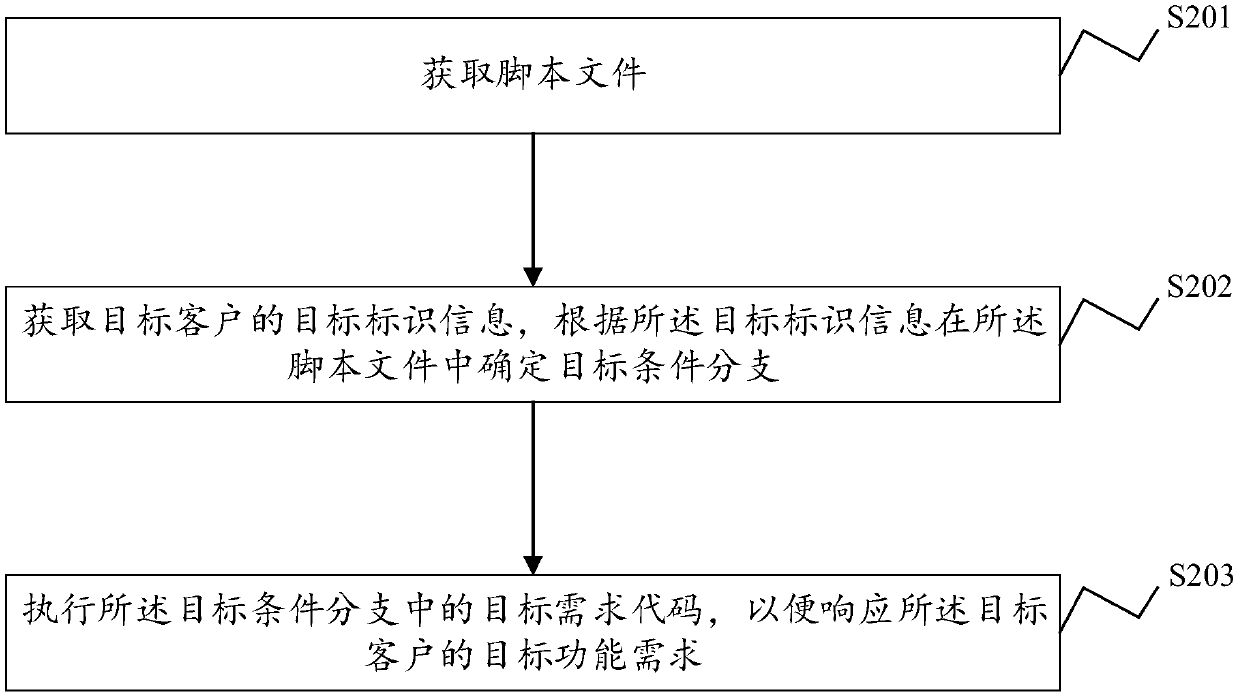
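The symmetric register writes described in the predication abstract above can be modelled in software, although the patent concerns processor hardware and compiler output. In the toy model below, each instruction in a region carries a path tag, a compiler-like pass inserts a register-preserving write wherever one path writes a register and the other does not, and only the writes on the resolved path take effect. The tuple encoding, path tags `T` and `F`, and register names are all hypothetical, and instructions are assumed not to depend on each other within the region.

```python
from typing import Callable, Dict, List, Tuple

Regs = Dict[str, int]
# (path tag, destination register, operation reading the register file)
Instr = Tuple[str, str, Callable[[Regs], int]]

def make_symmetric(region: List[Instr]) -> List[Instr]:
    """Insert a value-preserving write on the other path wherever a write lacks a counterpart."""
    out = list(region)
    for path, dest, _ in region:
        other = "F" if path == "T" else "T"
        if not any(p == other and d == dest for p, d, _ in out):
            out.append((other, dest, lambda r, d=dest: r[d]))
    return out

def execute(region: List[Instr], regs: Regs, cond: bool) -> Regs:
    """Once the condition resolves, perform only the writes tagged with the resolved path."""
    taken = "T" if cond else "F"
    result = dict(regs)
    for path, dest, op in region:
        if path == taken:
            result[dest] = op(regs)
    return result

region = [
    ("T", "r1", lambda r: r["r2"] + 1),
    ("F", "r1", lambda r: r["r3"] * 2),
    ("T", "r4", lambda r: 7),  # no counterpart on the F path yet
]
symmetric = make_symmetric(region)
regs = {"r1": 0, "r2": 10, "r3": 5, "r4": 99}
taken_result = execute(symmetric, regs, True)
not_taken_result = execute(symmetric, regs, False)
```

After `make_symmetric`, every destination register has exactly one write per path, which mirrors the coupled set of register writes the abstract describes.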

Neutralized version construction method and system, page response method and system and related devices

Active · CN109669672A · Reduce redundancy · Reduce maintenance costs · Version control · Requirement analysis · Response method · Corresponding conditional

The invention discloses a neutralized version construction method and system, a page response method and system, an electronic device and a computer readable storage medium. The method comprises the steps of determining the function requirement of each client, and determining a requirement code according to the function requirement, wherein the requirement code at least comprises a code for replacing the original identification information with the identification information of the client; obtaining an original script file, and creating a conditional branch for each client in the original script file; and adding each requirement code into the corresponding conditional branch to obtain a constructed script file. With the neutralized version construction method provided by the invention, only one set of code needs to be maintained for multiple function requirements, so that code redundancy and maintenance cost are reduced. When new client function requirements arise, only conditional branches need to be added, so expandability is high.

Owner:ZHENGZHOU YUNHAI INFORMATION TECH CO LTD
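The construction step above can be sketched as a function that takes an original script and appends one conditional branch per client, placing each client's requirement code in its own branch. Everything here is an assumption for illustration: the patent does not name a scripting language, and the `CLIENT` and `BRAND` identifiers and the client names are hypothetical.

```python
from typing import Dict

def build_script(original: str, client_code: Dict[str, str]) -> str:
    """Append one conditional branch per client, each holding that client's requirement code."""
    lines = [original.rstrip()]
    for i, (client, code) in enumerate(client_code.items()):
        keyword = "if" if i == 0 else "elif"
        lines.append(f"{keyword} CLIENT == {client!r}:")
        lines.extend("    " + ln for ln in code.splitlines())
    return "\n".join(lines) + "\n"

# Requirement code that replaces the original identification with the client's own.
script = build_script(
    "BRAND = 'OriginalVendor'",
    {"acme": "BRAND = 'Acme'", "globex": "BRAND = 'Globex'"},
)

def brand_for(client: str) -> str:
    """Run the constructed script for one client and read back the resulting identifier."""
    scope = {"CLIENT": client}
    exec(script, scope)
    return scope["BRAND"]
```

Supporting a new client then means adding one entry to `client_code`, which produces one more branch in the single maintained script.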

© 2025 PatSnap. All rights reserved.