49 results about "Taking power consumption into consideration" patented technology

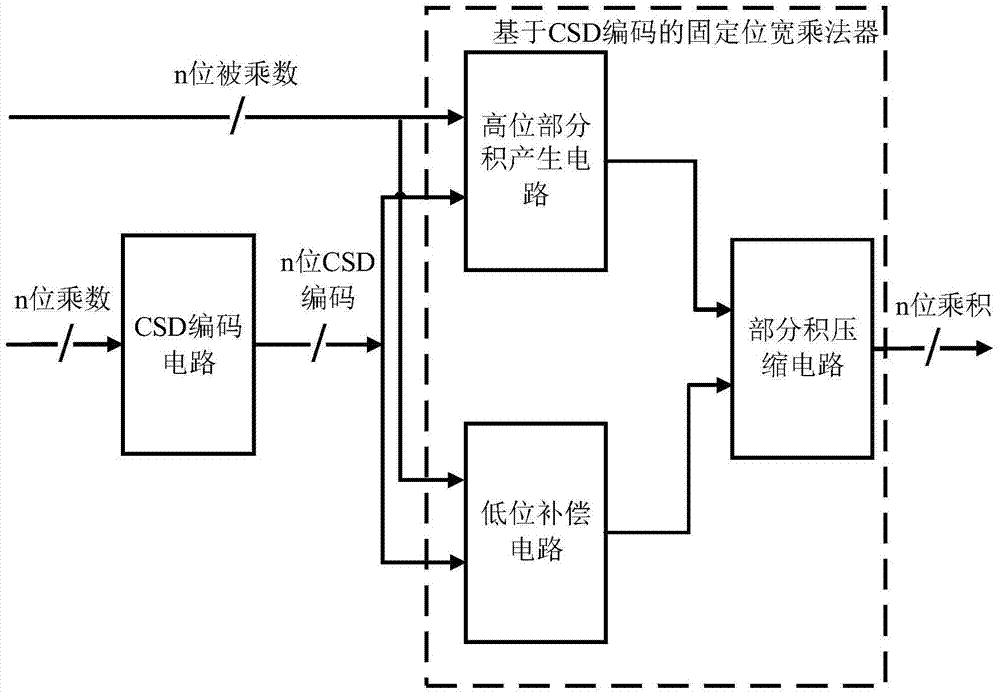

Fixed-bit-width multiplier with high accuracy and low energy consumption properties

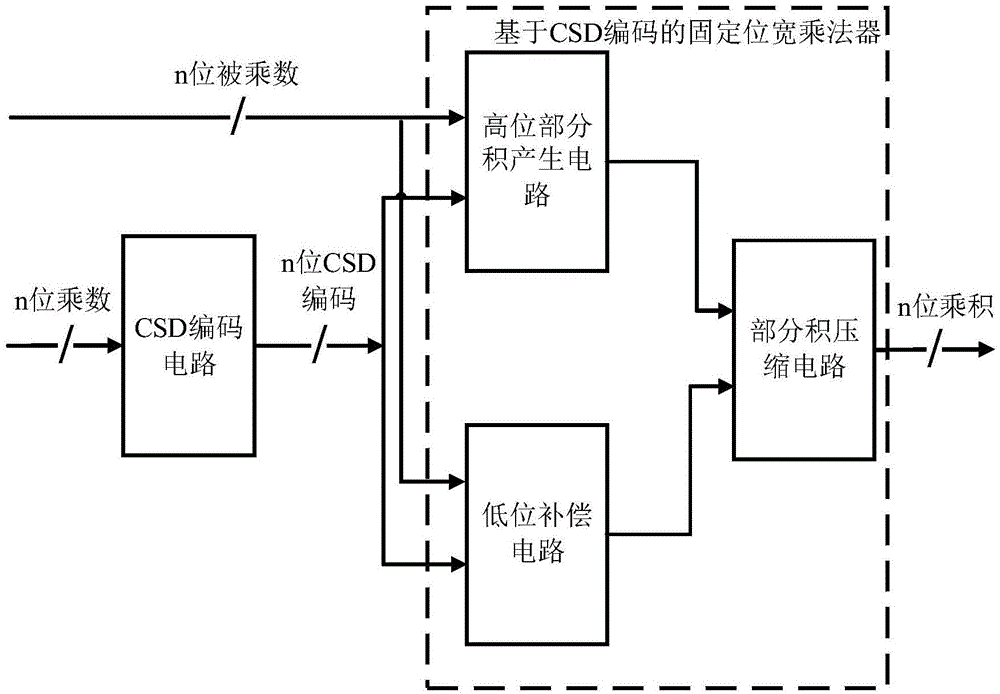

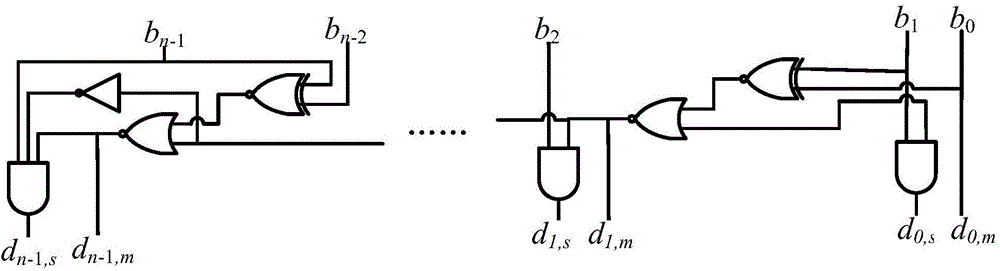

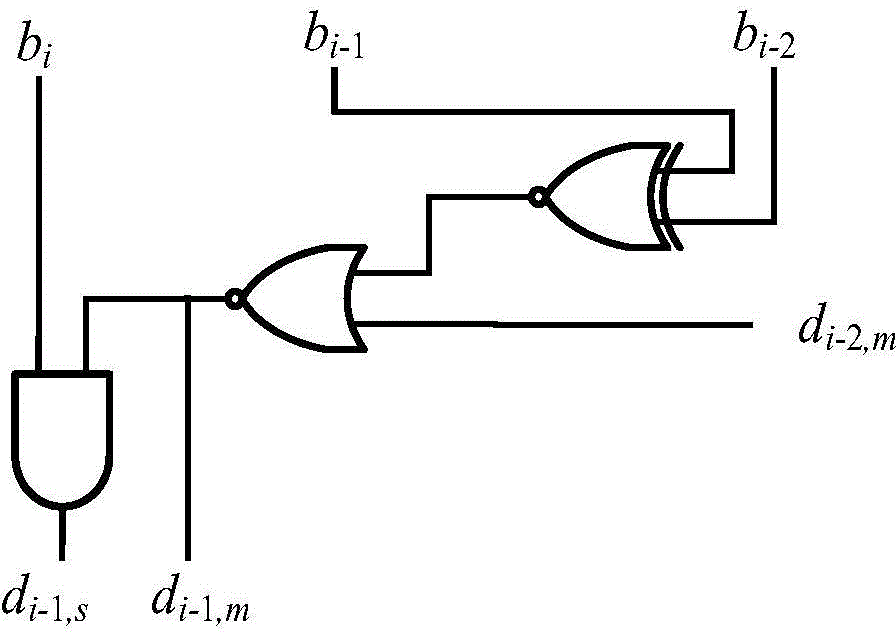

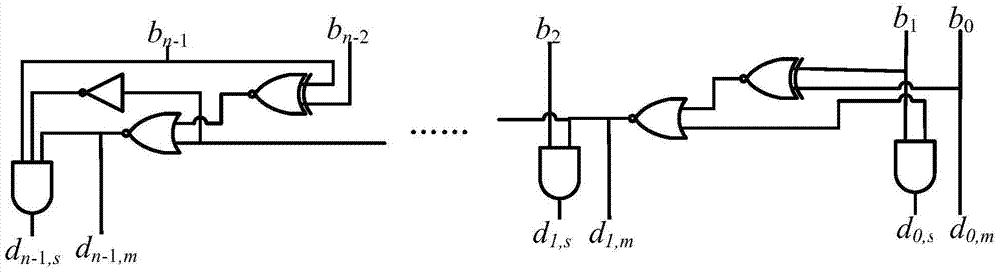

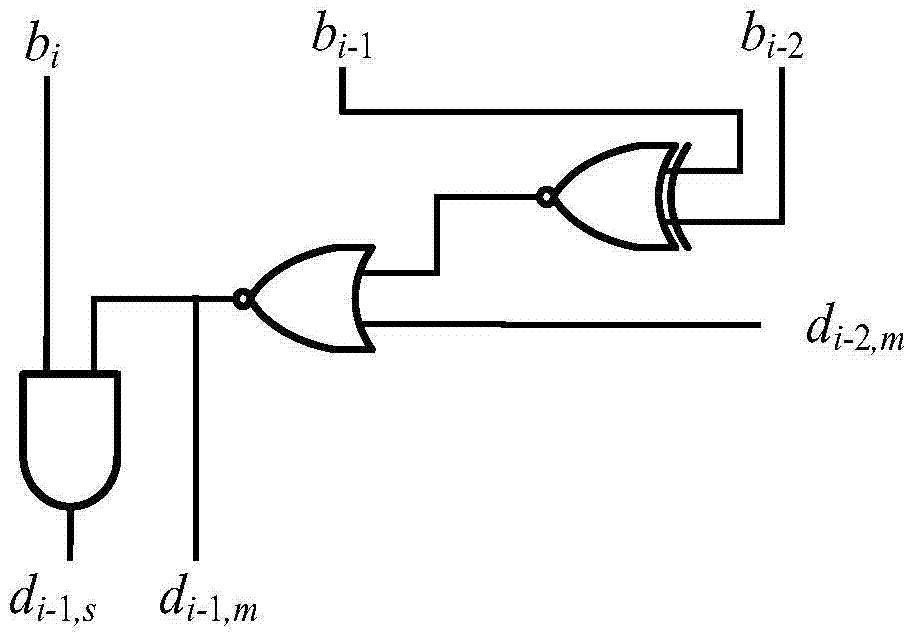

Inactive; CN105183424A; High precision; Reduce power consumption; Digital data processing details; Partial product; Integrated circuit

The present invention relates to the technical field of integrated circuits, and in particular to a fixed-bit-width multiplier with high accuracy and low energy consumption. The multiplier comprises a CSD (canonical signed digit) encoding circuit, a high-order partial product generation circuit, a low-order compensation circuit and a partial product compression circuit. An input port of the CSD encoding circuit is connected to the external input data, and its output port is connected to the high-order partial product generation circuit and the low-order compensation circuit. The high-order partial product generation circuit is connected to the external input data, and its output port is connected to the partial product compression circuit. The low-order compensation circuit is likewise connected to the external input data, and its output port is connected to the partial product compression circuit. An output port of the partial product compression circuit is connected to the external input data. The invention provides a practical fixed-bit-width multiplier design with low energy consumption, relatively high speed and high accuracy, and is particularly suitable for implementing high-accuracy, low-energy multiplication with a fixed bit width.
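As a software illustration of the CSD encoding step only (the abstract describes a hardware circuit and does not give its logic), here is a minimal sketch of canonical signed digit recoding. The function names `csd_encode` and `csd_value` are hypothetical. CSD rewrites an integer with digits in {-1, 0, 1} such that no two adjacent digits are nonzero, which reduces the number of nonzero partial products a multiplier must sum.

```python
def csd_encode(n):
    """Return the CSD digits of a non-negative integer, least significant first.

    Each digit is -1, 0 or +1, and no two adjacent digits are both nonzero.
    """
    digits = []
    while n != 0:
        if n & 1:
            # n mod 4 == 1 -> digit +1; n mod 4 == 3 -> digit -1
            d = 2 - (n & 3)
            n -= d
        else:
            d = 0
        digits.append(d)
        n >>= 1
    return digits


def csd_value(digits):
    """Recombine CSD digits (least significant first) into an integer."""
    return sum(d * (1 << i) for i, d in enumerate(digits))


if __name__ == "__main__":
    # 7 = 8 - 1, so only two nonzero digits are needed instead of three.
    print(csd_encode(7))
```

Each nonzero digit corresponds to one shifted add or subtract of the multiplicand, so fewer nonzero digits means fewer partial products.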

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

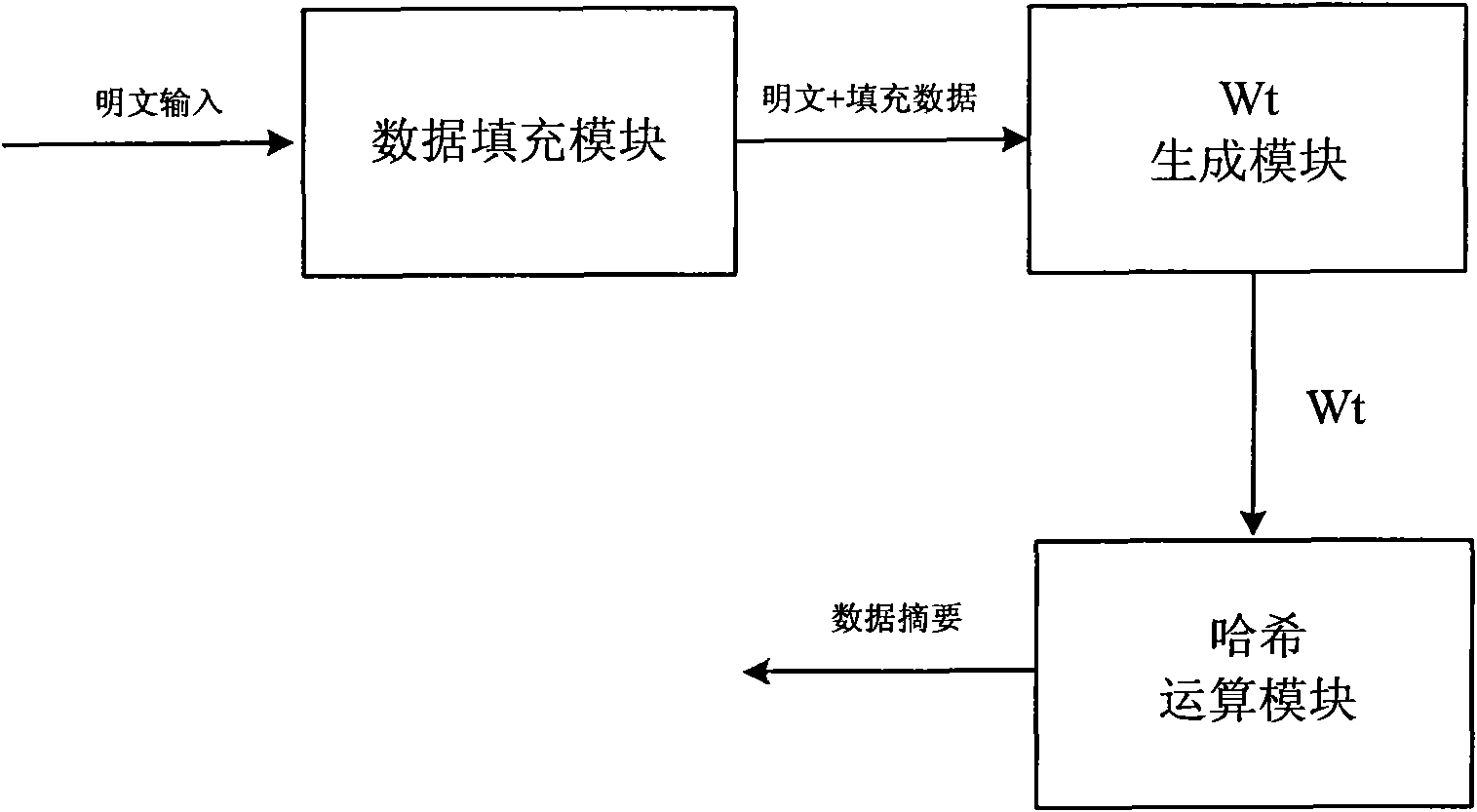

Device compatible with three SHA standards and realization method thereof

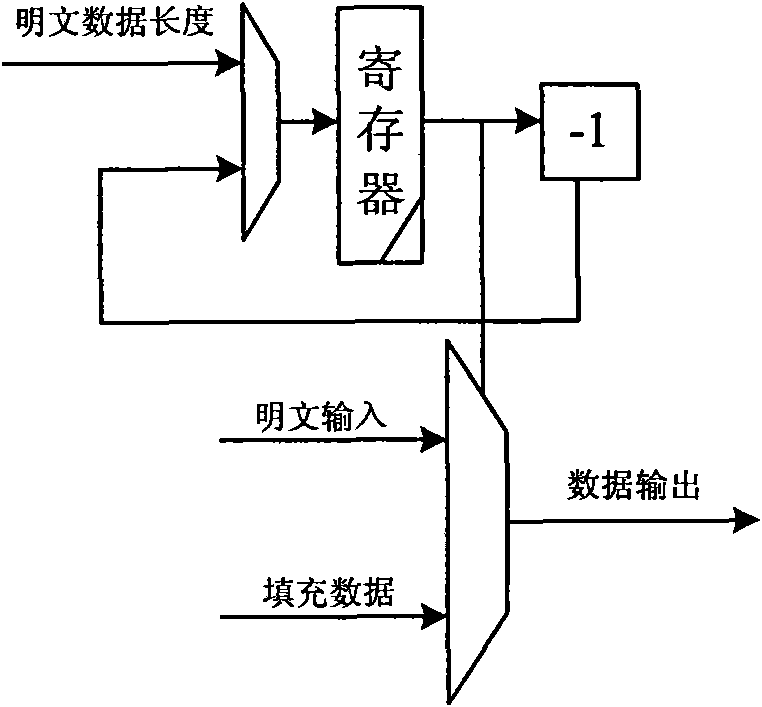

Inactive; CN101894229A; Improve compatibility; Reduce consumption; Internal/peripheral component protection; Computer compatibility; Data filling

The invention discloses a device compatible with three SHA standards, which comprises a data padding module, a Wt generation module and a hash operation module, connected in that order. The invention also discloses a realization method of the device, which comprises the following steps: (I) the data padding module receives plaintext data, generates padded data and outputs it to the Wt generation module; (II) the Wt generation module generates new Wt operators and inputs them into the hash operation module; and (III) the hash operation module generates a 160-bit message digest in SHA-1 mode, a 256-bit message digest in SHA-256 mode or a 512-bit message digest in SHA-512 mode. The invention has the advantages of general applicability, good compatibility, low power consumption and low use of extra resources.
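The three digest lengths can be checked in software with Python's standard `hashlib` module. This is only an illustration of the output sizes of SHA-1, SHA-256 and SHA-512; it says nothing about the patented hardware's internal structure, and the helper name `digest_bits` is hypothetical.

```python
import hashlib


def digest_bits(mode, data):
    """Hash `data` with the named algorithm and return the digest length in bits."""
    h = hashlib.new(mode, data)
    return h.digest_size * 8


if __name__ == "__main__":
    for mode in ("sha1", "sha256", "sha512"):
        print(mode, digest_bits(mode, b"abc"))
```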

Owner:SOUTH CHINA UNIV OF TECH

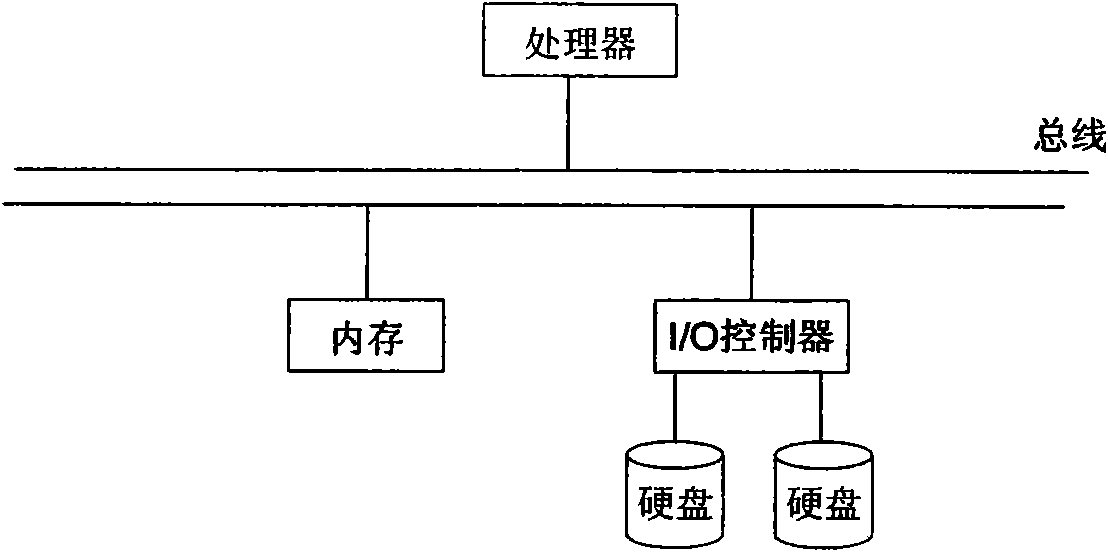

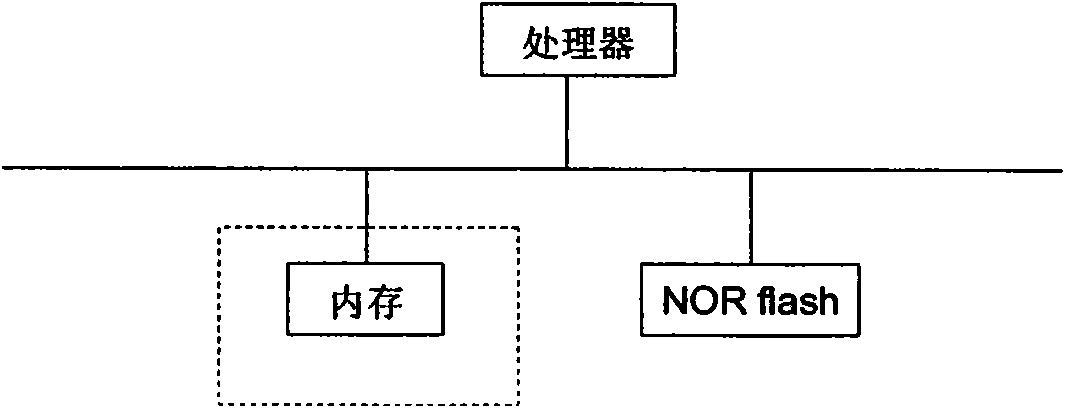

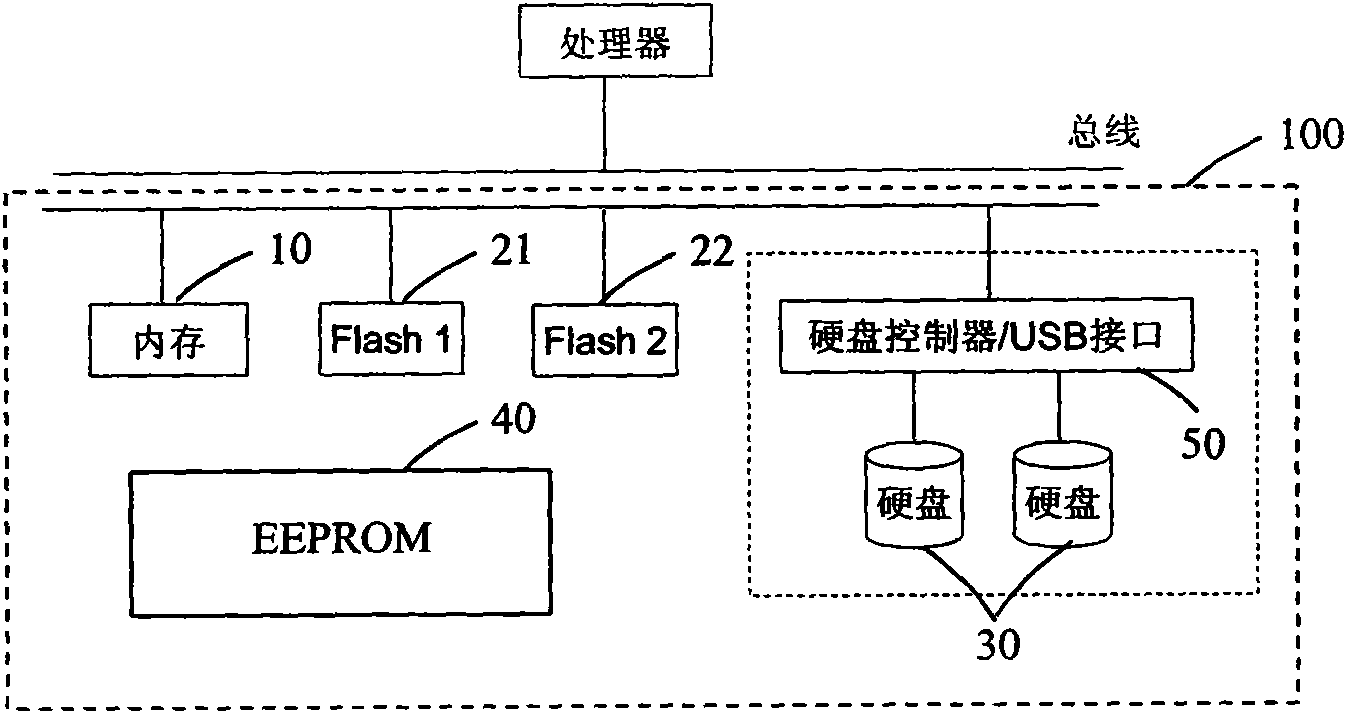

Storage system and storage method thereof for network computer

Active; CN101788951A; Meet needs; Easy to manage; Program loading/initiating; Memory systems; Operational system; Application software

The invention discloses a storage system and a storage method thereof for a network computer. The storage system comprises a first flash memory and a second flash memory, wherein the first flash memory stores the BIOS code of the network computer, and the second flash memory stores the operating system and local data of the network computer. The invention meets the storage requirements of the network computer's operating system and local application programs, and supports version management and software upgrading on the network computer.

Owner:BEIJING PKUNITY MICROSYST TECH

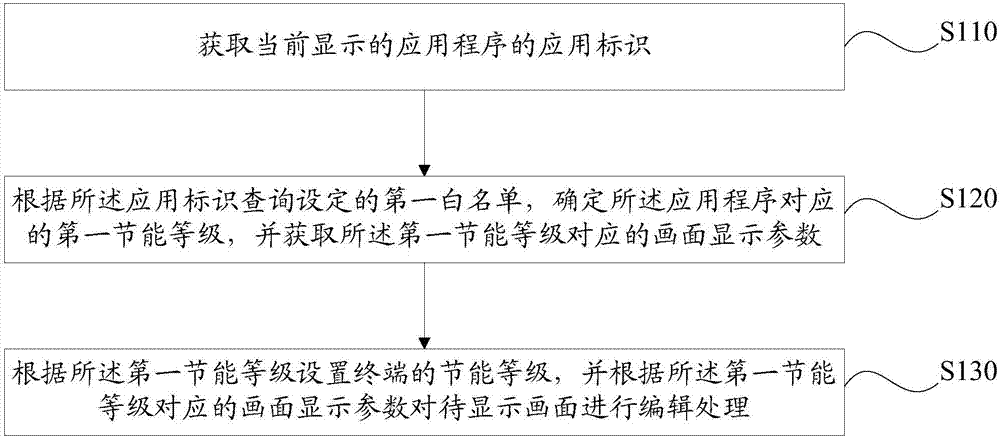

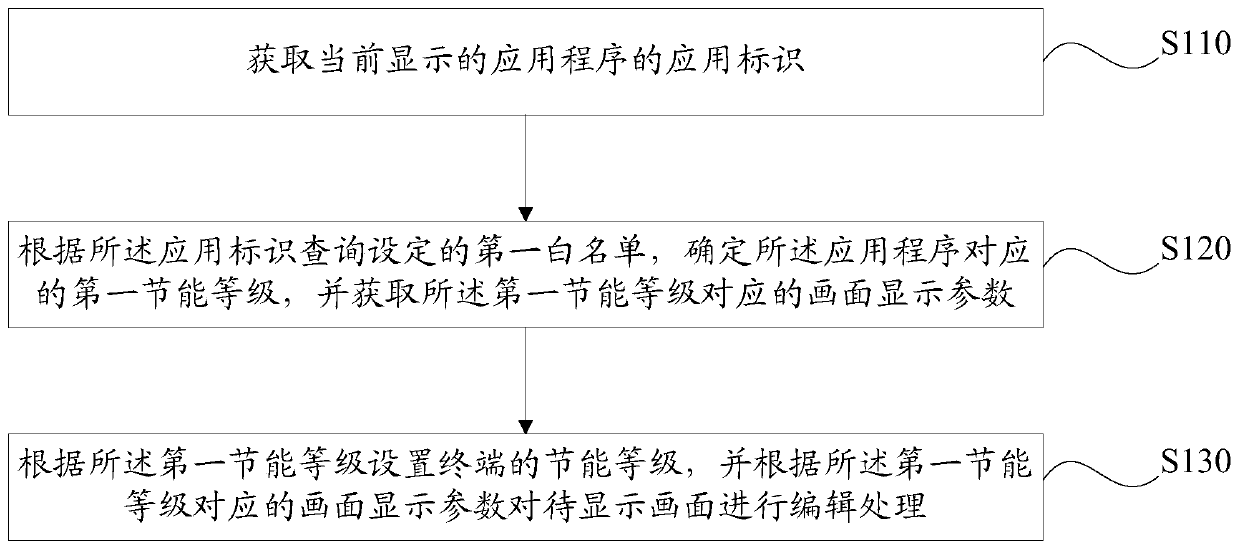

Method and device for dynamically regulating energy saving level of mobile terminal, and mobile terminal

Active; CN106933326A; Reduce power consumption; Taking power consumption into consideration; Power management; Cathode-ray tube indicators; Computer engineering; Power consumption

The embodiment of the invention discloses a method and a device for dynamically regulating the energy saving level of a mobile terminal, and the mobile terminal. The method comprises the following steps: obtaining the application identification of the application program currently displayed; querying a preset first white list according to the application identification, determining a first energy saving level corresponding to the application program, and obtaining a display effect parameter corresponding to the first energy saving level; and setting the energy saving level of the terminal according to the first energy saving level, and processing the picture to be displayed according to the display effect parameter corresponding to the first energy saving level. With this embodiment, the power consumption of the terminal can be regulated dynamically according to the application scene. The technical scheme preserves the display effect while lowering the power consumption of the terminal, and extends the battery life of the terminal.
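The lookup described in this abstract can be sketched as two table lookups. Everything concrete here is an assumption made for illustration: the application identifiers, the level numbers, the parameter names, and the fallback level for applications missing from the white list are not given in the source.

```python
# Hypothetical first white list: application identifier -> energy saving level.
FIRST_WHITELIST = {
    "com.example.video": 1,
    "com.example.reader": 3,
}

# Hypothetical display effect parameters for each energy saving level.
DISPLAY_PARAMS = {
    0: {"brightness_scale": 1.00, "contrast_boost": 0.00},
    1: {"brightness_scale": 0.90, "contrast_boost": 0.05},
    3: {"brightness_scale": 0.70, "contrast_boost": 0.15},
}

DEFAULT_LEVEL = 0  # assumed fallback; the abstract does not specify one


def energy_saving_for(app_id):
    """Return (energy saving level, display effect parameters) for the shown app."""
    level = FIRST_WHITELIST.get(app_id, DEFAULT_LEVEL)
    return level, DISPLAY_PARAMS[level]


if __name__ == "__main__":
    print(energy_saving_for("com.example.reader"))
```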

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD

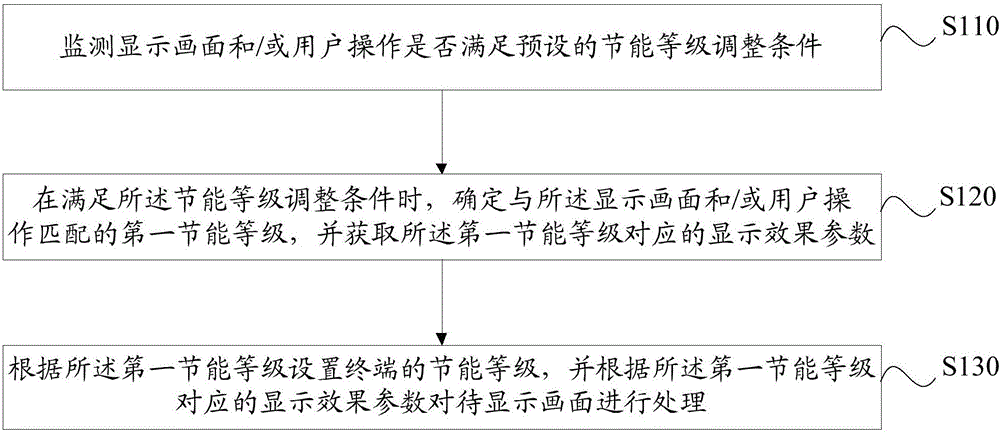

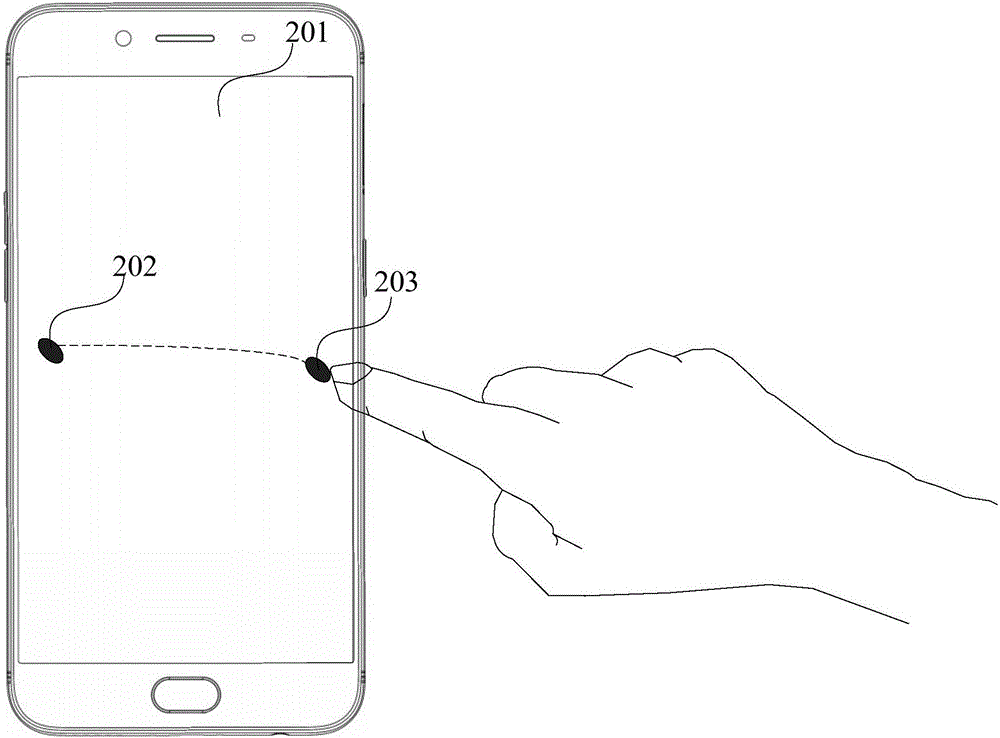

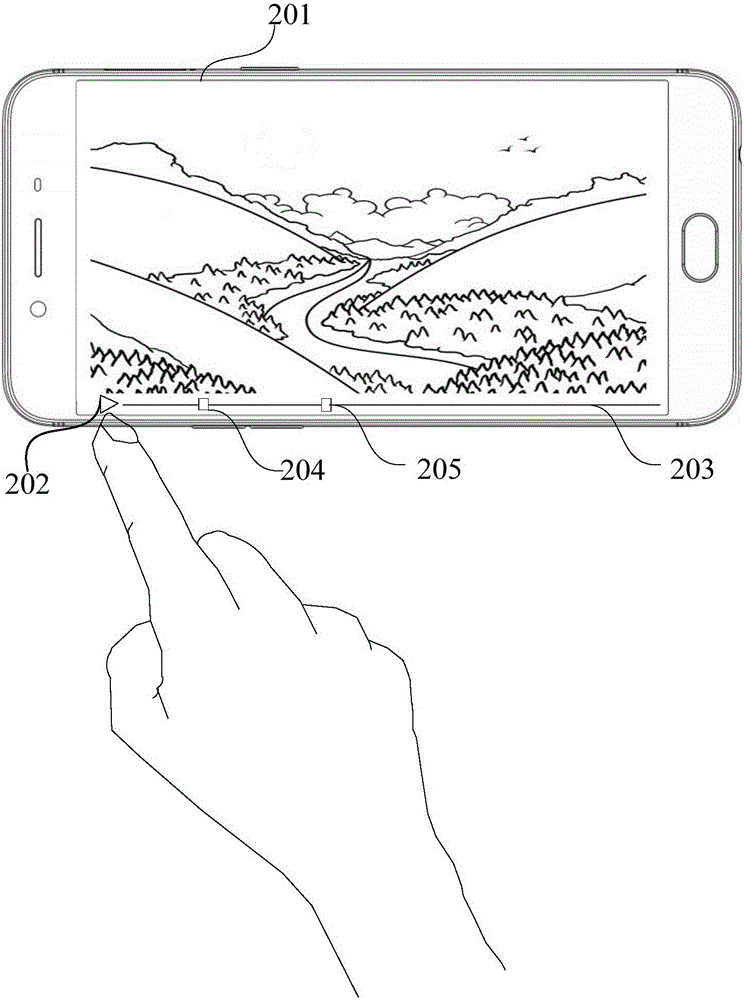

Display control method and apparatus, and mobile terminal

Active; CN106658691A; Reduce power consumption; Taking power consumption into consideration; Power management; Static indicating devices; Computer terminal; Computer engineering

The embodiment of the invention discloses a display control method and apparatus, and a mobile terminal. The method comprises the following steps: monitoring whether a display image and/or a user operation satisfies a preset energy saving grade adjustment condition; when the condition is satisfied, determining a first energy saving grade matched with the display image and/or the user operation, and obtaining a display effect parameter corresponding to the first energy saving grade; and setting the energy saving grade of the mobile terminal according to the first energy saving grade, and processing a to-be-displayed image according to the display effect parameter corresponding to the first energy saving grade. The technical scheme takes the display effect into account while reducing the power consumption of the terminal, and extends the battery life of the terminal.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD

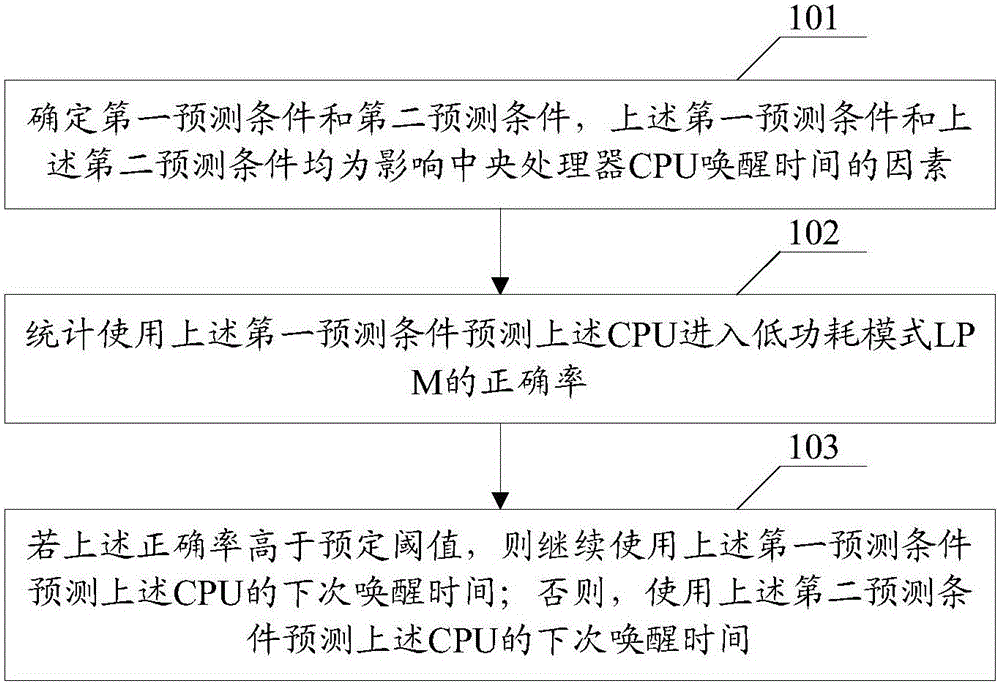

Method and device for managing central processing unit

Active; CN106055079A; Forecast time; Taking power consumption into consideration; Error detection/correction; Power supply for data processing; Power mode; Computer science

Embodiments of the invention disclose a method and a device for managing a central processing unit (CPU). The method comprises the following steps: determining a first prediction condition and a second prediction condition, both of which are factors that influence the wake-up time of the CPU; measuring the accuracy with which the first prediction condition predicts the CPU entering a low power mode (LPM); if the accuracy is higher than a predetermined threshold, continuing to use the first prediction condition to predict the next wake-up time of the CPU; otherwise, using the second prediction condition to predict the next wake-up time of the CPU. Because several factors that influence the next wake-up time are considered together, the next wake-up time can be predicted more accurately, so that the CPU can select a more appropriate LPM. Both the power consumption and the performance requirements of the CPU are thus taken into account, and higher CPU performance can be maintained while power consumption stays low.
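The switching rule between the two prediction conditions can be sketched as a running accuracy counter. The class and method names are hypothetical, the threshold value is an arbitrary placeholder, and treating an empty history as fully accurate (so the first condition is used initially) is an assumption the abstract does not state.

```python
class WakeupPredictorSelector:
    """Track how often the first prediction condition was right and pick a condition."""

    def __init__(self, threshold=0.8):
        self.threshold = threshold  # assumed value; the source only says "predetermined"
        self.hits = 0
        self.total = 0

    def record(self, correct):
        """Record whether the first condition's latest LPM prediction was correct."""
        self.total += 1
        if correct:
            self.hits += 1

    def accuracy(self):
        # Assumption: with no history yet, trust the first condition.
        return self.hits / self.total if self.total else 1.0

    def active_condition(self):
        """Keep the first condition while its accuracy exceeds the threshold."""
        return "first" if self.accuracy() > self.threshold else "second"


if __name__ == "__main__":
    selector = WakeupPredictorSelector()
    for outcome in (True, True, False, False, True):
        selector.record(outcome)
    print(selector.accuracy(), selector.active_condition())
```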

Owner:SHANGHAI JINSHENG COMM TECH CO LTD

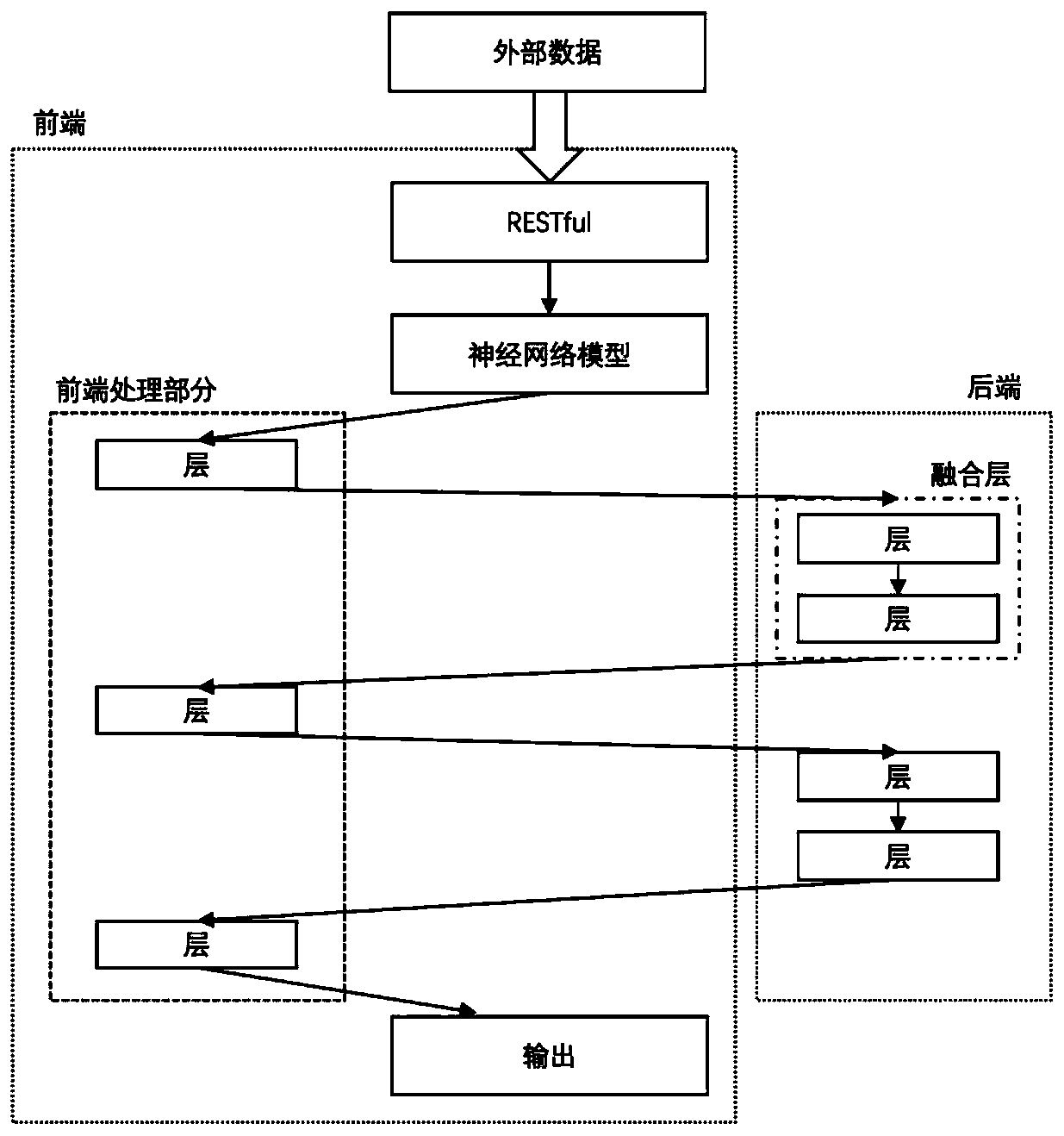

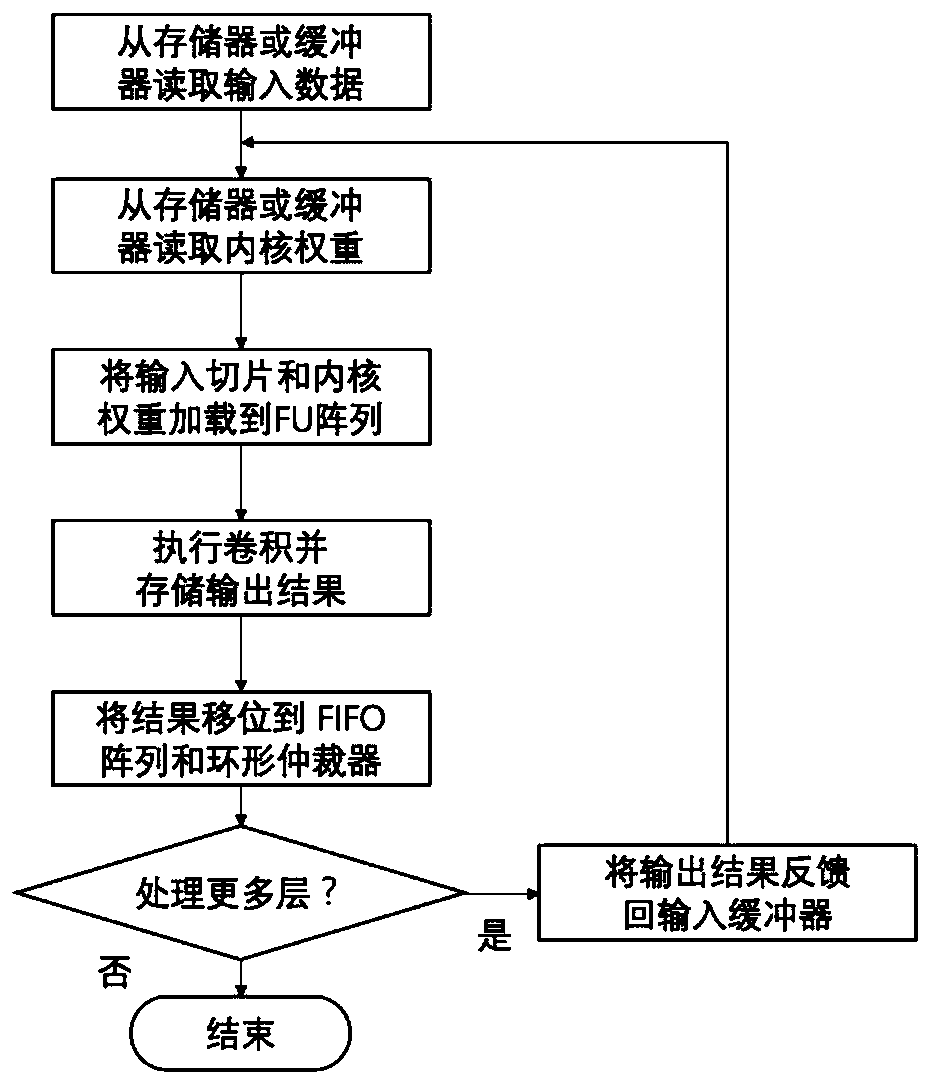

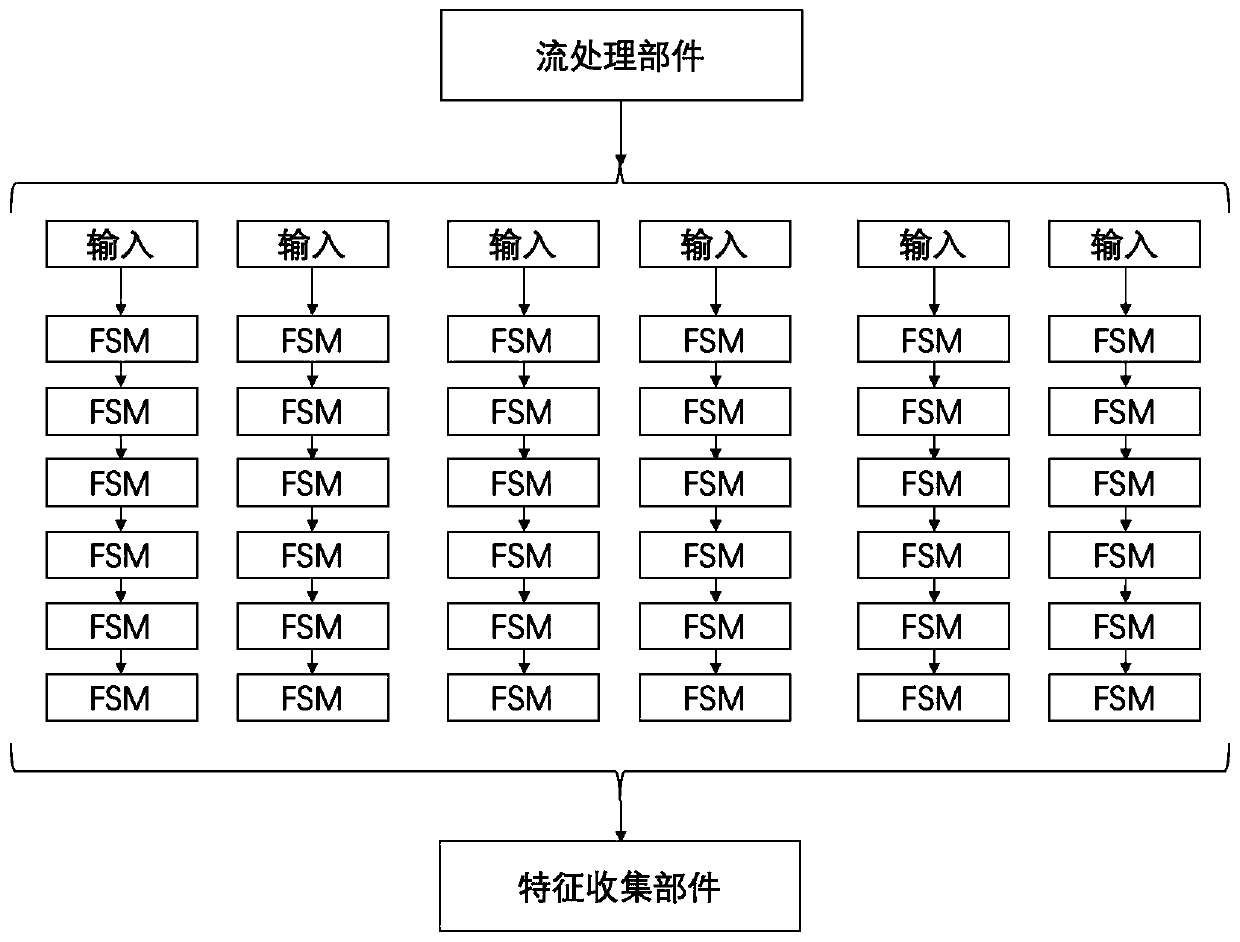

Cloud deep neural network optimization method based on CPU and FPGA cooperative computing

Pending; CN111488051A; Quick response; Improve performance; Resource allocation; Digital data processing details; Deep neural networks; Integrated circuit

The invention belongs to the technical field of computer system structure design, and particularly relates to a cloud deep neural network optimization method based on CPU and FPGA cooperative computing. The method is divided into a front-end part and a rear-end part. The front end is a server built around a CPU and is responsible for flow control, data receiving and partial processing. The rear end is an acceleration component built around an FPGA; it comprises a large-scale parallel processor array, a graphics processing unit, an application-specific integrated circuit and a PCI-E interface, and is responsible for parallel acceleration of the key layers of the deep neural network. First, the deep neural network is divided by layer into two parts, one suited to front-end processing and one suited to rear-end processing. The front end then shuttles the received data between the front end and the rear end through DDR as a data stream, so that each layer or combined layer is processed. Combining flexible front-end process control with an efficient parallel rear-end structure can greatly improve the energy efficiency ratio of neural network computation.

Owner:FUDAN UNIV

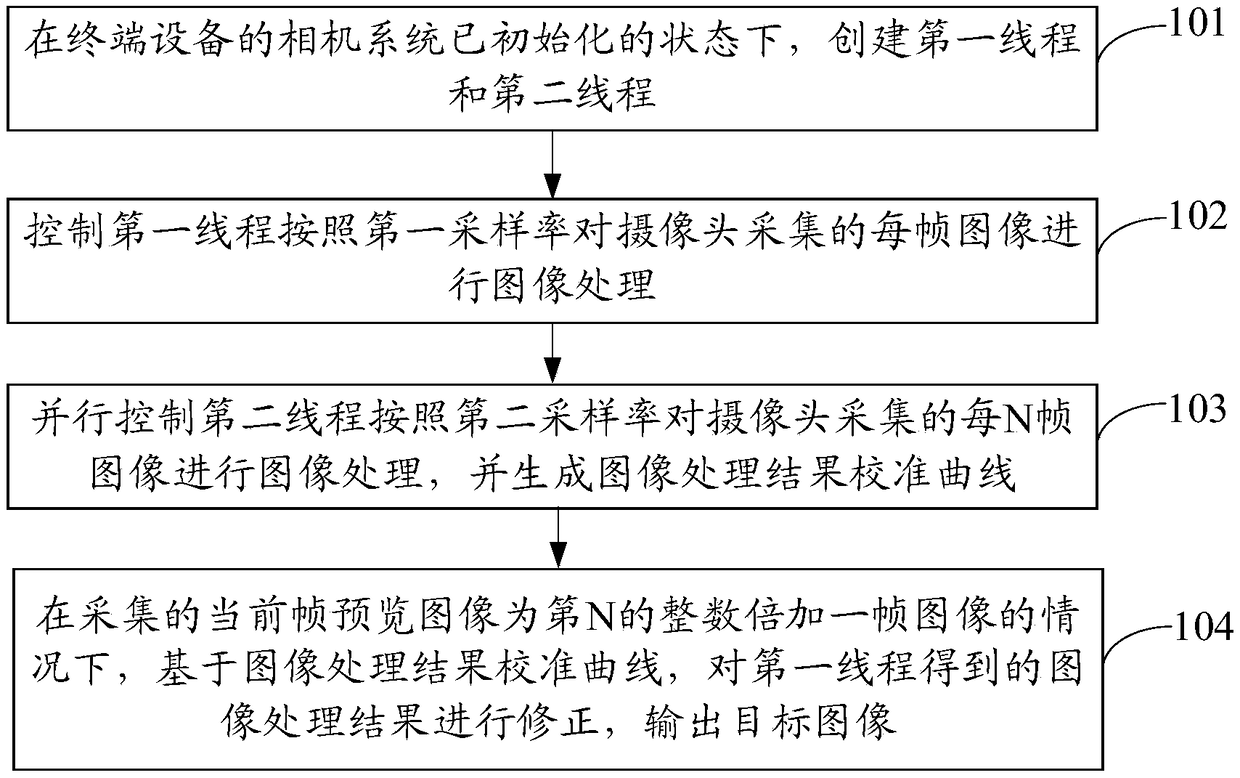

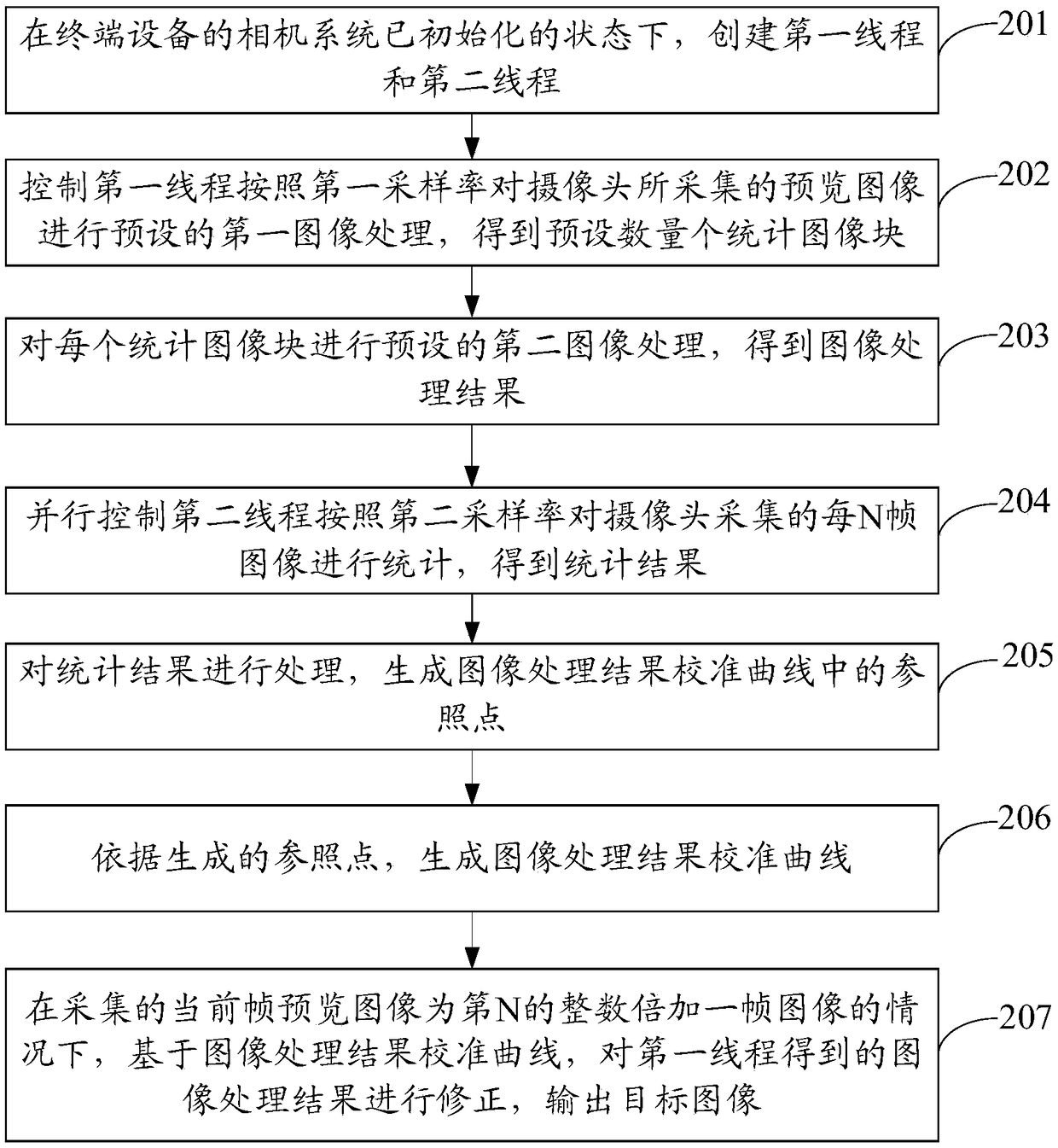

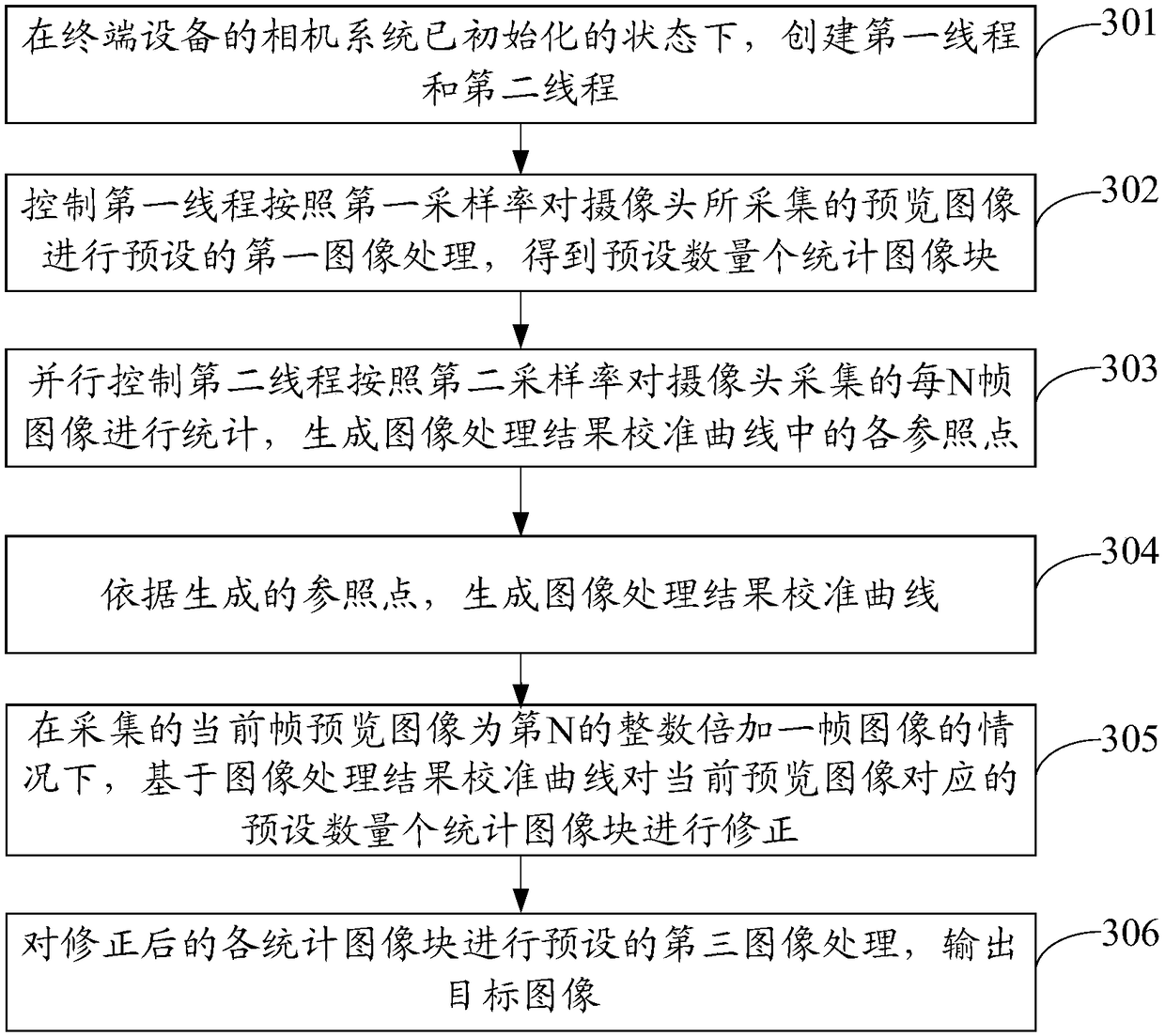

Preview image processing method and terminal device

Active; CN109474784A; Improve the display effect; Taking power consumption into consideration; Television system details; Color signal processing circuits; Terminal equipment; Computer vision

An embodiment of the invention discloses a preview image processing method and a terminal device. The method is applied to the terminal device and comprises the following steps: creating a first thread and a second thread while the camera system of the terminal device is being initialized; controlling the first thread to perform image processing on each frame collected by the camera according to a first sampling rate; controlling the second thread to perform image processing on every N frames collected by the camera according to a second sampling rate, and generating a calibration curve of the image processing result; and, when the index of the current preview frame is an integer multiple of N plus one, correcting the image processing result obtained by the first thread based on the calibration curve, and outputting the target image. With this method, the image sampling rate and the power consumption of the terminal device can be balanced while the image effect is optimized during preview image processing.

Owner:VIVO MOBILE COMM CO LTD

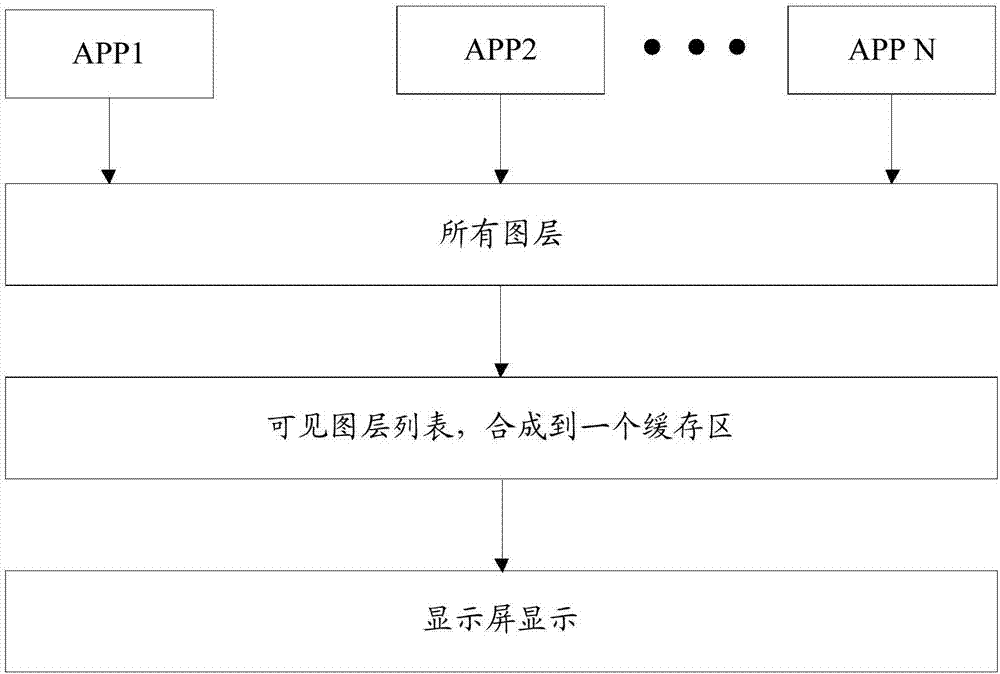

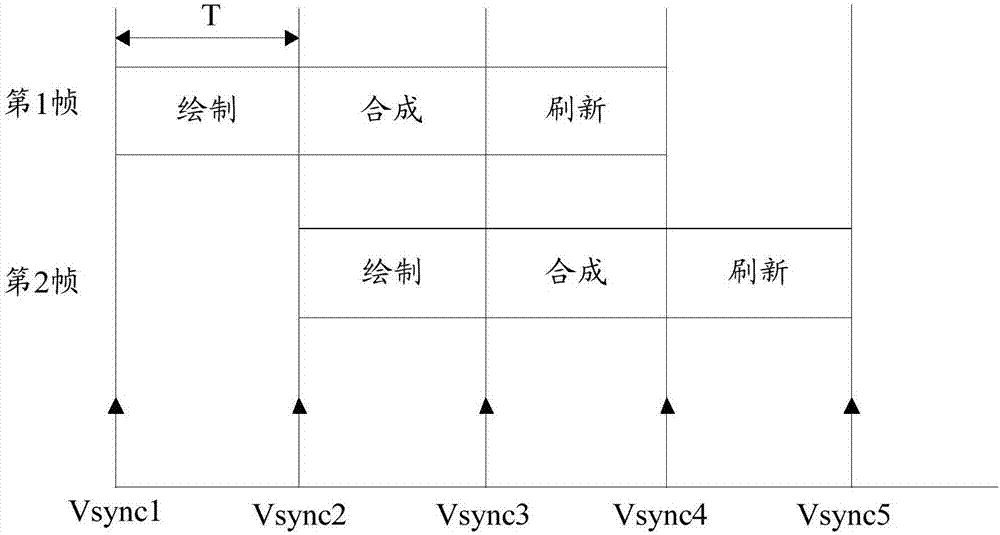

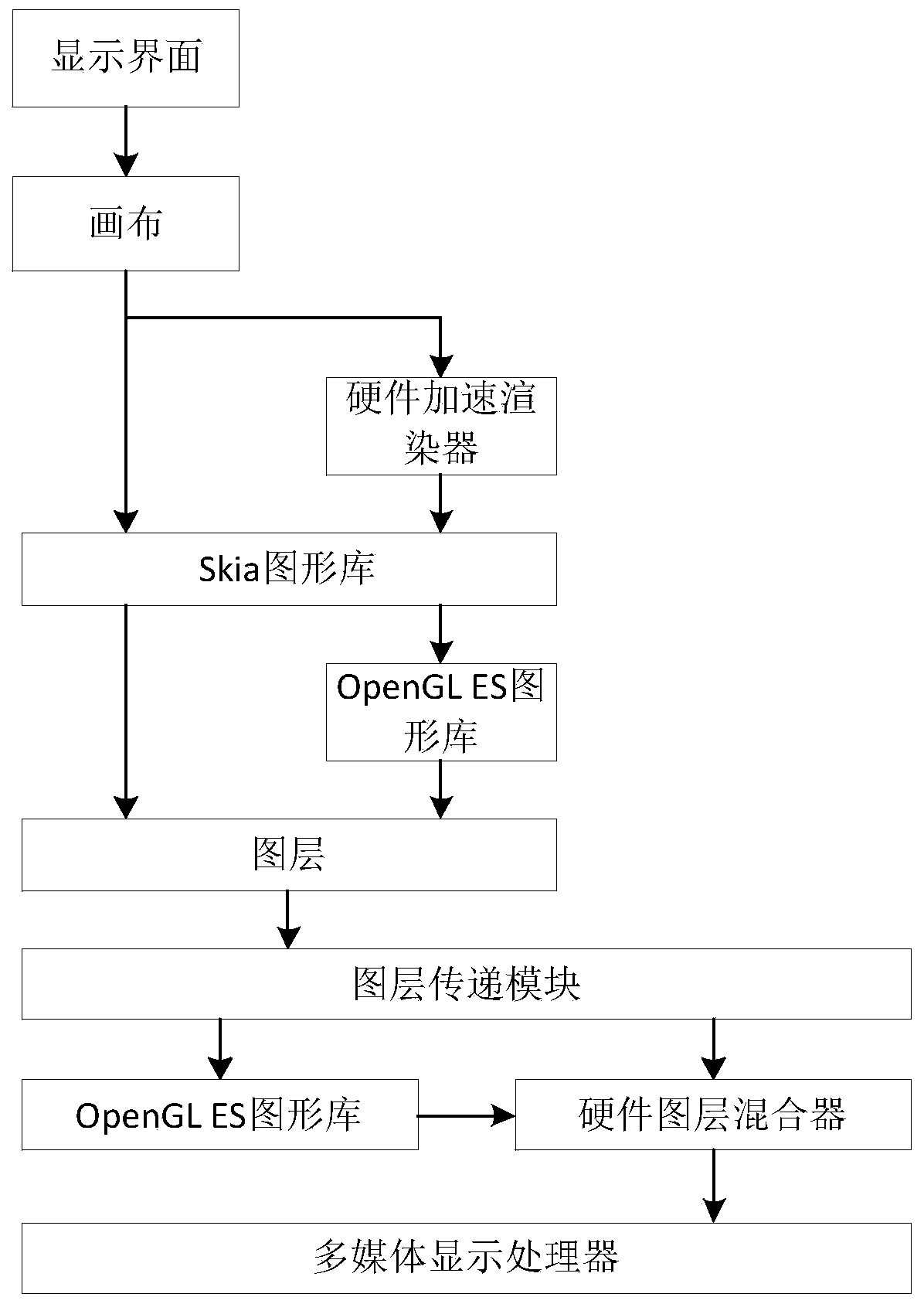

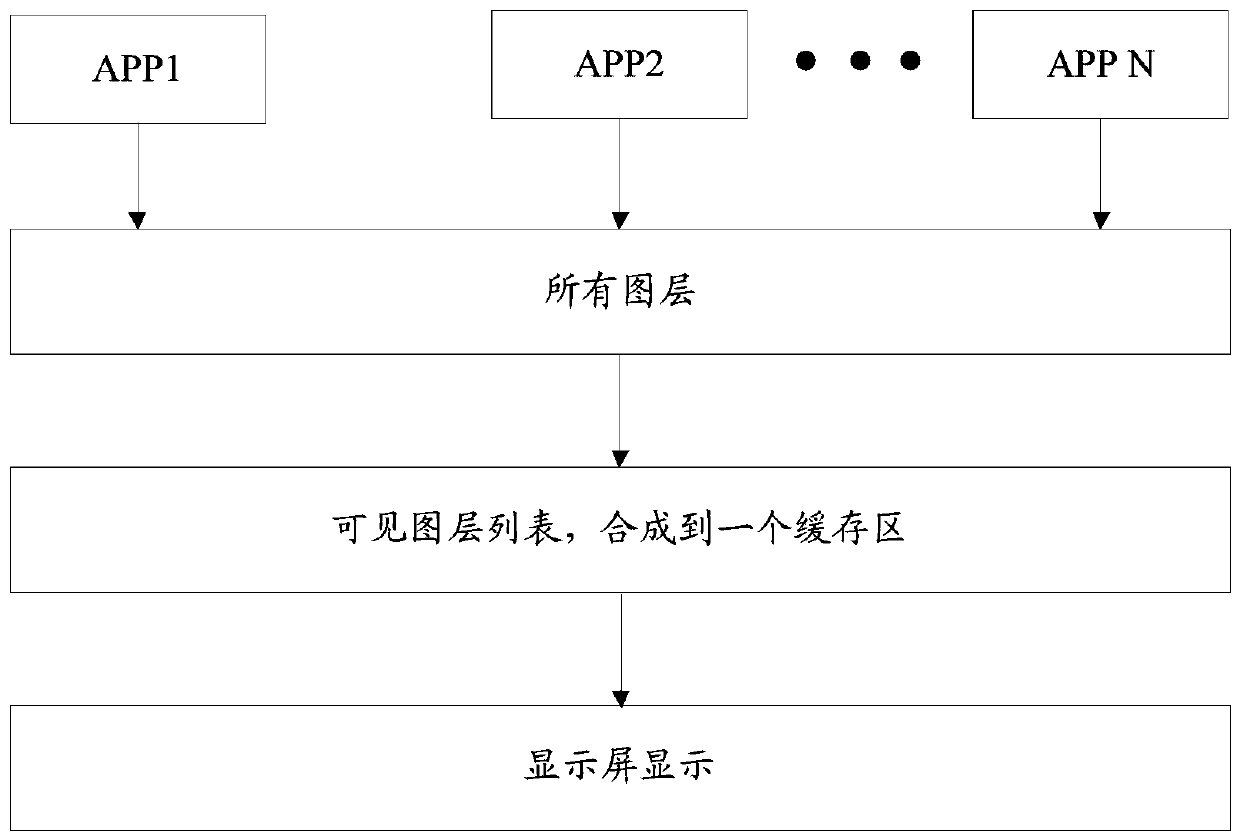

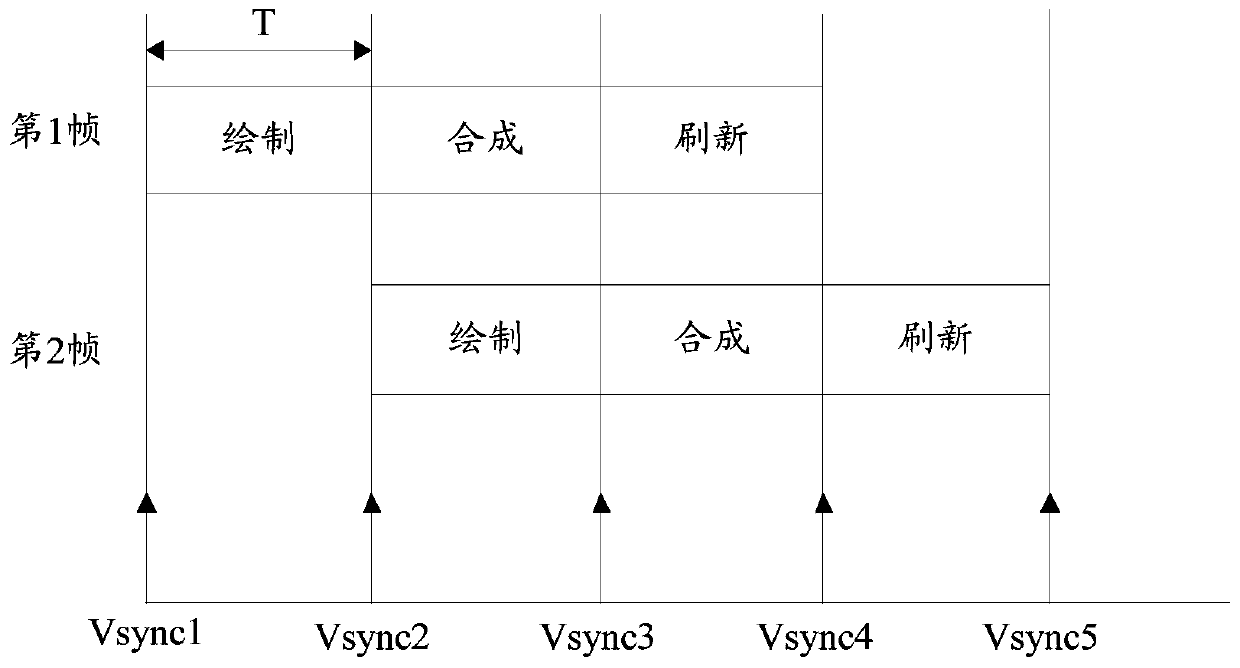

Image synthesis method and device, electronic equipment and storage medium

Inactive; CN110413245A; Balanced power consumption; Taking power consumption into consideration; Digital data processing details; Digital output to display device; Graphics; Imaging processing

The invention discloses an image synthesis method and device, electronic equipment and a storage medium. The method comprises the following steps: acquiring the current power consumption of the electronic equipment and a plurality of layers of a to-be-displayed image; judging whether the current power consumption is higher than a preset threshold value or not; and if so, synthesizing and displaying the plurality of layers by using a multimedia display processor, and if not, synthesizing and displaying the plurality of layers by using a graphic processor. Different layer synthesis modes are selected according to the current power consumption of the electronic equipment: a synthesis mode with low energy consumption is selected when the power consumption of the electronic equipment is relatively high, so as to balance the power consumption of the electronic equipment, and a synthesis mode with relatively high synthesis capability is selected when the power consumption of the electronic equipment is relatively low, so as to improve the image processing performance.
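The selection rule reduces to a single threshold comparison. In this sketch the function name, the milliwatt units and the string labels `"mdp"` (multimedia display processor) and `"gpu"` (graphic processor) are assumptions for illustration; the abstract only states the comparison and the two outcomes.

```python
def choose_compositor(current_power_mw, threshold_mw):
    """Pick the layer compositor from the device's current power consumption.

    Above the threshold, use the lower-energy multimedia display processor;
    otherwise use the graphic processor for its higher synthesis capability.
    """
    return "mdp" if current_power_mw > threshold_mw else "gpu"


if __name__ == "__main__":
    print(choose_compositor(current_power_mw=2500, threshold_mw=2000))
```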

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD

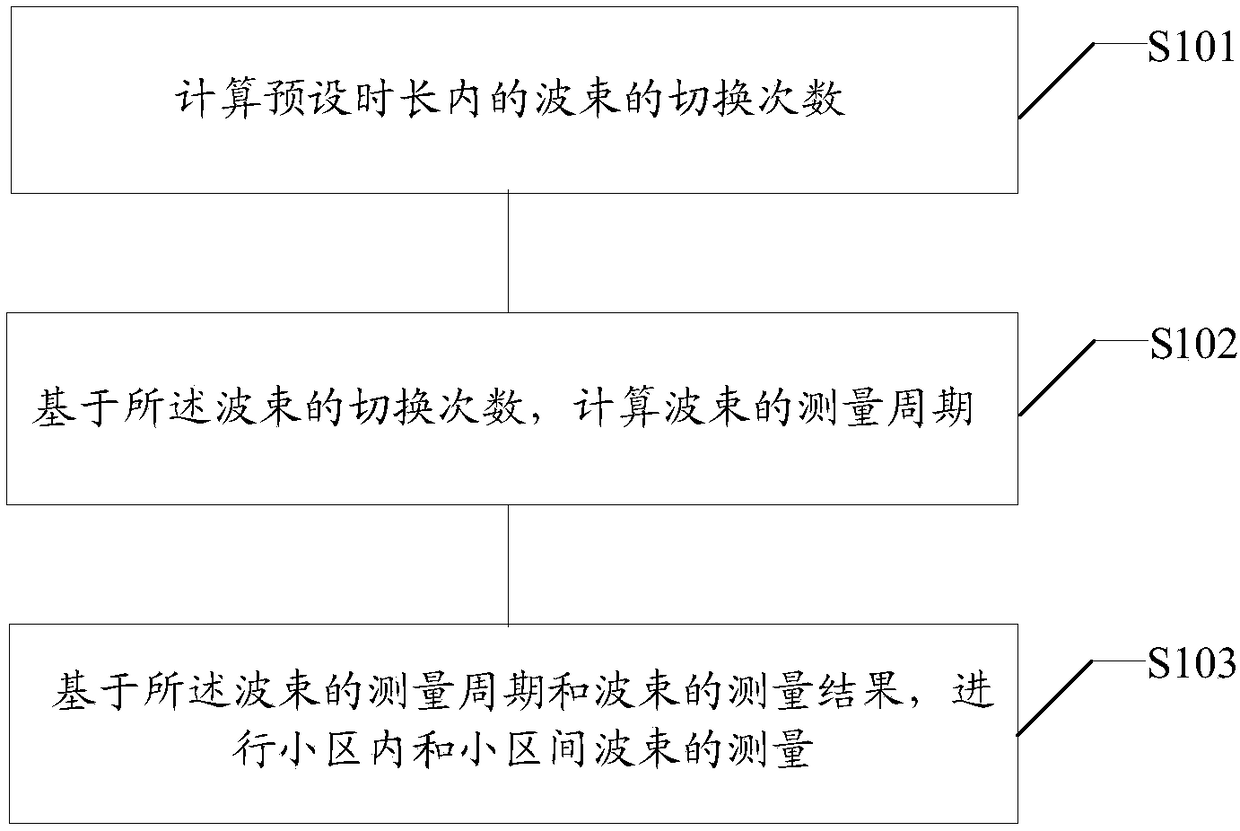

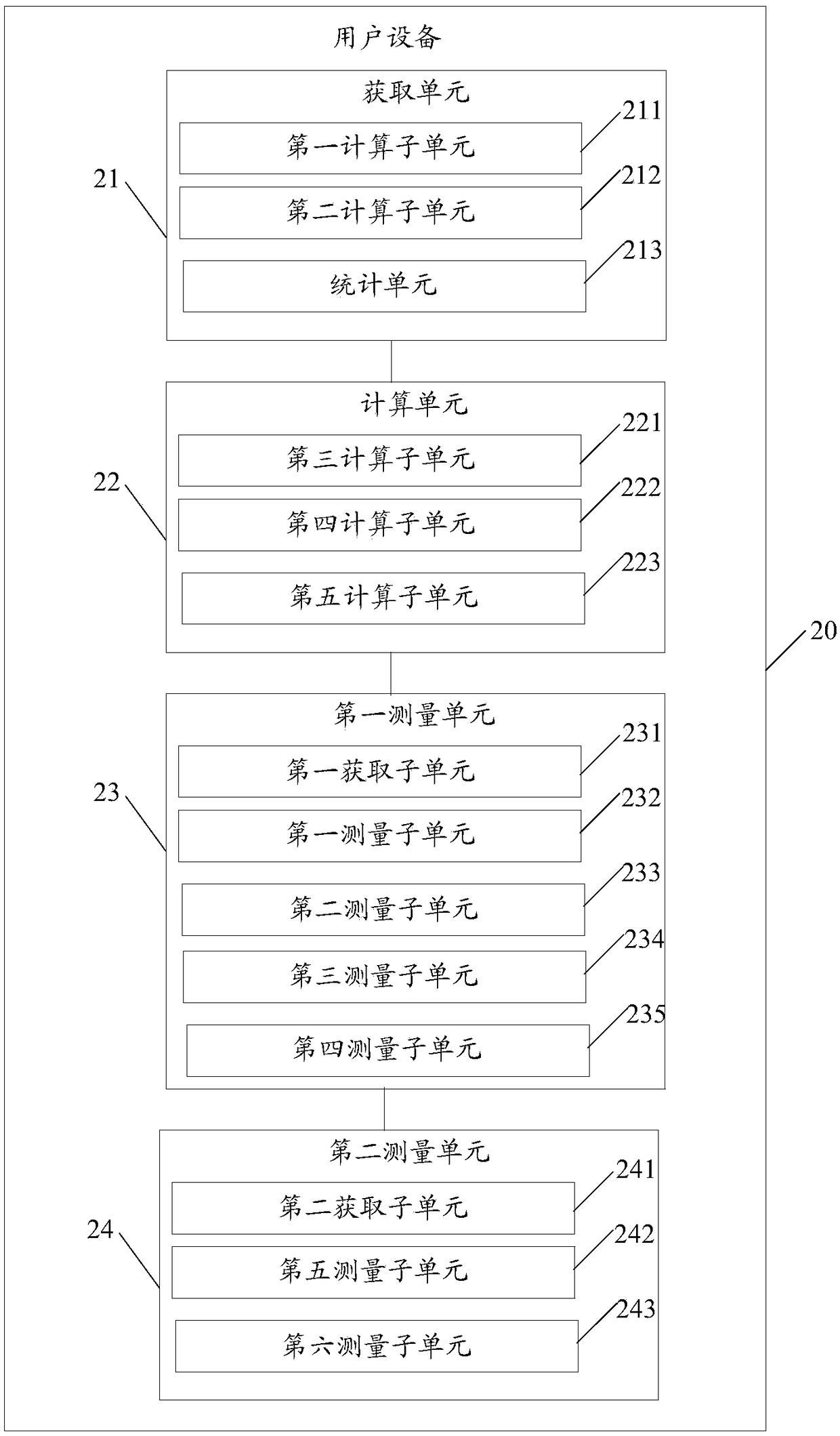

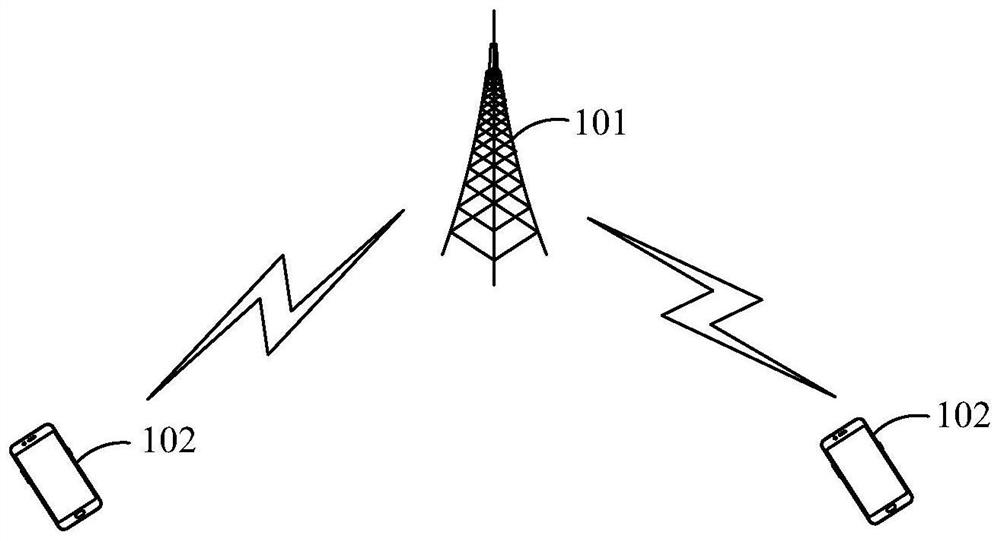

Beam measurement method of user equipment, user equipment and computer readable medium

Active; CN109474948A; Improve accuracy; Take into account the accuracy; Radio transmission; Network planning; User equipment; Handover

The invention discloses a beam measurement method of user equipment, the user equipment and a computer readable medium. The beam measurement method of the user equipment comprises the following steps: computing the handover frequency of the beam in a preset duration; computing the measurement cycle of the beam based on the handover frequency of the beam; and performing intra-cell beam measurement and inter-cell beam measurement based on the measurement cycle of the beam and a measurement result of the beam. By applying the above scheme, the measurement cycle and measurement content can be dynamically adjusted according to the handover frequency of the beam and the measurement result of the beam, and the measurement accuracy and the user equipment power consumption are both taken into consideration.

Owner:SPREADTRUM COMM (SHANGHAI) CO LTD

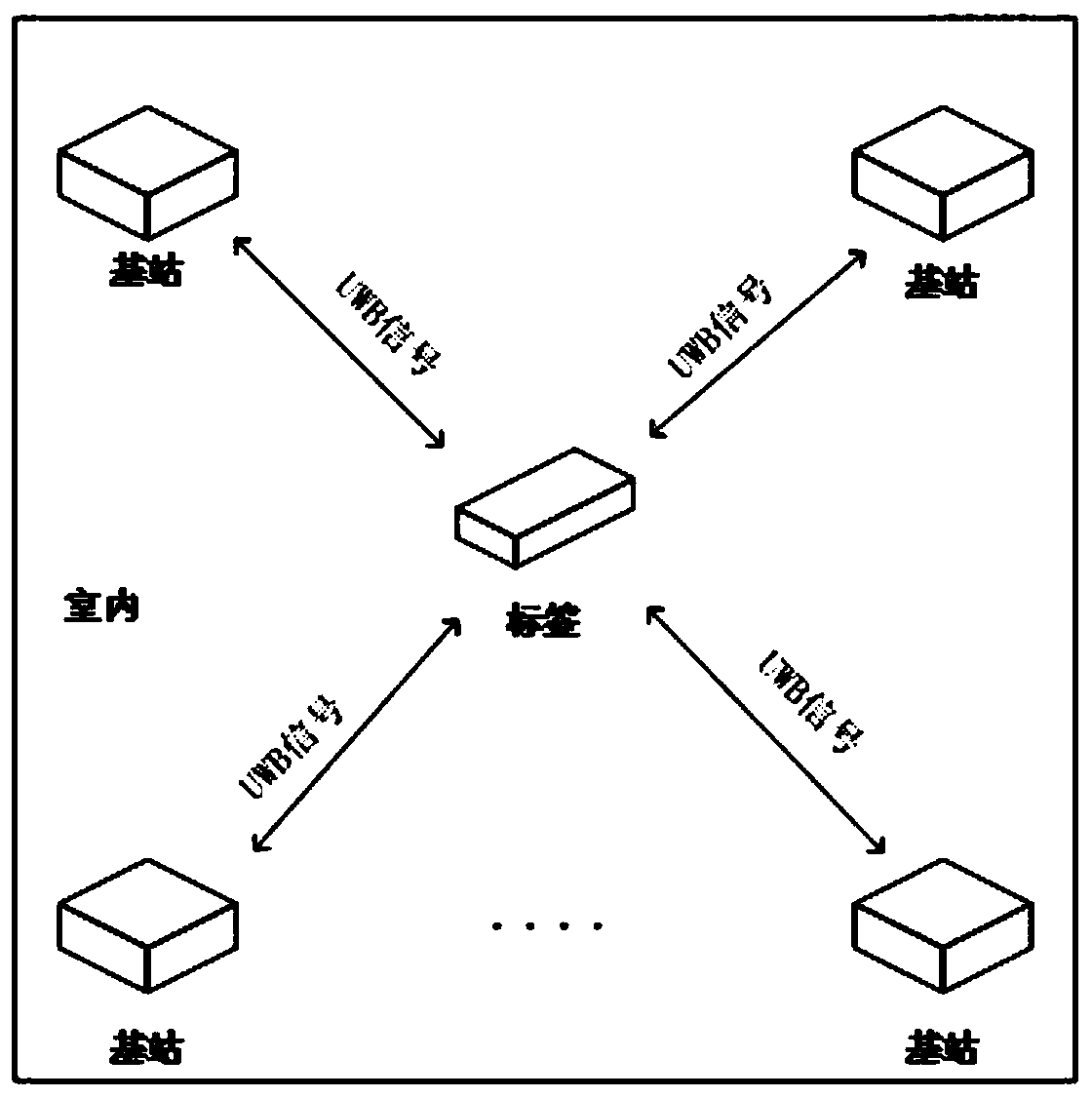

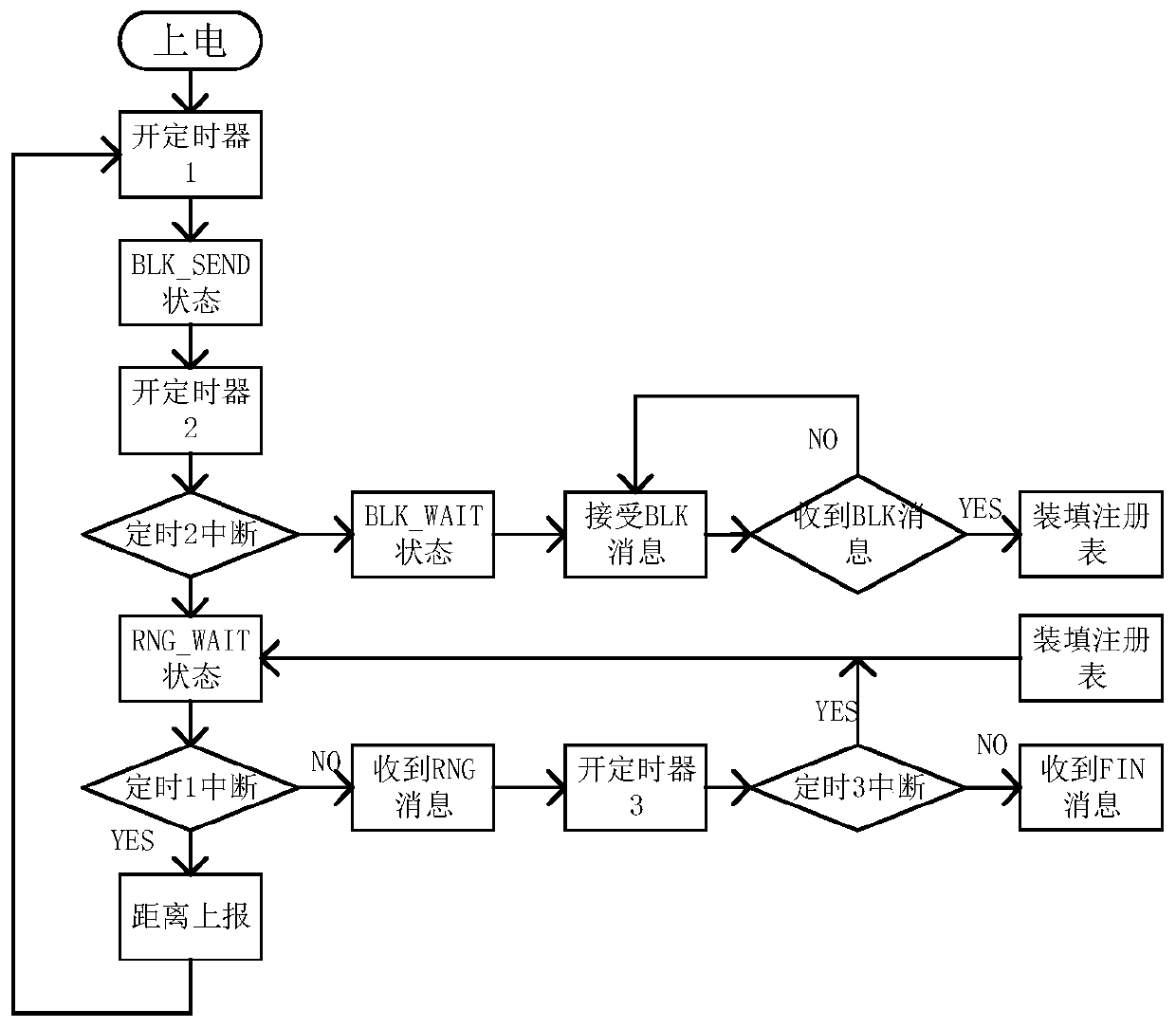

UWB-based distance measuring device and a distance measuring time sequence method thereof

Inactive; CN110806562A; Taking power consumption into consideration; Extended standby life; Position fixation; Timestamp; Ethernet

The invention discloses a UWB-based distance measuring device and a distance measuring time sequence method thereof. The distance measuring device comprises a plurality of positioning base stations and a to-be-located label. The positioning base stations and the label exchange timestamp information through UWB communication. The positioning base stations include first main control chips, DWM1000 modules, Ethernet modules and power supply modules. The label includes a second main control chip, a DWM1000 module and a power supply module. In addition, according to the distance measuring time sequence method, bilateral bidirectional ranging is adopted. When the label moves in space, a distance measuring signal is sent periodically to a base station in the space, and the base station confirms the distance measurement by responding to the signal; the label then sends a final signal to complete one distance measuring exchange. According to the invention, the UWB-based distance measuring time sequence method has high robustness; the energy consumption of the label is effectively reduced; and the positioning precision is improved.
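The three-message exchange described above (ranging signal, response, final signal) matches the shape of double-sided two-way ranging. The abstract does not give its distance formula, so the sketch below uses the commonly documented asymmetric double-sided formula as an assumption; the function and parameter names are hypothetical, and all times are in seconds.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def ds_twr_distance_m(t_round1, t_reply1, t_round2, t_reply2):
    """Estimate distance from one double-sided two-way ranging exchange.

    t_round1: label's time from sending the ranging signal to receiving the response
    t_reply1: base station's delay between receiving the signal and responding
    t_round2: base station's time from sending the response to receiving the final
    t_reply2: label's delay between receiving the response and sending the final
    """
    tof = (t_round1 * t_round2 - t_reply1 * t_reply2) / (
        t_round1 + t_round2 + t_reply1 + t_reply2
    )
    return SPEED_OF_LIGHT_M_S * tof


if __name__ == "__main__":
    # Simulated exchange with a true one-way time of flight of 10 ns.
    tof = 10e-9
    reply1, reply2 = 300e-6, 400e-6
    print(ds_twr_distance_m(2 * tof + reply1, reply1, 2 * tof + reply2, reply2))
```

Using both round trips makes the estimate far less sensitive to clock offset between the two devices than a single round trip, which is the usual motivation for the double-sided scheme.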

Owner:NANJING INST OF TECH

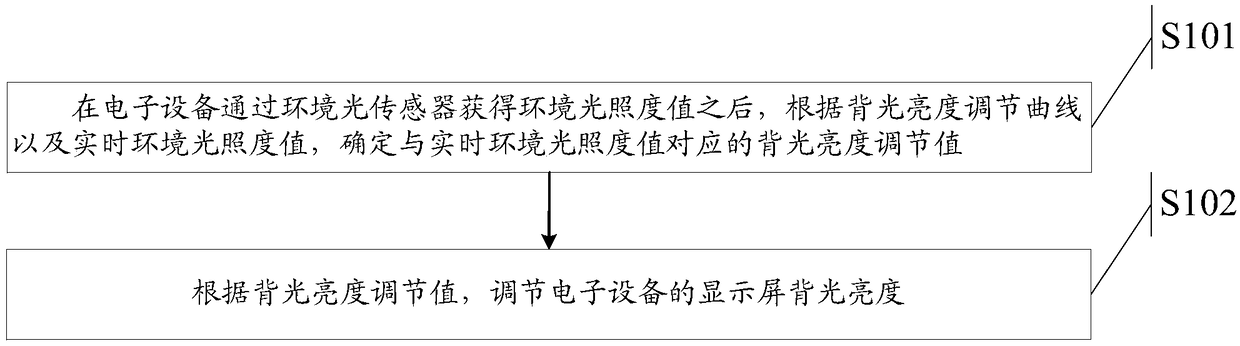

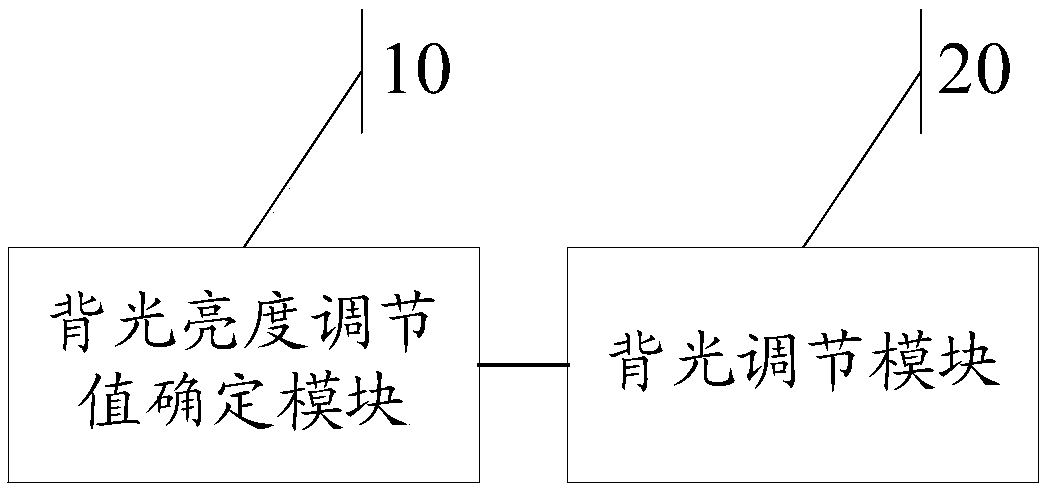

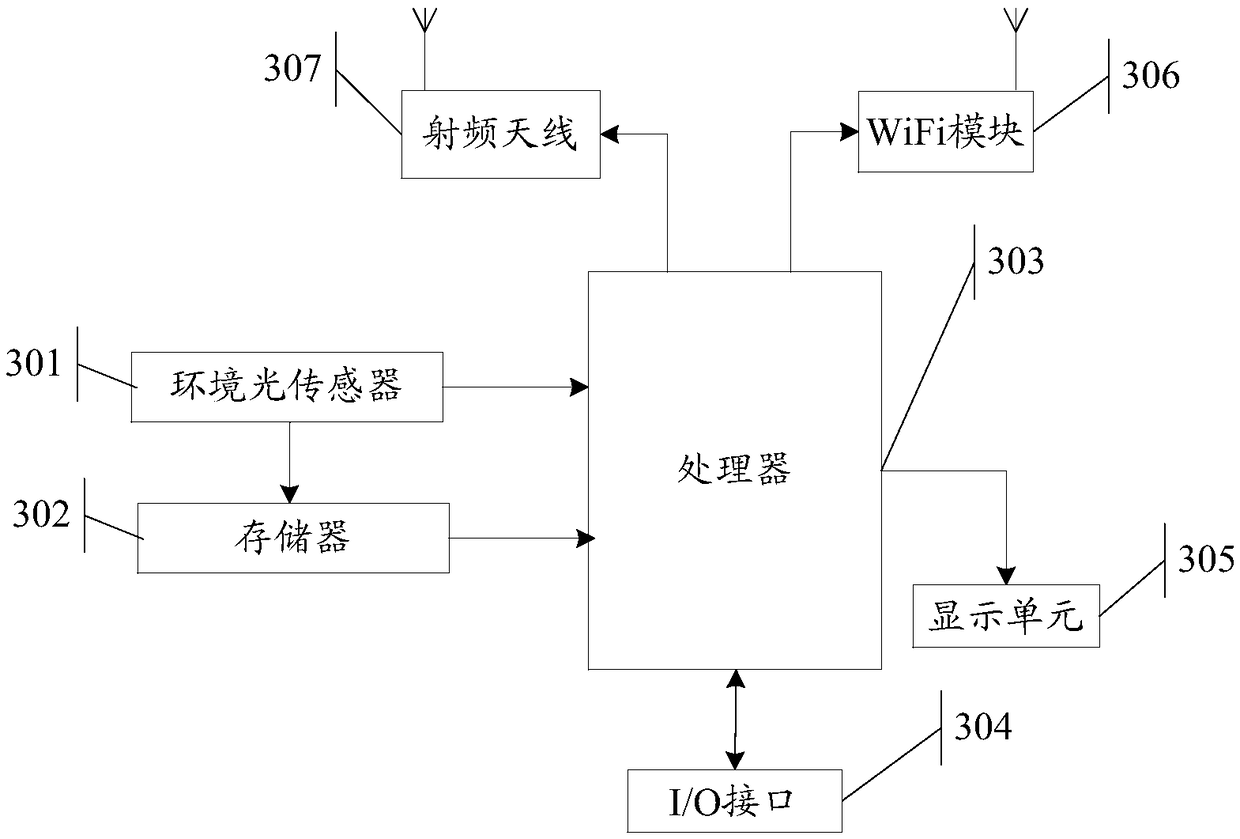

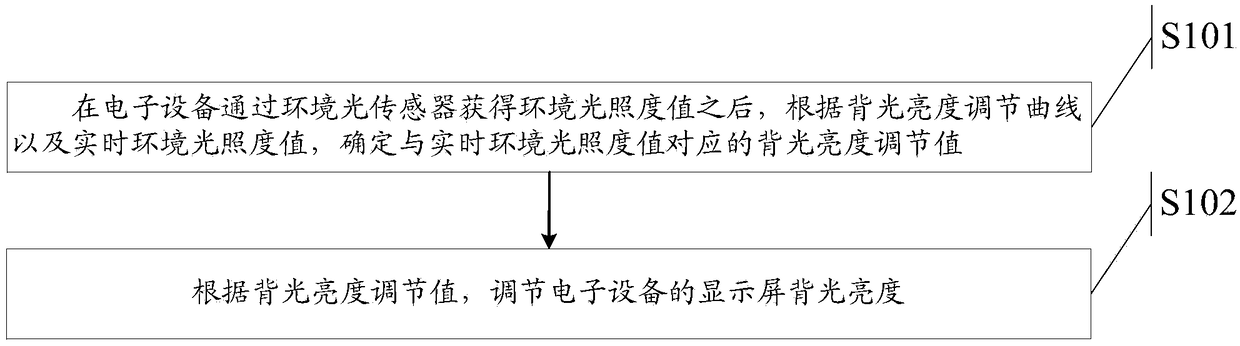

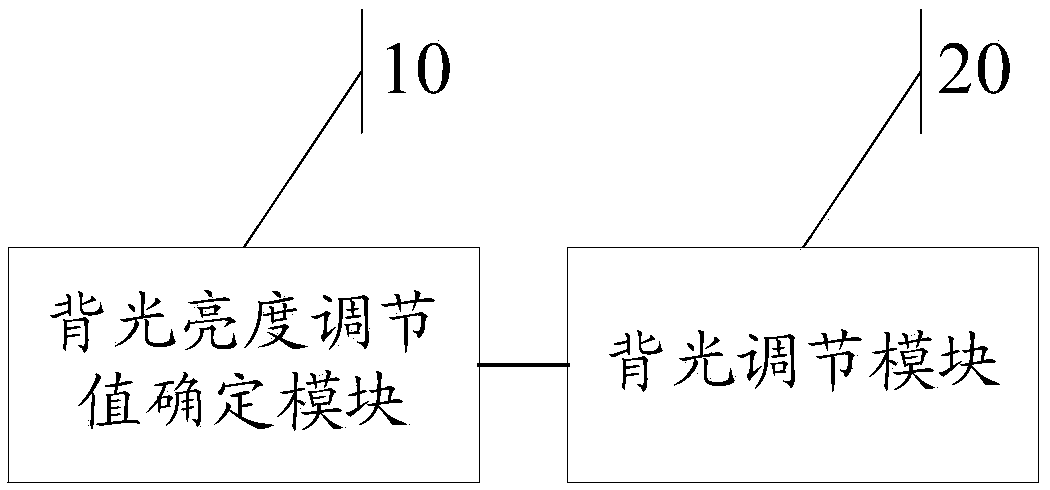

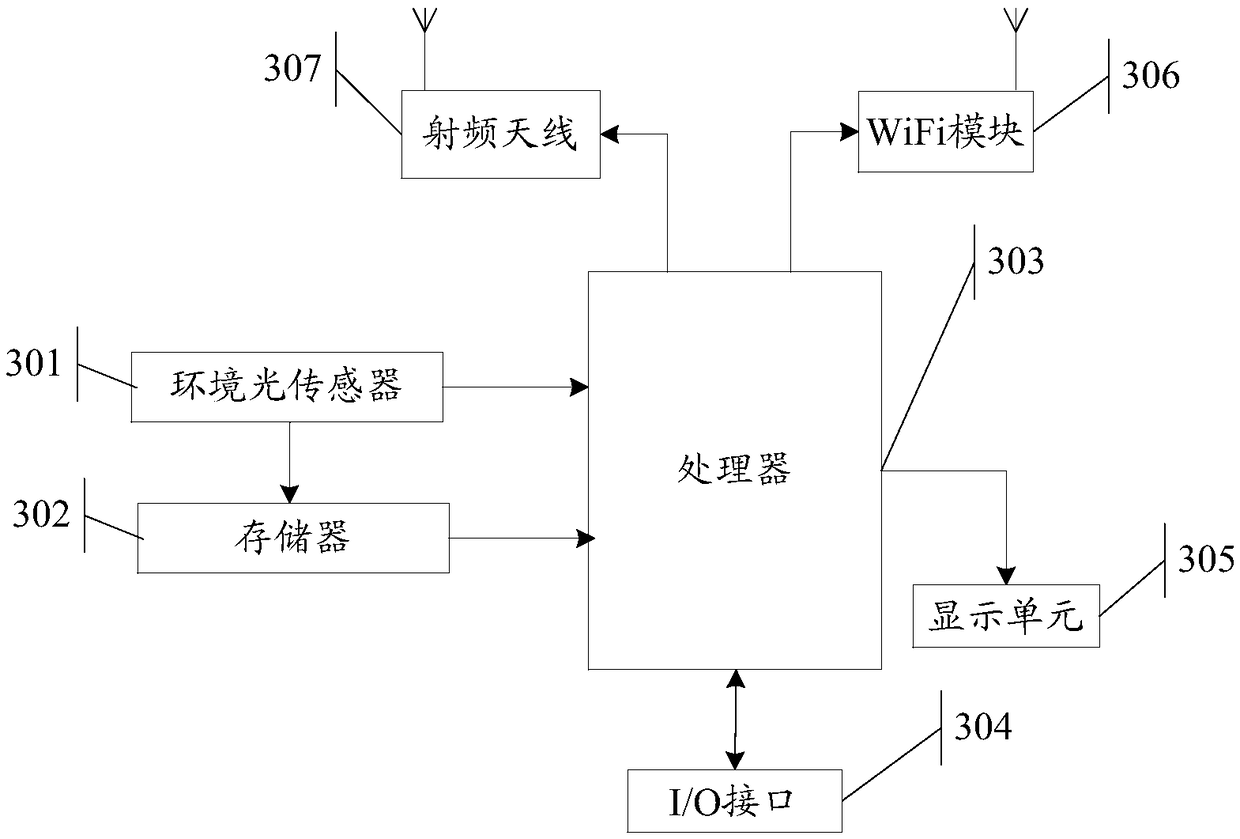

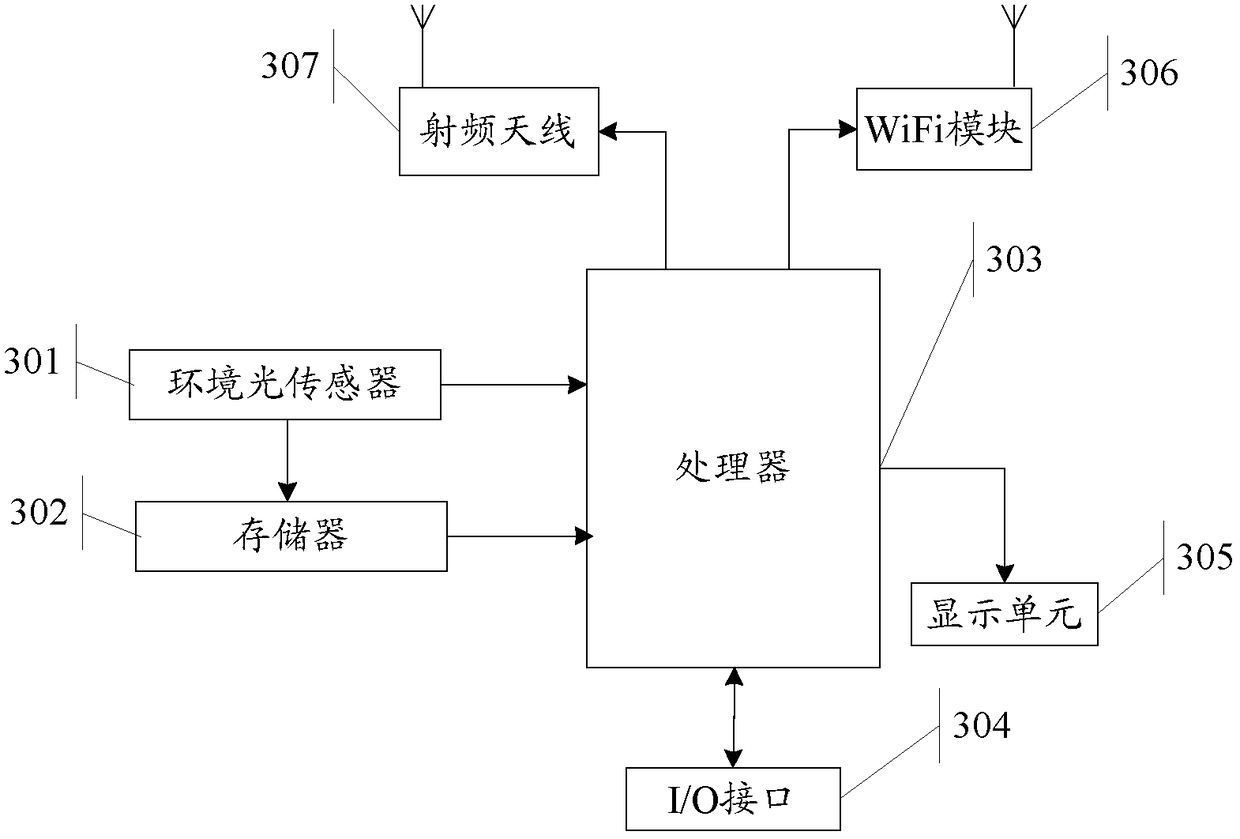

Method and device for adjusting backlight brightness, and electronic equipment

Active; CN108877692A; Taking power consumption into consideration; Taking into account the visual experience of the human eye; Electrical apparatus; Cathode-ray tube indicators; Illuminance; Computer science

Owner:HUAWEI DEVICE CO LTD

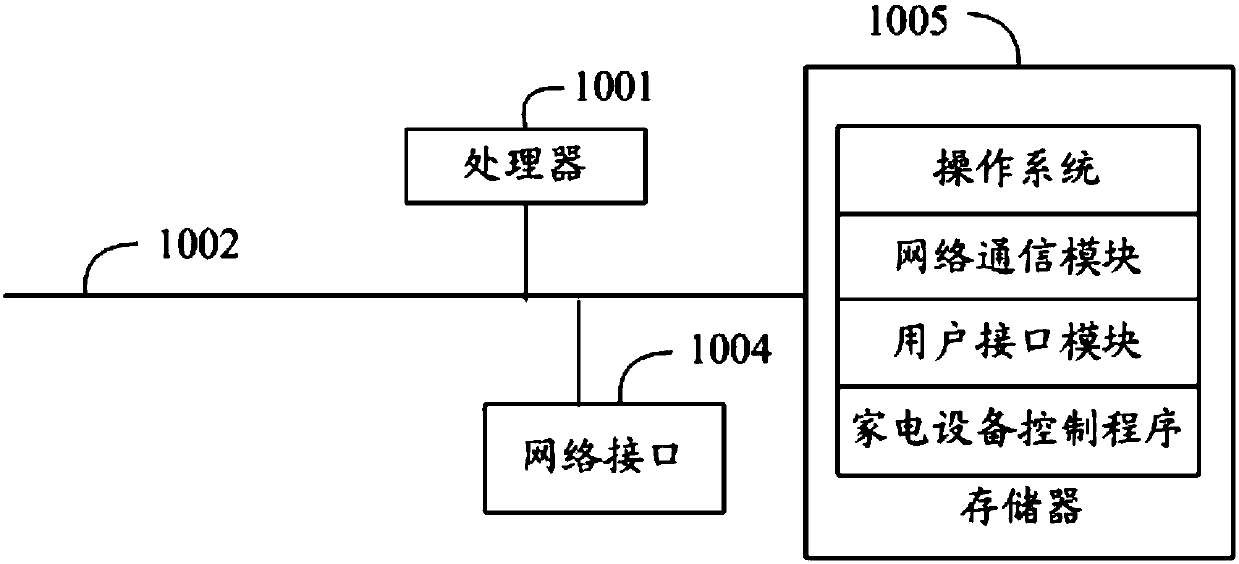

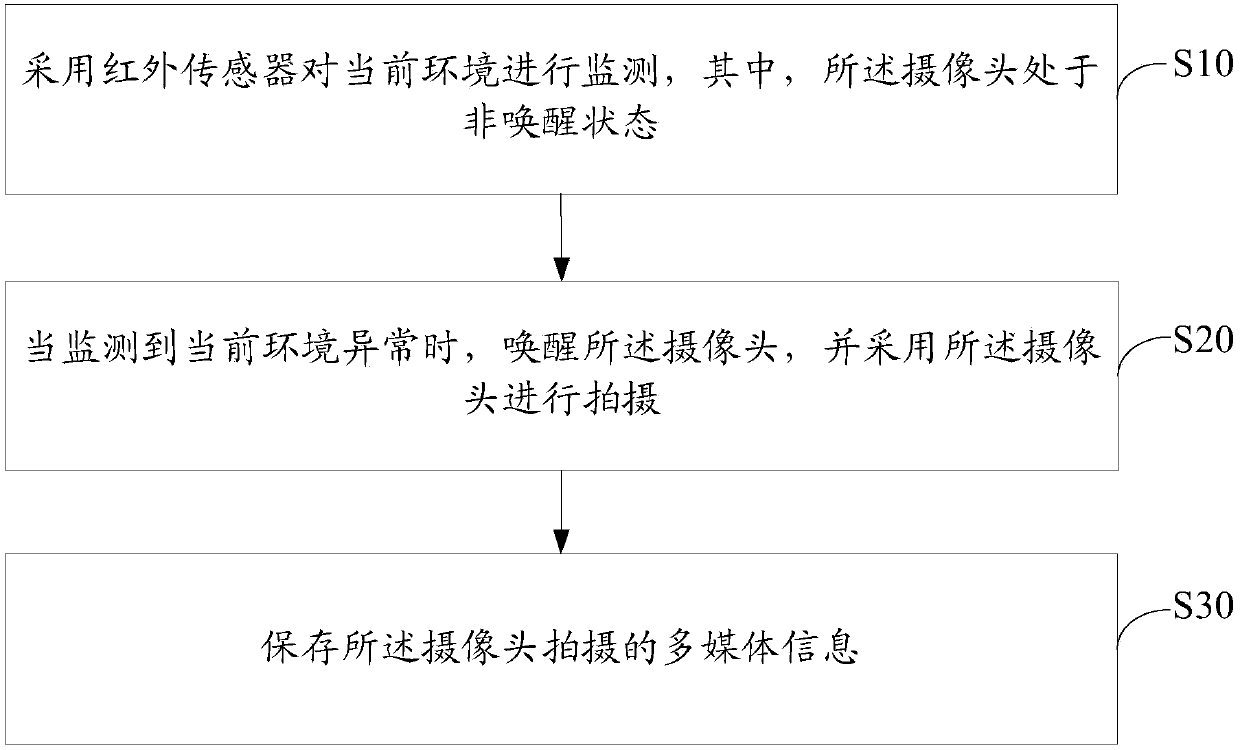

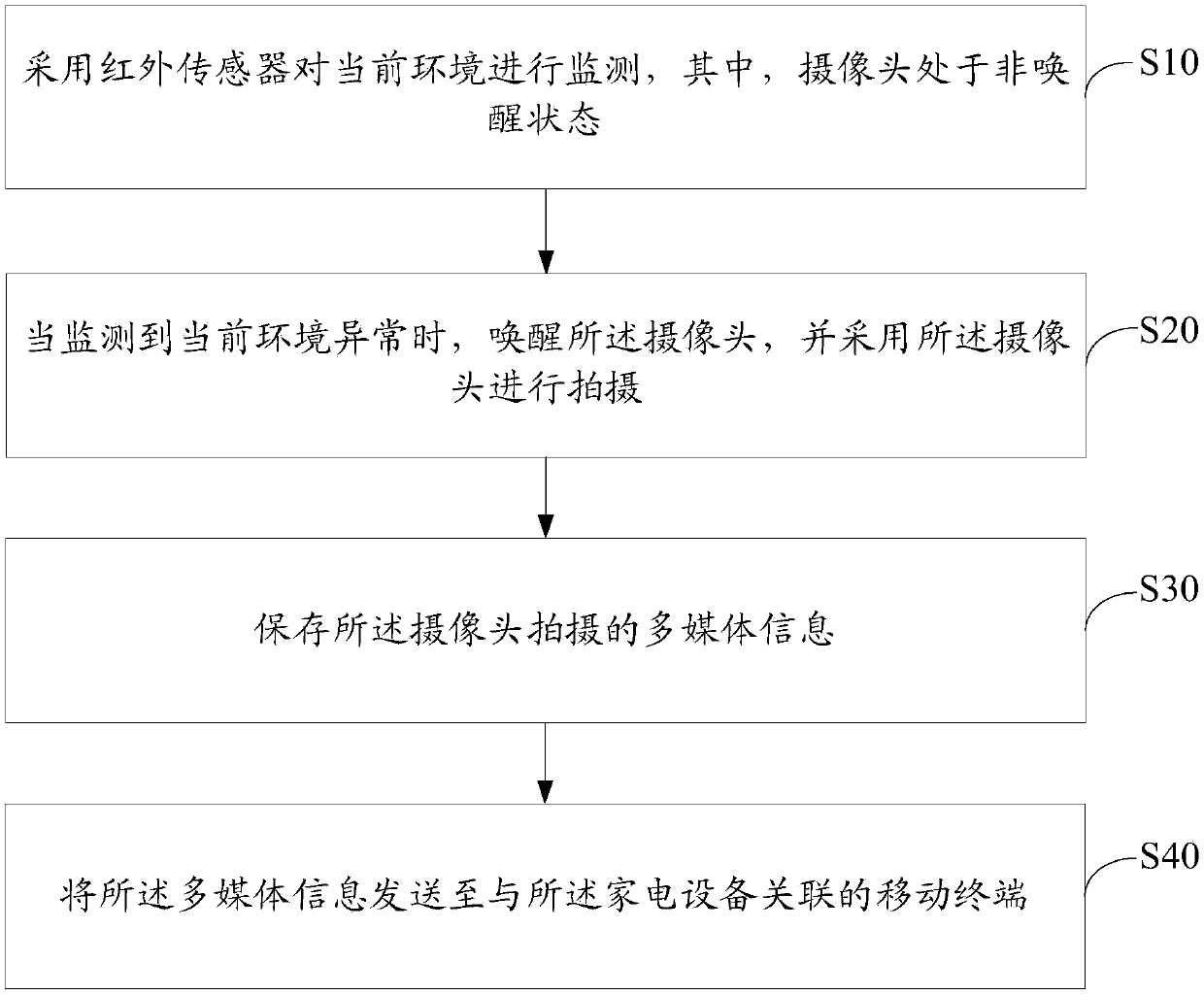

Method and device for controlling household appliance equipment, household appliance equipment and readable storage medium

Inactive; CN107861391A; Avoid lost; Reduce power consumption; Computer control; Programme total factory control; Security monitoring; Embedded system

The invention discloses a method for controlling household appliance equipment. The household appliance equipment includes a camera and an infrared sensor, and the method includes: using the infrared sensor to monitor the current environment while the camera is in a non-wakeup state; when an abnormality in the current environment is detected, waking the camera and using it to shoot; and storing the multimedia information shot by the camera. The invention also discloses a device for controlling household appliance equipment, household appliance equipment and a computer readable storage medium. The method, device, household appliance equipment and computer readable storage medium provided by the invention balance power consumption and security capability when the household appliance equipment performs security monitoring.

Owner:GD MIDEA AIR-CONDITIONING EQUIP CO LTD

Method and device for adjusting backlight brightness, and electronic equipment

Active; CN108877739A; Taking power consumption into consideration; Taking into account the visual experience of the human eye; Electrical apparatus; Cathode-ray tube indicators; Illuminance; Computer science

The invention provides a method and device for adjusting the backlight brightness, and electronic equipment. The method comprises the following steps: obtaining an environment light illuminance value through an environment sensor; determining a backlight brightness adjustment value corresponding to the environment light illuminance value according to a backlight brightness adjustment curve and the environment light illuminance value, wherein the backlight brightness adjustment curve is determined based on the corresponding relation among a brightness reference value, the backlight brightness adjustment curve and the backlight brightness; and adjusting the backlight brightness of a display screen of the electronic equipment according to the backlight brightness adjustment value. The method adjusts the backlight brightness of the display screen according to the environment light illuminance while taking into account both the power consumption of the electronic equipment and the visual experience of the user.
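One simple way to apply an adjustment curve is piecewise-linear interpolation between a few (illuminance, brightness) points. The curve points, the 0 to 255 brightness scale and the function name below are assumptions for illustration; the abstract does not specify the curve's shape or how the brightness reference value enters it.

```python
from bisect import bisect_right

# Hypothetical adjustment curve: (ambient illuminance in lux, backlight level 0-255).
CURVE = [(0, 10), (100, 50), (1000, 255)]


def backlight_for(lux, curve=CURVE):
    """Interpolate the backlight adjustment value for an illuminance reading."""
    xs = [point[0] for point in curve]
    if lux <= xs[0]:
        return float(curve[0][1])   # clamp below the first curve point
    if lux >= xs[-1]:
        return float(curve[-1][1])  # clamp above the last curve point
    i = bisect_right(xs, lux)
    (x0, y0), (x1, y1) = curve[i - 1], curve[i]
    return y0 + (y1 - y0) * (lux - x0) / (x1 - x0)


if __name__ == "__main__":
    print(backlight_for(50))
```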

Owner:HUAWEI DEVICE CO LTD

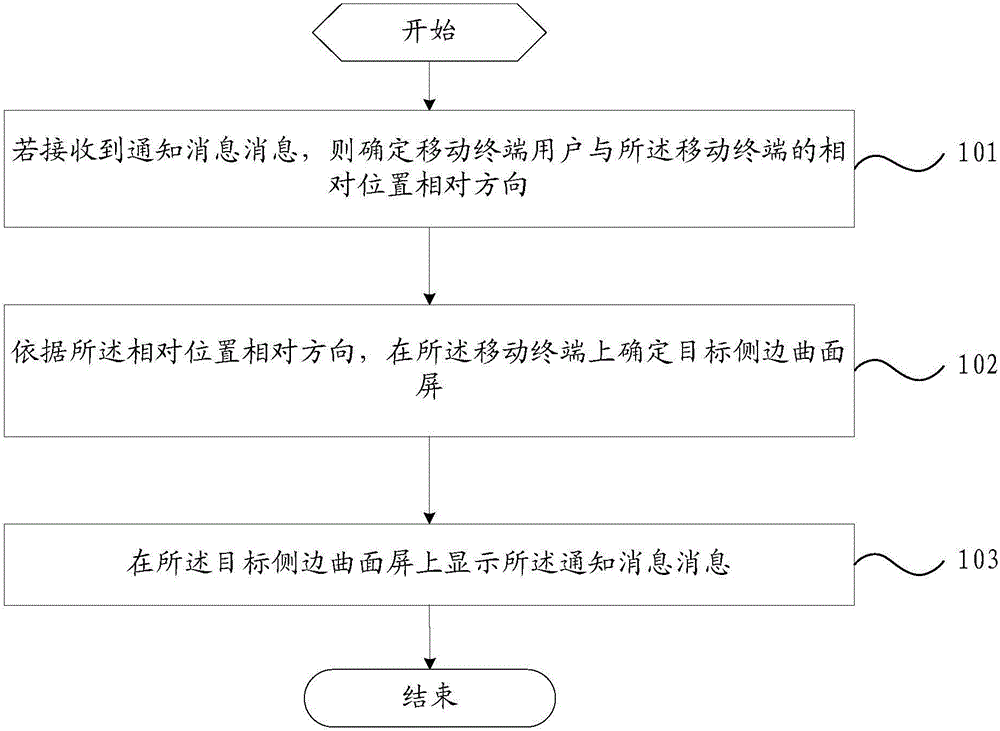

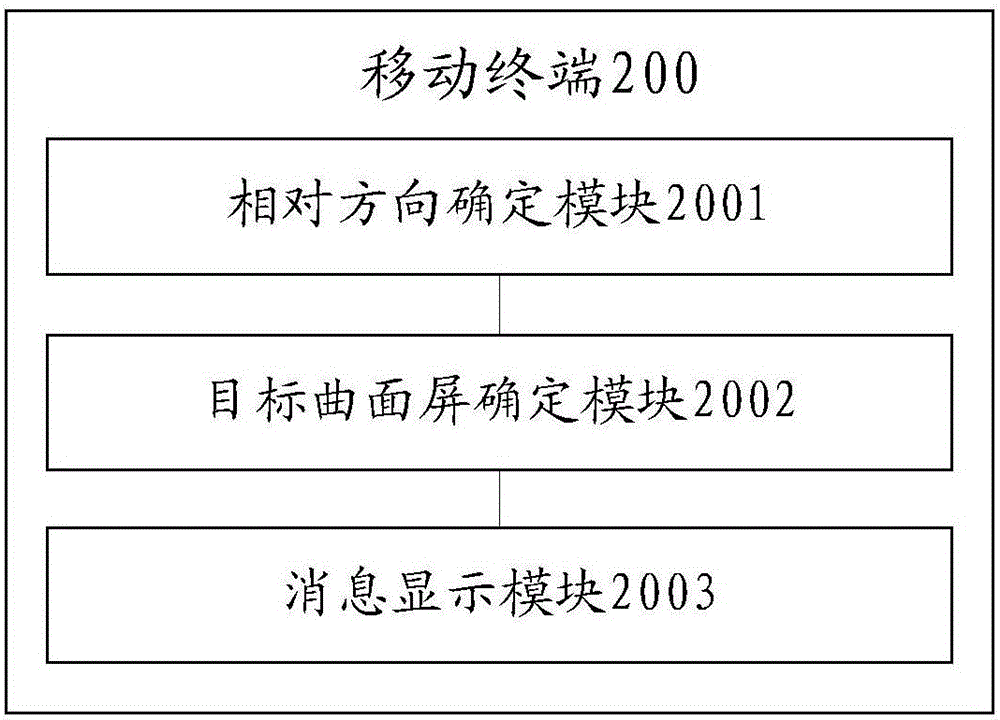

Message display method and mobile terminal

Inactive; CN106527850A; Improve viewing convenience; Avoid life; Power supply for data processing; Input/output processes for data processing; Computer terminal; Computer science

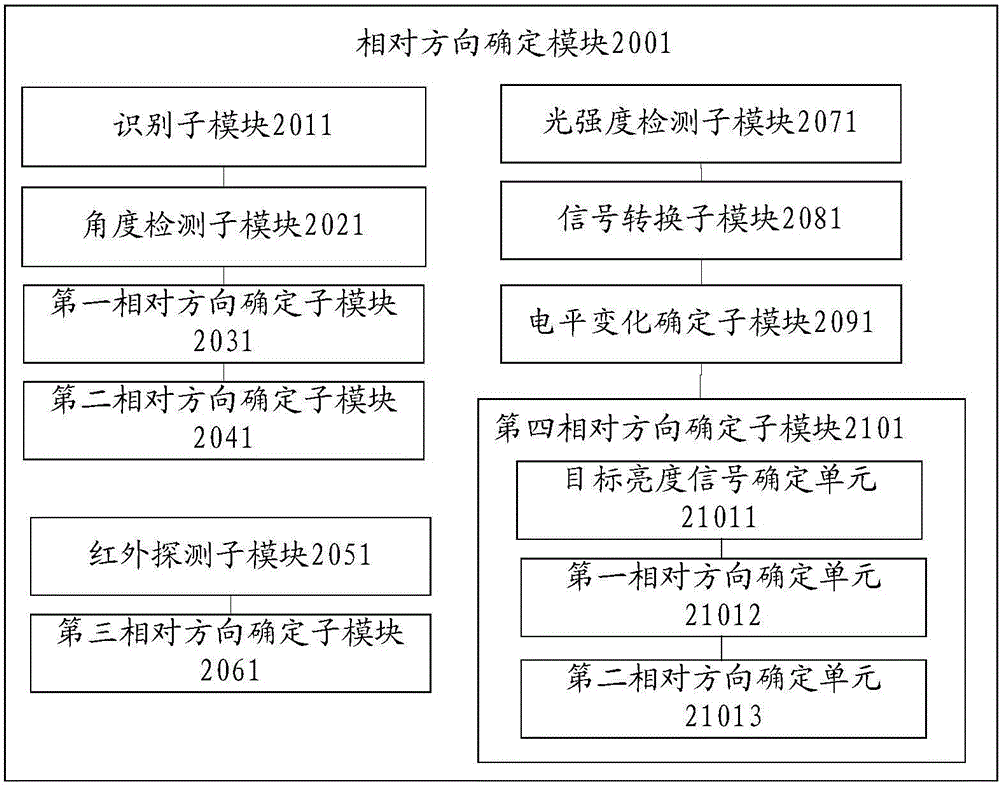

The embodiment of the invention provides a message display method and a mobile terminal. The message display method comprises the steps of determining relative directions of a mobile terminal user and the mobile terminal if a message is received; determining a target lateral curved screen at the mobile terminal based on the relative directions; and displaying the message on the target lateral curved screen. As the target lateral curved screen for displaying the message is determined through the relative directions of the mobile terminal user and the mobile terminal in the embodiment, the lateral curved screen which is closer to a user position is enabled to display the message, and checking convenience of the message is improved. Meanwhile, as the lateral curved screen at one side is only adopted for displaying the message, the problems of short service lifetime and high power consumption of the lateral curved screen caused by simultaneously displaying the same message by two lateral curved screens are avoided. That is to say, both the checking convenience of the message and the long service lifetime and low power consumption of the lateral curved screen can be considered.

Owner:VIVO MOBILE COMM CO LTD

Satellite-borne NAND Flash storage management system

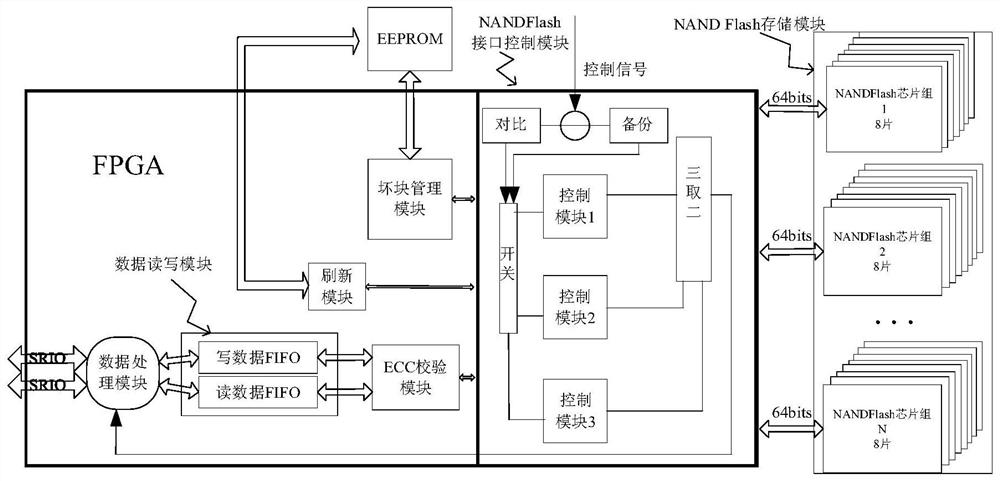

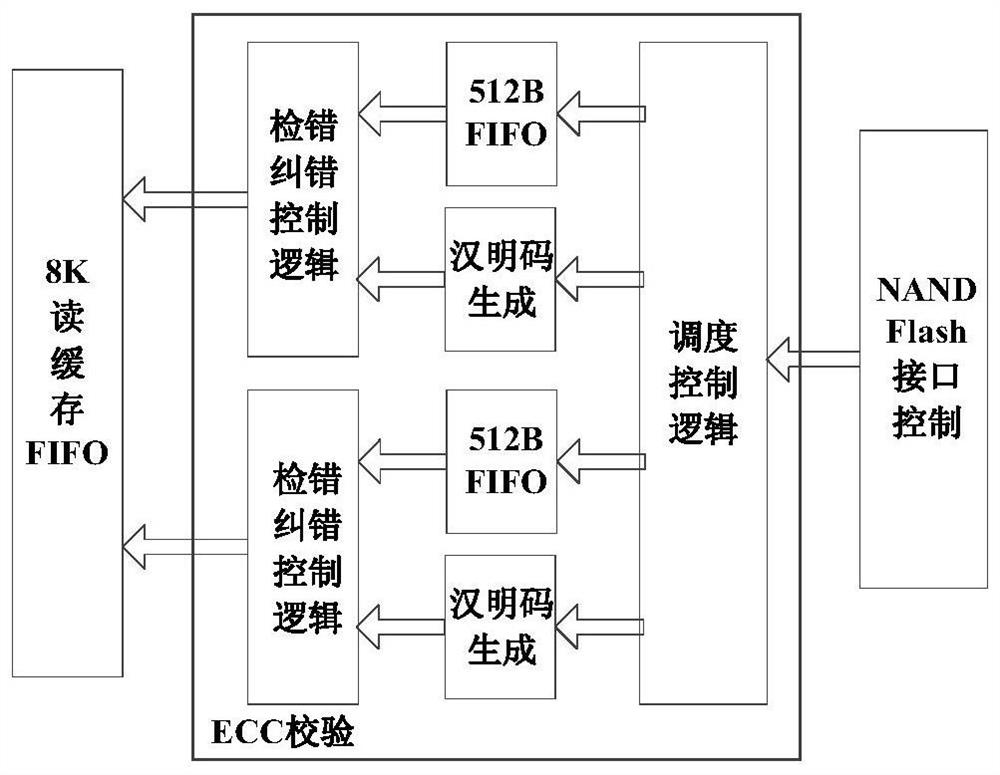

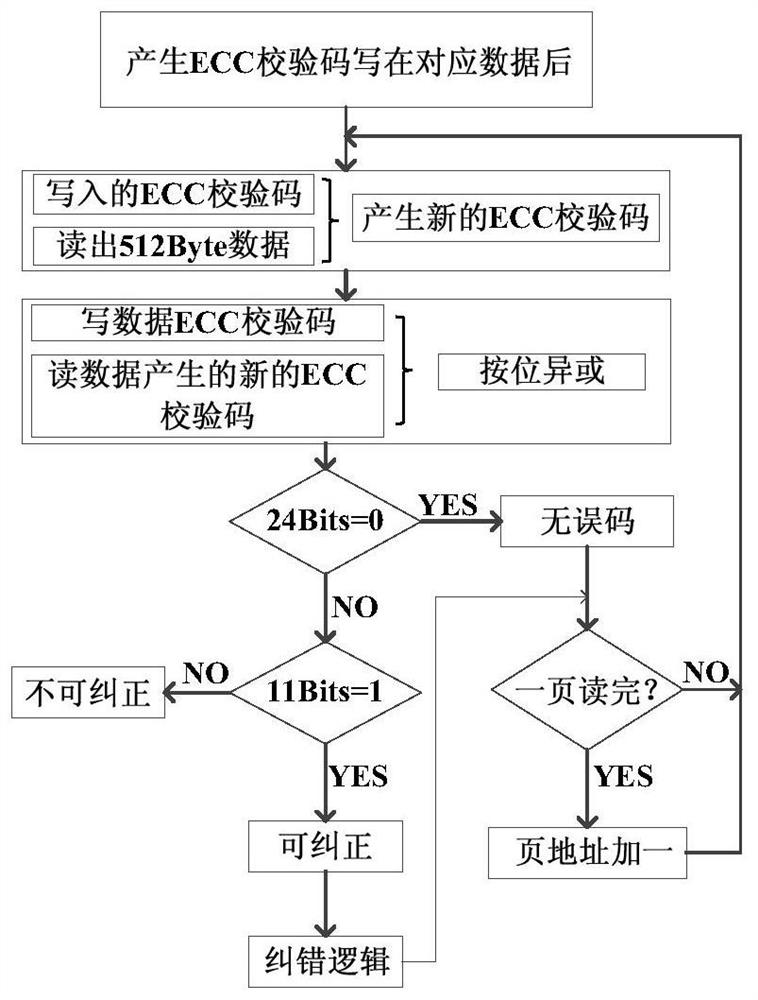

Active; CN112181304A; Improve reliability; Secure data transfer management; Input/output to record carriers; Redundant data error correction; Control signal; Engineering

The invention provides a NAND Flash high-reliability storage management system for on-orbit use on a micro-satellite. The system comprises a data processing module, a data read-write module, a refreshing function module, an ECC verification module, a bad block management module and a NAND Flash interface control module. The NAND Flash interface control module has a two-out-of-three mode and a cold backup mode; the mode in use is determined by an external control signal, and the number of working control modules is selected through a switch matrix in the different modes. The management system is connected with a NAND Flash chip through a GPIO (General Purpose Input/Output) port, and can be expanded in both the serial and parallel dimensions. The ECC verification module is built on the ping-pong operation principle, and the NAND Flash bad block table is managed and updated in combination with the external memory. To cope with large-scale errors, a refreshing function for the control module is designed. The high reliability of the NAND Flash storage management system is ensured through multiple modes, and meanwhile the overall power consumption is considered.

Owner:ZHEJIANG UNIV

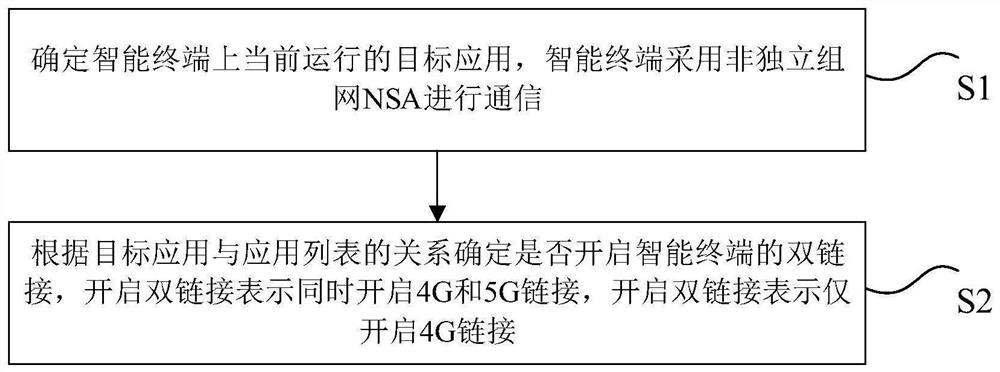

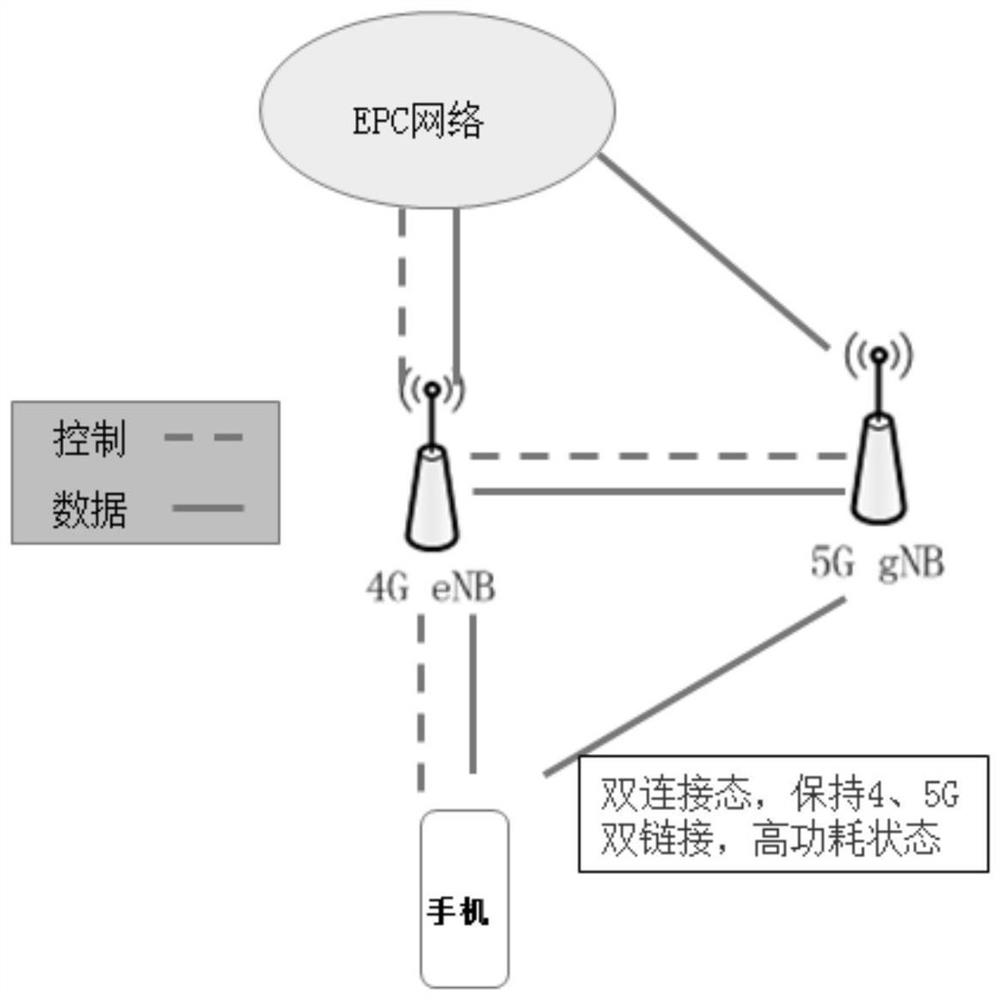

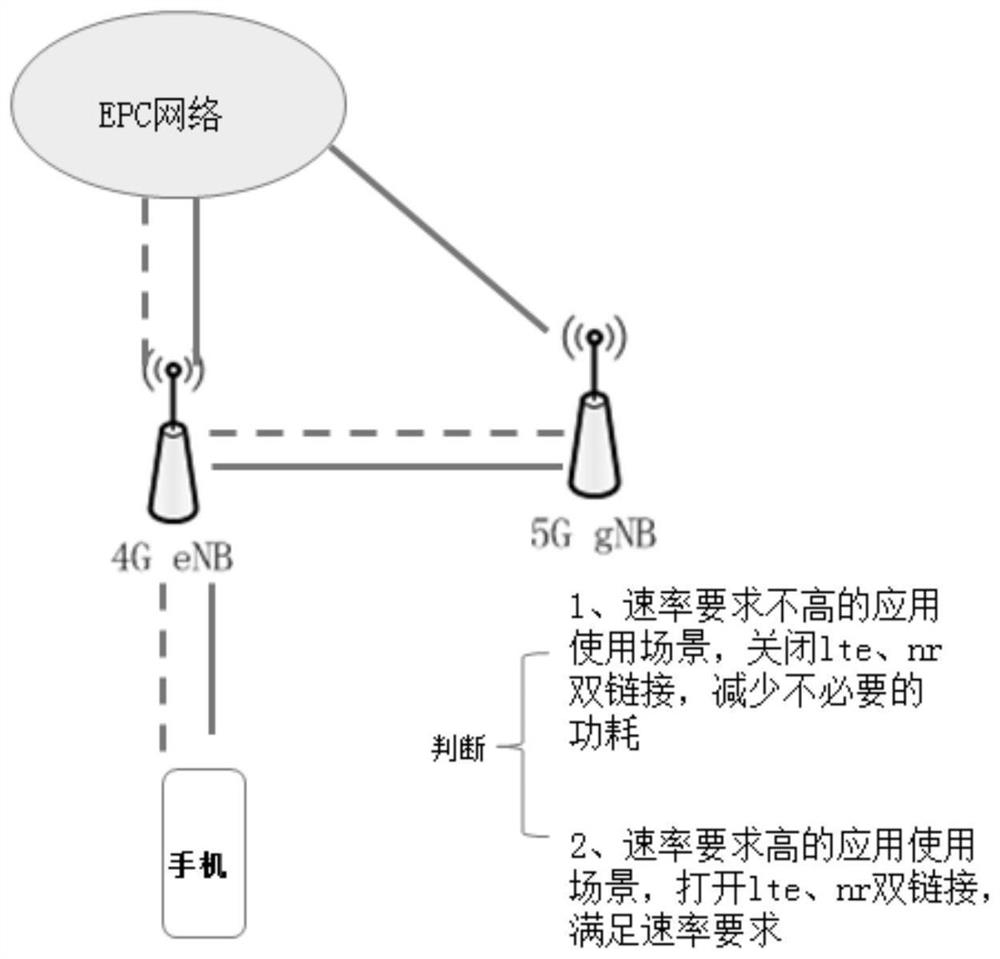

Double-link management method and device, electronic device and storage medium

Active; CN112118620A; Avoid wasting power; Improve user experience; Power management; Connection management; Embedded system; 5G

The invention discloses a double-link management method and device, an electronic device and a storage medium. The method comprises the steps of determining a target application currently running on an intelligent terminal, wherein the intelligent terminal adopts non-independent networking (NSA) for communication; and determining whether to open a dual link of the intelligent terminal according to the relationship between the target application and an application list, the dual link indicating that 4G and 5G links are opened. The technical problem of high power consumption of 5G communication in the prior art is solved.

Owner:GREE ELECTRIC APPLIANCES INC

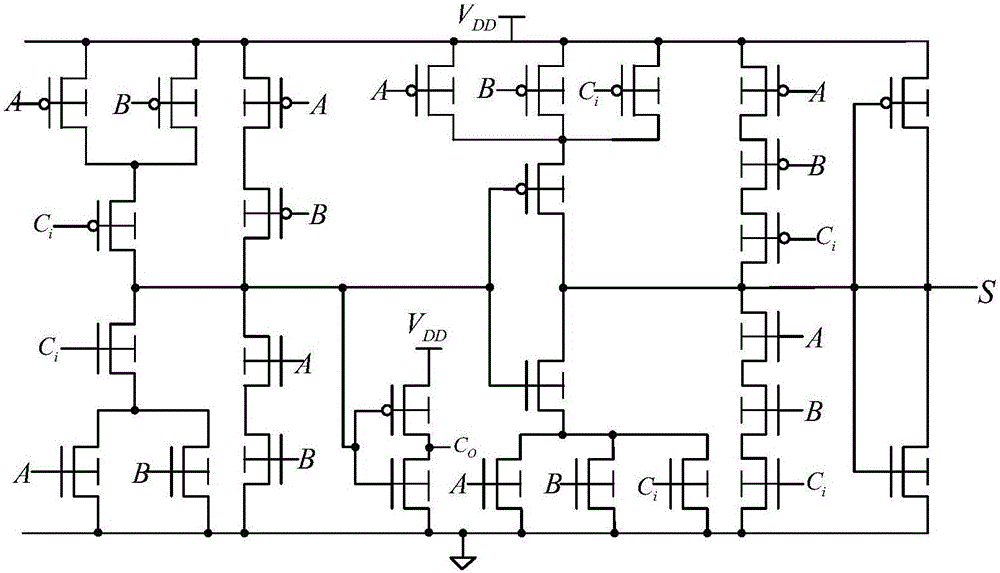

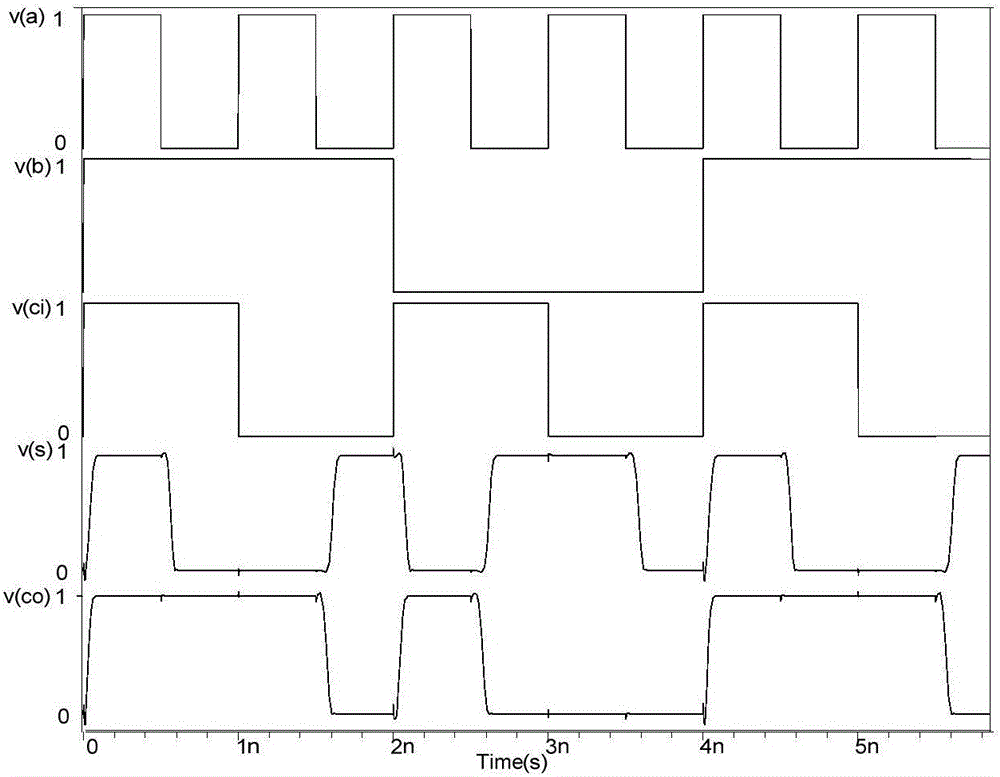

One-bit full adder based on Fin FETs

Active; CN105958997A; Reduce power consumption; Lower latency; Logic circuits characterised by logic function; Engineering; Time delayed

The invention discloses a one-bit full adder based on Fin FETs. The one-bit full adder comprises first to twentieth Fin FETs. The first, second, fifth, seventh, ninth, twelfth, thirteenth, fifteenth, seventeenth and nineteenth Fin FETs are P-type Fin FETs. The third, fourth, sixth, eighth, tenth, eleventh, fourteenth, sixteenth, eighteenth and twentieth Fin FETs are N-type Fin FETs. The one-bit full adder has low circuit area, time delay, power consumption and power-delay product.

Owner:NINGBO UNIV

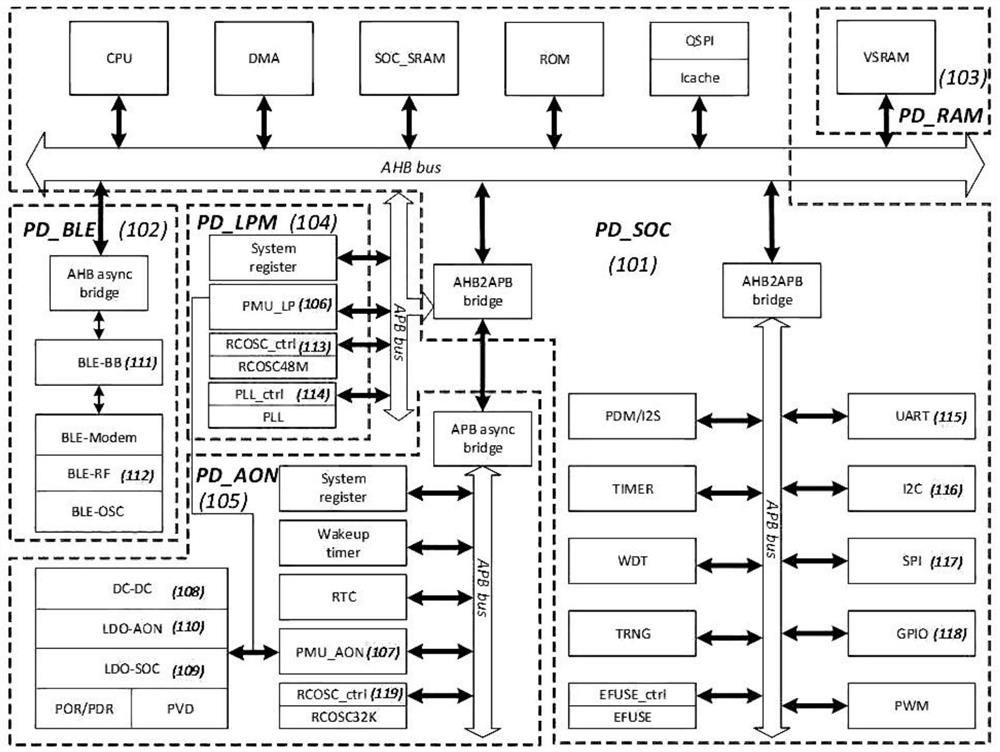

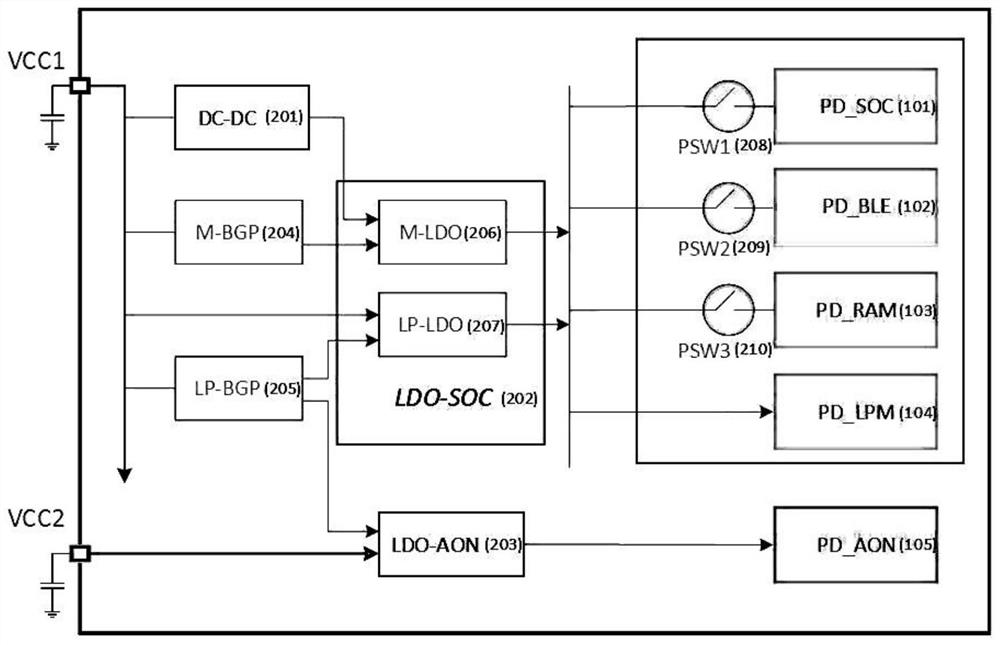

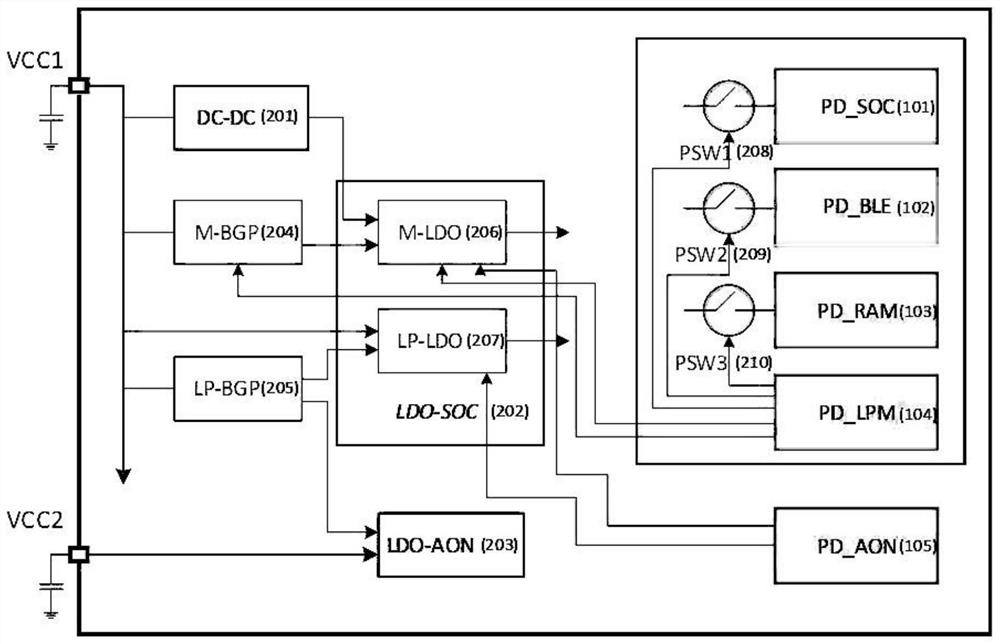

Low-power system and method of Internet of Things chip

Active; CN112235850A; Reduce precision; Low power supply capacity; Power management; Short range communication service; Power mode; Computer architecture

The invention relates to the technical field of Internet of Things chips, in particular to a low-power system and method for an Internet of Things chip. The system comprises a PD_SOC power domain for realizing the main functions of the system on chip; a PD_BLE power domain for realizing a low-power Bluetooth communication function; a PD_RAM power domain for realizing overall power-on and power-down, formed by combining a plurality of low-power modes such as retention and powerdown; a PD_LPM power domain for realizing global configuration, global clock reset and power consumption management; and a PD_AON power domain for realizing global configuration, the low-frequency clock, and global reset, wake-up and power supply/power consumption management of the always-on domain. A dual-power-supply switching scheme combines the low power draw of the low-power supply with the relatively high response speed, precision and supply capability of the main supply. With the two combined, the Internet of Things chip achieves both low power consumption and quick response in a low-power mode that requires quick response, which improves the reliability of the chip. This meets the demand for longer battery life in electronic products and promotes their wider application.

Owner:上海赛昉科技有限公司

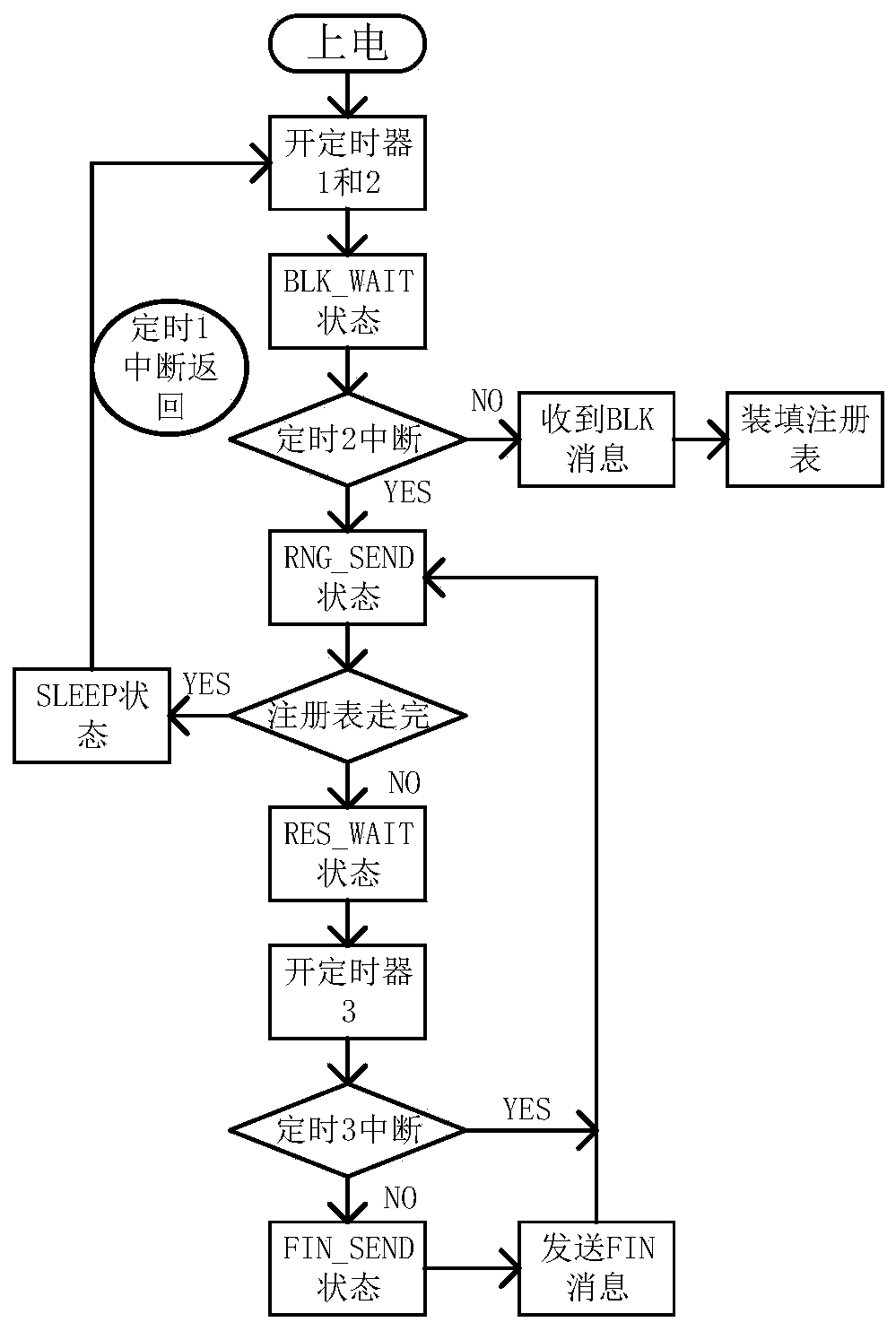

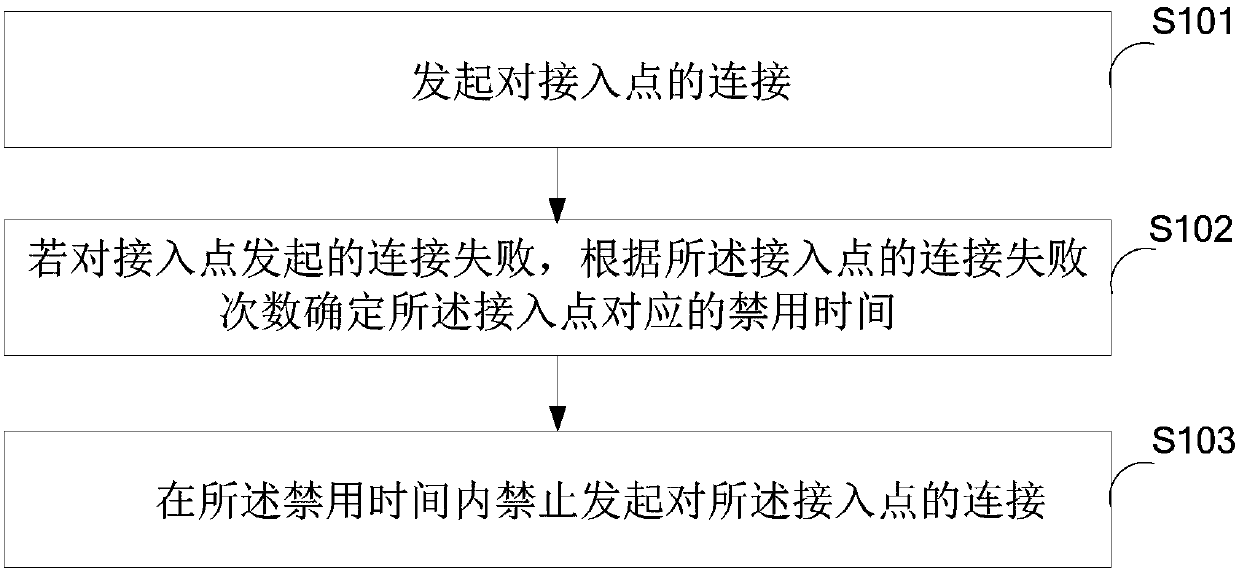

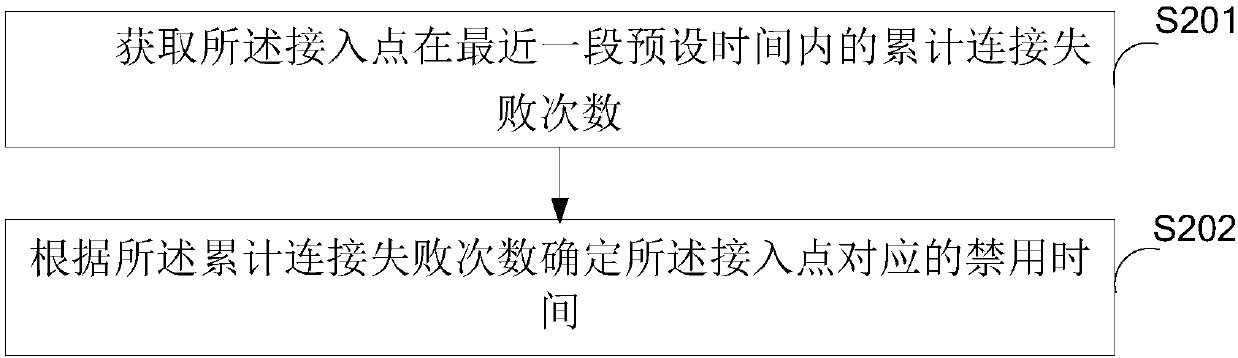

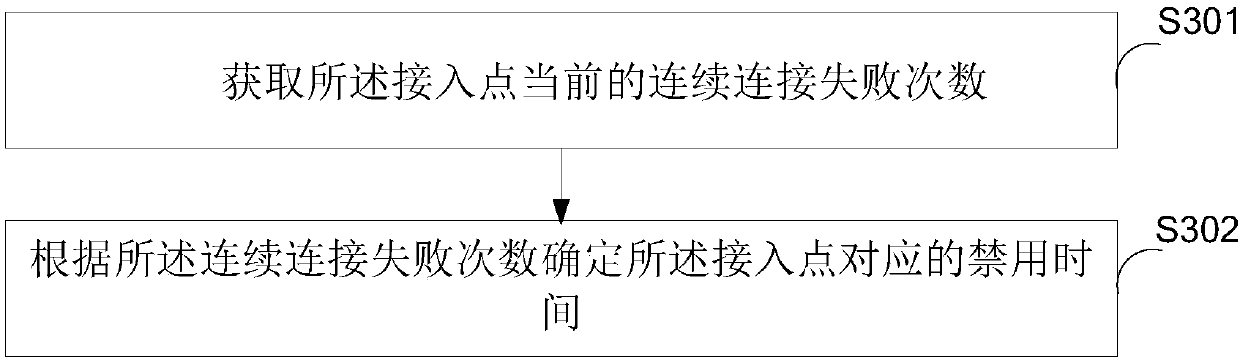

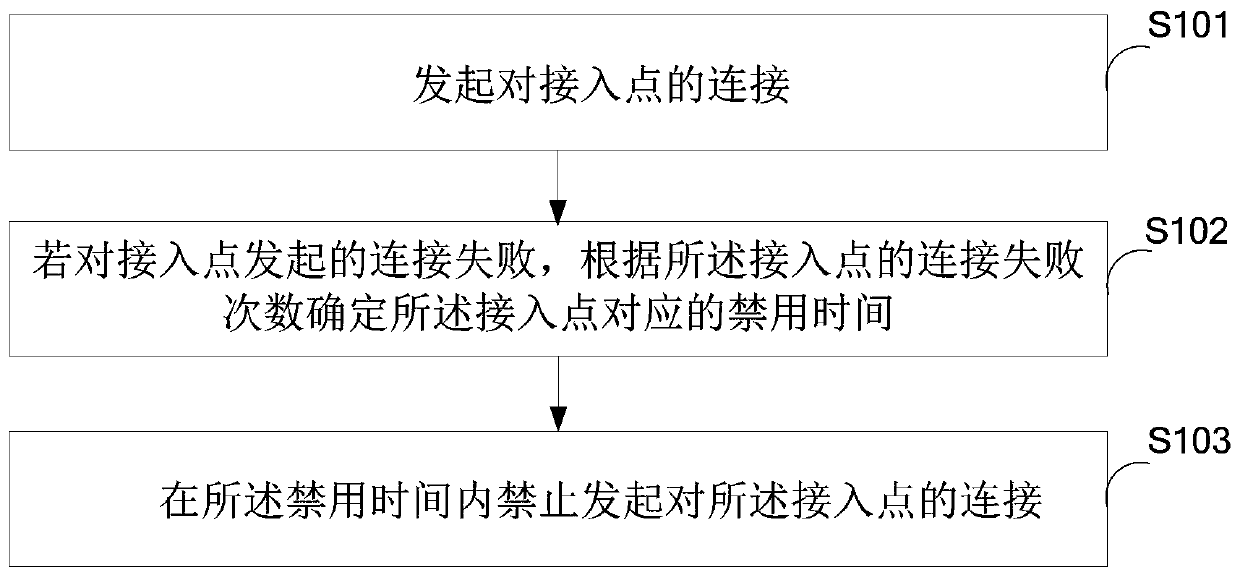

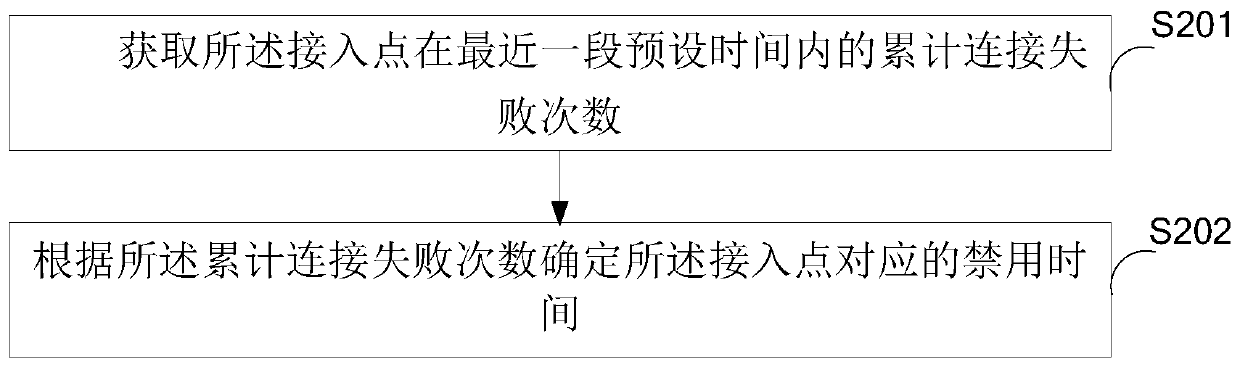

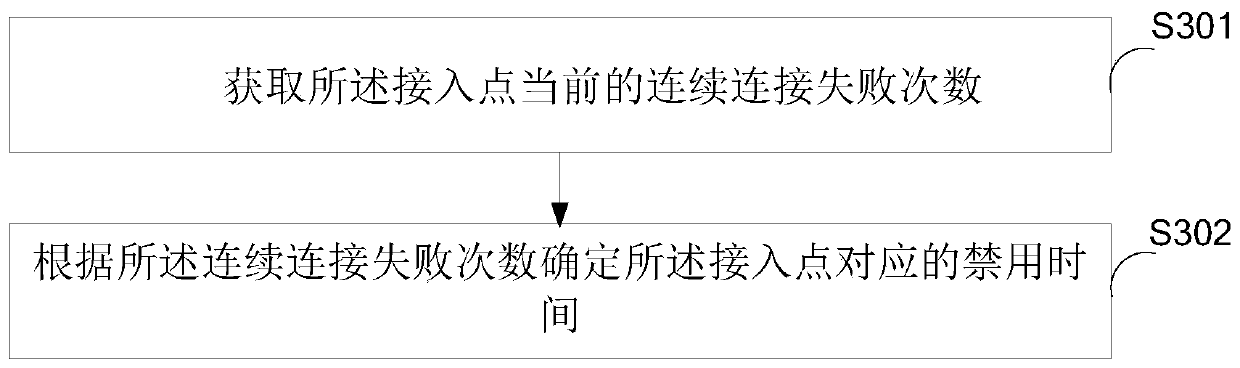

Control method and device for connection of access point

Active; CN107682909A; Avoid power consumption; Taking power consumption into consideration; Assess restriction; High level techniques; Time duration; Real-time computing

The invention is suitable for the technical field of communication, and provides a control method and device for the connection of an access point. The control method comprises the steps: initializing the connection of the access point; determining the forbidding time duration corresponding to the access point according to the number of connection failure times of the access point if the initialized connection of the access point fails, wherein the number of connection failure times is positively correlated with the forbidding time duration; and forbidding the connection of the access point in the forbidding time duration. Therefore, the method avoids the power consumption, caused by the repeated initialization of the connection, of a mobile terminal. The method achieves the balance between the connection speed and the power consumption, can guarantee the speed of automatic connection and also can give consideration to the power consumption.
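A forbidding duration that grows with the failure count can be sketched as a capped exponential backoff. The abstract only requires a positive correlation, so the doubling rule, the base of 5 seconds, the 300-second cap, resetting on success, and all names below are assumptions chosen for illustration.

```python
def forbid_duration_s(failures, base_s=5.0, cap_s=300.0):
    """Return how long to forbid reconnecting after `failures` consecutive failures."""
    if failures <= 0:
        return 0.0
    # Doubles with each failure, up to a cap (assumed shape of the correlation).
    return min(base_s * 2 ** (failures - 1), cap_s)


class AccessPointGate:
    """Tracks failures for one access point and blocks connection attempts."""

    def __init__(self):
        self.failures = 0
        self.forbidden_until = 0.0

    def on_connect_failed(self, now):
        self.failures += 1
        self.forbidden_until = now + forbid_duration_s(self.failures)

    def on_connect_succeeded(self):
        # Assumption: a successful connection clears the failure history.
        self.failures = 0
        self.forbidden_until = 0.0

    def may_connect(self, now):
        return now >= self.forbidden_until


if __name__ == "__main__":
    gate = AccessPointGate()
    gate.on_connect_failed(now=100.0)
    print(gate.may_connect(now=102.0), gate.may_connect(now=105.0))
```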

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD

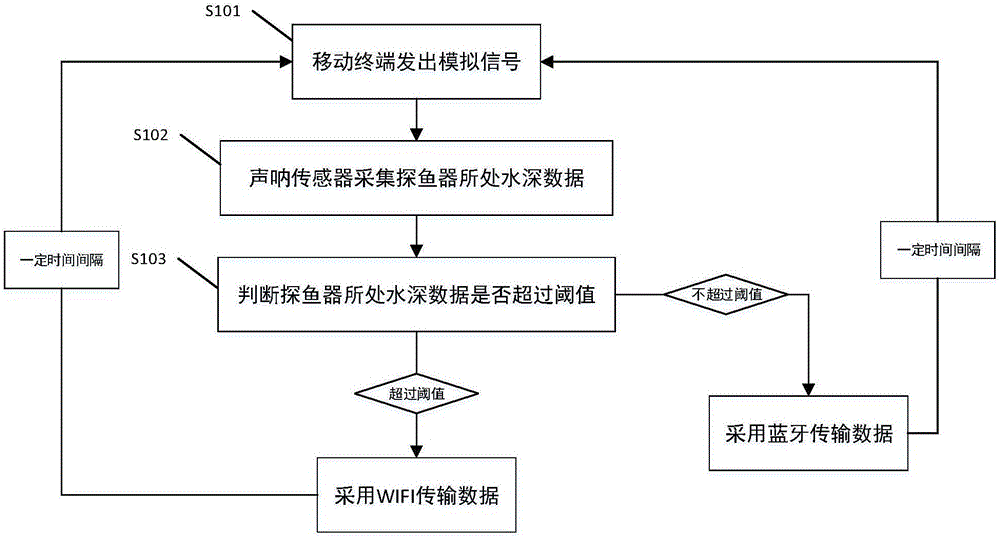

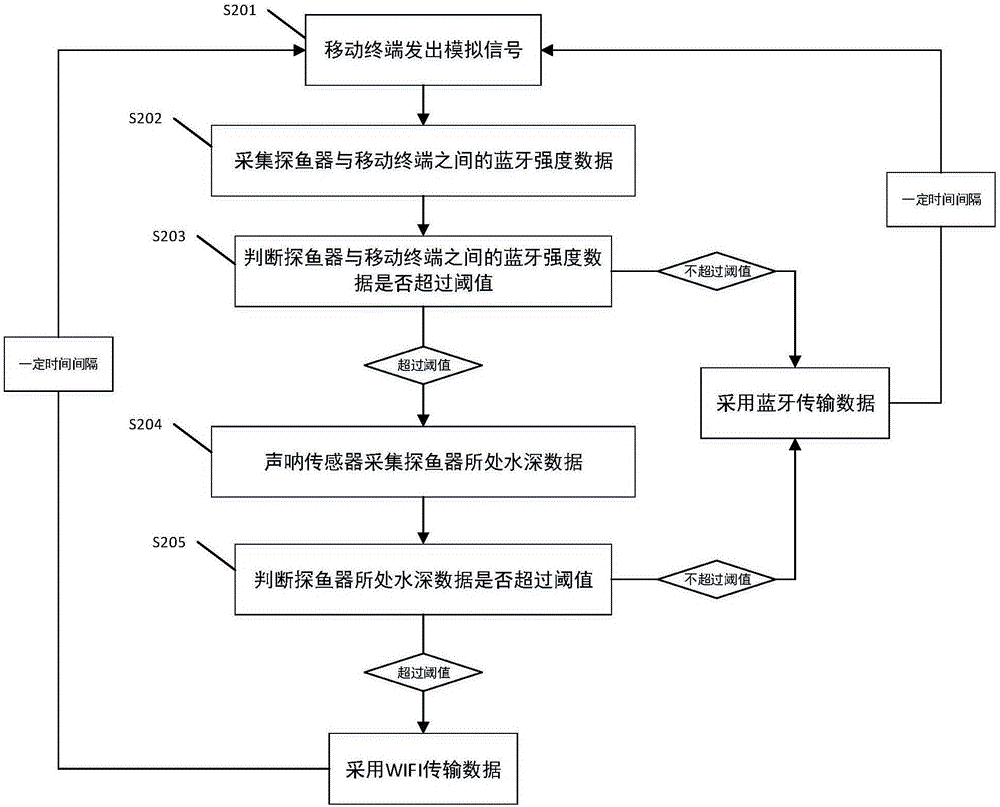

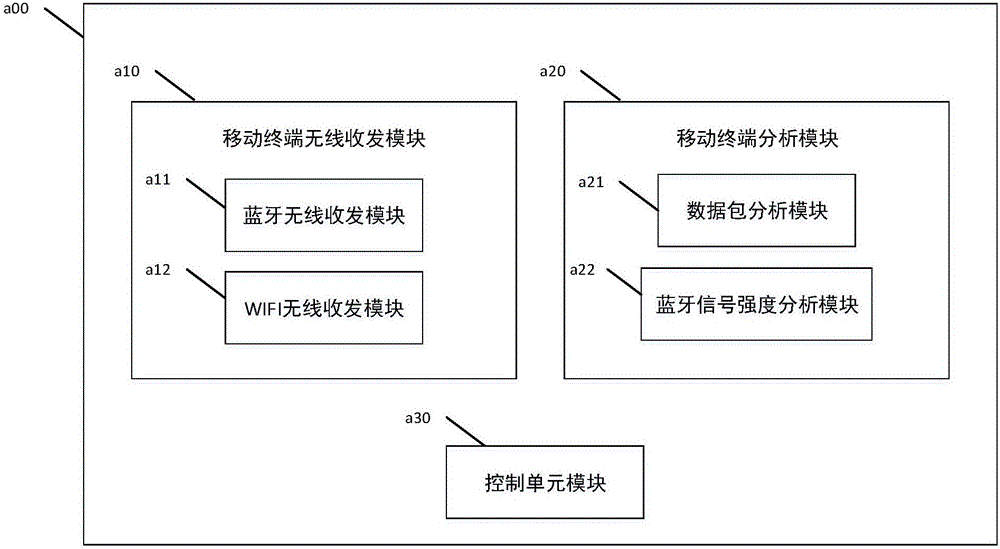

WIFI and bluetooth based double-channel fish finding method and system

Inactive; CN106569217A; Add applicable scenarios; Taking power consumption into consideration; Acoustic wave reradiation; Analog signal; Bluetooth

The invention discloses a WIFI and Bluetooth based double-channel fish finding method. It addresses two problems in the prior art: a fish-finding device with only a Bluetooth channel has a short effective transmission distance and a slow transmission speed, while a fish-finding device with only a WIFI channel has a long transmission distance and a high speed but consumes much power during data transmission and has a short working time. The method comprises the following steps: a mobile terminal sends out an analog signal; data on the water depth at which the fish-finding device is located and/or data on the Bluetooth strength between the fish-finding device and the mobile terminal are acquired; whether the water depth data or the Bluetooth strength data exceeds a threshold is judged; if either exceeds the threshold, WIFI is started to perform signal data transmission; and if neither exceeds the threshold, Bluetooth is started to perform signal data transmission.
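The channel choice can be sketched as follows. The threshold values, units and names are hypothetical. One interpretation is also assumed: for Bluetooth strength, "exceeds the threshold" is read as the signal being weaker than a minimum RSSI, since a weak link is what would motivate switching to WIFI; the abstract does not state the direction of that comparison.

```python
def choose_channel(depth_m=None, bt_rssi_dbm=None,
                   max_depth_m=10.0, min_rssi_dbm=-80.0):
    """Return "wifi" or "bluetooth" for the next data transmission.

    Either measurement may be None when it was not acquired.
    """
    depth_exceeded = depth_m is not None and depth_m > max_depth_m
    # Assumed reading: the Bluetooth link is out of range when RSSI is too low.
    rssi_exceeded = bt_rssi_dbm is not None and bt_rssi_dbm < min_rssi_dbm
    return "wifi" if depth_exceeded or rssi_exceeded else "bluetooth"


if __name__ == "__main__":
    print(choose_channel(depth_m=3.0, bt_rssi_dbm=-60.0))
    print(choose_channel(depth_m=15.0, bt_rssi_dbm=-60.0))
```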

Owner:深圳市众凌汇科技有限公司

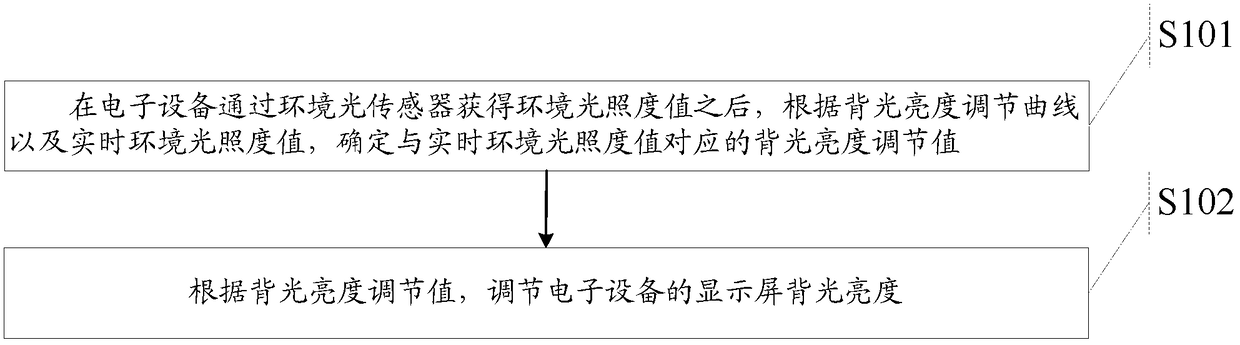

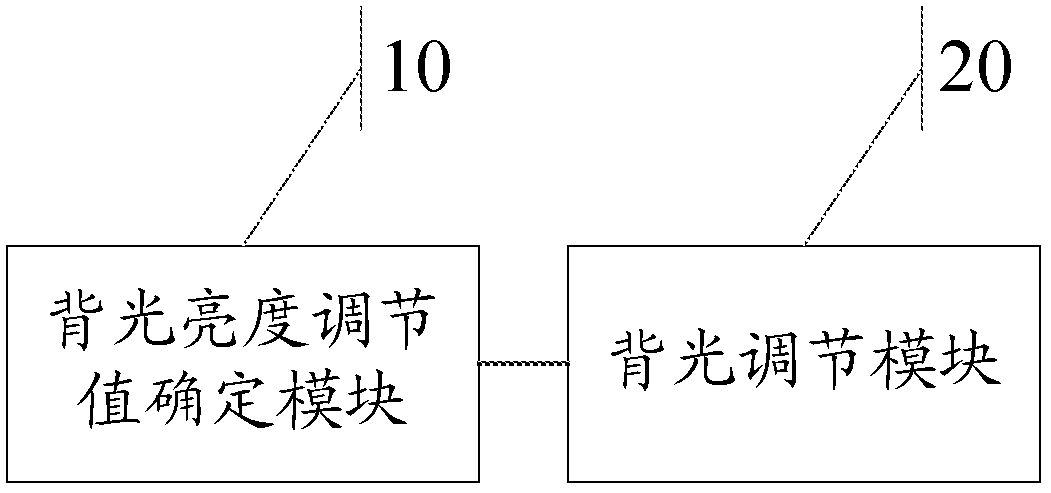

Method, device and electronic equipment for adjusting backlight brightness

Active; CN105453166B; Taking power consumption into consideration; Taking into account the visual experience of the human eye; Electrical apparatus; Static indicating devices; Illuminance; Light sensing

Provided are a method, device and electronic equipment for adjusting the brightness of a backlight. The method includes the following steps: after the ambient light intensity value is obtained by the ambient light sensor, determining the backlight brightness adjustment value corresponding to the ambient light intensity value according to the backlight brightness adjustment curve and the ambient light intensity value, wherein the backlight brightness adjustment curve is determined based on the brightness reference value and the corresponding relationship between the ambient light intensity and the backlight brightness; and adjusting the backlight brightness of the display screen of the electronic device according to the backlight brightness adjustment value. The method can adjust the backlight brightness of the display screen according to the ambient light intensity while taking into account both the power consumption of the electronic equipment and the user's visual experience.

Owner:HUAWEI DEVICE CO LTD

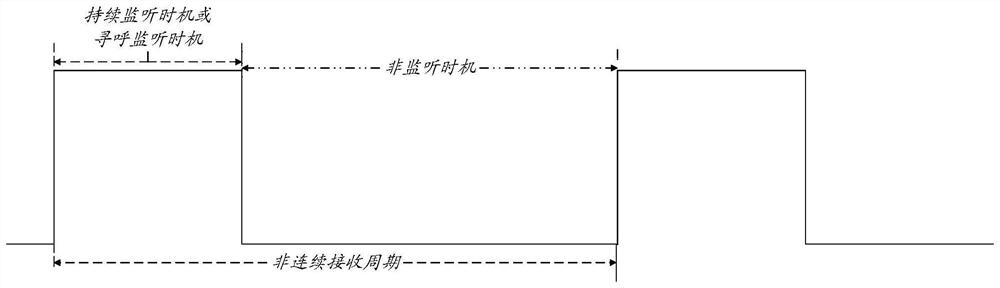

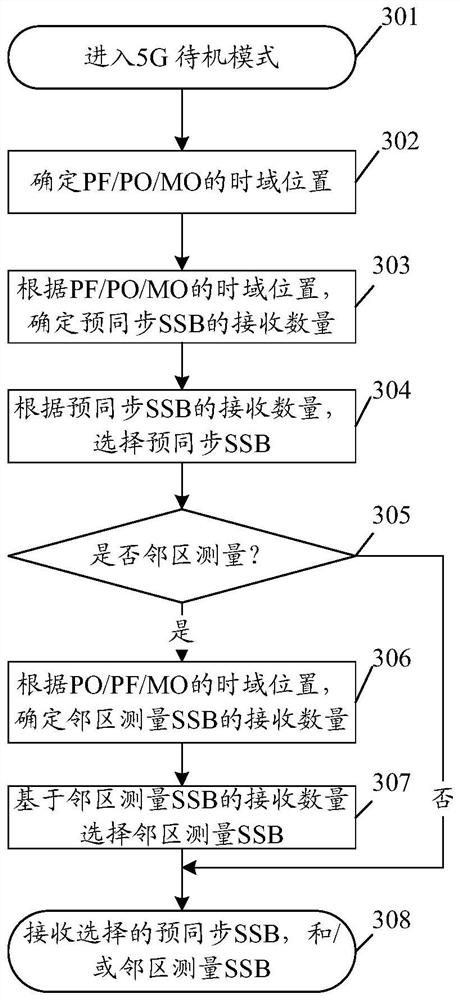

Method and device for receiving SSBs, equipment and storage medium

Pending; CN113992283A; Meet the quality; Meet needs; Power management; Synchronisation arrangement; Software engineering; Mechanical engineering

The embodiment of the invention provides a method for receiving SSBs. The method comprises the steps of: determining the receiving number of SSBs according to the channel quality and the working state; and receiving SSBs based on the reception number. The embodiment of the invention further provides a communication device, communication equipment and a storage medium.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD

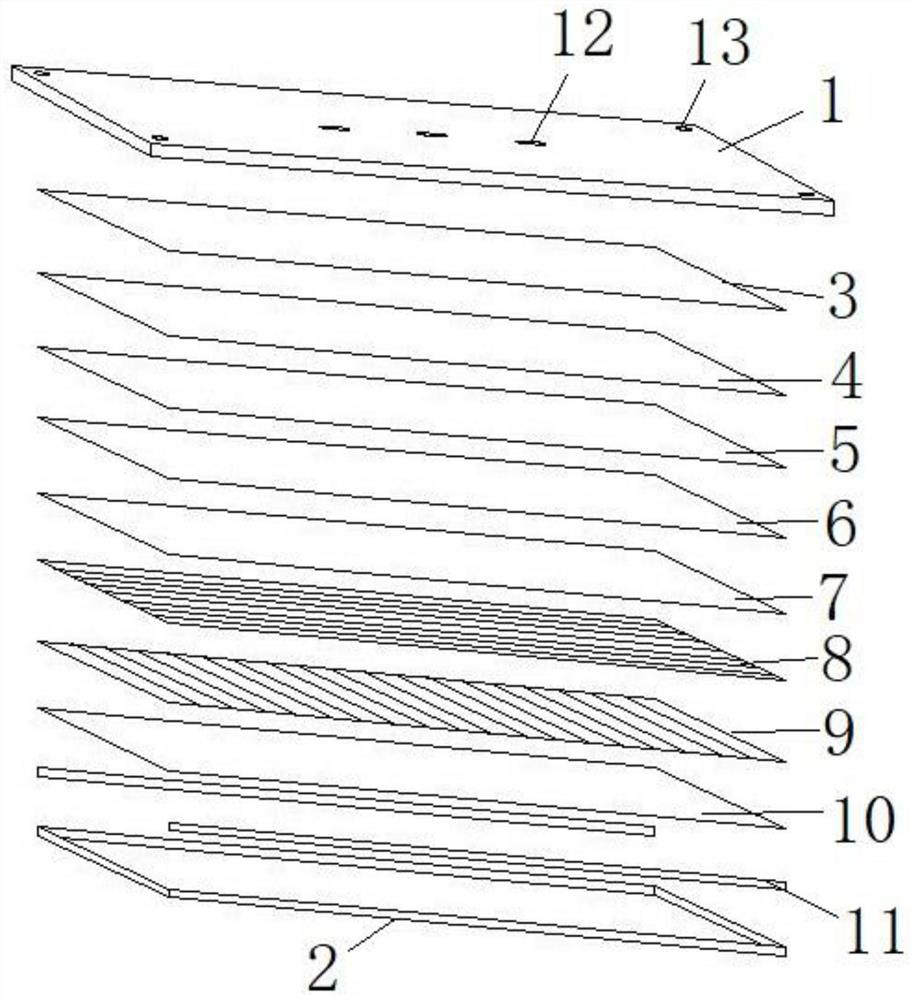

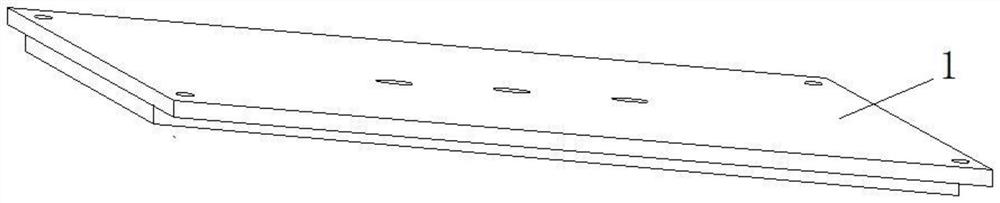

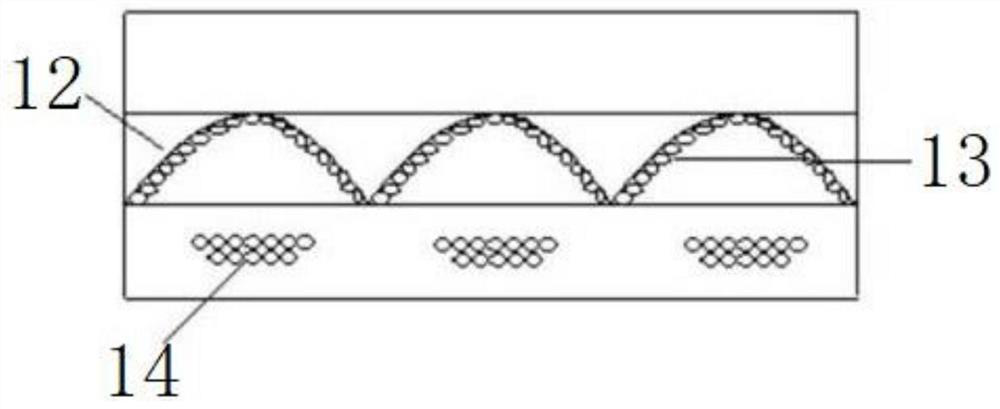

LED lamp for classroom

Pending; CN112283621A; Taking power consumption into consideration; Uniform light; Semiconductor devices for light sources; Refractors; Light guide; Engineering

The LED lamp comprises a mounting plate and an LED lamp assembly. The LED lamp assembly comprises a lamp frame, a lightproof plate, a reflecting film, a light guide plate, a diffusion film and a prismatic crystal plate, wherein the lightproof plate, the reflecting film, the light guide plate, the diffusion film and the prismatic crystal plate are arranged on the inner side of the lamp frame in sequence, and an LED light source emitting light towards the light guide plate is arranged on the side face of the light guide plate; the glare control layer is provided with a hollow hole, a hollow layer is arranged in the light guide plate, and reflection supporting mechanisms which are used for guiding light emitted by the LED light source to the light outlet face of the light guide plate and can limit and support the upper end face and the lower end face of the hollow layer are arranged in the hollow layer. Through arrangement of the glare control layer, illumination of the LED classroom lamp is more uniform, and the anti-dazzling effect is better; the reflection support mechanism in the hollow layer of the light guide plate gives consideration to the power consumption of the light source and the uniform light generation effect.

Owner:安徽省富鑫雅光电科技有限公司

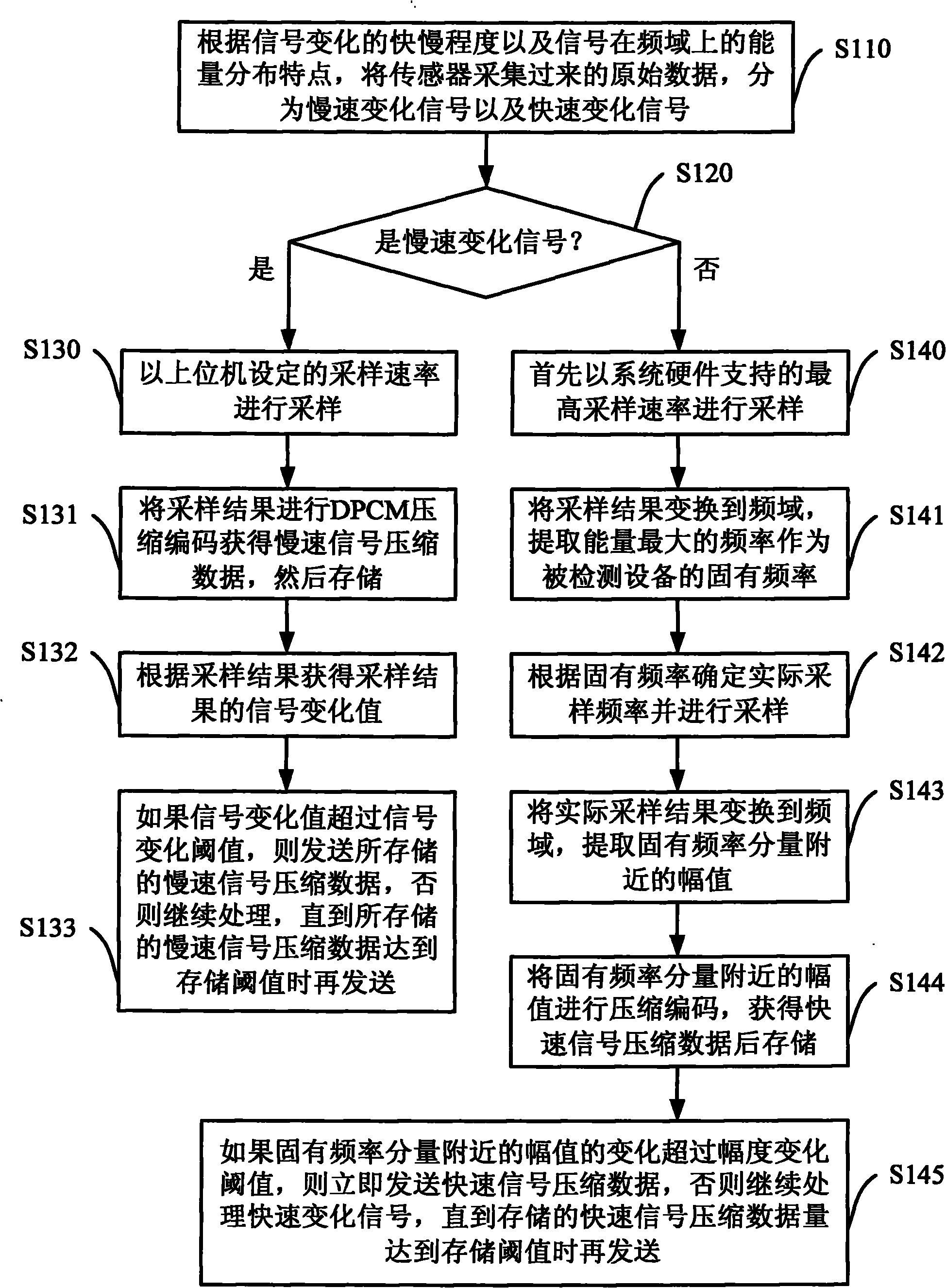

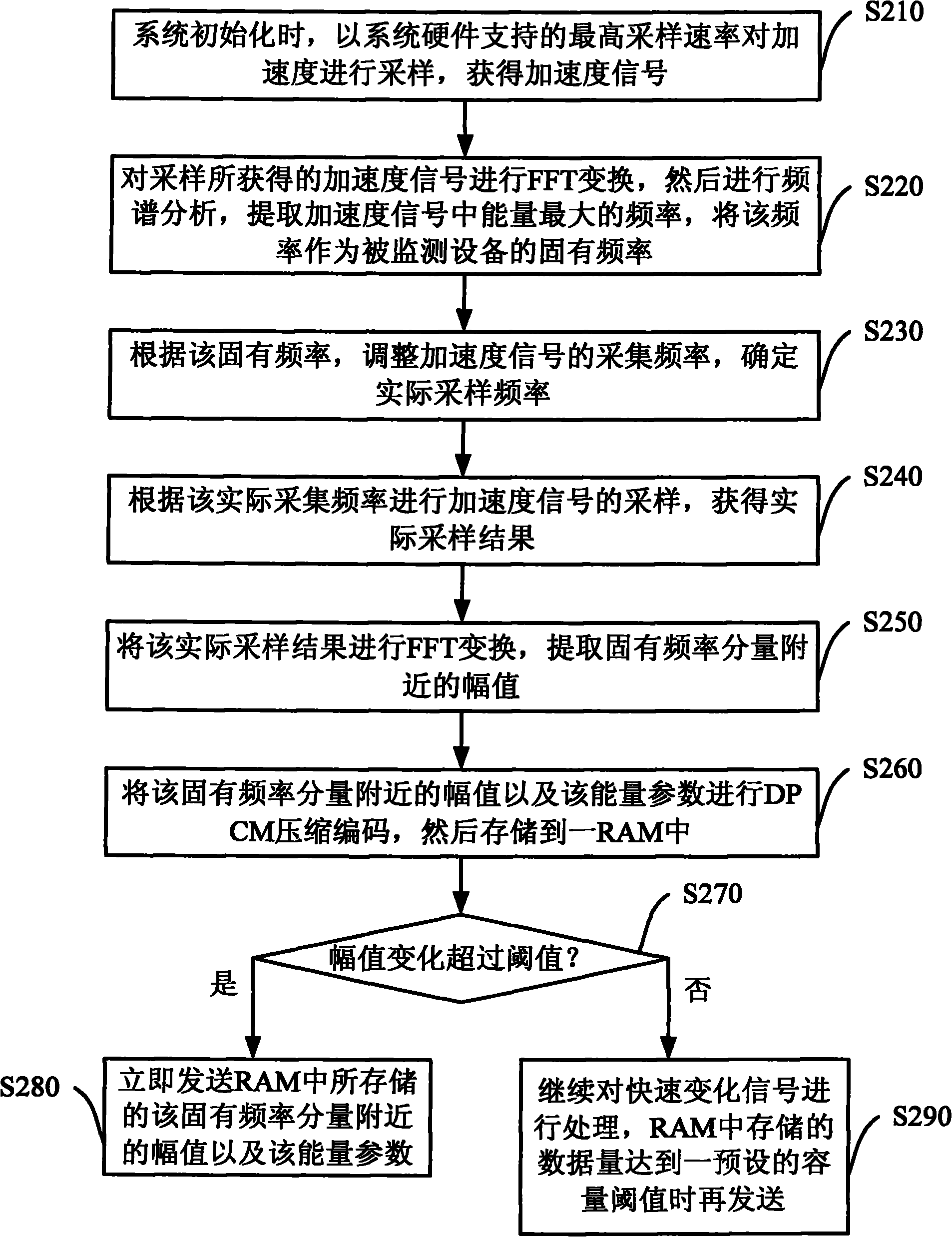

Data transmission method for industrial detection system

Active | CN101893865B | Guaranteed real-time | Taking power consumption into consideration | Programme control | Energy efficient ICT | Low speed | Original data

The invention discloses a data transmission method for an industrial detection system that efficiently transmits acquired data to a host computer. The method comprises the following steps: dividing the initial data acquired by a sensor into a low-speed change signal and a high-speed change signal; for the low-speed change signal, transmitting it immediately if the change in the sampling result exceeds a preset signal change threshold; and for the high-speed change signal, determining the natural frequency of the detection equipment, converting the sampling result to the frequency domain for analysis, extracting the amplitude near the natural frequency component, and, if the change in that amplitude exceeds a preset amplitude change threshold, immediately transmitting the stored compressed high-speed signal data. The method keeps the reporting of data acquired by a sensor network real-time, offers advantages in both power consumption and transmission efficiency, and is particularly suitable for low-power wireless sensor networks.

Owner:BEIJING LOIT TECH
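The two transmit decisions described in this abstract can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the patented implementation: the function names, the single-bin DFT used to estimate the amplitude at the natural frequency, and all thresholds are hypothetical, and the compression of the stored high-speed data is not modelled.

```python
import cmath
import math


def amplitude_at(samples, sample_rate_hz, freq_hz):
    """Estimate the amplitude of the component at freq_hz with a single-bin DFT.

    Assumption: one bin at the natural frequency stands in for the
    "amplitude near the natural frequency component" of the abstract.
    """
    n = len(samples)
    acc = sum(
        x * cmath.exp(-2j * math.pi * freq_hz * k / sample_rate_hz)
        for k, x in enumerate(samples)
    )
    return 2.0 * abs(acc) / n


def should_send_low_speed(previous, current, change_threshold):
    """Low-speed signal: report when the sample changed by more than the threshold."""
    return abs(current - previous) > change_threshold


def should_send_high_speed(prev_amplitude, window, sample_rate_hz,
                           natural_freq_hz, amplitude_threshold):
    """High-speed signal: report when the amplitude at the natural frequency
    changed by more than the threshold. Returns (send, new_amplitude)."""
    amplitude = amplitude_at(window, sample_rate_hz, natural_freq_hz)
    return abs(amplitude - prev_amplitude) > amplitude_threshold, amplitude
```

In use, a node would keep the last reported value (or amplitude) per signal and transmit only when the corresponding function returns true, which is where the power saving in a wireless sensor network would come from.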

A control method and device for connecting an access point

Inactive | CN107682909B | Avoid power consumption | Taking power consumption into consideration | Access restriction | High level techniques | Computer network | Engineering

The invention belongs to the technical field of communication and provides a control method and device for connecting to an access point. The control method comprises the steps of: initiating a connection to the access point; if the initiated connection fails, determining a forbidding duration for the access point according to its number of connection failures, the forbidding duration being positively correlated with the number of failures; and forbidding connection to the access point for the forbidding duration. The method thereby avoids the power a mobile terminal would consume by repeatedly initiating the connection. It balances connection speed against power consumption: automatic connection stays fast while power consumption is kept in check.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD
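A minimal sketch of the failure-count-based forbidding logic, under assumptions: the abstract only states that the forbidding duration is positively correlated with the number of failures, so the doubling rule, the 5-second base, the 300-second cap and the `AccessPointGate` class are all hypothetical choices for illustration.

```python
def forbid_duration_s(failure_count, base_s=5.0, max_s=300.0):
    """Forbidding duration that grows with the failure count.

    Assumption: doubling per failure, capped at max_s. The abstract does not
    specify the mapping, only that more failures mean a longer duration.
    """
    if failure_count <= 0:
        return 0.0
    return min(base_s * 2 ** (failure_count - 1), max_s)


class AccessPointGate:
    """Tracks failures per access point and blocks reconnect attempts."""

    def __init__(self):
        self.failures = {}       # ap_id -> number of failed connection attempts
        self.blocked_until = {}  # ap_id -> time (seconds) when attempts may resume

    def may_connect(self, ap_id, now):
        return now >= self.blocked_until.get(ap_id, 0.0)

    def record_failure(self, ap_id, now):
        count = self.failures.get(ap_id, 0) + 1
        self.failures[ap_id] = count
        self.blocked_until[ap_id] = now + forbid_duration_s(count)

    def record_success(self, ap_id):
        # Assumption: a successful connection clears the failure history.
        self.failures.pop(ap_id, None)
        self.blocked_until.pop(ap_id, None)
```

The caller would check `may_connect` before each automatic attempt and skip the access point while it is forbidden, so an unreachable access point costs progressively fewer attempts.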

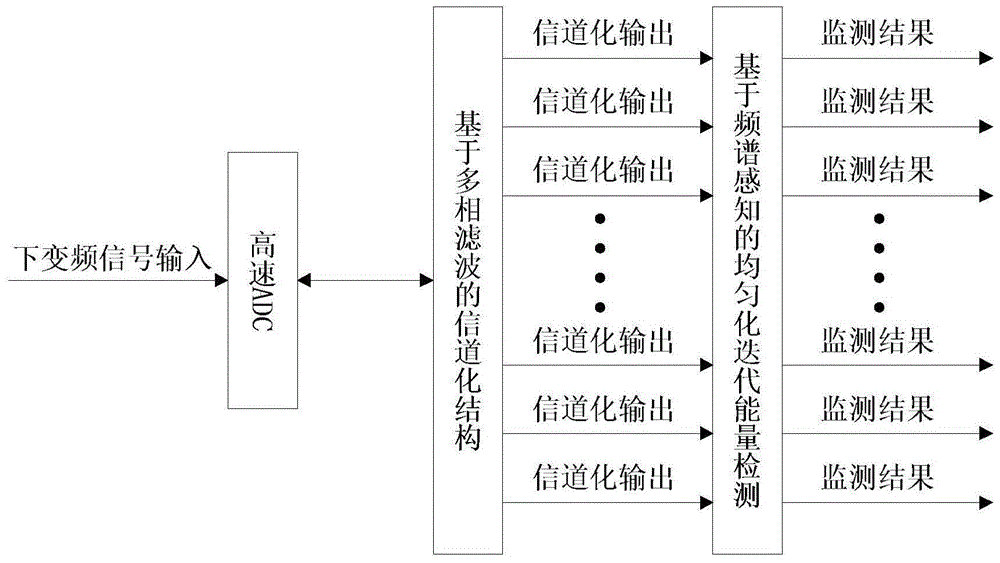

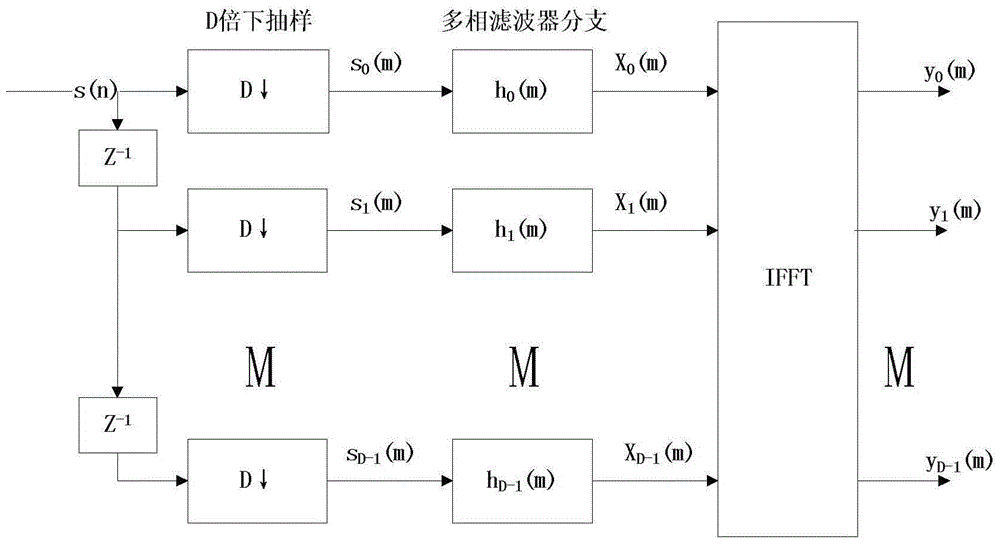

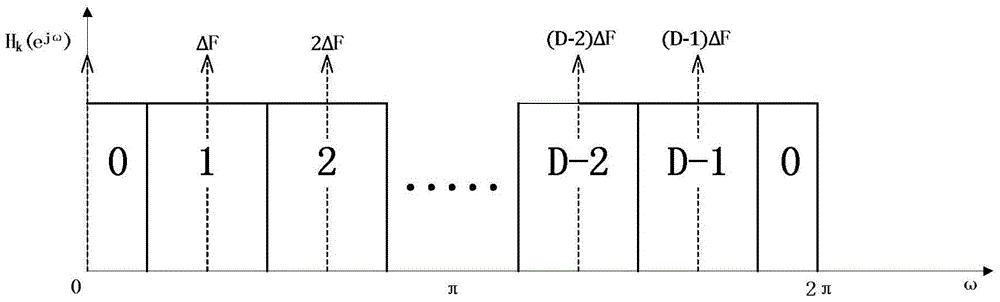

A Channel Monitoring System Based on Channelized Spectrum Sensing

Active | CN104901754B | Easy to implement | Taking power consumption into consideration | Transmission monitoring | Frequency spectrum | Monitoring system

The invention discloses a channel monitoring system based on channelized spectrum sensing. The system comprises a high-speed ADC sampling module, a channelization structure and a uniformization iteration energy detection module. The high-speed ADC sampling module performs AD sampling and analog-to-digital conversion to obtain digital complex baseband signals. The channelization structure determines the AD sampling frequency and feeds it back to the high-speed ADC sampling module; it also divides the channels into D channelization branches using a traditional Fourier-domain lowpass prototype filter with polyphase filtering, and sends the to-be-monitored channel information of the digital complex baseband signals into the corresponding channelization branches for monitoring output. The uniformization iteration energy detection module performs energy detection on the outputs of the channelization structure according to the uniformization iteration energy detection algorithm, thereby estimating the noise power of each channelization branch. The system is easy to implement, places low demands on hardware, and monitors channels reliably and robustly.

Owner:CHINESE AERONAUTICAL RADIO ELECTRONICS RES INST

A Fixed Bit Width Multiplier with High Accuracy and Low Energy Consumption

Inactive | CN105183424B | High precision | Reduce power consumption | Digital data processing details | Partial product | Computer science

Owner:UNIV OF ELECTRONICS SCI & TECH OF CHINA

Method, device and mobile terminal for dynamically adjusting energy-saving level of terminal

Active | CN106933326B | Reduce power consumption | Taking power consumption into consideration | Power management | Digital data processing details | Application Identifier | Level set

The embodiment of the invention discloses a method, a device and a mobile terminal for dynamically adjusting the energy-saving level of a terminal. The method includes: obtaining the application identifier of the currently displayed application program; querying a preset first whitelist according to the application identifier to determine the first energy-saving level corresponding to the application program, and acquiring the display effect parameter corresponding to the first energy-saving level; and setting the energy-saving level of the terminal according to the first energy-saving level, and processing the image to be displayed according to that display effect parameter. The embodiment can thus dynamically adjust the power consumption of the terminal according to the application scenario, reducing power consumption while preserving the display effect and prolonging the terminal's battery life.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD
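The whitelist lookup in this abstract could look roughly like the sketch below. Everything concrete here is an assumption made for illustration: the application identifiers, the level numbers, the `DisplayEffect` fields, the brightness-scaling step, and the fallback level for applications that are not on the whitelist (the abstract does not say what happens in that case).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisplayEffect:
    """Hypothetical display effect parameter tied to an energy-saving level."""
    brightness_scale: float


# Hypothetical first whitelist: application identifier -> energy-saving level.
FIRST_WHITELIST = {"com.example.reader": 2, "com.example.video": 1}

# Hypothetical display effect parameter for each energy-saving level.
EFFECT_BY_LEVEL = {
    0: DisplayEffect(brightness_scale=1.0),
    1: DisplayEffect(brightness_scale=0.75),
    2: DisplayEffect(brightness_scale=0.5),
}

DEFAULT_LEVEL = 0  # assumption: unlisted applications get no energy saving


def select_energy_saving(app_id):
    """Return (level, display effect) for the currently displayed application."""
    level = FIRST_WHITELIST.get(app_id, DEFAULT_LEVEL)
    return level, EFFECT_BY_LEVEL[level]


def process_image(pixels, effect):
    """Apply the display effect to 8-bit grayscale pixel values."""
    return [min(255, round(p * effect.brightness_scale)) for p in pixels]
```

A text-heavy application can tolerate a more aggressive level than a video player, which is the kind of per-application trade-off between power and display effect the abstract describes.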

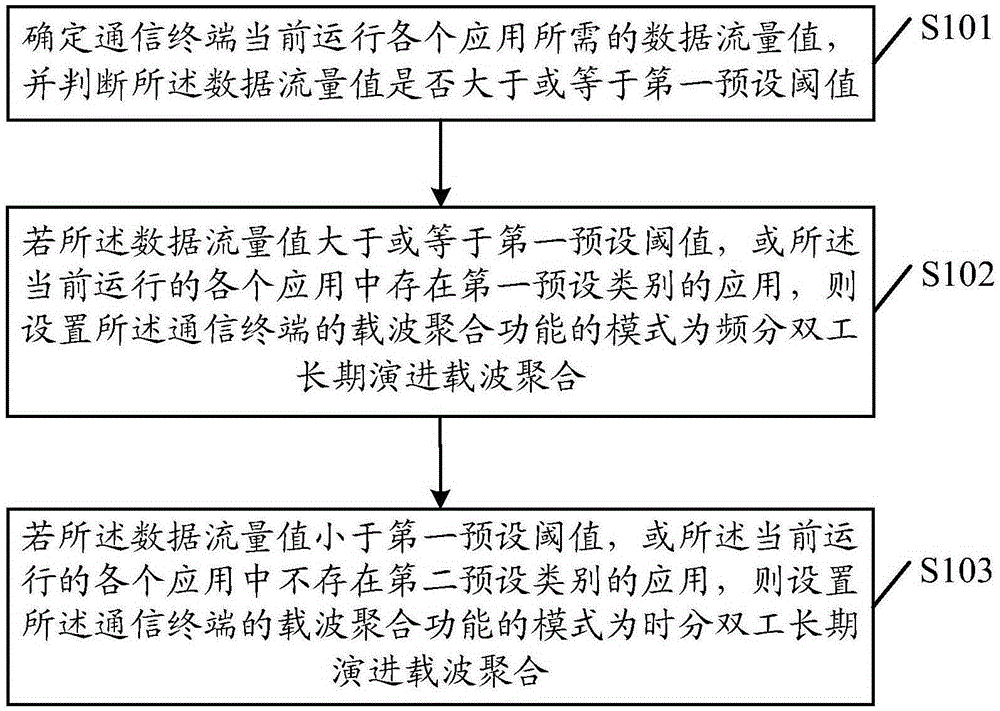

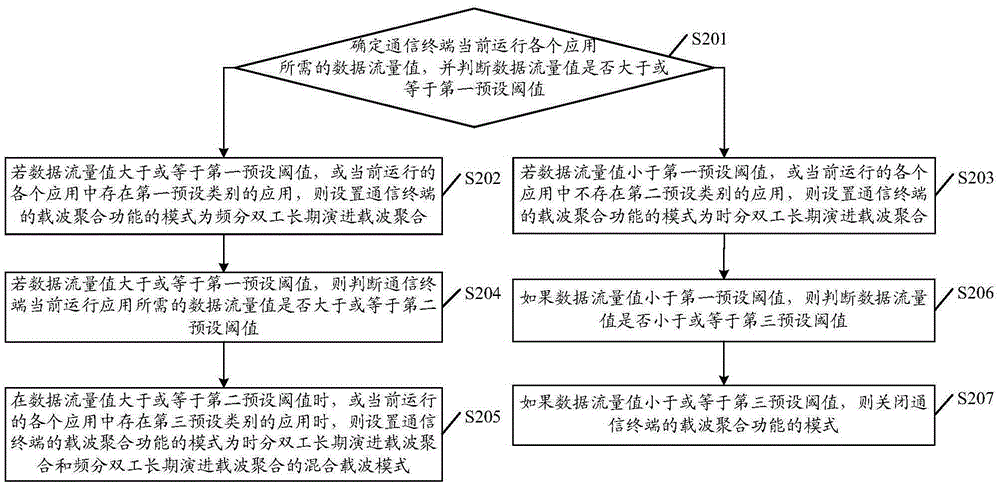

Carrier aggregation mode setting method for communication terminal and communication terminal

Active | CN105429669A | Taking power consumption into consideration | Realize reasonable settings | Power management | High level techniques | Traffic capacity | Carrier signal

Embodiments of the invention disclose a carrier aggregation mode setting method for a communication terminal, and the communication terminal. The method comprises the following steps: judging whether a data flow value is greater than or equal to a first preset threshold; if the data flow value is greater than or equal to the first preset threshold, or if an application of a preset category is among the currently running applications, setting the mode of the carrier aggregation (CA) function to frequency division duplex long-term evolution (FDD-LTE) carrier aggregation; otherwise, setting the mode of the CA function of the communication terminal to time division duplex long-term evolution (TDD-LTE) carrier aggregation. The CA mode is thus set according to the categories of the applications currently running on the terminal and the data flow values those applications require, so that the terminal's power consumption is taken into account while its data flow requirements are met, and the CA function is set reasonably.

Owner:GUANGDONG OPPO MOBILE TELECOMM CORP LTD
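The mode decision in this abstract reduces to a single condition, sketched below. The function name, the mode constants, the category labels and the numeric values in the usage note are hypothetical; only the decision rule itself (threshold reached, or a preset-category application running) is taken from the abstract.

```python
FDD_LTE_CA = "FDD-LTE carrier aggregation"
TDD_LTE_CA = "TDD-LTE carrier aggregation"


def select_ca_mode(data_flow_value, running_app_categories,
                   first_threshold, preset_categories):
    """Pick the carrier aggregation mode.

    FDD-LTE CA is chosen when the data flow value reaches the first preset
    threshold, or when any running application belongs to a preset category;
    otherwise TDD-LTE CA is chosen.
    """
    has_preset_app = bool(set(preset_categories) & set(running_app_categories))
    if data_flow_value >= first_threshold or has_preset_app:
        return FDD_LTE_CA
    return TDD_LTE_CA
```

For example, with a hypothetical threshold of 10 and preset category `"video"`, a terminal running only a chat application with a data flow value of 2 would be set to TDD-LTE CA, while starting a video application would switch it to FDD-LTE CA.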

