61 results about "Hamming space" patented technology

In statistics and coding theory, a Hamming space is usually the set of all 2ᴺ binary strings of length N. It is used in the theory of coding signals and transmission. More generally, a Hamming space can be defined over any alphabet (set) Q as the set of words of a fixed length N with letters from Q. If Q is a finite field, then a Hamming space over Q is an N-dimensional vector space over Q. In the typical, binary case, the field is thus GF(2) (also denoted by Z₂).
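As a minimal sketch of this definition, the following Python enumerates a small Hamming space and computes the Hamming distance between two words. The function names `hamming_space` and `hamming_distance` are illustrative choices, not taken from any of the patents listed here.

```python
from itertools import product


def hamming_space(n, alphabet=(0, 1)):
    """Return every word of length n over the alphabet, each as a tuple."""
    return list(product(alphabet, repeat=n))


def hamming_distance(u, v):
    """Count the positions at which two equal-length words differ."""
    if len(u) != len(v):
        raise ValueError("words must have the same length")
    return sum(a != b for a, b in zip(u, v))


space = hamming_space(3)
print(len(space))                              # 8, i.e. 2**3 binary strings
print(hamming_distance((0, 1, 1), (1, 1, 0)))  # 2
```

Passing a different alphabet, for example `hamming_space(2, "abc")`, gives the more general case of words over an arbitrary set Q.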

Sparse dimension reduction-based spectral hash indexing method

Inactive | CN101894130A | Improve interpretability | Improve search efficiency | Character and pattern recognition | Special data processing applications | Search problem | Principal component analysis

The invention discloses a spectral hash indexing method based on sparse dimension reduction, which comprises the following steps: 1) extracting low-level image features from an original image by using the SIFT method; 2) clustering the low-level image features by using the K-means method, and using each cluster center as a visual word; 3) reducing the dimensions of the visual word vectors directly by using a sparse component analysis method, making the vectors sparse; 4) solving for a Euclidean-to-Hamming space mapping function by using the characteristic equation and characteristic roots of a weighted Laplace-Beltrami operator so as to obtain a low-dimensional Hamming space vector; and 5) for an image to be searched, calculating the Hamming distance between the image to be searched and the original image in the low-dimensional Hamming space and using the Hamming distance as the image similarity result. Because the invention adopts sparse dimension reduction instead of the principal component analysis dimension reduction used in spectral hashing, the interpretability of the result is improved; and because the search problem in Euclidean space is mapped into Hamming space, the search efficiency is improved.

Owner:ZHEJIANG UNIV

Image retrieval method based on variable-length depth hash learning

Inactive | CN105512273A | Achieving joint optimization | Guaranteed retrieval efficiency | Character and pattern recognition | Neural architectures | Nerve network | Hash function

The invention discloses an image retrieval method based on variable-length deep hash learning and mainly relates to the fields of image retrieval and deep learning. The method models the learning of hash codes as a similarity learning process. Specifically, the method uses training images to generate a batch of image triplets, each comprising two images with the same label and one image with a different label. The goal of model training is to maximize the margin between matched image pairs and unmatched image pairs in Hamming space. A deep convolutional neural network is introduced into the learning part of the method, so that the image features and hash functions are optimized jointly and training is end to end. The bits of the hash codes output by the convolutional network carry different weights. For different retrieval tasks, a user can adjust the length of the hash codes by truncating unimportant bits; at the same time, the method effectively preserves the discriminative power of the hash codes even when they are short.

Owner:SUN YAT SEN UNIV

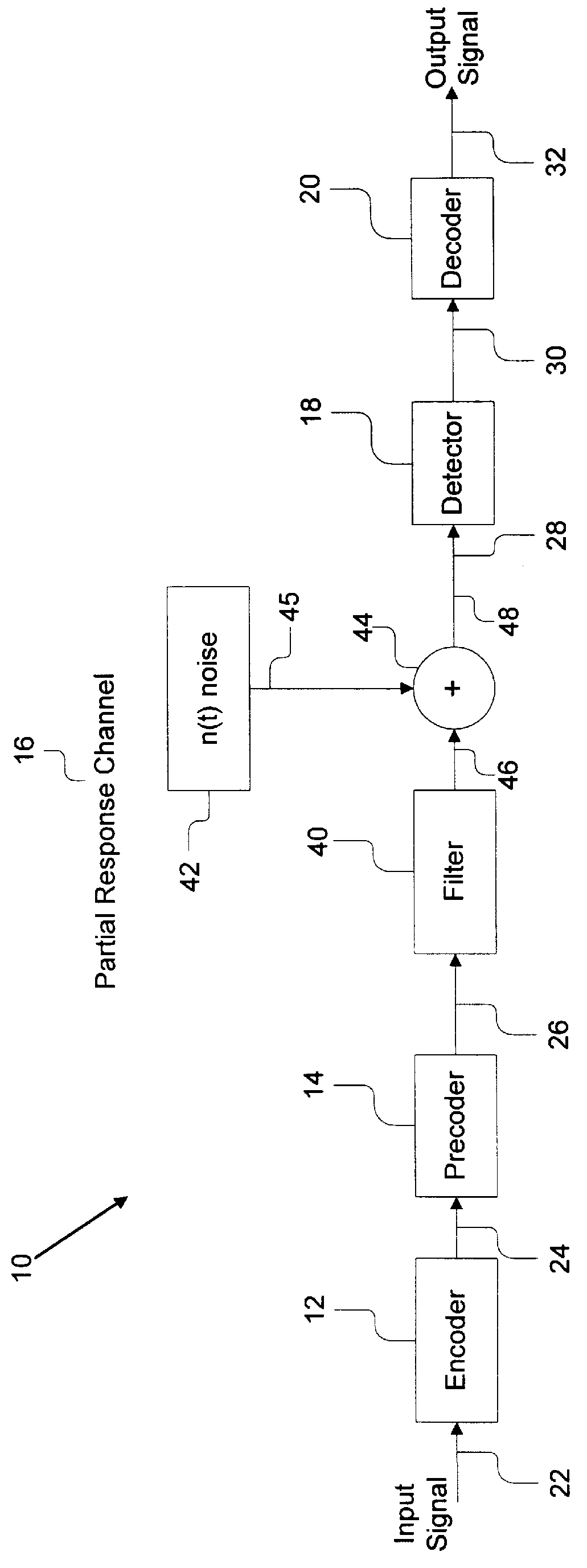

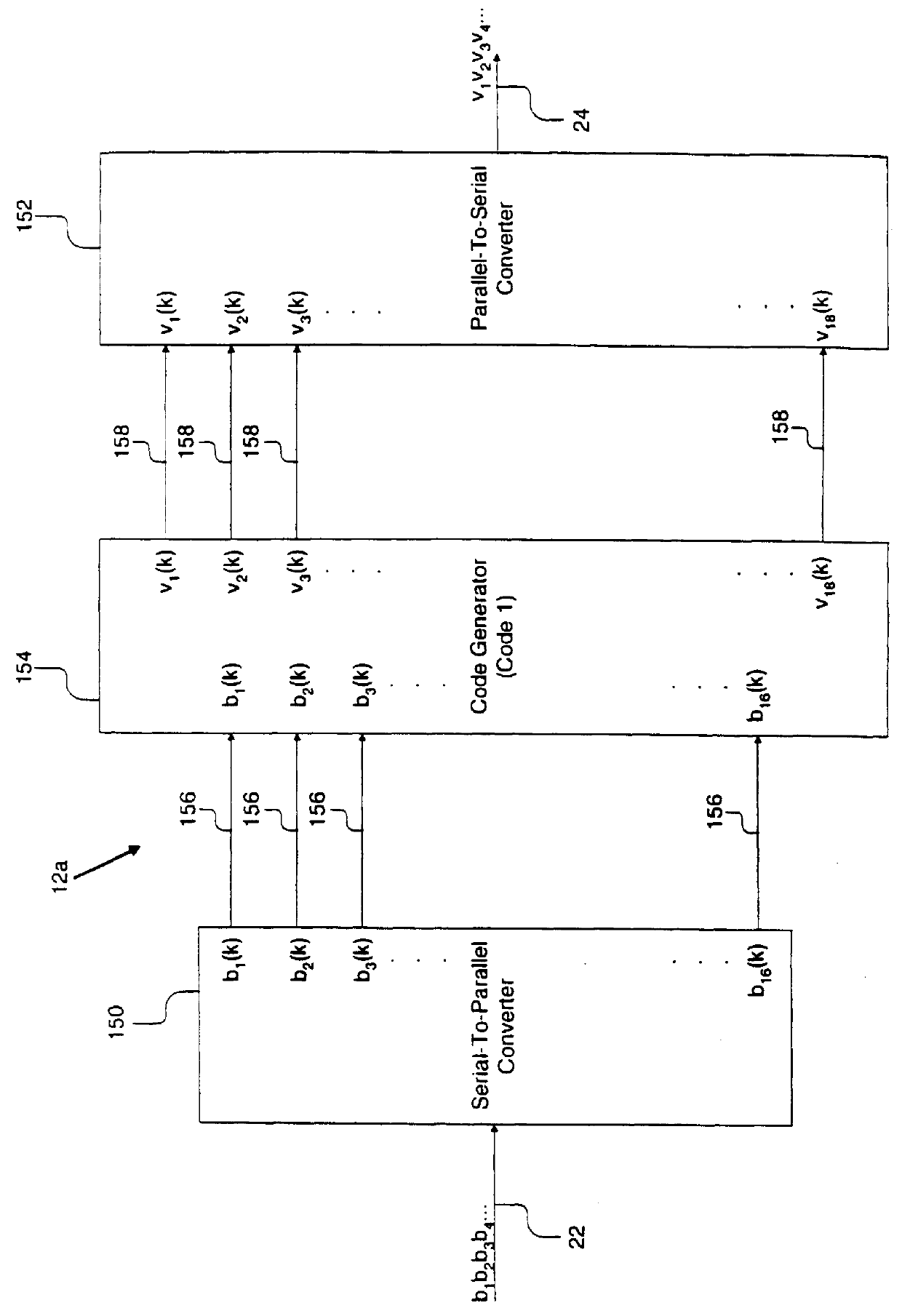

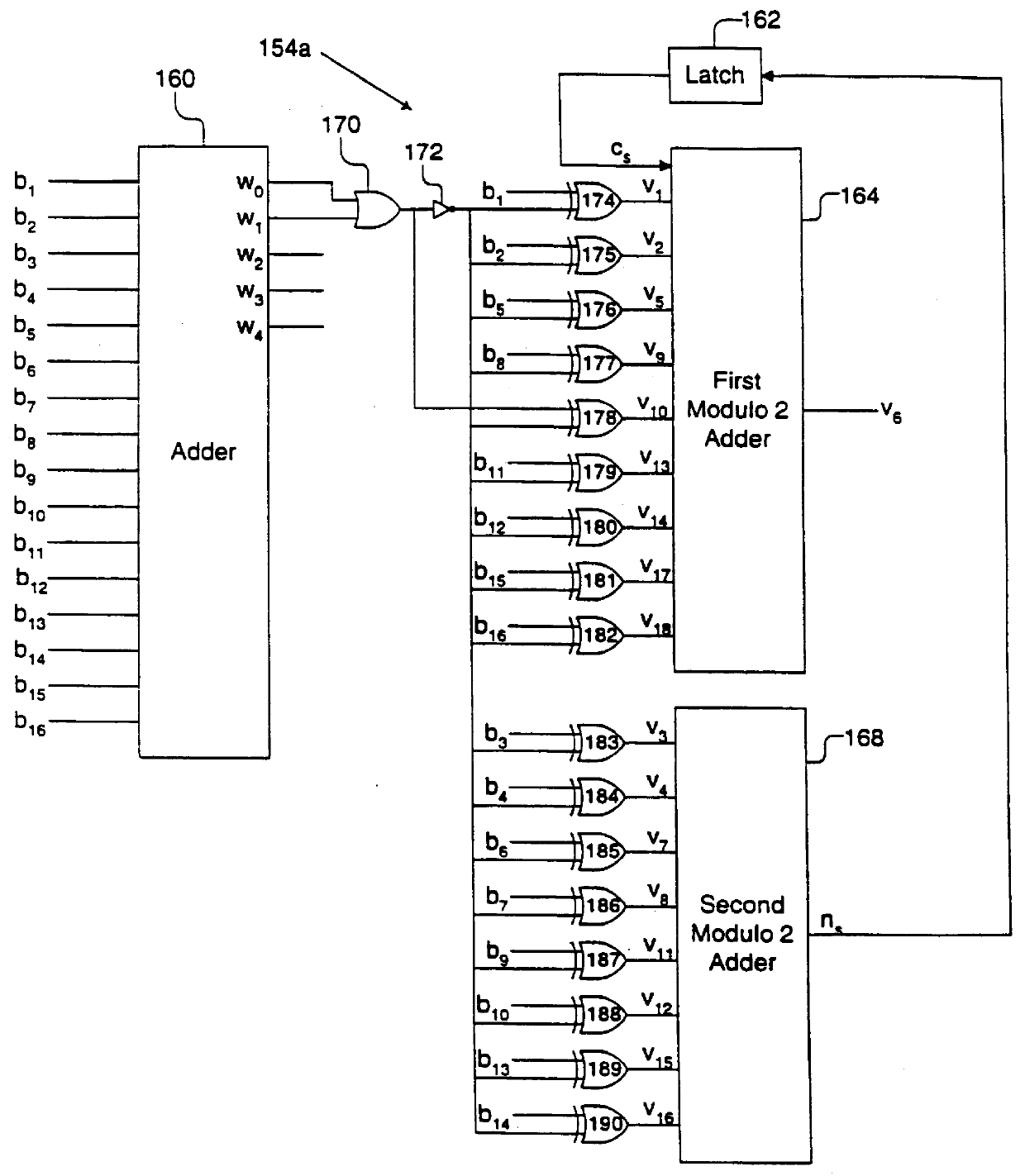
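The variable-length idea in the abstract above (bits carry different weights, and unimportant bits can be dropped) can be illustrated with a small NumPy sketch. This is not the patented method: the per-bit weights are assumed to be given, and the weighted Hamming distance used here (the sum of the weights at differing positions) is an assumption chosen for illustration. `top_bits` and `weighted_hamming` are hypothetical helper names.

```python
import numpy as np


def top_bits(weights, keep):
    """Indices of the `keep` bit positions with the largest weights."""
    return np.argsort(-weights, kind="stable")[:keep]


def weighted_hamming(query, codes, weights):
    """Sum the weights of the positions where each code differs from the query."""
    return (codes != query).astype(float) @ weights


# Toy 4-bit codes for three database images, with assumed per-bit weights.
codes = np.array([[1, 0, 1, 1],
                  [0, 0, 1, 0],
                  [1, 1, 0, 1]])
weights = np.array([0.9, 0.1, 0.6, 0.3])
query = np.array([1, 0, 1, 0])

# Shorten every code to its two most important bits (positions 0 and 2).
keep = top_bits(weights, 2)
short = weighted_hamming(query[keep], codes[:, keep], weights[keep])
print(short)  # distances computed on the shortened codes
```

The same `keep` indices must be applied to the query and to the database codes so that the shortened codes stay aligned bit for bit.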

System and method for generating many ones codes with hamming distance after precoding

Inactive | US6084535A | Modification of read/write signals | Record information storage | Precoding | Computer hardware

A system comprises an encoder, a precoder, a PRML channel, a detector, and a decoder. An input signal is received by the encoder. The encoder generates a code string by adding one or more bits and outputs the code string to the precoder. The encoder applies encoding such that the code string after passing through the precoder has a Hamming distance greater than one to eliminate error events with a small distance at the output of the PRML channel. The present invention also provides codes that after precoding have Hamming distance of 2 and 0 mod 3 number of ones. These codes when used over a PRML channel in an interleaved manner preclude + / -( . . . 010-10 . . . ) error events and error events + / -( . . . 01000-10 . . . ). The code string also has a predetermined minimum number of ones at the output of the PRML channel to help derive a clock from the input signal. The encoder provides a "systematic" encoding scheme in which for many code strings the encoded bits are the same as the input bits used to generate the encoded bits. This systematic approach of the present invention provides an encoder that is easy to implement because a majority of the bits directly "feed through" and non-trivial logic circuits are only needed to generate the control bits. The systematic encoding also dictates a decoder that is likewise easy to construct and can be implemented in a circuit that simply discards the control bit. The encoder preferably comprises a serial-to-parallel converter, a code generator, and a parallel-to-serial converter. The code generator produces a rate 16 / 18 or 16 / 17 code. The present invention also includes a method that is directed to encoding bit strings and comprises the steps of: 1) converting the input strings to input bits, and 2) adding at least one bit to produce an encoded string with many ones and a Hamming distance greater than one after precoding.

Owner:POLARIS INNOVATIONS

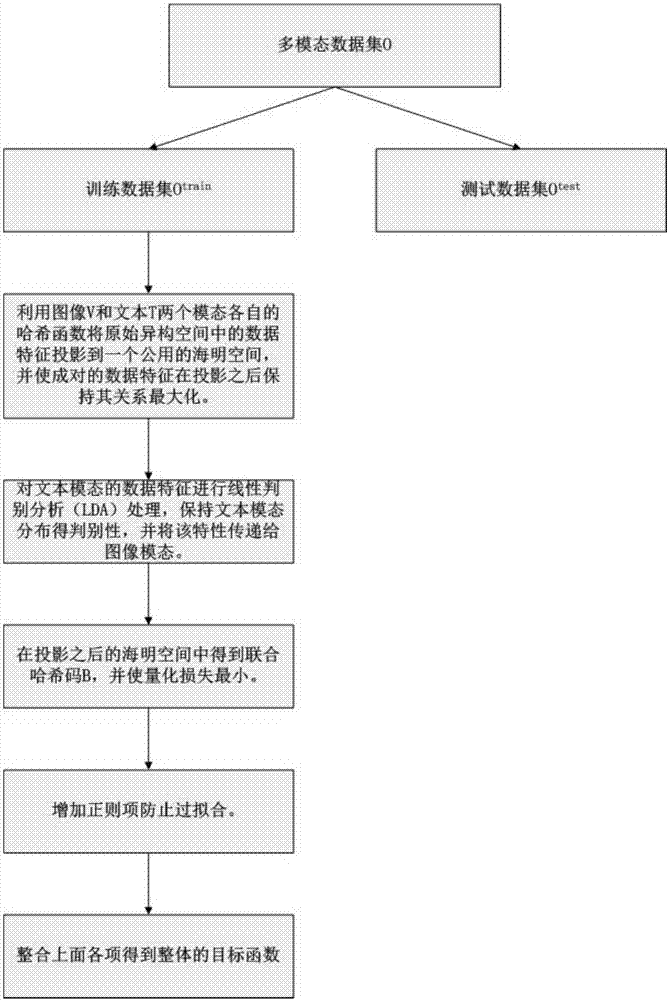

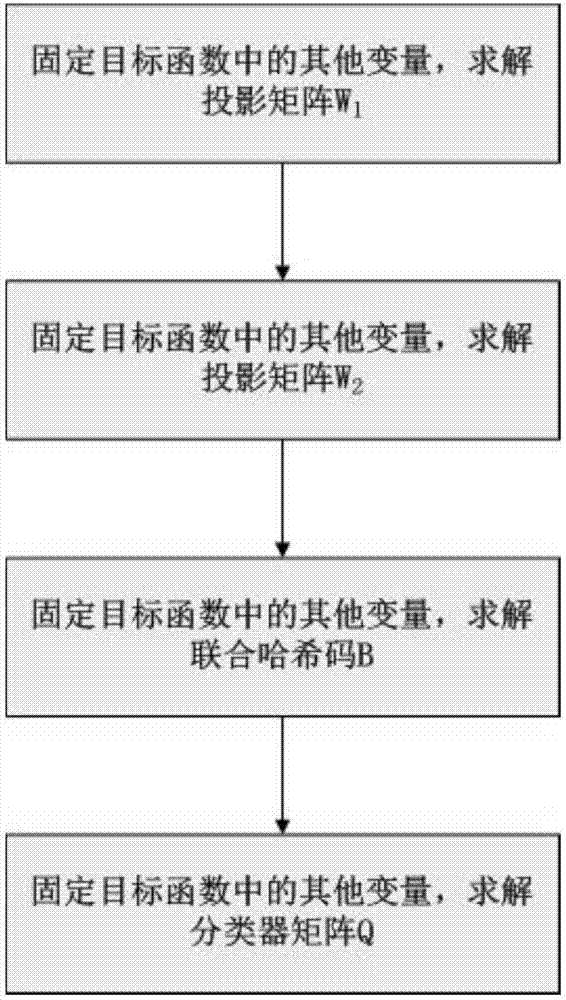

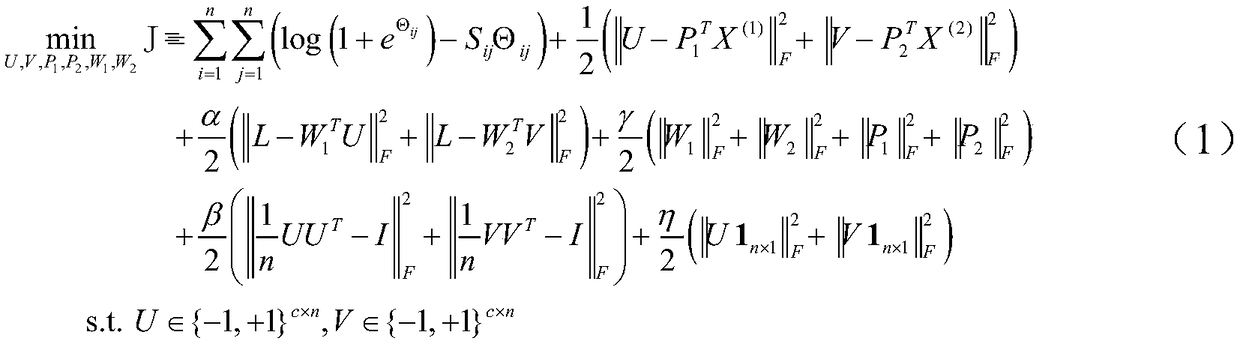

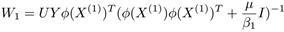

Discriminative association maximization hash-based cross-mode retrieval method

Active | CN107402993A | Improve performance | Reduce consumption | Character and pattern recognition | Special data processing applications | Hat matrix | Data set

The invention provides a discriminative association maximization hash-based cross-mode retrieval method. The method comprises the steps of performing multi-mode extraction on a training data set to obtain a training multi-mode data set; for the training multi-mode data set, building a discriminative association maximization hash-based target function on the data set; solving the target function to obtain a projection matrix, projected to a common Hamming space, of images and texts, and combined hash codes of image and text pairs; for a test data set, projecting the test data set to the common Hamming space, and performing quantization through a hash function to obtain hash codes of samples of the training set; and performing cross-mode retrieval based on the hash codes. According to the method, the cross-media retrieval efficiency and accuracy are improved.

Owner:SHANDONG NORMAL UNIV

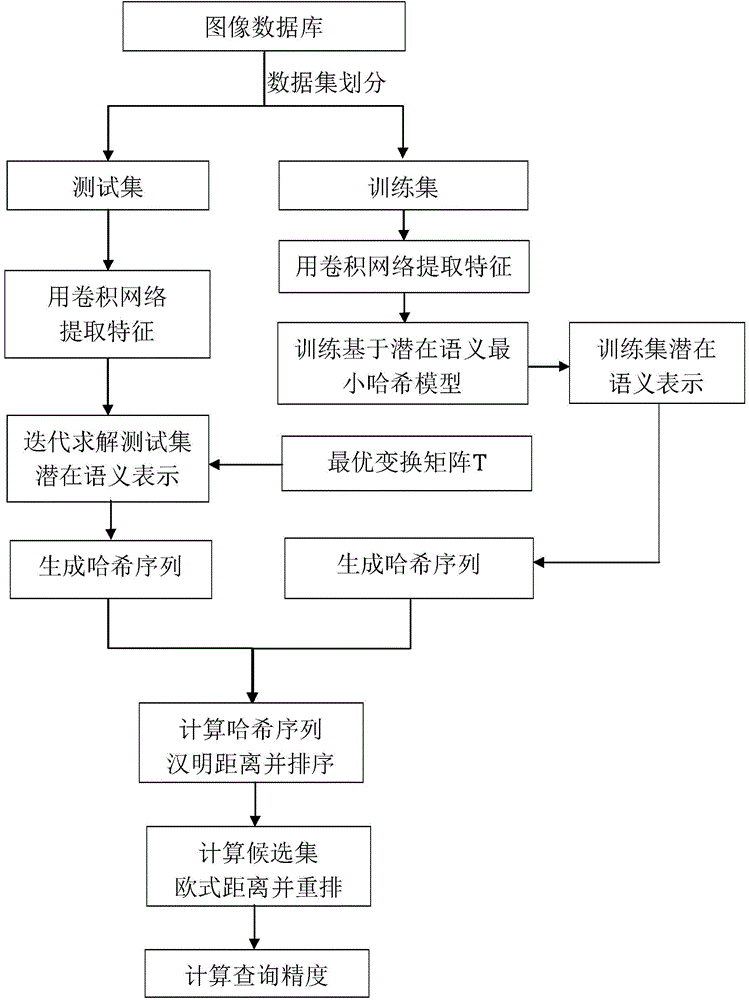

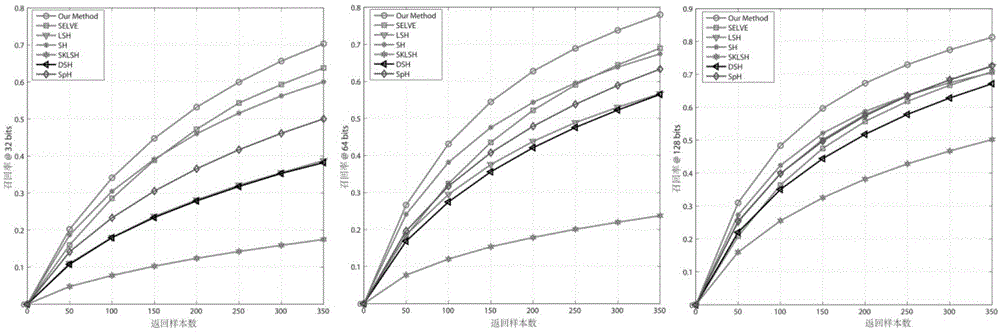

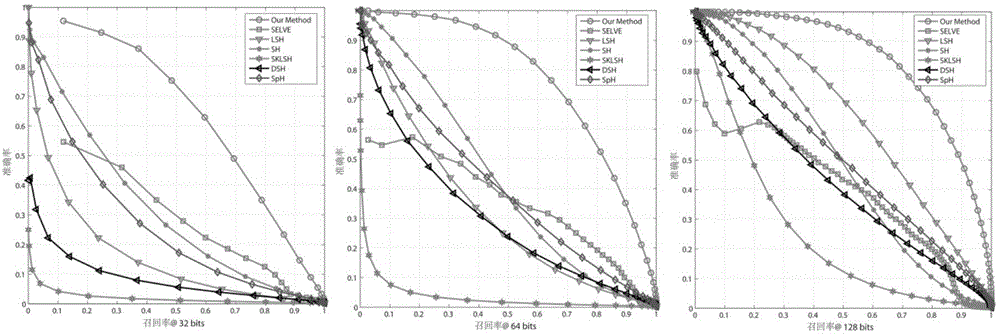

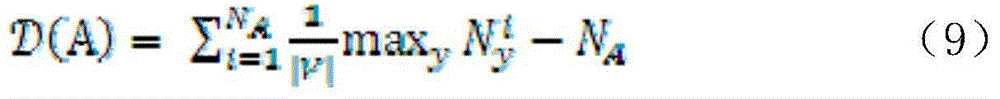

A latent semantic min-Hash-based image retrieval method

Active | CN106033426A | High precision | Improve efficiency | Character and pattern recognition | Special data processing applications | Matrix decomposition | Imaging processing

The invention relates to the technical field of image processing and in particular relates to a latent semantic min-Hash-based image retrieval method comprising the steps of (1) obtaining datasets through division; (2) establishing a latent semantic min-Hash model; (3) solving a transformation matrix T; (4) performing Hash encoding on testing datasets Xtest; (5) performing image query. Based on the facts that the convolution network has better expression features and latent semantics of primitive characteristics can be extracted by using matrix decomposition, minimizing constraint is performed on quantization errors in an encoding quantization process, so that after the primitive characteristics are encoded, the corresponding Hamming distances in a Hamming space of semantically-similar images are smaller and the corresponding Hamming distances of semantically-dissimilar images are larger. Thus, the image retrieval precision and the indexing efficiency are improved.

Owner:XI'AN INST OF OPTICS & FINE MECHANICS - CHINESE ACAD OF SCI

A supervised fast discrete multimodal hash retrieval method and system

Inactive | CN109446347A | Improve discrimination ability | Avoid high computational complexity | Multimedia data querying | Character and pattern recognition | Hash function | Data set

The invention discloses a supervised fast discrete multimodal hash retrieval method and system. The method includes: receiving a multimodal training dataset in which each sample contains a pair of multimodal data features; projecting the multimodal training dataset to a joint multimodal intermediate representation by using a joint multimodal feature map; constructing a supervised fast discrete multimodal hash objective function for the joint multimodal intermediate representation of the multimodal training dataset; solving the objective function to obtain a hash function; receiving a multimodal retrieval dataset and a multimodal test dataset, projecting their samples into the joint multimodal intermediate representation, and then projecting them into Hamming space to obtain hash codes according to the hash function; and, based on the hash codes, retrieving the samples of the multimodal test dataset in the multimodal retrieval dataset. The invention learns discrete hash codes for heterogeneous multimodal data and ensures both learning efficiency and retrieval precision.

Owner:SHANDONG NORMAL UNIV

Cross-media Hash index method based on coupling differential dictionary

The invention discloses a cross-media Hash index method based on a coupling differential dictionary. The method comprises the following steps: (1) the correlation of data from a plurality of modalities is modeled with a graph structure, where the similarity within a modality is determined by the Euclidean distance between low-level data features, the correlation between modalities is determined from the known correlation of data in different modalities, and the classification label information of the data is used to improve the differentiation of the data on the graph structure; (2) the differential coupling dictionary is learned from the correlation of the data on the graph structure obtained in step (1); (3) the data of the different modalities are sparse coded with the coupling dictionary learned in step (2) and mapped into a unified dictionary space; (4) a Hash mapping function from the dictionary space to the binary Hamming space is learned. The method enables efficient content-based cross-media searching of mass data: a user can submit a search example in one modality to search for media objects in another modality.

Owner:ZHEJIANG UNIV

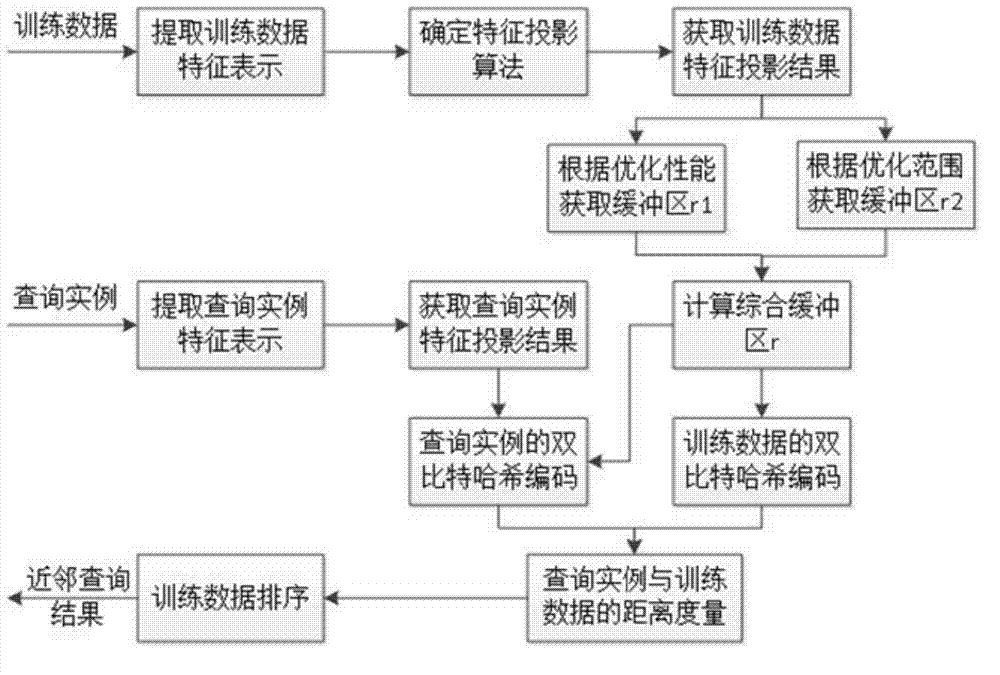

Data search method and system

Active | CN104123375A | Avoid damage | Improve performance | Special data processing applications | Feature vector | Data set

The invention provides a data search method. The data search method comprises the steps of: extracting the feature vectors of a training dataset; projecting the feature vectors into a preset feature space approximating a Hamming space; obtaining a quantization threshold according to the projection algorithm and determining the optimal buffer area according to the optimization performance and the optimization range; carrying out double-bit quantization on the feature vector projection result according to the quantization threshold and the optimal buffer area to obtain a Hash code; obtaining a Hash code of a search case; and, according to the Hash code of the search case and the Hash codes of the feature vector projection results, extracting approximate training data from the training dataset as the search result for the search case. The data search method offers high search speed and high search precision. The invention further provides a data search system.

Owner:TSINGHUA UNIV

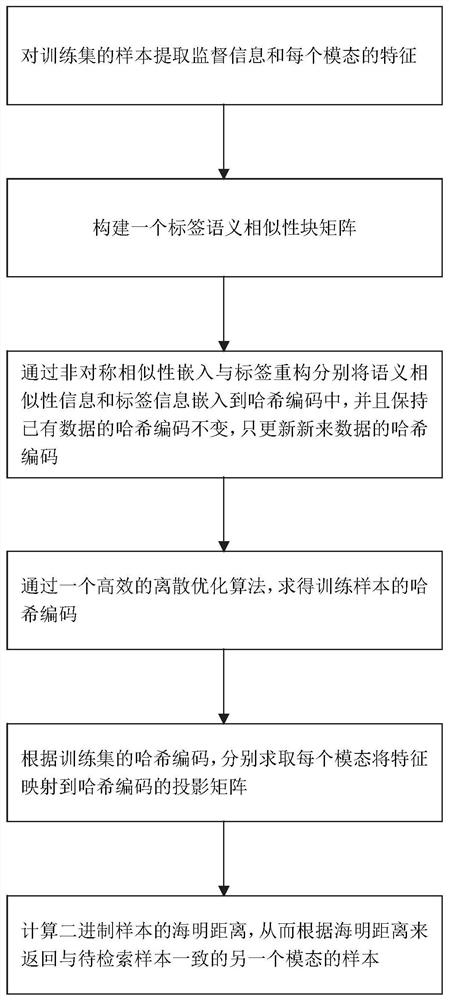

Label embedded online hash cross-modal multimedia data retrieval method and system

Active | CN111639197A | Efficient Online Hash Learning | Improve learning efficiency | Multimedia data querying | Special data processing applications | Data retrieval | Hamming space

The invention discloses a label embedded online hash cross-modal multimedia data retrieval method and system. The method comprises the steps of: obtaining a multimedia training label matrix, feature matrices of the different modalities of the multimedia training data, and feature matrices of the different modalities of a to-be-retrieved sample according to the multimedia training data; constructing a label semantic similarity block matrix based on the multimedia training label matrix; embedding the label semantic similarity block matrix into a Hamming space to obtain hash codes of the multimedia training data; solving for a projection matrix that maps each modality's features of the multimedia training data to the hash codes of the multimedia training data, according to those hash codes and the feature matrices of the different modalities of the multimedia training data; obtaining hash codes of the to-be-retrieved sample according to the projection matrix and the feature matrices of the different modalities of the to-be-retrieved sample; and calculating the distance between the hash code of the to-be-retrieved sample and the hash codes of the multimedia training data, and obtaining from the multimedia training data a sample similar to the to-be-retrieved sample.

Owner:SHANDONG UNIV

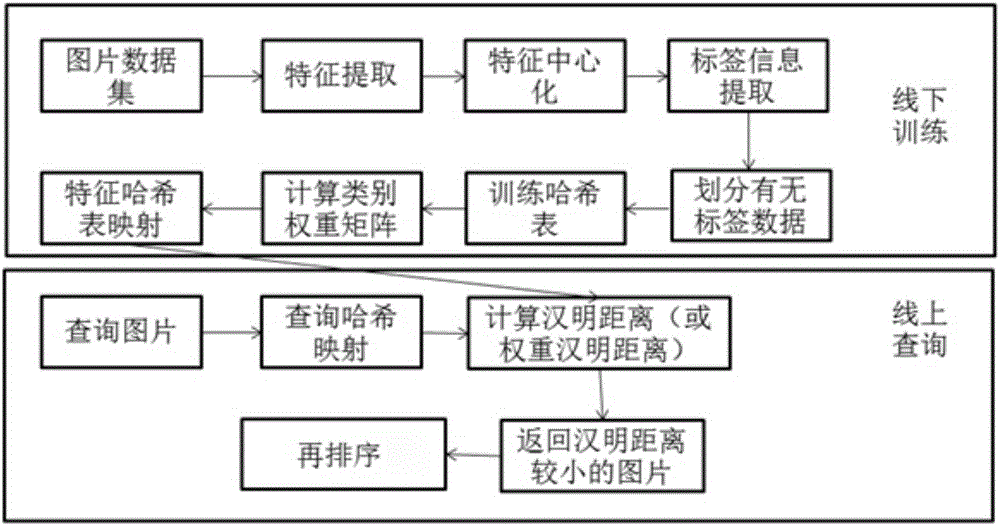

Double compensation based multi-table Hash image retrieval method

Active | CN106777388A | Save memory | Auxiliary memory is small | Still image data retrieval | Special data processing applications | Information processing | Feature extraction

The invention discloses a double compensation based multi-table Hash image retrieval method. The method comprises the steps of image feature extraction and category information processing, Hash table training, mapping of image features to Hamming space according to the Hash tables together with category weight calculation, query-based Hamming distance calculation, and returning and re-sorting of the query results. In image retrieval the method offers quick query response, low memory overhead and high query performance; it greatly improves multi-table Hash image retrieval and overcomes the additional overhead of multi-table Hash.

Owner:SOUTH CHINA UNIV OF TECH

Migration retrieval method based on semi-supervised antagonistic generation network

Active | CN108959522A | Smart and fast image retrieval | Improve general adaptability | Character and pattern recognition | Special data processing applications | Data set | Countermeasure

A migration retrieval method based on a semi-supervised generative adversarial network is provided. A generative adversarial network is designed to retrieve hashes across data domains. The goal is to map the original and target datasets into a common Hamming space, so that, through the learning of the semi-supervised generative adversarial network, image retrieval in one particular scene can be migrated to image retrieval in another scene. This solves the problem that, in the era of big data, unlabeled data cannot be fully utilized and a retrieval model is suitable only for a single scene. The invention effectively improves the level of automation and intelligence of image retrieval.

Owner:ZHEJIANG UNIV OF TECH

A similarity-retaining cross-modal hash retrieval method

Active | CN109271486A | Fully preserve the similarity | Retain similarity | Text database querying | Pattern recognition | Hamming space

A similarity-retaining cross-modal hash retrieval method comprises the following steps: (1) constructing an objective function based on a similarity retaining strategy; (2) solving the objective function; (3) generating binary hash codes for the query sample and for the samples in the retrieval sample set; (4) calculating the Hamming distances between the query sample and each sample in the retrieval sample set; (5) using a cross-modal retriever to complete the retrieval of the query sample. When carrying out hash learning, the method not only fully retains the similarity of samples between modalities but also retains the similarity of samples within each modality, so that the learned Hamming space has stronger discriminative ability and is more conducive to cross-modal retrieval.

Owner:JIUJIANG UNIVERSITY

Sequence constrained hashing algorithm in image retrieval

Inactive | CN109145143A | Adapt to flow pattern distribution | Reduce precision loss | Character and pattern recognition | Metadata still image retrieval | Algorithm | Hamming space

A sequence constrained hashing algorithm in image retrieval relates to image retrieval. First, when training the model, relaxing the original problem usually causes a considerable loss of precision, because the model is usually learned and optimized in real space. In addition, previous hashing algorithms always preserve the point-to-point relationships of the original data in Hamming space and ignore the nature of the retrieval task, which is sorting. The algorithm addresses large-scale image search, obtains more accurate ranking results from binary codes, and can handle image search in different feature metric spaces.

Owner:XIAMEN UNIV

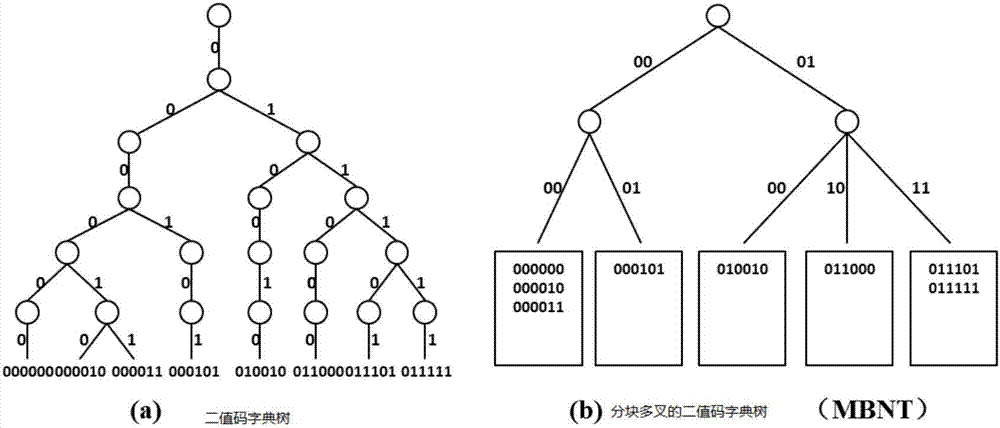

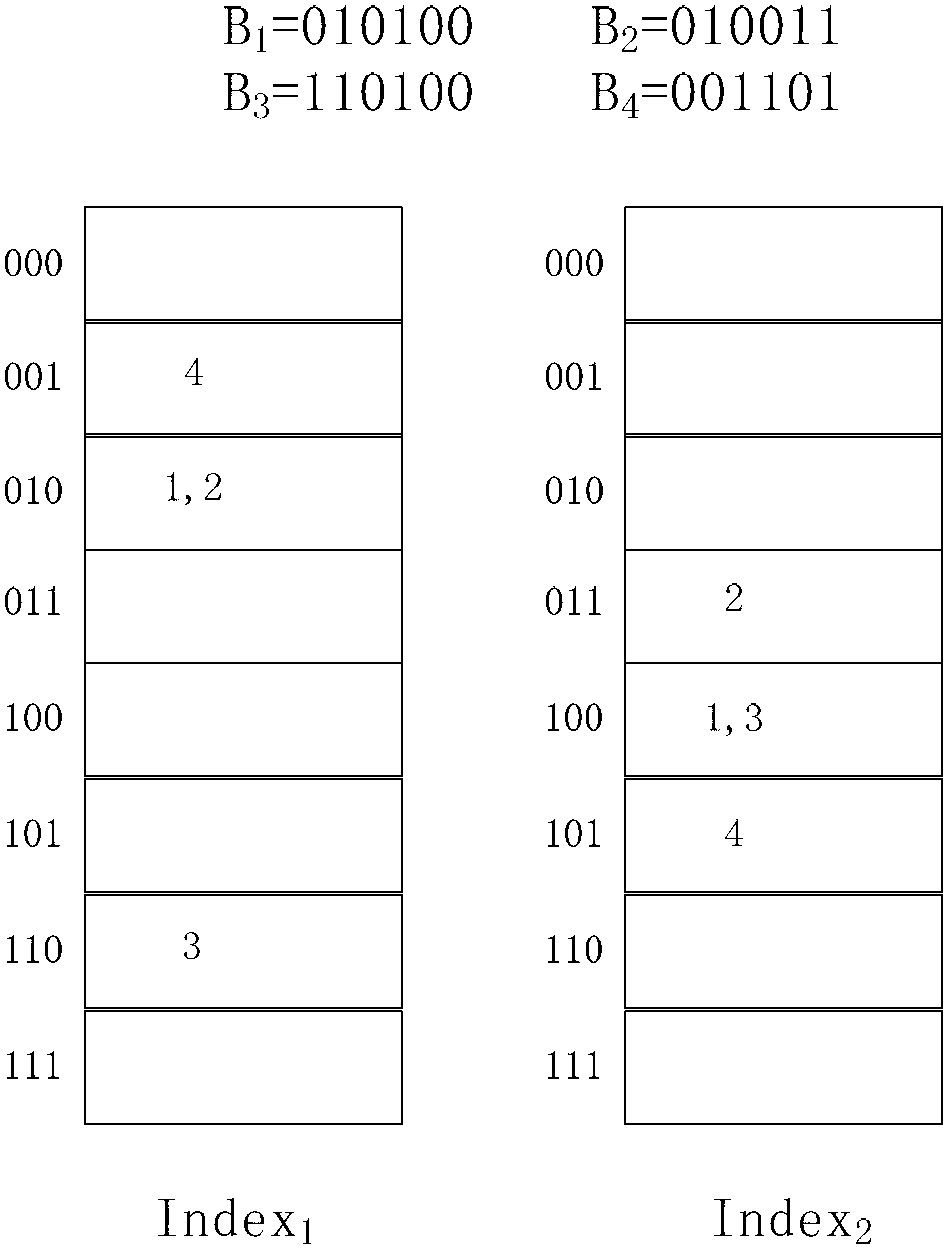

Binary code dictionary tree-based search method

Active | CN106980656A | Avoid finding missing problems | Reduce the number of lookups | Still image data indexing | Special data processing applications | Theoretical computer science | Hamming space

The invention discloses a binary code dictionary tree-based search method. The method comprises the steps of obtaining a binary code of each image in a database, and dividing each binary code into m sections of substrings; for the jth sections of the substrings of all images in the database, establishing a binary code dictionary tree of the jth sections of the substrings, wherein the number of binary code dictionary trees is m, and each binary code dictionary tree comprises internal nodes and external nodes; obtaining the binary code of the to-be-queried image and the m sections of the substrings of the binary code; for the jth section of the substring of the binary code of the to-be-queried image, searching for the binary code with a Hamming distance not exceeding a value defined in the specification in the binary code dictionary tree corresponding to the jth sections of the substrings of all the images in the database; and traversing all the substrings of the binary code of the to-be-queried image to obtain a query result of each substring, wherein j is smaller than or equal to m. According to the method, the search quantity can be reduced during accurate nearest neighbor search of Hamming space, so that the search speed is increased.

Owner:PEKING UNIV

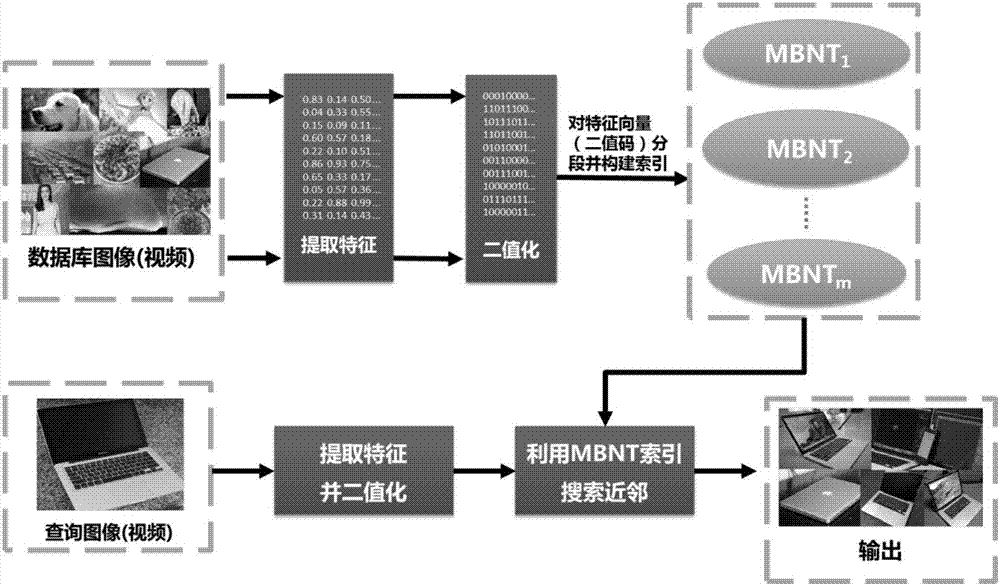

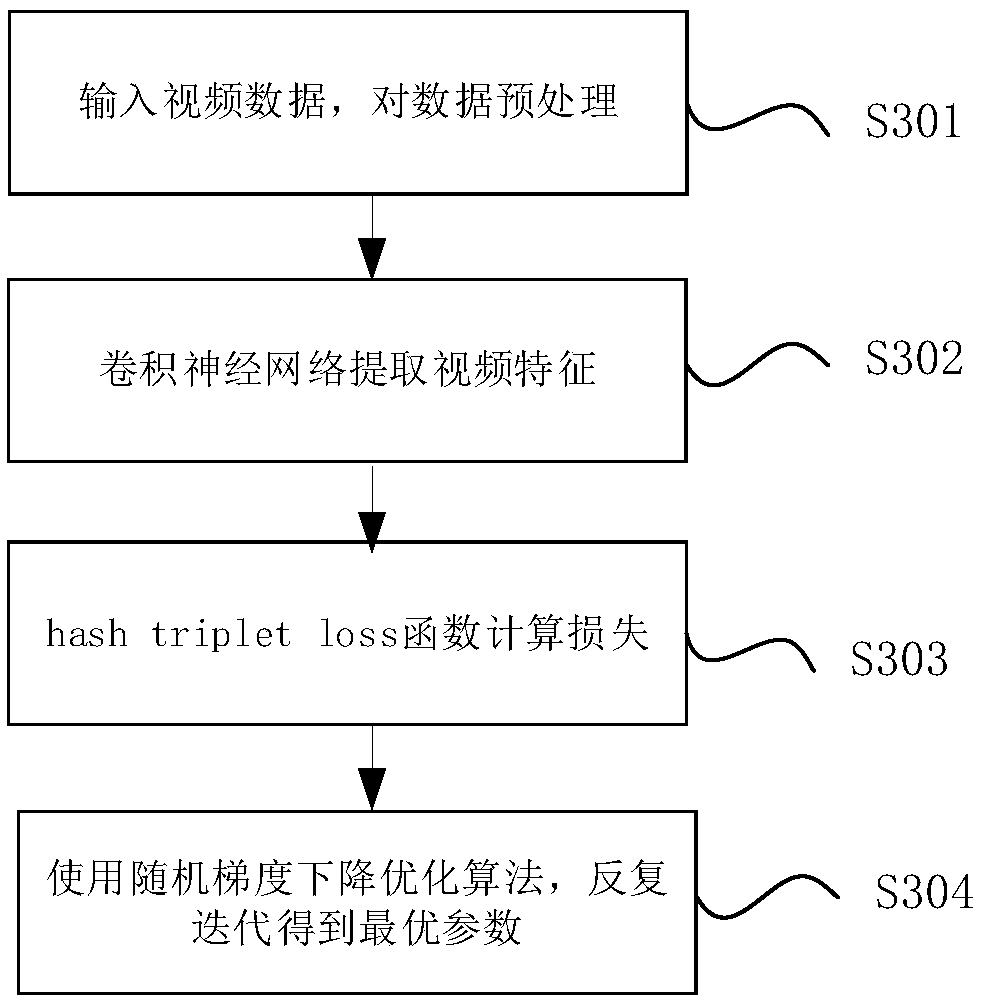
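The substring-based search described in the abstract above rests on a pigeonhole argument: if two codes split into m substrings differ in at most r bits overall, at least one pair of corresponding substrings differs in at most r // m bits. The sketch below illustrates that idea only. It swaps the patent's binary code dictionary trees for plain Python dictionaries (one hash table per substring position), and it assumes codes are bit strings whose length is divisible by m. All function names are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def split(code, m):
    """Split a bit string into m contiguous substrings of equal length."""
    step = len(code) // m
    return [code[i * step:(i + 1) * step] for i in range(m)]


def build_index(codes, m):
    """Build one table per substring position: substring -> list of code ids."""
    tables = [defaultdict(list) for _ in range(m)]
    for idx, code in enumerate(codes):
        for j, sub in enumerate(split(code, m)):
            tables[j][sub].append(idx)
    return tables


def neighbours(sub, radius):
    """Yield every bit string within `radius` bit flips of `sub`."""
    for k in range(radius + 1):
        for positions in combinations(range(len(sub)), k):
            bits = list(sub)
            for p in positions:
                bits[p] = "1" if bits[p] == "0" else "0"
            yield "".join(bits)


def hamming(a, b):
    """Hamming distance between two equal-length bit strings."""
    return sum(x != y for x, y in zip(a, b))


def search(query, codes, tables, m, r):
    """Return ids of all codes within Hamming distance r of the query."""
    candidates = set()
    for j, sub in enumerate(split(query, m)):
        # Pigeonhole: a true match agrees with the query to within
        # r // m bits in at least one substring position.
        for probe in neighbours(sub, r // m):
            candidates.update(tables[j].get(probe, ()))
    # Verify candidates against the full code to remove false positives.
    return sorted(i for i in candidates if hamming(query, codes[i]) <= r)


codes = ["00000000", "00001111", "11110000", "10000001"]
tables = build_index(codes, m=2)
print(search("00000001", codes, tables, m=2, r=2))  # [0, 3]
```

Only the candidates surfaced by the substring probes are compared in full, which is where the reduction in the number of lookups comes from.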

Similar video searching method and system based on a double-flow neural network

Active | CN109492129A | Stable training | Fast convergence | Digital data information retrieval | Special data processing applications | Temporal information | Hamming space

The invention provides a similar video searching method and system based on a double-flow neural network. A key frame extraction technology is adopted for video frame extraction, so that storage space is greatly saved, the neural network training is more stable, and the convergence of the neural network training is accelerated. The video features are extracted by a double-flow convolutional neural network, so that the extracted video features retain both the spatial information and the temporal information in the video and are more robust. The Hamming distance is used to measure the similarity of videos. Because distance computation in Hamming space is a bit operation, the computational cost in Hamming space is far lower than in the original space even with a complex retrieval algorithm, which makes this an efficient retrieval mode.

Owner:WUHAN UNIV OF TECH
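The claim in the abstract above that distance computation in Hamming space is a bit operation can be seen in a few lines: pack each binary code into an integer, XOR two codes, and count the set bits. This is a generic sketch, not code from the patent; `pack`, `hamming_distance` and `nearest` are illustrative names.

```python
def pack(bits):
    """Pack a sequence of 0/1 values into a single integer, first bit highest."""
    value = 0
    for bit in bits:
        value = (value << 1) | bit
    return value


def hamming_distance(a, b):
    """XOR marks the differing bit positions; counting the ones gives the distance."""
    return bin(a ^ b).count("1")


def nearest(query, database):
    """Index of the database code closest to the query in Hamming distance."""
    return min(range(len(database)),
               key=lambda i: hamming_distance(query, database[i]))


database = [0b10110100, 0b10011100, 0b01001011]
query = pack([1, 0, 1, 1, 0, 1, 1, 0])

print(hamming_distance(query, database[0]))  # 1
print(nearest(query, database))              # 0
```

On Python 3.10 and later, `(a ^ b).bit_count()` is an equivalent way to count the set bits.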

Aerial image rapid matching algorithm based on multi-characteristic Hash learning

Inactive | CN106886785A | Quick match | Simplified Feature Matching Method | Character and pattern recognition | Hash function | Floating point

The invention discloses an aerial image rapid matching algorithm based on multi-feature Hash learning. The method comprises: selecting a matching area according to the course overlap rate of the aerial images, and extracting feature points in the matching area to obtain a feature point set; describing the obtained feature points with multiple features so as to obtain feature vectors; mapping the feature vectors to a uniform kernel space through a kernel method; selecting training sample data, learning binary Hash codes of the sample feature points in the kernel space, and generating a Hash function; and, according to the Hash function, describing the feature points extracted from the matching area as binary Hash codes and matching them rapidly in a Hamming space according to Hamming distance. The invention adopts multi-feature fusion and a Hash learning method, and expresses each feature point as a binary Hash code. This overcomes the complex computation and slow matching of traditional floating-point feature descriptors and simplifies feature matching. Compared with a descriptor built from a single feature, the method is highly discriminative, matches quickly and is highly accurate.

Owner:NANJING UNIV OF INFORMATION SCI & TECH

Nearest neighbor methods for non-Euclidean manifolds

Inactive | US8280839B2 | Long distance | Character and pattern recognition | Fuzzy logic based systems | Near neighbor | Hamming space

Owner:MITSUBISHI ELECTRIC RES LAB INC

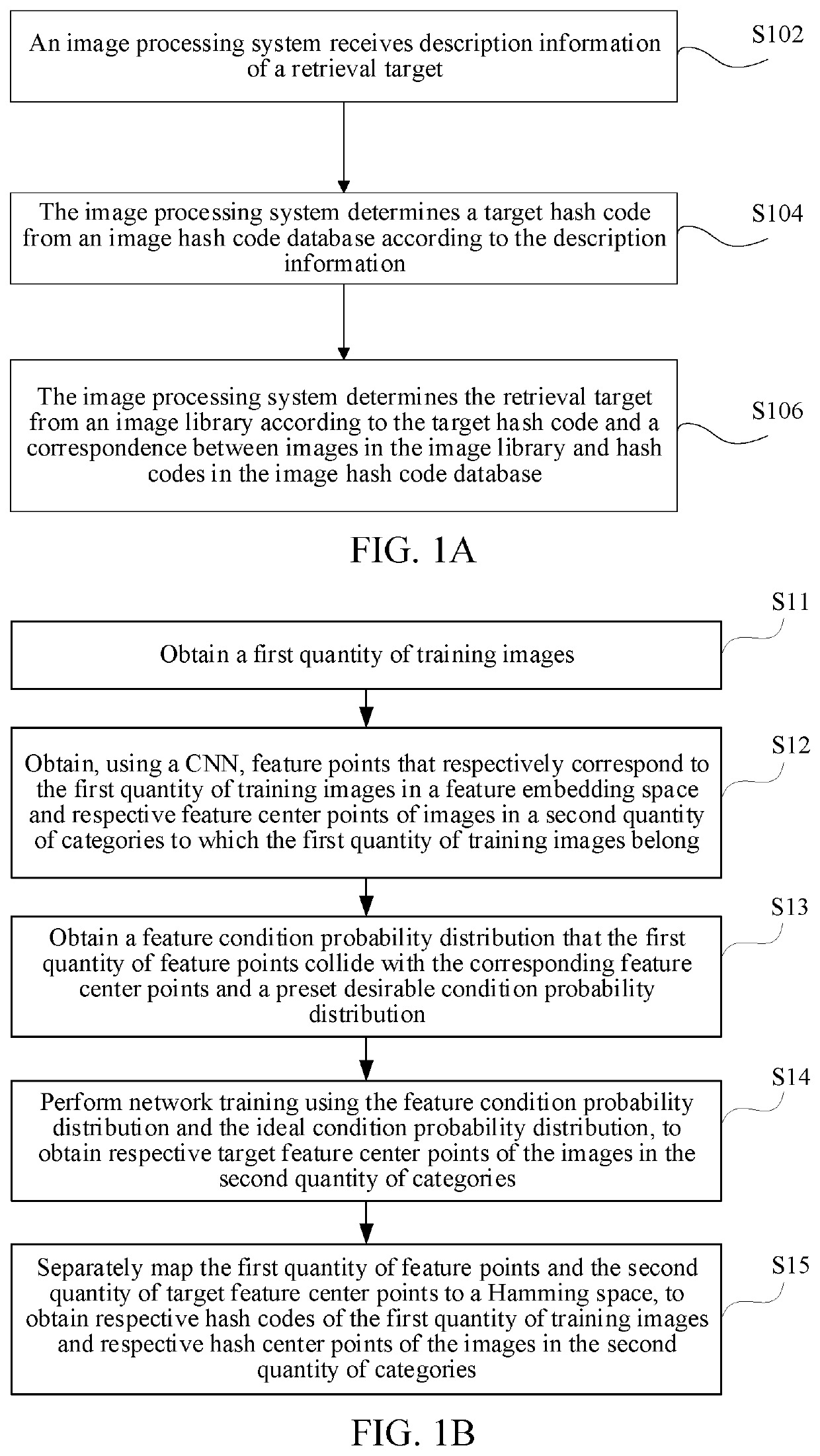

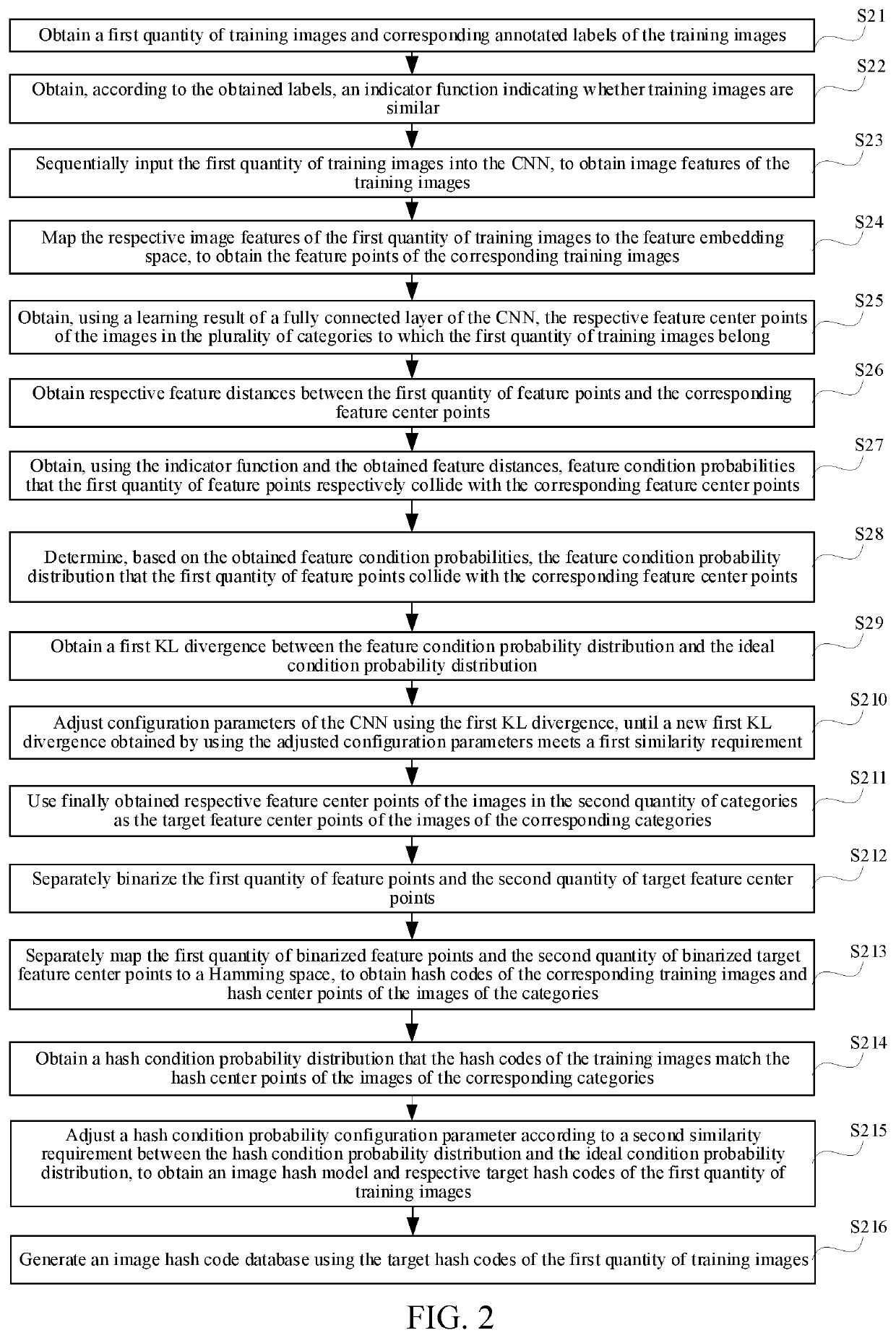

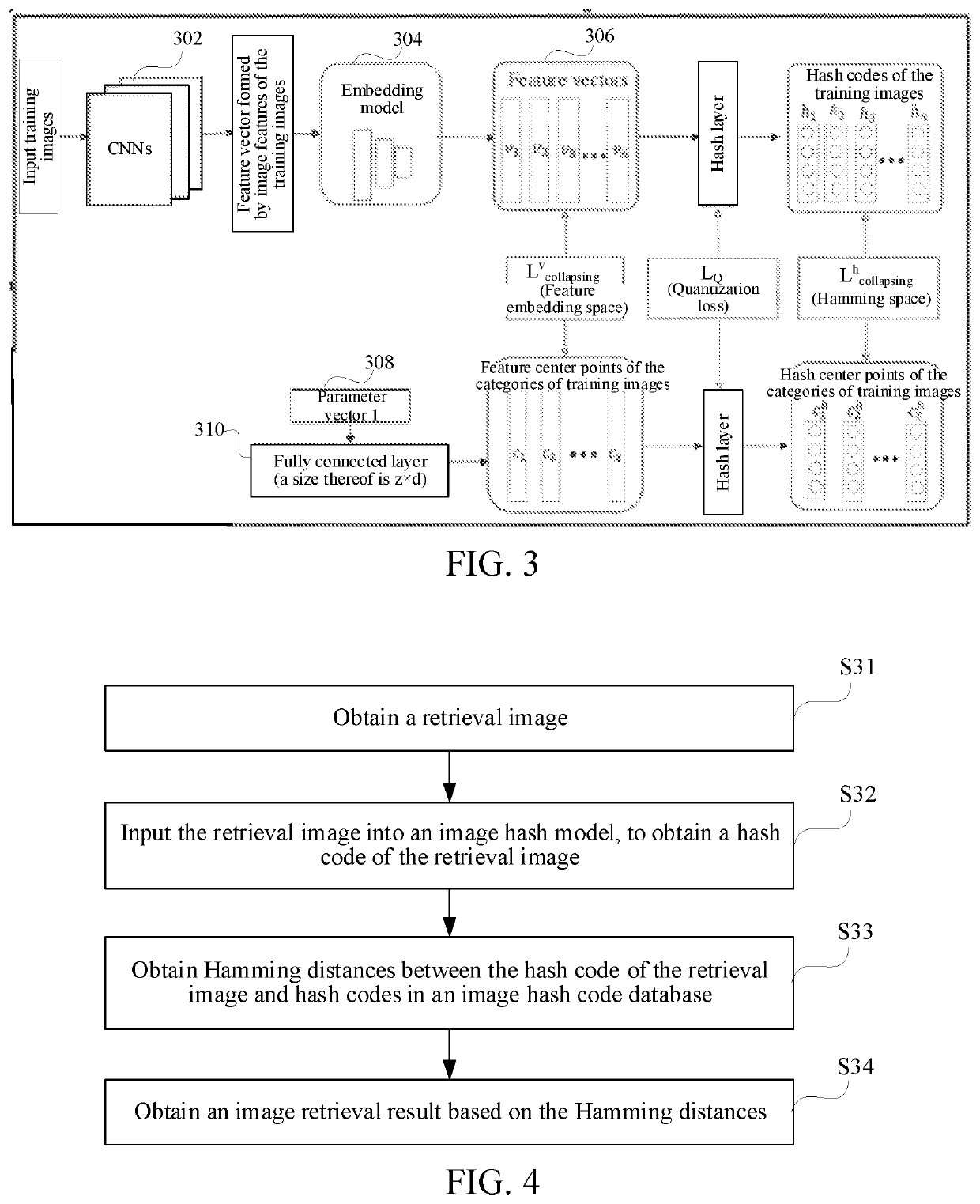

Image processing method and device and computer equipment

Active | CN110188223A | Reduce training time | High precision | Still image data indexing | Character and pattern recognition | Imaging processing | Global distribution

The invention provides an image processing method and device and computer equipment. Feature points corresponding to the training images in the feature embedding space are obtained from a convolutional neural network, and feature center points of the second number of image categories to which the training images belong are obtained. Network training is carried out in a central collision mode to obtain a target feature center point for each image category. Each obtained feature point and each target feature center point are then mapped to a Hamming space to obtain a first number of Hash codes and a second number of Hash center points, and network training is again carried out in a central collision mode to obtain an image hash model. Compared with the prior art, the method learns the similarity between the Hash codes of the training images, the similarity between the feature points of the training images and the corresponding center points, and the global distribution of the images, so the network training time is greatly shortened, and the learning efficiency and the precision of the image Hash codes are improved.

Owner:TENCENT TECH (SHENZHEN) CO LTD

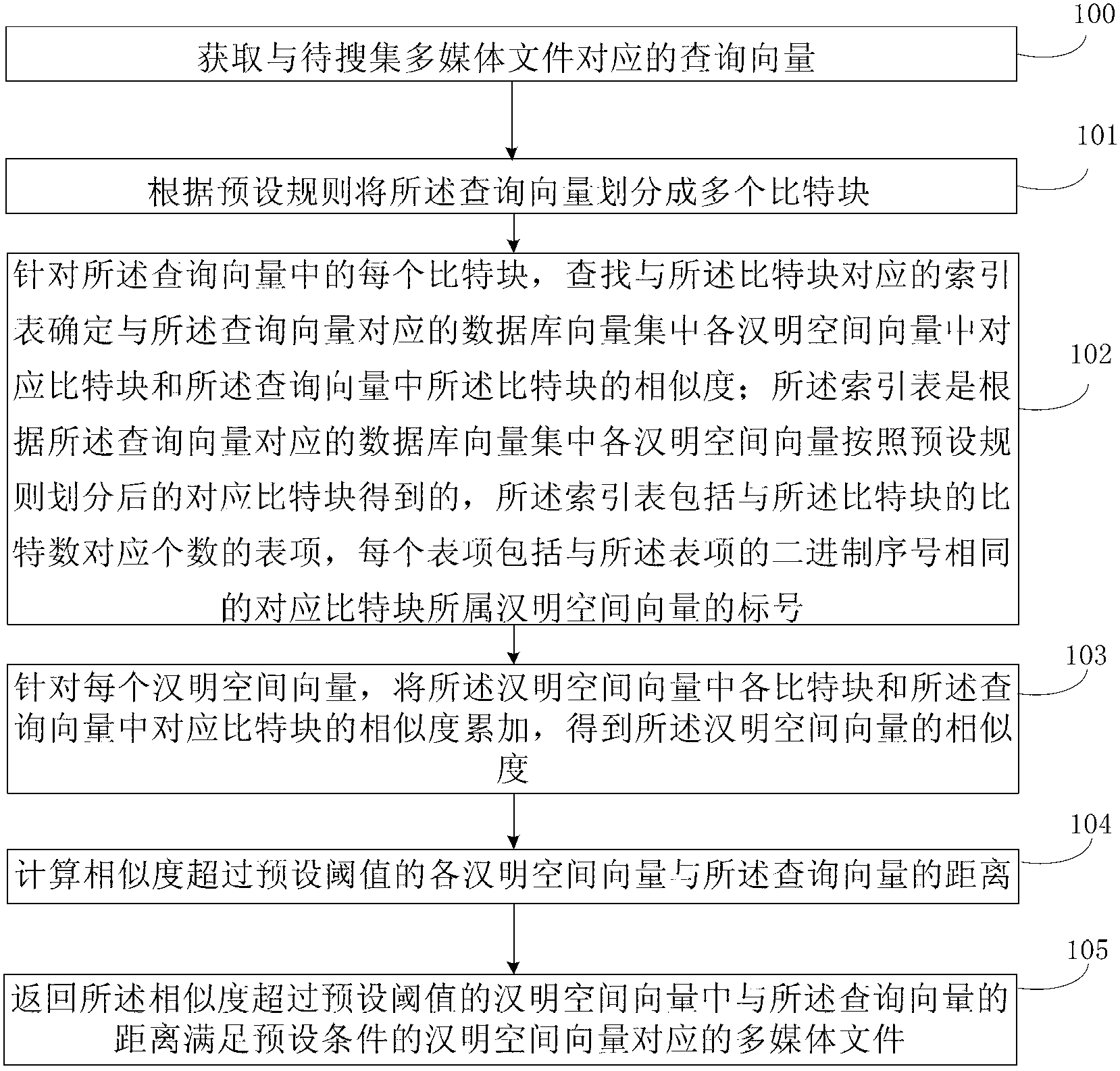

Method and device for searching multimedia file on the net

Active | CN103309951A | Reduce occupancy | Guaranteed accuracy | Special data processing applications | Hamming space | Large scale data

The invention provides a method and a device for searching a multimedia file on the net, wherein after a query vector is divided into a plurality of bit blocks, the similarity of Hamming spatial vectors in a database vector set is determined according to the similarity of the corresponding bit blocks so as to only calculate the distances between the Hamming spatial vectors of which the similarity exceeds the preset threshold value and the query vector and return the multimedia file corresponding to the Hamming spatial vector among the Hamming spatial vectors of which the similarity exceeds the preset threshold value while the distance of the Hamming spatial vector and the query vector meets the preset conditions, so that most retrieved target vectors are contained in the Hamming spatial vectors of which the similarity exceeds the preset threshold value to guarantee the retrieval accuracy; in addition, not all Hamming spatial vectors are necessarily calculated one by one among the database vectors, so that the calculation complexity is reduced, the occupancy of system resources by the calculation is reduced, the multimedia file needed by a user can be retrieved from a large-scale database within a short period of time, and the retrieval efficiency is improved.

Owner:PEKING UNIV

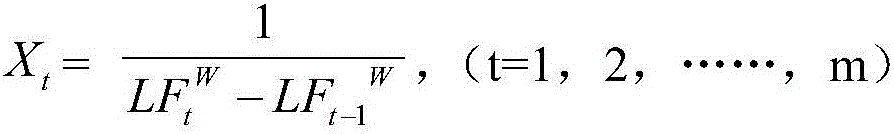

LF entropy-based DNA sequence similarity detection method

The invention discloses an LF entropy-based DNA sequence similarity detection method. An original DNA sequence is mapped based on an L-Gram model to obtain a new numerical value sequence. By calculating a matrix formed by LF entropy values of N sequences, a standard entropy is further obtained, and the standard entropy is projected to a hamming space for performing sequence similarity comparison. According to the LF entropy-based DNA sequence similarity detection method, a condition that a converted characteristic space comprises enough original DNA information is taken into full consideration, so that missing of DNA information is avoided; and meanwhile, each section of DNA sequence is converted into a new space, so that operation speed and accuracy can be improved.

Owner:FUJIAN NORMAL UNIV

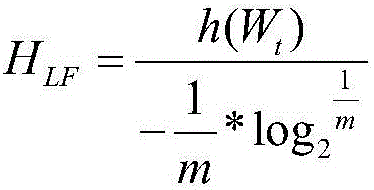

Unsupervised depth hashing method based on target detection

Inactive | CN110196918A | Easy to learn | Good effect | Digital data information retrieval | Special data processing applications | Pattern recognition | Data set

The invention relates to an unsupervised deep hashing method based on target detection, and belongs to the technical field of computer information retrieval and picture retrieval. The method comprises the steps of: obtaining the tags of the objects present in the pictures by using target detection, and taking those tags as pseudo tags of the pictures; training a designed end-to-end deep hash model on the pseudo tags to obtain the hash code representation of each picture in a Hamming space; and evaluating the quality of the deep hash model by the mean average precision (MAP) of the corresponding hash codes in the picture retrieval task. The unsupervised deep hash model comprises a target detection algorithm unit and a hash network unit. The method obtains more instructive information and makes full use of the capability of a deep model to learn high-quality hash codes that preserve similarity; when picture retrieval is carried out on a real picture dataset, it obtains the best effect, that is, the highest MAP value.

Owner:BEIJING INSTITUTE OF TECHNOLOGY +1

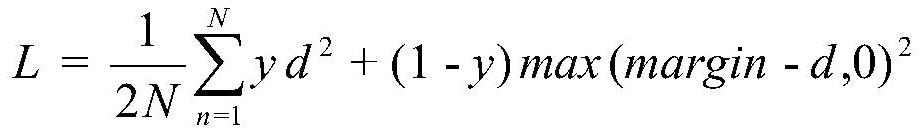

Image processing method and apparatus, and storage medium

Pending | US20210382937A1 | Improve retrieval efficiency | Improve retrieval accuracy | Still image data indexing | Still image data clustering/classification | Pattern recognition | Imaging processing

Embodiments of this disclosure include an image processing method and apparatus. The method may include obtaining feature points corresponding to training images and feature center points of images in a second quantity of categories and obtaining a feature condition probability distribution that the feature points collide with corresponding feature center points. The method may further include performing network training to obtain target feature center points of the images in the second quantity of categories. The method may further include mapping the first quantity of feature points and the second quantity of target feature center points to a Hamming space, to obtain hash codes of the first quantity of training images and hash center points of the images in the second quantity of categories. The method may further include performing network training using the hash condition probability distribution and the ideal condition probability distribution, to obtain an image hash model.

Owner:TENCENT TECH (SHENZHEN) CO LTD

Universal cross-modal retrieval model based on deep hash

Pending | CN113076465A | Quick build | Bridging the Semantic Gap | Semantic analysis | Character and pattern recognition | Original data | Theoretical computer science

The invention discloses a universal cross-modal retrieval model based on deep hash. The universal cross-modal retrieval model comprises an image model, a text model, a binary code conversion model and a Hamming space. The image model is used for the feature and semantic extraction of the image data; the text model is used for the feature and semantic extraction of the text data; the binary code conversion model is used for converting the original features into the binary codes; the Hamming space is a common subspace of images and the text data, and the similarity of the cross-modal data can be directly calculated in the Hamming space. According to the universal model for solving cross-modal retrieval by combining deep learning and Hash learning, the data points in an original feature space are mapped into the binary codes in the public Hamming space, similarity ranking is carried out by calculating the Hamming distance between the codes of the data to be queried and the codes of the original data, and therefore a retrieval result is obtained, and the retrieval efficiency is greatly improved. The binary codes are used for replacing the original data storage, so that the requirement of the retrieval tasks for the storage capacity is greatly reduced.

Owner:CHINA UNIV OF PETROLEUM (EAST CHINA)

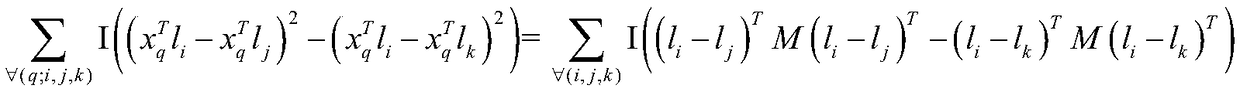

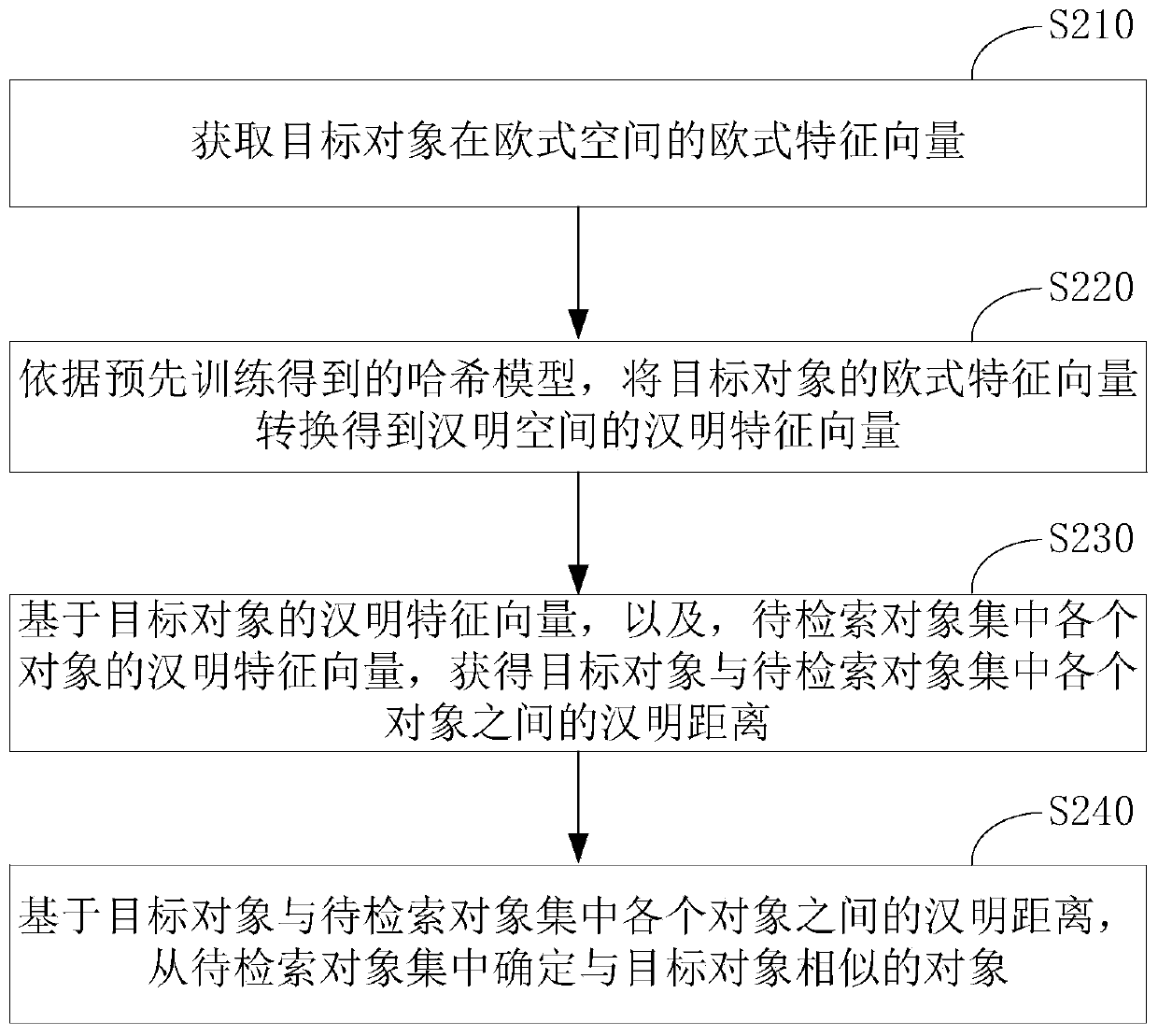
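The retrieval step in the abstract above (map feature vectors to binary codes, then rank by Hamming distance to the query code) can be sketched with NumPy as follows. The abstract does not say how the binary code conversion model works, so thresholding each feature at zero is an assumption made purely for illustration, and the feature values are made-up toy data. `binarize` and `rank_by_hamming` are hypothetical names.

```python
import numpy as np


def binarize(features):
    """Map real-valued features to 0/1 codes by thresholding at zero (assumed rule)."""
    return (np.asarray(features) > 0).astype(np.uint8)


def rank_by_hamming(query_code, db_codes):
    """Return database indices sorted by Hamming distance, plus the distances."""
    dists = np.count_nonzero(db_codes != query_code, axis=1)
    return np.argsort(dists, kind="stable"), dists


# Toy features standing in for the outputs of the image and text models.
db_features = [[0.7, 0.2, -0.3, -0.8],
               [0.5, -1.2, 0.3, 2.0],
               [-0.4, -0.1, 0.9, 1.1]]
query_features = [0.9, -0.5, 0.1, 0.4]

db_codes = binarize(db_features)
query_code = binarize(query_features)
order, dists = rank_by_hamming(query_code, db_codes)
print(order)  # [1 2 0]
print(dists)  # [3 0 1]
```

Storing `db_codes` instead of the floating-point features is what reduces the storage requirement mentioned in the abstract; `np.packbits` can pack the codes further into bytes.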

Hash model training method, similar object retrieval method and device

Inactive | CN110659375A | Keep sorted | Improve accuracy | Digital data information retrieval | Character and pattern recognition | Feature vector | Algorithm

The invention provides a hash model training method, a similar object retrieval method and device. A multilayer neighborhood pyramid is constructed by gradually increasing the number of neighbor points. In each layer of the neighborhood pyramid, the average distance from the neighbor points in that layer to the reference point is taken as the Euclidean neighborhood measure of that layer. Data points in the original space are mapped to a Hamming space by a hash model, and the Hamming neighborhood measure of each layer of the pyramid is calculated. The optimization target of the hash model is to preserve, in the Hamming space, the neighborhood measures of the original space. This target preserves both the distance distribution of the true neighbor points and the neighbor ordering, so distances are better preserved and the accuracy of approximate nearest neighbor retrieval is improved. The Hamming space feature vector of an object obtained with the hash model better preserves the features of the object in Euclidean space, so the method improves the accuracy of similarity retrieval.

Owner:UNIV OF SCI & TECH OF CHINA

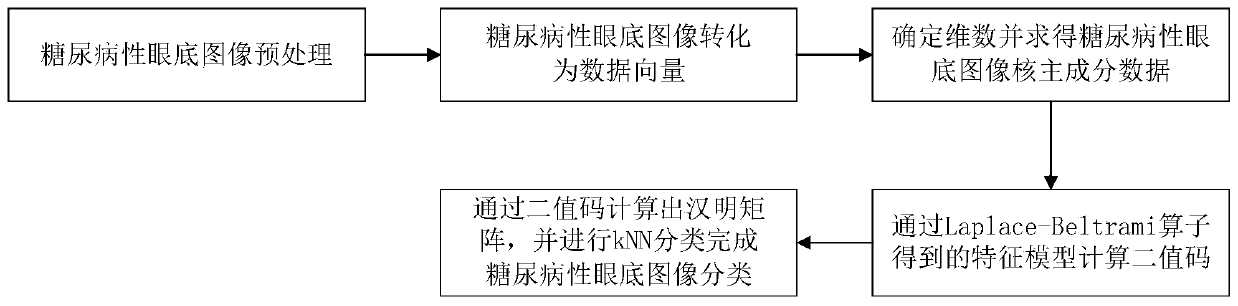

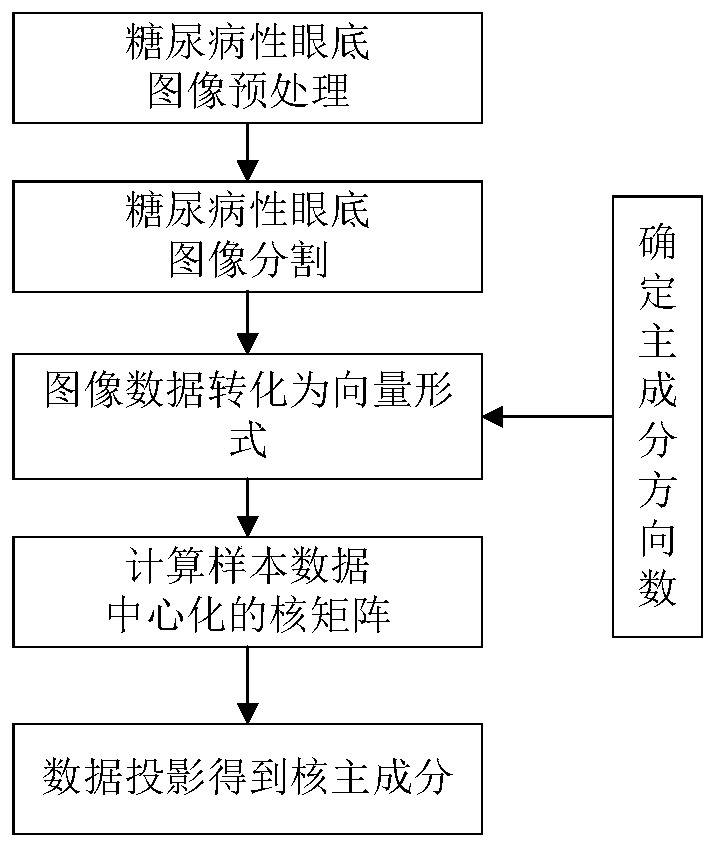

Kernel principal component spectral hashing method for diabetic fundus image classification

Active | CN110176298A | Improve classification accuracy | Simplify the optimization process | Character and pattern recognition | Medical images | Kernel principal component analysis | Pattern recognition

The invention discloses a kernel principal component spectral hashing method for diabetic fundus image classification. The method comprises: preprocessing and segmenting the diabetic fundus image data and converting the processed images to vector form; extracting nonlinear feature information from the fundus image data with a kernel principal component analysis algorithm; representing the fundus image samples by the eigenvalues and eigenfunction values of a Laplace-Beltrami operator; and finally converting the sample eigenfunction values to binary codes by means of a threshold and classifying the diabetic fundus images in a Hamming space with a nearest neighbor algorithm. The method extracts the complex nonlinear features of diabetic fundus image data, achieves relatively high classification accuracy, and reduces computational complexity in large-scale fundus image classification.
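The last two steps, thresholding real values into a binary code and classifying by nearest neighbor in Hamming space, might look like the sketch below. The threshold of 0, the input values, the class labels and the function names `binarize` and `classify_1nn` are illustrative assumptions; the kernel PCA and eigenfunction computation are not shown.

```python
def binarize(values, threshold=0.0):
    """Map each real value to 1 if it exceeds the threshold, else 0."""
    return tuple(1 if v > threshold else 0 for v in values)


def hamming(a, b):
    """Count positions where two equal-length bit tuples differ."""
    return sum(x != y for x, y in zip(a, b))


def classify_1nn(query_code, labelled_codes):
    """Return the label of the training code closest to query_code."""
    _, best_label = min(labelled_codes, key=lambda item: hamming(query_code, item[0]))
    return best_label


# Hypothetical per-sample values standing in for eigenfunction outputs.
training = [
    (binarize([0.8, -0.3, 0.5, -0.9]), "class_a"),
    (binarize([-0.6, 0.4, -0.2, 0.7]), "class_b"),
]
query = binarize([0.6, -0.1, -0.2, -0.4])
predicted = classify_1nn(query, training)
```

Here the query code `(1, 0, 0, 0)` is at distance 1 from the first training code and 3 from the second, so the sketch assigns it to `class_a`.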

Owner:NANTONG UNIVERSITY

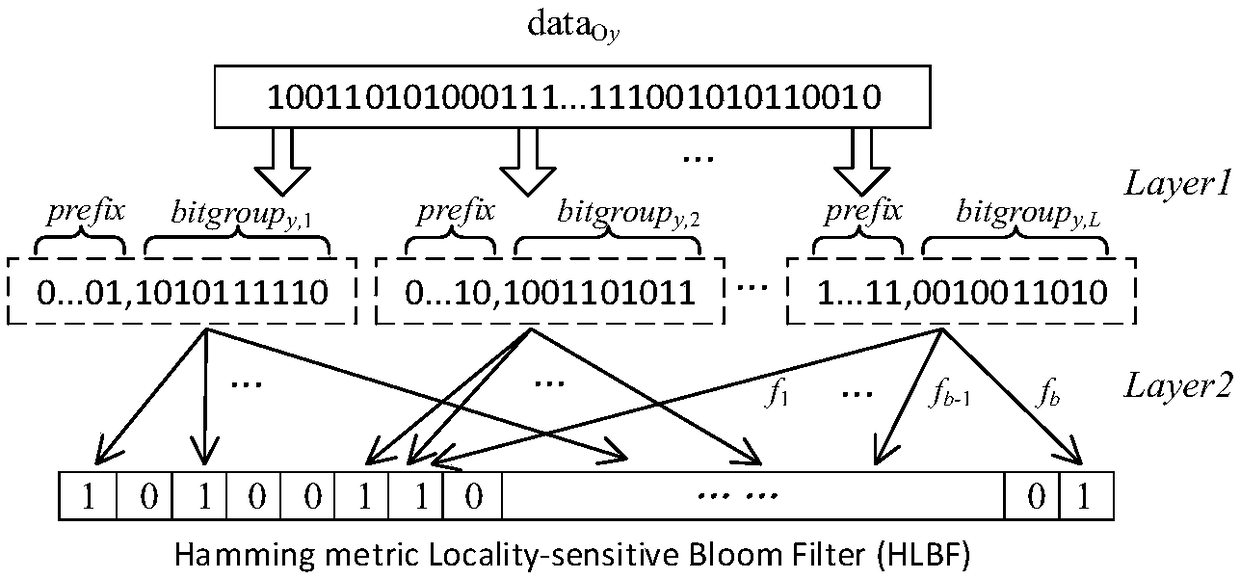

Approximate member query method based on hamming distance

The invention discloses an approximate member query method based on Hamming distance. A locality-sensitive hash (LSH) function suited to the Hamming distance metric, bit-sampling LSH, is combined with the random hash functions of a standard Bloom filter (BF) to build a Bloom filter, HLBF. For a given query Q, L bit groups are generated. If the bits at all b addresses of a bit group are 1 in the HLBF, that bit group is said to pass. If any one of the L bit groups passes, Q is determined to be an approximate member of the set Ω. The advantage is that approximate member queries can be completed in Hamming space.
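One way to read this construction is sketched below in Python. It is an interpretation under stated assumptions, not the patented design: the class name `HammingLSHBloomFilter`, its parameters, the use of SHA-256 for the b random hash functions and the choice of one fixed random bit sample per group are all hypothetical. Items are strings of `0` and `1`.

```python
import hashlib
import random


class HammingLSHBloomFilter:
    """Bit-sampling LSH in front of Bloom-filter hashing (illustrative sketch)."""

    def __init__(self, code_length, num_groups, bits_per_sample, num_hashes, num_bits, seed=0):
        rng = random.Random(seed)
        # One random subset of bit positions per group (the bit-sampling LSH).
        self.positions = [
            rng.sample(range(code_length), bits_per_sample) for _ in range(num_groups)
        ]
        self.num_hashes = num_hashes  # b addresses per group
        self.num_bits = num_bits
        self.bits = [0] * num_bits

    def _addresses(self, group, code):
        # Sample the bits, then hash the sampled key with b salted hashes.
        key = "".join(code[p] for p in self.positions[group])
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{group}:{i}:{key}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, code):
        for group in range(len(self.positions)):
            for addr in self._addresses(group, code):
                self.bits[addr] = 1

    def query(self, code):
        # A group passes if all of its b addresses are set; any passing group suffices.
        return any(
            all(self.bits[addr] for addr in self._addresses(group, code))
            for group in range(len(self.positions))
        )


hlbf = HammingLSHBloomFilter(
    code_length=16, num_groups=4, bits_per_sample=4, num_hashes=3, num_bits=1024
)
hlbf.add("1011001110001101")
is_member = hlbf.query("1011001110001101")  # a stored item always passes
```

A query that differs from a stored item only in bits that some group does not sample produces the same key for that group, so it passes; this is what makes the membership test approximate in Hamming distance. As with any Bloom filter, false positives are possible.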

Owner:NINGBO UNIV

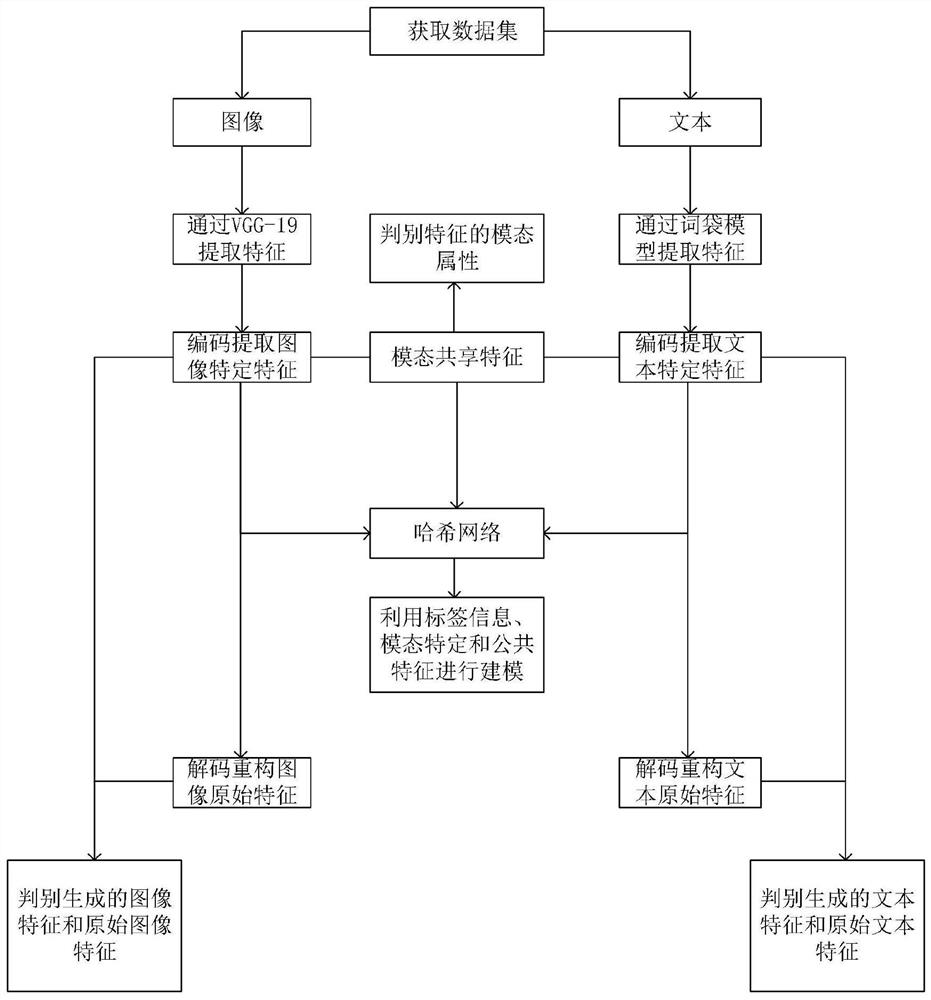

Cross-modal retrieval method based on modal specificity and shared feature learning

Active | CN112800292A | Reduce distribution variance | Original features preserved | Other databases indexing | Neural architectures | Data set | Feature extraction

The invention discloses a cross-modal retrieval method based on modality-specific and shared feature learning, which comprises the following steps: S1, acquiring a cross-modal retrieval data set and dividing it into a training set and a test set; S2, extracting features from the text and the images respectively; S3, extracting modality-specific features and modality-shared features; S4, generating the hash code of each modality sample through a hash network; S5, training the network with the combined loss functions of the adversarial auto-encoder network and the hash network; and S6, performing cross-modal retrieval on the test-set samples using the network trained in step S5. In the method, a hash network is designed that projects the encoded features of the image channel, the encoded features of the text channel and the modality-shared features into a Hamming space, and modeling uses label information together with the modality-specific and shared features, so that the output hash codes have better semantic discrimination both between and within modalities.

Owner:NANJING UNIV OF POSTS & TELECOMM

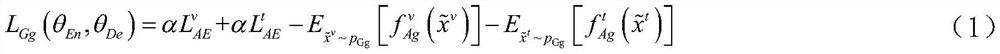

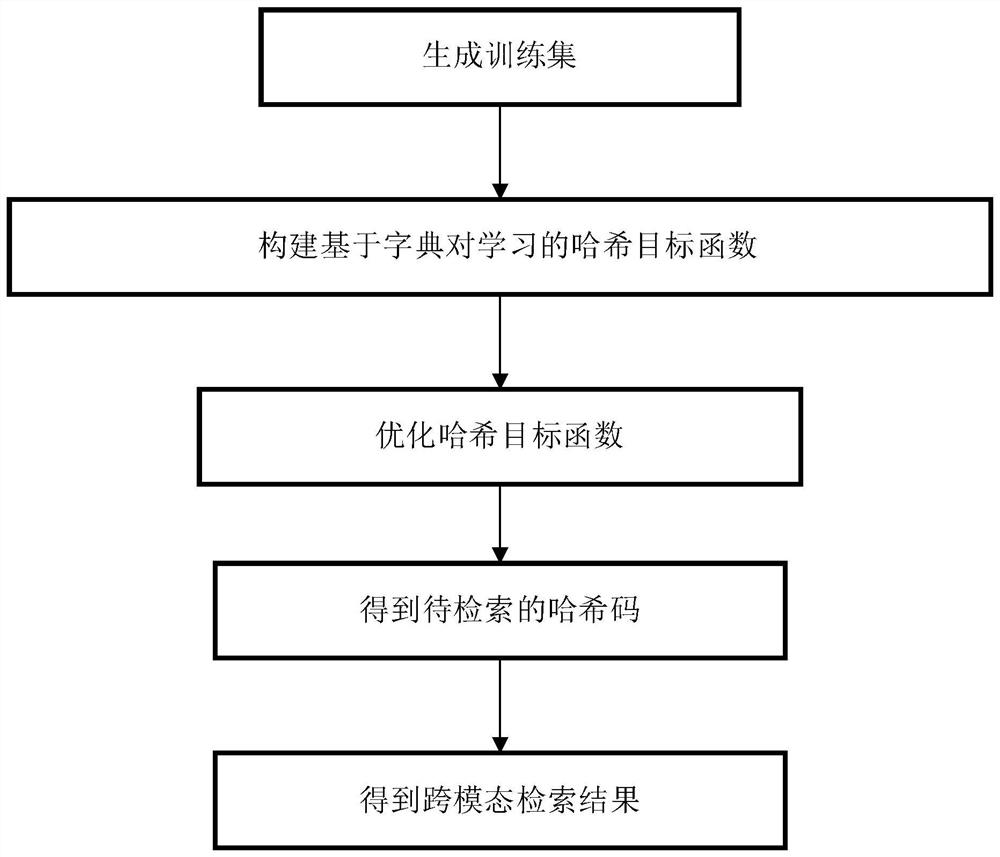

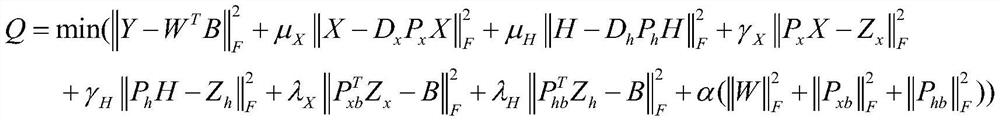

Hash cross-modal information retrieval method based on dictionary pair learning

Pending | CN111984800A | High Average Retrieval Accuracy | Overcoming the problem of consuming too many computing resources | Digital data information retrieval | Character and pattern recognition | Algorithm | Theoretical computer science

The invention discloses a hash cross-modal information retrieval method based on dictionary pair learning, comprising the following steps: (1) generating a training set; (2) constructing a hash objective function based on dictionary pair learning; (3) optimizing the hash objective function; (4) calculating the hash codes of a test set; and (5) obtaining a retrieval result. The objective function is constructed using the dictionary pair idea: the data of the different modalities are transformed through a dictionary pair to obtain a coefficient embedding matrix with a smaller modality gap, which avoids the problems caused by mapping data of different modalities directly to the Hamming space and better handles the heterogeneity of multimodal data. As a result, the method achieves higher average retrieval precision in cross-modal retrieval.

Owner:XIDIAN UNIV

Trademark retrieval method based on deep hash

Inactive | CN113254688A | Improve retrieval efficiency | Save labor resources | Still image data clustering/classification | Neural architectures | Data set | Trademark

The invention discloses a trademark retrieval method based on deep hashing. First, a trademark retrieval database is constructed according to an existing classification of trademark graphic elements. Second, the preset classes are associated with hash centers, and hash codes are obtained by optimizing central similarity, which encourages images of the same element category, sharing one hash center, to produce hash codes that are close to each other in the Hamming space. Then a convolutional neural network pre-trained on the ImageNet data set is combined with a hash layer, and once the hash centers are determined the two are trained end to end. Finally, the images to return are determined by Hamming distance over the generated hash codes. The method converts deep image features into hash codes in the Hamming space through deep hashing, so that comparison of high-dimensional trademark image features becomes comparison of Hamming distances when retrieving trademarks of the same kind or service group; this improves trademark retrieval efficiency and reduces labor.
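The abstract does not say how the hash centers are chosen. One option, assumed here purely for illustration, is to take rows of a Hadamard matrix, since any two distinct rows differ in exactly half of their positions and the centers are therefore well separated in Hamming space. The function names `hadamard` and `hash_centers` are hypothetical, and the sketch only covers up to `code_length` classes.

```python
from __future__ import annotations

from itertools import combinations


def hadamard(n: int) -> list[list[int]]:
    """Sylvester construction of an n x n Hadamard matrix (n a power of two)."""
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of two")
    h = [[1]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-x for x in row] for row in h]
    return h


def hash_centers(num_classes: int, code_length: int) -> list[tuple[int, ...]]:
    """One binary center per class, taken from rows of a Hadamard matrix."""
    if num_classes > code_length:
        raise ValueError("this sketch supports at most code_length classes")
    rows = hadamard(code_length)[:num_classes]
    return [tuple(1 if x > 0 else 0 for x in row) for row in rows]


def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))


centers = hash_centers(num_classes=4, code_length=8)
pairwise = [hamming(a, b) for a, b in combinations(centers, 2)]
# Every pair of centers is at Hamming distance code_length / 2 = 4.
```

During training, each image's code would be pulled toward the center of its class; at query time, images are returned in order of Hamming distance to the query code.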

Owner:GUANGDONG POLYTECHNIC NORMAL UNIV

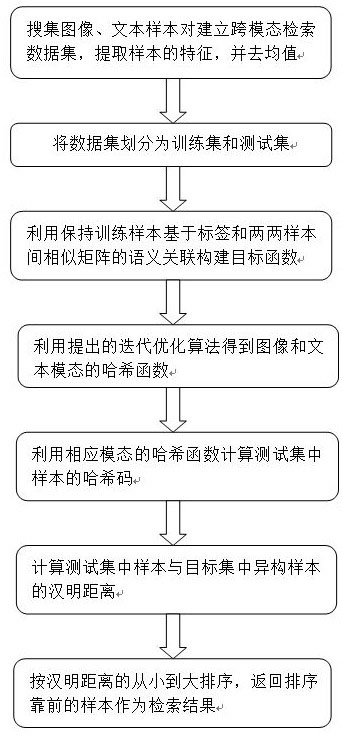

Discrete supervision cross-modal hash retrieval method based on semantic preservation

Pending | CN111914108A | Fast training | Fast retrieval | Digital data information retrieval | Character and pattern recognition | Data set | Hash function

The invention discloses a discrete supervised cross-modal hash retrieval method based on semantic preservation, which realizes semantics-based cross-modal retrieval of images and text. The method comprises: collecting image-text sample pairs from a network and extracting features from the image and text samples respectively; dividing the data set into a training set and a test set; learning a shared Hamming space for the image and text modalities by preserving the semantic consistency of samples in the training set, and learning a hash function for each modality; taking the training set as the target set and the test set as the query set, and calculating the Hamming distances between samples in the query set and samples of the other modality in the target set; and sorting by Hamming distance in ascending order and returning the top-ranked samples as the retrieval result. The method maps image and text samples to a shared Hamming space for efficient retrieval, trains quickly, achieves high accuracy, and has good application prospects.
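To make the idea of one hash function per modality concrete, the sketch below uses linear projections followed by a sign test. That functional form is an assumption, since the abstract does not specify it, and the projection matrices here are written by hand rather than learned. The names `hash_code`, `retrieve`, `W_image` and `W_text` are hypothetical.

```python
def hash_code(features, projection):
    """Binary code: one bit per projection row, set when the dot product is >= 0."""
    return tuple(
        1 if sum(w * x for w, x in zip(row, features)) >= 0 else 0
        for row in projection
    )


def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))


def retrieve(query_code, target_codes, top_k):
    """Indices of the top_k targets, sorted by ascending Hamming distance."""
    order = sorted(range(len(target_codes)), key=lambda i: hamming(query_code, target_codes[i]))
    return order[:top_k]


# Hypothetical projections: 3-D image features and 2-D text features,
# both mapped to the same 2-bit shared Hamming space.
W_image = [[1, 0, -1], [0, 1, 0]]
W_text = [[1, -1], [0, 1]]

text_targets = [[2, 1], [-1, 2], [1, -3]]
target_codes = [hash_code(t, W_text) for t in text_targets]

image_query = [0.5, -2, 0.1]
query_code = hash_code(image_query, W_image)
results = retrieve(query_code, target_codes, top_k=2)
```

The image query hashes to `(1, 0)`, which matches the third text target exactly, so the sketch returns index 2 first and then index 0 at distance 1. The two modalities have different feature dimensions but share a code length, which is what lets them be compared directly.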

Owner:LUDONG UNIVERSITY
