Video semantic segmentation method based on optical flow feature fusion

A video semantic segmentation technique based on feature fusion, applied in the field of video processing. It addresses the problems of many instances, large data volume, and high segmentation delay, and achieves faster segmentation, improved accuracy, and richer semantic information.

Embodiment Construction

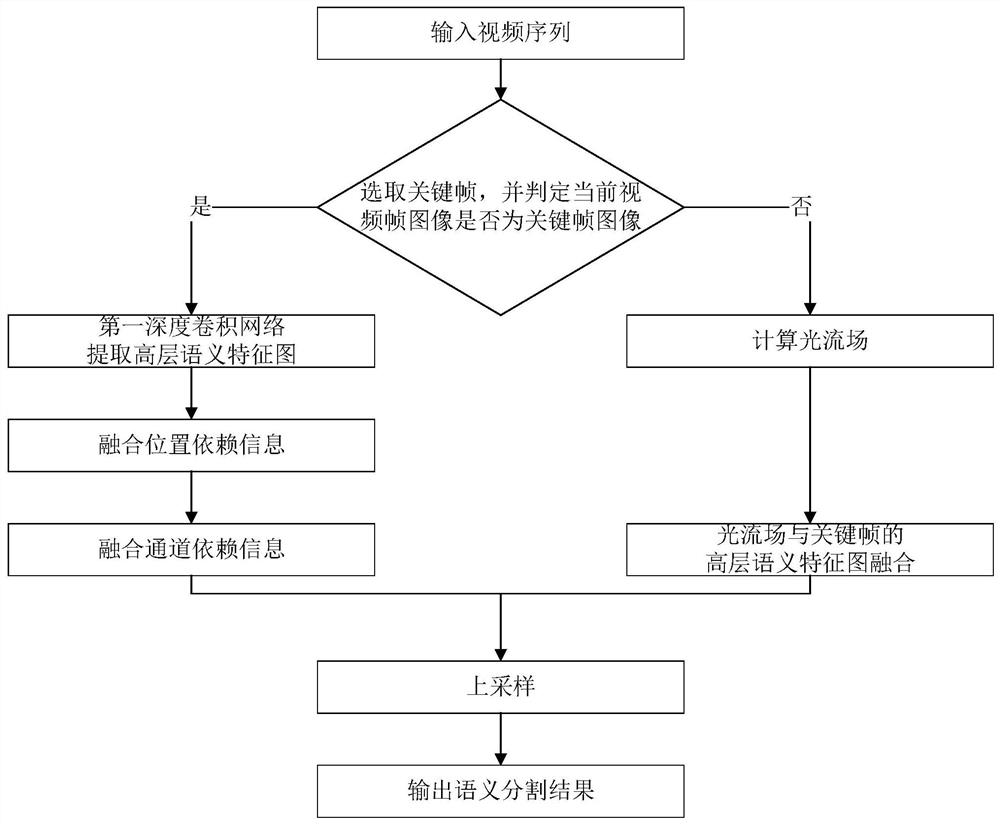

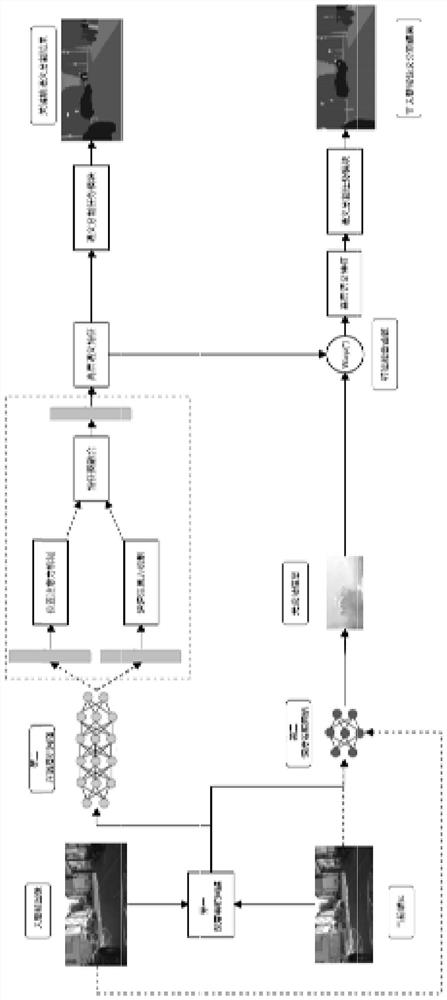

[0057] As shown in Figure 1, the video semantic segmentation method based on optical flow feature fusion provided by the present invention comprises the following steps:

[0058] Step 1: determine whether the current video frame image of the video sequence is a key frame image or a non-key frame image; if it is a key frame image, perform Step 2; if it is a non-key frame image, perform Step 3;

[0059] Step 2: extract a high-level semantic feature map of the current video frame image that fuses position-dependent information and channel-dependent information;

[0060] Step 3: obtain the high-level semantic feature map of the current video frame image by calculating the optical flow field;

[0061] Step 4: upsample the high-level semantic feature map obtained in Step 2 or Step 3 to obtain a semantic segmentation map.
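
The control flow of Steps 1 to 4 can be sketched roughly as follows. This is only an illustrative sketch under stated assumptions, not the patented implementation: the fixed-interval key frame rule (`is_key_frame`), the average-pooling stand-in for the feature network (`extract_features`), the nearest-neighbour warp of key frame features along the flow field (`warp_features`), and the nearest-neighbour upsampling (`upsample_to_labels`) are all hypothetical placeholders, since the text shown here does not specify how key frames are selected, how the fused features are computed, or how the optical flow field is used.

```python
import numpy as np

def is_key_frame(index, interval=5):
    # Step 1 (assumption): every `interval`-th frame is a key frame.
    return index % interval == 0

def extract_features(frame, stride=4):
    # Step 2 placeholder: average pooling stands in for a network that
    # fuses position-dependent and channel-dependent information.
    h, w, c = frame.shape
    cropped = frame[:h - h % stride, :w - w % stride]
    blocks = cropped.reshape(h // stride, stride, w // stride, stride, c)
    return blocks.mean(axis=(1, 3))

def warp_features(key_features, flow):
    # Step 3 placeholder: nearest-neighbour backward warp. `flow[y, x]`
    # holds the (dx, dy) offset, at feature resolution, from the current
    # frame back to the key frame.
    h, w, _ = key_features.shape
    ys, xs = np.mgrid[0:h, 0:w]
    src_x = np.clip(np.rint(xs + flow[..., 0]).astype(int), 0, w - 1)
    src_y = np.clip(np.rint(ys + flow[..., 1]).astype(int), 0, h - 1)
    return key_features[src_y, src_x]

def upsample_to_labels(features, stride=4):
    # Step 4 placeholder: nearest-neighbour upsampling, then a per-pixel
    # argmax over channels to produce a label map.
    up = features.repeat(stride, axis=0).repeat(stride, axis=1)
    return up.argmax(axis=-1)

def segment_video(frames, flows, interval=5, stride=4):
    # `flows[i]` is only read for non-key frames.
    key_features = None
    label_maps = []
    for i, frame in enumerate(frames):
        if is_key_frame(i, interval):
            features = extract_features(frame, stride)
            key_features = features  # cache for the following non-key frames
        else:
            features = warp_features(key_features, flows[i])
        label_maps.append(upsample_to_labels(features, stride))
    return label_maps
```

In this sketch the expensive feature extraction runs only on key frames, while non-key frames reuse the cached key frame features, which is one plausible reading of how the method reduces segmentation delay.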

[0062] The characteristics and performance of the present invention will be described in further detail below in conjunction with t...
